Validating Automated PES Sampling: A Guide for Biomedical Researchers

Abstract

Automated sampling of potential energy surfaces (PES) is revolutionizing computational chemistry and drug discovery by enabling large-scale, quantum-accurate simulations. However, the predictive power of these methods hinges on rigorous validation to ensure reliability in modeling biomolecular interactions and reaction mechanisms. This article provides a comprehensive framework for the validation of automated PES sampling algorithms. We explore the foundational principles, detail current methodological approaches and software tools, address common troubleshooting and optimization strategies, and establish key metrics for rigorous performance benchmarking. Aimed at researchers and drug development professionals, this guide synthesizes best practices to foster robust and reproducible computational research, accelerating the path from simulation to therapeutic discovery.

The Critical Need for Validation in Automated PES Sampling

Defining the Potential Energy Surface (PES) and Its Role in Biomolecular Modeling

The Core Concept: What is a Potential Energy Surface?

A Potential Energy Surface (PES) describes the energy of a system, particularly a collection of atoms, as a function of their relative positions [1] [2]. It is a foundational concept in quantum chemistry and biomolecular modeling, providing an "energy landscape" where the potential energy (height) is plotted against molecular geometrical coordinates (the landscape's longitude and latitude) [1] [3].

The Born-Oppenheimer approximation is fundamental to the PES concept. Because nuclei are far heavier and move far more slowly than electrons, the electronic problem can be solved with the nuclei held fixed, which yields a well-defined energy for any given arrangement of nuclei [4]. A non-linear molecule of N atoms has 3N-6 internal degrees of freedom (3N-5 for a linear molecule), and this number sets the dimensionality of its PES [1] [4].

Key topological features on the PES provide critical insights into molecular stability and reactivity:

- Energy Minima: correspond to stable molecular structures, such as reactants, products, or reaction intermediates. At a minimum, the curvature of the PES is positive in all directions [2] [3] [4].

- Saddle Points (Transition States): represent the highest energy point on the lowest energy pathway connecting two minima. They are characterized by negative curvature in one direction (the reaction coordinate) and positive curvature in all others [2] [5] [4]. Identifying transition states is essential for understanding reaction kinetics and feasibility [6].
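The curvature criteria above can be checked numerically from the eigenvalues of the Hessian (the matrix of second derivatives of the energy). The following is a minimal sketch on a made-up two-dimensional model surface, not a production routine. The function names and the tolerance are illustrative assumptions. For a real molecule, the Hessian would first need the translational and rotational modes projected out.

```python
import numpy as np

def classify_stationary_point(hessian, tol=1e-8):
    """Classify a stationary point from the signs of its Hessian eigenvalues."""
    eigvals = np.linalg.eigvalsh(np.asarray(hessian, dtype=float))
    if np.any(np.abs(eigvals) <= tol):
        return "degenerate"          # flat direction: curvature test is inconclusive
    n_negative = int(np.sum(eigvals < 0.0))
    if n_negative == 0:
        return "minimum"             # positive curvature in all directions
    if n_negative == 1:
        return "transition state"    # exactly one direction of negative curvature
    return f"higher-order saddle (order {n_negative})"

def model_hessian(x, y):
    """Analytic Hessian of the toy surface E(x, y) = (x**2 - 1)**2 + y**2."""
    return np.array([[12.0 * x**2 - 4.0, 0.0],
                     [0.0, 2.0]])

# Stationary points of the toy surface lie at (x, y) = (-1, 0), (0, 0), (1, 0).
print(classify_stationary_point(model_hessian(1.0, 0.0)))
print(classify_stationary_point(model_hessian(0.0, 0.0)))
```

On this toy surface the two wells at x = ±1 are minima and the point between them at x = 0 is the first-order saddle that separates them.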

Comparative Analysis of Automated PES Exploration Algorithms

Automated exploration of PES is crucial for studying complex biomolecular systems. The table below compares the core methodologies, strengths, and application contexts of different modern approaches.

| Algorithm / Program | Core Methodology | Key Innovation / Strategy | Reported Strengths & Applications |
|---|---|---|---|
| ARplorer [6] | Quantum Mechanics (QM) + Rule-based | Large Language Model (LLM)-guided chemical logic; Active-learning TS sampling; Parallel multi-step reaction searches. | Effectively handles complicated organic/organometallic systems; High computational efficiency in identifying multistep pathways. |
| aims-PAX [7] | Machine Learning Force Fields (MLFF) | Parallel, multi-trajectory Active Learning (AL); Utilizes general-purpose MLFFs for initial sampling. | Reduces required DFT calculations by up to 100x; Efficient for large, flexible systems (e.g., peptides). |
| ArcaNN [8] | Machine Learning Interatomic Potentials (MLIP) | Concurrent learning integrated with enhanced sampling techniques; Query-by-committee uncertainty measure. | Accurately samples high-energy transition states; Designed for chemical reactions in condensed phases. |
| Traditional QM/MD [6] | Quantum Mechanics / Molecular Dynamics | Unbiased search of the PES without pre-defined filters or guidance. | Theoretically comprehensive; Often generates impractical pathways and requires substantial time. |

Experimental Protocols for PES Algorithm Validation

Protocol 1: LLM-Guided Exploration (ARplorer)

This protocol validates a method that integrates general chemical knowledge for efficient PES exploration [6].

- Knowledge Base Curation: A general chemical knowledge base is created by processing textbooks, research articles, and databases. This is refined into general reaction patterns (SMARTS patterns) [6].

- System-Specific Logic Generation: The specific reaction system is converted into SMILES format. A specialized LLM, prompted with the general knowledge base, generates system-specific chemical rules and active site patterns [6].

- Iterative PES Exploration:

  - Active Site Identification: Using the curated chemical logic, the program identifies active atoms and potential bond-breaking/forming locations [6].

  - Transition State Search & Optimization: Molecular structures are optimized through iterative TS searches that blend active-learning sampling with potential energy assessments [6].

  - Pathway Verification: Intrinsic Reaction Coordinate (IRC) analysis is performed to confirm the pathway connects correct minima. Duplicates are removed, and the structure is finalized for the next iteration [6].

- Validation: The final output is a set of validated reaction pathways and transition states. Performance is benchmarked against conventional QM methods by comparing the number of computational steps required to locate key TS in multi-step reactions [6].

Protocol 2: Active Learning for MLFFs (aims-PAX)

This protocol outlines the automated active learning workflow for generating robust Machine Learning Force Fields [7].

- Initial Dataset & Model Generation: An initial ensemble of MLFFs is created. This can be done via short ab initio simulations or, more efficiently, by using a general-purpose MLFF to generate physically plausible geometries, which are then labeled with a reference ab initio method [7].

- Parallel Active Exploration:

  - Uncertainty-Driven Sampling: Multiple molecular dynamics (MD) trajectories are run in parallel using the current MLFF. The model's uncertainty is computed in real-time for new configurations encountered [7].

  - Adaptive Selection & Labeling: Configurations that exceed a pre-set uncertainty threshold are selected, and their energies/forces are recalculated using the accurate reference method (e.g., DFT) [7].

  - Model Retraining: The newly labeled data is added to the training set, and the MLFF is retrained. This loop continues until the model's uncertainty is low across all relevant regions of the PES [7].

- Validation: The resulting MLFF is validated by running long-timescale MD simulations and comparing properties (e.g., radial distribution functions, energy distributions) against direct ab initio MD results or experimental data [7].
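The selection step in the protocol above (flagging configurations whose uncertainty exceeds a threshold) can be sketched with a generic committee-disagreement score. This is an illustration only. The source does not specify the exact uncertainty measure used by aims-PAX, so the scoring rule (worst-atom standard deviation of predicted forces), the function name `select_uncertain`, and the threshold value are all assumptions.

```python
import numpy as np

def select_uncertain(committee_forces, threshold):
    """Flag configurations whose committee disagreement exceeds `threshold`.

    committee_forces has shape (n_models, n_configs, n_atoms, 3).
    Returns the indices of the flagged configurations and all scores.
    """
    forces = np.asarray(committee_forces, dtype=float)
    # Spread of each force component across the committee members.
    std_per_component = forces.std(axis=0)                      # (n_configs, n_atoms, 3)
    # Collapse the three components into one disagreement value per atom.
    std_per_atom = np.linalg.norm(std_per_component, axis=-1)   # (n_configs, n_atoms)
    # Score each configuration by its most uncertain atom.
    score = std_per_atom.max(axis=-1)                           # (n_configs,)
    return np.flatnonzero(score > threshold), score

# Synthetic example: 3 models, 2 configurations, 2 atoms.
forces = np.zeros((3, 2, 2, 3))
forces[:, 1, 0, 0] = [0.0, 0.3, 0.6]   # models disagree on one atom of configuration 1
selected, score = select_uncertain(forces, threshold=0.1)
print(selected, score)
```

Only the flagged configurations would then be sent to the reference method for labeling, which is what keeps the number of expensive calculations low.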

The Scientist's Toolkit: Essential Research Reagents

The following table details key computational tools and methodologies essential for automated PES exploration.

| Tool / Method | Function in PES Research |
|---|---|
| Quantum Chemistry Software (e.g., Gaussian, FHI-aims) [6] [7] | Provides high-accuracy reference calculations (energies and forces) for specific molecular configurations using methods like Density Functional Theory (DFT). |
| Semi-Empirical Methods (e.g., GFN2-xTB) [6] | Offers a faster, less accurate quantum mechanical method for initial PES scanning and geometry pre-optimization before higher-level calculation. |
| Machine Learning Interatomic Potentials (MLIPs) [7] [8] | A class of models that learn the PES from reference data, enabling near-quantum accuracy at a fraction of the computational cost for molecular dynamics simulations. |
| Active Learning (AL) Framework [7] | An iterative algorithm that uses the model's own uncertainty to decide which new data points need a costly reference calculation, optimizing the data collection process. |
| Enhanced Sampling Techniques [8] | A set of computational methods (e.g., metadynamics) designed to drive simulations into high-energy, rarely sampled regions (like transition states) that are critical for studying reactivity. |
| Intrinsic Reaction Coordinate (IRC) [6] | A computational analysis following a transition state downhill to confirm it connects the correct reactant and product minima on the PES. |

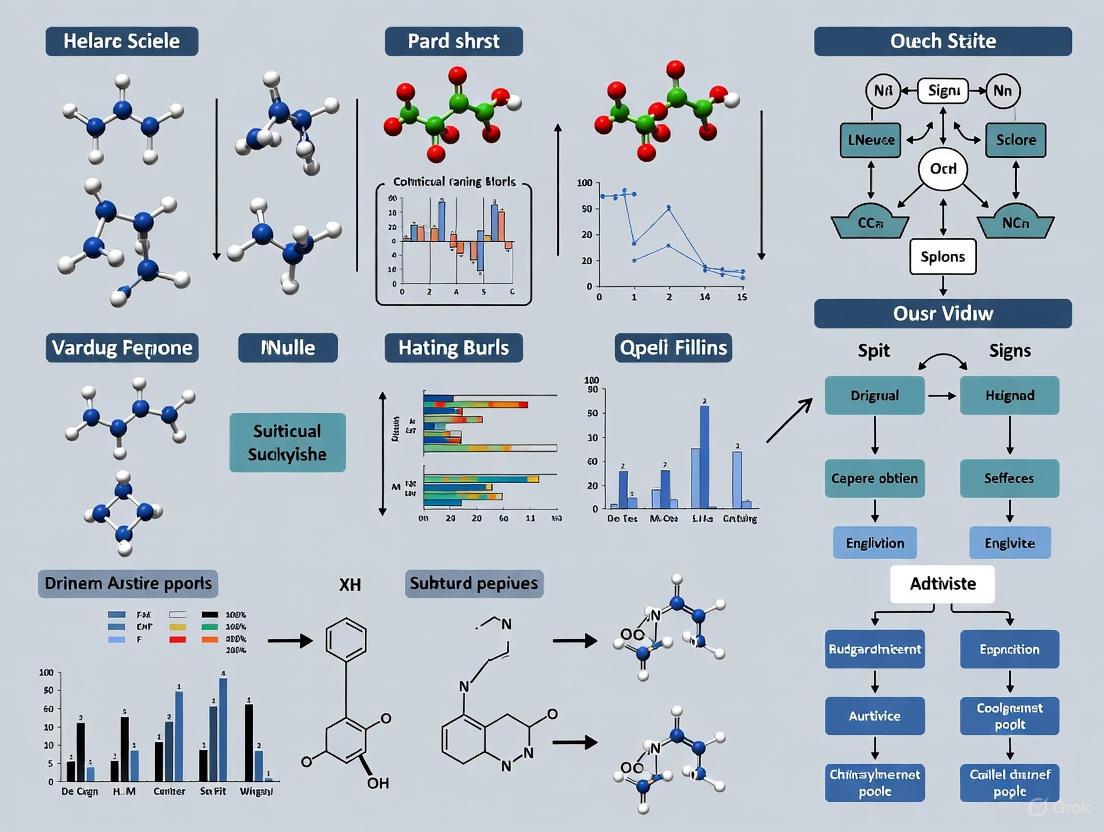

Workflow Visualization: Automated PES Exploration

The diagram below illustrates the logical structure of a generalized, iterative active learning workflow for automated PES exploration, integrating elements from protocols like aims-PAX and ArcaNN.

Key Insights for Biomolecular Modeling

Understanding the PES is paramount for biomolecular modeling. The "folding funnel" hypothesis, which conceptualizes protein folding as a journey to the lowest free energy state on a complex PES, is a direct application of this concept [2]. Accurately modeling these landscapes allows researchers to predict stable protein conformations, understand folding pathways, and identify misfolded states implicated in disease.

The advances in automated PES sampling algorithms directly address the critical challenge of rare events in biomolecular simulations. While traditional molecular dynamics might require impractically long simulation times to observe events like ligand unbinding or conformational changes, the integration of enhanced sampling with active learning MLFFs, as demonstrated by ArcaNN and aims-PAX, provides a powerful framework to systematically and efficiently explore these high-energy but functionally crucial regions of the PES [7] [8]. This enables a more predictive understanding of biomolecular function and facilitates rational drug design by providing accurate thermodynamic and kinetic parameters.

In computational chemistry and materials science, automated potential energy surface (PES) sampling algorithms have become indispensable for exploring reaction mechanisms, predicting material properties, and accelerating drug discovery. These algorithms efficiently navigate the complex energy landscape of atomic systems to identify critical points such as local minima and transition states [9]. However, computational efficiency means little without rigorous validation of predictive power. The fundamental challenge lies in the distinction between interpolation, where models perform well on data similar to their training set, and genuine predictive capability, where models accurately describe unseen configurations and the rare events that govern chemical reactivity and molecular dynamics.

Recent research has revealed that machine learning interatomic potentials (MLIPs) with impressively low average errors can still produce significant discrepancies in molecular dynamics simulations, failing to accurately capture diffusion processes, defect properties, and rare events [10]. This validation gap has profound implications for drug development, where inaccurate PES models can mislead researchers about binding mechanisms, reaction pathways, and stability properties. This article examines why comprehensive validation strategies are non-negotiable for reliable PES sampling in scientific and industrial applications, providing comparative analysis of validation methodologies and their impact on predictive reliability.

The Interpolation Fallacy: Limitations of Conventional Metrics

The Deception of Low Average Errors

Conventional validation of PES models typically reports low average errors, such as root-mean-square error (RMSE) or mean-absolute error (MAE), of energies and atomic forces across testing datasets. State-of-the-art MLIPs often achieve remarkably low errors, with force errors as low as 0.03-0.05 eV Å⁻¹, creating a false sense of security about their reliability [10]. However, these metrics primarily measure performance on data points that are structurally similar to those in the training set, emphasizing interpolation capability rather than true predictive power.
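To make these metrics concrete, the sketch below computes the energy RMSE per atom along with the force RMSE and MAE, and also reports the largest single-atom force error. The synthetic numbers are invented for illustration and the function names are assumptions. The example shows how a small average error can coexist with one badly wrong atom.

```python
import numpy as np

def energy_rmse_per_atom(e_pred, e_ref, n_atoms):
    """RMSE of total energies, normalised by the atom count of each structure."""
    err = (np.asarray(e_pred, dtype=float) - np.asarray(e_ref, dtype=float)) / np.asarray(n_atoms)
    return float(np.sqrt(np.mean(err**2)))

def force_errors(f_pred, f_ref):
    """Return (component RMSE, component MAE, largest per-atom error norm)."""
    diff = np.asarray(f_pred, dtype=float) - np.asarray(f_ref, dtype=float)
    rmse = float(np.sqrt(np.mean(diff**2)))
    mae = float(np.mean(np.abs(diff)))
    worst_atom = float(np.linalg.norm(diff, axis=-1).max())
    return rmse, mae, worst_atom

# Synthetic case: 100 atoms, small error everywhere except one force component.
f_ref = np.zeros((100, 3))
f_pred = np.full((100, 3), 0.01)
f_pred[0, 0] = 1.0   # one atom is wrong by about 1 eV/Å
rmse, mae, worst_atom = force_errors(f_pred, f_ref)
print(f"RMSE={rmse:.3f}  MAE={mae:.3f}  worst atom={worst_atom:.3f}  (eV/Å)")
```

Here the RMSE stays below 0.06 eV/Å even though one atom carries an error of roughly 1 eV/Å. An error of that kind, sitting on a migrating atom, is exactly what an average metric hides.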

Table 1: Common Validation Metrics and Their Limitations

| Metric | Typical Range | What It Measures | Blind Spots |
|---|---|---|---|
| Energy RMSE | 1-10 meV/atom | Interpolation accuracy for stable configurations | Rare event pathways, transition states |
| Force RMSE | 0.03-0.3 eV/Å | Local force field accuracy | Dynamical properties, collective motions |
| Defect Formation Energy | 0.1-0.5 eV error | Single-point defect properties | Migration barriers, complex defect interactions |
| Phonon Spectrum | <5% error | Harmonic vibrations | Anharmonic effects at high temperature |

Case Study: The Silicon MLIP Discrepancy

A revealing study on silicon MLIPs demonstrated that models with low force RMSE (below 0.3 eV Å⁻¹) still showed significant errors in predicting vacancy and interstitial migration barriers, even when similar structures were included in training [10]. Some MLIPs underestimated diffusion energy barriers by more than 20% compared to reference DFT calculations, highlighting how conventional metrics fail to capture errors in dynamic processes essential for understanding material behavior and chemical reactivity.

Beyond Interpolation: Essential Validation for Predictive Power

Rare Events and Defect Dynamics

Comprehensive validation must specifically address a model's performance for rare events and defect dynamics, which are critical for predicting chemical reactivity and material properties. Research shows that MLIPs optimized using rare event-based evaluation metrics demonstrate significantly improved prediction of atomic dynamics and diffusional properties [10]. Validating rare event prediction requires:

- Migration barrier accuracy: Comparing energy barriers for vacancy, interstitial, and adatom migration against reference calculations

- Transition state identification: Verifying the correct identification of saddle points on the PES

- Pathway validation: Ensuring the model reproduces correct reaction pathways, not just endpoint energies
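The migration-barrier check in the list above can be sketched as a comparison of two energy profiles along the same path, for example the images of a nudged elastic band calculation evaluated with the model and with the reference method. The energy values below are invented for illustration, and the function names are assumptions.

```python
import numpy as np

def barrier_heights(path_energies):
    """Forward and reverse barriers (same units as input) and the index of the highest image."""
    e = np.asarray(path_energies, dtype=float)
    ts_index = int(np.argmax(e))
    return e[ts_index] - e[0], e[ts_index] - e[-1], ts_index

def compare_barriers(model_path, reference_path):
    """Compare the forward barrier of a model profile against a reference profile."""
    fwd_model, _, ts_model = barrier_heights(model_path)
    fwd_ref, _, ts_ref = barrier_heights(reference_path)
    return {
        "abs_error": fwd_model - fwd_ref,
        "rel_error": (fwd_model - fwd_ref) / fwd_ref,
        "same_ts_image": ts_model == ts_ref,
    }

# Hypothetical energies (eV) for seven images along one migration path.
reference = [0.00, 0.12, 0.35, 0.50, 0.33, 0.10, 0.02]
model = [0.00, 0.11, 0.30, 0.39, 0.28, 0.09, 0.02]
result = compare_barriers(model, reference)
print(result)
```

In this made-up case the end points agree to within 0.01 eV while the barrier is underestimated by about 22%. This is the pattern that endpoint-only checks miss.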

Molecular Dynamics and Thermodynamic Properties

True predictive power emerges when PES models accurately reproduce thermodynamic properties and dynamic behavior over extended simulation times. Key validation aspects include:

- Radial distribution functions: Comparing structural properties against ab initio MD and experimental data

- Diffusion coefficients: Validating mass transport properties across relevant temperature ranges

- Phase stability: Ensuring correct prediction of phase transitions and relative stability

- Thermal expansion: Verifying response to temperature changes

Fu et al. reported that some MLIPs produce errors in radial distribution functions and can even fail completely after certain simulation durations, despite excellent performance on static validation metrics [10].
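One of the dynamic checks listed above, the diffusion coefficient, can be estimated from a trajectory with the Einstein relation, MSD(t) ≈ 6Dt in three dimensions at long times. The sketch below assumes unwrapped coordinates and uses a single time origin for simplicity. The function names and the `fit_start` parameter are illustrative assumptions.

```python
import numpy as np

def mean_squared_displacement(positions):
    """MSD relative to the first frame; positions has shape (n_frames, n_atoms, 3)."""
    disp = np.asarray(positions, dtype=float) - positions[0]
    return np.mean(np.sum(disp**2, axis=-1), axis=-1)

def diffusion_coefficient(msd, times, fit_start=0.2):
    """Fit the linear regime of MSD(t) and apply D = slope / 6 (three dimensions)."""
    start = int(fit_start * len(times))   # skip the early, non-diffusive part
    slope, _intercept = np.polyfit(times[start:], msd[start:], 1)
    return slope / 6.0

# Synthetic check: an exactly linear MSD with D = 0.5 (arbitrary units).
times = np.arange(100, dtype=float)
msd = 6.0 * 0.5 * times
d_est = diffusion_coefficient(msd, times)
print(d_est)
```

Running the same analysis on a model-driven trajectory and on a reference trajectory at several temperatures gives a direct comparison of transport properties that static error metrics do not provide.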

Spectroscopy and Experimental Validation

For astrophysical applications, ML-generated PESs must accurately reproduce spectroscopic data. A study on noble gas-containing molecules (NgH₂⁺) demonstrated that ML-PES models could successfully compute vibrational bound states and characterize isotopologues, with results comparing favorably with available spectroscopic data [11]. This experimental validation provides crucial confidence when applying these models to predict properties of molecules where spectroscopic data is limited or unavailable.

Comparative Analysis of PES Validation Methodologies

Quantitative Validation Metrics

Table 2: Advanced Validation Metrics for Predictive Power

| Validation Category | Specific Metrics | Target Performance | Application Context |
|---|---|---|---|
| Rare Event Accuracy | Force errors on migrating atoms (eV/Å) | <0.15 eV/Å | Diffusion, chemical reactions |
| Rare Event Accuracy | Energy barrier error (eV) | <0.05 eV | Reaction rate prediction |
| Dynamic Properties | Phonon band center error (cm⁻¹) | <10 cm⁻¹ | Thermal properties |
| Dynamic Properties | Melt temperature error (K) | <50 K | Phase stability |
| Defect Properties | Vacancy formation energy error (eV) | <0.1 eV | Radiation damage, aging |
| Defect Properties | Surface energy error (J/m²) | <0.05 J/m² | Nanostructure stability |
| Spectroscopic Accuracy | Vibrational frequency error (cm⁻¹) | <10 cm⁻¹ | Spectroscopic characterization |
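The targets in Table 2 lend themselves to an automated pass/fail report. The sketch below encodes the table's thresholds in a dictionary and checks a set of measured errors against them. The key names and the example measurements are hypothetical; only the threshold values come from the table.

```python
# Target thresholds taken from Table 2; the key names are illustrative.
TARGETS = {
    "force_error_migrating_atoms_eV_per_A": 0.15,
    "energy_barrier_error_eV": 0.05,
    "phonon_band_center_error_cm-1": 10.0,
    "melt_temperature_error_K": 50.0,
    "vacancy_formation_energy_error_eV": 0.1,
    "surface_energy_error_J_per_m2": 0.05,
    "vibrational_frequency_error_cm-1": 10.0,
}

def validation_report(measured_errors, targets=TARGETS):
    """Label each metric 'pass', 'fail', or 'not evaluated' against its target."""
    report = {}
    for name, limit in targets.items():
        if name not in measured_errors:
            report[name] = "not evaluated"
        elif abs(measured_errors[name]) < limit:
            report[name] = "pass"
        else:
            report[name] = "fail"
    return report

# Hypothetical measurements for one model.
measured = {
    "force_error_migrating_atoms_eV_per_A": 0.12,
    "energy_barrier_error_eV": 0.08,
    "vacancy_formation_energy_error_eV": -0.04,
}
report = validation_report(measured)
for metric, status in report.items():
    print(f"{metric}: {status}")
```

Reporting unevaluated metrics explicitly, rather than omitting them, makes gaps in a validation campaign visible.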

Protocol for Comprehensive PES Validation

Based on recent research, we propose a comprehensive validation protocol for automated PES sampling algorithms:

Static Property Validation

- Formation energies of perfect crystals and common defects

- Elastic constants and mechanical properties

- Surface and interface energies

Dynamic Property Validation

- Phonon spectra and vibrational densities of states

- Molecular dynamics at relevant temperatures

- Diffusion coefficients and migration barriers

Rare Event Validation

- Nudged elastic band calculations for known transitions

- Transition state theory rate constants

- Rare event sampling efficiency

Experimental Cross-Validation

- Comparison with spectroscopic data when available

- Validation against thermodynamic measurements

- Assessment against kinetic data

The EMFF-2025 neural network potential for energetic materials demonstrates this comprehensive approach, validating predictions of structure, mechanical properties, and decomposition characteristics against both DFT calculations and experimental data [12].
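The protocol's emphasis on barrier accuracy follows from the exponential dependence of transition state theory rate constants on the activation free energy. The sketch below uses the standard Eyring expression, k = (k_B T / h) exp(-ΔG‡ / RT), with the transmission coefficient assumed to be 1. The function names are illustrative.

```python
import math

KB = 1.380649e-23        # Boltzmann constant, J/K
H = 6.62607015e-34       # Planck constant, J s
R_KCAL = 1.987204e-3     # gas constant, kcal/(mol K)

def eyring_rate(delta_g_kcal_mol, temperature=298.15):
    """Eyring rate constant in 1/s (transmission coefficient assumed to be 1)."""
    prefactor = KB * temperature / H
    return prefactor * math.exp(-delta_g_kcal_mol / (R_KCAL * temperature))

def rate_error_factor(barrier_error_kcal_mol, temperature=298.15):
    """Multiplicative error in the rate caused by a given error in the barrier."""
    return math.exp(abs(barrier_error_kcal_mol) / (R_KCAL * temperature))

for error in (0.5, 1.0, 2.0):
    print(f"barrier error {error} kcal/mol -> rate off by x{rate_error_factor(error):.1f}")
```

At room temperature a barrier error of 1 kcal/mol already shifts the predicted rate by roughly a factor of five, which is why barrier errors deserve their own validation target.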

Visualization: Validation Workflow for Predictive PES Models

Diagram 1: Comprehensive validation workflow for PES models, highlighting the iterative process of identifying and addressing failure modes.

Table 3: Research Reagent Solutions for PES Validation

| Tool/Category | Representative Examples | Function in Validation | Key Features |
|---|---|---|---|
| MLIP Architectures | DeePMD [12], GAP [10], M3GNet [13] | Core PES models with different accuracy/efficiency tradeoffs | Varied descriptor systems, training approaches |
| Sampling Algorithms | Automated PES Exploration [9], Enhanced Sampling [14] | Generate diverse configurations for training and testing | Process search, basin hopping, rare event focus |
| Reference Data | MatPES [13], r2SCAN calculations | Provide high-quality training and benchmarking data | Carefully sampled structures, improved DFT functionals |
| Validation Metrics | Force performance scores [10], RE-based metrics | Quantify predictive power beyond interpolation | Focus on rare events, dynamic properties |
| Specialized Software | AMS PES Exploration [9], DP-GEN [12] | Automated exploration and refinement of PES | Expedition-based exploration, transfer learning |

The journey from interpolation to genuine predictive power in automated PES sampling requires moving beyond conventional validation metrics. The research community must adopt comprehensive validation protocols that specifically address rare events, dynamic properties, and experimental observables. As MLIPs and automated PES sampling algorithms continue to evolve, robust validation remains the non-negotiable foundation for their reliable application in drug development, materials design, and fundamental scientific research. The development of specialized validation metrics focused on rare events and dynamic properties [10] represents a crucial step toward closing the gap between interpolation capability and true predictive power, ultimately enabling more trustworthy computational predictions across chemical and materials space.

In computational chemistry and materials science, accurate prediction of molecular behavior hinges on effectively exploring the potential energy surface (PES), a multidimensional landscape that maps atomic configurations to energy [14] [15]. This pursuit is fundamentally constrained by two interconnected challenges: the curse of dimensionality inherent in high-dimensional configuration spaces, and the rare event problem associated with infrequent but critical transitions between metastable states [14] [16]. As molecular systems increase in complexity, the number of local minima and transition states on their PES grows exponentially, with theoretical models suggesting that the number of minima scales as e^(ξN), where N is the number of atoms and ξ is a system-dependent constant [15]. This complexity creates a formidable sampling barrier for conventional computational methods.

Automated PES sampling algorithms have emerged as essential tools for addressing these challenges, enabling researchers to efficiently locate global minima, identify reaction pathways, and quantify kinetic barriers [15]. This guide provides a comprehensive comparison of current methodologies, focusing on their performance in handling high-dimensional spaces and rare events, with specific attention to validation protocols and quantitative benchmarking essential for research in drug development and materials design.

Comparative Analysis of Sampling Methodologies

Method Classification and Key Characteristics

Table 1: Classification of Automated PES Sampling Approaches

| Method Category | Representative Algorithms | Theoretical Basis | Dimensionality Handling | Rare Event Efficiency |
|---|---|---|---|---|
| Enhanced Sampling with ML | MetaD, Steered MD, Umbrella Sampling [14] [16] | Statistical Mechanics | ML-derived Collective Variables reduce dimensionality [14] | Active learning targets uncertain regions [16] [17] |
| Stochastic Global Optimization | Genetic Algorithms, Basin Hopping, Simulated Annealing [15] | Evolutionary Algorithms/Monte Carlo | Population-based parallel search [15] | Temperature protocols enhance barrier crossing [15] |
| Deterministic Global Optimization | Single-Ended Methods, GRRM [15] | Gradient/Curvature Analysis | Systematic following of reaction paths [15] | Direct localization of transition states [15] |
| Hybrid ML-Enhanced | ARplorer, ArcaNN, Differentiable Sampling [6] [17] [8] | Quantum Mechanics + ML Guidance | Chemical logic filters search space [6] | Enhanced sampling targets high-energy regions [8] |

Quantitative Performance Comparison

Table 2: Performance Benchmarking Across Methodologies

| Method | Activation Energy Error (kcal/mol) | Configuration Sampling Efficiency | Computational Cost (Relative to DFT) | System Size Limitations (Atoms) |
|---|---|---|---|---|
| Active Learning NNPs [16] | <1.0 | High (Targeted sampling) | 3-5 orders of magnitude faster [16] | Thousands (with locality approximation) [8] |
| Enhanced Sampling with CVs [14] | 1-3 (CV-dependent) | Medium-High (with good CVs) | 2-4 orders of magnitude faster [14] | Hundreds to thousands [14] |
| Genetic Algorithms [15] | N/A (Finds minima) | High (Broad exploration) | 1-3 orders of magnitude faster [15] | Hundreds (scaling with population) |
| LLM-Guided (ARplorer) [6] | System-dependent | Very High (Filtered search) | DFT-level accuracy with enhanced efficiency [6] | Complex organometallics demonstrated [6] |

Experimental Protocols and Validation Frameworks

Active Learning for Neural Network Potentials

Protocol Overview: The iterative active learning (AL) framework combines neural network potentials (NNPs) with enhanced sampling to systematically improve rare event prediction [16] [8]. This methodology addresses the critical limitation of conventional NNPs, which typically perform poorly outside their training domain and fail catastrophically for rare events [16] [17].

Key Methodological Steps:

- Initialization: Train an ensemble of NNPs on an initial dataset of reference configurations

- Uncertainty Quantification: Employ committee disagreement to estimate prediction reliability

- Enhanced Sampling: Use steered molecular dynamics or other biasing methods to explore configuration space

- Configuration Selection: Apply criteria combining uncertainty metrics and structural similarity

- Iterative Refinement: Retrain models with expanded dataset until target accuracy is achieved [16]

Validation Metrics: Success is quantified through activation energy errors (<1 kcal/mol target), force prediction accuracy, and stability in production molecular dynamics simulations [16]. The ArcaNN framework extends this protocol through automated enhanced sampling generation of training sets specifically for reactive systems [8].

Diagram 1: Active Learning Workflow for NNP Development. This iterative process systematically expands the training set to incorporate rare event configurations.

Enhanced Sampling with Collective Variables

Protocol Overview: Enhanced sampling methods accelerate rare events by biasing simulations along carefully chosen collective variables (CVs)—low-dimensional descriptors of slow system modes [14]. Machine learning has transformed CV construction through data-driven approaches that automatically identify relevant system features.

Methodological Framework:

- CV Identification: Use dimensionality reduction techniques (autoencoders, non-linear PCA) on simulation data to extract relevant CVs [14]

- Biasing Potential: Apply well-tempered metadynamics or adaptive biasing forces to flatten energy landscape along CVs

- Free Energy Calculation: Reconstruct unbiased free energy surfaces through reweighting schemes

- Path Sampling: Identify mechanistic pathways between metastable states

Validation Approach: Assess convergence through free energy profile stability, committor analysis for transition states, and comparison with experimental kinetics where available [14]. The quality of ML-derived CVs is validated by their ability to discriminate between metastable states and describe reaction mechanisms [14].
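The free energy reconstruction step can be illustrated with the basic relation F(s) = -k_B T ln p(s), where p(s) is the probability density of the collective variable s. The sketch below builds p(s) from a histogram and accepts optional per-sample weights for reweighting biased simulations. How those weights are obtained depends on the biasing method and is not shown here. The function name and the toy data are assumptions.

```python
import numpy as np

KB_KCAL = 1.987204e-3   # Boltzmann constant in kcal/(mol K)

def free_energy_profile(cv_samples, bins, temperature=300.0, weights=None):
    """Free energy along a CV from a (possibly reweighted) histogram, in kcal/mol.

    The profile is shifted so that its lowest finite value is zero.
    Empty bins are reported as +inf.
    """
    density, edges = np.histogram(cv_samples, bins=bins, weights=weights, density=True)
    centers = 0.5 * (edges[:-1] + edges[1:])
    with np.errstate(divide="ignore"):
        free_energy = -KB_KCAL * temperature * np.log(density)
    free_energy -= free_energy[np.isfinite(free_energy)].min()
    return centers, free_energy

# Toy data: state A (s = 0.25) is visited ten times more often than state B (s = 0.75).
samples = np.array([0.25] * 100 + [0.75] * 10)
centers, profile = free_energy_profile(samples, bins=[0.0, 0.5, 1.0])
print(centers, profile)
```

A practical convergence test is to recompute the profile on successive blocks of the trajectory and check that the free energy differences between states stop drifting.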

LLM-Guided Reaction Pathway Exploration

Protocol Overview: The ARplorer program integrates quantum mechanics with rule-based methodologies underpinned by large language model (LLM)-assisted chemical logic [6]. This approach combines the precision of quantum mechanical calculations with chemically intelligent pathway filtering.

Implementation Details:

- Chemical Logic Curation: LLMs process scientific literature to generate general chemical knowledge and system-specific reaction patterns

- Active Site Identification: Pybel module compiles active atom pairs and potential bond-breaking locations

- Parallel Multi-step Search: Execute simultaneous reaction pathway exploration with energy-based filtering

- Transition State Validation: Intrinsic reaction coordinate (IRC) analysis confirms connection between reactants and products [6]

Performance Validation: Method effectiveness is demonstrated through case studies including organic cycloadditions, asymmetric Mannich-type reactions, and organometallic Pt-catalyzed reactions, with comparison to established theoretical and experimental results [6].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Computational Tools for Automated PES Sampling

| Tool/Category | Representative Examples | Primary Function | Application Context |
|---|---|---|---|
| ML Potential Frameworks | ANI, DeePMD, MACE [16] [8] | High-dimensional PES fitting | Large-scale MD with quantum accuracy [16] |
| Enhanced Sampling Packages | PLUMED, SSD [14] | Collective variable-based biasing | Rare event acceleration in biomolecules [14] |
| Active Learning Platforms | DP-GEN, ArcaNN, ChecMatE [16] [8] | Iterative dataset expansion | Automated training of reactive MLIPs [8] |
| Global Optimization Software | GRRM, GMIN, LASP [15] | Structure prediction and pathway exploration | Nanoclusters and complex molecular systems [15] |
| Quantum Chemistry Codes | Gaussian, ORCA, GFN2-xTB [6] | Reference energy/force calculations | Training data generation and method validation [6] |

Integrated Workflows for Challenging Systems

Reactive Machine Learning Interatomic Potentials

For chemically reactive systems in condensed phases, the ArcaNN framework demonstrates how enhanced sampling can be integrated with active learning to generate comprehensive training sets [8]. The methodology addresses the critical challenge of sampling high-energy transition states that are rarely visited in conventional molecular dynamics.

Diagram 2: Integrated Workflow for Reactive MLIP Development. This framework ensures uniform accuracy along the complete reaction coordinate.

Application Case Study: For a nucleophilic substitution reaction in solution, this approach achieved uniform prediction errors (<1 kcal/mol) across the entire reaction coordinate, including the transition state region [8]. The resulting potentials enabled nanosecond-scale reactive simulations with quantum accuracy, demonstrating the capability to predict both thermodynamic and kinetic properties in complex environments.

Differentiable Sampling for Efficient Exploration

A recent innovation in the field, differentiable sampling using adversarial attacks on uncertainty metrics, enables direct navigation to high-likelihood, high-uncertainty configurations without exhaustive molecular dynamics simulations [17]. This approach inverts the traditional sampling paradigm by using gradient-based optimization to actively seek configurations where model performance is poor.

Implementation: By treating atomic coordinates as differentiable parameters and maximizing committee-based uncertainty metrics subject to likelihood constraints, the method efficiently identifies transition states and rare event configurations [17]. When combined with active learning loops, this technique bootstraps and improves neural network potentials while significantly reducing calls to computationally expensive ground-truth methods.

Performance: Demonstrated applications include sampling of kinetic barriers for nitrogen inversion, collective variables in alanine dipeptide, and supramolecular interactions in zeolite-molecule systems [17]. The approach provides substantial efficiency gains over traditional molecular dynamics for exploring poorly characterized regions of the potential energy surface.
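The idea behind differentiable sampling can be illustrated on a one-dimensional toy problem. The sketch below is not the published implementation. It assumes a hypothetical three-member committee with analytic energies, combines likelihood and uncertainty as a product (one possible reading of "subject to likelihood constraints"), and uses finite differences where a real workflow would use automatic differentiation through the neural networks.

```python
import numpy as np

# Hypothetical committee: E_k(x) = x**2 + c_k * x**3. The members agree near
# x = 0 (the "training region") and diverge as |x| grows.
COEFFS = np.array([-0.1, 0.0, 0.1])

def committee_energies(x):
    return x**2 + COEFFS * x**3

def log_objective(x, kT=1.0):
    """log[ p(x) * sigma^2(x) ] with p(x) proportional to exp(-mean energy / kT)."""
    energies = committee_energies(x)
    log_likelihood = -energies.mean() / kT
    log_uncertainty = np.log(energies.var() + 1e-300)   # guard against log(0)
    return log_likelihood + log_uncertainty

def adversarial_search(x0, step=0.05, n_steps=500, h=1e-5):
    """Gradient ascent on the objective using a central finite difference."""
    x = float(x0)
    for _ in range(n_steps):
        grad = (log_objective(x + h) - log_objective(x - h)) / (2.0 * h)
        x += step * grad
    return x

x_adv = adversarial_search(0.5)
print(x_adv, log_objective(0.5), log_objective(x_adv))
```

For this toy committee the objective reduces to -x² + 6 ln|x| plus a constant, so the search settles near x = √3. That point is uncertain enough to be informative yet still thermally plausible, which is the trade-off the method is designed to find.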

The validation of automated PES sampling algorithms requires multifaceted assessment of their performance across several domains: accuracy in predicting kinetic parameters (activation energies, reaction rates), efficiency in configuration space exploration, transferability across related chemical systems, and robustness in production simulations [16] [8]. Current methodologies show particular strength in different aspects of this challenge—active learning NNPs excel in achieving quantum accuracy for targeted processes, LLM-guided approaches enable efficient navigation of complex reaction networks, and enhanced sampling methods provide robust thermodynamic characterization.

Future methodology development will likely focus on increasing automation through end-to-end workflows, improving uncertainty quantification for reliable adaptive sampling, and enhancing transferability through better descriptors and architecture designs [14] [8]. As these computational tools mature, their integration with experimental validation will be crucial for establishing comprehensive benchmarks, particularly for pharmaceutical applications where predicting rare events like ligand binding and conformational changes directly impacts drug discovery pipelines.

Global Minima, Transition States, and Reaction Pathways

The exploration of Potential Energy Surfaces (PES) is fundamental to computational chemistry and materials science, enabling the prediction of reaction mechanisms, material properties, and kinetic parameters. Global minima represent the most stable configurations of a system, transition states (TS) are first-order saddle points on the PES that define energy barriers for chemical reactions, and reaction pathways describe the minimum energy paths connecting reactants, transition states, and products. Accurate sampling of these features is crucial for rational design in catalyst development, drug discovery, and functional materials engineering.

Traditional computational methods, including density functional theory (DFT) and quantum chemistry calculations, have provided valuable insights but face significant limitations in computational cost and scalability, particularly for complex systems with vast configurational spaces. The recent integration of machine learning (ML) and artificial intelligence (AI) has revolutionized PES sampling, enabling rapid exploration of previously inaccessible regions with near-quantum accuracy at dramatically reduced computational cost. This guide objectively compares the performance, methodologies, and applications of leading automated PES sampling algorithms, providing researchers with a framework for selecting appropriate tools based on specific scientific objectives.

Comparative Analysis of Key Algorithms

Table 1: Performance Comparison of Automated PES Sampling Algorithms

| Algorithm | Primary Function | Computational Efficiency | Key Metrics | Reported Performance | Applicable System Size |
|---|---|---|---|---|---|
| Self-Optimizing MLIP (ACNN) [18] | Crystal structure prediction & global minima search | 4 orders of magnitude speedup vs. DFT | Structure prediction accuracy, Sampling completeness | Exploration of ~10 million configurations in Mg–Ca–H and Be–P–N–O systems | Multi-component complex materials |
| React-OT [19] | Transition state generation | 0.4 seconds per TS generation | Structural RMSD: 0.044-0.103 Å, Barrier height error: 0.74-1.06 kcal/mol | Median RMSD 0.053 Å, 25% improvement with pretraining | Organic molecules (up to 7 heavy atoms) |
| Action-CSA [20] | Multiple reaction pathway finding | More efficient than long MD simulations | Pathway identification completeness, Transition time accuracy | Identified 8 pathways for alanine dipeptide consistent with 500 μs Langevin dynamics | Biomolecular systems & flexible molecules |
| ARplorer [6] | Multi-step reaction pathway exploration | Efficient filtering reduces unnecessary computations | Success in identifying complex multi-step mechanisms | Demonstrated for organic cycloaddition, asymmetric Mannich-type, and Pt-catalyzed reactions | Organic and organometallic systems |

Table 2: Methodological Approaches and Validation of PES Sampling Algorithms

| Algorithm | Computational Approach | ML Architecture | Sampling Strategy | Validation Method |
|---|---|---|---|---|
| Self-Optimizing MLIP (ACNN) [18] | Attention-coupled neural network potential | Attention-coupled neural network (ACNN) with atomic cluster expansion | Self-evolving pipeline with iterative refinement | Comparison with DFT calculations on ternary and quaternary systems |
| React-OT [19] | Optimal transport theory | Object-aware SE(3) equivariant scoring network (LEFTNet) | Deterministic transport from linear interpolation of reactants and products | Structural RMSD and barrier height error on Transition1x test set (1,073 reactions) |
| Action-CSA [20] | Onsager-Machlup action optimization | Not applicable | Conformational space annealing with crossovers and mutations | Comparison with long Langevin dynamics simulations (500 μs) |
| ARplorer [6] | Quantum mechanics + rule-based | LLM-guided chemical logic with SMARTS patterns | Active-learning TS sampling with energy filtering | Identification of known multi-step mechanisms in organic and organometallic reactions |

Experimental Protocols and Workflows

Self-Optimizing MLIP for Crystal Structure Prediction

The automated crystal structure prediction framework utilizing the Attention-Coupled Neural Network (ACNN) potential implements a self-optimizing workflow for global minima search in complex materials [18]. The methodology begins with initial dataset generation using active learning to sample diverse local minima across the potential energy surface. The ACNN architecture explicitly incorporates translational, rotational, and permutational invariance for energy predictions, and rotational equivariance for forces and stress tensors, with atomic energies expanded using n-body correlation functions within the atomic cluster expansion framework [18].

The self-evolving pipeline operates iteratively: (1) MLIP-driven crystal structure prediction explores configurational space, (2) candidate structures are screened, (3) anomalies are identified, and (4) the MLIP is autonomously refined using newly acquired data, progressively expanding its generalizability to unknown structures. This workflow was validated on Mg-Ca-H ternary and Be-P-N-O quaternary systems, demonstrating capability to explore nearly 10 million configurations with four orders of magnitude speedup compared to DFT while maintaining ab initio accuracy [18].

React-OT for Transition State Generation

React-OT implements an optimal transport approach for deterministic transition state generation from reactant and product structures [19]. The experimental protocol utilizes the Transition1x dataset containing 10,073 organic reactions with DFT-calculated TS structures for training and evaluation. The method employs an object-aware SE(3) equivariant transition kernel to preserve all required symmetries in elementary reactions.

The workflow begins with linear interpolation between reactant and product geometries as the initial guess. React-OT then simulates the sampling process as an ordinary differential equation (rather than a stochastic process), transporting the initial structure to the precise transition state through optimal transport theory. For inference, the model requires only fixed reactant and product conformations and generates the TS structure in a single deterministic pass, eliminating the need for multiple sampling runs and ranking models [19].

Validation metrics include structural RMSD between generated and reference TS structures, and barrier height error calculated from the energy difference between reactants and the transition state. React-OT achieves median structural RMSD of 0.053 Å and median barrier height error of 1.06 kcal/mol, improved to 0.044 Å and 0.74 kcal/mol with pretraining on a larger dataset computed with GFN2-xTB [19].
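The two validation metrics named above can be computed as in the following minimal sketch. It assumes that the generated and reference structures share the same atom ordering and have already been superimposed (for example with a Kabsch alignment, which is not shown), and that energies are supplied in kcal/mol; the function names are hypothetical and are not taken from the React-OT code.

```python
import math
import statistics

def rmsd(coords_a, coords_b):
    """RMSD between two pre-aligned structures given as lists of (x, y, z) tuples."""
    if len(coords_a) != len(coords_b):
        raise ValueError("structures must have the same number of atoms")
    squared = sum(
        (a - b) ** 2
        for atom_a, atom_b in zip(coords_a, coords_b)
        for a, b in zip(atom_a, atom_b)
    )
    return math.sqrt(squared / len(coords_a))

def barrier_height(e_reactant, e_ts):
    """Barrier height: transition state energy minus reactant energy."""
    return e_ts - e_reactant

def barrier_height_error(e_reactant, e_ts_generated, e_ts_reference):
    """Absolute difference between generated and reference barrier heights."""
    return abs(
        barrier_height(e_reactant, e_ts_generated)
        - barrier_height(e_reactant, e_ts_reference)
    )

# Illustrative numbers only (not taken from the benchmark).
reference_ts = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
generated_ts = [(0.0, 0.0, 0.1), (1.0, 0.0, -0.1)]
print(rmsd(generated_ts, reference_ts))

# Benchmarks typically report the median over a test set of reactions.
errors = [barrier_height_error(0.0, gen, ref) for gen, ref in [(25.3, 24.5), (31.0, 30.1)]]
print(statistics.median(errors))
```

Reporting medians rather than means, as the cited benchmark does, keeps a small number of badly failed reactions from dominating the summary.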

Action-CSA for Multiple Reaction Pathways

Action-CSA (Conformational Space Annealing) implements a global optimization approach for identifying multiple reaction pathways between fixed initial and final states [20]. The methodology is based on optimization of the Onsager-Machlup action, which determines the relative probability of pathways in diffusive processes.
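As a rough illustration of how such an action ranks pathways, the sketch below evaluates a discretized Onsager-Machlup action for a one-dimensional path under overdamped Langevin dynamics. It is a simplification under stated assumptions: the divergence (Laplacian) correction term is omitted, the path is one-dimensional, and the parameter names (`diffusion`, `kT`, `grad_v`) are hypothetical rather than taken from the Action-CSA implementation.

```python
def onsager_machlup_action(path, grad_v, dt, diffusion, kT=1.0):
    """Discretized OM action: sum of dt / (4 D) * (velocity + (D / kT) * dV/dx)^2.

    path   : list of positions at equally spaced times
    grad_v : callable returning dV/dx at a position
    """
    drift_scale = diffusion / kT
    action = 0.0
    for x0, x1 in zip(path[:-1], path[1:]):
        velocity = (x1 - x0) / dt
        # Deviation of the observed step from the deterministic drift -(D/kT) dV/dx.
        residual = velocity + drift_scale * grad_v(x0)
        action += dt / (4.0 * diffusion) * residual ** 2
    return action

def double_well_gradient(x):
    """Gradient of the toy potential V(x) = (x^2 - 1)^2."""
    return 4.0 * x * (x * x - 1.0)

# Straight-line path from the left well to the right well in 20 steps.
n_steps = 20
path = [-1.0 + 2.0 * i / n_steps for i in range(n_steps + 1)]
print(onsager_machlup_action(path, double_well_gradient, dt=0.05, diffusion=1.0))
```

Within this picture the relative probability of a path scales as exp(-S), so lower-action paths are the more probable ones.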

The computational procedure incorporates: (1) Pathway representation as chains of states connecting endpoints, (2) Global optimization using conformational space annealing, which combines genetic algorithms, simulated annealing, and Monte Carlo with minimization, and (3) Local optimization of pathways using classical action without requiring second derivatives of the potential energy [20].

Key to the method is the maintenance of a diverse "bank" of pathways that undergoes iterative refinement through crossover operations (mixing segments of different pathways) and mutations (local perturbations). This approach enables efficient exploration of pathway space regardless of energy barrier heights. Validation against 500μs Langevin dynamics simulations for alanine dipeptide demonstrated accurate recovery of 8 distinct pathways with correct rank ordering and transition time distributions [20].
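The crossover and mutation operations on a bank of pathways can be sketched as follows. This is a toy version under several assumptions: pathways are one-dimensional lists of states with shared endpoints, the bank update simply replaces the worst member (the actual conformational space annealing update also uses a distance cutoff between pathways), and `path_length` is a placeholder objective standing in for the action.

```python
import random

def crossover(path_a, path_b, rng):
    """Child takes the leading segment of path_a and the trailing segment of path_b."""
    cut = rng.randrange(1, len(path_a) - 1)  # keep at least one state from each parent
    return path_a[:cut] + path_b[cut:]

def mutate(path, rng, scale=0.1):
    """Apply Gaussian perturbations to interior states; endpoints stay fixed."""
    interior = [x + rng.gauss(0.0, scale) for x in path[1:-1]]
    return [path[0]] + interior + [path[-1]]

def path_length(path):
    """Placeholder objective (total path length); a real run would use the action."""
    return sum(abs(b - a) for a, b in zip(path, path[1:]))

def update_bank(bank, child, objective):
    """Replace the worst bank member if the child has a lower objective value."""
    worst = max(range(len(bank)), key=lambda i: objective(bank[i]))
    if objective(child) < objective(bank[worst]):
        bank[worst] = child
        return True
    return False

rng = random.Random(0)
bank = [
    [0.0, 0.9, 0.1, 0.8, 1.0],
    [0.0, 0.5, 0.7, 0.9, 1.0],
]
child = mutate(crossover(bank[0], bank[1], rng), rng)
update_bank(bank, child, path_length)
```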

ARplorer for Automated Reaction Pathway Exploration

ARplorer integrates quantum mechanical calculations with rule-based approaches guided by large language models (LLMs) for automated exploration of multi-step reaction pathways [6]. The algorithm operates recursively: (1) Active site identification analyzes molecular structures to identify potential bond formation/breaking locations; (2) Structure optimization employs active-learning sampling and potential energy assessments; (3) Intrinsic reaction coordinate (IRC) analysis derives new reaction pathways from optimized structures.

The chemical logic implementation combines two components: pre-generated general chemical logic derived from literature sources (books, databases, research articles), and system-specific chemical logic generated by specialized LLMs using SMILES representations of reaction systems. This dual approach enables both broadly applicable and case-specific reaction exploration [6].

The computational framework integrates GFN2-xTB for rapid PES generation with Gaussian 09 algorithms for TS searching, though it maintains flexibility to utilize different computational methods. For efficiency, ARplorer implements energy filtering and parallel computing to minimize unnecessary computations, successfully demonstrating application to organic cycloadditions, asymmetric Mannich-type reactions, and organometallic Pt-catalyzed reactions [6].

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools for PES Sampling

| Tool/Resource | Type | Primary Function | Key Features | Accessibility |
|---|---|---|---|---|
| ACNN Potential [18] | Machine Learning Interatomic Potential | Energy and force prediction | Attention mechanism, n-body correlations, SE(3) invariance | Research implementation |
| React-OT [19] | Optimal Transport Model | Transition state generation | Deterministic inference, 0.4s per TS, object-aware equivariance | Research code |
| Action-CSA [20] | Global Optimization Algorithm | Multiple pathway finding | Onsager-Machlup action optimization, conformational space annealing | Research implementation |
| ARplorer [6] | Automated Reaction Explorer | Multi-step pathway discovery | LLM-guided chemical logic, QM/rule-based hybrid approach | Python/Fortran program |
| Transition1x Dataset [19] | Reaction Database | Training and benchmarking | 10,073 organic reactions with DFT TS structures | Research dataset |
| GFN2-xTB [6] [19] | Semi-empirical Quantum Method | Rapid PES generation | Low-cost electronic structure calculations | Open source |
| SMARTS Patterns [6] | Chemical Pattern Language | Reaction rule encoding | Molecular substructure matching for chemical logic | Standard cheminformatics |
| LLM Chemical Logic [6] | Knowledge Base | Reaction guidance | Literature-derived and system-specific reaction rules | Specialized implementation |

The validation of automated PES sampling algorithms demonstrates significant advances in computational efficiency and accuracy across diverse chemical domains. Self-optimizing MLIPs enable comprehensive exploration of complex material configurational spaces, React-OT provides deterministic transition state generation with exceptional speed and accuracy, Action-CSA facilitates global discovery of multiple reaction pathways, and ARplorer integrates chemical knowledge for multi-step reaction exploration. Each algorithm offers distinct advantages tailored to specific research objectives, from solid-state materials to solution-phase organic reactions.

Future development should focus on several key areas: (1) Improved generalizability across broader chemical spaces, particularly for organometallic and heterogeneous catalytic systems; (2) Enhanced uncertainty quantification to guide automated sampling and model refinement; (3) Integration of multi-fidelity data combining high-accuracy quantum calculations with lower-cost methods; (4) Standardized benchmarking protocols and datasets to enable objective comparison across methodologies [21] [19]. As these algorithms mature, they will increasingly enable predictive computational design of novel materials and catalysts, accelerating discovery across chemical sciences and drug development.

Current Algorithms and Automated Workflows for PES Exploration

Stochastic vs. Deterministic Global Optimization Methods

Global optimization methods are fundamental for navigating complex search spaces in scientific and engineering disciplines, from aerospace guidance systems to materials discovery and drug development. These algorithms are broadly categorized into deterministic and stochastic approaches, each with distinct philosophical underpinnings and performance characteristics. Deterministic methods, such as branch-and-bound and DIRECT-type algorithms, provide rigorous, mathematically guaranteed convergence but often at high computational cost. In contrast, stochastic methods—including evolutionary algorithms, Bayesian optimization, and random search—use probabilistic processes to explore vast solution spaces efficiently, offering good average performance without convergence guarantees. This guide objectively compares their performance, supported by experimental data, within the critical context of developing automated Potential Energy Surface (PES) sampling algorithms.

Core Methodologies and Theoretical Foundations

Deterministic Global Optimization

Deterministic algorithms are designed to find the global optimum with mathematical certainty for problems satisfying specific conditions, such as Lipschitz continuity. They operate on fixed rules, ensuring reproducible results.

- Key Algorithms: DIRECT (Dividing RECTangles) and its variants, branch-and-bound, and spatial branch-and-bound are prominent examples [22]. These methods systematically partition the search space, eliminating regions that cannot contain the global optimum.

- Mathematical Basis: A foundational approach involves solving a recurrence relation for the density distribution of a downhill random walk to predict the average number of steps needed to hit a target region in a monotonically decreasing energy landscape [23].

- Recent Hybrids: A growing trend embeds deterministic solvers within larger frameworks. For instance, deterministic global optimization can be used to solve the inner acquisition function in Bayesian optimization, guaranteeing the optimal selection of the next sample point [24].
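To make the idea of eliminating regions that cannot contain the global optimum concrete, the following is a minimal one-dimensional branch-and-bound sketch based on a Lipschitz lower bound. It is not DIRECT itself (DIRECT does not require a known Lipschitz constant); the constant, the tolerance, and the toy objective are assumptions chosen purely for illustration.

```python
import heapq
import math

def lipschitz_minimize(f, lower, upper, lipschitz, tol=1e-3, max_evals=10_000):
    """Minimize f on [lower, upper], assuming |f(x) - f(y)| <= lipschitz * |x - y|."""
    def bound(a, fa, b, fb):
        # Lowest value a Lipschitz function passing through (a, fa), (b, fb) can reach on [a, b].
        return 0.5 * (fa + fb) - 0.5 * lipschitz * (b - a)

    fa, fb = f(lower), f(upper)
    best_x, best_f = (lower, fa) if fa <= fb else (upper, fb)
    heap = [(bound(lower, fa, upper, fb), lower, fa, upper, fb)]
    evals = 2
    while heap and evals < max_evals:
        lb, a, fa, b, fb = heapq.heappop(heap)
        if lb >= best_f - tol:
            break  # no remaining interval can improve on the incumbent by more than tol
        mid = 0.5 * (a + b)
        fm = f(mid)
        evals += 1
        if fm < best_f:
            best_x, best_f = mid, fm
        for left, fl, right, fr in ((a, fa, mid, fm), (mid, fm, b, fb)):
            child_lb = bound(left, fl, right, fr)
            if child_lb < best_f - tol:  # otherwise the sub-interval is discarded
                heapq.heappush(heap, (child_lb, left, fl, right, fr))
    return best_x, best_f, evals

def toy_objective(x):
    """Multi-minimum toy function; its derivative is bounded by 3.5 in magnitude."""
    return math.sin(3.0 * x) + 0.5 * x

best_x, best_f, evals = lipschitz_minimize(toy_objective, 0.0, 4.0, lipschitz=3.5)
print(best_x, best_f, evals)
```

The guarantee (the returned value lies within `tol` of the global minimum) holds only if the supplied Lipschitz constant is valid and the evaluation budget is not exhausted. This is the kind of condition under which deterministic methods offer certainty, and it is rarely known a priori for a real PES.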

Stochastic Global Optimization

Stochastic methods incorporate randomness to explore the search space. They do not offer deterministic guarantees but are often more computationally tractable for high-dimensional or noisy problems.

- Key Algorithms: This category includes a wide range of techniques, such as Genetic Algorithms (GA), Particle Swarm Optimization (PSO), Differential Evolution, and Bayesian Optimization (BO) [25] [26].

- Exploration-Exploitation: Methods like Bayesian Optimization balance exploration (probing uncertain regions) and exploitation (refining known good solutions) using an acquisition function, such as Expected Improvement (EI) or Lower Confidence Bound (LCB) [24] [26].

- Theoretical Insight: The efficiency of a pure stochastic search can be analyzed by modeling it as a biased random walk. The number of rejected steps between successful downhill moves increases significantly as the search nears the target, quantifying the method's intrinsic inefficiency [23].
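The two acquisition functions mentioned above have simple closed forms when the surrogate's prediction at a candidate point is Gaussian with mean `mu` and standard deviation `sigma`. The sketch below uses the standard formulas for a minimization problem; the function names and the value of `kappa` are illustrative choices, not taken from the cited studies.

```python
import math

def normal_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def expected_improvement(mu, sigma, best_observed):
    """Expected Improvement for minimization: E[max(best_observed - f, 0)]."""
    if sigma <= 0.0:
        return max(best_observed - mu, 0.0)
    z = (best_observed - mu) / sigma
    return (best_observed - mu) * normal_cdf(z) + sigma * normal_pdf(z)

def lower_confidence_bound(mu, sigma, kappa=2.0):
    """LCB for minimization: optimistic estimate; smaller values are more promising."""
    return mu - kappa * sigma

# Two hypothetical candidates given the best observed energy so far (0.0):
# one slightly better with low uncertainty, one worse on average but very uncertain.
best = 0.0
exploit = expected_improvement(mu=-0.05, sigma=0.01, best_observed=best)
explore = expected_improvement(mu=0.20, sigma=0.50, best_observed=best)
print(exploit, explore)
```

In this example the uncertain candidate scores higher, which shows how the acquisition function can favor exploration even when the predicted mean is worse than the incumbent.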

Performance Comparison and Experimental Data

Computational Benchmark Studies

Large-scale numerical benchmarks provide direct comparisons. One extensive study evaluated 64 derivative-free deterministic algorithms against state-of-the-art stochastic solvers on 800 test problems generated by the GKLS generator and 397 problems from the DIRECTGOLib v1.2 collection. The results, summarized in the table below, highlight a clear performance dichotomy [22].

Table 1: Benchmark Results from DIRECTGOLib v1.2 and GKLS Tests

| Algorithm Type | Performance on GKLS-type & Low-Dimensional Problems | Performance in Higher Dimensions | Computational Cost |
|---|---|---|---|
| Deterministic | Excellent | Less efficient | High (rigorous bounding) |
| Stochastic | Less efficient | More efficient | Lower (no guarantees) |

The study concluded that deterministic algorithms, particularly modern DIRECT-type methods, excel on structured, low-dimensional problems. In contrast, stochastic algorithms show superior efficiency when scaling to higher-dimensional search spaces [22].

Real-World Application: Aerospace Guidance Trajectories

A comparative study in aerospace engineering tested six stochastic evolutionary algorithms, one Bayesian optimization method, and three deterministic search algorithms for real-time generation of guidance trajectories for suborbital spaceplanes. The algorithms were evaluated on computational complexity, robustness, and the diversity of solutions generated [25].

The findings demonstrated that reliable, real-time trajectory generation is feasible when the optimizer and its settings are carefully chosen. Furthermore, the stochastic and heuristic methods were particularly adept at generating a diverse set of trajectories connecting the initial and terminal conditions, a valuable property for operational flexibility [25].

Performance in Materials Science and PES Sampling

The exploration of Potential Energy Surfaces is a prime application area where these methods are benchmarked.

- Automated Frameworks: Software packages like autoplex and Asparagus automate the construction of machine-learned interatomic potentials (MLIPs), a process that relies heavily on global optimization for PES sampling. These frameworks often leverage random structure searching (RSS), a stochastic method, to efficiently explore the configurational space [27] [28].

- The Rise of Hybrid AI Models: Newer approaches, such as Deep Active Optimization (DAO), highlight a shift towards using deep neural networks as surrogates. For example, the DANTE algorithm combines a deep neural surrogate with a tree search method guided by a data-driven upper confidence bound (DUCB). This hybrid strategy has successfully tackled complex, high-dimensional problems (up to 2000 dimensions) with limited data, a domain where traditional stochastic and deterministic methods struggle [26].

Table 2: Performance in Potential Energy Surface (PES) Sampling

| Method / Framework | Core Approach | Key Strength in PES Context | Reference |
|---|---|---|---|
| autoplex | Stochastic (RSS) | Automated, high-throughput exploration of diverse crystal structures and stoichiometries. | [27] |
| Asparagus | Agnostic (User-Guided) | Streamlined, reproducible workflow for building ML-PES; lowers entry barrier. | [28] |
| DANTE | Hybrid (Neural-Surrogate) | Solves high-dimensional problems with non-cumulative objectives and very limited data. | [26] |
| AiiDA-TrainsPot | Stochastic (Active Learning) | Automated NNIP training with calibrated committee models for uncertainty estimation. | [29] |

Experimental Protocols in Practice

Protocol for Benchmarking Optimization Solvers

The large-scale benchmark study [22] provides a template for rigorous comparison:

- Problem Generation: Use a standardized test suite like the GKLS generator to create hundreds of benchmark problems with known optima.

- Solver Configuration: Test a wide array of solvers (e.g., 64 deterministic and several stochastic) using their default or well-tuned settings.

- Performance Metric: Define a primary metric, such as the number of successful runs (finding the global optimum within a tolerance) or the average number of function evaluations to convergence.

- Computational Execution: Execute a massive number of solver runs (e.g., over 239,400) to ensure statistical significance, which required over 531 days of single CPU time in the cited study.

- Result Aggregation: Analyze results stratified by problem type (e.g., GKLS vs. traditional) and dimensionality to identify performance trends.
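The metric and aggregation steps of this protocol can be sketched as a small summary routine. The record fields used here (`solver`, `dimension`, `best_value`, `known_optimum`, `evaluations`) and the tolerance are hypothetical; the cited study's actual data format and success criterion may differ.

```python
from collections import defaultdict

def summarize_runs(runs, tolerance=1e-4):
    """Group runs by (solver, dimension) and report success rate and mean cost.

    A run counts as successful if its best value is within `tolerance`
    of the known global optimum of the benchmark problem.
    """
    groups = defaultdict(list)
    for run in runs:
        groups[(run["solver"], run["dimension"])].append(run)

    summary = {}
    for key, group in groups.items():
        solved = [r for r in group if abs(r["best_value"] - r["known_optimum"]) <= tolerance]
        mean_evals = sum(r["evaluations"] for r in solved) / len(solved) if solved else None
        summary[key] = {
            "success_rate": len(solved) / len(group),
            "mean_evaluations": mean_evals,  # averaged over successful runs only
        }
    return summary

# Made-up records for illustration.
example_runs = [
    {"solver": "deterministic", "dimension": 2, "best_value": -1.0, "known_optimum": -1.0, "evaluations": 400},
    {"solver": "deterministic", "dimension": 2, "best_value": -0.5, "known_optimum": -1.0, "evaluations": 1000},
    {"solver": "stochastic", "dimension": 2, "best_value": -1.0, "known_optimum": -1.0, "evaluations": 900},
]
for key, stats in summarize_runs(example_runs).items():
    print(key, stats)
```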

Protocol for Autonomous PES Exploration

The autoplex framework [27] outlines a modern, application-focused protocol:

- Initialization: Start with a small set of initial atomic structures relevant to the target material system.

- Iterative Exploration and Fitting:

  - Exploration: Use Random Structure Searching (RSS) to propose new, diverse candidate structures.

  - Labeling: Evaluate a subset of these structures (e.g., 100 per iteration) with high-fidelity, expensive ab initio (e.g., Density Functional Theory) calculations to generate training data.

  - Training: Fit or retrain a machine-learned interatomic potential (MLIP) like a Gaussian Approximation Potential (GAP) on the accumulated data.

- Validation and Convergence: Test the current MLIP on a set of known crystal structures and monitor the prediction error (e.g., Root Mean Square Error). The loop continues until a target accuracy (e.g., 0.01 eV/atom) is achieved.
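The control flow of the loop above (explore, label, train, validate, repeat until the error target is met) can be sketched with a deliberately simple toy. Everything here is a stand-in and not the autoplex API: a one-dimensional function replaces the ab initio calculation, uniform random sampling replaces RSS, and a piecewise-linear interpolant replaces the MLIP.

```python
import bisect
import math
import random

def reference_energy(x):
    """Stand-in for an expensive ab initio single-point calculation."""
    return math.sin(3.0 * x) + 0.5 * x

def fit_surrogate(labeled):
    """'Train' a piecewise-linear interpolant on a dict of {x: energy} labels."""
    points = sorted(labeled.items())
    xs = [p[0] for p in points]
    es = [p[1] for p in points]

    def predict(x):
        i = min(max(bisect.bisect_right(xs, x), 1), len(xs) - 1)
        t = (x - xs[i - 1]) / (xs[i] - xs[i - 1])
        return es[i - 1] + t * (es[i] - es[i - 1])

    return predict

def rmse(predict, test_points):
    squared = sum((predict(x) - reference_energy(x)) ** 2 for x in test_points)
    return math.sqrt(squared / len(test_points))

def explore_and_fit(target_rmse=0.01, batch_size=10, max_iterations=50, seed=0):
    rng = random.Random(seed)
    labeled = {x: reference_energy(x) for x in (0.0, 2.0, 4.0)}  # initialization
    test_points = [4.0 * i / 200 for i in range(201)]            # fixed validation grid
    model = fit_surrogate(labeled)
    error = rmse(model, test_points)
    iteration = 0
    while error > target_rmse and iteration < max_iterations:
        candidates = [rng.uniform(0.0, 4.0) for _ in range(batch_size)]  # exploration
        for x in candidates:                                             # labeling
            labeled[x] = reference_energy(x)
        model = fit_surrogate(labeled)                                   # training
        error = rmse(model, test_points)                                 # validation
        iteration += 1
    return model, error, len(labeled)

model, error, n_labeled = explore_and_fit()
print(f"RMSE {error:.4f} after labeling {n_labeled} points")
```

The number of labeled points needed to reach the target plays the role of the "structures to convergence" figure that such frameworks report.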

This workflow highlights the central role of stochastic search in the data generation step.

The following table details essential "research reagents"—the software, algorithms, and computational tools—fundamental to conducting research in global optimization and automated PES sampling.

Table 3: Essential Research Toolkit for Global Optimization and PES Sampling

| Tool / Resource | Type | Primary Function | Context of Use |
|---|---|---|---|
| DIRECTGOLib v1.2 | Benchmark Library | A curated collection of test problems for systematic benchmarking of global optimization algorithms. | Provides a standard set of problems (e.g., 397) to ensure fair and reproducible solver comparisons [22]. |
| GKLS Generator | Software Tool | Generates custom benchmark classes of optimization problems with known global minima and local traps. | Used for large-scale computational studies to test algorithm robustness and scalability [22]. |
| Bayesian Optimization | Algorithmic Framework | A stochastic strategy for global optimization of expensive black-box functions using a probabilistic surrogate model. | Ideal for hyperparameter tuning and optimizing experiments/simulations where each evaluation is costly [24] [26]. |
| Random Structure Search (RSS) | Stochastic Method | Explores a material's configurational space by randomly generating and evaluating atomic structures. | Core component in automated PES exploration pipelines like autoplex and AIRSS [27]. |
| Gaussian Approximation Potential (GAP) | Machine Learning Model | A type of MLIP based on Gaussian process regression, prized for its data efficiency and uncertainty quantification. | Used as the surrogate model in frameworks like autoplex to learn from ab initio data [27]. |
| autoplex / Asparagus | Software Framework | Automated, modular workflow packages for the exploration and fitting of machine-learned potential energy surfaces. | Democratizes and streamlines the creation of accurate MLIPs, reducing manual effort [27] [28]. |

The choice between stochastic and deterministic global optimization methods is not a matter of superiority but of strategic application. Deterministic methods provide mathematical certainty and excel in well-defined, lower-dimensional problems, making them valuable for rigorous, verifiable results. Stochastic methods, including modern hybrids like DANTE, offer unparalleled efficiency and scalability for high-dimensional, complex, and noisy landscapes, which are characteristic of real-world scientific problems like PES sampling. The ongoing trend, powerfully illustrated in materials science, is towards automated, data-driven frameworks that leverage stochastic search for exploration and increasingly sophisticated models to guide it. For researchers in drug development and materials science, this means that stochastic and hybrid methods currently offer the most practical and powerful path forward for tackling the immense complexity of molecular and material design.

The exploration of potential energy surfaces (PES) is a fundamental challenge in computational materials science and chemistry, directly impacting applications from catalyst design to drug discovery. Automated frameworks have emerged as critical tools for mapping these complex energy landscapes, reducing manual effort, and systematically improving the accuracy of machine learning interatomic potentials (MLIPs). This guide compares three prominent frameworks—autoplex, LASP, and DP-GEN—focusing on their methodological approaches, performance characteristics, and applicability to different research scenarios. Understanding the capabilities and experimental validation of these tools provides researchers with a foundation for selecting appropriate PES sampling strategies for their specific scientific objectives.

Framework Comparison at a Glance

The table below summarizes the core architectural and application characteristics of the three automated frameworks.

Table 1: Core Characteristics of Automated PES Sampling Frameworks

| Framework | Primary Methodology | Core Innovation | Software Integration | Reported Application Domains |
|---|---|---|---|---|
| autoplex | Random Structure Searching (RSS) | Automated iterative exploration and MLIP fitting using single-point DFT evaluations [27] | atomate2, Materials Project infrastructure [27] | Titanium-oxygen system, SiO₂, water, phase-change materials [27] |
| LASP | Not reported in the cited sources | Not reported in the cited sources | Not reported in the cited sources | Not reported in the cited sources |
| DP-GEN | Active learning | Deep potential generator for iterative dataset construction and model training [30] | DeepMD-kit [30] | General ML interatomic potentials, molecular dynamics workflows [30] |

Experimental Performance and Benchmarking

Quantitative Performance Metrics

Experimental validation of these frameworks typically focuses on their efficiency in achieving target prediction accuracies and the computational resources required. The following table compares key performance indicators as reported in the literature.

Table 2: Experimental Performance Comparison Across Frameworks

| Framework | Accuracy Target | Structures to Convergence | Computational Efficiency | Key Validation Systems |
|---|---|---|---|---|
| autoplex | ~0.01 eV/atom [27] | ~500 (diamond Si), ~few thousand (oS24 Si) [27] | Requires only DFT single-point evaluations, no full relaxations [27] | Silicon allotropes, TiO₂ polymorphs, full Ti-O system [27] |
| DP-GEN | Not reported in the cited source | Not reported in the cited source | Not reported; project metadata only: LGPL-3.0 licensed, ~5.4K monthly PyPI downloads [30] | General ML-IAPs, molecular dynamics workflows [30] |

Domain-Specific Performance Analysis

autoplex demonstrates variable performance across different material systems. For elemental silicon, it achieves the target accuracy of 0.01 eV/atom for the diamond structure with approximately 500 DFT single-point evaluations, while the more complex oS24 allotrope requires a few thousand evaluations [27]. In binary systems like TiO₂, common polymorphs (rutile, anatase) are captured effectively, though the bronze-type (B-) polymorph proves more challenging to learn [27]. When expanding to the full titanium-oxygen system with multiple stoichiometries (Ti₂O₃, TiO, Ti₂O), achieving target accuracy requires significantly more iterations due to increased chemical complexity [27].

DP-GEN employs a different approach centered on active learning for generating deep-learning-based interatomic potential models. As a well-established tool in the ecosystem, it has been applied to numerous systems, though the cited source does not report specific accuracy metrics [30].

Detailed Methodologies and Experimental Protocols

autoplex Workflow and Protocol

The autoplex framework implements an automated iterative process for exploring and learning potential-energy surfaces. The methodology can be visualized as follows:

Diagram 1: autoplex Iterative Workflow

The experimental protocol consists of five critical phases:

- Initialization: Define chemical system and initial parameters.

- Random Structure Search (RSS): Generate diverse initial structures.

- MLIP-Driven Exploration: Use current MLIP to relax structures and explore PES without expensive DFT calculations.

- Selective DFT Validation: Perform single-point DFT calculations on promising structures and add to training data.

- Iterative Refinement: Retrain MLIP with expanded dataset and repeat until target accuracy is achieved.

This approach specifically avoids full DFT relaxations, relying only on single-point evaluations to maximize computational efficiency [27].

DP-GEN Protocol

DP-GEN implements an active learning approach for generating interatomic potentials:

Diagram 2: DP-GEN Active Learning Cycle

Key methodological aspects include:

- Initial Model Training: Begin with a small initial dataset and train preliminary model.

- Exploration Phase: Run molecular dynamics simulations using current model to explore configuration space.

- Uncertainty-Based Selection: Identify configurations with high predictive uncertainty for DFT labeling.

- Iterative Refinement: Incorporate new data into training set and update model.

DP-GEN specifically addresses the challenge of creating comprehensive training sets that cover both typical and rare configurations encountered during simulations [30].
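One common way to realize the uncertainty-based selection step is to measure the disagreement among an ensemble of models on the predicted atomic forces and to label only configurations whose disagreement falls in an intermediate window. The sketch below illustrates that idea; the function names and the threshold values are assumptions for illustration and do not reproduce DP-GEN's interface.

```python
import math

def max_force_deviation(ensemble_forces):
    """Largest per-atom standard deviation of the force vector across models.

    ensemble_forces[m][a] is the (fx, fy, fz) force on atom a predicted by model m.
    """
    n_models = len(ensemble_forces)
    n_atoms = len(ensemble_forces[0])
    worst = 0.0
    for a in range(n_atoms):
        mean = [sum(ensemble_forces[m][a][k] for m in range(n_models)) / n_models for k in range(3)]
        variance = sum(
            sum((ensemble_forces[m][a][k] - mean[k]) ** 2 for k in range(3))
            for m in range(n_models)
        ) / n_models
        worst = max(worst, math.sqrt(variance))
    return worst

def triage(deviation, trust_lo, trust_hi):
    """Sort a configuration by model disagreement (thresholds are user-chosen)."""
    if deviation < trust_lo:
        return "accurate"   # models agree; no new label needed
    if deviation < trust_hi:
        return "candidate"  # informative; send to DFT for labeling
    return "failed"         # likely unphysical; usually discarded

# Two hypothetical models disagreeing slightly on a single atom.
forces = [
    [(1.0, 0.0, 0.0)],
    [(1.2, 0.0, 0.0)],
]
deviation = max_force_deviation(forces)
print(deviation, triage(deviation, trust_lo=0.05, trust_hi=0.20))
```

Discarding the highest-disagreement configurations keeps unphysical structures, which an immature model may generate during exploration, out of the training set.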

The Scientist's Toolkit: Essential Research Reagents

Implementing these automated frameworks requires familiarity with both computational tools and theoretical concepts. The following table outlines key "research reagents" essential for working with automated PES sampling algorithms.

Table 3: Essential Research Reagents for Automated PES Sampling

| Reagent Category | Specific Tools/Concepts | Function in PES Exploration |
|---|---|---|
| MLIP Architectures | Gaussian Approximation Potential (GAP) [27], Deep Potential [30] | Core machine learning models that learn interatomic interactions from quantum mechanical data |
| Sampling Methods | Random Structure Searching (RSS) [27], Active Learning [30] | Algorithms for exploring configuration space and identifying relevant structures |
| Quantum Engine | Density Functional Theory (DFT) | Provides reference data for training and validation of MLIPs |
| Automation Infrastructure | atomate2 [27] | Workflow management for high-throughput computations |
| Reaction Pathway Tools | Nudged Elastic Band (NEB), Growing String Method [31] | Specialized methods for mapping reaction pathways and transition states |

autoplex and DP-GEN represent complementary approaches to automated PES exploration, each with distinct strengths and methodological foundations. autoplex excels in broad configurational space exploration through efficient RSS combined with selective quantum validation, and is particularly effective for mapping complex polymorphic landscapes and multi-component systems. DP-GEN specializes in active learning for interatomic potential development, using uncertainty quantification to iteratively refine models. Framework selection depends significantly on research goals: autoplex offers advantages for initial PES mapping of unknown systems, while DP-GEN provides a robust pipeline for production-ready potential development. Both frameworks demonstrate how automation accelerates reliable MLIP development. LASP could not be assessed here because the cited sources do not document it. Future developments will likely see increased integration of specialized sampling for reactive systems and enhanced uncertainty quantification for autonomous operation.

The accurate and efficient exploration of potential energy surfaces (PES) is a fundamental challenge in computational materials science and drug development. The arrangement of atoms in space dictates all physical and chemical properties of materials and molecules, making the identification of stable structures a critical task for discovering new materials with tailored functionalities [32]. Traditional methods for PES exploration often rely on computationally expensive electronic structure calculations like Density Functional Theory (DFT), which can render comprehensive searches prohibitively costly.

Two dominant paradigms have emerged to address this challenge: Random Structure Search (RSS) and Active Learning (AL). RSS employs stochastic generation of initial structures that are subsequently relaxed to local minima, systematically exploring the configurational space [33]. In contrast, Active Learning represents an iterative, data-driven approach where machine learning models guide the search toward informative regions of the PES, minimizing the number of expensive quantum-mechanical calculations required [32] [34]. This review provides a comprehensive comparison of these strategies, examining their performance, computational efficiency, and applicability to different research scenarios within the broader context of validating automated PES sampling algorithms.

Methodological Foundations

Random Structure Search (RSS)

Random Structure Search is an ab initio global optimization method that predicts crystal structures by generating random initial configurations and relaxing them to their nearest local minima on the PES. The underlying principle is that by sampling a sufficient number of random starting points, the algorithm will eventually discover the global minimum energy structure along with other relevant metastable configurations [33]. The Ab Initio Random Structure Searching (AIRSS) package is a prominent implementation of this approach, which creates numerous random structures subject to user-defined constraints such as minimum interatomic distances and cell volumes [33] [35].

Recent advancements have paired RSS with cheaper energy evaluators, including machine learning potentials, to accelerate the search. For instance, Orbital-Free Density Functional Theory (OFDFT) has been used to drive RSS for free-electron-like metals such as Li, Na, Mg, and Al, achieving significant speedups over conventional Kohn-Sham DFT [33]. In one implementation, researchers relaxed 1000 random structures for each of these elements, successfully identifying both ground state structures and other low-energy configurations [33].

Active Learning (AL)

Active Learning represents a more guided approach to PES exploration that strategically selects the most informative data points for quantum-mechanical evaluation. AL frameworks typically operate through iterative cycles where machine learning models, often neural network force fields (NNFFs) with uncertainty estimation, propose promising candidate structures for DFT validation [32] [27]. These frameworks minimize the required number of DFT calculations by focusing computational resources on regions of the PES that are both low-energy and poorly understood by the current model.

Key to AL success is the query strategy that determines which unlabeled candidates to select for DFT evaluation. Common strategies include:

- Uncertainty sampling: Selecting structures where the model exhibits high predictive uncertainty

- Diversity sampling: Choosing candidates that increase the structural diversity of the training set

- Expected model change: Prioritizing samples that would most impact the current model

- Hybrid approaches: Combining multiple criteria to balance exploration and exploitation [36] [34]

Advanced implementations use neural network ensembles to estimate uncertainty, which serves both to guide structure selection and to trigger stopping criteria when all structures in the candidate pool have been sufficiently optimized [32].
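To make the ensemble-based query strategy concrete, the following minimal sketch ranks candidates by the spread of an ensemble's energy predictions and applies a simple stopping rule. It is illustrative only: the function names, the use of the population standard deviation as the uncertainty measure, and the numerical values are assumptions, not details taken from the cited frameworks.

```python
import statistics

def ensemble_uncertainty(predictions):
    """Standard deviation of the ensemble predictions for each candidate.

    predictions[m][i] is the energy predicted by model m for candidate i.
    """
    n_candidates = len(predictions[0])
    return [
        statistics.pstdev([model[i] for model in predictions])
        for i in range(n_candidates)
    ]

def select_most_uncertain(predictions, k):
    """Return the indices of the k candidates with the largest ensemble spread."""
    sigma = ensemble_uncertainty(predictions)
    ranked = sorted(range(len(sigma)), key=lambda i: sigma[i], reverse=True)
    return ranked[:k]

def all_converged(predictions, threshold):
    """Stopping rule: every candidate's uncertainty is below the threshold."""
    return max(ensemble_uncertainty(predictions)) < threshold

# Three hypothetical models predicting energies (eV/atom) for four candidates.
preds = [
    [-5.40, -5.10, -4.80, -5.30],
    [-5.41, -5.00, -4.50, -5.31],
    [-5.39, -5.20, -5.10, -5.29],
]
print(select_most_uncertain(preds, k=2))    # candidates to send for DFT labelling
print(all_converged(preds, threshold=0.05))
```

In practice the threshold would need to be calibrated against held-out DFT data, since ensemble spread is only a proxy for the true model error.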

Integrated Frameworks

Modern approaches increasingly combine RSS and AL principles into unified frameworks. The autoplex software package implements an automated workflow for exploring and learning PES that integrates random structure generation with active learning of machine learning interatomic potentials [27]. Similarly, other methods use active learning of neural network force fields to accelerate structure relaxations, guiding pools of randomly generated candidates toward their local minima while minimizing computational cost [32].

The following diagram illustrates a typical integrated workflow:

Figure 1: Integrated RSS and Active Learning Workflow. This diagram illustrates the iterative process combining random structure generation with active learning-guided optimization for efficient PES exploration.

Performance Comparison and Benchmarking

Quantitative Performance Metrics

A comprehensive benchmark study (CSPBench) evaluating 13 state-of-the-art Crystal Structure Prediction (CSP) algorithms provides valuable insights into the relative performance of different sampling strategies [35]. The table below summarizes key performance indicators across major algorithm categories:

Table 1: Performance Comparison of CSP Algorithm Categories

| Algorithm Category | Representative Examples | Success Rate Range | Computational Efficiency | Key Strengths | Key Limitations |
|---|---|---|---|---|---|
| De novo DFT-based | CALYPSO, USPEX [35] | Variable (system-dependent) | Low (DFT-intensive) | High accuracy for known systems | Extremely computationally expensive |
| ML Potential-based | GNoME, GN-OA, AGOX with M3GNet [35] | Competitive with DFT-based | Medium to High | Good transferability; faster than DFT | Performance depends on potential quality |
| Template-based | TCSP, CSPML [35] | High (with similar templates) | High | Effective when templates available | Limited to known structure types |
| Active Learning-based | autoplex, GN-OA [32] [27] | Medium to High | High | Excellent data efficiency | Requires careful uncertainty calibration |

Computational Efficiency

Studies consistently demonstrate that Active Learning strategies can dramatically reduce computational requirements compared to traditional RSS. In benchmark systems including Si~16~, Na~8~Cl~8~, Ga~8~As~8~, and Al~4~O~6~, AL approaches reduced computational costs by up to two orders of magnitude while reliably identifying the most stable minima [32]. The efficiency gains were particularly notable for more complex, unseen systems such as Si~46~ and Al~16~O~24~, where AL successfully identified global minima after training only on smaller systems [32].

The autoplex framework demonstrates how automated AL can achieve high accuracy with minimal DFT calculations. For elemental silicon, the method achieved energy prediction errors below 0.01 eV/atom for the diamond structure with approximately 500 DFT single-point evaluations, and for the more complex oS24 allotrope within a few thousand evaluations [27].
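A per-atom energy error of this kind can be computed as in the sketch below. The source does not state whether the quoted figure is an RMSE or an MAE, so both are shown; the total energies and cell sizes are hypothetical values chosen only to illustrate the arithmetic.

```python
import math

def per_atom_errors(e_pred, e_ref, n_atoms):
    """Per-atom energy errors (eV/atom) from total energies (eV)."""
    return [(p - r) / n for p, r, n in zip(e_pred, e_ref, n_atoms)]

def rmse(errors):
    """Root-mean-square error."""
    return math.sqrt(sum(e * e for e in errors) / len(errors))

def mae(errors):
    """Mean absolute error."""
    return sum(abs(e) for e in errors) / len(errors)

# Hypothetical reference (DFT) and predicted (MLIP) total energies in eV.
e_ref = [-43.20, -86.50, -129.60]
e_pred = [-43.16, -86.58, -129.48]
n_atoms = [8, 16, 24]

errs = per_atom_errors(e_pred, e_ref, n_atoms)
print(f"RMSE = {rmse(errs):.4f} eV/atom, MAE = {mae(errs):.4f} eV/atom")
print("meets 0.01 eV/atom target:", rmse(errs) < 0.01)
```

Normalizing by atom count before averaging keeps cells of different size comparable, which matters when the test set mixes small and large structures.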

Performance Across Material Systems

Different sampling strategies exhibit varying performance across material classes:

Table 2: Performance Across Material Systems

| Material System | RSS Performance | AL Performance | Key Findings |
|---|---|---|---|
| Elemental (Si) | Good for simple allotropes [33] | Excellent across all allotropes [32] [27] | AL achieves <0.01 eV/atom error with minimal DFT [27] |
| Binary Oxides (TiO~2~) | Moderate [33] | Good for common polymorphs [27] | TiO~2~-B polymorph challenging for both methods [27] |
| Complex Binaries (Ti-O) | Limited data | Effective across stoichiometries [27] | Full system training essential for multi-composition accuracy [27] |
| Quantum Liquids (Water) | Standard approach | Comparable to random sampling [37] | Active learning shows limited advantage for this system [37] |

Interestingly, a comparative study on quantum liquid water found that for a given dataset size, random sampling actually led to smaller test errors than active learning, contrary to common understanding [37]. This suggests that the optimal sampling strategy may be system-dependent, with AL providing the greatest advantages for complex, multi-minima PES landscapes.

Experimental Protocols and Methodologies

Standard RSS Protocol

The conventional RSS approach follows this methodology:

- Initialization: Define composition, space group constraints (if any), and volume ranges

- Structure Generation: Create random atomic configurations observing minimum interatomic distances

- Structure Relaxation: Use DFT or classical force fields to relax each structure to its nearest local minimum

- Analysis: Compare energies and structures to identify lowest-energy configurations [33]

For example, in an OFDFT-driven RSS study of simple metals, researchers generated 1000 random structures for each element (Li, Na, Mg, Al), with unit cell volumes constrained to within 5% of the expected equilibrium volumes [33]. Each structure contained between 3 and 12 atoms, with 100 structures generated for each size [33].
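The four RSS stages can be sketched on a toy problem. The example below is a deliberately simplified stand-in, not the AIRSS implementation: it uses a non-periodic Lennard-Jones cluster in reduced units instead of a DFT-relaxed crystal, and a basic steepest-descent relaxer with a backtracking step. All function names and parameter values are assumptions chosen for illustration.

```python
import math
import random

def random_structure(n_atoms, box, d_min, rng):
    """Place atoms uniformly in a cubic box, rejecting positions closer than d_min."""
    atoms = []
    while len(atoms) < n_atoms:
        trial = [rng.uniform(0.0, box) for _ in range(3)]
        if all(math.dist(trial, a) >= d_min for a in atoms):
            atoms.append(trial)
    return atoms

def lj_energy_forces(atoms):
    """Lennard-Jones energy and forces in reduced units (epsilon = sigma = 1)."""
    energy = 0.0
    forces = [[0.0, 0.0, 0.0] for _ in atoms]
    for i in range(len(atoms)):
        for j in range(i + 1, len(atoms)):
            d = [a - b for a, b in zip(atoms[i], atoms[j])]
            inv_r2 = 1.0 / sum(c * c for c in d)
            inv_r6 = inv_r2 * inv_r2 * inv_r2
            energy += 4.0 * (inv_r6 * inv_r6 - inv_r6)
            scale = 24.0 * (2.0 * inv_r6 * inv_r6 - inv_r6) * inv_r2
            for k in range(3):
                forces[i][k] += scale * d[k]
                forces[j][k] -= scale * d[k]
    return energy, forces

def relax(atoms, n_steps=1000, step=0.01):
    """Steepest descent; a trial step is accepted only if it lowers the energy."""
    energy, forces = lj_energy_forces(atoms)
    for _ in range(n_steps):
        trial = [[x + step * f for x, f in zip(a, fa)]
                 for a, fa in zip(atoms, forces)]
        e_trial, f_trial = lj_energy_forces(trial)
        if e_trial < energy:
            atoms, energy, forces = trial, e_trial, f_trial
            step *= 1.2   # grow the step after a successful move
        else:
            step *= 0.5   # shrink the step after an overshoot
    return atoms, energy

def random_structure_search(n_structures, n_atoms, box=3.0, d_min=0.9, seed=0):
    """Generate, relax, and rank random structures by relaxed energy."""
    rng = random.Random(seed)
    results = []
    for _ in range(n_structures):
        start = random_structure(n_atoms, box, d_min, rng)
        e_start, _ = lj_energy_forces(start)
        relaxed, e_relaxed = relax(start)
        results.append((e_relaxed, e_start, relaxed))
    return sorted(results, key=lambda r: r[0])

results = random_structure_search(n_structures=20, n_atoms=4)
print(f"lowest relaxed energy: {results[0][0]:.3f} (reduced units)")
```

A production search would replace `lj_energy_forces` with a DFT or force-field call, add periodic cells and volume constraints, and cluster the relaxed structures to remove duplicates before ranking.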

Active Learning Protocol

A typical AL protocol for CSP includes these stages:

- Initialization: Generate a large pool of random candidate structures

- Selection: Sample initial training data based on scoring functions targeting low-energy PES regions

- DFT Computation: Obtain energies, forces, and stress for selected structures

- Model Training: Train neural network force field ensemble on updated training data

- Structure Relaxation: Use the trained models to relax all candidate structures until the uncertainty criteria are triggered

- Convergence Check: Evaluate low-energy clusters in the optimized pool; repeat from the Selection stage until convergence [32]

Critical to this process is the use of uncertainty estimation to guide sampling and determine when relaxation trajectories are complete without requiring DFT verification at each step [32].
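The loop structure can be illustrated with a heavily simplified, pool-based sketch on a one-dimensional toy surface. It is not the protocol of [32]: the model-driven relaxation stage is omitted, the "DFT" oracle is an analytic double well, and the neural network ensemble is replaced by inverse-distance-weighted interpolators that differ only in their exponent. The selection rule (predicted mean minus `kappa` times the ensemble spread) is one assumed way to favor regions that are both low in energy and poorly known.

```python
import random
import statistics

def toy_pes(x):
    """Stand-in for an expensive DFT call: an asymmetric double well."""
    return (x * x - 1.0) ** 2 + 0.3 * x

def idw_predict(x, data, power):
    """Inverse-distance-weighted interpolation through labelled (x, E) pairs."""
    num = den = 0.0
    for xi, ei in data:
        d = abs(x - xi)
        if d == 0.0:
            return ei  # exact at labelled points
        w = d ** -power
        num += w * ei
        den += w
    return num / den

def ensemble_predict(x, data, powers=(1.0, 2.0, 3.0, 4.0)):
    """Mean and spread of an ensemble whose members differ in the IDW exponent."""
    preds = [idw_predict(x, data, p) for p in powers]
    return statistics.fmean(preds), statistics.pstdev(preds)

def active_learning(pool, n_init=3, budget=20, kappa=2.0, tol=1e-3, seed=0):
    """Label pool points one at a time until the budget or tolerance is reached."""
    rng = random.Random(seed)
    labelled = {x: toy_pes(x) for x in rng.sample(pool, n_init)}
    for _ in range(budget):
        data = list(labelled.items())
        scored = []
        for x in pool:
            if x not in labelled:
                mean, sigma = ensemble_predict(x, data)
                scored.append((mean - kappa * sigma, sigma, x))
        if not scored or max(s for _, s, _ in scored) < tol:
            break  # ensemble agrees everywhere in the remaining pool
        _, _, x_next = min(scored)
        labelled[x_next] = toy_pes(x_next)  # one more oracle ("DFT") call
    return labelled

pool = [-2.0 + 0.02 * i for i in range(201)]
labelled = active_learning(pool)
x_best = min(labelled, key=labelled.get)
print(f"{len(labelled)} oracle calls for a pool of {len(pool)} candidates")
print(f"best labelled point: x = {x_best:.2f}, E = {labelled[x_best]:.3f}")
```

The point of the sketch is the accounting: the oracle is called only for selected candidates, so the number of expensive evaluations is bounded by `n_init + budget` regardless of pool size.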

Benchmarking Methodology

The CSPBench benchmark suite employs a standardized evaluation protocol:

- Test Set: 180 diverse crystal structures

- Metrics: Quantitative similarity measures between predicted and known structures

- Validation: Cross-comparison of predicted energies and structural features [35]

This approach enables direct comparison of algorithms across a common set of structures and performance indicators, addressing a critical gap in CSP validation [35].
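As an illustration of how a structure-similarity metric turns into a benchmark score, the sketch below compares predicted and reference structures through a sorted interatomic-distance fingerprint and reports the fraction of matches. This is a hypothetical stand-in and not one of the CSPBench metrics: it ignores periodicity and chemical species, and the tolerance is an arbitrary assumption.

```python
import math
from itertools import combinations

def distance_fingerprint(coords):
    """Sorted interatomic distances: invariant to rotation, translation, and atom order."""
    return sorted(math.dist(a, b) for a, b in combinations(coords, 2))

def fingerprint_distance(pred, ref):
    """RMS difference between two fingerprints (same atom count required)."""
    fp, fr = distance_fingerprint(pred), distance_fingerprint(ref)
    if len(fp) != len(fr):
        raise ValueError("structures must contain the same number of atoms")
    return math.sqrt(sum((p - r) ** 2 for p, r in zip(fp, fr)) / len(fp))

def success_rate(pairs, tol=0.1):
    """Fraction of (predicted, reference) pairs whose fingerprints agree within tol."""
    hits = sum(fingerprint_distance(p, r) <= tol for p, r in pairs)
    return hits / len(pairs)

# Hypothetical three-atom examples (coordinates in Å).
ref = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
rotated = [(5.0, 5.0, 5.0), (5.0, 6.0, 5.0), (4.0, 5.0, 5.0)]    # same shape, moved and rotated
stretched = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (0.0, 1.0, 0.0)]  # one bond doubled

print(success_rate([(rotated, ref), (stretched, ref)]))
```

Reporting the raw distances alongside the thresholded success rate is usually worthwhile, because a single tolerance can hide near misses.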

Table 3: Essential Software Tools for PES Sampling Research

| Tool Name | Category | Primary Function | Access |
|---|---|---|---|
| AIRSS [33] [35] | RSS | Ab initio random structure searching | Open-source |
| CALYPSO [35] | De novo CSP | Particle swarm optimization-based CSP | Commercial |
| USPEX [35] | De novo CSP | Evolutionary algorithm-based CSP | Commercial |
| autoplex [27] | AL | Automated PES exploration and ML potential fitting | Open-source |
| CrySPY [32] [35] | Hybrid | Genetic algorithm / Bayesian optimization with DFT | Open-source |
| GNoME [35] | ML Potential | Graph neural network potentials for materials | Open-source |
| AGOX [35] | AL | Global optimization with Gaussian processes | Open-source |

The comparison between Random Structure Search and Active Learning reveals a nuanced landscape where each approach offers distinct advantages. RSS provides a straightforward, robust method for systematic PES exploration, particularly valuable when prior knowledge of the system is limited. Active Learning delivers superior computational efficiency for complex systems with numerous local minima, strategically focusing quantum-mechanical calculations on the most informative regions of the PES.

Future developments in automated PES sampling will likely focus on several key areas: improved uncertainty quantification in AL frameworks, development of more transferable machine learning potentials, and enhanced benchmarking standards to facilitate objective algorithm comparison [35] [27]. The integration of physical principles and chemical intuition into data-driven sampling strategies represents another promising direction for advancing the field of computational materials discovery and drug development.

As benchmarking studies like CSPBench continue to mature [35], the research community will benefit from more standardized validation protocols, enabling more rigorous comparison of existing methods and clearer identification of promising directions for future methodological development.

In modern drug discovery, understanding the interactions between a protein and a small molecule (ligand) is fundamental to the design of effective therapeutics. These interactions are governed by the potential energy surface (PES), a conceptual map that defines how the energy of a molecular system changes with the positions of its atoms [28]. Accurately sampling this PES, that is, exploring the key configurations, binding pathways, and energy minima, is a central challenge for computational methods. Reliable sampling allows researchers to predict how tightly a drug candidate will bind, a property known as binding affinity, and to understand the binding kinetics, which describe the rates of association and dissociation [38]. This review objectively compares the performance of leading computational sampling methodologies, framing the evaluation within the broader research thesis of validating automated PES sampling algorithms. We focus on their application to drug-like molecules and protein-ligand systems, providing comparative data and detailed protocols to guide researchers in selecting the appropriate tool for their projects.

Performance Comparison of Sampling Methodologies

Computational methods for sampling molecular interactions span a spectrum from highly detailed, computationally expensive simulations to faster, coarser-grained models. The table below summarizes the key performance characteristics of several prominent approaches.

Table 1: Performance Comparison of Computational Sampling Methodologies for Protein-Ligand Systems

| Method / Model | Sampling Approach | Reported Accuracy (vs. Experiment) | Key Performance Findings | Computational Cost / Sampling Time |

|---|---|---|---|---|

| Coarse-Grained Martini 3 [39] | Unbiased molecular dynamics (MD) simulations | Binding free energies for T4 Lysozyme L99A mutant: Mean Absolute Error (MAE) of 1 kJ/mol, max error 2 kJ/mol [39]. | Accurately identifies binding pockets and multiple binding/unbinding pathways without prior knowledge. Reproduces experimental binding poses with RMSD ≤ 2.1 Å [39]. | Millisecond-scale sampling achievable; 30 trajectories of 30 µs each (0.9 ms total) for T4 Lysozyme ligands [39]. |