The Local Refinement Advantage: Precision Techniques for Accelerating Global Optimization in Drug Discovery

This article provides a comprehensive guide to implementing efficient local refinement within global optimization workflows, a critical technique for researchers and drug development professionals.

The Local Refinement Advantage: Precision Techniques for Accelerating Global Optimization in Drug Discovery

Abstract

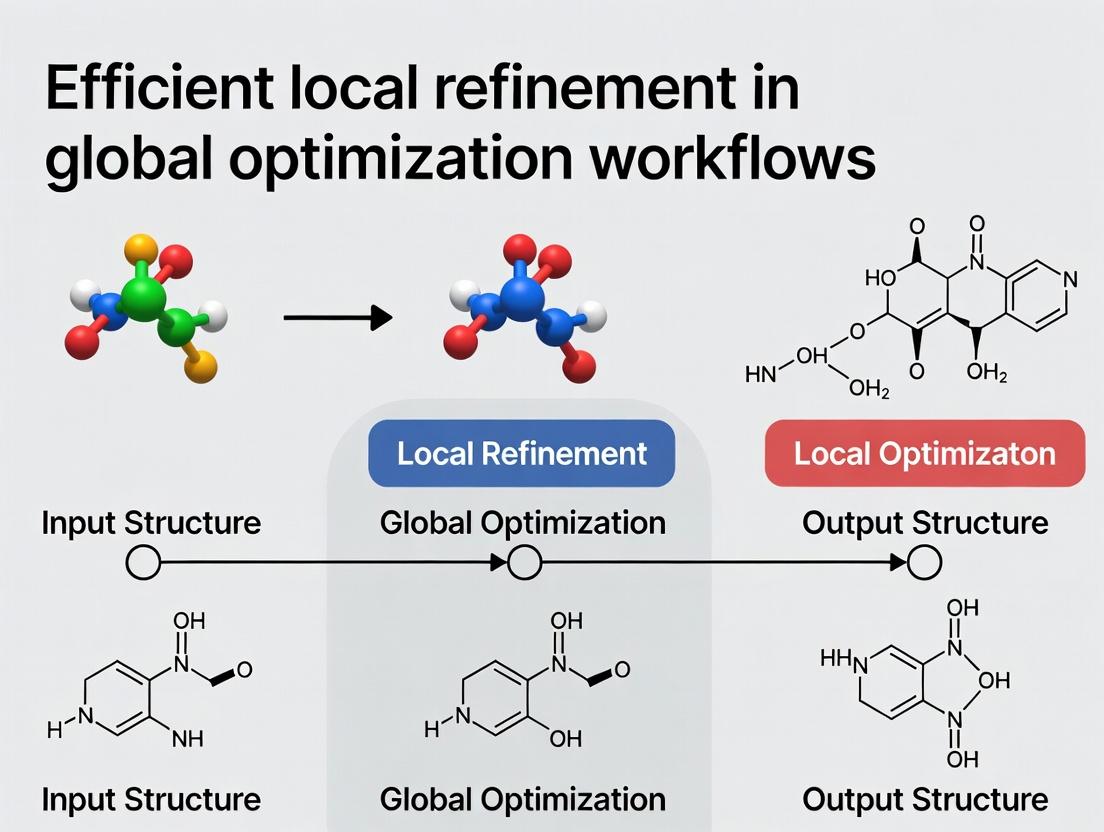

This article provides a comprehensive guide to implementing efficient local refinement within global optimization workflows, a critical technique for researchers and drug development professionals. We first establish the core concepts and necessity of this hybrid approach in navigating complex biomedical landscapes. Methodological sections detail practical implementation strategies for algorithms like multi-start and surrogate-assisted frameworks, with specific applications in molecular docking and protein design. The troubleshooting segment addresses common pitfalls in convergence and parameter tuning, while the validation section offers comparative analysis of benchmarks and real-world case studies. The conclusion synthesizes how strategic local refinement accelerates the path from computational screening to viable clinical candidates, shaping the future of computational biology and precision medicine.

Why Local Refinement is the Missing Link in Global Optimization

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My global optimization algorithm (e.g., genetic algorithm) has converged on a suboptimal region of the parameter space. It seems to be stuck exploring broadly and cannot refine the solution. What is the issue and how can I fix it? A: This is a classic pitfall of a purely global search strategy. The algorithm excels at exploration but lacks the mechanism for focused exploitation. To resolve this, implement a hybrid workflow. Use the global method to identify promising regions, then switch to a local optimizer (e.g., Nelder-Mead, BFGS) to refine the best candidates. Ensure a smooth transition by passing the global best parameters as the initial guess for the local search.

Q2: When I start my local refinement (e.g., using gradient descent) from a random point, it often converges to a poor local minimum. How can I increase the chances of finding the global optimum? A: A purely local search is highly sensitive to the initial starting point. The solution is to integrate a global sampling step. First, run a low-density global sampling (e.g., Latin Hypercube Sampling, random search) to map the objective function's landscape. Use the top N samples (e.g., lowest energy or highest score) as multiple, distinct starting points for parallel local refinement runs. This multi-start strategy mitigates the risk of being trapped.

Q3: In my molecular docking simulations, the scoring function is noisy and computationally expensive. How do I balance exploration and refinement efficiently? A: For expensive, noisy functions, Bayesian Optimization (BO) is a recommended hybrid framework. It builds a probabilistic surrogate model (global exploration) to predict promising regions and uses an acquisition function (like Expected Improvement) to guide where to perform the next expensive evaluation (informed local refinement). This sequentially balances global and local search. Key parameters to tune are the surrogate model kernel and the trade-off parameter in the acquisition function.

Q4: My optimization workflow is taking too long. How can I diagnose if the bottleneck is in the global or local phase? A: Profile your workflow. Instrument your code to log the objective function value vs. evaluation count. Create the following table from your profiling data:

| Optimization Phase | Number of Function Evaluations | Wall Clock Time (hrs) | Average Improvement per Evaluation |

|---|---|---|---|

| Global Search (Exploration) | 5,000 | 48.2 | 0.08 kcal/mol |

| Local Refinement (Exploitation) | 500 | 5.5 | 0.01 kcal/mol |

Interpretation: If the global phase shows minimal average improvement over many evaluations, it may be sampling inefficiently. If the local phase takes a disproportionate amount of time per evaluation, your refinement algorithm (e.g., gradient calculation) or convergence criteria may need optimization.

Detailed Experimental Protocol: Multi-Start Local Refinement

Objective: To find the global minimum of a rugged, high-dimensional potential energy surface.

Methodology:

- Global Sampling: Perform Sobol sequence sampling across the entire defined parameter space (e.g., dihedral angles, translational coordinates). Generate

Nsample points (e.g.,N=10,000). - Candidate Selection: Evaluate the objective function (e.g., force field energy) for all

Nsamples. Rank them by score. Select the topMdistinct points (e.g.,M=50) that are separated by a minimum RMSD (e.g., > 2.0 Å) to ensure diversity. - Parallel Local Refinement: For each of the

Mstarting points, launch an independent local minimization using the L-BFGS algorithm. Set convergence criteria (e.g., energy tolerance = 0.01 kcal/mol, gradient tolerance = 0.1 kcal/mol/Å). - Cluster Analysis: Cluster all

Mrefined solutions based on structural similarity (RMSD < 1.0 Å). Identify the lowest-energy structure within each cluster. - Global Minimum Identification: Select the structure with the absolute lowest energy as the putative global minimum. Report the energy and the cluster population as a measure of the basin's relative stability.

Signaling Pathway & Workflow Diagrams

Title: Hybrid Optimization Workflow Logic

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Optimization Workflows |

|---|---|

| Sobol Sequence Library | A quasi-random number generator for low-discrepancy sampling. Provides uniform coverage of the parameter space during the initial global search phase, reducing clustering bias. |

| L-BFGS Optimizer | A local, gradient-based optimization algorithm. Efficiently refines candidate solutions by approximating the Hessian matrix, ideal for high-dimensional problems in local refinement steps. |

| RMSD Clustering Tool | Measures structural convergence. Used post-refinement to cluster final results and identify unique low-energy conformations or solution basins. |

| Bayesian Optimization Framework (e.g., BoTorch, GPyOpt) | Provides a surrogate model and acquisition function. Automates the balance between exploring uncertain regions and exploiting known promising areas for expensive black-box functions. |

| Parallel Computing Scheduler (e.g., SLURM, Nextflow) | Manages job distribution. Enables simultaneous multi-start local refinements or parallel evaluation of global search candidates, drastically reducing wall-clock time. |

In the context of a broader thesis on Efficient local refinement in global optimization workflows, this support center addresses key technical challenges. In global optimization, a broad search space is first explored to identify promising regions. Local refinement then intensively searches these specific regions to find the precise optimal solution, balancing computational efficiency with accuracy. This is critical in fields like drug development for tasks such as molecular docking or lead optimization.

Troubleshooting Guides & FAQs

FAQ 1: During a molecular docking workflow, my global search identifies a potential binding pocket, but the subsequent local refinement fails to converge on a stable pose. What could be wrong?

- Answer: This often indicates a mismatch between the sampling algorithms or force fields used in the two phases. Ensure the local refinement protocol uses a higher fidelity scoring function or more precise conformational sampling than the global phase. Check for clashes ignored in the global screen but critical locally. Increase the number of local refinement iterations starting from the global seed points.

FAQ 2: How do I determine the optimal budget (e.g., computational time) to allocate to global search versus local refinement in my experiment?

- Answer: There is no universal rule, but a systematic approach is recommended. Start with a pilot study using a known benchmark. Allocate budgets in ratios (e.g., 70/30, 50/50, 30/70 global/local) and compare result quality. Use the data to fit a simple efficiency model. A typical starting point in many studies is a 60/40 global-to-local split.

Table: Example Budget Allocation Pilot Results for a Protein-Ligand Docking Run

| Global Search Time (%) | Local Refinement Time (%) | Average Binding Affinity (kcal/mol) | Top Pose RMSD (Å) | Total Runtime (hr) |

|---|---|---|---|---|

| 80 | 20 | -7.2 | 2.5 | 5.0 |

| 60 | 40 | -8.5 | 1.8 | 5.0 |

| 40 | 60 | -8.6 | 1.7 | 5.0 |

| 20 | 80 | -8.6 | 1.7 | 5.0 |

FAQ 3: My local refinement algorithm gets "stuck" in a suboptimal local minimum very close to the starting point provided by the global search. How can I encourage more exploration during refinement?

- Answer: This is a classic over-exploitation issue. Introduce mild stochasticity into your local refinement routine. Techniques include:

- Multiple Starts: Initiate local refinement from multiple top global solutions, not just the best one.

- Perturbation: Slightly perturb the coordinates or parameters of the seed point before beginning local optimization.

- Hybrid Methods: Use algorithms like Basin Hopping or Lamarckian GA that allow for controlled "jumps" during local search.

Experimental Protocol: Benchmarking Local Refinement Strategies

Objective: To evaluate the efficiency of three local refinement methods following a genetic algorithm (GA) global search for molecular conformation optimization.

Materials: See "The Scientist's Toolkit" below.

Methodology:

- Global Phase: Run a standard genetic algorithm (population size=100, generations=50) to generate 10 diverse, low-energy candidate conformations.

- Refinement Phase: For each of the 10 candidates, apply three local methods in parallel:

- A. Gradient-Based (BFGS): Perform local minimization using the BFGS algorithm until gradient tolerance < 0.01 kcal/mol/Å.

- B. Stochastic (MC): Run a Monte Carlo Simulated Annealing protocol (1000 steps, exponential cooling).

- C. Hybrid (Basin Hopping): Execute 50 basin hopping cycles, each comprising a random perturbation, minimization, and Metropolis criterion.

- Evaluation: Record the final energy, RMSD from the known crystal structure, and computational cost for each refined solution. Compare the best result from each method.

Workflow & Pathway Diagrams

Title: High-Level Global-Local Optimization Workflow

Title: Parallel Local Refinement of Multiple Global Candidates

The Scientist's Toolkit: Key Research Reagent Solutions

Table: Essential Materials for Computational Local Refinement Experiments

| Item / Reagent | Function in Experiment | Example Vendor/Software |

|---|---|---|

| Molecular Dynamics (MD) Engine | Provides high-fidelity force fields for energy minimization and conformational sampling during local refinement. | GROMACS, AMBER, OpenMM |

| Docking & Sampling Suite | Contains algorithms for both global stochastic search (e.g., GA) and local gradient-based refinement. | AutoDock Vina, Schrödinger Glide, Rosetta |

| Force Field Parameter Set | Defines the energy landscape (bond, angle, dihedral, non-bonded terms) for accurate local geometry optimization. | CHARMM36, ff19SB, OPLS4 |

| Ligand Parameterization Tool | Generates necessary bond and charge parameters for novel small molecules prior to refinement. | antechamber (AMBER), CGenFF, LigParGen |

| High-Performance Computing (HPC) Cluster | Enables parallel execution of multiple local refinement runs from different global starting points. | Local Slurm Cluster, AWS Batch, Google Cloud |

| Visualization & Analysis Software | Used to visually inspect refined poses, calculate RMSD, and analyze interaction energies. | PyMOL, UCSF ChimeraX, VMD |

Technical Support Center: Troubleshooting Local Refinement in Global Drug Optimization

FAQs & Troubleshooting Guides

Q1: Our global search (e.g., using genetic algorithms) identifies a promising ligand pose, but subsequent local energy minimization collapses it into a high-energy, unrealistic conformation. What is the primary cause and solution?

A1: This is a classic symptom of inadequate force field parameterization or implicit solvent model failure during the local refinement step.

- Cause: The global search may use a simplified scoring function. The local minimizer, using a more detailed force field, encounters parameter mismatches (e.g., for a novel ligand torsional angle) or inaccurate solvation/entropic effects, pulling the pose into a locally stable but globally irrelevant well.

- Solution: Implement a multi-stage refinement protocol.

- Initial Relaxation: Use a softened potential (e.g., GB/SA with a distance-dependent dielectric) for the first minimization steps.

- Parameter Assignment: Ensure robust parameter derivation for novel ligand moieties using QM/MM fitting before final refinement.

- Ensemble Refinement: Refine not a single pose, but the top-N poses (e.g., N=10) from the global search, then re-rank using binding free energy estimates (MM/PBSA, MM/GBSA).

Q2: During Hamiltonian Replica Exchange MD (H-REMD) used for local basin exploration, we observe poor exchange rates (<15%) between adjacent replicas. This hampers sampling efficiency. How do we rectify this?

A2: Poor exchange rates indicate insufficient overlap in the potential energy distributions of adjacent replicas.

- Troubleshooting Steps:

- Check Lambda Spacing: Use a smaller difference in the coupling parameter (λ) between replicas. The number of replicas required scales with √N (degrees of freedom).

- Adjust Hamiltonian: For alchemical transformations, ensure soft-core potentials are properly tuned to avoid singularities.

- Diagnostic Table: Monitor potential energy overlap.

| Metric | Target Value | Observed Value | Corrective Action |

|---|---|---|---|

| Replica Exchange Rate | 20-30% | <15% | Increase replica count or optimize λ spacing. |

| Potential Energy Overlap | >0.3 | <0.2 | Use tools like pymbar to analyze and adjust λ schedule. |

| Simulation Time per Replica | >50 ps | 10 ps | Increase sampling time before attempting exchange. |

- Protocol: To optimize λ spacing, run a short simulation and calculate the energy variance. Use the formula for approximately constant acceptance probability: Δλ ∝ 1/√(∂V/∂λ)².

Q3: When applying a meta-dynamics simulation to escape a local energy minimum in a protein-binding pocket, the system becomes unstable. What controls are critical?

A3: Unstable dynamics typically arise from overly aggressive bias deposition or incorrect collective variable (CV) selection.

- Critical Controls:

- CV Selection: Use at least two CVs (e.g., ligand RMSD and a specific protein-ligand distance). A single CV may force unrealistic paths.

- Bias Parameters: Start with a height of 0.5-1.0 kJ/mol and a width 20-30% of the CV fluctuation. Deposition every 500-1000 steps.

- Wall Potential: Apply soft harmonic walls to prevent exploration of non-physical CV values.

- Protocol:

- Define 2-3 physically meaningful CVs.

- Perform a short unbiased simulation to estimate CV fluctuations (σ).

- Set Gaussian width to 0.2*σ.

- Use a well-tempered meta-dynamics variant to control bias growth:

biasfactor = 10-30. - Monitor CVs and protein backbone RMSD for stability.

Q4: In our FEP calculations for lead optimization, the calculated ΔΔG between two similar ligands shows high variance (>1.0 kcal/mol) between repeat windows. How can we improve precision?

A4: High variance points to insufficient sampling of conformational degrees of freedom or charge masking issues.

- Solution Guide:

- Extended Equilibration: Equilibrate each window for >250 ps before >2 ns of production sampling.

- Soft-Core Potentials: Ensure they are enabled for Lennard-Jones and Coulombic terms to avoid endpoint singularities.

- Charge Transformation Protocol: For charging/discharging atoms, use a decouple/annihilate protocol in explicit solvent, not direct alchemical conversion between two charged states.

| Reagent/Solution | Function in Local Refinement Context |

|---|---|

| Explicit Solvent Box (TP3P, OPC) | Models specific water-mediated interactions and entropy crucial for accurate local pose scoring. |

| Particle Mesh Ewald (PME) | Handles long-range electrostatic interactions accurately during MD-based refinement. |

| Soft-Core Potentials | Prevents singularities and numerical instabilities in alchemical FEP/REMD transformations. |

| Restrained Electrostatic Potential (RESP) Charges | Provides QM-derived, transferable partial charges for ligands, ensuring force field compatibility. |

| Linear Interaction Energy (LIE) Templates | Offers a faster, semi-empirical endpoint method for pre-screening poses before full FEP. |

| BioFragment Database (BFDb) | Supplies pre-parameterized fragments for novel chemotypes, reducing force field errors. |

Experimental Protocol: Integrated Global-Local Pose Refinement and Scoring

Objective: To refine and accurately score the top-10 poses from a global docking run against a kinase target.

Materials: Protein structure (PDB), ligand mol2 file, AMBER/OpenMM suite, high-performance computing cluster.

Method:

- Global Docking: Perform ensemble docking with 5 receptor conformations using a genetic algorithm (e.g., GOLD).

- Pose Clustering: Cluster the top 500 poses by ligand RMSD (cutoff 2.0 Å). Select centroid of each top-10 cluster.

- System Preparation: Solvate each protein-ligand complex in an OPC water box, add ions to 0.15M NaCl.

- Local Relaxation:

- Stage 1: Minimize with restraints on protein heavy atoms (force constant 5.0 kcal/mol/Ų).

- Stage 2: Minimize with restraints on protein backbone only.

- Stage 3: Full minimization (no restraints).

- Equilibration: NVT (100 ps, 298 K) → NPT (200 ps, 1 atm, 298 K).

- Production & Scoring: Run 5 ns MD per pose. Calculate MM/GBSA ΔG from 1000 evenly spaced frames. Perform statistical analysis (mean, SEM).

Workflow: Integrated Global-Local Pose Optimization

Meta-Dynamics Enhanced Sampling Mechanism

Technical Support Center: Troubleshooting & FAQs

General Workflow Optimization

Q1: My multi-start heuristic is converging to sub-optimal local minima despite numerous starts. What systemic issue might be at play?

A: This is often a problem of insufficient diversification in your initial sampling strategy. Ensure your starting points are generated via a Low-Discrepancy Sequence (e.g., Sobol sequence) or a well-tuned Latin Hypercube Sampling instead of pure pseudo-random numbers. For problems with n dimensions, a minimum of 10n to 50n starting points is typically required for complex energy landscapes. Check the spread of your final solutions; if they cluster in fewer than 3 distinct regions, your sampling is inadequate.

Q2: In a two-stage strategy, how do I determine the optimal handoff point from the global to the local solver?

A: The handoff is optimal when the cost of continued global search outweighs the expected refinement benefit. Implement a convergence monitor on the global phase. A practical rule is to trigger handoff when, over the last k iterations (k = 50-100), the improvement in the best-found objective value is less than a threshold ε (e.g., 1e-4). See Table 1 for metrics.

Table 1: Two-Stage Handoff Decision Metrics

| Metric | Calculation | Recommended Threshold |

|---|---|---|

| Relative Improvement | (f_best(iter-i) - f_best(iter))/(1e-10 + |f_best(iter)|) |

< 1e-4 for 50 consecutive iterations |

| Solution Cluster Radius | Std. dev. of top 10 solutions' parameters | < 0.05 * (Param Upper Bound - Lower Bound) |

| Solver Effort Ratio | (Global_Solver_Time) / (Estimated_Local_Refinement_Time) |

> 5.0 |

Q3: When using an embedded refinement strategy, my local search is causing computational bottlenecks. How can I mitigate this? A: This indicates your refinement is too frequent or too expensive. Implement adaptive embedded refinement:

- Trigger Condition: Only refine a solution if it is a promising basin candidate (e.g., its objective value is within the top 15% of all candidates in the current generation/population).

- Budget Limiter: Cap the number of local iterations (e.g., 50-100 gradient steps) or function evaluations per refinement call.

- Memoization: Cache refined solutions to avoid redundant local searches from similar starting points.

Experiment-Specific Protocols

Protocol 1: Benchmarking Multi-Start Strategies for Molecular Docking This protocol assesses the efficiency of different multi-start configurations in finding low-binding-energy poses.

- System Preparation: Prepare the protein receptor (fixed) and ligand (flexible) files in PDBQT format using AutoDock Tools.

- Parameter Space Definition: Define the search space (translational, rotational, torsional).

- Multi-Start Execution:

- Run

VinaorAutoDock-GPUwithexhaustiveness = N, where N is the number of starts (e.g., 8, 16, 32, 64). - For each N, perform 10 independent runs to account for stochasticity.

- Run

- Data Collection: Record the best binding affinity (kcal/mol) and runtime for each run.

- Analysis: Plot the best-found affinity vs.

exhaustivenessand runtime vs.exhaustiveness. The optimal N is at the knee of the curve where affinity gains diminish relative to time cost.

Protocol 2: Two-Stade Optimization for Force Field Parameterization This protocol uses a global metaheuristic followed by local gradient-based refinement to fit parameters.

- Stage 1 - Global Exploration:

- Use a Differential Evolution (DE) algorithm. Population size = 10 * number of parameters.

- Termination: After 200 generations or if population diversity (norm of std. dev. of parameters) < 1e-3.

- Output: The top 5 parameter vectors from the final population.

- Stage 2 - Local Refinement:

- For each parameter vector from Stage 1, initiate a Levenberg-Marquardt optimizer.

- Objective: Minimize the weighted sum of squared errors between calculated and experimental observables (e.g., bond lengths, angles, energies).

- Termination: On gradient norm < 1e-5 or 500 iterations.

- Validation: Select the overall best-refined parameter set and validate on a held-out set of experimental data.

Visualizing Strategies

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Optimization Experiments

| Item | Function in Optimization Workflow | Example Product/Software |

|---|---|---|

| Global Solver | Executes the high-level search (Multi-Start, Evolutionary, etc.) to explore the solution space broadly. | NLopt (DIRECT, CRS2), SciPy (differential_evolution), OpenMDAO. |

| Local Refiner | Performs intensive, convergent search from a given starting point to find a local minimum. | IPOPT, L-BFGS-B (SciPy), SNOPT, gradient descent in PyTorch/TensorFlow. |

| Surrogate Model | Provides a cheap-to-evaluate approximation of the objective function to guide sampling. | Gaussian Process (GPyTorch, scikit-learn), Radial Basis Functions. |

| Sampling Library | Generates high-quality initial points or search directions for multi-start or population methods. | Sobol Sequence (SALib), Latin Hypercube (PyDOE), Halton Sequence. |

| Benchmark Suite | Provides standardized test problems to validate and compare optimization strategy performance. | CUTEst, COCO (Black-Box Optimization), molecular docking benchmarks (PDBbind). |

| Convergence Analyzer | Monitors iteration history to automatically detect stagnation for handoff or termination decisions. | Custom scripts using metrics from Table 1; Optuna's visualizations. |

| Parallelization Framework | Manages concurrent evaluation of multiple starts or population members to reduce wall-clock time. | MPI (mpi4py), Python's multiprocessing, Ray, Dask. |

This technical support center provides guidance for researchers implementing optimization workflows within drug discovery and related fields. The content is framed within the broader research thesis on Efficient local refinement in global optimization workflows, addressing common challenges in balancing global exploration with local exploitation.

Troubleshooting Guides & FAQs

FAQ 1: How do I know if my optimization is stuck in a local optimum prematurely?

Answer: Monitor the "Improvement Rate" metric. A sustained period (e.g., 20 consecutive iterations) with less than 0.5% improvement in your objective function, while global uncertainty (measured by sample variance in unexplored regions) remains high, suggests premature exploitation. Implement a checkpoint to trigger a secondary, exploratory sampling protocol.

FAQ 2: What is a practical metric to quantify the exploration-exploitation balance in real-time?

Answer: Use the Global vs. Local Acquisition Ratio (GLAR). Calculate the ratio of resources (e.g., computational budget, experimental batches) dedicated to global search versus local refinement over a sliding window. The target ratio is problem-dependent but should be explicitly defined.

Table: Key Metrics for Balance Monitoring

| Metric | Formula/Description | Target Range (Typical) | Indicates Imbalance When... |

|---|---|---|---|

| Improvement Rate | (fbest(t) - fbest(t-n)) / n | >1% per n iters. (Adaptive) | Consistently near zero. |

| GLAR | (Budget on Global) / (Budget on Local) | 70/30 to 30/70 (Early/Late) | Stays >80/20 or <20/80. |

| Region Uncertainty | Avg. predictive variance of model in top N regions. | Relative to initial variance. | High but unexplored. |

| Diversity Score | Avg. distance between proposed samples. | Maintain >X% of initial score. | Clusters too tightly. |

FAQ 3: My local refinement step fails to improve the best-found candidate. How should I troubleshoot?

Answer: Follow this protocol:

- Verify Fidelity: Ensure your local surrogate model or experimental assay has sufficient precision. Re-run the current best point to confirm its performance.

- Check Gradient Reliability: If using model-based gradients, verify them against finite-difference approximations in a small neighborhood.

- Expand Refinement Radius: Temporarily increase the trust region or local search boundary by 50%. If improvement appears, the local basin is wider than estimated.

- Escalate to Hybrid: Trigger a "global-informed local" step: perform a short exploratory search focused on the most promising other region before returning to refine the current best.

FAQ 4: How do I set the iteration budget between global and local phases?

Answer: Use an adaptive schedule based on Expected Global Potential (EGP). EGP estimates the possible improvement in unexplored spaces versus expected local improvement. Switch phases when EGP for global exceeds that for local by a set threshold (e.g., 1.2x).

Experimental Protocols

Protocol: Iterative Optimization Cycle with Adaptive Switching

Purpose: To systematically balance exploration and exploitation in a computationally efficient manner. Methodology:

- Initialization: Perform a space-filling design (e.g., Latin Hypercube) for N initial samples (N = 10 * dimensionality).

- Model Training: Fit a global surrogate model (e.g., Gaussian Process, Random Forest) to all data.

- Phase Decision (Adaptive Switch):

- Calculate the Exploitation Score (ES): Predicted improvement from refining the current top 3 candidates.

- Calculate the Exploration Score (ER): Maximum predictive uncertainty across M random untested points.

- If ER / ES > threshold (θ), proceed to Global Phase. Else, proceed to Local Phase.

- Global Phase: Use an acquisition function (e.g., Expected Improvement, Upper Confidence Bound) to select the next batch of 3-5 points from the entire space.

- Local Phase: Apply a trust-region method (e.g., DIRECT, BOBYQA) or a local Gaussian Process refinement focused on the best candidate's region to select the next 1-2 points.

- Evaluation & Update: Run experiments/simulations for the proposed points, update the dataset, and return to Step 2. Terminate after budget exhaustion or convergence.

Protocol: Calibrating the Balance Threshold (θ)

Purpose: To empirically determine the optimal switching threshold for a specific class of problems. Methodology:

- Select 2-3 representative benchmark functions or historical datasets with known optima.

- Run the Iterative Optimization Cycle (above) 50 times per candidate threshold value (θ = [1.0, 1.5, 2.0, 3.0]).

- Record the final best objective value and the iteration at which the true optimum was first approximated within 5%.

- Analysis: Plot θ against both the final performance and the speed of convergence. The optimal θ minimizes convergence time without degrading final performance. Use this value for subsequent similar experiments.

Visualizations

Diagram: High-Level Optimization Workflow with Adaptive Switch

Diagram: Key Metrics Feedback to Phase Decision

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials for Optimization Workflow Experiments

| Item / Reagent | Function in Context | Example & Notes |

|---|---|---|

| Global Surrogate Model | Approximates the expensive objective function across the entire input space for prediction and uncertainty quantification. | Gaussian Process (GP) with Matérn kernel. Note: Use scalable approximations (e.g., sparse GP) for high dimensions. |

| Local Solver / Refiner | Performs intense search within a constrained region (trust region) to converge to a local optimum. | BOBYQA (Bound Optimization BY Quadratic Approximation). Note: Effective for derivative-free, constrained local refinement. |

| Acquisition Function | Balances exploration and exploitation by proposing the next most valuable point(s) to evaluate. | q-EI (Batch Expected Improvement). Note: Enables parallel, batch experimental design. |

| Adaptive Threshold (θ) | A calibrated parameter that controls the switch between global and local phases based on ER/ES ratio. | Determined via Protocol: Calibrating the Balance Threshold. Start with θ=1.5. |

| Benchmark Suite | Validates the optimization workflow's performance on problems with known solutions. | Synthetic: Branin, Hartmann functions. Industrial: Pharma QSAR datasets with published binding affinities. |

| High-Throughput Assay | The experimental system used to evaluate the objective function (e.g., binding affinity, yield). | Example: Fluorescence-based binding assay in 384-well plates. Critical for throughput. |

Implementing Local Refinement: Algorithms and Real-World Applications

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My gradient-based optimizer (e.g., L-BFGS-B) is converging to a poor local minimum from the starting point provided by my global search. What are the primary checks? A: This is a common issue in the refinement phase. Follow this protocol:

- Check Gradient Fidelity: Use finite-difference checks at the global solution seed point. Discrepancies >1e-6 suggest an error in the objective or gradient function implementation.

- Evaluate Starting Point Feasibility: Ensure the point satisfies all bound and nonlinear constraints. Infeasible starts can cause immediate failure.

- Adjust Optimizer Tolerance: For refinement, tighten

factr(L-BFGS-B) orgtolparameters. Suggested:factr=1e10(moderate) to1e12(tight). - Implement Multi-Start Refinement: Automate the launch of the local optimizer from the top N (e.g., 5-10) solutions of the global search, not just the best.

Q2: My quasi-Newton method fails with "non-positive definite Hessian" errors during molecular geometry optimization. How to resolve? A: This indicates ill-conditioning, often near saddle points or with numerical noise.

- Initial Hessian Strategy: Do not use a unit matrix. Use a scaled diagonal or, better, a calculated Hessian from a lower-level theory (e.g., MMFF94) for the initial guess.

- Trust-Region Enforcement: Use a trust-region method (e.g.,

trust-constrin SciPy) instead of line-search. It handles indefinite Hessians robustly. - Switch to Gradient-Only: As a diagnostic, temporarily use a gradient-only method (e.g., nonlinear conjugate gradient). If it proceeds, the issue is Hessian approximation.

- Regularization: Add a small Levenberg-Marquardt damping term (

lambda * I) to the Hessian update to enforce positive definiteness.

Q3: The surrogate model (e.g., Gaussian Process) in my optimization loop is inaccurate, leading to failed local refinements. How to improve it? A: Surrogate inaccuracy often stems from poor training data or hyperparameters.

- Active Learning for Refinement: In the local basin, enrich the surrogate training set with points from a Design of Experiment (DoE) around the best point before refinement. A spherical LHS with radius=0.2*norm(globalsearchrange) is effective.

- Hyperparameter Re-Optimization: Re-optimize GP kernel scales and noise parameters using MLE after the global phase and before local refinement.

- Hybrid Objective: For refinement, use a weighted sum of surrogate mean and standard deviation (Expected Improvement) to balance exploitation and exploration locally.

- Dimensionality Check: For >20 dimensions, consider using partial dependence plots to check if the surrogate has captured variable sensitivities.

Q4: How do I balance computational cost between global exploration and local refinement when optimizing a costly molecular property? A: This is the core of efficient workflow design. Implement an adaptive budget allocator.

Table 1: Comparative Performance of Local Methods for Refinement (Hypothetical Benchmark)

| Method | Avg. Function Calls to Converge | Success Rate (%) | Avg. Final Objective Improvement | Best For |

|---|---|---|---|---|

| BFGS (Gradient) | 45 | 85 | 15.2% | Smooth, low-dim problems |

| L-BFGS-B (Gradient) | 55 | 92 | 14.8% | Bounded, medium-dim problems |

| SLSQP (Gradient) | 65 | 88 | 16.1% | Constrained problems |

| DFP (Quasi-Newton) | 50 | 82 | 14.9% | Historical comparison |

| Surrogate-Assisted (EI) | 20 (surrogate) + 3 (true) | 95 | 17.5% | Very expensive objectives |

Experimental Protocol for Benchmarking Refinement Methods

- Objective: Compare the efficiency of local refinement methods post-global search.

- Procedure:

- Run a differential evolution global search for 1000 iterations on a test suite (e.g., 10 shifted Schwefel functions). Record the top 5 candidate solutions.

- For each local method (BFGS, L-BFGS-B, SLSQP), initialize from each of the 5 candidates with identical, tight tolerance settings (

gtol=1e-9). - For the surrogate-assisted method, build a GP model on the final 200 points from the global search. Refine the best point using the EI criterion, validating with a true function call every 5 surrogate steps.

- Measure: number of true function calls to reach

gtol, final objective value, and success (convergence within max iterations).

- Key Parameters: Population size=50, CR=0.9, F=0.8 (DE). GP kernel=Matern 5/2. Max local iterations=200.

Title: Hybrid Global-Local Optimization Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Toolkit for Optimization Experiments

| Item / Solution | Function in the "Experiment" | Example / Specification | |||

|---|---|---|---|---|---|

| Global Optimizer | Provides diverse starting points for local refinement. | Differential Evolution (SciPy), Bayesian Optimization (Ax), CMA-ES. | |||

| Gradient Calculator | Supplies 1st-order info for gradient-based methods. | Automatic Differentiation (JAX, PyTorch), Adjoint Solvers, Finite Differencing. | |||

| Hessian Approximator | Builds 2nd-order model for quasi-Newton methods. | BFGS, SR1, or L-BFGS update routines (from SciPy, NLopt). | |||

| Surrogate Model | Creates a cheap-to-evaluate proxy of the expensive objective. | Gaussian Process (GPyTorch, scikit-learn), Radial Basis Functions. | |||

| Convergence Monitor | Tracks progress and decides termination of refinement. | Custom logger checking `| | grad | < gtolandΔf < ftol` over window. |

|

| Benchmark Problem Set | Validates and compares the performance of the full toolkit. | COBYLA, Shifted-Schwefel, or proprietary molecular property functions. |

Title: Information Flow in a Local Refinement Step

Technical Support Center

Troubleshooting Guide

Issue T1: Solver Handoff Failure

- Symptoms: The global solver completes its run, but the local solver does not initiate. The workflow halts or errors with a "boundary condition not met" message.

- Diagnosis: This is typically a data formatting or interface mismatch. The output from the global solver (e.g., a candidate solution vector, basin identifier) is not in the precise format or structure expected by the local solver's input API.

- Resolution:

- Implement a validation and translation layer (an "adapter") between the solvers.

- Log the exact output of the global solver and compare it to the expected input schema of the local solver.

- Ensure numerical precision (e.g., single vs. double) and parameter bounds are explicitly passed and respected.

Issue T2: Premature Convergence or Cycling

- Symptoms: The coupled system converges to a suboptimal solution or appears to oscillate between a few points without refining.

- Diagnosis: Inadequate criteria for triggering the switch from global exploration to local refinement. The handoff may be happening too early (before the global basin is identified) or the local solver is being called repeatedly on the same region.

- Resolution:

- Implement and tune a robust handoff criterion. Common metrics are listed in Table 1.

- Introduce a tabu or caching mechanism to prevent the global solver from revisiting and re-submitting recently refined regions.

Issue T3: Prohibitive Computational Overhead

- Symptoms: The overall runtime of the coupled system is much higher than the sum of isolated solver runtimes, negating the benefit of integration.

- Diagnosis: Excessive communication overhead (e.g., file I/O, process spawning) or an inefficient parallelization strategy between the global and local components.

- Resolution:

- Shift from file-based to memory-based (e.g., shared, message-passing) inter-process communication.

- Use a lightweight, persistent local solver instance that can be warm-started, rather than launching a new process for each refinement task.

Frequently Asked Questions (FAQs)

Q1: What is the most critical parameter to configure in a coupled architecture? A1: The handoff criterion. This logic determines when and where to invoke the local solver based on the global solver's progress. A poorly set criterion is the primary cause of inefficiency or failure in integrated workflows.

Q2: Can I couple a gradient-based local solver with a derivative-free global solver? A2: Yes, this is a common and powerful pattern. The key is to ensure the global solver provides a sufficiently refined starting point within the convergence basin of the local solver. You may need to configure the local solver with conservative initial step sizes to bridge the fidelity gap.

Q3: How do I manage different levels of model fidelity between solvers? A3: Implement a surrogate or proxy model. Use a fast, lower-fidelity model (e.g., coarse-grid, molecular mechanics) for the global explorer. When a promising region is identified, switch to a high-fidelity model (e.g., all-atom, quantum mechanics) for the local refinement. Calibration between model fidelities is essential.

Q4: What are the best practices for parallelizing such a workflow? A4: Employ an asynchronous master-worker pattern. The global solver (master) continuously proposes candidate points. Idle workers request these points and conduct local refinements in parallel. Results are asynchronously fed back to inform the global search, preventing bottlenecks.

Data & Methodology

Table 1: Common Handoff Criteria for Solver Coupling

| Criterion | Metric Description | Best For | Typical Threshold Range |

|---|---|---|---|

| Population Cluster Density | Coefficient of variation of candidate points in a promising region. | Population-based global solvers (e.g., GA, PSO). | Variance < 0.1 * Search Space |

| Trust Region Radius | Size of the region around the best candidate where a local model is trusted. | Surrogate-assisted or Bayesian optimization. | Radius < 5-10% of domain |

| Probability of Improvement | Likelihood that a candidate point will outperform the current best. | Bayesian Optimization frameworks. | PoI > 0.15 |

| Gradient Estimate Norm | Magnitude of an estimated gradient (finite difference) at the candidate point. | Heuristic link to gradient-based local search. | ||Gradient| < 1e-3 |

Experimental Protocol: Benchmarking Coupled Architectures

Objective: Quantify the efficiency gain of a coupled Global-Local solver versus a standalone global solver for molecular conformation search.

- Problem Set: Select 5 small organic molecules with known conformational energy landscapes (e.g., from PubChem).

- Solver Setup:

- Global: Stochastic algorithm (e.g., Particle Swarm Optimization) using an MMFF94 force field.

- Local: Gradient-based algorithm (e.g., L-BFGS) using the same or a higher-fidelity (DFT) method.

- Coupling: Implement a handoff when the PSO cluster density criterion (Table 1) is met.

- Execution: For each molecule, run (a) Global-only for 10,000 iterations, and (b) Coupled system with a handoff budget of 200 local iterations.

- Metrics: Record the best energy found and wall-clock time to reach within 5% of the known global minimum. Average results over 20 independent runs to account for stochasticity.

Visualizations

Diagram Title: Basic Synchronous Coupling Workflow

Diagram Title: Asynchronous Master-Worker Parallel Architecture

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Integration Experiments |

|---|---|

| Optimization Framework (e.g., Pyomo, SciPy) | Provides the scaffolding to define objective functions, constraints, and manage solver interfaces. |

| Message Passing Interface (MPI) | Enables high-performance, parallel communication between globally distributed and locally focused solver processes. |

| Surrogate Model Library (e.g., scikit-learn, GPyTorch) | Used to build fast approximate models (Gaussian Processes, Neural Networks) for the global exploration phase. |

| Containerization (Docker/Singularity) | Ensures solver environment consistency and portability across HPC clusters, crucial for reproducible workflows. |

| Molecular Mechanics Force Field (e.g., OpenMM) | Acts as the fast, lower-fidelity "global" evaluator for conformational search in drug development. |

| Quantum Chemistry Package (e.g., PySCF, ORCA) | Acts as the high-fidelity "local" refiner for accurate electronic energy calculations. |

| Data Serialization (Protocol Buffers, HDF5) | Enables efficient, language-agnostic data transfer of complex candidate solutions between solver components. |

Troubleshooting Guides & FAQs

Q1: During a global optimization run, my algorithm fails to trigger local refinement even when it appears to have entered a promising parameter basin. What are the primary criteria checks that might be failing?

A: The failure to trigger local refinement is typically due to one or more of the following criteria not being met. Verify these conditions sequentially:

- Basin Stability Criterion: The point must reside within a region of parameter space where the objective function value has shown consistent improvement or minimal fluctuation (low variance) over a defined number of consecutive iterations (

N_stable). A common failure is a too-short stability window. - Gradient Norm Threshold: While not always computed in derivative-free global methods, proxy gradient estimates (e.g., from simplex vertices or recent steps) may be used. The norm must fall below a set threshold (

ε_grad). Check if your threshold is too strict. - Significant Improvement Criterion: The candidate point must represent an improvement over the current best solution by a margin greater than a noise tolerance level (

Δ_significant). This prevents refinement on statistically insignificant fluctuations. - Resource Budget Check: Local refinement may be withheld if the allocated budget (e.g., function evaluations, time) for the global phase is exhausted or if the remaining budget is insufficient for a minimum local refinement run.

Q2: What are robust experimental protocols for validating basin detection and refinement triggers in a synthetic test environment?

A: Follow this detailed protocol to validate your triggering logic:

Protocol: Validation of Refinement Triggers on Synthetic Functions

- Preparation: Select a set of standard benchmark functions with known basin locations (e.g., Rosenbrock, Rastrigin, Ackley functions).

- Instrumentation: Modify your global optimization algorithm to log all proposed trigger points, the state of all triggering criteria at that iteration, and the final decision (trigger/not trigger).

- Ground Truth Labeling: For each iteration, determine if the current solution is actually within a predefined radius (

r_basin) of a known global/local minimum (ground truth basin). - Run Experiments: Execute multiple optimization runs on the benchmark set. Record all data.

- Analysis: Calculate the True Positive Rate (correct triggers inside true basins) and False Positive Rate (erroneous triggers outside true basins) for your criteria. Adjust criterion thresholds to optimize this balance.

Q3: How do I quantify the efficiency gain from an adaptive local refinement trigger versus a fixed-interval schedule?

A: The efficiency gain is measured by comparing resource consumption to reach a target solution quality. Conduct the following comparative experiment:

Protocol: Comparative Efficiency Measurement

- Control Group: Run your optimization workflow with a fixed-interval local refinement schedule (e.g., trigger every

Kiterations). - Test Group: Run the same workflow with your adaptive basin-detection trigger.

- Metrics: For both groups, record the total number of function evaluations and computational time required for the best-found solution to reach a pre-specified objective value threshold (

V_target). - Calculation: Compute the percentage reduction in evaluations and time for the test group versus the control. Statistical significance should be assessed using multiple independent runs.

Table 1: Example Quantitative Results from a Benchmark Study (Hypothetical Data)

| Benchmark Function | Fixed-Trigger Evaluations (Mean) | Adaptive-Trigger Evaluations (Mean) | Reduction in Evaluations | Probability of Successful Trigger (True Positive) |

|---|---|---|---|---|

| Rosenbrock (2D) | 15,750 | 9,420 | 40.2% | 92% |

| Rastrigin (5D) | 52,300 | 38,950 | 25.5% | 85% |

| Ackley (10D) | 121,000 | 110,200 | 8.9% | 78% |

Workflow & Logical Diagram

Title: Logical Flow for Triggering Local Refinement

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for a Hybrid Optimization Workflow

| Item | Function/Explanation |

|---|---|

| Global Optimizer (e.g., CMA-ES, Bayesian Optimization) | Explores the broad parameter space to identify promising regions, avoiding premature convergence to local minima. |

| Local Refinement Solver (e.g., L-BFGS, Nelder-Mead) | Once a basin is detected, this efficient local algorithm converges rapidly to the precise local minimum. |

| Basin Detection Module | Contains the logic (criteria) for analyzing the optimizer's trajectory to signal a potential convergence basin. |

| Benchmark Function Suite | Synthetic landscapes with known properties for validating trigger accuracy and algorithm performance. |

| Performance Metrics Logger | Tracks key data (evaluations, time, objective value) to quantify the efficiency gains of the adaptive trigger. |

Troubleshooting Guides & FAQs

Q1: During conformer generation, my workflow stalls with the error "Failed to generate low-energy conformers." What are the primary causes? A: This typically indicates an issue with the input geometry or parameterization. First, verify the initial 3D structure is valid (no atomic clashes, reasonable bond lengths). Second, ensure the correct force field (e.g., MMFF94s, GAFF2) is applied for your molecule type (small organic vs. metallocomplex). Third, increase the maximum iteration limit for the energy minimization step. A protocol adjustment is to first perform a coarse conformational search using a faster method (e.g., ETKDG) followed by local refinement with the more precise force field.

Q2: The docking scores from my locally refined poses show high variance (>3 kcal/mol) between repeated runs on the same protein-ligand pair. How can I stabilize the results? A: High variance suggests insufficient sampling during the local refinement stage. Implement the following: 1) Increase the number of refinement steps (e.g., from 50 to 200 in the local optimizer). 2) Apply a stronger conformational restraint on the protein's backbone during ligand pose refinement to prevent unnatural protein drift. 3) Use a consistent and reproducible random seed for the optimization algorithm. The core thesis of efficient local refinement emphasizes balancing sampling depth with computational cost; a slight increase in refinement iterations often stabilizes scores without major time penalties.

Q3: After local refinement of docked poses, the ligand is distorted with unusual bond angles. What went wrong? A: This is a failure in the force field's bonded parameters or an over-aggressive optimization. Apply this protocol: First, check that the ligand was correctly parameterized (atom types assigned correctly). Second, in the local refinement script, increase the weight of the bonded terms (bonds, angles, dihedrals) relative to the non-bonded (vdW, electrostatic) terms in the scoring function. This ensures molecular integrity is prioritized during the local search.

Q4: How do I quantify the improvement from adding a local refinement step to my global docking pipeline? A: You must compare key metrics with and without refinement. Run your standard global docking (e.g., Vina, QuickVina 2) on a benchmark set, then apply your local refinement (e.g., using OpenMM for minimization). Compare the results as shown in Table 1.

Table 1: Docking Performance Metrics With vs. Without Local Refinement

| Metric | Global Docking Only | Global + Local Refinement | Measurement Protocol |

|---|---|---|---|

| RMSD to Crystal Pose (Å) | 2.5 ± 0.8 | 1.2 ± 0.4 | Calculate after aligning protein backbone. |

| Average Docking Score (kcal/mol) | -7.1 ± 1.5 | -8.9 ± 1.2 | More negative scores indicate stronger predicted binding. |

| Pose Ranking Accuracy (%) | 65% | 89% | % of cases where top-ranked pose is <2.0 Å RMSD to crystal. |

| Computational Time (sec/ligand) | 45 ± 10 | 68 ± 12 | Measured on a standard CPU node. |

Experimental Protocol for Benchmarking:

- Dataset Preparation: Select the PDBbind core set (or a relevant subset of 50-100 protein-ligand complexes with high-resolution crystal structures).

- Global Docking: For each complex, separate the ligand, generate 10 conformers, and dock into the prepared protein binding site using your chosen global method (e.g., exhaustiveness=32 in Vina). Save the top 10 poses.

- Local Refinement: For each of the top 10 global poses, perform a local energy minimization. Protocol: Use the OpenMM toolkit with the AMBER ff14SB force field for the protein and GAFF2 for the ligand. Solvate implicitly (GBSA). Run 1000 steps of steepest descent minimization.

- Re-scoring: Score the refined poses using the same scoring function as the global docker for fair comparison.

- Analysis: For each complex, identify the pose with the best score after refinement. Calculate its RMSD to the crystal ligand pose. Aggregate statistics across the entire dataset.

Q5: My locally refined poses cluster into very similar conformations, suggesting a lack of diversity. How can I maintain diversity while improving accuracy? A: This is a key challenge in efficient local refinement. To address it, modify your workflow to apply local refinement to a broader set of initial poses (e.g., top 20 instead of top 5) and incorporate a diversity filter post-refinement. Cluster the refined poses by RMSD and select the best-scoring pose from each major cluster. This aligns with the thesis of using local refinement to polish multiple promising regions identified by the global search.

Diagram: Workflow for Diverse & Accurate Pose Refinement

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Conformer Search & Docking Experiments

| Item/Software | Function & Application | Key Consideration |

|---|---|---|

| RDKit | Open-source cheminformatics toolkit for ligand preparation, conformer generation (ETKDG), and basic molecular operations. | The default ETKDG algorithm is fast but may require parameter tuning (numConfs) for complex macrocycles. |

| Open Babel / Gypsum-DL | Used for standardizing molecular formats, generating protonation states, and tautomers at a specified pH. | Critical for preparing a realistic, enumerative set of ligand states before docking. |

| OpenMM | High-performance toolkit for molecular dynamics and energy minimization. Used for local pose refinement with explicit force fields. | Allows precise control over the refinement protocol (steps, constraints, implicit solvent model). |

| AutoDock Vina / QuickVina 2 | Widely-used global docking engines for rapid sampling of the protein's binding site. | Serves as the initial, broad sampling stage. Exhaustiveness parameter directly impacts initial pose quality. |

| AMBER/GAFF or CHARMM/CGenFF | Force field parameter sets for proteins and small molecules, providing the energy terms for local refinement. | Choice depends on system compatibility; GAFF2 is broadly applicable for drug-like ligands. |

| PDBbind Database | Curated collection of protein-ligand complexes with binding affinity data, used for method validation and benchmarking. | The "core set" is the standard for rigorous accuracy testing against known crystal structures. |

Diagram: Thesis Context of Local Refinement in Optimization

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During pose refinement, my simulation crashes with the error "NaN (not a number) detected in forces." What are the common causes and solutions? A: This typically indicates an instability in the molecular dynamics (MD) engine.

- Cause 1: Overlapping atoms due to a poor initial pose or bad van der Waals parameters.

- Solution: Re-center the ligand in the binding site with a small initial minimization step. Use a

soft-corepotential during the initial equilibration phase.

- Solution: Re-center the ligand in the binding site with a small initial minimization step. Use a

- Cause 2: Incorrectly assigned protonation states at the chosen simulation pH.

- Solution: Use a tool like

PROPKAto re-calculate protonation states of protein residues (especially Asp, Glu, His, Lys) before system preparation. Ensure ligand protonation is correct.

- Solution: Use a tool like

- Cause 3: An unstable covalent bond parameter for a modified residue or ligand.

- Solution: Check the force field assignment. For non-standard residues/ligands, validate parameters with QC methods before simulation.

Q2: My calculated relative binding free energy (ΔΔG) between two similar ligands has an error > 2.0 kcal/mol, which is unusable. What steps should I take to debug? A: High error suggests poor phase space overlap or sampling insufficiency.

- Step 1 - Check Lambda Schedule: For alchemical transformations with large structural changes, increase the number of intermediate λ windows (e.g., from 12 to 20), especially near end-states (λ=0.0 and λ=1.0).

- Step 2 - Analyze Overlap: Plot the potential energy difference distributions between adjacent λ windows. Poor overlap appears as separate peaks.

- Protocol: Use analysis tools (e.g.,

alchemical-analysis.py) to generate the overlap matrix. If off-diagonal elements are near zero, sampling is insufficient or the schedule is wrong.

- Protocol: Use analysis tools (e.g.,

- Step 3 - Extend Sampling: Increase simulation time per λ window. A good starting point is 5 ns per window for complex and solvent legs. For difficult transformations, 10-20 ns may be required.

Q3: After running an ensemble of refinements, how do I choose the final "best" pose when scores conflict (e.g., MM/GBSA suggests Pose A, but the binding pocket hydration analysis suggests Pose B)? A: Implement a consensus decision protocol.

- Cluster the Poses: Cluster refined poses by RMSD (e.g., 2.0 Å cutoff).

- Apply Multi-Metric Scoring: Create a ranked table for each cluster representative.

- Prioritize Experimental Data: If a crystallographic water network is known, the pose that best accommodates it is preferred.

- Perform a Brief Unbiased Simulation: Run a short (50-100 ns) unbiased MD of the top contenders. The pose with greater stability (lower RMSD) and more persistent key interactions is favored.

Q4: In the context of global optimization workflows, when should I use fast VSGB 2.0 scoring versus more rigorous but slower PMF-based refinement? A: The choice is a trade-off between throughput and accuracy, dependent on the workflow stage.

| Workflow Stage | Sample Size | Recommended Method | Typical Compute Time per Pose | Purpose |

|---|---|---|---|---|

| Pre-screening | 1,000 - 10,000 | Fast Docking & MM/GBSA (VSGB) | 2-10 minutes | Filter to top 50-100 candidates. |

| Local Refinement | 10 - 100 | MM/GBSA (VSGB 2.0) with MD | 1-4 hours | Rank poses, assess interaction stability. |

| High-Confidence | 1 - 10 | Alchemical (PMF) Methods (TI, FEP) | 24-72 hours | Quantitative ΔΔG for lead optimization. |

Experimental Protocols

Protocol 1: MM/GBSA Refinement with Explicit Solvent Sampling This protocol refines docked poses and estimates binding affinity.

- System Preparation: Use

tleap(Amber) orpdb2gmx(GROMACS) to solvate the protein-ligand complex in an orthorhombic water box (10 Å buffer), add ions to neutralize, and optionally add 150 mM NaCl. - Minimization & Equilibration:

- Minimize solvent and ions with protein-ligand heavy atoms restrained (500 steps steepest descent, 500 conjugate gradient).

- Heat system from 0 K to 300 K over 100 ps in NVT ensemble with restaints.

- Equilibrate density at 300 K/1 bar over 200 ps in NPT ensemble.

- Release restraints over 500 ps of NPT equilibration.

- Production MD: Run an unrestrained MD simulation for 20-50 ns. Use a 2 fs timestep, PME for electrostatics, and maintain temperature/pressure with a Langevin thermostat and Berendsen barostat.

- Trajectory Sampling & MM/GBSA: Extract snapshots every 100 ps from the last 10 ns. For each snapshot, calculate the binding free energy using the MM/GBSA model (e.g.,

MMPBSA.pyin AmberTools). The VSGB 2.0 solvation model is recommended.

Protocol 2: Relative Binding Free Energy (RBFE) Calculation using Thermodynamic Integration (TI) This protocol calculates ΔΔG for two ligands (LigA -> LigB).

- Topology Preparation: Create dual-topology hybrid structures for the ligand in complex and in solvent. Ensure proper mapping of atoms between LigA and LigB.

- Lambda Scheduling: Define a set of 12-24 λ values for coupling both electrostatic and Lennard-Jones interactions. Use a non-linear schedule (e.g.,

lambda_powers = 2) to place more points near end-states. - Simulation at Each Lambda:

- For each λ window, minimize, equilibrate (as in Protocol 1), and run production MD (2-5 ns per window).

- Use a soft-core potential for Lennard-Jones interactions to avoid endpoint singularities.

- Analysis: For each λ window, calculate the ensemble average of

dV/dλ. Numerically integrate (∫ <dV/dλ> dλ) over λ using the trapezoidal rule or Simpson's method. ΔΔGbind = ΔGcomplex - ΔG_solvent. Estimate statistical error using bootstrapping.

Diagrams

Global Optimization with Local Refinement Workflow (73 chars)

Troubleshooting High FEP/TI Error (45 chars)

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Rationale |

|---|---|

| AMBER/GAFF Force Fields | Provides parameters for organic drug-like molecules (GAFF) and standard bio-polymers (ff19SB). Essential for consistent MD and free energy calculations. |

| VSGB 2.0 Solvation Model | A fast, implicit solvation model with good accuracy for MM/GBSA, enabling rapid scoring of refined poses from MD trajectories. |

| Hydrogen Mass Repartitioning (HMR) | Allows a 4 fs MD timestep by increasing the mass of hydrogen atoms, significantly accelerating conformational sampling without loss of accuracy. |

| Soft-Core Potential | Prevents simulation instabilities (NaNs) in alchemical calculations by removing singularities in the Lennard-Jones potential when atoms are created/annihilated. |

| Orthorhombic TIP3P Water Box | The standard explicit solvent environment for hydration. A 10-12 Å buffer ensures the protein is fully solvated and minimizes periodic boundary artifacts. |

| Multi-Ensemble Thermostat (e.g., Langevin) | Maintains correct temperature distribution and aids sampling by introducing stochastic collisions, crucial for NVT ensemble simulations. |

Troubleshooting Guide & FAQs

Q1: In my GA for molecular docking, the population converges to a suboptimal ligand pose too quickly. How can I maintain diversity?

A: This indicates premature convergence. Implement a niching or fitness sharing technique. The following protocol is recommended:

- Calculate the phenotypic distance (e.g., RMSD) between all individuals in the population.

- For each individual i, calculate a shared fitness: f'_i = f_i / (sum(sh(d_ij))), where sh(d) is a sharing function (typically 1 if d < σshare, else 0) and σshare is the niche radius.

- Proceed with selection using the shared fitness values. This penalizes crowded solutions. Key Parameter Table:

| Parameter | Typical Range for Docking | Function |

|---|---|---|

| Niche Radius (σ_share) | 2.0 - 5.0 Å | Defines phenotypic distance for sharing |

| Sharing Function Alpha (α) | 1.0 | Controls shape of sharing function |

| Population Size | 100 - 500 | Larger sizes aid diversity |

Q2: My Evolution Strategy (ES) for force field parameter optimization shows high variance in offspring performance. How do I stabilize it?

A: High variance suggests unstable step-size adaptation or excessive mutation strength.

- Switch from a simple (1,λ)-ES to a (μ/ρ,λ)-ES with recombination (e.g., μ=15, ρ=5, λ=100). Recombination of parental parameters stabilizes the search.

- Implement the derandomized Cumulative Step-size Adaptation (CSA) instead of the 1/5th success rule. CSA uses a longer-term correlation of successful steps.

Protocol for CSA Update:

- Initialize evolution path pσ(0) = 0.

- Each generation g, update: pσ(g+1) = (1 - cσ)pσ(g) + sqrt(cσ(2 - cσ)μeff) C(g)^(-1/2) (m(g+1) - m(g)) / σ(g)

- Then adapt step-size: σ(g+1) = σ(g) * exp( ||pσ(g+1)|| / χn - 1 ) Where cσ ~ 1/√n, μeff is the variance effective selection mass, χn is expectation of ||N(0,I)||.

Q3: How do I effectively balance exploration and exploitation in a hybrid GA-ES workflow for conformer search?

A: Use a staged approach where GA performs global exploration and ES performs local refinement. Experimental Protocol:

- Phase 1 (GA - Exploration): Run GA for N generations (N = 50-100) with a high mutation rate (e.g., 0.1 per gene) and relaxed selection pressure (tournament size k=2).

- Phase 2 (Transition): Select the top 10% of GA solutions as seeds. Initialize μ parents for ES around each seed with small Gaussian noise.

- Phase 3 (ES - Exploitation): Run a (μ+λ)-ES on each seed cluster for local refinement. Use a decaying step-size schedule: σ(t) = σ_initial * exp(-t / τ), with τ=20 generations.

Table 1: Performance Comparison of Convergence Preventers in GA (Protein-Ligand Docking)

| Method | Average Final Best Energy (kcal/mol) | Standard Deviation | Avg. Generations to First Improvement |

|---|---|---|---|

| Fitness Sharing (σ=3Å) | -9.34 | 0.41 | 12 |

| Deterministic Crowding | -8.95 | 0.58 | 8 |

| Standard GA (Baseline) | -7.22 | 1.05 | 5 |

Table 2: (3/3,21)-ES vs. (1,21)-ES on Force Field Parametrization

| Metric | (3/3,21)-ES with CSA | (1,21)-ES with 1/5th Rule |

|---|---|---|

| Avg. RMSE vs. QM Data (kcal/mol) | 1.56 | 2.87 |

| Parameter Standard Deviation (Final Gen) | 0.08 | 0.31 |

| Generations to Reach Target (RMSE<2.0) | 142 | Did Not Converge |

Visualizations

Title: Hybrid GA-ES Workflow for Conformer Search

Title: Evolution Strategy with Cumulative Step-Size Adaptation

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function in GA/ES Optimization | Example/Note |

|---|---|---|

| Fitness Evaluation Engine | Computes the objective function (e.g., binding affinity). The core of the optimization loop. | Molecular docking software (AutoDock Vina, GOLD), Quantum Mechanics (QM) calculation package (Gaussian, ORCA). |

| Genetic Representation Library | Defines how a solution (e.g., a molecule, set of parameters) is encoded as a genome. | SMILES string, torsion angle array, real-valued parameter vector. Critical for crossover/mutation design. |

| Niching & Diversity Module | Prevents premature convergence by maintaining population diversity. | Fitness sharing, deterministic crowding, or speciation algorithms. Often requires custom implementation. |

| Step-Size Adaptation Controller | Dynamically adjusts mutation strength in ES for stable convergence. | Cumulative Step-size Adaptation (CSA) or Mirrored Sampling with Pairwise Selection. More robust than the 1/5th rule. |

| Parallelization Framework | Distributes fitness evaluations across compute resources to manage wall-clock time. | MPI for distributed clusters, OpenMP for multi-core nodes, or cloud-based task queues (AWS Batch). |

| Analysis & Visualization Suite | Tracks convergence, population diversity, and solution quality over generations. | Custom scripts (Python/matplotlib) to plot fitness trends, parameter distributions, and solution clusters. |

Overcoming Pitfalls: Optimizing Your Refinement Strategy for Robust Results

Technical Support Center

Troubleshooting Guide

Issue: Optimization algorithm stops improving objective function value prematurely. Symptoms:

- Stagnation of fitness/energy score before expected convergence.

- Repeated sampling of similar parameter sets with no diversity.

- Failure to reach known global optimum in benchmark tests.

Diagnostic Steps:

- Monitor Population Diversity: Track the standard deviation of parameter values or solution vectors across iterations. A rapid decline indicates premature convergence.

- Run Multiple Random Seeds: Execute the optimization workflow from different initial random seeds. Consistent convergence to the same suboptimal value suggests a local minimum trap.

- Perform Landscape Probing: Sample points in a radius around the converged solution. If better scores are found nearby, convergence was premature.

Corrective Actions:

- Increase Exploration: Adjust algorithm hyperparameters (e.g., increase mutation rate in evolutionary algorithms, temperature in simulated annealing).

- Hybridize Methods: Switch to a local refinement method only after a global method has broadly explored the parameter space.

- Implement Restart Mechanisms: Upon detecting stagnation, re-initialize a portion of the search population while keeping the current best solution.

Frequently Asked Questions (FAQs)

Q1: How can I distinguish between premature convergence and legitimate convergence to the global optimum? A: Legitimate convergence is typically accompanied by high confidence across multiple runs. Use statistical benchmarks: if 95% of independent runs from diverse starting points cluster within a tight tolerance of the same optimal value, it is likely global. Premature convergence will show clusters at different, suboptimal values.

Q2: My drug candidate docking simulation converges to a binding pose with a -9.2 kcal/mol score. How do I know if a better pose exists? A: This is a classic local minima problem in molecular docking. Employ a multi-pronged approach: 1) Use a consensus scoring function from different algorithms (see Table 1), 2) Perform a meta-dynamics simulation to push the ligand out of the current binding pocket and re-dock, 3) Use a genetic algorithm with a high initial mutation rate for pose generation before local refinement.

Q3: What is the most computationally efficient way to escape a known local minimum in a high-dimensional parameter space? A: Directed escape strategies are more efficient than full restarts. Based on recent literature, two effective protocols are:

- Nudged Elastic Band (NEB): Maps a minimum energy path away from the local minimum.

- Iterated Local Search (ILS): Applies a strong, random perturbation to the current best solution, performs local search, and accepts the new solution based on a meta-criterion.

Q4: Are there specific optimization algorithms more resistant to this failure mode in the context of molecular design? A: Yes. Benchmark studies indicate that algorithms incorporating adaptive exploration/exploitation balance perform better.

Table 1: Comparison of Optimization Algorithm Robustness to Local Minima

| Algorithm Class | Typical Use Case | Premature Convergence Risk | Suggested Mitigation | Avg. Additional Function Calls for Escape* |

|---|---|---|---|---|

| Gradient Descent | Local Refinement | Very High | Use multiple random starts | N/A (Restart Required) |

| Simulated Annealing | Global Search | Medium | Adaptive cooling schedule | 1,200 - 2,500 |

| Covariance Matrix Adaptation ES | Continuous Param. Optimization | Low | Built-in adaptation | 300 - 800 |

| Differential Evolution | Molecular Conformation | Medium-Low | Increase crossover rate | 500 - 1,200 |

| Particle Swarm Optimization | Protein Folding | Medium | Dynamic topology switching | 700 - 1,500 |

*Estimated calls for a 50-dimensional problem, based on 2023 benchmarking studies.

Experimental Protocols

Protocol A: Benchmarking Algorithm Susceptibility to Local Minima Objective: Quantify the propensity of an optimization algorithm to converge prematurely on a known test landscape.

- Select a benchmark function with documented local and global minima (e.g., Rastrigin function).

- Configure the optimization algorithm with a conservative convergence threshold (e.g., ∆f < 1e-10 over 50 iterations).

- Execute 100 independent runs, each with a unique random seed.

- Record the final converged value and the number of iterations/function calls.

- Analysis: Calculate the percentage of runs that converged to the global optimum vs. any local optimum. Compute the average number of function calls for successful vs. failed runs.

Protocol B: Iterated Local Search (ILS) for Conformational Sampling Objective: Efficiently escape local energy minima in molecular conformational search.

- Initialization: Generate an initial candidate molecular conformation

C_current. Perform a local energy minimization (e.g., using MMFF94) to find the local minimumC_best. - Perturbation: Apply a strong stochastic perturbation to

C_best(e.g., random torsion angle adjustments of ±90-180°) to createC_perturbed. - Local Search: Perform local energy minimization on

C_perturbedto yieldC_candidate. - Acceptance Criterion: If the energy of

C_candidateis lower thanC_best, or meets a probabilistic criterion (e.g., Metropolis criterion at a low annealing temperature), setC_best = C_candidate. - Iteration: Return to Step 2 for a fixed number of cycles or until a target energy is achieved.

- Validation: Cluster final conformations and compare to known crystal structures or ab initio predictions.

Diagrams

Title: Workflow for Detecting and Escaping Premature Convergence

Title: Iterated Local Search (ILS) Escape Protocol Cycle

The Scientist's Toolkit: Research Reagent Solutions

| Item Name | Supplier/Example | Function in Context |

|---|---|---|

| Benchmark Function Suites | COCO (Comparing Continuous Optimizers), NoisyOPT | Provides standardized, multi-modal landscapes with known minima to test algorithm robustness against premature convergence. |

| Metaheuristics Libraries | DEAP (Python), MEIGO (MATLAB), Nevergrad (Facebook) | Open-source frameworks providing implementations of evolutionary algorithms, swarm intelligence, and other global optimizers with tunable parameters to balance exploration/exploitation. |

| Molecular Force Fields | OpenMM, RDKit (MMFF94, UFF) | Provides the energy scoring functions for local refinement steps in conformational search and molecular docking, defining the landscape's local minima. |

| Docking & Scoring Software | AutoDock Vina, GNINA, Schrödinger Glide | Integrates global search (e.g., Monte Carlo) with local refinement (e.g., gradient-based) for pose prediction; their scoring functions are the objective landscape. |

| Adaptive Parameter Controllers | irace (R), SMAC3 (Python) | Automated algorithm configuration tools to optimize hyperparameters (like mutation rate) to avoid premature convergence for a specific problem class. |

| Visualization & Analysis Tools | Matplotlib (Python), Plotly, PCA & t-SNE libraries | Critical for monitoring population diversity, convergence traces, and visualizing high-dimensional parameter spaces in lower dimensions to diagnose stagnation. |

Technical Support & Troubleshooting Center

Frequently Asked Questions (FAQs)

Q1: During a hybrid global-local optimization run, the process is consuming excessive time on the global search phase, delaying critical local refinement. How can I reallocate computational budget effectively?

A1: This indicates a suboptimal global budget threshold. Implement an adaptive budget controller. Monitor the rate of improvement in the global objective function. Pre-define a convergence slope threshold (e.g., <1% improvement per 100 iterations). Once met, the system should automatically re-allocate remaining compute hours to the local refinement phase. The protocol below provides a detailed method.

Q2: My local refinement steps are failing to improve solutions found by the global optimizer, often worsening the score. What are the primary troubleshooting steps?

A2: This is typically a mismatch in fidelity between models. Follow this checklist:

- Verify Model Consistency: Ensure the local refinement algorithm (e.g., molecular dynamics, gradient-based solver) is operating on the same mathematical or physical model used for the global score evaluation. Inconsistencies in force fields or approximation levels are a common culprit.

- Check Parameter Transferability: Validate that all parameters from the global solution are correctly mapped to the local solver's input schema.

- Adjust Local Search Radius: The local optimizer's initial step size or search radius may be too large, causing it to move away from the promising global basin. Reduce the trust region or step size by 50% as an initial test.

Q3: How do I determine the optimal initial split (e.g., 70/30, 60/40) between global and local computation for a novel problem in drug candidate scoring?

A3: There is no universal optimum. Perform a rapid preliminary calibration experiment using a down-sampled dataset or a simplified proxy model. The table below, synthesized from recent literature, provides a starting heuristic based on problem characteristics.

Table 1: Heuristic for Initial Computational Budget Allocation

| Problem Characteristic | High-Dimensional (>100 params) Rugged Landscape | Lower-Dimensional (<50 params) Smooth Basins | Noisy/Stochastic Objective Function |

|---|---|---|---|

| Recommended Global % | 75-85% | 50-65% | 60-75% |

| Key Rationale | Requires extensive exploration to avoid local minima. | Less exploration needed; refinement is key. | Global phase must average noise to find true promising regions. |

| Primary Global Method | Bayesian Optimization, CMA-ES | Efficient Global Optimization (EGO) | Surrogate-based Optimization (e.g., Kriging) |

| Primary Local Method | Quasi-Newton (L-BFGS-B) | Newton-type, Gradient Descent | Pattern Search, Direct Search |

Detailed Experimental Protocols

Protocol 1: Calibrating Adaptive Budget Switching

Objective: To dynamically shift computational resources from global exploration to local exploitation based on real-time convergence metrics.

Methodology:

- Setup: Define total computational budget

B_total(e.g., in CPU-hours or iteration count). - Initial Allocation: Assign

B_global_init = 0.7 * B_total. - Monitoring Window: During global optimization, track the best objective value