Taming Complexity: Advanced Strategies for High-Dimensional Optimization in Molecular Design and Drug Discovery

Abstract

High-dimensional optimization presents a formidable 'curse of dimensionality' challenge in molecular science, from simulating protein folding to designing novel therapeutics. This article provides a comprehensive guide for researchers and drug development professionals, addressing the full lifecycle of tackling these complex problems. We begin by defining the foundational challenge—why molecular systems are inherently high-dimensional and what makes their optimization difficult. We then explore and compare core methodological frameworks, including dimensionality reduction techniques, enhanced sampling algorithms, and machine learning-driven approaches. The guide delves into practical troubleshooting and optimization strategies for improving convergence, managing computational cost, and avoiding common pitfalls. Finally, we cover critical validation, benchmarking, and comparative analysis protocols to ensure robustness and reliability. By synthesizing current methodologies and best practices, this article serves as a roadmap for navigating the intricate energy landscapes of biological macromolecules and accelerating rational molecular design.

Navigating the Curse of Dimensionality: Why Molecular Landscapes Are Inherently Complex

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: My molecular dynamics (MD) simulation is not converging to a stable energy minimum. What could be wrong? A: This often stems from insufficient sampling of the conformational space or incorrect force field parameters. First, verify that your simulation time is adequate for the system's relaxation (often >100 ns for local conformational changes, and microseconds or longer for protein folding). Check dihedral angle distributions for signs of unintended restraints or poorly parameterized torsions. Re-evaluate your solvation model and ion concentration. Running multiple independent replicates from different starting conformations can help distinguish sampling issues from parameter problems.

Q2: How do I choose between explicit and implicit solvent models for conformational sampling? A: The choice balances computational cost against accuracy needs. Use explicit solvent (e.g., TIP3P, SPC/E water models) for studying specific solvent interactions, binding events, or when electrostatic screening is critical. Use implicit solvent (e.g., GBSA models) for rapid, high-throughput scanning of large conformational spaces or for very large systems. Table 1 below gives a side-by-side comparison.

Table 1: Explicit vs. Implicit Solvent Model Comparison

| Parameter | Explicit Solvent (e.g., TIP3P) | Implicit Solvent (e.g., GBSA) |

|---|---|---|

| Computational Cost | High (~10-100x more than implicit) | Low |

| Sampling Speed | Slow | Fast |

| Handling of Solvation Effects | Atomistic, includes specific H-bonds | Mean-field, approximate |

| Best For | Detailed mechanism studies, binding free energy | Conformational searching, docking, large-scale screening |

Q3: When performing conformational clustering, how do I determine the optimal number of clusters? A: There is no universal answer, but a systematic protocol is recommended. 1) Calculate pairwise RMSD for all saved frames. 2) Cluster with an algorithm such as GROMOS (Daura et al.) or k-means. 3) Plot a quality metric against the number of clusters: look for the maximum silhouette score, the minimum Davies-Bouldin index, or an "elbow" beyond which extra clusters bring little improvement. 4) Ensure your dominant clusters collectively represent >70-80% of the population. 5) Validate by checking if representatives from different clusters have distinct functional relevance (e.g., active site accessibility).

Q4: My energy landscape visualization is too complex and high-dimensional to interpret. What simplification strategies are valid? A: Dimensionality reduction is essential. Principal Component Analysis (PCA) applied to backbone dihedrals or Cartesian coordinates is standard. Use the first 2-3 principal components, which typically capture >60% of the variance, to create a 2D/3D landscape. Alternatively, use time-lagged independent component analysis (tICA) to find slow, functionally relevant motions. Always project free energy (as -kT ln(P)) onto these collective variables to create a Free Energy Surface (FES). Avoid over-interpreting minor basins containing <5% population.

Experimental Protocol: Principal Component Analysis (PCA) of MD Trajectories for Landscape Visualization

- System Preparation: Run a well-equilibrated MD simulation (NPT ensemble, stable RMSD).

- Trajectory Processing: Align all frames to a reference structure (e.g., the protein backbone) to remove rotational/translational motion.

- Coordinate Extraction: Extract the coordinates of the atoms of interest (e.g., Cα atoms).

- Covariance Matrix Construction: Calculate the covariance matrix of the atomic positional fluctuations.

- Diagonalization: Diagonalize the covariance matrix to obtain eigenvectors (PCs) and eigenvalues (variance captured).

- Projection: Project the trajectory onto the first 2-3 principal components.

- Free Energy Surface Calculation: Bin the 2D projection and calculate the probability (P) in each bin. Compute the free energy as G = -kB * T * ln(P), where kB is Boltzmann's constant and T is temperature.

- Visualization: Plot the free energy as a contour or surface plot.
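The free energy step of this protocol can be sketched in a few lines. This is a minimal illustration, assuming the trajectory has already been projected onto two principal components; the function name `free_energy_surface`, the bin width, and the toy data are hypothetical and not taken from any particular package.

```python
import math
from collections import Counter

KB_KCAL = 0.0019872041  # Boltzmann constant in kcal/(mol*K)

def free_energy_surface(points, bin_width, temperature=300.0):
    """Bin 2D projections and return {bin_index: G in kcal/mol}.

    G = -kB * T * ln(P) per populated bin, shifted so the global
    minimum is zero. Empty bins are simply absent (infinite G).
    """
    counts = Counter(
        (math.floor(x / bin_width), math.floor(y / bin_width))
        for x, y in points
    )
    total = sum(counts.values())
    kT = KB_KCAL * temperature
    g = {b: -kT * math.log(n / total) for b, n in counts.items()}
    g_min = min(g.values())
    return {b: value - g_min for b, value in g.items()}

# Toy projection (assumed data): 90% of frames in one basin, 10% in another.
toy_points = [(0.1, 0.1)] * 90 + [(1.1, 0.1)] * 10
toy_fes = free_energy_surface(toy_points, bin_width=1.0)
for bin_index, g_value in sorted(toy_fes.items()):
    print(bin_index, round(g_value, 3))
```

Because sparsely populated bins carry large statistical error, it is usually worth masking bins with very few counts before plotting.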

Title: Workflow for PCA-Based Free Energy Surface Calculation

Q5: What are common pitfalls in calculating free energy differences between conformers, and how can I avoid them? A: Major pitfalls include: 1) Inadequate sampling of intermediate states in methods like umbrella sampling or metadynamics. Solution: Extend simulation time and confirm overlap between histogram windows. 2) Poor choice of reaction coordinate that doesn't distinguish states. Solution: Use collective variables from PCA/tICA. 3) Ignoring entropy contributions, especially in flexible systems. Solution: Employ methods that compute vibrational entropy (e.g., normal mode analysis on converged clusters) or use thermodynamic integration methods. See the protocol below.

Experimental Protocol: Umbrella Sampling for Free Energy Difference Along a Reaction Coordinate

- Define Reaction Coordinate (ξ): Choose a CV, such as a distance, angle, or dihedral, that distinguishes the starting (A) and target (B) conformers.

- Steered MD (Optional): If A and B are separated by a high barrier, perform steered MD to generate initial pathways.

- Set Up Windows: Define 20-40 overlapping windows along ξ, spaced ~0.1-0.5 Å (or rad) apart.

- Apply Restraints: Run an independent simulation in each window, applying a harmonic restraint (force constant 10-50 kcal/mol/Ų) to keep the system near the window's center.

- Equilibration & Production: Equilibrate each window (50-100 ps), then run production MD (1-10 ns per window).

- Analysis with WHAM: Use the Weighted Histogram Analysis Method to unbias the restraints and combine data from all windows to produce the potential of mean force (PMF) along ξ.

- Error Analysis: Perform bootstrapping or block averaging to estimate statistical error in the free energy difference (ΔG_A→B).
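Window spacing and force constant jointly decide whether neighbouring histograms overlap. The sketch below estimates that overlap under stated assumptions: the restraint is U = 0.5 * k * (ξ - ξ0)² (some MD packages omit the factor 0.5), the underlying PMF is locally flat so each window samples a Gaussian of width √(kB*T/k), and the temperature is 300 K. The function name `histogram_overlap` is hypothetical.

```python
import math

KB_KCAL = 0.0019872041  # Boltzmann constant in kcal/(mol*K)

def histogram_overlap(spacing, force_constant, temperature=300.0):
    """Overlap coefficient (0..1) of two neighbouring umbrella windows.

    Assumes U = 0.5 * k * (xi - xi0)**2 and a flat PMF, so each window
    samples a Gaussian with sigma = sqrt(kB * T / k). For two equal-width
    Gaussians whose centers are `spacing` apart, the shared area is
    erfc(spacing / (2 * sqrt(2) * sigma)).
    """
    sigma = math.sqrt(KB_KCAL * temperature / force_constant)
    return math.erfc(spacing / (2.0 * math.sqrt(2.0) * sigma))

# Compare the extremes of the ranges quoted in the protocol
# (spacing in Angstrom, k in kcal/mol/Angstrom^2).
for k in (10.0, 50.0):
    for spacing in (0.1, 0.5):
        print(f"k={k:5.1f} spacing={spacing:.1f} "
              f"overlap={histogram_overlap(spacing, k):.3f}")
```

Under these assumptions, the stiffest restraint combined with the widest spacing gives very little overlap, which is the situation WHAM handles poorly; a real PMF with slopes will shift and narrow the distributions further, so inspect the actual histograms as well.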

Title: Umbrella Sampling Windows Along a Reaction Path

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Conformational Landscape Analysis

| Tool / Reagent | Function & Application | Example / Note |

|---|---|---|

| Molecular Dynamics Software | Engine for sampling conformational space through numerical integration of equations of motion. | GROMACS, AMBER, NAMD, OpenMM. Choice depends on force field and GPU support. |

| Force Field | Mathematical model defining potential energy terms (bonds, angles, dihedrals, non-bonded) for the system. | CHARMM36, AMBER ff19SB, OPLS-AA. Must match your molecule type (protein, nucleic acid, lipid). |

| Explicit Solvent Model | Represents water and ions as discrete molecules for accurate solvation dynamics. | TIP3P, TIP4P, SPC/E. TIP3P is most common and paired with CHARMM/AMBER. |

| Implicit Solvent Model | Approximates solvent as a continuous dielectric medium for faster sampling. | Generalized Born (GB) models, Poisson-Boltzmann (PB). Used in docking and initial scans. |

| Enhanced Sampling Plugin | Accelerates escape from local minima and crossing of high barriers. | PLUMED (integrates with most MD codes), MetaDynamics, Replica Exchange MD (REMD). |

| Clustering & Dimensionality Reduction Tool | Identifies representative conformers and reduces data complexity. | MDTraj, scikit-learn (for PCA/tICA), cpptraj (AMBER). |

| Free Energy Calculation Package | Computes relative stability (ΔG) between conformational states. | WHAM (for umbrella sampling), MBAR, alchemical transformation tools. |

| Visualization & Analysis Suite | Visualizes trajectories, energy landscapes, and molecular structures. | VMD, PyMOL, Matplotlib (for plotting FES), NGLview. |

Troubleshooting Guide & FAQs for High-Dimensional Molecular Optimization

This technical support center addresses common challenges encountered when managing high-dimensional conformational spaces in molecular dynamics (MD) simulations, free energy calculations, and structure-based drug design.

FAQ 1: My conformational sampling is incomplete and misses key ligand poses. How can I improve coverage when dealing with molecules possessing many rotatable bonds?

- Answer: Incomplete sampling often stems from insufficient simulation time or inadequate enhanced sampling techniques. For ligands with >10 rotatable bonds, the conformational space grows exponentially.

- Solution A: Implement enhanced sampling protocols. Use accelerated Molecular Dynamics (aMD) or Gaussian Accelerated MD (GaMD) to lower energy barriers. A typical aMD protocol involves adding a non-negative boost potential to the dihedral and/or total potential energy when it falls below a defined threshold (Edih or Etot). Key parameters (αdih, Edih) must be calibrated.

- Solution B: Employ systematic torsional scanning as a precursor. Perform quantum mechanics (QM) scans on molecular fragments to create a Rotamer Library, then use this to bias subsequent MD simulations.

- Check: Always compute the root-mean-square deviation (RMSD) and radius of gyration over time to assess sampling convergence. Use cluster analysis (e.g., using DBSCAN) on sampled frames to quantify pose diversity.
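To make the aMD boost in Solution A concrete, here is a minimal sketch of the commonly used boost form ΔV = (E - V)² / (α + E - V), applied only when the potential V lies below the threshold E. The function name `amd_boost` and the example numbers are illustrative assumptions, not calibrated parameters.

```python
def amd_boost(potential, threshold, alpha):
    """Return the aMD boost energy for one potential-energy value.

    The boost is zero at or above the threshold and non-negative below
    it; a smaller alpha flattens the landscape more aggressively.
    """
    if potential >= threshold:
        return 0.0
    gap = threshold - potential
    return gap * gap / (alpha + gap)

# Illustrative values only (same energy unit for all three arguments).
for v in (2.0, 6.0, 9.0, 12.0):
    boost = amd_boost(v, threshold=10.0, alpha=4.0)
    print(f"V={v:5.1f}  boost={boost:.3f}  modified V={v + boost:.3f}")
```

The modified potential V + ΔV never exceeds the threshold, so barriers above E are left untouched while deep minima are raised.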

FAQ 2: During protein-ligand docking, flexible binding loops cause high scoring function variance and unreliable poses. How do I stabilize this?

- Answer: High scoring variance indicates the system is sensitive to small conformational changes in the loop.

- Solution: Use a dual-step approach. First, run a targeted MD simulation or loop remodeling (e.g., with Rosetta loop modeling protocols) on the apo protein to generate an ensemble of loop conformations. Second, perform ensemble docking against multiple representative loop structures from this ensemble.

- Protocol:

- Identify flexible residues: Calculate per-residue root-mean-square fluctuation (RMSF) from a short (50-100 ns) MD simulation of the apo protein.

- Generate ensemble: For residues with RMSF > 2.0 Å, apply a harmonic restraint to the protein backbone, then gradually release it over 20 ns of simulation to sample loop motions.

- Cluster and select: Cluster the loop conformations and select the top 5 centroid structures for docking.

- Check: Compare the docking scores across the ensemble. A stable, correct binding mode should appear consistently with a favorable score in >60% of the ensemble members.
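The RMSF-based selection of flexible residues can be sketched as follows. This assumes the frames are already aligned and reduced to one coordinate per residue (e.g., Cα) in Å; the function names and the toy data are hypothetical, and in practice a trajectory library such as MDAnalysis or MDTraj would supply the coordinates.

```python
import math

def per_residue_rmsf(frames):
    """RMSF per residue from aligned frames.

    frames: list of frames, each a list of (x, y, z) tuples, one per residue.
    """
    n_frames = len(frames)
    n_residues = len(frames[0])
    rmsf = []
    for i in range(n_residues):
        mean = [sum(f[i][k] for f in frames) / n_frames for k in range(3)]
        msd = sum(
            sum((f[i][k] - mean[k]) ** 2 for k in range(3)) for f in frames
        ) / n_frames
        rmsf.append(math.sqrt(msd))
    return rmsf

def flexible_residues(rmsf, cutoff=2.0):
    """Indices of residues whose RMSF exceeds the cutoff (2.0 A in the text)."""
    return [i for i, value in enumerate(rmsf) if value > cutoff]

# Toy data: residue 0 is rigid, residue 1 swings between two positions.
toy_frames = [
    [(0.0, 0.0, 0.0), (0.0, 0.0, 0.0)],
    [(0.0, 0.0, 0.0), (6.0, 0.0, 0.0)],
]
toy_rmsf = per_residue_rmsf(toy_frames)
print(toy_rmsf, flexible_residues(toy_rmsf))
```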

FAQ 3: Explicit solvent simulations are computationally prohibitive for my large system. When and how can I use implicit solvent models effectively?

- Answer: Implicit solvent models (e.g., Generalized Born, Poisson-Boltzmann) are suitable for equilibrium properties like binding affinity but can fail for processes dependent on specific water interactions.

- Use Case: Use implicit solvent for initial, rapid screening of ligand conformations or for molecular mechanics/Poisson-Boltzmann surface area (MM/PBSA) calculations on pre-sampled explicit solvent trajectories.

- Limitation & Fix: Implicit solvent often poorly models water-mediated hydrogen bonds. If your binding site contains a known, crucial water molecule (e.g., a conserved crystallographic water), treat it as part of the protein receptor during implicit solvent docking or simulations by fixing it in place.

- Parameter Check: Ensure the nonpolar solvation model (e.g., surface area term) and the ionic strength setting are appropriate for your biological context.

FAQ 4: How do I quantify and compare the relative contribution of each high-dimensionality source to my overall computational cost?

- Answer: The cost can be approximated by analyzing the degrees of freedom. The table below summarizes key metrics and their qualitative impact.

Table 1: Quantitative Impact of High-Dimensionality Sources on Simulation

| Source | Key Metric | Typical Range | Impact on Sampling Cost (CPU hours) |

|---|---|---|---|

| Rotatable Bonds (Ligand) | Number of Torsions (N) | 5 - 15+ | Scales ~exponentially with N. >10 bonds often requires enhanced sampling (>1000 core-hrs). |

| Flexible Loops | Residue Count & RMSF | 5-20 residues, RMSF > 2Å | Increases system size and required simulation time linearly. Can multiply sampling need by 5-10x. |

| Solvent (Explicit) | Number of Water Molecules | 10,000 - 100,000+ | Dominates cost for MD. ~80-90% of atoms are solvent. Implicit models reduce cost by ~90%. |

| System Size (Total) | Number of Atoms | 50,000 - 500,000 | MD cost scales approximately as O(N log N) with particle-mesh Ewald. |

Experimental Protocols

Protocol 1: Torsional Free Energy Profile via Umbrella Sampling

Objective: Calculate the free energy change (ΔG) associated with rotating a critical ligand bond.

- System Preparation: Solvate and neutralize your protein-ligand complex in an explicit solvent box.

- Reaction Coordinate: Define the specific dihedral angle (ξ) as the reaction coordinate.

- Window Creation: Set up sequential simulation windows spaced every 10-15 degrees along ξ, for example with the `colvars` module or with GROMACS restraints whose output `gmx wham` can analyze, applying a harmonic biasing potential (force constant 300-500 kJ/mol/rad²).

- Equilibration & Production: Run each window for at least 5 ns of equilibration followed by 20+ ns of production MD.

- Analysis: Use the Weighted Histogram Analysis Method (WHAM) to unbias and combine the data from all windows, producing a ΔG profile versus ξ.

Protocol 2: Binding Affinity Calculation via MM/GBSA

Objective: Estimate relative binding free energies from an MD trajectory.

- Trajectory Generation: Run a stable, explicit-solvent MD simulation (≥50 ns) of the protein-ligand complex. Also run separate simulations of the ligand and protein in solvent.

- Frame Selection: Extract snapshots at regular intervals (e.g., every 100 ps) from the equilibrated portion of the trajectory.

- Energy Calculation: For each snapshot, strip waters and ions. Calculate gas-phase energies (MM), solvation free energy (GB), and surface area (SA) terms using a tool like `MMPBSA.py` or `gmx_MMPBSA`.

- Averaging: Average the terms over all snapshots. The binding free energy is ΔG_bind = ⟨G_complex⟩ - ⟨G_protein⟩ - ⟨G_ligand⟩, where ⟨·⟩ denotes the average over snapshots.
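The averaging step of the MM/GBSA protocol amounts to a difference of means over the complex, protein, and ligand snapshots. The sketch below is an illustration only: it assumes per-snapshot total energies (MM + GB + SA) have already been computed by an external tool, that the three simulations are independent, and that snapshots are uncorrelated when estimating the standard error. The function name and the numbers are hypothetical.

```python
import math
from statistics import mean, variance

def binding_free_energy(g_complex, g_protein, g_ligand):
    """Return (dG_bind, standard_error) from per-snapshot energies.

    dG_bind = <G_complex> - <G_protein> - <G_ligand>. The standard error
    assumes uncorrelated snapshots and independent simulations, which is
    optimistic for closely spaced MD frames.
    """
    dg = mean(g_complex) - mean(g_protein) - mean(g_ligand)
    sem_sq = sum(variance(series) / len(series)
                 for series in (g_complex, g_protein, g_ligand))
    return dg, math.sqrt(sem_sq)

# Made-up energies in kcal/mol, for illustration only.
dg, sem = binding_free_energy(
    g_complex=[-100.0, -102.0],
    g_protein=[-60.0, -62.0],
    g_ligand=[-10.0, -10.0],
)
print(f"dG_bind = {dg:.2f} +/- {sem:.2f} kcal/mol")
```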

Visualizations

Title: Flexible Loop Ensemble Docking Workflow

Title: Solvent Model Selection Decision Tree

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Managing High-Dimensionality

| Item / Software | Primary Function | Key Application in This Context |

|---|---|---|

| GROMACS | Molecular Dynamics Engine | Highly optimized for explicit solvent MD on HPC clusters. Essential for sampling loop and solvent dynamics. |

| OpenMM | GPU-Accelerated MD Toolkit | Enables rapid enhanced sampling (aMD, GaMD) on GPUs, crucial for torsional sampling. |

| AutoDock Vina / GNINA | Docking Software | Provides rapid scoring for many poses. GNINA supports CNN-based scoring and flexible side chains. |

| Amber/CHARMM Force Fields | Molecular Parameter Sets | Provide bonded (torsional) and non-bonded parameters essential for accurate energy calculations. |

| PyMOL / VMD | Molecular Visualization | Critical for inspecting flexible loops, ligand poses, and solvent networks in trajectories. |

| MDAnalysis / MDTraj | Trajectory Analysis Library | Scriptable analysis of RMSD, RMSF, and clustering to quantify sampling quality. |

| WHAM / PyEMMA | Free Energy Analysis | Tools to unbias and combine data from umbrella sampling or metadynamics simulations. |

| Rosetta | Macromolecular Modeling Suite | Specialized protocols for de novo loop remodeling and high-resolution refinement. |

Troubleshooting Guides & FAQs

Q1: My high-dimensional molecular dynamics simulation fails to sample relevant conformational states, yielding non-physiological results. What are the primary checks? A1: This is a classic symptom of sparse sampling. First, verify your enhanced sampling method. For replica exchange molecular dynamics (REMD), ensure temperature spacing gives an exchange probability of 20-30%. Use the following check:

- Calculate the potential energy variance for each replica: σ² = ⟨E²⟩ - ⟨E⟩².

- Temperature spacing ΔT should approximately follow ΔT ≈ T * √(2 * k_B / C_v), where C_v is the heat capacity, which can be estimated from the variance as C_v = σ² / (k_B * T²).

If states are still missed, your collective variables (CVs) may be poorly chosen and may not describe the true reaction coordinate.
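The spacing rule ΔT ≈ T * √(2 * k_B / C_v) can be turned into a temperature ladder and a rough acceptance estimate. This is a sketch under explicit assumptions that go beyond the text: C_v is treated as constant across the ladder, potential energies are Gaussian-distributed, and ΔT is small compared with T; under those assumptions the mean swap acceptance is erfc(√μ / 2) with μ = (C_v / k_B) * (ΔT / T)². The function names are hypothetical.

```python
import math

def remd_ladder(t_min, t_max, cv_over_kb):
    """Temperatures spaced by dT = T * sqrt(2 * kB / Cv).

    cv_over_kb is the dimensionless heat capacity Cv / kB, which can be
    estimated as sigma_E**2 / (kB * T)**2 from a short simulation.
    """
    ratio = 1.0 + math.sqrt(2.0 / cv_over_kb)
    temps = [t_min]
    while temps[-1] < t_max:
        temps.append(temps[-1] * ratio)
    return temps

def estimated_acceptance(t_low, t_high, cv_over_kb):
    """Gaussian-energy estimate of the swap acceptance between neighbours."""
    mu = cv_over_kb * ((t_high - t_low) / t_low) ** 2
    return math.erfc(math.sqrt(mu) / 2.0)

# Illustrative heat capacity (assumed value, not from a real system).
ladder = remd_ladder(300.0, 400.0, cv_over_kb=5000.0)
print(len(ladder), [round(t, 1) for t in ladder[:4]])
print(round(estimated_acceptance(ladder[0], ladder[1], 5000.0), 3))
```

With this spacing the estimate comes out slightly above 30%, close to the 20-30% target; widening the spacing a little lowers it. Measured exchange rates from the actual run remain the real check.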

Q2: The computational cost for free energy calculation across 5 parameters is prohibitive. How can I estimate and reduce it? A2: Cost in high-dimensional free energy landscapes scales exponentially with dimensions (d). A standard FES calculation requires ~N^d points, where N is points per dimension. Use the following table to estimate:

| Dimensions (d) | Points per Dimension (N=10) | Total Evaluations | Est. CPU Time (Standard DFT) |

|---|---|---|---|

| 2 | 10 | 10² = 100 | ~200 core-hours |

| 4 | 10 | 10⁴ = 10,000 | ~20,000 core-hours |

| 6 | 10 | 10⁶ = 1,000,000 | ~2 million core-hours |
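The table skips the five-parameter case raised in the question, so a small helper can fill it in. This sketch assumes the cost per evaluation implied by the table rows (about 2 core-hours per point, since 100 evaluations are listed at roughly 200 core-hours); the function name `grid_cost` is hypothetical.

```python
def grid_cost(dimensions, points_per_dim=10, core_hours_per_point=2.0):
    """Return (evaluations, core_hours) for a full grid scan.

    A full grid needs points_per_dim ** dimensions evaluations, so the
    cost grows exponentially with the number of dimensions.
    """
    evaluations = points_per_dim ** dimensions
    return evaluations, evaluations * core_hours_per_point

# Five dimensions at the table's resolution, and at a coarser resolution.
for n in (10, 4):
    evaluations, hours = grid_cost(5, points_per_dim=n)
    print(f"d=5, N={n}: {evaluations} evaluations, ~{hours:.0f} core-hours")
```

Dropping from 10 to 4 points per dimension cuts the five-dimensional scan by roughly two orders of magnitude, which is the motivation for starting with a coarse scan.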

Mitigation Protocol: Employ stratified or adaptive sampling.

- Initial Sparse Scan: Perform a low-resolution scan (N=3-4) to identify regions of interest.

- Define Relevance Probability: P(x) ∝ exp(-ΔG(x)/kT).

- Adaptive Refinement: Iteratively add samples in regions where uncertainty (σ_G) is > kT/2. Use a Gaussian Process regressor to model uncertainty.

Q3: My optimization algorithm (e.g., for protein-ligand pose prediction) consistently converges to the same incorrect local minimum. How do I escape? A3: You are likely trapped in a local-minimum basin. Implement a meta-strategy:

- Introduce Controlled Perturbation: Use a short, high-temperature MD burst (e.g., 500K for 10ps) from the current minimum.

- Apply Biasing: Use a soft harmonic restraint (force constant 0.5-1.0 kcal/mol/Ų) on a different CV (e.g., radius of gyration vs. your primary CV) to push the system away.

- Restart with Diversity: Generate multiple starting conformations using:

- Principal Component Analysis (PCA) on prior trajectories.

- Clustering (k-means or hierarchical) on dihedral angles.

- Select centroid structures from the top 5 clusters as new starting points.

Experimental Protocols

Protocol 1: Setting up a Well-Tempered Metadynamics (WT-MetaD) Simulation to Combat Sparse Sampling

Objective: Achieve efficient sampling and free energy estimation along 2-3 collective variables.

Materials: See "Research Reagent Solutions" below.

Method:

- CV Selection: Identify 2-3 CVs (e.g., distance, angle, torsion) using PCA or expert knowledge.

- Simulation Setup: Equilibrate system in NVT and NPT ensembles. Use a Langevin thermostat (γ=1 ps⁻¹) and Parrinello-Rahman barostat.

- WT-MetaD Parameters:

- Gaussian height (w): 1.0-2.0 kJ/mol.

- Gaussian width (σ): 10-20% of CV's fluctuation in a short unbiased run.

- Deposition stride: 500-1000 steps.

- Bias factor (γ): 10-30 (sets effective temperature for sampling).

- Convergence Check: Monitor the time evolution of the free energy estimate for the last 50% of simulation. Convergence is reached when the root mean square deviation (RMSD) between successive estimates plateaus below 0.5 kT.
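How the Gaussian height, width, deposition stride, and bias factor interact is easiest to see on a toy system. The sketch below runs well-tempered metadynamics on a one-dimensional double well with overdamped Langevin dynamics. Everything about the model is an assumption made for illustration (the potential, the reduced diffusion constant of 1, the time step, the function name `run_wt_metad`); production runs would use PLUMED or a similar plugin rather than hand-written code.

```python
import math
import random

def run_wt_metad(n_steps=20000, stride=500, w0=1.2, sigma=0.2,
                 bias_factor=10.0, kT=2.494, dt=1e-3, seed=0):
    """Toy 1D WT-MetaD on U(s) = A * (s**2 - 1)**2, energies in kJ/mol.

    Hill heights follow w = w0 * exp(-V_bias(s) / (kB * dT)), with
    kB * dT = (bias_factor - 1) * kT. Returns (centers, heights).
    """
    rng = random.Random(seed)
    barrier = 10.0                          # A in kJ/mol (assumed)
    k_delta_t = (bias_factor - 1.0) * kT
    centers, heights = [], []

    def gaussian(s, c):
        return math.exp(-(s - c) ** 2 / (2.0 * sigma ** 2))

    def bias(s):
        return sum(h * gaussian(s, c) for c, h in zip(centers, heights))

    def total_force(s):
        f_phys = -4.0 * barrier * s * (s * s - 1.0)
        f_bias = sum(h * (s - c) / sigma ** 2 * gaussian(s, c)
                     for c, h in zip(centers, heights))
        return f_phys + f_bias

    s = -1.0                                # start in the left well
    noise = math.sqrt(2.0 * dt)             # diffusion constant D = 1
    for step in range(1, n_steps + 1):
        s += total_force(s) / kT * dt + noise * rng.gauss(0.0, 1.0)
        if step % stride == 0:
            # Height is computed from the bias accumulated so far.
            heights.append(w0 * math.exp(-bias(s) / k_delta_t))
            centers.append(s)
    return centers, heights

centers, heights = run_wt_metad()
print(len(heights), round(heights[0], 3), round(min(heights), 3))
```

The free energy estimate along the CV then follows from the accumulated bias as F(s) ≈ -(γ / (γ - 1)) * V_bias(s), up to a constant; shrinking hill heights over time are the signature of the well-tempered scheme.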

Protocol 2: Hybrid Quantum Mechanics/Molecular Mechanics (QM/MM) Adaptive Sampling

Objective: Accurately sample reactive events (e.g., bond breaking) at reduced cost.

Method:

- Region Definition: Use the ONIOM scheme. Define the QM region (30-100 atoms) encompassing the reactive center. Treat the remainder with MM.

- Initial Sampling: Run short (10ps) MM MD to generate 1000 frames.

- Adaptive Selection: Select 50 frames where a key bond distance (d) is within a strategic range: d_eq < d < d_eq + 1.0 Å.

- QM/MM Evaluation: Perform energy/gradient calculations (DFT level, e.g., B3LYP/6-31G*) on selected frames.

- Model Retraining: Use results to train a machine-learned potential (e.g., Neural Network Potential). Iterate steps 3-5 until energy error on a hold-out set is < 2 kcal/mol.

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in High-Dim Optimization | Example Product/Code |

|---|---|---|

| Enhanced Sampling Suite | Provides algorithms to overcome barriers & improve sampling. | PLUMED (v2.8+), Colvars Module in NAMD/OpenMM |

| Collective Variable (CV) Library | Pre-defined, optimized CVs for molecular systems (distances, angles, coordination numbers, path CVs). | PLUMED DISTANCE, ANGLE, PATHMSD |

| Machine-Learned Potential (MLP) Engine | Trains fast, accurate potentials from QM data to reduce ab initio cost. | DeepMD-kit, ANI-2x, SchNetPack |

| Free Energy Estimator | Converts biased simulation data into unbiased free energy surfaces. | sum_hills (WT-MetaD), MBAR (via pymbar), WHAM |

| High-Throughput Workflow Manager | Automates launching and monitoring of thousands of related calculations. | Parsl, Apache Airflow, FireWorks |

| Replica Exchange Scheduler | Manages swapping between parallel simulations for optimal exchange rates. | GROMACS mdrun -multidir with -replex, LAMMPS temper and temper/npt commands |

Visualizations

Well-Tempered Metadynamics Workflow

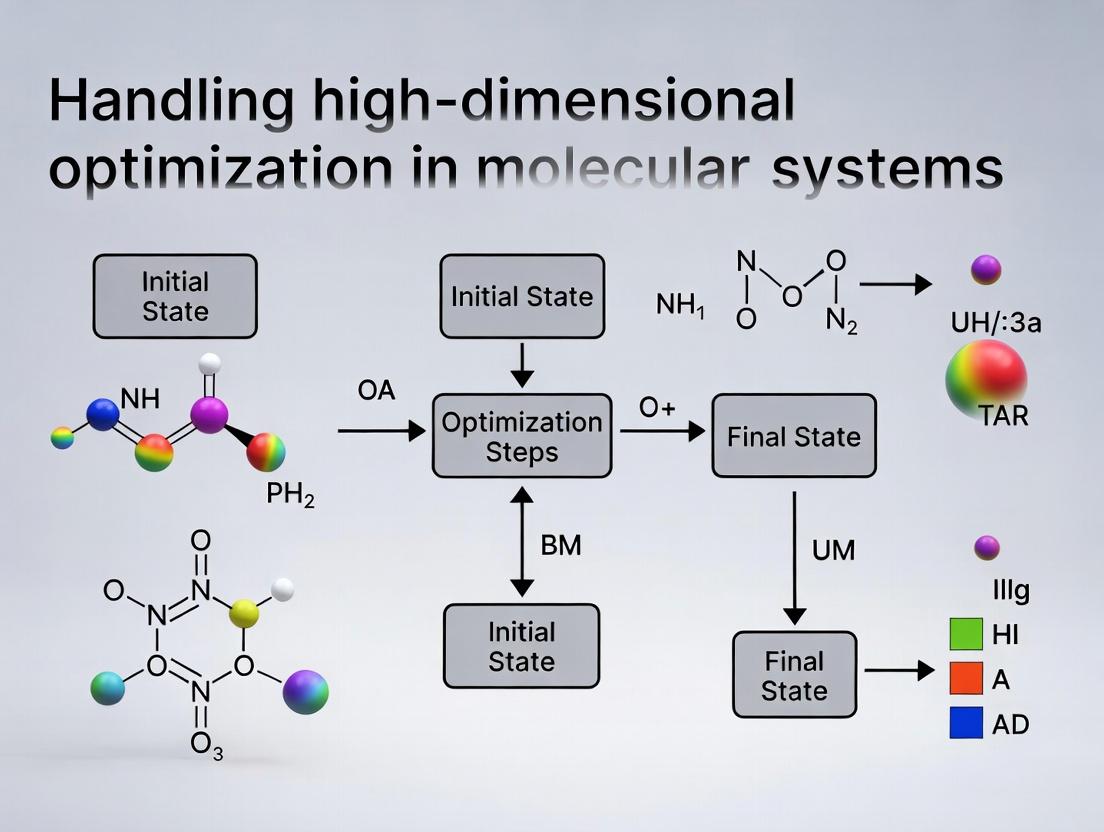

The Central Optimization Problem Pathway

Technical Support Center: Troubleshooting High-Dimensional Optimization in Molecular Systems

Frequently Asked Questions (FAQs) & Troubleshooting Guides

Q1: During a binding affinity prediction run using an AlphaFold2 or RoseTTAFold variant, the optimization stalls in a local minimum, yielding unrealistic ΔG values. What are the primary corrective steps? A: This is a common issue in high-dimensional energy landscape exploration. First, verify your sampling parameters. Increase the number of MCMC (Markov Chain Monte Carlo) steps or the MD (Molecular Dynamics) simulation time. Second, consider raising the temperature parameter in your simulated annealing protocol to allow for more aggressive exploration. Third, check for biases in your initial conformation; use multiple, diverse starting poses. Protocol 1 below outlines a systematic approach.

Q2: When applying gradient descent for protein folding in silico, the loss/energy plateaus prematurely. How can I improve convergence? A: Premature plateauing often indicates vanishing gradients or an optimizer poorly suited to the landscape. Switch from standard SGD to Adam or L-BFGS, which often handle rough, high-dimensional landscapes better. Implement gradient clipping to manage instability. Additionally, introduce noise (e.g., Gaussian noise on atomic coordinates) during early optimization cycles to escape shallow minima.

Q3: In materials design for perovskite stability, my optimization loop suggests chemically implausible elemental substitutions. How do I constrain the search space effectively? A: You must enforce valence and charge balance constraints. Integrate a rule-based filter into your generative pipeline that rejects candidates failing basic chemical valency checks (e.g., using the mendeleev or pymatgen libraries). Additionally, apply penalty terms in your objective function that heavily disfavor configurations with unrealistic bond lengths or coordination numbers.
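A rule-based charge balance filter of this kind can be sketched without any external library. The oxidation-state table below is a small, hand-written illustration (an assumption, not a curated dataset), and the function name `is_charge_balanced` is hypothetical; a production pipeline would draw oxidation states from a maintained library instead.

```python
from itertools import product

# Illustrative oxidation states only; extend or replace with curated data.
OXIDATION_STATES = {
    "Cs": [1], "Ca": [2], "Sr": [2],
    "Pb": [2, 4], "Sn": [2, 4], "Ti": [2, 3, 4],
    "O": [-2], "I": [-1], "Br": [-1], "Cl": [-1],
}

def is_charge_balanced(composition):
    """True if some assignment of oxidation states sums to zero charge.

    composition: dict mapping element symbol to count,
    e.g. {"Cs": 1, "Pb": 1, "I": 3}. Unknown elements are rejected.
    """
    elements = list(composition)
    if any(e not in OXIDATION_STATES for e in elements):
        return False
    choices = [OXIDATION_STATES[e] for e in elements]
    return any(
        sum(state * composition[e] for e, state in zip(elements, states)) == 0
        for states in product(*choices)
    )

candidates = [
    {"Cs": 1, "Pb": 1, "I": 3},
    {"Ca": 1, "Ti": 1, "O": 3},
    {"Cs": 1, "Ti": 1, "O": 3},
]
for candidate in candidates:
    print(candidate, is_charge_balanced(candidate))
```

Running the filter before any expensive evaluation keeps implausible candidates out of the optimization loop entirely, which is cheaper than penalizing them afterwards.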

Q4: My molecular dynamics simulation for binding free energy calculation (MM/PBSA, FEP) crashes due to "exploding forces" when exploring novel chemical space. What is the likely cause and fix? A: Exploding forces typically arise from steric clashes or unphysical bond/angle parameters for non-standard residues or novel materials. First, run a steepest descent energy minimization with a very small step size (e.g., 0.001 in your engine's length unit) before switching to more aggressive minimizers. Second, ensure your force field (e.g., CHARMM36, GAFF2) has appropriate parameters for all atoms. Use Antechamber (AmberTools) to generate missing GAFF2 parameters, or CGenFF for CHARMM-compatible ones. If the issue persists, consider an integration or minimization scheme that caps the step size based on force magnitude.

Experimental Protocols

Protocol 1: Enhanced Sampling for Binding Affinity Prediction (MM/GBSA with Metadynamics)

- System Preparation: Solvate the protein-ligand complex in an orthorhombic water box (TIP3P) with a 10 Å buffer. Neutralize with counterions.

- Equilibration: Perform a 6-step equilibration using NVT and NPT ensembles, gradually reducing positional restraints on the protein backbone and ligand from 5.0 to 0.0 kcal/mol/Ų over 2 ns.

- Production with Bias: Run a 50-100 ns Well-Tempered Metadynamics simulation. Use two Collective Variables (CVs): 1) Distance between protein binding site residue centroid and ligand centroid, and 2) Radius of gyration of the ligand. Set hill height to 0.1-0.3 kcal/mol, width based on CV fluctuation, and deposition every 500 steps.

- Free Energy Calculation: Use the final biased simulation to compute the free energy surface. Extract snapshots at 100 ps intervals for MM/GBSA calculation using the `MMPBSA.py` module in AmberTools. The binding free energy (ΔG_bind) is averaged over these snapshots.

Protocol 2: Iterative Optimization for Protein Folding with Neural Networks

- Data Preprocessing: Input multiple sequence alignment (MSA) and template features (from HHblits/JackHMMER and PDB) into the model (e.g., OpenFold).

- Initial Prediction: Generate an initial structure (backbone atoms) and per-residue confidence metric (pLDDT).

- Refinement Loop: a. Regions with pLDDT < 70 are identified as unreliable. b. For each low-confidence region, generate 5-10 alternative decoys using a fragment insertion method or by varying the latent vector in a generative model. c. Re-score all decoys using the network's predicted LDDT or an independent scoring function (Rosetta Energy Units). d. Select the top-scoring decoy for each region and graft it back into the full structure. e. Perform a short (200-step) gradient-based relaxation on the combined structure using the network's own gradient.

- Convergence Check: Repeat Step 3 until the average pLDDT plateaus or for a maximum of 5 iterations.

Protocol 3: High-Throughput Virtual Screening for Materials Design

- Search Space Definition: Define the compositional space (e.g., ABX₃ perovskites) and allowable substitutions at A, B, and X sites from a pre-curated list.

- Descriptor Calculation: For each candidate, compute 10-15 relevant descriptors (e.g., ionic radii, tolerance factor, electronegativity difference, valence band width) using materials informatics libraries (pymatgen).

- Surrogate Model Prediction: Input the descriptor vector into a pre-trained machine learning model (e.g., Random Forest, Gradient Boosting) to predict the target property (e.g., bandgap, decomposition energy).

- Bayesian Optimization Loop: Use the model's predictions and uncertainty estimates to select the next batch of 50-100 candidates for more accurate (but costly) DFT calculation.

- Iteration & Validation: Augment the training data with DFT results, retrain the surrogate model, and repeat the loop for 5-10 cycles. Validate the top 10 predicted materials with full experimental characterization.
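Among the descriptors listed in the protocol, the tolerance factor is simple enough to compute by hand. The sketch below uses the Goldschmidt form t = (r_A + r_X) / (√2 * (r_B + r_X)). The radii in the example are approximate literature ionic radii for CaTiO₃ quoted for illustration, and the function name is hypothetical; a real screen would pull radii for the correct coordination numbers from a curated source.

```python
import math

def goldschmidt_tolerance(r_a, r_b, r_x):
    """Goldschmidt tolerance factor for an ABX3 perovskite (radii in Angstrom)."""
    return (r_a + r_x) / (math.sqrt(2.0) * (r_b + r_x))

# Approximate ionic radii (assumed values): Ca2+ ~1.34, Ti4+ ~0.605, O2- ~1.40.
t = goldschmidt_tolerance(r_a=1.34, r_b=0.605, r_x=1.40)
print(f"tolerance factor = {t:.3f}")
```

A commonly quoted window for perovskite-forming compositions is roughly 0.8 to 1.0, so values far outside it are reasonable candidates for early rejection, though the window is a heuristic rather than a strict rule.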

Data Presentation

Table 1: Comparison of Optimization Algorithms for Binding Free Energy Calculation

| Algorithm | Typical Simulation Time | Reported Mean Absolute Error (MAE) vs. Experiment (kcal/mol) | Key Advantage | Primary Limitation |

|---|---|---|---|---|

| Molecular Dynamics (MM/PBSA) | 50-100 ns | 2.5 - 4.0 | Accounts for full flexibility | Computationally expensive, sampling limited |

| Free Energy Perturbation (FEP) | 10-20 ns per lambda | 1.0 - 2.0 | High theoretical accuracy | Requires careful alchemical pathway setup |

| Metadynamics (Well-Tempered) | 50-200 ns | 1.5 - 3.0 | Accelerates sampling of CVs | Choice of CVs is critical, can be system-dependent |

| Machine Learning (ΔGNet, etc.) | Minutes (inference) | 0.8 - 1.5 | Extremely fast screening | Depends heavily on training data quality/scope |

Table 2: Performance Metrics for Protein Folding Tools on CASP15 Targets

| Tool/Method | Average GDT_TS (Global) | Average GDT_TS (Hard Targets) | Typical Runtime (GPU hours) | Key Innovation |

|---|---|---|---|---|

| AlphaFold2 | 88.5 | 75.3 | 2-10 | Evoformer architecture, MSA processing |

| RoseTTAFold | 85.2 | 70.1 | 1-5 | Trunk architecture (1D, 2D, 3D tracks) |

| OpenFold | 87.9 | 74.8 | 3-8 | Open-source, trainable recreation |

| Iterative Refinement (Protocol 2) | +1.2 (improvement) | +2.5 (improvement) | +30% time | Targeted rebuilding of low-confidence regions |

Visualizations

Diagram Title: Enhanced Sampling Workflow for Binding Affinity

Diagram Title: Iterative Refinement Loop for Protein Folding

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools & Datasets

| Item/Resource | Function/Benefit | Example/Provider |

|---|---|---|

| Force Fields | Provides parameters for potential energy calculations in MD simulations. | CHARMM36, AMBER ff19SB, GAFF2 (for small molecules), OPLS4. |

| Quantum Chemistry Software | Performs high-accuracy electronic structure calculations for small systems or training data generation. | Gaussian, ORCA, PySCF (open-source). |

| Docking & Screening Suites | Rapidly predicts ligand poses and scores binding affinity for virtual screening. | AutoDock Vina, Glide (Schrödinger), FRED (OpenEye). |

| Free Energy Calculation Tools | Implements alchemical methods (FEP, TI) for accurate ΔG prediction. | SOMD (OpenMM), FEP+ (Schrödinger), GROMACS with PLUMED. |

| Protein Structure Prediction | State-of-the-art AI models for predicting protein 3D structure from sequence. | AlphaFold2 (ColabFold), RoseTTAFold (server), ESMFold. |

| Materials Databases | Curated repositories of computed and experimental material properties for model training. | Materials Project, OQMD, AFLOW, NOMAD. |

| Enhanced Sampling Plugins | Implements advanced algorithms (Metadynamics, REMD) to escape local minima. | PLUMED (universal plugin), Colvars. |

| High-Performance Computing (HPC) Cluster | Essential for running long MD, Ab-initio calculations, and large-scale hyperparameter optimization. | Local university clusters, cloud providers (AWS, GCP, Azure), national supercomputing centers. |

Core Algorithms and Practical Applications: From Theory to Benchside Implementation

Technical Support Center

Troubleshooting Guide & FAQs

Q1: My PCA results are dominated by technical noise from the assay platform, not biological signal. What should I do? A: Standard pre-processing for molecular data (e.g., mass spectrometry, gene expression) is essential.

- Protocol: Before PCA, apply variance-stabilizing transformation (e.g., log2 for proteomics/transcriptomics). Then, center and scale each feature (mean=0, variance=1). For batch correction, use ComBat or its variants, but validate that it removes technical, not biological, variance.

- Check: Ensure the PCA input matrix (samples x features) has features (proteins, genes) as columns. A first principal component (PC1) explaining >50% of variance can indicate a dominant technical batch effect; color the PC1 scores by batch to confirm before correcting.
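The pre-processing steps and the PC1 check can be sketched in plain Python for small matrices. This is an illustration under stated assumptions: all intensities are strictly positive, no feature is constant after the log transform, and PC1 is found by simple power iteration rather than a full eigendecomposition. The function names are hypothetical; for real datasets a numerical library would be the practical choice.

```python
import math

def log2_zscore(matrix):
    """Log2-transform, then center and scale each feature (column).

    matrix: samples x features, strictly positive values.
    Assumes no feature is constant (its standard deviation would be zero).
    """
    logged = [[math.log2(v) for v in row] for row in matrix]
    n = len(logged)
    columns = list(zip(*logged))
    means = [sum(c) / n for c in columns]
    sds = [math.sqrt(sum((v - m) ** 2 for v in c) / n)
           for c, m in zip(columns, means)]
    return [[(v - m) / s for v, m, s in zip(row, means, sds)]
            for row in logged]

def pc1_variance_fraction(z, n_iter=200):
    """Fraction of total variance captured by PC1, via power iteration."""
    n, p = len(z), len(z[0])
    cov = [[sum(r[i] * r[j] for r in z) / n for j in range(p)]
           for i in range(p)]
    # Asymmetric start vector, so it is unlikely to be orthogonal to PC1.
    vec = [1.0 + i for i in range(p)]
    for _ in range(n_iter):
        new = [sum(cov[i][j] * vec[j] for j in range(p)) for i in range(p)]
        norm = math.sqrt(sum(v * v for v in new))
        vec = [v / norm for v in new]
    top = sum(vec[i] * sum(cov[i][j] * vec[j] for j in range(p))
              for i in range(p))
    return top / sum(cov[i][i] for i in range(p))

# Toy intensities (assumed): two features that move together across samples.
toy = [[1.0, 2.0], [2.0, 4.0], [4.0, 8.0]]
print(round(pc1_variance_fraction(log2_zscore(toy)), 3))
```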

Q2: When using t-SNE for visualizing my molecular clusters, the results change dramatically every time I run it. How can I ensure reproducibility? A: t-SNE is stochastic. Use a fixed random seed and carefully tune the perplexity parameter.

- Protocol: Set random_state (scikit-learn) or the equivalent seed parameter. Standardize data first. Perplexity must be smaller than the number of data points. For molecular sample sizes (n~100-1000), start with perplexity=30. Run multiple times with different seeds to check for stable structures. Consider using UMAP as a more reproducible alternative for layout.

- Check: If global structure (cluster separation) changes between runs, perplexity is likely set incorrectly.
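
One way to quantify whether structures are stable across seeds is to compare each point's nearest neighbors in two embeddings. The sketch below is an illustrative check, not part of any t-SNE library; `neighbor_overlap` and the synthetic embeddings are assumptions standing in for real t-SNE outputs.

```python
import numpy as np

def knn_indices(points, k):
    """Indices of the k nearest neighbors of every point (brute force)."""
    pts = np.asarray(points, dtype=float)
    d2 = ((pts[:, None, :] - pts[None, :, :]) ** 2).sum(axis=-1)
    np.fill_diagonal(d2, np.inf)           # a point is not its own neighbor
    return np.argsort(d2, axis=1)[:, :k]

def neighbor_overlap(emb_a, emb_b, k=10):
    """Mean fraction of shared k-nearest neighbors between two embeddings."""
    na, nb = knn_indices(emb_a, k), knn_indices(emb_b, k)
    shared = [len(set(a) & set(b)) for a, b in zip(na, nb)]
    return float(np.mean(shared)) / k

rng = np.random.default_rng(0)
emb_run1 = rng.normal(size=(60, 2))               # stand-in for one run
rotation = np.array([[0.0, -1.0], [1.0, 0.0]])
emb_run2 = emb_run1 @ rotation.T                  # same layout, rotated 90 degrees
emb_run3 = rng.normal(size=(60, 2))               # unrelated layout
overlap_same = neighbor_overlap(emb_run1, emb_run2)
overlap_diff = neighbor_overlap(emb_run1, emb_run3)
print(overlap_same, overlap_diff)
```

Because the score depends only on neighbor identity, it ignores the arbitrary rotations and reflections that differ between runs; a low value across seeds suggests the clusters are not robust.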

Q3: My undercomplete autoencoder for feature compression is just learning the identity function and not compressing. What's wrong? A: The bottleneck layer is likely too wide or the network is overfitting.

- Protocol: Systematically reduce bottleneck neurons (e.g., from 100 to 10 dimensions for 1000 input features). Add regularization: L1/L2 weight regularization on the encoder, or dropout in the encoder layers. Use a non-linear activation function (e.g., ReLU) and ensure the decoder architecture is symmetric to the encoder.

- Check: Monitor reconstruction loss on a validation set. If it's nearly zero from the start, the bottleneck is not constraining.

Q4: How do I interpret the latent space from a variational autoencoder (VAE) trained on molecular structures? A: The VAE latent dimensions are regularized to follow a prior distribution (e.g., Gaussian), enabling interpolation.

- Protocol: After training, encode your dataset (e.g., molecular fingerprints) to the latent space (mean vector). Perform PCA on these latent vectors to identify major axes of variation. You can then traverse a latent dimension while decoding to observe smooth changes in molecular properties. Validate by sampling points from the prior and decoding to generate novel, valid structures.

- Check: Calculate the KL divergence term of the loss. It should converge slowly; if it goes to zero immediately, the model is ignoring the latent space (posterior collapse).
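
The KL check can be made concrete with the closed-form KL divergence between a diagonal Gaussian posterior and a standard normal prior. The sketch below assumes the encoder outputs a mean and a log-variance per latent dimension; the helper names and the tolerance `tol` are illustrative choices.

```python
import math

def kl_per_dimension(mu, log_var):
    """KL( N(mu, sigma^2) || N(0, 1) ) for each latent dimension of one sample."""
    return [0.5 * (m * m + math.exp(lv) - 1.0 - lv) for m, lv in zip(mu, log_var)]

def collapsed_dimensions(batch_mu, batch_log_var, tol=1e-2):
    """Latent dimensions whose batch-averaged KL falls below tol."""
    n = len(batch_mu)
    dims = len(batch_mu[0])
    mean_kl = [0.0] * dims
    for mu, lv in zip(batch_mu, batch_log_var):
        for d, kl in enumerate(kl_per_dimension(mu, lv)):
            mean_kl[d] += kl / n
    return [d for d, kl in enumerate(mean_kl) if kl < tol]

# Two samples, two latent dimensions: dimension 0 matches the prior exactly,
# dimension 1 carries information (means far from zero).
batch_mu = [[0.0, 2.0], [0.0, -2.0]]
batch_log_var = [[0.0, 0.0], [0.0, 0.0]]
print(collapsed_dimensions(batch_mu, batch_log_var))
```

A dimension flagged here from the first epochs onward is being ignored by the decoder, which is the posterior-collapse symptom described above.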

Q5: I need to integrate multiple omics datasets (transcriptomics, proteomics) for a unified patient view. Which method should I use? A: Standard PCA fails here. Use a method designed for multi-view integration.

- Protocol: Consider Multi-omics Factor Analysis (MOFA+) or Integrative NMF (iNMF). For deep learning, use a multi-modal autoencoder with separate encoder arms for each data type, joining in a shared bottleneck layer. Pre-process each modality independently (normalize, scale) before integration.

- Check: Validate that the latent space captures shared biological variation (e.g., patient subtype) across modalities, not just technical biases from one platform.

Quantitative Comparison of Dimensionality Reduction Methods

Table 1: Method Comparison for Molecular Data

| Aspect | PCA | t-SNE | Autoencoder (Undercomplete) | UMAP (Common Alternative) |

|---|---|---|---|---|

| Primary Goal | Variance maximization, decorrelation | Neighborhood preservation, visualization | Non-linear feature compression | Neighborhood preservation, visualization |

| Preserves | Global variance | Local structure | Global & local structures (configurable) | Local & more global structure than t-SNE |

| Deterministic? | Yes | No (stochastic) | Yes (with fixed seed) | Mostly reproducible |

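| Scalability | Excellent (roughly O(n·p²) for n samples and p features; cheaper with truncated or randomized SVD) | Moderate (O(n²) exact; O(n log n) with Barnes-Hut) | Good (O(n) per epoch) | Good (O(n)) |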

| Out-of-Sample Projection | Trivial (matrix multiplication) | Not standard (requires re-embedding) | Trivial (run through encoder) | Approximate, but possible |

| Key Hyperparameter(s) | Number of components | Perplexity, learning rate | Bottleneck size, regularization strength | Number of neighbors, min_dist |

| Best for Molecular Use Case | Initial data exploration, denoising, whitening | Final visualization of well-defined clusters | Feature engineering, non-linear compression, multi-omics integration | Visualization, clustering speed/reproducibility |

Table 2: Typical Parameter Ranges for Molecular Data (n = samples)

| Method | Key Parameter | Recommended Starting Value for n~100-10k | Adjustment Guidance |

|---|---|---|---|

| PCA | n_components | 50 | Use scree plot to find "elbow". Keep components explaining >80-90% cumulative variance. |

| t-SNE | Perplexity | 30 | Should be less than n. Increase for more global view. |

| t-SNE | Learning Rate | 200 | If clusters look like "balls", lower it. If compact, increase. |

| UMAP | n_neighbors | 15 | Lower for local structure, higher (up to 100) for global. |

| UMAP | min_dist | 0.1 | Lower (~0.01) for tight clustering, higher (~0.5) for more spread. |

| Autoencoder | Bottleneck Dim | Input Dim / 10 | Start small, increase if reconstruction loss is too high. |

| Autoencoder | Latent Dim (VAE) | 2-20 for visualization | Use for generative tasks. Monitor KL loss to avoid collapse. |

Experimental Protocol: Benchmarking Dimensionality Reduction for Compound Activity Prediction

Objective: To evaluate how PCA, t-SNE (for pre-processing), and Autoencoder-derived features improve the performance of a Random Forest classifier in predicting compound activity from high-dimensional molecular descriptors.

Materials:

- Dataset: Public ChEMBL bioactivity data (e.g., kinase inhibitors). Features: 2048-bit ECFP4 molecular fingerprints. Target: Binary active/inactive label.

- Software: Python with scikit-learn, TensorFlow/PyTorch, scanpy (for t-SNE/UMAP).

Procedure:

- Pre-processing: Remove duplicate compounds and apply random split (80/20 train/test). Store test set.

- Dimensionality Reduction (on training set only):

- PCA: Fit to training fingerprints. Retain components explaining 95% variance.

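- t-SNE: Fit with perplexity=30, random_state=42. Use resulting 2D coordinates as features. scikit-learn's TSNE has no transform method, so test compounds cannot be projected with a model fit on the training set alone; either embed training and test fingerprints jointly (a transductive exception to the training-only rule, with labels unused) or substitute UMAP, which supports out-of-sample projection.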

- Autoencoder: Build a symmetric network: Input(2048) → Dense(512, ReLU) → Dense(128, ReLU) → Bottleneck(32, linear) → Dense(128, ReLU) → Dense(512, ReLU) → Output(2048, sigmoid). Train for 100 epochs using MSE loss, Adam optimizer. Use the 32-dimensional bottleneck output as new features.

- Model Training: Train identical Random Forest classifiers (n_estimators=100) on: a. Original 2048-bit fingerprints (Baseline). b. PCA-transformed features. c. t-SNE-transformed features (2D). d. Autoencoder-derived features (32D).

- Evaluation: Predict on the held-out test set (transformed using the models fit on the training set). Calculate and compare AUC-ROC, Precision-Recall AUC, and F1-score.
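
For the evaluation step, AUC-ROC can be computed directly from its rank interpretation: the probability that a randomly chosen active is scored above a randomly chosen inactive. The sketch below is a dependency-free illustration of that metric (in practice a library routine such as scikit-learn's `roc_auc_score` would normally be used); the label and score lists are made-up examples.

```python
def roc_auc(labels, scores):
    """AUC-ROC as the fraction of (active, inactive) pairs ranked correctly.

    labels: 1 for active, 0 for inactive. Ties count as half a correct pair.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one active and one inactive compound")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))

# Hypothetical predicted probabilities for four held-out compounds.
y_true = [0, 0, 1, 1]
y_score = [0.1, 0.4, 0.35, 0.8]
auc_example = roc_auc(y_true, y_score)
print(auc_example)
```

Computing the same metric for each feature set (baseline, PCA, t-SNE, autoencoder) on the identical test split keeps the comparison fair.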

Visualization of Workflows

Title: PCA Protocol for Molecular Data

Title: Undercomplete Autoencoder Structure

Title: High-Dim Molecular Optimization Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Dimensionality Reduction in Molecular Research

| Tool / Reagent | Function / Purpose | Example / Note |

|---|---|---|

| scikit-learn | Primary library for PCA, t-SNE (Barnes-Hut), and standard ML models. | Use sklearn.decomposition.PCA, sklearn.manifold.TSNE. Essential for preprocessing. |

| TensorFlow / PyTorch | Deep learning frameworks for building and training custom autoencoders (VAEs). | Provides flexibility for designing non-standard encoder/decoder architectures. |

| UMAP | Dimensionality reduction for visualization; often more scalable/stable than t-SNE. | umap-learn package. Useful for initial data exploration of large molecular sets. |

| RDKit | Cheminformatics toolkit for generating molecular descriptors and fingerprints (ECFP). | Converts SMILES strings to feature vectors (e.g., 2048-bit Morgan fingerprints). |

| MOFA+ | Multi-omics Factor Analysis for integrating multiple high-dimensional data types. | R/Python package. Crucial for joint analysis of transcriptomics, proteomics, etc. |

| Scanpy (if using single-cell) | Python toolkit for single-cell analysis; includes efficient PCA, t-SNE, UMAP wrappers. | Optimized for very large cell-by-gene matrices (similar to compound-by-descriptor). |

| Hyperparameter Optimization | Libraries for tuning perplexity, learning rate, network architecture. | optuna, hyperopt. Automates search for optimal DR parameters on your data. |

Technical Support Center: Troubleshooting Guides & FAQs

FAQs and Troubleshooting

Q1: In Metadynamics, my system does not transition over the free energy barrier despite long simulation time. What could be wrong?

- A: This is often due to poor Collective Variable (CV) selection. The CVs may not fully describe the reaction coordinate. Troubleshooting Steps: 1) Conduct a short, unbiased MD to analyze principal components or distances/angles that change. 2) Consider using a combination of two CVs (e.g., distance and dihedral). 3) Reduce the Gaussian deposition rate (a larger pace, i.e., more steps between depositions) and/or height to prevent "filling" too quickly and causing repulsion.

Q2: During Replica Exchange MD (REMD), my exchange acceptance ratio is below 10%. How can I improve it?

- A: A low acceptance ratio indicates poor overlap in potential energy distributions between adjacent replicas. Solution: Adjust the temperature ladder. Use tools like temperature_generator.py to calculate optimal temperature spacing. The formula for number of replicas needed is approximately: √( (2ΔFmax) / (kB) ) * (γ / ΔT), where ΔFmax is the max free energy, kB is Boltzmann constant, γ is a system-specific constant (~1.5-2.0), and ΔT is the average temperature spacing. See the optimized protocol table below.
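
The link between energy overlap and acceptance follows from the standard Metropolis criterion for swapping two replicas, P = min(1, exp[(β_i − β_j)(E_i − E_j)]). The sketch below evaluates it for given energies and temperatures; the energy values are illustrative and units are assumed to be kJ/mol.

```python
import math

KB_KJ_PER_MOL_K = 0.0083144626  # Boltzmann constant in kJ/(mol K)

def exchange_probability(energy_i, energy_j, temp_i, temp_j):
    """Metropolis acceptance probability for swapping replicas i and j.

    energy_i, energy_j: potential energies (kJ/mol) of the configurations
    currently held at temperatures temp_i and temp_j (K).
    """
    beta_i = 1.0 / (KB_KJ_PER_MOL_K * temp_i)
    beta_j = 1.0 / (KB_KJ_PER_MOL_K * temp_j)
    delta = (beta_i - beta_j) * (energy_i - energy_j)
    return 1.0 if delta >= 0.0 else math.exp(delta)

# Same energy gap, two different temperature spacings (hypothetical numbers).
p_close = exchange_probability(-1100.0, -1000.0, 300.0, 305.0)
p_far = exchange_probability(-1100.0, -1000.0, 300.0, 320.0)
print(p_close, p_far)
```

For a fixed energy gap, the wider temperature spacing gives the smaller acceptance probability, which is why tightening the ladder raises the exchange rate.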

Q3: In Accelerated MD (aMD), my simulation becomes unstable or crashes. Why?

- A: This typically occurs when the acceleration parameters (boost potential, E and α) are set too aggressively, distorting the energy landscape excessively. Troubleshooting: 1) Re-calculate the average dihedral and/or total potential energy (E_d and E_p) from a short cMD run with higher precision. 2) Apply the boost only to the dihedral terms first, which is more stable. 3) Incrementally increase α to widen the boost potential well, making transitions smoother.
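
The effect of E and α is easiest to see in the standard aMD boost expression, ΔV = (E − V)² / (α + E − V) for V < E and zero otherwise. The sketch below evaluates it for a single energy value; the numbers are arbitrary and only illustrate the trend.

```python
def amd_boost(potential, threshold, alpha):
    """aMD boost energy for one frame.

    potential: instantaneous (dihedral or total) potential energy V.
    threshold: boost threshold energy E.
    alpha: acceleration parameter; must be positive.
    """
    if alpha <= 0.0:
        raise ValueError("alpha must be positive")
    if potential >= threshold:
        return 0.0                      # no boost above the threshold
    gap = threshold - potential
    return gap * gap / (alpha + gap)

# Same energy gap (10 units below threshold) with two alpha values.
boost_small_alpha = amd_boost(0.0, 10.0, 10.0)
boost_large_alpha = amd_boost(0.0, 10.0, 20.0)
print(boost_small_alpha, boost_large_alpha)
```

Increasing α lowers the boost applied at a given energy, which is the smoothing effect recommended in step 3.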

Q4: How do I choose between Well-Tempered Metadynamics (WT-MetaD) and standard Metadynamics for a new protein-ligand binding study?

- A: WT-MetaD is generally preferred for complex, high-dimensional landscapes like binding. The bias deposition decreases over time, allowing for better convergence and a simpler reweighting procedure to recover unbiased free energy estimates (kinetic estimates require additional techniques such as infrequent metadynamics). Use standard MetaD for quick, exploratory scans of small barriers.
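
The decaying deposition in WT-MetaD follows w = w0 · exp(−V(s) / (kB ΔT)) with ΔT = (γ − 1)T, where γ is the bias factor and V(s) the bias already present at the deposition point. The sketch below is a toy illustration that deposits every hill at the same CV value; the function name and default values are assumptions for the example.

```python
import math

KB_KJ_PER_MOL_K = 0.0083144626  # Boltzmann constant in kJ/(mol K)

def hill_heights(n_hills, w0=1.0, bias_factor=None, temperature=300.0):
    """Heights of successive Gaussians deposited at one fixed CV value.

    bias_factor=None reproduces standard metadynamics (constant height).
    Otherwise the height is scaled by exp(-V / (kB * dT)), dT = (gamma - 1) * T,
    where V is the bias already accumulated at that point.
    """
    heights, bias = [], 0.0
    for _ in range(n_hills):
        if bias_factor is None:
            w = w0
        else:
            delta_t = (bias_factor - 1.0) * temperature
            w = w0 * math.exp(-bias / (KB_KJ_PER_MOL_K * delta_t))
        heights.append(w)
        bias += w   # a Gaussian adds its full height at its own center
    return heights

standard = hill_heights(5)
tempered = hill_heights(5, bias_factor=10.0)
print(standard, tempered)
```

A smaller bias factor makes the heights decay faster, trading exploration speed for smoother convergence.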

Quantitative Data Summary

Table 1: Key Parameter Guidelines for Enhanced Sampling Methods

| Method | Key Parameter | Typical Value/Range | Purpose & Optimization Tip |

|---|---|---|---|

| Metadynamics | Gaussian Height (w) | 0.05 - 1.2 kJ/mol | Start low for smoother filling. WT-MetaD uses a decaying version. |

| Metadynamics | Gaussian Width (σ) | 1/5 to 1/10 of CV fluctuation | Estimate from short unbiased run. Too large misses details. |

| Metadynamics | Deposition Pace (τ_G) | 100 - 1000 steps | Scales inversely with height. Lower pace + lower height improves accuracy. |

| REMD | Number of Replicas | 12-72 (system-dependent) | Use REPAST formula: N ≈ 1 + (Tmax / Tmin) * √(f * ΔFmax / kB T_min). |

| REMD | Temperature Spacing | 5-15 K (aqueous) | Aim for 20-30% exchange acceptance. Closer spacing near phase transitions. |

| aMD | Dihedral Boost Energy (E_d) | Avg. dihedral energy + (4-6 * N_res) kcal/mol | Tune to sample 5-10x more transitions than cMD. |

| aMD | Acceleration Factor (α_d) | (1/5 to 1) * E_d | Larger α gives smaller, more frequent boosts; smoother dynamics. |

Table 2: Comparative Performance on a 50-residue Protein Folding (Model System)

| Method | Simulation Time (ns) | Wall-Clock Time (Hours)* | Effective Sampling Gain | Key Metric Achieved |

|---|---|---|---|---|

| Conventional MD | 1000 | 240 | 1x (Baseline) | Partial unfolding/refolding |

| Metadynamics (2 CVs) | 100 | 120 | ~10x in CV space | Full FES, barrier height estimate |

| REMD (32 replicas) | 30 per replica | 180 (parallel) | ~15-20x in temp space | Folding probability vs. T, melting curve |

| aMD (Dual Boost) | 50 | 60 | ~5-8x in dihedral space | Multiple full folding events |

*Wall-clock times correspond to roughly 100 ns/day for conventional MD on 1 GPU equivalent (1000 ns in 240 hours).

Detailed Experimental Protocols

Protocol 1: Setting Up a Well-Tempered Metadynamics Simulation for Protein-Ligand Dissociation.

- System Preparation: Solvate protein-ligand complex in a cubic water box, add ions to neutralize.

- Equilibration: Perform energy minimization, NVT (100 ps), and NPT (1 ns) equilibration with restraints on protein and ligand heavy atoms.

- CV Selection: Define at least two CVs: a) Distance between ligand center of mass (COM) and protein binding pocket COM. b) Number of protein-ligand heavy atom contacts within 4.5Å.

- MetaD Parameters: Use the PLUMED plugin. Set PACE=500, HEIGHT=1.0 kJ/mol, BIASFACTOR=10-15. Set SIGMA for the distance CV to ~0.05 nm and for contacts to ~2.0.

- Production: Run for 100-200 ns, monitoring the growth and convergence of the bias potential. Use plumed sum_hills to generate the Free Energy Surface (FES).

Protocol 2: Running a Temperature-Based REMD Simulation for Peptide Folding.

- Replica Generation: Prepare identical starting structures (folded, unfolded, or extended).

- Temperature Ladder Calculation: For a target temperature range of 300K to 500K, generate an exponentially (geometrically) spaced ladder, T_i = T_min * (T_max / T_min)^(i / (N - 1)) for replicas i = 0 ... N-1.

- Simulation Configuration: In your MD engine (e.g., GROMACS), set up a .mdp file with nstcalcenergy = 100 and nstxout-compressed = 1000. Use the remd group for exchange attempt frequency (every 1-2 ps); in GROMACS the exchange interval is set in steps with the mdrun -replex option.

- Execution & Analysis: Launch all replicas concurrently. Use tools like demux to reorder trajectories by temperature for analysis. Calculate properties like RMSD or radius of gyration as a function of temperature.
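
The exponentially spaced ladder from the Temperature Ladder Calculation step can be sketched as below. The replica count of 16 is an arbitrary example value, not a recommendation; the right number depends on system size and the acceptance ratio you observe.

```python
def geometric_ladder(t_min, t_max, n_replicas):
    """Temperatures spaced so that T[i+1] / T[i] is constant."""
    if n_replicas < 2:
        raise ValueError("need at least two replicas")
    ratio = (t_max / t_min) ** (1.0 / (n_replicas - 1))
    return [t_min * ratio**i for i in range(n_replicas)]

ladder = geometric_ladder(300.0, 500.0, 16)   # 16 replicas is a placeholder
print([round(t, 1) for t in ladder])
```

A constant temperature ratio gives roughly uniform exchange probabilities when the heat capacity is approximately constant over the range; if acceptance drops in one part of the ladder, add replicas there.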

Visualizations

Title: From Biased Simulation to Free Energy and Kinetics

Title: Replica Exchange Molecular Dynamics (REMD) Logic

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Enhanced Sampling |

|---|---|

| PLUMED | A core plugin/library for CV-based sampling (MetaD, etc.). It acts as an "analyzer" and "biaser" integrated with major MD codes. |

| OpenMM | A high-performance MD toolkit with built-in support for aMD and easy Python scripting for custom bias potentials. |

| GROMACS/NAMD | Popular MD simulation engines, widely used for REMD and PLUMED-integrated simulations due to strong parallel scaling. |

| PyEMMA/MSMBuilder | Software for building Markov State Models (MSMs) from simulation data, essential for extracting kinetics from enhanced sampling runs. |

| MDAnalysis/MDTraj | Python libraries for trajectory analysis, crucial for analyzing CVs and processing outputs from long, biased simulations. |

| AMBER/CHARMM Force Fields | Accurate biomolecular force fields. The choice (e.g., ff19SB, CHARMM36m) is critical for reliable free energy estimates. |

| GAFF | General Amber Force Field for small molecule ligands, often used in protein-ligand binding studies with MetaD. |

| VMD/PyMol | Visualization software to inspect starting structures, intermediate states, and final conformations sampled. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During high-dimensional molecular property optimization, my Gaussian Process (GP) surrogate model fails due to memory constraints. What are the primary mitigation strategies? A: Exact GPs scale as O(n³) in time and O(n²) in memory for n data points, which is a common bottleneck. Implement the following:

- Sparse GP Approximations: Use inducing point methods (e.g., SVGP) to approximate the full dataset. Reduce complexity to O(n m²), where m << n is the number of inducing points.

- Feature Selection/Dimensionality Reduction: Apply Principal Component Analysis (PCA) or Autoencoders to the molecular descriptor/fingerprint space before model training.

- Switch to Scalable Surrogates: For very high dimensions (>1000), consider Bayesian Neural Networks or Random Forest-based surrogates integrated with BO frameworks like BoTorch.
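
A quick back-of-the-envelope estimate shows why exact GPs run out of memory and how much inducing points help. The sketch below only counts the dominant kernel matrix in double precision; the sizes n = 50,000 and m = 500 are illustrative assumptions.

```python
def kernel_matrix_gib(n_rows, n_cols, bytes_per_value=8):
    """Memory in GiB for a dense n_rows x n_cols matrix of float64 values."""
    return n_rows * n_cols * bytes_per_value / 2**30

n_points, n_inducing = 50_000, 500            # hypothetical problem sizes
full_gib = kernel_matrix_gib(n_points, n_points)       # exact GP: n x n
sparse_gib = kernel_matrix_gib(n_points, n_inducing)   # sparse GP: n x m
print(f"exact GP kernel:  {full_gib:.1f} GiB")
print(f"sparse GP kernel: {sparse_gib:.2f} GiB")
```

The saving scales as n / m, so the number of inducing points is the main knob for trading accuracy against memory.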

Q2: In Bayesian Optimization (BO) for molecular design, the acquisition function gets stuck in a local optimum, failing to explore. How can I adjust the optimization loop? A: This indicates poor balance between exploration and exploitation.

- Acquisition Function Choice: Switch from Expected Improvement (EI) to Upper Confidence Bound (UCB) and increase the β parameter to weight exploration more heavily. Alternatively, use Thompson Sampling.

- Add Random Points: Manually inject 5-10% random samples into each batch of candidates to force exploration.

- Adjust Kernel Lengthscales: Review the GP kernel's automatic relevance determination (ARD) lengthscales. Very small lengthscales can lead to over-exploitation. Consider setting lower bounds or using a Matern kernel instead of RBF.
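
The exploration/exploitation trade-off is visible in the closed forms of the two acquisition functions. The sketch below assumes a maximization problem and a Gaussian posterior with given mean and standard deviation; the convention mean + β·std for UCB is an assumption (some libraries, such as BoTorch, scale the standard deviation by √β instead).

```python
import math

def normal_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def expected_improvement(mean, std, best_so_far, xi=0.0):
    """EI for maximization; xi is an optional exploration margin."""
    improvement = mean - best_so_far - xi
    if std <= 0.0:
        return max(improvement, 0.0)
    z = improvement / std
    return improvement * normal_cdf(z) + std * normal_pdf(z)

def upper_confidence_bound(mean, std, beta=2.0):
    """UCB with the convention mean + beta * std (assumed here)."""
    return mean + beta * std

# Two hypothetical candidates: A is a safe bet, B is uncertain.
cand_a = {"mean": 5.0, "std": 0.1}
cand_b = {"mean": 4.5, "std": 1.0}
for beta in (0.1, 2.0):
    ucb_a = upper_confidence_bound(cand_a["mean"], cand_a["std"], beta)
    ucb_b = upper_confidence_bound(cand_b["mean"], cand_b["std"], beta)
    print(beta, "picks", "A" if ucb_a > ucb_b else "B")
```

With a small β the confident candidate wins; raising β shifts the choice to the uncertain one, which is the behavior needed to escape a local optimum.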

Q3: My Reinforcement Learning (RL) agent for molecule generation (e.g., using a Policy Gradient) produces invalid molecular structures. What steps can I take to improve validity? A: Invalid structures often arise from an unconstrained action space.

- Constrained Action Masking: Modify the RL environment to mask out invalid actions at each step (e.g., adding a bond that would violate valency rules).

- Post-hoc Correction: Integrate a rule-based or graph grammar-based correction step after each action or episode.

- Reward Shaping: Heavily penalize invalid terminal states in the reward function (e.g., large negative reward). Combine with a positive reward for validity.
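
Constrained action masking can be sketched as setting the logits of invalid actions to negative infinity before the softmax, so the policy assigns them zero probability. The valence table below is deliberately simplified (neutral C, N, O only) and the function names are hypothetical; a real environment would typically delegate validity checks to a cheminformatics toolkit.

```python
import math

MAX_VALENCE = {"C": 4, "N": 3, "O": 2}   # simplified: neutral atoms only

def bond_action_mask(element, used_valence, bond_orders=(1, 2, 3)):
    """True for each bond order that the atom can still accept."""
    free = MAX_VALENCE[element] - used_valence
    return [order <= free for order in bond_orders]

def masked_softmax(logits, valid):
    """Softmax over valid actions only; invalid actions get probability 0."""
    masked = [l if ok else -math.inf for l, ok in zip(logits, valid)]
    top = max(masked)
    if top == -math.inf:
        raise ValueError("no valid action available")
    exps = [math.exp(l - top) for l in masked]   # exp(-inf) evaluates to 0.0
    total = sum(exps)
    return [e / total for e in exps]

# An oxygen that already has one single bond can only accept another single bond.
mask_o = bond_action_mask("O", used_valence=1)
probs_o = masked_softmax([0.1, 2.0, 3.0], mask_o)
# A carbon with two bonds used can accept a single or a double bond.
mask_c = bond_action_mask("C", used_valence=2)
probs_c = masked_softmax([0.1, 2.0, 3.0], mask_c)
print(probs_o, probs_c)
```

Masking at the logit level keeps the policy gradient well defined, because invalid actions are never sampled rather than being punished after the fact.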

Q4: When integrating a surrogate model with an RL policy, training becomes unstable. How should I structure the data flow? A: Instability is typical when the surrogate model's predictions change as new data is added.

- Two-Phase Training: First, pre-train the surrogate model on a large, diverse dataset of known molecular properties. Freeze its weights before starting RL policy training.

- Periodic Retraining: Implement a scheduled retraining protocol for the surrogate. Collect new data from RL episodes in a buffer, and retrain the surrogate only every N episodes to allow the policy to converge with a stable reward signal.

- Uncertainty-Aware Rewards: Use the surrogate's predictive uncertainty (if available, e.g., from a Bayesian model) to dampen rewards—penalize the policy for relying on high-uncertainty predictions.

Data Presentation

Table 1: Comparison of Surrogate Model Performance on QM9 Dataset (MAE for Internal Energy U0)

| Model Type | Training Time (s) | Prediction Time (ms) | MAE (kcal/mol) | Handles >10k Samples? |

|---|---|---|---|---|

| Gaussian Process (RBF) | 1,250 | 45 | 1.2 | No |

| Sparse Variational GP | 320 | 18 | 1.5 | Yes |

| Bayesian Neural Network | 890 | 6 | 2.1 | Yes |

| Random Forest | 65 | 2 | 3.8 | Yes |

Table 2: Bayesian Optimization Results for LogP Optimization (ZINC20 Subset)

| BO Configuration | Iterations to >5.0 | Best LogP Found | % Valid Molecules |

|---|---|---|---|

| EI + GP (MACCS Keys) | 42 | 5.8 | 100% |

| UCB (β=2.0) + GP (ECFP4) | 28 | 6.2 | 100% |

| TuRBO (Trust Region BO) | 19 | 6.5 | 98% |

Experimental Protocols

Protocol 1: High-Throughput Virtual Screening with a Pre-Trained Surrogate Model

- Data Curation: Assay a diverse library of 50,000 compounds for target activity (IC50). Encode molecules using extended-connectivity fingerprints (ECFP6, radius=3, 2048 bits).

- Surrogate Training: Split data 80/20. Train a Random Forest regressor (1000 trees) to predict pIC50 from fingerprint. Validate using Mean Absolute Error (MAE) and R² on hold-out set.

- Virtual Screen: Load a database of 10M purchasable compounds (e.g., ZINC20). Generate ECFP6 for all. Use the trained surrogate to predict pIC50 for each compound.

- Triaging: Rank compounds by predicted pIC50. Apply drug-like filters (Lipinski's Rule of 5, PAINS removal). Select top 1,000 for molecular docking.
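
The triaging step can be sketched on precomputed descriptors as below. The compound records, field names, and the choice to tolerate at most one Rule-of-5 violation are assumptions for the example; descriptor calculation (e.g., with RDKit) and PAINS filtering are outside this sketch.

```python
import math

def pic50_from_nanomolar(ic50_nm):
    """Convert IC50 in nM to pIC50 = -log10(IC50 in mol/L)."""
    return 9.0 - math.log10(ic50_nm)

def passes_rule_of_five(mol, max_violations=1):
    """Lipinski check on precomputed descriptors (MW, logP, HBD, HBA)."""
    violations = sum([
        mol["mw"] > 500,
        mol["logp"] > 5,
        mol["hbd"] > 5,
        mol["hba"] > 10,
    ])
    return violations <= max_violations

def triage(compounds, top_n):
    """Drop Rule-of-5 failures, then rank by predicted pIC50 (highest first)."""
    kept = [c for c in compounds if passes_rule_of_five(c)]
    return sorted(kept, key=lambda c: c["pred_pic50"], reverse=True)[:top_n]

# Hypothetical screening hits with surrogate predictions attached.
compounds = [
    {"id": "cpd_a", "mw": 350, "logp": 2.1, "hbd": 2, "hba": 5, "pred_pic50": 7.2},
    {"id": "cpd_b", "mw": 720, "logp": 6.3, "hbd": 6, "hba": 12, "pred_pic50": 8.9},
    {"id": "cpd_c", "mw": 410, "logp": 5.4, "hbd": 1, "hba": 6, "pred_pic50": 7.9},
]
selected = [c["id"] for c in triage(compounds, top_n=2)]
print(selected)
```

Filtering before ranking keeps the highest-scoring but non-drug-like compound out of the docking queue.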

Protocol 2: Batch Bayesian Optimization for Molecular Discovery

- Initialization: Select 100 random molecules from the search space (e.g., a ~10⁶ sized fragment library). Compute their properties via simulation or assay.

- BO Loop: For 50 iterations: a. Surrogate Update: Train a Sparse Variational Gaussian Process (SVGP) model on all observed data (initial + acquired). b. Batch Acquisition: Using the q-EI acquisition function (with Kriging Believer), select a batch of 5 molecules that jointly maximize expected improvement. c. Evaluation: Compute the target property (e.g., solubility) for the 5 proposed molecules via simulation. d. Data Augmentation: Add the new (molecule, property) pairs to the training dataset.

- Termination: Return the molecule with the best-observed property after 50 iterations.

Visualizations

Title: Bayesian Optimization Loop for Molecular Design

Title: RL Policy Training with a Surrogate Model

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for AI-Driven Molecular Optimization

| Item | Function | Example/Tool |

|---|---|---|

| Molecular Encoder | Converts molecular structure into a numerical representation for ML models. | RDKit (for ECFP/Morgan fingerprints, graph representations), OGB (Open Graph Benchmark) |

| Surrogate Model Library | Provides pre-implemented, scalable probabilistic models for integration into BO/RL loops. | GPyTorch (for GP/SVGP), BoTorch (for BO & Bayesian models), DeepChem (for graph neural networks) |

| Optimization Framework | Orchestrates the iterative loop between proposal, evaluation, and model updating. | BoTorch + Ax (Facebook), Dragonfly, Scikit-Optimize |

| Chemical Space Navigator | Defines the rules and action space for molecule generation and modification. | RDKit Chemical Reactions, Molecular Graph Grammar, REINVENT's SMILES-based action space |

| High-Performance Compute (HPC) Scheduler | Manages parallel evaluation of proposed molecules via simulation on clusters. | SLURM, Apache Airflow for workflow orchestration |

| Property Prediction Service | Provides fast, approximate property calculations for reward shaping. | XTB for semi-empirical quantum mechanics, OpenMM for molecular dynamics (quick energy minimization) |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My QM/MM simulation crashes with a segmentation fault when the boundary bond is stretched. What is the likely cause and how can I fix it? A: This is often due to an unstable link atom treatment or an incorrect electrostatic embedding scheme at the QM/MM boundary. Implement a charge-shifting scheme (e.g., the L2 or L3 schemes) to redistribute charge near the boundary. Ensure your QM and MM parameters are compatible. Use a larger QM region if the chemical event is too close to the boundary.

Q2: During adaptive sampling, my model fails to explore new regions of conformational space and gets stuck. How do I improve exploration? A: This indicates inadequate sampling or poor selection criteria for new simulations. Implement a diversity-based selection criterion (e.g., using RMSD or a collective variable space) instead of solely uncertainty-based selection. Increase the batch size for new simulations. Consider combining with enhanced sampling methods like metadynamics in the sampling loops.

Q3: My coarse-grained (CG) model fails to reproduce key allosteric dynamics observed in atomistic simulations. What steps should I take? A: The CG force field likely lacks necessary anisotropic interactions. Refit the CG non-bonded potentials using iterative Boltzmann inversion (IBI) or relative entropy minimization, targeting not just radial distribution functions but also angular distributions and spatial density maps from the atomistic reference. Introduce explicit directional bonds or three-body terms if necessary.

Q4: Energy conservation is poor in my hybrid adaptive resolution scheme (AdResS) simulation. What parameters should I check? A: Poor energy conservation typically stems from the transition region. Adjust the width of the hybrid transition zone (recommended >1.0 nm). Fine-tune the thermodynamic force correction term. Ensure the time step is appropriate for the coarse-grained region and does not cause instability when interpolated in the hybrid zone.

Q5: How do I validate that my multi-scale simulation results are physically meaningful and not an artifact of the coupling? A: Perform a hierarchy of consistency checks:

- Compare order parameters (e.g., RMSD, radius of gyration) between pure MM and QM/MM in the same region.

- Run a longer, conventional simulation (if feasible) to see if key events are reproduced.

- Conduct sensitivity analysis on boundary placement (QM/MM) or resolution transition parameters (AdResS).

- Ensure energy/force distributions are smooth across all interfaces.

Experimental Protocols & Methodologies

Protocol 1: Setting up a QM/MM Simulation for an Enzyme Reaction

- System Preparation: Obtain the protein-ligand complex PDB. Solvate in a water box, add ions, and minimize using classical force fields.

- Region Definition: Define the QM region (typically 50-200 atoms) to include the active site, substrate, and key cofactors/residues. Cut covalent bonds at the boundary using a link atom (H) or localized orbital method.

- QM Method Selection: Choose a DFT functional (e.g., B3LYP-D3) with a 6-31G* basis set for efficiency. For higher accuracy, use a QM method like DLPNO-CCSD(T)/def2-TZVP on optimized structures.

- Electrostatic Embedding: Use electrostatic embedding to include the MM point charges in the QM Hamiltonian. Apply a charge-shifting scheme to boundary atoms.

- Dynamics: Run QM/MM geometry optimization, then perform QM/MM molecular dynamics using a Born-Oppenheimer or Car-Parrinello approach.
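
For the link atom (H) approach in the Region Definition step, the capping hydrogen is placed on the line from the QM boundary atom toward the MM atom it was bonded to. The sketch below uses a fixed 1.09 Å distance, a typical C-H bond length chosen here as an assumption; QM/MM packages may instead scale the original bond length, so treat this as an illustration of the geometry only.

```python
import math

def place_link_atom(qm_atom, mm_atom, bond_length=1.09):
    """Position of a hydrogen link atom along the QM -> MM bond vector.

    qm_atom, mm_atom: (x, y, z) coordinates in Angstrom.
    bond_length: QM-H distance in Angstrom (1.09 is an assumed C-H value).
    """
    vec = [m - q for q, m in zip(qm_atom, mm_atom)]
    norm = math.sqrt(sum(v * v for v in vec))
    if norm == 0.0:
        raise ValueError("QM and MM atoms coincide")
    return tuple(q + bond_length * v / norm for q, v in zip(qm_atom, vec))

# Hypothetical C(QM)-C(MM) bond of 1.53 Angstrom along the x axis.
link = place_link_atom((0.0, 0.0, 0.0), (1.53, 0.0, 0.0))
print(link)
```

Because the link atom position is a function of the two boundary atoms, a badly stretched boundary bond changes the cap direction abruptly, which is one reason to keep reactive chemistry away from the cut.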

Protocol 2: Building a Coarse-Grained Model via Martini 3

- Mapping: Map 2-4 heavy atoms to a single CG bead following Martini 3 mapping rules. Define bead types (e.g., TC1 for apolar, Qa for charged).

- Topology Generation: Use martinize2 to generate topology and initial coordinates. Define an elastic network or Gō-like (GoMartini) model to maintain secondary/tertiary structure.

- Parameterization: Solvate the system with standard Martini water beads (W). Add ions (NA, CL). Use a time step of 20-30 fs.

- Equilibration: Run energy minimization, followed by equilibration in the NVT and NPT ensembles (300K, 1 bar) with position restraints on protein backbone beads, gradually released.

- Production & Backmapping: Run production MD. Use a backmapping script such as backward to convert trajectories back to atomistic resolution for analysis (martinize2 handles only the forward, atomistic-to-CG direction).
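
The Mapping step amounts to replacing each group of atoms with a single position. The sketch below computes bead positions as the center of geometry by default, or the center of mass when masses are supplied; which convention applies depends on the force field's mapping rules, and the four-atom fragment and bead names are hypothetical.

```python
def bead_center(coords, weights=None):
    """Weighted center of a group of atoms; equal weights give the center of geometry."""
    if weights is None:
        weights = [1.0] * len(coords)
    total = sum(weights)
    return tuple(
        sum(w * xyz[axis] for w, xyz in zip(weights, coords)) / total
        for axis in range(3)
    )

def map_to_beads(atom_coords, mapping, atom_masses=None):
    """mapping: dict of bead name -> list of atom indices belonging to that bead."""
    beads = {}
    for bead_name, atom_ids in mapping.items():
        coords = [atom_coords[i] for i in atom_ids]
        weights = None if atom_masses is None else [atom_masses[i] for i in atom_ids]
        beads[bead_name] = bead_center(coords, weights)
    return beads

# Hypothetical linear fragment of four heavy atoms (nm), two atoms per bead.
atoms = [(0.0, 0.0, 0.0), (0.2, 0.0, 0.0), (0.4, 0.0, 0.0), (0.6, 0.0, 0.0)]
beads = map_to_beads(atoms, {"B1": [0, 1], "B2": [2, 3]})
print(beads)
```

In practice martinize2 performs this mapping from its own mapping files; the sketch is only meant to show what a mapping definition encodes.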

Protocol 3: Implementing Adaptive Sampling with Deep Learning

- Initial Data: Run a set of short, distributed MD simulations (10-100 ns each) to cover a broad initial state.

- Feature Extraction: Project the trajectories into a low-dimensional latent space using time-lagged independent component analysis (tICA) or a variational autoencoder (VAE).

- Model Training: Train a kinetic or generative model (e.g., a Markov State Model or a reinforced dynamics model) on the latent space features to identify under-sampled or unexplored states.

- Simulation Selection: Use an acquisition function (e.g., highest predictive uncertainty, or longest predicted path) to select 10-20 simulation frames from which to launch new iterations.

- Iteration: Launch new simulations from selected frames. Incorporate new data, retrain the model, and repeat for 5-10 cycles until convergence of the free energy landscape is achieved.
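
A simple stand-in for the Simulation Selection step is counts-based selection: restart from the least-visited clusters in the latent space. This swaps the model-based acquisition function described above for a cheaper heuristic, and it assumes each frame has already been assigned a cluster label; the function name and labels are illustrative.

```python
from collections import Counter

def select_restart_frames(frame_clusters, n_select):
    """Pick one frame from each of the n_select least-populated clusters.

    frame_clusters: cluster label of every frame, in trajectory order.
    Returns frame indices (the first frame seen in each chosen cluster).
    """
    counts = Counter(frame_clusters)
    first_frame = {}
    for idx, label in enumerate(frame_clusters):
        first_frame.setdefault(label, idx)
    # Rarest clusters first; ties broken by earliest appearance.
    rarest = sorted(counts, key=lambda lab: (counts[lab], first_frame[lab]))
    return [first_frame[lab] for lab in rarest[:n_select]]

labels = [0, 0, 0, 1, 1, 2]          # hypothetical cluster assignments
restart_frames = select_restart_frames(labels, n_select=2)
print(restart_frames)
```

Rarely visited clusters are where the model has the least data, so this heuristic loosely approximates an uncertainty-driven criterion without training a predictive model.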

Table 1: Comparison of Common QM Methods in QM/MM

| QM Method | Typical System Size (Atoms) | Relative Cost | Key Use Case | Recommended Basis Set |

|---|---|---|---|---|

| DFT (B3LYP) | 50-200 | 1x (Baseline) | Enzyme mechanisms, metal sites | 6-31G*, def2-SVP |

| Semi-Empirical (PM6) | 200-500 | 0.01x | Conformational sampling with QM | N/A (Parametric) |

| MP2 | 30-80 | 10-50x | High-accuracy single points | cc-pVTZ |

| DLPNO-CCSD(T) | 50-150 | 5-20x | Benchmark reaction energies | def2-TZVP |

Table 2: Performance Metrics for Adaptive Sampling Algorithms

| Algorithm | Exploration Efficiency (Cycles to Convergence) | Computational Overhead per Cycle | Best for Landscape Type | Key Hyperparameter |

|---|---|---|---|---|

| RAVE (Reweighted Autoencoded Variational Bayes for Enhanced Sampling) | 8-12 | High (VAE Training) | Rugged, with deep metastable states | Latent space dimension |

| FAST (Fluctuation Amplification of Specific Traits) | 10-15 | Low (Clustering) | High-dimensional, diffusive | Distance metric (e.g., RMSD) |

| BAL (Bayesian Active Learning) | 6-10 | Medium (Gaussian Process) | Smooth, with unknown barriers | Acquisition function (EI vs UCB) |

Visualizations

Title: QM/MM Simulation Setup Workflow

Title: Adaptive Sampling Iterative Cycle

Title: Multi-Scale Modeling Resolution Hierarchy

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Hybrid/Multi-Scale Research |

|---|---|

| CHARMM/DEEPSAM Force Field | Provides consistent MM parameters compatible with QM/MM boundary treatments and polarizable effects. |

| CP2K Software Package | Enables efficient DFT-based QM/MM MD simulations with advanced Gaussian plane-wave methods. |

| OpenMM Molecular Dynamics Engine | GPU-accelerated platform for running adaptive sampling, MM, and custom QM/MM simulations. |

| MDAnalysis Python Library | Essential toolkit for analyzing trajectories, calculating order parameters, and managing multi-scale data. |

| PyEMMA / MSMBuilder | Software for constructing Markov State Models from simulation data to guide adaptive sampling. |

| Martini 3 Coarse-Grained Force Field | A top-down CG force field for biomolecules, enabling microsecond-scale system simulations. |

| PLUMED Enhanced Sampling Plugin | Integrates with MD codes to define collective variables and perform metadynamics/adaptive bias simulations. |

| VMD / NGLview Visualization | For visualizing complex multi-scale systems, boundaries, and dynamic pathways. |

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During metadynamics, my system becomes unstable and the simulation crashes. What could be the cause?

A: This is often due to overly aggressive hill deposition or poorly chosen collective variables (CVs). Reduce the hill_height (e.g., from 1.2 kJ/mol to 0.8 kJ/mol) and increase the hill_width. Ensure your CVs (e.g., distances, angles) are restrained within physically possible ranges using walls or restraints to prevent sampling of unphysical conformations.

Q2: My accelerated molecular dynamics (aMD) simulation does not show improved sampling over cMD. How can I diagnose this?

A: Check the boost potential parameters (alpha_D, alpha_P). If the boost potential is too low, sampling is not enhanced; if too high, the potential landscape is distorted. Recalculate the average dihedral and total potential energies from a short cMD run to set appropriate thresholds. Monitor the boost potential time series—it should be active but not excessively high.

Q3: The identified allosteric pocket appears highly transient and collapses in subsequent simulations. How can I validate it? A: Perform PocketMiner or POVME 3.0 analysis on multiple independent simulation trajectories to assess pocket probability and conservation. Follow up with Hamiltonian replica exchange (H-REMD) simulations focusing on the pocket region to stabilize and characterize it. Conduct fragment probing (e.g., using FTMAP or SILCS) to see if small molecules exhibit favorable binding poses.

Q4: When using PLUMED for replica exchange, my replicas do not exchange efficiently. What should I adjust?

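A: Low acceptance ratios (<20%) indicate poor energy overlap between neighboring replicas. Increase the number of replicas or narrow the temperature range so that adjacent replicas are more closely spaced. For well-tempered metadynamics, ensure the bias factor is consistent across replicas. For multiple-walker metadynamics, enable walker communication (e.g., the WALKERS_MPI keyword of METAD in PLUMED) so that all walkers contribute to a shared bias.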
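
As a rough aid for adjusting the ladder, the sketch below builds a geometrically spaced temperature ladder and evaluates the standard Metropolis criterion for a temperature swap, p = min(1, exp[(β_i − β_j)(U_i − U_j)]). The geometric spacing is one common heuristic rather than a requirement, and the function names and example energies are assumptions made for illustration.

```python
import math

KB = 0.0083144626  # Boltzmann constant in kJ/(mol K)


def geometric_ladder(t_min, t_max, n_replicas):
    """Temperatures spaced by a constant ratio between t_min and t_max."""
    if n_replicas < 2:
        raise ValueError("need at least two replicas")
    ratio = (t_max / t_min) ** (1.0 / (n_replicas - 1))
    return [t_min * ratio ** i for i in range(n_replicas)]


def exchange_probability(t_i, t_j, u_i, u_j):
    """Metropolis acceptance for swapping the configurations held at t_i and t_j.

    u_i and u_j are the potential energies (kJ/mol) of the configurations
    currently simulated at t_i and t_j, respectively.
    """
    beta_i = 1.0 / (KB * t_i)
    beta_j = 1.0 / (KB * t_j)
    delta = (beta_i - beta_j) * (u_i - u_j)
    if delta >= 0.0:
        return 1.0  # favorable swaps are always accepted
    return math.exp(delta)


if __name__ == "__main__":
    ladder = geometric_ladder(300.0, 400.0, 8)
    print([round(t, 1) for t in ladder])
    # Hypothetical instantaneous energies of two neighboring replicas.
    print(exchange_probability(ladder[0], ladder[1], -1010.0, -1000.0))
```

Adding replicas shrinks the ratio between neighboring temperatures, which reduces the magnitude of β_i − β_j and therefore raises the typical acceptance probability.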

Q5: How do I choose between metadynamics, aMD, and Gaussian Accelerated MD (GaMD) for my allosteric system? A: The choice depends on system size and prior knowledge. Refer to the table below for a quantitative comparison.

| Method | Typical System Size (atoms) | Required Prior CV Knowledge? | Computational Overhead vs cMD | Key Tuning Parameter |

|---|---|---|---|---|

| Metadynamics | 10k - 100k | Yes (Critical) | High | Hill Height/Width, Bias Factor |

| aMD | 20k - 500k | No | Moderate | Acceleration Alpha, Threshold Energy |

| GaMD | 20k - 500k | No | Moderate | Boost Potential Parameters (σ0, E) |

| Replica Exchange | 5k - 50k | Optional | Very High | Temperature/Bias Ladder, Replica Count |

Experimental Protocols

Protocol 1: Metadynamics Workflow for Allosteric Pocket Discovery

- System Preparation: Solvate and equilibrate your protein system (e.g., with GROMACS/NAMD/AMBER). Run 100 ns of conventional MD (cMD) to establish a baseline.

- CV Selection: Identify putative allosteric regions via sequence conservation analysis (ConSurf) and residue interaction networks (RIN). Define 2-3 CVs: e.g., distance between regulatory and functional sites, radius of gyration of a specific domain, or a dihedral angle from a key hinge region.

- Well-Tempered Metadynamics (WTM): Use the PLUMED plugin. Deposition parameters: initial `hill_height = 1.0 kJ/mol`, `biasfactor = 15`, and `hill_width` tailored to the CV standard deviation from cMD. Run for 200-500 ns or until the collective variable space is saturated.

- Analysis: Use `plumed sum_hills` to generate the Free Energy Surface (FES). Identify low-energy minima corresponding to distinct conformational states. Cluster snapshots from these minima and perform cavity detection (with MDpocket or fpocket).
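
To illustrate how the bias factor in the well-tempered step above tempers deposition, the sketch below evaluates the standard well-tempered relations: the hill height decays as w = w0 · exp(−V(s) / (k_B ΔT)) with ΔT = (γ − 1)T, and the free energy is recovered from the converged bias as F(s) = −γ/(γ − 1) · V(s). It is a minimal sketch of these formulas only, not of PLUMED's internals; the function names and the 300 K temperature are assumptions.

```python
import math

KB = 0.0083144626  # Boltzmann constant in kJ/(mol K)


def well_tempered_height(w0, bias_at_s, temperature, biasfactor):
    """Height of the next hill given the bias already accumulated at s."""
    if biasfactor <= 1.0:
        raise ValueError("biasfactor must be greater than 1")
    delta_t = (biasfactor - 1.0) * temperature
    return w0 * math.exp(-bias_at_s / (KB * delta_t))


def fes_from_bias(bias_at_s, biasfactor):
    """Free-energy estimate from the converged well-tempered bias at s."""
    return -biasfactor / (biasfactor - 1.0) * bias_at_s


if __name__ == "__main__":
    # Protocol values: initial height 1.0 kJ/mol, biasfactor 15; 300 K is assumed.
    for bias in (0.0, 10.0, 30.0, 60.0):
        h = well_tempered_height(1.0, bias, 300.0, 15.0)
        print(f"bias = {bias:5.1f} kJ/mol -> next hill height = {h:.3f} kJ/mol")
```

Hill heights that have decayed to a small fraction of the initial value across the whole CV range are one indication that the CV space is approaching saturation.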

Protocol 2: aMD Protocol for Enhanced Conformational Sampling

- Equilibration: Perform standard minimization, NVT, and NPT equilibration.

- Threshold Calculation: Run a short (10-20 ns) cMD production run. Compute the average total (`V_avg`) and dihedral (`D_avg`) potential energies and their standard deviations (`σ_V`, `σ_D`).

- Parameter Setting: Apply dual boost. Dihedral boost: `E_dih = D_avg + (4 * σ_D)`. Total boost: `E_total = V_avg + (0.2 * num_atoms)`. Set `alpha_dih = 0.2 * num_dihedrals` and `alpha_total = 0.2 * num_atoms`.

- Production aMD: Run the simulation for 200-1000 ns. Use `amdweight` and `amdrate` tools to check boost statistics and reweight trajectories for unbiased analysis.

- Pocket Identification: Use a trajectory clustering tool (e.g., GROMACS `cluster`) on the reweighted trajectory. Analyze centroid structures for novel pockets using DoGSiteScorer or SiteMap.
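
The threshold and parameter-setting steps of Protocol 2 can be sketched as a small helper. The coefficients are exactly those quoted in the protocol; published aMD recipes use somewhat different prefactors, so the outputs are best treated as starting values to be tuned. The function name, the use of the population standard deviation, and the example numbers are assumptions.

```python
from statistics import mean, pstdev


def amd_parameters(total_energies, dihedral_energies, num_atoms, num_dihedrals):
    """Dual-boost aMD parameters from cMD energy time series (Protocol 2 formulas)."""
    v_avg = mean(total_energies)
    d_avg = mean(dihedral_energies)
    sigma_d = pstdev(dihedral_energies)  # assumption: population standard deviation
    return {
        "E_dih": d_avg + 4.0 * sigma_d,
        "alpha_dih": 0.2 * num_dihedrals,
        "E_total": v_avg + 0.2 * num_atoms,
        "alpha_total": 0.2 * num_atoms,
    }


if __name__ == "__main__":
    # Hypothetical energies (kcal/mol) sampled from a short cMD run.
    total = [-100.0, -102.0, -98.0]
    dihedral = [10.0, 12.0, 14.0]
    for name, value in amd_parameters(total, dihedral, 1000, 50).items():
        print(f"{name} = {value:.3f}")
```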

Diagrams

Diagram 1: Enhanced Sampling for Allostery Workflow

Diagram 2: Allosteric Signaling Pathway

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function & Application |

|---|---|

| PLUMED 2.x | Plugin for free-energy calculations; essential for implementing metadynamics, steered MD, and analysis of CVs. |

| GROMACS/AMBER/NAMD | Core MD engines for running simulations; choose based on system size, force field, and HPC environment. |

| CHARMM36m/AMBER ff19SB | Force fields providing accurate protein energetics, crucial for modeling conformational changes. |

| CPPTRAJ/MDAnalysis | For trajectory analysis: RMSD, RMSF, hydrogen bonding, and distance/angle calculations. |

| fpocket/MDpocket | Detects and characterizes protein pockets from static structures or simulation trajectories. |

| PyMOL/VMD | Molecular visualization software for rendering structures, pockets, and dynamical trajectories. |

| FTMAP Server | Computational fragment mapping to identify hot spots for ligand binding on protein surfaces. |

| HDX-MS Kit | Experimental validation: measures deuterium uptake to confirm changes in solvent accessibility from simulations. |

Overcoming Convergence and Cost Hurdles: A Troubleshooting Guide for Practitioners

Technical Support Center

Troubleshooting Guides & FAQs

Q1: How do I know if my Molecular Dynamics (MD) simulation has converged in sampling configuration space? A: True convergence in high-dimensional systems is challenging to prove. Key diagnostics include:

- RMSD/RMSF Plateau: Plot the root-mean-square deviation (RMSD) of protein backbone atoms and per-residue root-mean-square fluctuation (RMSF). Convergence is suggested when these values fluctuate around a stable average, not a steady drift.

- Potential Energy Equilibration: The total potential energy of the system should reach a stable average. A continuous drift indicates the simulation is not equilibrated.

- Essential Dynamics Analysis: Perform Principal Component Analysis (PCA) on atomic coordinates. A converged simulation will show Gaussian-like distributions along the first few principal components, and the subspace explored by these PCs will stop expanding.

- Statistical Independence: Use tools like `gmx analyze` or `pymbar` to calculate statistical inefficiency and effective sample size. Autocorrelation times for key observables (e.g., dihedral angles, distances) should be much shorter than the total simulation time.
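
For readers who want to see what the statistical-independence check computes, the sketch below estimates the statistical inefficiency g = 1 + 2Σρ(k) and the effective sample size N/g from a single time series in plain Python. It is a simplified stand-in for dedicated tools: the truncation of the sum at the first non-positive autocorrelation is an assumed heuristic, and the function names are illustrative.

```python
def autocorrelation(series, lag):
    """Normalized autocorrelation of `series` at a given lag."""
    n = len(series)
    mu = sum(series) / n
    var = sum((x - mu) ** 2 for x in series) / n
    if var == 0.0:
        return 0.0  # a constant series carries no correlation information
    cov = sum((series[i] - mu) * (series[i + lag] - mu) for i in range(n - lag)) / (n - lag)
    return cov / var


def statistical_inefficiency(series):
    """g = 1 + 2 * sum_k rho(k), truncated at the first non-positive rho(k)."""
    g = 1.0
    for lag in range(1, len(series) // 2):
        rho = autocorrelation(series, lag)
        if rho <= 0.0:
            break  # assumed cutoff: later lags are treated as noise
        g += 2.0 * rho
    return g


def effective_sample_size(series):
    """Number of effectively independent samples, N / g."""
    return len(series) / statistical_inefficiency(series)


if __name__ == "__main__":
    # Toy observable that sits in one state, then switches once to another.
    slow = [0.0] * 50 + [1.0] * 50
    print(f"g = {statistical_inefficiency(slow):.1f}, ESS = {effective_sample_size(slow):.1f}")
```

A series that switches state only once yields a large g and only a handful of effective samples, even though it contains 100 frames.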

Q2: What are the specific signs of poor phase space exploration in Monte Carlo (MC) or enhanced sampling simulations? A: Signs include:

- Low Acceptance Ratio: In MC, an acceptance ratio far from the target (often ~20-50%) indicates proposed moves are too large (low acceptance) or too small (high acceptance), both hindering exploration.

- Replica Stagnation in Replica Exchange MD (REMD): Replicas fail to diffuse efficiently across temperature (or Hamiltonian) space. You can diagnose this by plotting replica exchange attempt acceptance rates (should be >20%) and the replica index as a function of simulation time, which should show frequent "walking."

- Metadynamics Bias Potential Not Filling: In Well-Tempered Metadynamics, the growth rate of the bias potential should decrease over time. A continuous, linear increase suggests poor exploration of the collective variable (CV) space.

- Multiple Local Minima Trapping: The simulation repeatedly returns to the same structural cluster, identified via clustering analysis, without transitioning to other known or predicted metastable states.

Q3: Which quantitative metrics should I monitor to assess sampling quality? A: Track the metrics in Table 1 systematically.

Table 1: Key Metrics for Assessing Sampling Convergence

| Metric | Calculation Method | Target/Converged Indication | Tool/Code Example |

|---|---|---|---|

| Effective Sample Size (ESS) | N_eff = N / (1 + 2Σ_k ρ(k)), where ρ(k) is the autocorrelation at lag k | >100-1000 independent samples for reliable statistics. | PyMBAR, alchemlyb |

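| Statistical Inefficiency (g) | g = 1 + 2Σ_k ρ(k) = N / N_eff, i.e., the number of correlated frames per independent sample. | Estimate plateaus as the trajectory is extended; the corresponding correlation time is short (e.g., < 10-100 ps for fast motions). | gmx analyze, pymbar |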

| Gelman-Rubin Diagnostic (R̂) | Variance between multiple simulations vs. variance within them. | R̂ < 1.1 for all parameters of interest. | PyMC3, custom scripts |

| Radius of Gyration (Rg) / RMSD Distribution | Histogram over simulation time. | Uni-modal or stable multi-modal distribution; mean and variance are stable over time blocks. | GROMACS (gmx gyrate, gmx rms), MDAnalysis |

| Dihedral Angle Autocorrelation Time | Time for the autocorrelation function of the φ/ψ angles to decay to 1/e. | Should be short relative to total simulation time; the longest correlation time plateaus as the trajectory is extended. | gmx angle, gmx chi, MDTraj |
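
The Gelman-Rubin row of Table 1 can be illustrated with a short, dependency-free sketch. It uses the classic form R̂ = sqrt(((n − 1)/n · W + B/n) / W), where W is the mean within-replica variance and B/n is the variance of the replica means. The function name and the toy data are hypothetical, and production analyses typically rely on an established statistics package instead.

```python
from statistics import mean, variance


def gelman_rubin(chains):
    """Classic Gelman-Rubin R-hat for equally long replicas of one observable."""
    m = len(chains)
    n = len(chains[0])
    if m < 2 or n < 2 or any(len(c) != n for c in chains):
        raise ValueError("need at least two chains of equal length >= 2")
    within = mean([variance(c) for c in chains])        # W
    between_over_n = variance([mean(c) for c in chains])  # B / n
    if within == 0.0:
        raise ValueError("within-chain variance is zero")
    pooled = (n - 1) / n * within + between_over_n
    return (pooled / within) ** 0.5


if __name__ == "__main__":
    # Hypothetical ligand RMSD series (in Å) from two replicas.
    agree = [[1.0, 2.0, 3.0, 4.0], [1.5, 2.5, 3.5, 2.0]]
    disagree = [[1.0, 2.0, 3.0, 4.0], [11.0, 12.0, 13.0, 14.0]]
    print(f"R-hat (overlapping replicas) = {gelman_rubin(agree):.2f}")
    print(f"R-hat (separated replicas)   = {gelman_rubin(disagree):.2f}")
```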

Q4: Can you provide a protocol to diagnose poor convergence in a protein-ligand binding MD simulation? A: Follow this experimental protocol:

Title: Protocol for Convergence Diagnosis in Protein-Ligand MD

Objective: To assess the sampling adequacy of a protein-ligand complex simulation.

Materials: See the "Research Reagent Solutions" table.

Procedure:

- Run Multiple Independent Replicas: Initiate at least 3 simulations from different initial velocities (seed numbers). Run each for time `t`.

- Calculate Core Trajectories: For each replica, calculate time series for: a) Protein backbone RMSD (reference: initial structure after equilibration), b) Ligand heavy-atom RMSD, c) Key protein-ligand contact distances or hydrogen bonds, d) Potential energy.

- Block Averaging Analysis: For a key observable (e.g., binding affinity estimate or distance), calculate its mean over successive, increasing blocks of the concatenated trajectory data. Plot the block mean vs. block length. Convergence is suggested when the mean stabilizes (small fluctuations) with increasing block length.

- Cluster Analysis: Pool all replicas. Perform clustering (e.g., GROMOS method) on the ligand's binding pose. A converged simulation will show replicas distributed across the same major clusters in similar proportions.

- Compare Inter-Replica vs. Intra-Replica Variance: For key distances/angles, calculate the variance within a single, long replica and the variance between the means of multiple shorter replicas. If between-variance is a significant fraction of within-variance, sampling is poor.

- Perform PCA: On the combined protein-ligand interface residues (Cα and ligand heavy atoms). Project trajectories from all replicas onto the first two PCs. Check if all replicas sample the same region of PC space.
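
The block averaging step of the procedure above can be sketched as follows. This shows one common variant, in which the standard error of the mean is estimated from non-overlapping block means and inspected as the block length grows. It is an illustration under that assumption rather than the exact analysis the protocol prescribes, and the function names and toy data are hypothetical.

```python
from statistics import mean, stdev


def block_means(series, block_size):
    """Means of consecutive, non-overlapping blocks (any trailing remainder is dropped)."""
    n_blocks = len(series) // block_size
    return [mean(series[i * block_size:(i + 1) * block_size]) for i in range(n_blocks)]


def block_standard_error(series, block_size):
    """Standard error of the overall mean estimated from the block means."""
    means = block_means(series, block_size)
    if len(means) < 2:
        raise ValueError("block_size leaves fewer than two blocks")
    return stdev(means) / len(means) ** 0.5


if __name__ == "__main__":
    # Hypothetical protein-ligand distance series with slow drift between two states.
    distances = [3.0] * 20 + [3.4] * 20 + [3.0] * 20 + [3.4] * 20
    for size in (1, 5, 10, 20):
        print(f"block length {size:2d}: SEM = {block_standard_error(distances, size):.4f}")
```

When the estimated error keeps growing with block length instead of leveling off, the blocks are still correlated and the trajectory is likely too short for that observable.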

Q5: What are common pitfalls in high-dimensional CV selection that lead to poor exploration? A: The primary pitfall is choosing a CV that does not capture all relevant slow degrees of freedom for the process of interest (e.g., protein folding, ligand unbinding). This leads to hidden barriers and ineffective bias. Always test CVs by analyzing their evolution in unbiased simulations and checking for correlation with known reaction coordinates.

Visualization of Diagnostic Workflows

Title: Logical Flow for Diagnosing Simulation Convergence

Title: Enhanced Sampling CV Optimization Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Convergence Diagnostics in Molecular Simulations

| Item/Reagent | Function in Experiment | Example Product/Code |

|---|---|---|

| Molecular Dynamics Software | Engine for running simulations. Provides analysis tools. | GROMACS, AMBER, NAMD, OpenMM |

| Analysis Toolkit | Library for processing trajectories and calculating metrics. | MDAnalysis (Python), MDTraj (Python), cpptraj (AMBER) |

| Free Energy Analysis Suite | Calculate statistical inefficiency, ESS, and perform MBAR. | PyMBAR, alchemlyb |

| Visualization Software | Visual inspection of trajectories, poses, and densities. | VMD, PyMOL, UCSF ChimeraX |

| Clustering Algorithm | Identify and quantify conformational states sampled. | GROMOS method (gmx cluster), k-means, DBSCAN (e.g., via scikit-learn on MDTraj-derived features) |

| Principal Component Analysis Code | Perform essential dynamics to find slowest motions. | gmx covar & gmx anaeig (GROMACS), Prody |

| High-Performance Computing (HPC) Cluster | Resources to run multiple, long, or replica simulations. | Local cluster, Cloud (AWS, Azure), National supercomputers |

| Force Field Parameters | Physics-based model defining interatomic potentials. | CHARMM36, AMBER ff19SB, OPLS-AA/M, GAFF2 (for ligands) |

| Solvation Box & Ion Parameters | Create realistic aqueous environment and physiological ionic strength. | TIP3P, TIP4P water models; Joung-Cheatham ion params |

Troubleshooting Guides & FAQs

Q1: My enhanced sampling simulation does not converge, and the free energy landscape appears noisy. Could this be due to poor CV selection? A: Yes, this is a classic symptom of suboptimal CVs. The selected CVs may not accurately capture the true reaction coordinate of the process being studied. To troubleshoot:

- Perform a Principal Component Analysis (PCA) on a short, unbiased simulation to identify the largest-amplitude motions.

- Check for correlation between your CVs using the provided table (see Table 1). Highly correlated CVs are redundant.

- Consider employing time-lagged independent component analysis (tICA) or neural network-based autoencoders to identify slow collective modes from high-dimensional data.
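
The CV correlation check in the list above can be sketched with a plain Pearson correlation over CV time series from an unbiased run. The 0.9 cutoff, the CV names, and the toy data are assumptions chosen for illustration; a linear correlation coefficient will also miss nonlinear redundancy between CVs.

```python
from itertools import combinations


def pearson(x, y):
    """Pearson correlation coefficient between two equally long series."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    norm = (sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y)) ** 0.5
    if norm == 0.0:
        raise ValueError("a constant series has no defined correlation")
    return cov / norm


def redundant_pairs(cvs, threshold=0.9):
    """Pairs of CV names whose absolute correlation exceeds the threshold."""
    return [
        (a, b)
        for a, b in combinations(cvs, 2)
        if abs(pearson(cvs[a], cvs[b])) > threshold
    ]


if __name__ == "__main__":
    # Hypothetical CV time series from a short unbiased simulation.
    cvs = {
        "site_distance": [1.0, 2.0, 3.0, 4.0],
        "domain_distance": [2.0, 4.0, 6.0, 8.1],
        "hinge_dihedral": [1.0, -1.0, 1.0, -1.0],
    }
    print(redundant_pairs(cvs))
```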

Q2: How can I systematically test if my CVs are sufficient to describe a conformational transition? A: Implement a committor analysis, which is the definitive test for a good reaction coordinate. Protocol:

- Select configurations along the putative transition path (from your CV-based sampling).

- For each configuration, launch many short, unbiased simulations with randomized velocities.