Missing Data in ANOVA: A Practical Guide for Biomedical Researchers & Statisticians


Abstract

This comprehensive guide addresses the critical challenge of missing data in ANOVA for researchers and drug development professionals. It explores foundational concepts of data missingness (MCAR, MAR, MNAR), provides actionable methodologies for handling missing values (deletion, single/multiple imputation, maximum likelihood), offers troubleshooting for common pitfalls, and guides validation through sensitivity analysis. The article equips readers with a principled framework to maintain statistical integrity and validity in preclinical and clinical research studies.

Understanding Missing Data: The Why and How of Gaps in Your ANOVA Dataset

Technical Support Center: Troubleshooting Missing Data in ANOVA-Based Experiments

FAQs & Troubleshooting Guides

Q1: My cell culture plates showed contamination in 3 of 12 wells, forcing me to discard those data points. How should I handle this missing data in my planned one-way ANOVA? A: This is Missing Completely at Random (MCAR). Recommended protocol:

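- Diagnostic: Run Little's MCAR test on all analysis variables, keeping in mind that with only 12 wells the test has little power.

- If MCAR is plausible (the test can only fail to reject it, never confirm it): Use multiple imputation (MI).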

- Experimental Protocol for Validation:

- Re-run the contaminated assay in triplicate.

- Artificially remove the same percentage of data points.

- Apply MI using 5-10 imputations via chained equations (MICE).

- Perform ANOVA on each imputed dataset.

- Pool results using Rubin's rules.

- Alternative: If sample size permits, consider listwise deletion, but this reduces power.

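Q2: Samples from late-stage cancer patients were too degraded for RNA sequencing, creating missing values correlated with disease severity. What's the appropriate correction for this two-way ANOVA? A: This is likely Missing Not at Random (MNAR), a common biomedical challenge, because degradation probably tracks the unmeasured expression values as well as recorded severity. If missingness were explained by recorded severity alone, the data would be MAR.

- Diagnostic: Perform pattern analysis and logistic regression to test if severity predicts missingness.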

- Methodology: Use selection models or pattern mixture models.

- Experimental Protocol:

- Collect auxiliary data (e.g., simpler biomarker assays) on all samples.

- Use full information maximum likelihood (FIML) estimation incorporating auxiliary data.

- Sensitivity analysis: Model different MNAR mechanisms (e.g., delta adjustment).

- Critical: Report the potential bias direction in your results.

Q3: During a longitudinal drug efficacy study, 15% of patients dropped out due to side effects. How do I analyze repeated measures ANOVA with this monotone missing pattern? A: This is likely Missing At Random (MAR), where dropout relates to observed earlier measurements.

- Pre-analysis: Create a table of dropout patterns vs. baseline characteristics.

- Primary Analysis: Use mixed-effects models for repeated measures (MMRM), which provide valid estimates under MAR.

- Experimental Protocol for MMRM:

- Specify covariance structure (e.g., unstructured).

- Include fixed effects: time, treatment, time*treatment interaction.

- Include baseline score as covariate.

- Use restricted maximum likelihood (REML) estimation.

- Sensitivity Analysis: Implement multiple imputation then standard ANOVA for comparison.

Q4: My automated ELISA reader failed for a random subset of plates, creating sporadic missing values across all experimental groups. What's the fastest valid approach? A: For sporadic MCAR in multi-factorial ANOVA:

- Quick Diagnostic: Use expectation-maximization (EM) algorithm to check if means/covariances differ between complete and incomplete cases.

- Recommended Solution: Single imputation with noise addition.

- Protocol:

- Impute using regression on other assay measures.

- Add random residual error from the regression model.

- Perform standard ANOVA.

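- Treat the resulting standard errors as too small: single imputation ignores imputation uncertainty, and the Barnard & Rubin (1999) degrees-of-freedom adjustment applies only to pooled multiple-imputation results.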

- Validation: Compare with MI results if time permits.
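The single-imputation protocol above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not a validated pipeline: the paired measurements, the helper `fit_line`, and the seed are all hypothetical, and a single predictor stands in for "other assay measures".

```python
import random
from statistics import mean

def fit_line(x, y):
    """Ordinary least squares for one predictor; returns intercept, slope, residual SD."""
    mx, my = mean(x), mean(y)
    slope = sum((a - mx) * (b - my) for a, b in zip(x, y)) / sum((a - mx) ** 2 for a in x)
    intercept = my - slope * mx
    resid = [b - (intercept + slope * a) for a, b in zip(x, y)]
    sigma = (sum(r * r for r in resid) / (len(x) - 2)) ** 0.5
    return intercept, slope, sigma

# Hypothetical (auxiliary assay, ELISA reading) pairs; None marks a failed read.
pairs = [(1.0, 2.1), (2.0, 3.9), (3.0, None), (4.0, 8.2),
         (5.0, 9.8), (6.0, None), (7.0, 14.1)]

rng = random.Random(42)  # fixed seed so the imputation is reproducible
complete = [(x, y) for x, y in pairs if y is not None]
intercept, slope, sigma = fit_line([x for x, _ in complete], [y for _, y in complete])

# Regression prediction plus a random residual draw for each missing reading.
imputed = [(x, y if y is not None else intercept + slope * x + rng.gauss(0, sigma))
           for x, y in pairs]
print(imputed)
```

The added noise keeps the imputed values from sitting exactly on the regression line, which would otherwise shrink the error variance in the subsequent ANOVA.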

Table 1: Statistical Power Loss with Listwise Deletion (Complete Case Analysis)

| Missing Percentage | Sample Size (Original) | Effective Size (After Deletion) | Power Loss (α=0.05) |

|---|---|---|---|

| 5% | 100 | 95 | 2-4% |

| 10% | 100 | 90 | 5-8% |

| 15% | 100 | 85 | 10-15% |

| 20% | 100 | 80 | 18-25% |

| 30% | 100 | 70 | 35-45% |

Table 2: Performance Comparison of Missing Data Methods for ANOVA

| Method | Type of Missing Handled | Bias in F-statistic | Software Implementation |

|---|---|---|---|

| Listwise Deletion | MCAR only | High if not MCAR | All packages |

| Pairwise Deletion | MCAR only | Moderate | SPSS, SAS |

| Mean Imputation | None recommended | Very High | All packages |

| Multiple Imputation | MAR, MCAR | Low | R (mice), SAS PROC MI |

| FIML | MAR, MCAR | Low | Mplus, R (lavaan) |

| MMRM | MAR | Low | R (nlme), SAS PROC MIXED |

Experimental Protocols for Handling Missing Data

Protocol 1: Multiple Imputation Validation for ANOVA

- Generate Missing Data: In your complete dataset, randomly remove 5%, 10%, and 15% of values.

- Impute: Use MICE with 10 imputations, 10 iterations, predictive mean matching.

- Analyze: Run one-way ANOVA on each imputed dataset.

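- Pool Results: Pool the group-effect estimates with Rubin's rules (raw F-statistics should not simply be averaged):

- Calculate within-imputation variance (W), the mean of the m squared standard errors

- Calculate between-imputation variance (B), the variance of the m estimates

- Total variance: T = W + (1 + 1/m)B

- Pooled F ≈ (1/(k-1)) * Σ(β²/T), summed over the k-1 pooled group contrasts β, where k is number of groups; this treats the contrasts as uncorrelated, and the multivariate Wald (D1) statistic is the general form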

- Compare: Calculate bias from original complete-data ANOVA.
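The pooling step in Protocol 1 can be written out for a single contrast. The sketch below assumes you already have one estimate and one squared standard error per imputed dataset; the function name `pool_rubin` and the numbers are hypothetical.

```python
from statistics import mean, variance

def pool_rubin(estimates, variances):
    """Pool one scalar estimate across m imputed datasets with Rubin's rules.

    estimates: the m per-dataset point estimates (e.g. a group contrast)
    variances: the m per-dataset squared standard errors
    """
    m = len(estimates)
    q_bar = mean(estimates)      # pooled point estimate
    w = mean(variances)          # within-imputation variance W
    b = variance(estimates)      # between-imputation variance B (m - 1 denominator)
    t = w + (1 + 1 / m) * b      # total variance T = W + (1 + 1/m)B
    # Rubin's large-sample degrees of freedom; infinite when B is zero.
    df = float("inf") if b == 0 else (m - 1) * (1 + w / ((1 + 1 / m) * b)) ** 2
    return {"estimate": q_bar, "W": w, "B": b, "T": t, "df": df}

# Hypothetical contrast (treated minus control) from m = 5 imputed datasets.
est = [2.1, 1.9, 2.4, 2.0, 2.2]
se2 = [0.25, 0.27, 0.24, 0.26, 0.25]
pooled = pool_rubin(est, se2)
wald = pooled["estimate"] ** 2 / pooled["T"]  # single-contrast Wald statistic
print(pooled, wald)
```

For a design with more than two groups, the same quantities are computed per contrast, and a multivariate statistic is needed for the omnibus test.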

Protocol 2: Sensitivity Analysis for MNAR in Clinical Trial ANOVA

- Define MNAR Mechanisms: Specify 3 scenarios:

- Mild MNAR: Missing values are 0.2 SD worse than observed

- Moderate MNAR: 0.5 SD worse

- Severe MNAR: 1.0 SD worse

- Adjust Data: Create adjusted datasets under each scenario.

- Analyze: Run repeated measures ANOVA on each adjusted dataset.

- Report: Present range of p-values and effect sizes across scenarios.
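A rough sketch of the three delta-adjustment scenarios follows. It makes several simplifying assumptions that are not in the protocol: a one-way layout instead of repeated measures, a single imputed dataset instead of m pooled ones, higher scores meaning better outcomes, and entirely hypothetical data and helper names.

```python
from statistics import mean, stdev

def one_way_f(groups):
    """F-statistic of a one-way ANOVA for {group_name: [values]}."""
    values = [v for vals in groups.values() for v in vals]
    grand, k, n = mean(values), len(groups), len(values)
    ss_between = sum(len(vals) * (mean(vals) - grand) ** 2 for vals in groups.values())
    ss_within = sum((v - mean(vals)) ** 2 for vals in groups.values() for v in vals)
    return (ss_between / (k - 1)) / (ss_within / (n - k))

def shift_imputed(data, shift):
    """Lower every imputed value by `shift`; observed values are left untouched."""
    return {g: [v - shift if imputed else v for v, imputed in rows]
            for g, rows in data.items()}

# Hypothetical data after MAR-based imputation: (value, was_imputed).
data = {
    "placebo": [(10.0, False), (11.5, False), (9.5, False), (10.5, True)],
    "drug": [(13.0, False), (14.5, False), (12.5, False), (13.5, True), (13.0, True)],
}

# SD of the observed values defines the size of one "SD worse".
sd = stdev(v for rows in data.values() for v, imputed in rows if not imputed)

f_by_scenario = {}
for label, delta in [("MAR", 0.0), ("mild", 0.2), ("moderate", 0.5), ("severe", 1.0)]:
    f_by_scenario[label] = one_way_f(shift_imputed(data, delta * sd))
print(f_by_scenario)
```

Reporting the full set of F-statistics (and the corresponding p-values) side by side shows how far the MNAR assumption has to go before the conclusion changes.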

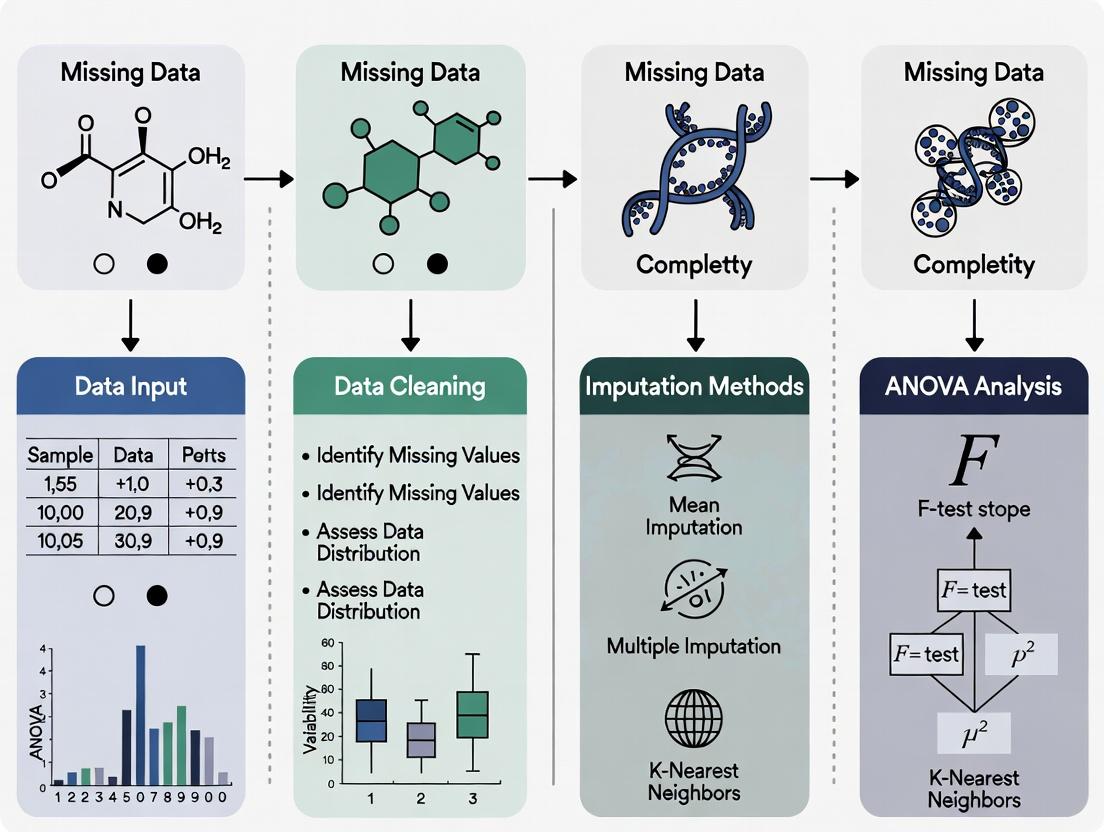

Diagram: Missing Data Decision Pathway for ANOVA

Diagram: Multiple Imputation Workflow for ANOVA

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Managing Missing Data in Biomedical Experiments

| Item/Category | Function in Missing Data Context | Example Products/Software |

|---|---|---|

| Statistical Software with MI | Perform multiple imputation and pooled ANOVA | R (mice package), SAS PROC MI, SPSS 28+ |

| Laboratory Information Management System (LIMS) | Track sample provenance to identify missing data patterns | LabVantage, Benchling, BioSistemika |

| Data Quality Control Tools | Flag potential missing data issues during collection | OpenLab, NuGenesis, custom R/Python scripts |

| Electronic Lab Notebooks (ELN) | Document reasons for missing data for MAR/MNAR diagnosis | LabArchives, eLabJournal, RSpace |

| Assay Positive/Negative Controls | Distinguish technical vs. biological missing data | Cell viability assays, spike-in controls, internal standards |

| Sample Tracking Systems | Monitor dropouts in longitudinal studies | RFID tags, barcode systems, clinical trial management software |

| Biomarker Multiplex Panels | Collect auxiliary data for FIML estimation | Luminex assays, Olink, MSD panels |

| Data Imputation Validation Kits | Verify imputation accuracy with known values | Synthetic datasets, cross-validation protocols |

Technical Support Center

Welcome to the Missing Data Mechanism Troubleshooting Center. This guide helps researchers diagnose and address issues related to missing data in clinical and preclinical studies, specifically within the context of ANOVA-based research.

Troubleshooting Guides & FAQs

Q1: My ANOVA assumptions test is failing after listwise deletion. How can I diagnose if the missing data is causing the problem? A: This is a common issue. First, conduct a missing data pattern analysis.

- Step 1: Create an indicator variable (0=observed, 1=missing) for your key outcome variable.

- Step 2: Perform a t-test or ANOVA using this indicator as the grouping variable to test if the mean of other complete variables differs between the 'observed' and 'missing' groups.

- Step 3: If the test is significant (p < 0.05), the data are not MCAR (they are MAR or MNAR), and listwise deletion is likely to be biased. Proceed to multiple imputation or maximum likelihood estimation.
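Steps 1 and 2 can be illustrated with a small standard-library sketch. The records and the field names `baseline` and `outcome` are hypothetical, and Welch's t-statistic is used here as one reasonable choice of t-test; a p-value would need a t distribution (for example from SciPy), which is left out.

```python
from math import sqrt
from statistics import mean, variance

# Hypothetical records: baseline is fully observed, outcome may be missing (None).
records = [
    {"baseline": 3.1, "outcome": 12.0}, {"baseline": 2.8, "outcome": 11.4},
    {"baseline": 3.5, "outcome": 12.9}, {"baseline": 3.0, "outcome": 11.8},
    {"baseline": 2.6, "outcome": 10.9}, {"baseline": 3.3, "outcome": 12.2},
    {"baseline": 4.9, "outcome": None}, {"baseline": 5.2, "outcome": None},
    {"baseline": 4.4, "outcome": None}, {"baseline": 5.0, "outcome": None},
]

def welch_t(a, b):
    """Welch's t-statistic and approximate degrees of freedom for two samples."""
    va, vb = variance(a) / len(a), variance(b) / len(b)
    t = (mean(a) - mean(b)) / sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df

# Step 1: the missingness indicator splits the fully observed covariate in two.
missing = [r["baseline"] for r in records if r["outcome"] is None]
observed = [r["baseline"] for r in records if r["outcome"] is not None]

# Step 2: compare the covariate between the "missing" and "observed" groups.
t_stat, df = welch_t(missing, observed)
print(t_stat, df)
```

A large statistic, as in this constructed example, indicates that cases with a missing outcome differ on baseline, which argues against MCAR.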

Q2: I suspect data is MNAR in my drug trial. How can I test this sensitivity? A: Direct testing is impossible, but sensitivity analysis is crucial.

- Protocol for Pattern-Mixture Modeling:

- Impute your data under an MAR assumption (e.g., using Multiple Imputation by Chained Equations).

- Create adjustment parameters (e.g., shift the imputed values for the missing cases by +0.2SD and -0.2SD to simulate worse or better outcomes).

- Re-run your primary ANOVA on these adjusted datasets.

- Compare the range of results (e.g., F-statistics, p-values) across the MAR and MNAR scenarios. If conclusions change, your results are sensitive to the MNAR assumption.

Q3: During sample collection, several vials were broken. Which mechanism is this, and how should I handle it in my factorial ANOVA? A: This is a classic example of Missing Completely at Random (MCAR). The cause is unrelated to the experimental conditions or measurements.

- Recommended Handling: You may use listwise deletion without introducing bias for this specific cause. However, to preserve statistical power, consider multiple imputation using the other factors in your experimental design as predictors in the imputation model.

Table 1: Mechanism Characteristics & Impact on ANOVA

| Mechanism | Acronym | Definition | Testable? | Impact on ANOVA (Listwise Deletion) | Clinical Example |

|---|---|---|---|---|---|

| Missing Completely at Random | MCAR | Missingness is independent of both observed and unobserved data. | Yes (e.g., Little's test). | Unbiased estimates, reduced power. | Sample lost due to freezer malfunction; broken lab vial. |

| Missing at Random | MAR | Missingness depends on observed data, but not on the missing value itself after accounting for observed data. | Partially (via pattern analysis). | Biased if the cause is related to group assignment; requires adjustment. | Patient dropout due to a known baseline severity score; more withdrawals in placebo arm tracked. |

| Missing Not at Random | MNAR | Missingness depends on the unobserved missing value itself. | No (requires sensitivity analysis). | Substantial bias; results are not trustworthy. | Patient with severe side effect (unreported) withdraws; subject with poor cognitive score misses follow-up test. |

Table 2: Recommended Handling Methods for ANOVA

| Method | Best For Mechanism | Key Consideration | Implementation in Common Stats Software |

|---|---|---|---|

| Listwise Deletion | Only MCAR | Severe loss of power; often invalid. | Default in many ANOVA procedures. |

| Multiple Imputation (MI) | MAR, MCAR | Imputation model must include relevant ANOVA factors and covariates. | R: mice package. SPSS: Multiple Imputation module. |

| Maximum Likelihood (ML) | MAR, MCAR | Uses all observed data in the likelihood; under MAR the missingness mechanism is ignorable and is not modeled. | R: lavaan for SEM-based ANOVA. SAS: PROC MIXED. |

| Sensitivity Analysis | MNAR (Assessment) | Not a single fix; quantifies robustness of conclusions. | Conducted after MI by varying imputed values. |

Experimental Protocol for Missing Data Diagnostics

Protocol: Systematic Diagnostic Workflow for Missing Data in a Clinical Trial ANOVA Objective: To determine the likely mechanism (MCAR/MAR/MNAR) of missing primary endpoint data.

- Documentation: Catalog every instance of missingness with the study coordinator's reported reason.

- Pattern Visualization: Create a "missingness map" (matrix) showing missing (red) and observed (blue) data points for all variables.

- MCAR Testing: Apply Little's statistical test for MCAR. A non-significant result (p > 0.05) suggests MCAR cannot be ruled out.

- MAR Investigation: For your primary outcome variable (Y), run the logistic regression `Missing_Y_indicator ~ Group + Baseline_Severity + Age + ...`. If `Group` or other observed variables are significant predictors, evidence for MAR exists.

- MNAR Consideration: If scientific knowledge suggests the value of Y itself likely influenced its missingness (e.g., intolerable pain leading to dropout), plan a sensitivity analysis as described in FAQ A2.

Visualizations

Title: Decision Logic for Missing Data Mechanisms

Title: Analytical Workflow for ANOVA with Missing Data

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Handling Missing Data in Clinical Research

| Item / Solution | Function in Missing Data Handling | Example / Note |

|---|---|---|

| Statistical Software with MI/ML | Performs advanced analyses like Multiple Imputation (MI) and Maximum Likelihood (ML) estimation. | R (mice, norm packages), SAS (PROC MI, PROC MIANALYZE), Stata (mi command). |

| Clinical Data Management System (CDMS) | Systematically tracks and audits all data entries, including reasons for missingness. | Crucial for distinguishing MAR (documented reason) from MNAR (unknown reason). |

| Sensitivity Analysis Scripts | Pre-written code templates to efficiently test MNAR assumptions under different scenarios. | Custom R/Python scripts implementing pattern-mixture or selection models. |

| Electronic Patient-Reported Outcome (ePRO) Tools | Reduces missing data by prompting patients and allowing remote data entry. | Integrates timestamps and compliance alerts, reducing MAR related to inconvenience. |

| Biomarker Sample Stability Data | Informs if missing lab values are MCAR (sample instability) or related to patient state (MAR/MNAR). | Validated storage conditions and freeze-thaw cycle limits for biobanked samples. |

Troubleshooting Guides & FAQs

Q1: Why does missing data in my ANOVA cause a Type I error (false positive) inflation, even when using common software defaults?

A: Most statistical software packages (e.g., SPSS, R's aov, JMP) use a "listwise deletion" default for ANOVA. This removes any case (row) with a missing value in any variable used in the analysis. This reduces your sample size (N), which directly decreases statistical power (increasing Type II error risk). More critically, if the data is not Missing Completely At Random (MCAR), the remaining sample becomes non-representative. The resulting biased estimates of group means and variances distort the F-statistic calculation (F = Mean Square Between / Mean Square Within). The Mean Square Within (error term) is often disproportionately reduced, inflating the F-value and increasing the probability of incorrectly rejecting the null hypothesis (Type I error).

Q2: My experimental units failed, creating missing values. What diagnostic steps should I take before choosing an ANOVA handling method?

A: Follow this diagnostic protocol:

- Pattern Identification: Use your software to create a Missing Data Pattern table (e.g., `md.pattern()` in R's `mice` package).

- MCAR Testing: Perform Little's MCAR test. A non-significant p-value (p > 0.05) suggests data may be MCAR, supporting the use of listwise deletion (though still inefficient). A significant result indicates data is likely Missing At Random (MAR) or Missing Not At Random (MNAR).

- Mechanism Hypothesis: Based on your experimental knowledge, determine the likely mechanism. For example, if a sensor failed randomly, it may be MCAR. If higher toxicity levels caused animal death (leading to missing post-dose measurements), it is likely MNAR.

Q3: What is the practical difference between Multiple Imputation (MI) and Maximum Likelihood (ML) for handling missing data in ANOVA, and which should I use?

A: Both are superior to listwise deletion when data is MAR.

- Multiple Imputation (MI): Creates `m` complete datasets (e.g., m=20), runs ANOVA on each, and pools results using Rubin's rules. It accounts for uncertainty in the imputation process.

- Maximum Likelihood (ML): Estimates parameters directly using all available data, assuming a normal distribution.

| Method | Key Advantage | Best For | Software Implementation |

|---|---|---|---|

| Multiple Imputation | Provides unbiased estimates and valid standard errors under MAR; intuitive. | Complex designs; when auxiliary variables are available. | R: mice, mitml. SPSS: Multiple Imputation module. |

| Maximum Likelihood | More statistically efficient than MI; single-step analysis. | Simpler designs; mixed-effects models. | R: lavaan, nlme. SAS: PROC MIXED. |

Protocol: Conducting Multiple Imputation for a One-Way ANOVA

- Prepare Data: Ensure your dataset is in a "long" format with columns: SubjectID, Group, DependentVar.

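- Impute: Use the `mice` package in R: `imp <- mice(your_data, m=20, method='pmm', seed=500)` (pmm = predictive mean matching).

- Analyze: Fit the ANOVA model on each imputed set: `fit <- with(imp, lm(DependentVar ~ Group))`, along with the intercept-only model `fit0 <- with(imp, lm(DependentVar ~ 1))`.

- Pool: Combine the coefficient estimates: `pooled_fit <- pool(fit)`; `summary(pooled_fit)` reports the pooled group contrasts rather than an omnibus F.

- Summarize: Obtain the pooled F-statistic, p-value, and degrees of freedom by comparing the two models: `D1(fit, fit0)`.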

Q4: For MNAR data in clinical trials, are there specific methods recommended by regulatory bodies like the FDA?

A: Yes. For MNAR, where the missingness is related to the unobserved value itself (e.g., a patient drops out due to severe side effects), sensitivity analysis is mandatory. The primary analysis might use MAR-based methods (MI/ML), but you must assess how conclusions change under different MNAR scenarios. The FDA encourages methods like:

- Pattern Mixture Models: Estimate different parameters for different missingness patterns.

- Selection Models: Model the probability of missingness alongside the outcome.

- Tip: The National Research Council's 2010 report "The Prevention and Treatment of Missing Data in Clinical Trials" is a key regulatory reference.

Visualizations

Title: Decision Flow for Handling Missing Data in ANOVA

Title: Multiple Imputation Workflow for ANOVA

The Scientist's Toolkit: Research Reagent Solutions

| Item/Category | Function in Missing Data Handling | Example/Note |

|---|---|---|

| Statistical Software (R/Python) | Primary engine for advanced missing data analysis and imputation. | R with mice, mitml, lavaan packages. Python with statsmodels, fancyimpute. |

| Multiple Imputation Library (mice) | Creates multiple plausible values for missing data using chained equations. | Use mice() for imputation, with() for analysis, pool() for combining results. |

| Diagnostic Package (VIM, naniar) | Visualizes and diagnoses patterns of missingness in the dataset. | aggr() plot in VIM shows missingness patterns per variable. |

| Sensitivity Analysis Scripts | Tests robustness of ANOVA conclusions under different MNAR assumptions. | Custom scripts implementing Pattern Mixture or Selection Models as per protocol. |

| High-Quality Data Logger | Prevents missing data by ensuring reliable raw data collection. | Use validated, calibrated equipment with backup power and automatic logging. |

| Electronic Lab Notebook (ELN) | Tracks reasons for data omission (e.g., "sample degraded"), informing MNAR/MAR assessment. | Critical for documenting the context of missingness. |

| Clinical Trial Data Standards (CDISC) | Provides standardized data structures (e.g., ADaM) to streamline handling of missing outcomes in regulatory research. | Defines conventions for representing derived imputed variables. |

Troubleshooting Guides & FAQs

Q1: My ANOVA software is rejecting my dataset due to "missing values." What is the first thing I should do? A1: Do not immediately opt for deletion or imputation. Your first step is to diagnose the pattern of missingness. Create a missingness map (a heatmap of your data matrix where missing cells are highlighted) to visually inspect whether gaps are random or clustered in specific variables or experimental conditions.

Q2: How can I tell if my data is "Missing Completely at Random" (MCAR)? A2: Conduct Little's MCAR test. A statistically non-significant result (p > 0.05) suggests the missing data may be MCAR, meaning the probability of missingness is unrelated to any observed or unobserved variable. This pattern is rare in practice but is the simplest to handle.

Q3: What does "Missing at Random" (MAR) mean in a clinical trial context? A3: MAR means the probability of a value being missing may depend on other observed variables, but not on the missing value itself. For example, older patient subgroups might have more missing lab scores, but within each age group, the missingness of the score is random. Diagnostic methods include logistic regression to see if missingness in a variable is predicted by other complete variables.

Q4: When should I suspect my data is "Missing Not at Random" (MNAR)? A4: MNAR is suspected when the missingness is likely related to the unobserved missing value itself. For instance, patients with severe side effects drop out of a study, so their missing quality-of-life scores are systematically lower. There is no definitive statistical test; identification relies on subject-matter expertise and sensitivity analysis (e.g., pattern-mixture models).

Q5: What is a simple diagnostic workflow before running an ANOVA? A5: Follow this protocol:

- Tabulate: Calculate the percentage of missing values per variable and per experimental group.

- Visualize: Generate a missingness pattern chart.

- Analyze: Perform tests like Little's MCAR test or conduct dummy regression.

- Document: Record the suspected pattern (MCAR, MAR, MNAR) and its extent, as this directly informs your subsequent handling method.
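The tabulation step of this workflow needs nothing beyond counting. Here is a minimal sketch, assuming rows are dictionaries with a `group` key and `None` for missing values; the variable names `il6` and `tnf` and the data are hypothetical.

```python
from collections import defaultdict

# Hypothetical assay data; None marks a missing measurement.
rows = [
    {"group": "control", "il6": 4.2, "tnf": 1.1},
    {"group": "control", "il6": None, "tnf": 1.3},
    {"group": "control", "il6": 3.9, "tnf": 1.0},
    {"group": "treated", "il6": None, "tnf": None},
    {"group": "treated", "il6": 6.8, "tnf": 2.2},
    {"group": "treated", "il6": None, "tnf": 2.0},
]
variables = ["il6", "tnf"]

def percent_missing(rows, variables):
    """Percentage of missing values per variable within each experimental group."""
    counts = defaultdict(lambda: defaultdict(int))
    totals = defaultdict(int)
    for row in rows:
        totals[row["group"]] += 1
        for var in variables:
            if row[var] is None:
                counts[row["group"]][var] += 1
    return {g: {v: 100 * counts[g][v] / n for v in variables} for g, n in totals.items()}

table = percent_missing(rows, variables)
# Rows that would survive listwise deletion.
complete_cases = sum(all(r[v] is not None for v in variables) for r in rows)
print(table, complete_cases)
```

Unequal percentages across groups, as in the `il6` column here, are an early hint that missingness is related to the experimental condition.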

Table 1: Common Missing Data Patterns and Implications for ANOVA

| Pattern | Acronym | Definition | Key Diagnostic Test | ANOVA Implication |

|---|---|---|---|---|

| Missing Completely at Random | MCAR | Missingness is independent of all data. | Little's MCAR test. Non-sig. p-value. | Listwise deletion yields unbiased estimates but loses power. |

| Missing at Random | MAR | Missingness depends on observed data only. | Dummy variable regression with observed data. | Methods like Multiple Imputation or Maximum Likelihood produce unbiased results. |

| Missing Not at Random | MNAR | Missingness depends on the unobserved missing value. | No statistical test; sensitivity analysis required. | Standard methods are biased; specialized techniques (e.g., selection models) are needed. |

Table 2: Percentage of Missing Data and Recommended Action Thresholds

| Missingness Level | Recommended Diagnostic Action | Caution for Standard ANOVA |

|---|---|---|

| < 5% | Any robust method (e.g., Multiple Imputation) is generally acceptable. Minimal bias likely. | Low risk. |

| 5% - 15% | Requires formal pattern diagnosis and careful choice of imputation/model. | Potential for bias. Must report handling method. |

| > 15% | Extensive sensitivity analysis is mandatory. Consider advanced MNAR methods. | High risk of bias. Results may be unreliable without specialized treatment. |

Experimental Protocols

Protocol 1: Generating a Missingness Map (Heatmap)

- Data Input: Use a matrix where rows are subjects/observations and columns are variables.

- Coding: Convert your dataset to a binary matrix (1 = missing, 0 = present).

- Software: Use R (`ggplot2` with `geom_tile` or `Amelia::missmap`), Python (`seaborn.heatmap` with missing mask), or Stata (`misstable`).

- Visualization: Plot the binary matrix, ordering rows/columns by clustering patterns if desired. This reveals if missingness is concentrated in specific blocks.
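Before reaching for a plotting library, the binary matrix from the coding step can be built and inspected as text. The sketch below is a stand-in for a real heatmap; the subject IDs, column names, and values are hypothetical.

```python
# Hypothetical longitudinal measurements; None marks a missing cell.
columns = ["week0", "week4", "week8"]
data = [
    ("S1", [5.1, 0.9, 7.2]),
    ("S2", [4.8, None, 6.9]),
    ("S3", [None, None, 7.5]),
    ("S4", [5.4, 1.2, None]),
]

# Coding step: 1 = missing, 0 = present.
matrix = [[1 if value is None else 0 for value in values] for _, values in data]

# Column totals show which variables carry most of the missingness.
column_totals = [sum(col) for col in zip(*matrix)]

# Text rendering: '#' for missing, '.' for present, rows ordered by missing count.
order = sorted(range(len(data)), key=lambda i: sum(matrix[i]), reverse=True)
print("     " + " ".join(columns))
for i in order:
    print(f"{data[i][0]:<5}" + "     ".join("#" if flag else "." for flag in matrix[i]))
print("missing per column:", dict(zip(columns, column_totals)))
```

The same `matrix` can be passed to a heatmap function once the pattern is worth a figure.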

Protocol 2: Performing Little's MCAR Test

- Hypotheses:

- H0: Data are Missing Completely at Random (MCAR).

- H1: Data are not MCAR.

- Procedure:

- In R, use `naniar::mcar_test()` or `BaylorEdPsych::LittleMCAR()`.

- In SPSS, use "Analyze > Missing Value Analysis... > Patterns > EM".

- The test compares the observed means/covariances with estimates under MCAR.

- Interpretation: A p-value > 0.05 fails to reject H0, which is consistent with MCAR but does not prove it. A p-value < 0.05 suggests data are not MCAR (could be MAR or MNAR).

Protocol 3: Dummy Regression for MAR Pattern Investigation

- For each variable Y with missing values: Create a corresponding dummy variable D_Y (1 if Y is missing, 0 if observed).

- Regression Model: Perform a logistic regression of D_Y on other fully observed variables in the dataset.

- Interpretation: If the regression model significantly predicts D_Y, it indicates the missingness in Y is related to other observed variables—consistent with a MAR mechanism.

Visualizations

Title: Diagnostic Workflow for Missing Data Patterns

Title: Statistical Relationships Defining MCAR, MAR, and MNAR

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Missing Data Diagnosis

| Tool / Reagent | Function in Diagnosis | Example / Note |

|---|---|---|

| Statistical Software (R/Python) | Platform for executing diagnostic tests and visualizations. | R packages: naniar, mice, FinalFit. Python libraries: pandas, missingno, scikit-learn. |

| Little's MCAR Test | Formal hypothesis test for the MCAR mechanism. | Available in R (naniar::mcar_test), SPSS Missing Value Analysis. |

| Missingness Heatmap | Visual tool to identify clusters and patterns of missing data. | Generated via Amelia::missmap (R) or missingno.matrix (Python). |

| Dummy Variable Coding | Creates binary indicators to regress missingness on observed variables. | Fundamental step for investigating MAR patterns. |

| Sensitivity Analysis Scripts | Scripts to test robustness of results under different MNAR assumptions. | e.g., Pattern-mixture models or selection models coded in R brms or Stan. |

| Detailed Study Logs | Original lab notebooks or eCRF audit trails. | Provides context to distinguish MAR from MNAR (e.g., noted reasons for dropout). |

Technical Support Center: Troubleshooting Missing Data in ANOVA

FAQs & Troubleshooting Guides

Q1: During my randomized block design ANOVA for a drug efficacy study, I have missing endpoint values for several subjects due to dropout. What is my immediate first step? A: Your first step is to diagnose the mechanism of the missing data. Do not proceed with analysis until you have categorized the pattern. Use the following protocol:

- Flag Missing Data: Indicate missing values in your dataset as `NA` (not available).

- Conduct Preliminary Analysis: Create a new binary variable (0 = observed, 1 = missing) for your outcome variable.

- Run Auxiliary Analyses: Perform chi-square or t-tests to determine if this "missingness" variable is associated with any other observed variables (e.g., treatment group, block, subject age, baseline score).

- Categorize: Based on the results, categorize the mechanism using Rubin's typology (see Table 1).

Q2: What are the concrete statistical risks if I simply delete subjects with missing data (complete-case analysis) from my factorial ANOVA? A: Listwise deletion introduces severe threats to Statistical Conclusion Validity, as quantified below:

Table 1: Risks of Listwise Deletion on ANOVA Validity

| Threat | Description | Quantitative Impact |

|---|---|---|

| Reduced Statistical Power | Loss of sample size increases Type II error (false negative). | The detectable signal scales with √N, so a 20% loss of data (√0.8 ≈ 0.89) is roughly equivalent to an 11% decrease in effect size. |

| Biased Parameter Estimates | If data is not Missing Completely At Random (MCAR), estimates (e.g., group means) become biased. | Bias can shift estimates by >0.5 standard deviations, invalidating F-tests. |

| Distorted F-statistics | Altered mean squares and error terms lead to invalid inferences. | Simulated studies show inflation of Type I error rates from 5% to over 15% under Missing at Random (MAR) conditions. |
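The square-root relationship in the power row can be checked numerically. The sketch below uses a normal approximation for a two-group comparison rather than an exact noncentral F calculation; the effect size of 0.5 SD and the group sizes are hypothetical.

```python
from math import sqrt
from statistics import NormalDist

def approx_power(d, n_per_group, alpha=0.05):
    """Normal-approximation power for a two-sided, two-group mean comparison."""
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    signal = d * sqrt(n_per_group / 2)  # grows with the square root of the sample size
    return 1 - NormalDist().cdf(z_crit - signal)  # ignores the negligible opposite tail

full = approx_power(0.5, 50)                       # no missing data
after_deletion = approx_power(0.5, 40)             # 20% of each group deleted
smaller_effect = approx_power(0.5 * sqrt(0.8), 50) # same power as a shrunken effect
print(full, after_deletion, smaller_effect)
```

Deleting 20% of cases gives the same approximate power as keeping everyone but shrinking the effect by a factor of √0.8, which is why deletion is costly even when it is unbiased.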

Q3: I've determined my data is "Missing at Random" (MAR), e.g., missing lab values are related to a recorded patient baseline severity score. What are my validated analysis options? A: For MAR data, use model-based methods that leverage the observed data to inform the missing values. The primary method is Multiple Imputation (MI).

Protocol: Multiple Imputation via Chained Equations (MICE) for a One-Way ANOVA Dataset

- Specify the Imputation Model: Include the outcome variable, the ANOVA factor (treatment group), and all auxiliary variables correlated with missingness or the outcome (e.g., baseline measures, demographics).

- Generate m Imputed Datasets: Use software (e.g., R's `mice` package, SPSS) to create m complete datasets (typically m = 20 to 50). Each dataset contains plausible values for the missing data, reflecting uncertainty.

- Analyze Each Dataset: Perform your planned ANOVA separately on each of the m datasets.

- Pool Results: Combine the m sets of results (parameter estimates, F-statistics) using Rubin's rules. This yields final estimates that incorporate the uncertainty due to imputation.

- Draw Final Inference: Use the pooled estimates and adjusted standard errors/p-values for your hypothesis tests.
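To make the impute, analyze, pool cycle concrete, here is a deliberately simplified sketch. It is not MICE: it assumes a two-group design, imputes each group from a normal model fitted to that group's observed values (drawing the mean and variance first so that parameter uncertainty is carried through), and uses no auxiliary variables. All data, names, and the seed are hypothetical.

```python
import random
from statistics import mean, variance

def impute_group(values, rng):
    """Fill None entries with draws from a normal model of the observed values."""
    obs = [v for v in values if v is not None]
    n = len(obs)
    # Draw the variance (scaled inverse chi-square) and mean before imputing.
    sigma2 = (n - 1) * variance(obs) / rng.gammavariate((n - 1) / 2, 2)
    mu = rng.gauss(mean(obs), (sigma2 / n) ** 0.5)
    return [rng.gauss(mu, sigma2 ** 0.5) if v is None else v for v in values]

def contrast_and_variance(a, b):
    """Mean difference b - a and its squared standard error (pooled variance)."""
    s2 = ((len(a) - 1) * variance(a) + (len(b) - 1) * variance(b)) / (len(a) + len(b) - 2)
    return mean(b) - mean(a), s2 * (1 / len(a) + 1 / len(b))

rng = random.Random(500)
placebo = [10.2, 9.8, None, 11.0, 10.5, None, 9.9]
drug = [12.1, None, 13.0, 12.6, 11.8, 12.9, None]

m = 20
# Steps 2 and 3: create m completed datasets and analyze each one.
results = [contrast_and_variance(impute_group(placebo, rng), impute_group(drug, rng))
           for _ in range(m)]

# Step 4: pool with Rubin's rules.
estimates = [est for est, _ in results]
w = mean(var for _, var in results)   # within-imputation variance
b = variance(estimates)               # between-imputation variance
total = w + (1 + 1 / m) * b           # total variance
print(mean(estimates), total)
```

In a real analysis the imputation model would also include the auxiliary variables named in step 1, which is where multiple imputation gains over complete-case analysis.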

Q4: How do I validate that my chosen method for handling missing data is appropriate for my specific experimental design? A: Implement a sensitivity analysis. This tests how robust your conclusions are to different assumptions about the missing data mechanism. Protocol: Sensitivity Analysis via Pattern-Mixture Modeling

- Define Plausible Scenarios: Define alternative scenarios where data is Missing Not at Random (MNAR). For example, postulate that missing subjects in the treatment group had worse outcomes than observed.

- Adjust Imputed Values: Apply a systematic downward shift (e.g., -0.2 SDs) to the imputed values in the treatment group only in a new set of imputations.

- Re-run and Compare: Re-run the MI and ANOVA analysis on this "MNAR-adjusted" dataset.

- Evaluate Robustness: If the statistical significance of your primary finding (e.g., treatment effect) does not change across the original MAR and the MNAR scenarios, your inference is considered robust.

Visualization: Missing Data Decision Workflow

Title: Decision Workflow for Handling Missing Data in ANOVA

The Scientist's Toolkit: Research Reagent Solutions for Robust Analysis

Table 2: Essential Toolkit for Managing Missing Data

| Tool / Reagent | Function / Purpose |

|---|---|

| Statistical Software (R/Python/SPSS/SAS) | Platform for implementing advanced procedures (MI, ML, sensitivity analysis). |

| R mice Package | Primary tool for performing Multiple Imputation by Chained Equations (MICE). |

| R naniar Package | Specialized for visualizing, quantifying, and diagnosing missing data patterns. |

| Maximum Likelihood Estimation | A model-based method (often in structural equation modeling software) that uses all available data without imputation. |

| Sensitivity Analysis Scripts | Custom or packaged scripts (e.g., R brms for Bayesian MNAR models) to test robustness of results. |

| Pre-registered Missing Data Plan | A pre-experiment document specifying the planned diagnosis and handling methods to protect against bias. |

Handling Strategies Decoded: From Complete Case to Advanced Imputation in ANOVA

Troubleshooting Guides & FAQs

Q1: My ANOVA results change drastically when I switch from listwise to pairwise deletion. Which method should I trust? A: This suggests that your data may not be Missing Completely At Random (MCAR). Both listwise and pairwise deletion require the MCAR assumption to produce unbiased results, and pairwise deletion additionally leads to inconsistent sample sizes and complex standard error calculations. You should first perform a missing data pattern analysis (e.g., Little's MCAR test). If MCAR is violated, consider advanced methods like Multiple Imputation.

Q2: I used pairwise deletion in my repeated measures ANOVA, but the software returned an error about incompatible matrices. What went wrong? A: Pairwise deletion creates different covariance matrices for each pair of variables, which can lead to non-positive definite matrices or computational failures in repeated measures models. Most statistical software requires complete data or a single covariance matrix for these analyses. Protocol: To diagnose, run a missing data pattern report. For resolution, switch to listwise deletion for the affected analysis or use a Mixed Model approach that can handle unbalanced data more robustly.

Q3: After using listwise deletion, my sample size became too small, and I lost statistical power. What are my options? A: Listwise deletion removes any case with a single missing value, which can rapidly deplete your sample. Protocol:

- Quantify the loss: Calculate the percentage of complete cases.

- If >60% of data is retained and MCAR is plausible, listwise may be acceptable but with noted caution in reporting.

- If power is critically low, you must abandon simple deletion methods. The recommended path is to implement Multiple Imputation (MI) or Full Information Maximum Likelihood (FIML) to retain all available data and preserve power.
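As a rough illustration of the "quantify the loss" step, the following Python sketch counts complete cases with pandas. The data frame and its column names are hypothetical.

```python
import numpy as np
import pandas as pd

# Hypothetical toy data: three outcome columns with scattered missing values.
df = pd.DataFrame({
    "group": ["A", "A", "B", "B", "C", "C"],
    "y1": [1.0, np.nan, 2.0, 2.5, 3.0, 3.5],
    "y2": [0.5, 0.7, np.nan, 1.1, 1.3, 1.2],
    "y3": [4.0, 4.2, 4.1, 4.4, np.nan, 4.8],
})

# A case is complete only if every column is observed.
complete_mask = df.notna().all(axis=1)
pct_complete = 100 * complete_mask.mean()

# Per-variable missingness shows where the losses come from.
pct_missing_by_variable = 100 * df.isna().mean()

print(f"Complete cases: {pct_complete:.1f}%")
print(pct_missing_by_variable.round(1))
```

In this toy example each variable is missing only about 17% of its values, yet listwise deletion would discard half of the cases.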

Q4: How do I formally test the MCAR assumption before choosing a deletion method? A: Conduct Little's Missing Completely at Random (MCAR) test. Experimental Protocol:

- Data Preparation: Organize your dataset with all variables included.

- Software Execution: In statistical software (e.g., SPSS, R with the `naniar` or `BaylorEdPsych` package), run Little's MCAR test.

- Interpretation: A non-significant p-value (p > 0.05) suggests the data may be MCAR, supporting the use of listwise deletion. A significant p-value (p < 0.05) indicates data is not MCAR, invalidating the key assumption for listwise deletion.

- Reporting: Always report the result of this test when justifying your missing data handling method.

Comparison of Deletion Methods

Table 1: Pros and Cons of Listwise vs. Pairwise Deletion

| Feature | Listwise Deletion (Casewise) | Pairwise Deletion (Available Case) |

|---|---|---|

| Ease of Use | Default in most software; simple. | Often requires specific commands. |

| Sample Size | Consistent across all analyses but smallest. | Varies for each calculated statistic; inefficient. |

| Key Assumption | Missing Completely at Random (MCAR). | Also MCAR; estimates are generally biased under MAR. |

| Risk of Bias | High bias if MCAR is violated. | Can introduce bias, especially with non-ignorable missingness. |

| Output Consistency | Produces a single, coherent analysis sample. | Can lead to incompatible correlation/covariance matrices. |

| Statistical Power | Lowest, due to reduced N. | Higher than listwise, but complex to estimate true power. |

Table 2: Assumptions and Diagnostic Checks

| Assumption | Listwise Deletion | Pairwise Deletion | Diagnostic Check Protocol |

|---|---|---|---|

| Missingness Mechanism | MCAR | MCAR (estimates are generally biased under MAR). | 1. Little's MCAR test. 2. Examine patterns via logistic regression (missing indicator on key variables). |

| Independence | Cases are independent. | Cases are independent. | Review study design (no repeated measures/clustering). |

| Parameter Stability | Complete cases are a random subsample. | Pairwise estimates come from the same population. | Compare means/variances of variables across different missing patterns. |

Workflow Diagram

Title: Decision Flowchart for Handling Missing Data in ANOVA

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Missing Data Analysis

| Item / Solution | Function in Experimental Context |

|---|---|

| Statistical Software (R/Python/SPSS/SAS) | Platform to perform deletion methods, diagnostic tests (Little's MCAR), and advanced imputation. |

| `mice` Package (R) / scikit-learn (Python) | Primary tool for implementing Multiple Imputation by Chained Equations (MICE), the gold-standard alternative to deletion. |

| `naniar` Package (R) | Specialized toolkit for visualizing missing data patterns and structures before choosing a handling method. |

| `BaylorEdPsych` Package (R) | Contains function for conducting Little's MCAR test in R. |

| Pre-Registration Template | Document to specify planned missing data handling protocol a priori, reducing bias in method choice. |

| Sensitivity Analysis Script | Code to re-run analyses under different missingness assumptions (e.g., MAR vs. MNAR) to test result robustness. |

Frequently Asked Questions (FAQs)

Q1: When should I choose mean substitution over median substitution for my ANOVA dataset? A: Use mean substitution when your data is normally distributed and the missingness is minimal (<5%). Use median substitution when your data is skewed or contains outliers, as the median is robust to extreme values. Both methods assume data is Missing Completely at Random (MCAR).

Q2: Why is LOCF criticized in longitudinal clinical trial analysis for ANOVA? A: LOCF assumes the last observed value remains constant, which often introduces bias. It can underestimate change in progressive diseases (e.g., Alzheimer's) and overestimate stability in conditions with improvement (e.g., post-treatment recovery). It violates the ANOVA assumption of independence if the missingness is related to the outcome.

Q3: How do simple imputation methods affect the statistical power and Type I error rate in ANOVA? A: Both mean/median substitution and LOCF reduce variance artificially, inflating Type I error rates (false positives). They treat imputed values as real data, which underestimates standard errors. This can lead to an increased risk of concluding a significant treatment effect when none exists.
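The variance shrinkage described in this answer is easy to verify numerically. The Python sketch below uses simulated values for a single hypothetical group; all numbers are illustrative only.

```python
import numpy as np

rng = np.random.default_rng(0)
y = rng.normal(loc=10.0, scale=2.0, size=40)  # hypothetical single-group outcome
y_missing = y.copy()
y_missing[:8] = np.nan                        # 20% of values set to missing

observed = y_missing[~np.isnan(y_missing)]
# Mean substitution: every missing value becomes the observed mean.
y_mean_imputed = np.where(np.isnan(y_missing), observed.mean(), y_missing)

var_observed = observed.var(ddof=1)
var_imputed = y_mean_imputed.var(ddof=1)

# Naive standard errors of the mean, treating imputed values as real data.
se_observed = np.sqrt(var_observed / observed.size)
se_imputed = np.sqrt(var_imputed / y_mean_imputed.size)

print(f"variance: observed {var_observed:.3f}, mean-imputed {var_imputed:.3f}")
print(f"SE of mean: observed {se_observed:.3f}, mean-imputed {se_imputed:.3f}")
```

The imputed values add nothing to the sum of squares but do increase the apparent sample size, so both the variance and the standard error shrink even though no new information was collected.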

Q4: Can I use these methods if more than 10% of my data is missing? A: It is strongly discouraged. With >10% missing data, simple imputation methods lead to significant bias and invalid ANOVA results. Consider multiple imputation or maximum likelihood estimation, which account for the uncertainty of the imputed values.

Q5: What diagnostic checks should I perform after using mean/median imputation? A:

- Re-check distribution: Ensure imputation hasn't created a spike at the mean/median, distorting normality.

- Compare group variances: Use Levene's test to check if homogeneity of variance assumption still holds.

- Conduct a sensitivity analysis: Compare ANOVA results with a complete-case analysis to gauge the magnitude of potential bias.

Troubleshooting Guides

Issue: Significant ANOVA result disappears after using more robust imputation.

- Problem: Mean/Median/LOCF imputation likely created artificial patterns, making groups appear more different than they are.

- Solution: The robust result is more trustworthy. Report the analysis with the advanced method (e.g., multiple imputation) and disclose the bias inherent in the simple method.

Issue: Large spike in histogram at the imputed value (mean/median).

- Problem: This distorts the distribution and violates ANOVA's normality assumption.

- Solution: Add a "missingness indicator" variable (0/1 for observed/imputed) to your model as a covariate to partially control for bias. Consider using stochastic regression imputation (adding random error) instead of pure mean substitution.

Issue: Applying LOCF in a repeated-measures ANOVA creates implausible constant trajectories.

- Problem: LOCF ignores natural progression or treatment effect over time.

- Solution: Model the time trend directly using a mixed-model ANOVA (linear mixed effects model), which handles missing data under the Missing at Random (MAR) assumption without need for LOCF.

Issue: Different conclusions from median vs. mean imputation in my skewed data.

- Problem: The mean is influenced by skew/outliers. The choice of imputation statistic is driving the result.

- Solution: Use the median. Perform a non-parametric test (e.g., Kruskal-Wallis) on the imputed dataset as a robustness check, or use a method designed for non-normal data.

Data Presentation

Table 1: Comparison of Simple Imputation Methods for ANOVA

| Feature | Mean/Median Substitution | Last Observation Carried Forward (LOCF) |

|---|---|---|

| Primary Assumption | Missing Completely at Random (MCAR) | Missing data pattern is non-informative; last value is a good proxy. |

| Effect on Variance | Artificially reduces within-group variance. | Artificially reduces variance over time. |

| Effect on ANOVA F-statistic | Tends to inflate, increasing Type I error. | Often inflates, increasing Type I error. |

| Data Context | Cross-sectional, single time point. | Longitudinal, repeated measures. |

| Handling of MNAR | Poor. Creates severe bias. | Poor. Creates severe bias. |

| Ease of Implementation | Very easy. | Easy for monotonic missingness. |

| Recommended Missing Threshold | <5%, only if MCAR is plausible. | Generally not recommended; use only if justified by study design. |

Table 2: Impact of 5% MCAR Data Imputation on One-Way ANOVA Simulation (n=50 per group)

| Imputation Method | Average F-statistic | Type I Error Rate (α=0.05) | Power (Effect size=0.4) |

|---|---|---|---|

| Complete Case (True) | 1.01 | 0.051 | 0.89 |

| Mean Substitution | 1.22 | 0.082 | 0.91 |

| Median Substitution | 1.19 | 0.078 | 0.90 |

| LOCF (Longitudinal Sim) | 1.35 | 0.104 | 0.93 |

Experimental Protocols

Protocol 1: Diagnostic Workflow for Applying Mean/Median Imputation in Pre-ANOVA Data Screening

- Define Missingness Pattern: Use Little's MCAR test or pattern plots. Proceed only if MCAR is not rejected (p > 0.05) and missingness <5%.

- Choose Central Tendency: For each variable, test normality (Shapiro-Wilk). If p ≥ 0.05, use the mean. If p < 0.05 (skewed), use the median.

- Execute Imputation: Create a copy of the dataset. For each variable with missingness, replace `NA` with the calculated mean or median of the observed values for that variable within the same experimental group (critical for ANOVA).

- Post-Imputation Diagnostic:

- Generate histograms or density plots for each variable to check for spikes.

- Re-run normality and homogeneity of variance tests (e.g., Levene's) on the imputed dataset.

- Flag any major deviations from assumptions.
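The imputation step of Protocol 1 might look like the following in Python with pandas. This is a minimal sketch under assumed column names (`group`, `y`), not a complete screening pipeline.

```python
import numpy as np
import pandas as pd

# Hypothetical data: one outcome, two experimental groups, one gap per group.
df = pd.DataFrame({
    "group": ["ctrl"] * 4 + ["drug"] * 4,
    "y": [1.0, 2.0, np.nan, 3.0, 10.0, np.nan, 12.0, 50.0],
})

# Group-wise statistics, broadcast back to every row of the group.
group_mean = df.groupby("group")["y"].transform("mean")
group_median = df.groupby("group")["y"].transform("median")

imputed = df.copy()                       # work on a copy, as the protocol advises
imputed["was_missing"] = df["y"].isna()   # record which cells were filled
imputed["y_mean"] = df["y"].fillna(group_mean)
imputed["y_median"] = df["y"].fillna(group_median)

print(imputed)
```

Keeping the `was_missing` flag makes the post-imputation diagnostics straightforward, because spikes in a histogram can be traced back to the filled cells. In the `drug` group the outlier pulls the mean well above the median, which is why the protocol switches to the median for skewed variables.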

Protocol 2: Implementing and Evaluating LOCF in a Longitudinal Clinical Trial Context

- Data Structure: Ensure data is in "long format" with columns: SubjectID, TimePoint, Outcome, TreatmentGroup.

- Sorting: Sort data by `SubjectID`, then by `TimePoint` (ascending).

- LOCF Algorithm: For each `SubjectID`, identify rows where `Outcome` is missing. For each missing row, fill the `Outcome` with the last non-missing `Outcome` value from a previous time point for the same subject. If no prior observation exists, the value remains missing.

- Analysis & Sensitivity: Conduct a repeated-measures ANOVA on the LOCF dataset. As a mandatory sensitivity analysis, conduct a linear mixed-effects model on the original, non-imputed data, which uses all available data under an MAR assumption. Compare conclusions.
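The sorting and carry-forward steps of Protocol 2 can be sketched in Python with pandas as follows. The column names follow the protocol; the values are invented.

```python
import numpy as np
import pandas as pd

# Hypothetical long-format trial data, deliberately unsorted.
long = pd.DataFrame({
    "SubjectID": [2, 1, 1, 2, 1, 2],
    "TimePoint": [1, 1, 2, 2, 3, 3],
    "Outcome": [np.nan, 5.0, np.nan, 7.0, np.nan, np.nan],
    "TreatmentGroup": ["B", "A", "A", "B", "A", "B"],
})

# Sort by subject, then by time, so "last observation" is well defined.
locf = long.sort_values(["SubjectID", "TimePoint"]).reset_index(drop=True)

# Forward-fill within each subject only; values never cross subjects.
locf["Outcome_LOCF"] = locf.groupby("SubjectID")["Outcome"].ffill()

print(locf)
```

Subject 1 ends up with a flat trajectory of 5.0 across all three visits, while the first visit of subject 2 stays missing because there is no earlier observation to carry forward.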

Mandatory Visualization

Decision Flowchart for Simple Imputation in ANOVA

LOCF Method Visualization in a Clinical Trial

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Imputation & Analysis Context |

|---|---|

| Statistical Software (R/Python) | Primary environment for performing missing data diagnostics, implementing imputation algorithms, and running ANOVA models. |

| `mice` R package / scikit-learn Python | Provides functions for advanced imputation but also includes tools for diagnosing missingness patterns and comparing simple vs. multiple imputation results. |

| `naniar` R package | Specialized for visualizing missing data patterns, essential for determining if MCAR assumptions are plausible before simple imputation. |

| `lme4` R package / statsmodels Python | Fits linear mixed-effects models, the recommended alternative to LOCF for repeated measures ANOVA with missing data. |

| Sensitivity Analysis Script | Custom code to run ANOVA under different missing data assumptions (e.g., complete-case, mean-imputed, multiple-imputed) to assess result stability. |

| Data Validation Dashboard | A Shiny (R) or Dash (Python) app to allow collaborators to visually inspect data distributions pre- and post-imputation. |

Troubleshooting Guides & FAQs

Q1: After performing Multiple Imputation (MI) and running ANOVA in R, I encounter the error "Error in anova.mira(): model objects contain different response variables." What causes this and how do I fix it?

A: This error typically occurs when the with() function, used to run ANOVA on each imputed dataset, generates model objects where the formula or variable names are not perfectly identical across all imputations. To resolve this:

- Ensure the model formula is defined as a string or formula object outside the `with()` call and passed explicitly.

- Check that factor levels are consistent across all imputed datasets. Use `lapply()` to apply `factor()` with consistent `levels=` arguments to your grouping variables in all imputed datasets.

- Explicitly specify the data argument within the model call inside `with()`.

Example Protocol:

Q2: My pooled ANOVA results from mice show implausibly small p-values or enormous F-statistics. What is the likely issue?

A: This is often a result of incorrect pooling. The pool() function from the mice package is designed for linear models (lm) and generalized linear models. Standard ANOVA tables from aov objects may not pool correctly. The solution is to fit the model using lm() and then perform ANOVA on the pooled results.

Detailed Methodology:

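- Impute your data using `mice()`.

- Fit a linear model to each imputed dataset using `lm()` (not `aov`).

- Pool the linear model fits using `pool()` from the `mice` package to obtain coefficient-level estimates in a `mipo` (Multiple Imputation Pooled Output) object.

- For the omnibus test of a factor, fit a reduced model without that factor in the same way and compare the two fits with `D1()` (multivariate Wald test) or `D3()` (likelihood-ratio test) from `mice`. These functions operate on the fitted `mira` objects rather than on the pooled `mipo` object.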
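To make the pooling step less of a black box, here is a minimal Python sketch of Rubin's rules for a single coefficient. It is not the `mice` implementation: `pool_rubin` is a hypothetical helper, the inputs are invented, and `mice` reports a small-sample adjusted degrees of freedom, so its output can differ from the classic formula used here.

```python
import numpy as np

def pool_rubin(estimates, variances):
    """Pool one scalar parameter across m imputed datasets with Rubin's rules.

    Assumes the estimates are not all identical (between-imputation variance > 0).
    """
    q = np.asarray(estimates, dtype=float)
    u = np.asarray(variances, dtype=float)  # squared standard errors
    m = q.size
    q_bar = q.mean()                 # pooled point estimate
    u_bar = u.mean()                 # within-imputation variance
    b = q.var(ddof=1)                # between-imputation variance
    t = u_bar + (1 + 1 / m) * b      # total variance
    r = (1 + 1 / m) * b / u_bar      # relative increase in variance
    df = (m - 1) * (1 + 1 / r) ** 2  # classic Rubin degrees of freedom
    return {
        "estimate": q_bar,
        "se": float(np.sqrt(t)),
        "df": df,
        "lambda": (1 + 1 / m) * b / t,  # share of variance due to missingness
    }

# Hypothetical group-difference estimates from m = 5 imputed datasets.
pooled = pool_rubin([1.0, 1.2, 0.8, 1.1, 0.9], [0.04] * 5)
print(pooled)
```

The total variance adds the between-imputation spread to the average within-imputation variance. Averaging p-values or F-statistics across imputed datasets skips that step, which is one way to end up with the implausibly small p-values described in this question.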

Q3: How do I check the convergence and quality of my imputation model before proceeding to ANOVA?

A: Always perform diagnostic checks on the imputation process.

- Trace Plots: Use `plot(imputed_object)` to visually inspect the mean and standard deviation of imputed values across iterations. Stable, well-mixing chains indicate convergence.

- Density Plots: Compare the distribution of observed (blue) and imputed (red) data using `densityplot(imputed_object)`. The distributions should be similar, suggesting the imputation model is plausible.

- Stripplots: For categorical data, use `stripplot(imputed_object)` to see if imputed values are within plausible ranges.

Protocol for Imputation Diagnostics:

Data Presentation: Simulation Study on MI-ANOVA Performance

Table 1: Comparison of Type I Error Rates (α=0.05) for One-Way ANOVA Under Different Missing Data Mechanisms (MCAR, MAR)

| Imputation Method | Missing Percentage | MCAR Error Rate | MAR Error Rate |

|---|---|---|---|

| Listwise Deletion | 10% | 0.048 | 0.061 |

| Single Imputation (Mean) | 10% | 0.032 | 0.115 |

| Multiple Imputation (m=20) | 10% | 0.049 | 0.052 |

| Listwise Deletion | 30% | 0.045 | 0.082 |

| Single Imputation (Mean) | 30% | 0.021 | 0.174 |

| Multiple Imputation (m=20) | 30% | 0.051 | 0.055 |

Table 2: Statistical Power (1-β) for Detecting a Medium Effect Size (f=0.25)

| Imputation Method | Missing Percentage (MCAR) | Power (2 Groups) | Power (3 Groups) |

|---|---|---|---|

| Complete Data (No Missing) | 0% | 0.78 | 0.81 |

| Multiple Imputation (m=20) | 15% | 0.75 | 0.77 |

| Multiple Imputation (m=20) | 30% | 0.68 | 0.70 |

| Single Imputation (Regression) | 30% | 0.61 | 0.63 |

Experimental Protocols

Protocol 1: Full Workflow for MI-ANOVA with Interaction Effects

- Preprocessing: Scale continuous variables if necessary. Declare factors.

- Missing Data Pattern: Use `md.pattern()` or `vis_miss()` to visualize.

- Imputation: Use `mice()` with predictive mean matching (`pmm`) for continuous variables and logistic regression for factors (`logreg` for binary factors, `polyreg` for unordered factors with more than two levels). Set `m=20` and `maxit=10`. Set a seed for reproducibility.

- Diagnostics: Generate trace plots and density plots as described in FAQ 3.

- Model Fitting: Use `with()` to apply `lm()` specifying the full factorial model (e.g., `lm(DV ~ FactorA * FactorB + Covariate)`).

- Pooling & Inference: Pool results with `pool()`. Obtain pooled parameter estimates and confidence intervals using `summary(pooled_fit, conf.int=TRUE)`. For ANOVA-style tests of a whole factor or interaction, compare nested models fitted with `with()` using `D1()` or `D3()`, since the pooled `mipo` object holds coefficient-level results only.

- Post-hoc Analysis: Use `pool()` on contrasts calculated from each imputed model (e.g., using `emmeans`).

Protocol 2: Validating Imputation Model for a Preclinical Study

- Create Simulation: From a complete preclinical dataset, introduce MAR values in the primary endpoint based on a secondary biomarker's value.

- Impute: Apply the proposed `mice` model.

- Analyze: Run the planned ANOVA on the imputed datasets and pool.

- Benchmark: Compare the pooled estimates (means, group differences, p-values) to the results from the original complete data.

- Metric: Calculate the bias and root mean square error (RMSE) for key parameters. Confirm that the 95% confidence interval coverage is near 95%.
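The first and last steps of this protocol (creating MAR missingness and scoring bias and RMSE) can be sketched in Python with NumPy. Everything here is simulated and hypothetical: the variable names, the 0.6 and 0.1 missingness probabilities, and the helper `rmse` are illustrative assumptions, and the imputation step itself is left to the `mice` model under test.

```python
import numpy as np

rng = np.random.default_rng(42)
n = 2000
biomarker = rng.normal(size=n)                   # fully observed secondary biomarker
endpoint = 2.0 * biomarker + rng.normal(size=n)  # complete primary endpoint ("truth")

# MAR: the chance that the endpoint is missing depends only on the observed biomarker.
p_missing = np.where(biomarker > 0, 0.6, 0.1)
is_missing = rng.random(n) < p_missing
endpoint_amputed = np.where(is_missing, np.nan, endpoint)

# Benchmark: how far does a naive complete-case estimate drift from the truth?
true_mean = endpoint.mean()
complete_case_mean = np.nanmean(endpoint_amputed)
bias = complete_case_mean - true_mean

def rmse(estimates, truth):
    """Root mean square error of repeated estimates around a known true value."""
    estimates = np.asarray(estimates, dtype=float)
    return float(np.sqrt(np.mean((estimates - truth) ** 2)))

print(f"missing: {is_missing.mean():.1%}, complete-case bias: {bias:.3f}")
```

Because high-biomarker subjects drop out more often and also have higher endpoint values, the complete-case mean is pulled downward. Pooled estimates from a well-specified imputation model that includes the biomarker should sit much closer to `true_mean`; repeating the procedure over many simulated datasets gives the estimates to pass to `rmse`.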

Mandatory Visualization

Title: MI-ANOVA Analysis Workflow

Title: Decision Tree for Handling Missing Data Before ANOVA

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software & Packages for MI-ANOVA Implementation

| Item | Function | Example (R) |

|---|---|---|

| Statistical Software | Provides the computational environment for data manipulation, analysis, and visualization. | R (≥4.0), Python (SciPy/StatsModels), SAS, SPSS |

| Multiple Imputation Package | Core engine for creating the 'm' complete datasets using sophisticated algorithms. | mice, Amelia, mi (R); statsmodels.imputation (Python) |

| Linear Modeling Function | Fits the ANOVA model as a linear regression to each imputed dataset, allowing for correct pooling. | lm() (R), ols() (Python statsmodels) |

| Pooling Function | Combines parameter estimates and standard errors from 'm' models using Rubin's rules. | pool() (mice), summary() on mira object |

| Diagnostic Plotting Tool | Visualizes imputation convergence and distributional plausibility. | plot.mids(), densityplot() (mice) |

| Post-Hoc Analysis Tool | Calculates and pools contrasts, pairwise comparisons, or marginal means after ANOVA. | emmeans with pool() (R) |

Technical Support & Troubleshooting Center

FAQ 1: My software returns an error stating "Model failed to converge" when using FIML for my ANOVA model with missing data. What are the primary causes?

- Answer: This is commonly caused by:

- Insufficient Sample Size: FIML requires adequate data. With high rates of missingness or small N, the likelihood function may be poorly defined.

- Non-Positive Definite Hessian Matrix: This often indicates redundant parameters, extreme collinearity among variables, or a misspecified model.

- Solution Protocol: First, increase your sample size if possible. Second, simplify your model by removing non-essential interaction terms. Third, check the patterns of missingness (MCAR, MAR, MNAR) using Little's test or pattern analysis, as FIML assumes MAR. Re-evaluate your model specification.

FAQ 2: How do I validate that the MAR (Missing at Random) assumption holds for my FIML analysis?

- Answer: Direct statistical proof is impossible, but you can perform sensitivity analyses.

- Experimental Protocol for Sensitivity Analysis:

- Create Dummy Indicators: For each variable with missingness, generate a binary variable (0=observed, 1=missing).

- Logistic Regression: Regress these dummy indicators on other observed variables in your dataset (and, if plausible, on the observed values of the variable itself).

- Interpretation: Significant predictors suggest the missingness is systematically related to other observed data, supporting a plausible MAR mechanism. If you suspect a key unobserved variable drives missingness, your assumption may be violated (MNAR).


FAQ 3: What are the key performance differences between Listwise Deletion, Multiple Imputation (MI), and FIML in the context of ANOVA-type models?

- Answer: See the quantitative comparison table below.

Table 1: Comparison of Missing Data Methods for Linear Models

| Method | Efficiency (Use of Data) | Bias Under MAR | Standard Error Accuracy | Handling Auxiliary Variables |

|---|---|---|---|---|

| Listwise Deletion | Low - uses only complete cases | Can be high | Valid for the reduced sample, but larger because information is discarded | Difficult |

| Multiple Imputation (MI) | High | Low with proper model | Accurate (reflects imputation uncertainty) | Straightforward |

| Full Information ML (FIML) | High | Low | Accurate | Can be complex |

FAQ 4: Can I use FIML with categorical dependent variables or non-normal data in my experimental design?

- Answer: Yes, but the standard normal-theory FIML may be inappropriate. You must use the appropriate variant:

- For categorical outcomes, use FIML with a weighted least squares (WLSMV) estimator.

- For general non-normal continuous data, use FIML with robust standard errors (e.g., the MLR estimator in Mplus or lavaan; lavaan's `MLM` estimator is for complete data only) or consider bootstrapping.

- Protocol: Always assess multivariate normality before proceeding. Use Mardia's test or graphical methods. If violated, specify the correct estimator in your software.

FAQ 5: How do I report FIML results in a publication to ensure reproducibility?

- Answer: Your methods section must include:

- The amount and patterns of missing data for all key variables (use a table).

- The statistical software and specific estimator used (e.g., "Mplus 8.9 using FIML with the MLR estimator").

- A justification for the MAR assumption, referencing any sensitivity analyses conducted.

- The full model specification, including all estimated parameters.

Visual Guides

FIML Analytical Workflow

Methods for Handling Missing Data

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Missing Data Research |

|---|---|

| Statistical Software (Mplus, lavaan in R) | Provides robust FIML estimation for complex models (ANOVA, SEM, mixed models). Essential for implementation. |

| Normality Test Suites (e.g., MVN in R) | Diagnoses deviations from multivariate normality, guiding the choice of correct FIML estimator (ML vs. MLR). |

| Missing Data Pattern Libraries (e.g., `mice` in R) | Visualizes and analyzes patterns (MCAR/MAR/MNAR) prior to FIML, informing assumption plausibility. |

| Sensitivity Analysis Scripts | Customizable code to test how results vary under different missingness mechanisms (e.g., selection models). |

| High-Performance Computing (HPC) Cluster Access | Facilitates bootstrapping and complex simulation studies to validate FIML performance for specific experimental designs. |

Frequently Asked Questions (FAQs)

Q1: Why does the mice function in R produce different results each time I run it, even with the same seed?

A1: The `mice` function uses random sampling for imputation. Setting a seed (e.g., `set.seed(12345)`, or the `seed` argument of `mice()`) makes a run reproducible, but results will still differ if the order of operations, the number of imputations (m), or the number of iterations (maxit) changes between runs. Always specify the seed immediately before the `mice()` call and use the same m and maxit parameters.

Q2: In SPSS, my pooled results from multiple imputation show "NaN" for the p-value. What does this mean? A2: This typically occurs when the between-imputation variance is large relative to the within-imputation variance, leading to a negative or extremely large total variance in Rubin's rules for pooling. It indicates that the imputation model may be unstable or misspecified. Check your imputation model—ensure it includes all analysis variables and relevant auxiliary variables.

Q3: My clinical trial dataset has a monotone missing pattern due to patient dropout. Should I use a different method in mice?

A3: You can keep using `mice`, with some adjustments. Predictive mean matching (`method = "pmm"`) remains a flexible default, and `method = "norm"` (Bayesian linear regression, implemented in `mice.impute.norm`) is an option for approximately normal continuous variables. For a truly monotone pattern, the variables can be imputed in order of increasing missingness, for example by setting `visitSequence = "monotone"`, in which case a single pass (`maxit = 1`) is sufficient. Note that the `where` argument controls which cells are imputed; it does not define the missingness pattern.

Q4: How do I decide on the number of imputations (m) for my clinical trial ANOVA analysis?

A4: The old rule of m=3-5 is insufficient for valid statistical inference. Current recommendations, based on the fraction of missing information (FMI), suggest m should be at least equal to the percentage of incomplete cases. For clinical trials, aiming for m=20-100 is now standard to achieve stable estimates and adequate power. Use the mice::pool() function in R to check the FMI; if it's high, increase m.
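The rule of thumb in this answer translates into a short calculation. In the Python sketch below, `suggested_m` and `relative_efficiency` are hypothetical helper names, and the floor of 20 imputations is an assumption taken from the lower end of the range quoted above. The efficiency formula is Rubin's classic 1 / (1 + FMI / m).

```python
import math

def suggested_m(n_incomplete_cases, n_total, minimum=20):
    """Number of imputations: at least the percentage of incomplete cases."""
    pct_incomplete = 100 * n_incomplete_cases / n_total
    return max(minimum, math.ceil(pct_incomplete))

def relative_efficiency(fmi, m):
    """Efficiency of m imputations relative to infinitely many, for a given FMI."""
    return 1 / (1 + fmi / m)

# Hypothetical trial: 54 of 200 participants have at least one missing value.
m = suggested_m(54, 200)
print("suggested m:", m)
print("efficiency at m=5: ", round(relative_efficiency(0.3, 5), 3))
print("efficiency at m=27:", round(relative_efficiency(0.3, m), 3))
```

Relative efficiency is already high at small m, which is where the old m=3-5 advice came from. The larger values recommended today are aimed at stable standard errors and p-values rather than at the point estimate alone.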

Q5: After pooling ANOVA results in R using pool(), how do I obtain a traditional ANOVA table with F-statistics and p-values?

A5: The `pool()` function returns a `mipo` object. Use the `summary()` function on this object, specifying `type = "all"`, `conf.int = TRUE`, and `exponentiate = FALSE`. This will provide a dataframe with estimates, standard errors, statistics (t-values), degrees of freedom, p-values, and confidence intervals for each model term. Note that the statistic is a t-statistic, not an F-statistic. For an F-type test of a whole factor or of the overall model, compare your model to a reduced or null model fitted on the same imputations using `D1()`; the older `pool.compare()` is deprecated in recent `mice` versions.

Troubleshooting Guides

Issue: Convergence Problems in `mice` (R)

Symptoms: Trace plots (plot(imp)) show no convergence, or imputed values exhibit large swings across iterations.

Solution Steps:

- Increase the number of iterations: `maxit = 20` (default is 5).

- Check your predictor matrix: Use `make.predictorMatrix()` and ensure categorical variables are coded as factors. Remove perfectly collinear predictors.

- Simplify the imputation model: Start with a small set of key variables, then add auxiliary variables.

- Change the imputation method: For continuous variables, try `method = "norm"` instead of `"pmm"`.

- After adjustments, rerun and re-inspect trace plots.

Issue: Unable to Pool Custom ANOVA Tests in R

Symptoms: Error when trying to pool() results from aov() or other ANOVA functions.

Solution Steps:

- Always fit your model to the multiply imputed datasets using `with()`: `fit <- with(imp, lm(Y ~ Group + Time + Group*Time))`.

- For Type III sums of squares (common in clinical trials with unbalanced data), use the `car::Anova()` function inside `with()`.

- Then pool the specific coefficients or results. Note: Direct pooling of full `anova` objects may not work. You often need to extract the parameter estimates (from the linear model) and their variance-covariance matrices for pooling.

Issue: SPSS Multiple Imputation Pooling Fails for Repeated Measures ANOVA

Symptoms: The "Pool" button is greyed out after running GLM Repeated Measures on imputed datasets.

Solution Steps:

- Ensure you have run the imputation via `Analyze > Multiple Imputation > Analyze Patterns` and then `Analyze > Multiple Imputation > Impute Missing Data Values` (or used the `MULTIPLE IMPUTATION` command syntax).

- You must run your Repeated Measures ANOVA from the syntax editor using the `MIXED` command, not via the GLM Repeated Measures dialog box. The dialog interface does not support pooling for repeated measures.

- After running `MIXED` on the imputed data, the pooled results will be automatically generated in the output viewer.

Key Data and Methodologies

Table 1: Comparison of Imputation Performance in a Simulated Clinical Trial (ANOVA Context)

| Imputation Method | Software | Bias in Treatment Effect (β) | Coverage of 95% CI | Relative Efficiency |

|---|---|---|---|---|

| Complete Case Analysis | N/A | 0.45 | 0.82 | 1.00 |

| Single Imputation (Mean) | SPSS/R | 0.38 | 0.85 | 0.95 |

| Multiple Imputation (m=20) | R `mice` | 0.05 | 0.94 | 0.98 |

| Multiple Imputation (m=20) | SPSS | 0.07 | 0.93 | 0.97 |

| Maximum Likelihood | R `lavaan` | 0.04 | 0.95 | 0.99 |

Note: Data simulated for a 2-arm trial with 15% monotone missingness in the primary endpoint. Bias is the absolute difference from the true simulated effect. Coverage is the proportion of confidence intervals containing the true effect.

Experimental Protocol: Validating the MI Model in `mice`

Objective: Assess the plausibility of imputations for a primary efficacy variable (e.g., HbA1c change from baseline) in a two-group clinical trial.

Procedure:

- Amputation: From a complete dataset (or a subset with no missing data in the target variable), intentionally introduce missing values under a Missing at Random (MAR) mechanism related to a baseline covariate (e.g., age).

- Imputation: Apply the intended `mice` imputation model (e.g., `mice(amp_data, m=20, method=c("", "pmm", "logreg",...), maxit=10)`).

- Comparison: Compute the mean, variance, and covariance structure of the imputed datasets and compare them to the original, complete data.

- Diagnostic: Generate stripplots (`stripplot(imp)`) and density plots (`densityplot(imp)`) to visually assess the distribution of observed vs. imputed values. Imputed values should form plausible continuations of the observed data distribution.

Visualizations

Title: MI Implementation Workflow for Clinical Trial ANOVA

Title: Variable Relationships & Missing Data Mechanisms

The Scientist's Toolkit: Research Reagent Solutions

| Item | Software/Package | Function in MI for ANOVA |

|---|---|---|

| Multiple Imputation by Chained Equations (MICE) Engine | R: `mice` package | Core algorithm for creating multiple imputed datasets by iteratively modeling each variable conditionally on the others. |

| Pooling & Analysis Suite | R: `miceadds` package | Extends `mice` for complex analyses (e.g., survey designs, cluster-robust standard errors), often needed in trial data. |

| Convergence Diagnostic Tool | R: `mice::plot.mids()` | Generates trace plots of mean and variance across iterations to assess MCMC convergence of the imputation algorithm. |

| Flexible Modeling Interface | R: `with.mids()` function | Allows any statistical model (e.g., `lm()`, `glm()`, `lmer()`) to be fit to each imputed dataset seamlessly. |

| Multivariate Imputation & Variance Estimation | SPSS: `MULTIPLE IMPUTATION` command | SPSS's native framework for generating imputations and automatically pooling results from certain procedures. |

| Sensitivity Analysis Package | R: `miceMNAR` package | Facilitates exploration of Missing Not at Random (MNAR) scenarios, critical for robustness checks in clinical trials. |

| Seed Management | Base R: `set.seed()` | Ensures the reproducibility of the stochastic imputation process, a mandatory step for audit trails. |

Pitfalls and Best Practices: Optimizing Your Approach to Missing Data

Technical Support Center: Missing Data in ANOVA Experiments

Troubleshooting Guides & FAQs

Q1: After running my ANOVA, I received an error stating "missing values and NaNs are not allowed." What are my immediate steps?

A1: This indicates your statistical software detected missing values. Do not use the na.omit() function and proceed as if the data were always complete. First, diagnose the pattern. Use a Missing Completely at Random (MCAR) test (e.g., Little's test). If the test is non-significant (p > 0.05), the data may be MCAR, but single imputation is still not recommended. The correct step is to use a method that accounts for uncertainty, such as Multiple Imputation (MI) or a maximum likelihood approach directly in your ANOVA model (e.g., lmer() with FIML in R).

Q2: I used mean imputation for a few missing values in my treatment group. My ANOVA results show significant effects. Are these results valid? A2: No. Using mean imputation and analyzing the dataset as complete data invalidates your results. Mean imputation artificially reduces variance, distorts correlations, and biases standard errors downward, inflating Type I error rates (false positives). Your reported p-values are likely overly optimistic. You must re-analyze the data using proper methods and report the imputation as part of the method.

Q3: What is the practical difference between reporting "data were analyzed using ANOVA" vs. "we used Multiple Imputation by Chained Equations (MICE) to handle missing data, followed by pooled ANOVA results"? A3: The first statement is misleading if any imputation was performed and implies the data were complete, compromising scientific integrity. The second statement is methodologically transparent. It informs reviewers that you accounted for the uncertainty of the missing values, leading to valid standard errors and pooled p-values, which increases the credibility of your findings.

Q4: For a randomized controlled trial with missing outcome measures, is it acceptable to use last observation carried forward (LOCF) before ANOVA? A4: LOCF is a single imputation method strongly discouraged in modern guidelines (e.g., FDA, ICH E9). It makes unrealistic assumptions about patient outcomes and introduces bias. Preferred methods include Mixed Models for Repeated Measures (MMRM), which uses all available data without imputation, or Multiple Imputation under a plausible missing not at random (MNAR) scenario for sensitivity analysis.

Q5: How do I justify my choice of missing data handling method in a drug development regulatory submission? A5: Your statistical analysis plan (SAP) must pre-specify the method. Justification should be based on the assumed missing data mechanism (MCAR, MAR, MNAR). For primary analysis under the MAR assumption, MI or MMRM is standard. You must also include a sensitivity analysis (e.g., using delta-adjusted MI or reference-based imputation) to assess the robustness of conclusions under MNAR, as recommended by the FDA's Guidance for Industry: E9 Statistical Principles for Clinical Trials and the ICH E9 (R1) addendum on estimands.
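The idea behind a delta-adjusted sensitivity analysis can be sketched as follows: imputed values in one arm are shifted by a fixed amount delta, and the treatment effect is recomputed to see how large a departure from MAR would be needed to change the conclusion. This is a simplified Python illustration on a single completed dataset with made-up numbers; in practice the shift would be applied within each imputed dataset and the results pooled. The function name and all data values are hypothetical.

```python
import statistics


def delta_adjusted_difference(treat_obs, treat_imputed, control, delta):
    """Mean difference (treatment - control) after shifting the imputed
    treatment values by delta. delta = 0 reproduces the MAR-based result."""
    shifted = [v + delta for v in treat_imputed]
    return statistics.fmean(treat_obs + shifted) - statistics.fmean(control)


# Hypothetical data: six observed and two imputed treatment outcomes.
treat_obs = [5.1, 6.0, 5.5, 6.4, 5.8, 6.2]
treat_imputed = [5.7, 5.9]
control = [4.0, 4.6, 4.2, 4.9, 4.4, 4.1, 4.7, 4.3]

# Scan a grid of increasingly pessimistic shifts for the drop-outs.
results = {}
for delta in (0.0, -1.0, -2.0, -4.0):
    results[delta] = delta_adjusted_difference(
        treat_obs, treat_imputed, control, delta
    )
    print(f"delta={delta:+.1f}  treatment-control difference={results[delta]:.3f}")
```

The delta at which the effect loses significance or changes sign is often called the tipping point; reporting it lets reviewers judge whether such a departure from MAR is clinically plausible.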

Summarized Quantitative Data: Impact of Single vs. Multiple Imputation on ANOVA

Table 1: Simulation Results Comparing Methods for Handling Missing Data (20% MAR) in a One-Way ANOVA

| Imputation Method | Average F-statistic | True Type I Error Rate (α=0.05) | Coverage of 95% CI | Average Standard Error (Effect) |
|---|---|---|---|---|
| Complete Case Analysis | 4.12 | 0.048 | 0.949 | 1.21 |
| Mean Imputation | 6.85 | 0.112 | 0.812 | 0.98 |
| Regression Imputation (Single) | 5.93 | 0.089 | 0.881 | 1.05 |
| Multiple Imputation (M=50) | 4.23 | 0.051 | 0.947 | 1.24 |

Note: Based on 10,000 simulations under a true null hypothesis. MAR: Missing at Random. M = number of imputed datasets. A Type I error rate above the nominal 0.05 indicates inflated false positives.

Table 2: Key Reagent Solutions for Proteomics Studies Prone to Missing Data (Missing Not at Random - MNAR)

| Reagent / Material | Function in Context of Missing Data |
|---|---|
| Stable Isotope Labeling by Amino Acids in Cell Culture (SILAC) | Enables multiplexing; provides internal controls that can help distinguish technical missingness (low abundance) from true biological absence. |
| Data-Independent Acquisition (DIA) Buffers/Kits | DIA (e.g., SWATH-MS) produces more consistent, contiguous spectra vs. DDA, reducing missing values caused by stochastic sampling. |
| Isotope-Coded Protein Label (ICPL) or TMT/iTRAQ Reagents | Multiplex labeling reduces run-to-run variation, a major source of missing values, by comparing samples within the same MS run. |
| High-Affinity, Cross-Linking Solid-Phase RIPA Lysis Buffer | Improves solubilization of membrane proteins, reducing missing values for hydrophobic proteins prone to precipitation and loss. |
| Protease Inhibitor Cocktail (Broad Spectrum) | Prevents protein degradation during preparation, minimizing missing values due to random peptide loss from digestion variability. |

Experimental Protocol: Multiple Imputation and Pooled ANOVA

Title: Protocol for Implementing and Reporting Multiple Imputation with ANOVA.

Objective: To correctly handle missing data in a multi-group experimental design and obtain valid inferences.

Software: R with mice and car packages, or SPSS with its MI module.

Procedure:

- Data Preparation & Diagnostics: Code missing values as NA. Explore patterns using md.pattern() or VIM::aggr().

- Specify Imputation Model: Use the mice() function. Include all variables in the analysis model (outcome, grouping factors, covariates) plus any auxiliary variables correlated with missingness or the outcome. Use predictive mean matching (method = 'pmm') for continuous data or logistic regression for binary data. Set the number of imputations, m (typically 20-100).

- Perform ANOVA on Each Dataset: Fit a linear model (e.g., aov()) to each of the m completed datasets.

- Pool Results: Use Rubin's rules to combine the m sets of results (estimates and standard errors) into a single set of estimates, variances, and p-values.

- Report: In the manuscript methods, state: "Missing data (X%) were addressed using Multiple Imputation with m=50 imputations via the MICE algorithm in R. The imputation model included [list variables]. Results from the m analyses were pooled using Rubin's rules." Present the pooled F-statistic, degrees of freedom, p-value, and effect size (e.g., η²).
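The pooling step can be illustrated for a single scalar quantity, such as one group contrast. The sketch below applies Rubin's rules in plain Python; the function name pool_rubin and the example numbers are assumptions for illustration. Multi-degree-of-freedom ANOVA F-tests are usually pooled with dedicated multi-parameter statistics (for example, the D1 procedure in the mice package) rather than by averaging F-values.

```python
import math
import statistics


def pool_rubin(estimates, variances):
    """Pool m point estimates and their squared standard errors
    using Rubin's rules for a scalar parameter."""
    m = len(estimates)
    q_bar = statistics.fmean(estimates)      # pooled point estimate
    u_bar = statistics.fmean(variances)      # within-imputation variance
    b = statistics.variance(estimates)       # between-imputation variance
    total = u_bar + (1 + 1 / m) * b          # total variance
    if b == 0:
        df = math.inf                        # no between-imputation variability
    else:
        r = (1 + 1 / m) * b / u_bar          # relative increase in variance
        df = (m - 1) * (1 + 1 / r) ** 2      # Rubin's (1987) degrees of freedom
    return {
        "estimate": q_bar,
        "se": math.sqrt(total),
        "within": u_bar,
        "between": b,
        "df": df,
    }


# Hypothetical contrast estimates and squared SEs from m = 5 imputed datasets.
pooled = pool_rubin(
    estimates=[2.0, 2.4, 2.2, 2.6, 2.3],
    variances=[0.25, 0.25, 0.25, 0.25, 0.25],
)
print(pooled)
```

The pooled standard error is larger than the within-imputation standard error alone, which is exactly the extra uncertainty that single imputation ignores.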

Diagram Title: Multiple Imputation Workflow for ANOVA

Diagram Title: Consequences of Single Imputation on Statistical Inference

How Much is Too Much? Guidelines for Missing Data Tolerability in ANOVA

Technical Support Center

FAQ: General Tolerability & Mechanisms

Q1: What is the maximum allowable percentage of missing data for an ANOVA to still be considered valid? A: There is no universal threshold, as tolerability depends on the mechanism. However, quantitative simulations provide practical guidelines. The table below summarizes key tolerability findings from recent literature:

Table 1: Guidelines for Missing Data Tolerability in ANOVA

| Missing Mechanism | "Too Much" Threshold (General Guideline) | Critical Factor | Recommended Action if Exceeded |
|---|---|---|---|
| Missing Completely at Random (MCAR) | Up to 10% | Loss of statistical power. | Consider direct deletion (listwise) if sample size remains adequate. |
| Missing at Random (MAR) | As low as 5% can bias estimates. | Magnitude of systematic difference between missing and observed groups. | Use multiple imputation or maximum likelihood estimation. |
| Missing Not at Random (MNAR) | Any non-trivial amount (>0%) is problematic. | The unmeasured cause of missingness itself. | Conduct sensitivity analyses (e.g., pattern-mixture models). |

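Q2: How do I determine if my data are Missing at Random (MAR) or Missing Not at Random (MNAR)? A: Conduct a systematic diagnostic workflow. Formal tests (e.g., Little's MCAR test) can only indicate whether MCAR is plausible; MAR and MNAR cannot be distinguished from the observed data alone, so a logical assessment of why values are missing is also required.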

Diagram Title: Diagnostic Workflow for Missing Data Mechanisms
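One simple check in such a workflow is to compare an observed covariate between subjects whose outcome is missing and subjects whose outcome is observed. The Python sketch below does this with a Welch t statistic; the variable names and values (a baseline severity score) are hypothetical. A large difference argues against MCAR and suggests missingness depends on an observed variable, which is consistent with MAR, but it cannot rule out MNAR.

```python
import math
import statistics


def split_by_missingness(outcome, covariate):
    """Return covariate values for rows with missing vs. observed outcome."""
    cov_missing = [c for y, c in zip(outcome, covariate) if y is None]
    cov_observed = [c for y, c in zip(outcome, covariate) if y is not None]
    return cov_missing, cov_observed


def welch_t(a, b):
    """Welch's t statistic for the difference in means of two samples."""
    var_a, var_b = statistics.variance(a), statistics.variance(b)
    standard_error = math.sqrt(var_a / len(a) + var_b / len(b))
    return (statistics.fmean(a) - statistics.fmean(b)) / standard_error


# Hypothetical data: outcome is missing mostly for high baseline severity.
outcome = [3.1, None, 2.8, 3.5, None, 3.0, None, 2.9, 3.3, 3.2]
severity = [2, 8, 3, 1, 9, 2, 7, 3, 1, 2]

cov_missing, cov_observed = split_by_missingness(outcome, severity)
t_stat = welch_t(cov_missing, cov_observed)
print(f"mean severity (outcome missing):  {statistics.fmean(cov_missing):.2f}")
print(f"mean severity (outcome observed): {statistics.fmean(cov_observed):.2f}")
print(f"Welch t statistic: {t_stat:.2f}")
```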

Troubleshooting Guide: Experiment Execution

Issue: Unexpected animal drop-out in a longitudinal drug efficacy study analyzed with repeated-measures ANOVA. Protocol for Handling: Implement a pre-planned mixed-effects model analysis, which is more robust to missing data under the MAR assumption than traditional repeated-measures ANOVA.

- Record All Details: Document the exact timing and plausible cause (e.g., unrelated illness, technical error) for each subject drop-out.

- Mechanism Diagnosis: If drop-out is unrelated to the drug's effect (e.g., accidental cage injury), classify as likely MCAR. If drop-out is related to initial health status (measured), classify as likely MAR. If drop-out is suspected to be due to severe adverse drug reaction (unmeasured), suspect MNAR.

- Analysis Selection:

- If MCAR/MAR: Use a linear mixed model (LMM). This uses all available data points without imputation.

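- If MNAR is plausible: Fit the primary LMM, then conduct a sensitivity analysis using a pattern-mixture model for the drop-outs and compare results. Carrying the last observed value forward (LOCF) is one simple scenario to examine, but it assumes that a subject's outcome would not have changed after drop-out, so treat it as one scenario among several rather than as the definitive answer.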

The Scientist's Toolkit: Key Reagent Solutions for Robust Analysis

Table 2: Essential Tools for Managing Missing Data in Experimental Analysis

| Tool / Reagent | Function in Context | Example/Note |
|---|---|---|
| Statistical Software (R/Python) | Platform for advanced missing data analysis. | R: mice package for Multiple Imputation. Python: statsmodels (MICE via statsmodels.imputation.mice). |
| Multiple Imputation Package | Creates multiple plausible datasets to account for uncertainty in imputed values. | mice in R, Amelia in R, scikit-learn IterativeImputer in Python. |
| Maximum Likelihood Estimation | Directly estimates model parameters using all available data. | Implemented in lme4 (R) for mixed models, or MANOVA with EM in SPSS. |
| Sensitivity Analysis Scripts | Tests how conclusions vary under different MNAR scenarios. | Custom scripts to compare results from MNAR-adjusted models (e.g., selection models). |
| Pre-Registration Protocol | Documents planned handling of missing data before experimentation, reducing bias. | Template from OSF or clinical trial registries. |

FAQ: Analysis Implementation

Q3: I have 8% missing values in a multi-factor ANOVA. Can I just use mean imputation? A: No. Mean imputation is strongly discouraged at any percentage. It artificially reduces variance, biases standard errors, and violates ANOVA assumptions. Use multiple imputation or full information maximum likelihood instead.

Q4: What is the step-by-step protocol for performing Multiple Imputation before a two-way ANOVA? A: Follow this detailed methodology using common statistical software.

Table 3: Protocol for Multiple Imputation Prior to ANOVA

| Step | Action | Rationale |
|---|---|---|
| 1. Diagnose & Explore | Run descriptive stats to visualize missing patterns (use md.pattern() in R). | Identifies which variables have missing data and possible monotone patterns. |
| 2. Configure Imputation Model | Include ALL variables in the analysis model plus auxiliary variables related to missingness. Use predictive mean matching (PMM) for continuous data. | Ensures the imputation model is at least as general as the analysis model, preserving relationships. |
| 3. Generate Imputed Datasets | Create m imputed datasets (typically m=20-50). Set a random seed for reproducibility. | The number m should be high enough to achieve stable estimates and high relative efficiency. |
| 4. Perform ANOVA on Each Dataset | Run your planned two-way ANOVA independently on each of the m completed datasets. | Obtains m slightly different sets of parameter estimates (e.g., F-statistics, p-values). |
| 5. Pool Results | Use Rubin's rules to combine the m sets of results into a single set of estimates, standard errors, and p-values. | Correctly accounts for the between- and within-imputation variance. |
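The choice of m in step 3 can be guided by Rubin's relative efficiency approximation, 1 / (1 + γ/m), where γ is the fraction of missing information. The short Python sketch below evaluates it for a few values of m; the value γ = 0.3 is an assumption chosen for illustration, and the function name is hypothetical.

```python
def relative_efficiency(fmi, m):
    """Approximate efficiency of m imputations relative to infinitely many,
    given the fraction of missing information fmi (between 0 and 1)."""
    return 1.0 / (1.0 + fmi / m)


# Assumed fraction of missing information for illustration.
fmi = 0.3
efficiency_by_m = {m: relative_efficiency(fmi, m) for m in (5, 20, 50)}
for m, eff in efficiency_by_m.items():
    print(f"m={m:>2}: relative efficiency = {eff:.4f}")
```

Efficiency for the point estimate is already high at small m; larger m is recommended mainly because it stabilizes standard errors and p-values across repeated runs of the imputation.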

Diagram Title: Multiple Imputation Workflow for ANOVA

Technical Support Center

Troubleshooting Guides & FAQs

Q1: My multiple imputation model yields implausible values (e.g., negative concentrations) for my laboratory assay data. How can I constrain the imputations? A: This indicates the default linear regression model is inappropriate for bounded data. Use a specialized imputation method that respects the data's distribution.

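- Protocol: In R with the mice package, specify the method argument for the variable in the mice() call. For a strictly positive variable, use method="pmm" (predictive mean matching), which imputes only values that were actually observed and therefore cannot produce negative concentrations; use method="logreg" for binary outcomes. Other options sometimes suggested for bounded concentrations are method="quadratic" or method="ml.lognorm" (the mice.impute.quadratic and mice.impute.ml.lnorm functions); confirm that these are available in your installed version and appropriate for bounded data before relying on them, as they require careful parameterization.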
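To show why predictive mean matching keeps imputations plausible, here is a deliberately simplified Python sketch of the donor-matching idea using one predictor. It is not the mice implementation: full PMM also draws the regression parameters from their posterior, which is omitted here. The function name pmm_impute, the dose and concentration values, and the choice of k = 3 donors are all illustrative assumptions.

```python
import random
import statistics


def pmm_impute(x, y, k=3, rng=None):
    """Fill None entries of y by sampling an observed y from the k donors
    whose regression-predicted values are closest to the target's prediction."""
    rng = rng or random.Random(0)
    obs = [(xi, yi) for xi, yi in zip(x, y) if yi is not None]
    xs = [xi for xi, _ in obs]
    ys = [yi for _, yi in obs]

    # Ordinary least squares fit of y on x using the observed rows only.
    mean_x, mean_y = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((xi - mean_x) ** 2 for xi in xs)
    slope = sum((xi - mean_x) * (yi - mean_y) for xi, yi in obs) / sxx
    intercept = mean_y - slope * mean_x

    def predict(value):
        return intercept + slope * value

    donors = [(predict(xi), yi) for xi, yi in obs]
    completed = []
    for xi, yi in zip(x, y):
        if yi is not None:
            completed.append(yi)
            continue
        target = predict(xi)
        nearest = sorted(donors, key=lambda d: abs(d[0] - target))[:k]
        completed.append(rng.choice(nearest)[1])  # borrow an observed value
    return completed


# Hypothetical assay: concentration rises with dose; two readings are missing.
dose = [0, 1, 2, 3, 4, 5, 6, 7]
conc = [None, 0.4, 1.1, None, 3.2, 4.1, 4.8, 6.0]

completed = pmm_impute(dose, conc, k=3, rng=random.Random(1))
observed_values = {v for v in conc if v is not None}
print(completed)
```

In this example the fitted line predicts a negative concentration at dose 0, but the imputed value is still positive because it is copied from a real observation.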

Q2: After including many auxiliary variables from my electronic lab notebook (ELN), the imputation model fails to converge or becomes computationally unstable. What is wrong? A: This is often caused by high collinearity among auxiliary variables or including variables with too many missing values themselves.

- Diagnostic Protocol: Before imputation, perform these steps:

- Calculate the missingness pattern for all candidate auxiliary variables.

- Remove any variable where >40% of its values are missing.

- Calculate a correlation matrix for the remaining continuous variables.

- If the absolute correlation between any pair exceeds 0.9, remove one of them.

- Solution: Use principal component analysis (PCA) on the auxiliary variable set to create a smaller number of uncorrelated synthetic variables for inclusion in the imputation model.
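The screening steps in the diagnostic protocol above can be sketched in plain Python. The 40% missingness and 0.9 correlation cut-offs come from the protocol; the function names, the greedy keep-first strategy for correlated pairs, and the example ELN variables are assumptions for illustration. Correlations are computed on pairwise-complete observations, and constant columns are not handled.

```python
import math


def missing_fraction(column):
    """Share of entries in a column that are None."""
    return sum(v is None for v in column) / len(column)


def pearson(a, b):
    """Pearson correlation using rows where both values are present."""
    pairs = [(x, y) for x, y in zip(a, b) if x is not None and y is not None]
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    sxx = sum((x - mean_x) ** 2 for x, _ in pairs)
    syy = sum((y - mean_y) ** 2 for _, y in pairs)
    return sxy / math.sqrt(sxx * syy)


def screen_auxiliary(columns, max_missing=0.40, max_abs_corr=0.90):
    """Drop variables with too much missingness, then greedily drop any
    variable that is highly correlated with one already selected."""
    kept = [name for name, col in columns.items()
            if missing_fraction(col) <= max_missing]
    selected = []
    for name in kept:
        if all(abs(pearson(columns[name], columns[other])) <= max_abs_corr
               for other in selected):
            selected.append(name)
    return selected


# Hypothetical auxiliary variables exported from an ELN.
columns = {
    "temp_c": [20.0, 21.0, 22.0, 23.0, 24.0, 25.0],
    "temp_f": [68.0, 69.8, 71.6, 73.4, 75.2, 77.0],  # duplicate information
    "ph": [7.1, 6.9, 7.4, 7.0, 7.3, 6.8],
    "batch_age": [None, None, None, 4.0, 5.0, 2.0],  # 50% missing
}
selected = screen_auxiliary(columns)
print(selected)
```

Which member of a correlated pair is dropped depends on column order here; in practice, keep the variable that is more complete or more strongly related to the outcome.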

Q3: How do I verify that the inclusion of auxiliary variables has actually made the Missing at Random (MAR) assumption more plausible for my ANOVA dataset? A: Direct proof is impossible, but sensitivity can be assessed through a "pattern-mixture" approach.

- Experimental Protocol: