Mastering Dual-Agent Kinetic Models: A Comprehensive Guide to Parameter Fitting for Drug Development

This article provides a comprehensive guide to fitting parameters in dual-agent kinetic models, a critical task in modern combination therapy development.


Abstract

This article is a practical guide to fitting parameters in dual-agent kinetic models, a critical task in modern combination therapy development. Aimed at researchers and drug development professionals, it covers the foundational principles of synergistic and antagonistic drug interactions, step-by-step approaches to model implementation with current software tools, common troubleshooting and optimization challenges, and validation frameworks. Together, these four themes give scientists the practical knowledge needed to quantify drug interactions, optimize combination regimens, and translate preclinical models into clinical trial designs, with the aim of accelerating the development of effective multi-drug therapies.

Understanding the Core: What Are Dual-Agent Kinetic Models and Why Are Their Parameters Crucial?

Welcome to the Technical Support Center for Dual-Agent PK-PD Modeling. This resource is designed to assist researchers navigating the complexities of fitting and applying dual-agent kinetic-dynamic models, a core methodological pillar in contemporary combination therapy research.

Troubleshooting Guides & FAQs

Q1: During model fitting, my parameter estimates for drug interaction (α or ψ) are highly unstable or hit computational boundaries. What are the common causes and solutions? A: This is a frequent issue in dual-agent PK-PD parameter research. Common causes and solutions are:

- Cause 1: Insufficient data density around the interaction region (e.g., only a few dose combinations tested).

- Solution: Redesign the experiment to include more dose combinations, specifically around the suspected IC50 values of each drug alone and in combination. Use an efficient experimental design like a checkerboard assay.

- Cause 2: Structural identifiability problem—the model is over-parameterized for the available data.

- Solution: Simplify the interaction model (e.g., switch from a complex synergistic model to a simpler Loewe Additivity or Bliss Independence model for initial fitting). Consider fixing better-identified primary PK or single-agent PD parameters to literature values.

- Cause 3: Poor initial parameter guesses leading to convergence to a local minimum.

- Solution: Implement a global optimization algorithm (e.g., particle swarm, genetic algorithm) alongside standard nonlinear mixed-effects modeling (NONMEM, Monolix) or nonlinear least squares to explore the parameter space.

Q2: How do I pharmacologically validate that my fitted model parameters for synergy/antagonism are biologically plausible? A: Parameter validation is critical for thesis credibility. Follow this protocol:

- In Silico Prediction: Use the fitted model to predict the outcome of a new, untested dose combination within the experimental range.

- In Vitro/In Vivo Validation: Conduct the experiment using the predicted dose combination.

- Comparison: Compare the observed effect (e.g., tumor volume reduction, viral load) with the model prediction. For a plausible model, the observed value should fall within the 95% prediction interval, which accounts for residual variability as well as parameter uncertainty. A significant deviation suggests over-fitting or an incorrect model structure.

Q3: My dual-agent PK model fits the plasma concentration data well, but the linked PD effect model fails to capture the response time course. Where should I troubleshoot? A: This indicates a potential disconnect between the PK "driver" and the PD response.

- Check: The inclusion and parameterization of an effect compartment (biophase) in your PK-PD link. The delay in effect is often modeled using a hypothetical effect-site compartment with a first-order rate constant (k_e0).

- Protocol to Estimate ke0:

- Assume a direct link between plasma concentration and effect initially.

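- Plot observed effect (E) against plasma concentration (C), connecting the points in time order. A counterclockwise hysteresis loop is consistent with a delay between plasma concentration and effect, such as distribution to the effect site.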

- Incorporate an effect compartment into your model. The effect compartment concentration (Ce) is driven by plasma concentration (Cp): dCe/dt = ke0 * (Cp - Ce).

- Link Ce to the PD model (e.g., Emax, Sigmoid Emax). Fit ke0 alongside PD parameters. The hysteresis loop should collapse when plotting E vs. Ce.
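The effect-compartment step above can be sketched numerically. This is a minimal illustration, not a fitting routine: it assumes a one-compartment IV bolus plasma profile, and all function names and parameter values (`c0`, `k_el`, `ke0`, `emax`, `ec50`) are hypothetical choices made for the example.

```python
import math

def plasma_conc(t, c0=10.0, k_el=0.3):
    """Plasma concentration Cp(t) for a one-compartment IV bolus (illustrative assumption)."""
    return c0 * math.exp(-k_el * t)

def effect_site_conc(times, ke0, dt=0.001):
    """Integrate dCe/dt = ke0 * (Cp - Ce) with explicit Euler steps, starting from Ce(0) = 0.

    `times` must be increasing; returns Ce at each requested time.
    """
    ce, step, out = 0.0, 0, []
    for target in times:
        n_target = round(target / dt)  # count steps as integers to avoid float drift
        while step < n_target:
            ce += dt * ke0 * (plasma_conc(step * dt) - ce)
            step += 1
        out.append(ce)
    return out

def emax_effect(conc, emax=100.0, ec50=2.0):
    """Simple Emax model linking a driving concentration to effect."""
    return emax * conc / (ec50 + conc)

times = [0.5, 1, 2, 4, 8, 12]
ce_values = effect_site_conc(times, ke0=0.5)
for t, ce in zip(times, ce_values):
    cp = plasma_conc(t)
    # Early on Ce lags below Cp; late on Ce exceeds Cp. That lag is what
    # produces the loop in E vs. Cp, while E vs. Ce is a single curve.
    print(f"t={t:>4} h  Cp={cp:6.3f}  Ce={ce:6.3f}  E={emax_effect(ce):6.2f}")
```

In a real analysis ke0 would be estimated together with the PD parameters rather than fixed, and the Euler integrator would normally be replaced by the ODE solver of the modeling software.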

Q4: What are the best practices for quantitatively comparing different dual-agent interaction models (e.g., Loewe vs. Bliss) for my dataset? A: Use a rigorous model selection framework. The table below summarizes key quantitative metrics.

Table 1: Metrics for Comparing Dual-Agent PK-PD Model Fit

| Metric | Formula / Principle | Interpretation in Model Selection |

|---|---|---|

| Akaike Information Criterion (AIC) | AIC = 2k - 2ln(L) | Lower is better. Balances model fit (L: likelihood) with complexity (k: parameters). Penalizes over-fitting. |

| Bayesian Information Criterion (BIC) | BIC = k*ln(n) - 2ln(L) | Lower is better. Stronger penalty for complexity than AIC, preferred for larger sample sizes (n). |

| Visual Predictive Check (VPC) | Graphical overlay of percentiles of observed data vs. model-simulated data. | A good model will have observed percentiles (e.g., 5th, 50th, 95th) fall within the confidence intervals of simulated percentiles. |

| Precision of Parameter Estimates | Coefficient of Variation (CV%) = (Standard Error/Estimate)*100 | CV% < 30% is generally acceptable for structural model parameters. High CV% indicates poor identifiability. |
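The formulas in Table 1 can be computed directly. The sketch below assumes a least-squares fit with Gaussian residuals, so the log-likelihood is derived from the residual sum of squares; the RSS values, parameter counts, and sample size are hypothetical numbers chosen only to show the comparison.

```python
import math

def gaussian_log_lik(rss, n):
    """Maximized Gaussian log-likelihood for a least-squares fit with n residuals."""
    return -0.5 * n * (math.log(2 * math.pi * rss / n) + 1)

def aic(k, log_lik):
    """AIC = 2k - 2 ln(L)."""
    return 2 * k - 2 * log_lik

def bic(k, n, log_lik):
    """BIC = k ln(n) - 2 ln(L)."""
    return k * math.log(n) - 2 * log_lik

def cv_percent(estimate, std_err):
    """Relative standard error of a parameter estimate, in percent."""
    return abs(std_err / estimate) * 100

n = 64  # hypothetical: one 8x8 dose matrix
models = {
    "null (additive)": {"k": 6, "rss": 0.80},
    "with interaction": {"k": 7, "rss": 0.70},
}
for name, m in models.items():
    ll = gaussian_log_lik(m["rss"], n)
    print(f"{name:18s} AIC={aic(m['k'], ll):8.2f}  BIC={bic(m['k'], n, ll):8.2f}")

print(f"CV% for estimate 2.0 with SE 0.5: {cv_percent(2.0, 0.5):.0f}%")
```

Whether k should include the residual variance is a convention; what matters is applying the same convention to every model being compared.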

Experimental Protocols

Protocol: Checkerboard Assay for Initial Interaction Parameter Estimation

- Objective: Generate robust in vitro data for quantifying drug interaction (synergy/additivity/antagonism).

- Materials: See "Scientist's Toolkit" below.

- Method:

- Plate cells in a 96-well plate and allow to adhere.

- Prepare serial dilutions of Drug A along the x-axis and Drug B along the y-axis, creating a matrix of all possible combinations.

- Treat cells and incubate for the desired period (e.g., 72h).

- Measure cell viability (e.g., via ATP quantification using a luminescent assay).

- Calculate % inhibition for each well.

- Analyze data using software like Combenefit or SynergyFinder to generate initial interaction scores (Loewe Additivity Index, Bliss Excess) which inform the PD interaction structure (α, ψ) in the PK-PD model.

Protocol: Serial Sampling for Dual-Agent PK in a Preclinical Study

- Objective: Obtain rich PK data for both agents to drive the PK component of the model.

- Method:

- Administer the combination therapy to animal subjects (e.g., mice, rats) at the planned doses (IV, IP, or PO).

- Use a sparse, serial, or terminal (destructive) sampling design across multiple animals. For example, in a 24-hour terminal-sampling study, sacrifice 3-4 animals per time point (e.g., 5 min, 15 min, 1h, 4h, 8h, 24h) and collect plasma.

- Quantify plasma concentrations for both drugs using a validated analytical method (e.g., LC-MS/MS).

- Fit a 2- or 3-compartment PK model to the concentration-time data for each drug individually before linking them in the full PK-PD model.

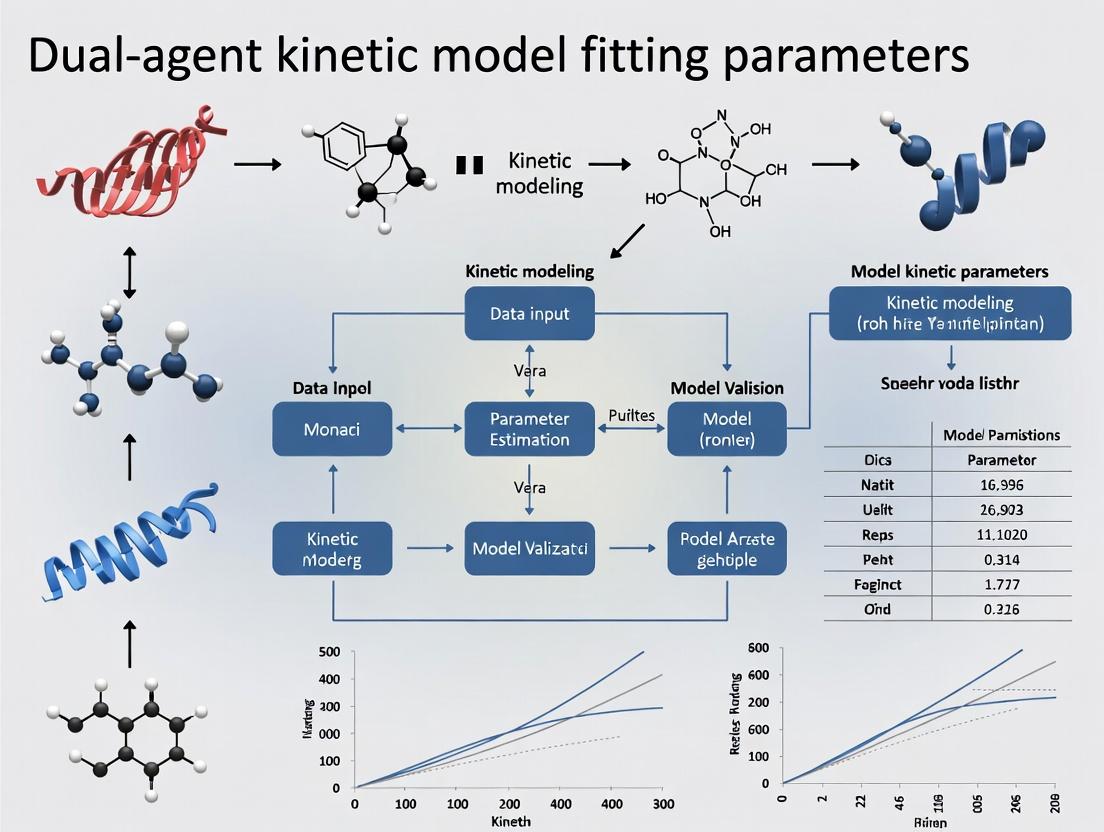

Signaling Pathway & Workflow Visualizations

Title: Logical Structure of a Dual-Agent PK-PD Model with Interaction

Title: Workflow for Dual-Agent PK-PD Model Fitting in Thesis Research

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Dual-Agent PK-PD Experiments

| Item | Function in Research | Example/Notes |

|---|---|---|

| LC-MS/MS System | Quantification of drug concentrations in biological matrices (plasma, tissue) for PK modeling. | Essential for generating precise concentration-time data for two agents simultaneously. |

| Cell Viability Assay Kit (e.g., CellTiter-Glo) | Measures PD response (cell death/proliferation) in checkerboard or time-course assays. | Luminescent ATP quantitation is preferred for sensitivity and linear range. |

| Pharmacometric Software | For non-linear mixed-effects modeling (NLME) and simulation. | NONMEM, Monolix, Phoenix NLME, or R/Python with nlmixr/Pumas. |

| Synergy Analysis Software | Initial analysis of combination data to guide PD interaction model choice. | Combenefit, SynergyFinder, or R package BIGL. |

| In Vivo Animal Model | Provides the integrated system for testing the full PK-PD relationship. | Should be clinically relevant (e.g., patient-derived xenograft for oncology). |

| Stable Isotope-Labeled Internal Standards | Ensures accuracy and precision in bioanalytical method for PK sampling. | Critical for reliable PK parameter estimation. |

Technical Support Center: Troubleshooting Guides & FAQs

This support center is designed to assist researchers working within the framework of dual-agent kinetic model fitting, a core component of modern drug combination research. The following FAQs address common experimental and analytical challenges.

FAQ 1: During the fitting of a competitive inhibition model, my estimated Ki value is inconsistent across different substrate concentrations. What could be the cause?

- Answer: This is a classic sign of non-competitive or mixed inhibition interference. A true competitive inhibitor's Ki should be constant. Please verify your experimental assumptions.

- Troubleshooting Steps:

- Re-assay Purity: Confirm the inhibitor and substrate are pure and not metabolized by the enzyme.

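- Check Model Identity: Perform a diagnostic Dixon plot (1/v vs. [I]) at multiple substrate concentrations. For a competitive inhibitor, the lines intersect above the x-axis at [I] = -Ki. Lines that intersect on the x-axis indicate pure non-competitive inhibition, and parallel lines indicate uncompetitive inhibition. Because mixed inhibition can also produce an intersection above the x-axis, confirm the mechanism with a Cornish-Bowden plot ([S]/v vs. [I]) or by comparing model fits.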

- Re-fit Data: Use a global fitting approach with a model for mixed inhibition (incorporating both Ki and α) across all substrate concentration datasets simultaneously.
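To see why a Ki estimated under a competitive assumption drifts with substrate concentration when the true mechanism is mixed, the following sketch uses the standard mixed-inhibition rate equation. The parameter values are hypothetical, and `alpha` here is the mixed-inhibition factor (alpha approaching infinity recovers the competitive case).

```python
def mixed_inhibition_rate(s, i, vmax, km, ki, alpha):
    """v = Vmax*S / (KM*(1 + I/Ki) + S*(1 + I/(alpha*Ki)))."""
    return vmax * s / (km * (1 + i / ki) + s * (1 + i / (alpha * ki)))

def ic50_mixed(s, km, ki, alpha):
    """Inhibitor concentration that halves the rate under the mixed model."""
    return ki * (km + s) / (km + s / alpha)

km, ki = 5.0, 2.0  # hypothetical values, arbitrary concentration units
for alpha in (3.0, float("inf")):
    for s in (5.0, 50.0):
        ic50 = ic50_mixed(s, km, ki, alpha)
        # Back-calculate Ki as if inhibition were purely competitive
        ki_apparent = ic50 / (1 + s / km)
        print(f"alpha={alpha:>4}  [S]={s:5.1f}  IC50={ic50:6.3f}  apparent Ki={ki_apparent:6.3f}")
```

With a finite alpha the apparent Ki falls as [S] rises, while the competitive case returns the same Ki at every substrate concentration.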


FAQ 2: My calculated IC50 shifts dramatically with changes in assay incubation time or substrate concentration. How do I report a meaningful value?

- Answer: IC50 is a conditional parameter, not an absolute constant like Ki. It is valid only under your specific assay conditions.

- Troubleshooting Guide:

- Standardize Protocol: Clearly document and fix the incubation time, substrate concentration ([S]), and enzyme concentration ([E]).

- Report Context: Always report IC50 with the exact experimental conditions: e.g., "IC50 = 150 nM at [S] = KM and 30-min pre-incubation."

- Convert to Ki: For mechanistic insight, use the Cheng-Prusoff equation (IC50 = Ki(1 + [S]/KM)) for competitive inhibitors to estimate the true binding affinity (Ki). Note this conversion is model-dependent.
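As a worked example of the Cheng-Prusoff conversion, the sketch below applies it in both directions for a competitive inhibitor. The KM value is hypothetical; the 150 nM IC50 at [S] = KM reuses the reporting example above.

```python
def ic50_competitive(ki, s, km):
    """Cheng-Prusoff: IC50 = Ki * (1 + [S]/KM) for a competitive inhibitor."""
    return ki * (1 + s / km)

def ki_from_ic50(ic50, s, km):
    """Invert Cheng-Prusoff to recover Ki (valid only for competitive inhibition)."""
    return ic50 / (1 + s / km)

km = 10.0  # hypothetical KM in uM
ki_nm = ki_from_ic50(150.0, s=km, km=km)  # IC50 of 150 nM measured at [S] = KM
print(f"Ki = {ki_nm:.0f} nM")

# The same Ki implies a different IC50 at each substrate concentration
for s in (0.1 * km, km, 10 * km):
    print(f"[S] = {s:6.1f} uM -> IC50 = {ic50_competitive(ki_nm, s, km):7.1f} nM")
```

The loop shows why an IC50 reported without its substrate concentration cannot be compared across assays.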


FAQ 3: When fitting a dose-response curve for a dual-agent combination, the interaction coefficient ψ (or α) does not converge, or the confidence interval is extremely wide.

- Answer: This indicates insufficient information content in your experimental design to inform the interaction parameter.

- Troubleshooting Steps:

- Check Design Matrix: Ensure your combination experiment includes an adequate range of doses for both drugs, singly and in combination, especially around the expected effect levels (e.g., EC50). A simple checkerboard assay is essential.

- Increase Replication: Biological variability can obscure interaction signatures. Increase replicate number (n≥3).

- Constrain Parameters: Fit the Emax and γ (Hill slope) for each agent individually from single-agent data first. Then, in the combination model, hold these parameters fixed while fitting only the interaction coefficient ψ (in Bliss or Loewe models) or α (in mechanistic PK/PD models).


FAQ 4: The Hill coefficient (γ) for my agent is >4 or <0.5. Is this biologically plausible, and how does it affect combination modeling?

- Answer: Extreme γ values indicate a very steep or shallow dose-response curve.

- Implications & Actions:

- Plausibility: Yes, it is plausible (e.g., positive cooperativity can yield γ >> 1).

- Verification: Repeat the assay with more data points along the effect transition to confirm the shape.

- Impact on Combinations: A steep curve (high γ) implies a narrow window between no effect and maximal effect. This can make additive effects (ψ ≈ 1) appear strongly synergistic if data points are sparse. It is critical to include γ in the combination model fit rather than assuming it equals 1.
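How narrow the transition window becomes can be quantified from the Hill equation: the fold-change in concentration between 10% and 90% of maximal effect is 81^(1/γ). A small sketch, with hypothetical function names:

```python
def hill_effect(conc, emax, ec50, gamma):
    """Sigmoid Emax (Hill) model."""
    return emax * conc**gamma / (ec50**gamma + conc**gamma)

def fold_window(gamma, low=0.1, high=0.9):
    """Fold-change in concentration needed to move from `low` to `high` fractional effect."""
    odds_ratio = (high / (1 - high)) / (low / (1 - low))
    return odds_ratio ** (1 / gamma)

for gamma in (0.5, 1.0, 2.0, 4.0):
    print(f"gamma={gamma:3.1f}: EC90/EC10 = {fold_window(gamma):8.1f}-fold")
```

With γ = 4 the whole transition spans about a 3-fold concentration range, so a 2-fold or 3-fold dilution series may place only one or two points on the informative part of the curve.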


FAQ 5: What is the practical difference between the Bliss independence (ψ) and the Loewe additivity (α) coefficients for quantifying drug interactions?

- Answer: They are based on different null references for "no interaction."

- Bliss Independence (ψ): Assumes drugs act via statistically independent mechanisms. ψ > 1 indicates synergy. It is preferred when mechanisms are distinct and non-interacting.

- Loewe Additivity (α): Assumes drugs act on the same pathway or are mutually exclusive. α > 0 indicates synergy. It is preferred for congeners or drugs targeting the same enzyme/receptor.

- Recommendation: Calculate both. If they disagree qualitatively, it suggests your underlying mechanistic assumptions require re-evaluation.

Table 1: Key Pharmacodynamic Parameters in Dual-Agent Modeling

| Parameter | Symbol | Definition | Typical Range | Key Interpretation |

|---|---|---|---|---|

| Inhibition Constant | Ki | Concentration yielding half-maximal occupancy at equilibrium, under no-substrate conditions. | pM - μM | True binding affinity constant. Independent of assay conditions. |

| Half-Maximal Inhibitory Concentration | IC50 | Concentration that reduces assay response by 50% under specific experimental conditions. | nM - mM | Potency metric. Condition-dependent (varies with [S], time). |

| Maximal Effect | Emax | The ceiling effect of a drug, expressed as % inhibition or fold-change. | 0-100% or 0-1 (scale dependent) | Intrinsic efficacy of the agent. |

| Hill Coefficient | γ (or nH) | Steepness of the dose-response curve. Describes cooperativity. | 0.5 - 4+ | γ=1: Michaelian; γ>1: Positive cooperativity. |

| Bliss Interaction Coefficient | ψ (psi) | Multiplicative term over expected Bliss independent effect. | 0 → ∞ | ψ = 1: Additivity; ψ > 1: Synergy; ψ < 1: Antagonism. |

| Loewe Additivity Coefficient | α (alpha) | Additive term describing dose modification in the Loewe model. | -∞ → ∞ | α = 0: Additivity; α > 0: Synergy; α < 0: Antagonism. |

Experimental Protocols

Protocol 1: Determining Ki via Enzyme Kinetics Assay

- Objective: Accurately determine the inhibition constant (Ki) and mode of inhibition for a single agent.

- Method:

- Prepare a fixed, limiting concentration of the target enzyme.

- For each inhibitor concentration ([I], include 0), perform initial velocity measurements across a range of substrate concentrations ([S]), spanning 0.2-5 x KM.

- Measure initial velocity (v) via spectrophotometry, fluorescence, or radiometry.

- Fit the global dataset to the Michaelis-Menten equation modified for competitive, uncompetitive, or mixed inhibition using non-linear regression (e.g., in GraphPad Prism).

- Key Analysis: The model with the lowest AICc that yields consistent residual plots identifies the inhibition mode. The fitted Ki is the output.

Protocol 2: Checkerboard Assay for Dual-Agent Interaction Coefficients (ψ, α)

- Objective: Quantify the interaction between two drugs using a cell-based viability assay.

- Method:

- Plate cells in 96-well or 384-well plates.

- Prepare serial dilutions of Drug A and Drug B in separate tubes.

- Using a liquid handler or multichannel pipette, add Drug A in varying concentrations along the rows and Drug B along the columns to create a matrix of all combinations, including single-agent controls and vehicle controls.

- Incubate for the determined assay period (e.g., 72h).

- Add cell viability reagent (e.g., CellTiter-Glo) and measure luminescence.

- Normalize data: %Viability = (Combo - Median(Blank)) / (Median(Vehicle Control) - Median(Blank)) * 100.

- Key Analysis: Fit normalized data to a dual-agent interaction model (e.g., Bliss Independence or Loewe Additivity model) using software like Combenefit, the R `drc` package, or custom scripts to estimate Emax, γ, and the interaction coefficient (ψ or α).
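The normalization step in this protocol can be written as a short helper. This is a sketch with made-up luminescence readings; the function name and the guard against a non-positive signal window are additions for illustration.

```python
from statistics import median

def percent_viability(raw, blanks, vehicles):
    """%Viability = (raw - median(blank)) / (median(vehicle) - median(blank)) * 100."""
    blank = median(blanks)
    vehicle = median(vehicles)
    window = vehicle - blank
    if window <= 0:
        raise ValueError("vehicle signal must exceed blank signal")
    return (raw - blank) / window * 100.0

blank_wells = [100, 110, 90]        # hypothetical medium-only wells
vehicle_wells = [1000, 1100, 1050]  # hypothetical vehicle-treated wells

viability = percent_viability(575, blank_wells, vehicle_wells)
print(f"viability = {viability:.1f}%, inhibition = {100 - viability:.1f}%")
```

Using the median rather than the mean keeps a single outlier control well from shifting every normalized value on the plate.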

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Dual-Agent Kinetic & PD Studies

| Item | Function & Application in Context |

|---|---|

| Recombinant Target Enzyme/Protein | High-purity, active protein for mechanistic Ki determination and binding studies. |

| Fluorogenic/Luminescent Substrate | Enables real-time, continuous monitoring of enzyme activity in kinetic inhibition assays. |

| Cell Line with Validated Target Expression | Relevant cellular context for measuring IC50, Emax, γ, and combination effects (ψ, α). |

| Cell Viability Assay Kit (e.g., ATP-based) | Robust, homogeneous endpoint measurement for dose-response and checkerboard combination studies. |

| Positive Control Inhibitor (Known Ki/IC50) | Validates assay performance and serves as a benchmark for new compounds. |

| DMSO (Cell Culture Grade) | Universal solvent for small molecule agents; must be kept at low, consistent concentrations (<0.5% v/v) to avoid cytotoxicity. |

| Automated Liquid Handler | Critical for accuracy and reproducibility in setting up complex checkerboard assay dilution matrices. |

| Non-Linear Regression Software (e.g., Prism, R) | Essential for fitting complex models (dose-response, kinetic, interaction) to extract Ki, IC50, Emax, γ, ψ, α. |

Visualizations

Title: Enzyme Kinetic Inhibition Pathways

Title: Dual-Agent Interaction Analysis Workflow

Technical Support Center: Troubleshooting Guides & FAQs for Dual-Agent Kinetic Model Fitting

Q1: During a combination index (CI) calculation based on the Loewe Additivity model, we are getting a CI > 10 or a CI < 0.01. Are these results valid, or is there likely an error in our dose-response data fitting?

A1: A CI value outside the typical range of 0.1 to 10 often indicates a fundamental issue with the underlying single-agent dose-response curve fits, which are critical for Loewe's reference model. The most common causes are:

- Poor fitting of the Hill equation parameters (Emax, EC50, Hill slope) for the individual drugs. An incorrectly fitted EC50 can drastically skew isobologram predictions.

- Insufficient data range: The single-agent curves must adequately define the baseline and plateau effects. If your combination dose lies in an effect region extrapolated far beyond your single-agent data, the CI calculation becomes unreliable.

Troubleshooting Protocol:

- Re-visualize Single-Agent Fits: Plot the observed data points and the fitted curve for each drug. Ensure the curve logically follows the data and the residuals are randomly distributed.

- Constrain Parameters Appropriately: If data is limited, consider constraining the Hill slope (e.g., to 1) or the Emax (to the observed maximum effect in your system) to stabilize the fit.

- Re-calculate CI with Bootstrapping: Use software (e.g., Combenefit, the R `drc` package) to perform a bootstrap analysis on your single-agent fits. This will generate a confidence interval for your CI value. If the 95% CI of your combination index still excludes 1 (e.g., [12.5, 15.2]), the interaction (strong antagonism) may be real, albeit extreme.
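The dependence of the CI on the single-agent fits is easy to see in code. The sketch below assumes each drug follows a Hill curve and computes the Loewe combination index as d_A/D_A(E) + d_B/D_B(E), where D(E) is the single-agent dose giving effect E. The parameter values are hypothetical.

```python
def dose_for_effect(effect, emax, ec50, hill):
    """Invert the Hill equation: single-agent dose that produces `effect`."""
    if not 0 < effect < emax:
        # The single agent can never reach this effect, so the CI is undefined here
        raise ValueError("effect lies outside the single-agent curve (0, Emax)")
    return ec50 * (effect / (emax - effect)) ** (1 / hill)

def combination_index(dose_a, dose_b, effect, params_a, params_b):
    """Loewe CI: <1 synergy, =1 additivity, >1 antagonism."""
    return (dose_a / dose_for_effect(effect, **params_a)
            + dose_b / dose_for_effect(effect, **params_b))

drug_a = {"emax": 1.0, "ec50": 2.0, "hill": 1.0}  # hypothetical fitted values
drug_b = {"emax": 1.0, "ec50": 8.0, "hill": 1.0}

for observed in (0.5, 0.8):
    ci = combination_index(1.0, 4.0, observed, drug_a, drug_b)
    print(f"doses (1, 4), observed effect {observed}: CI = {ci:.2f}")
```

Because D(E) grows without bound as E approaches Emax, a small error in a fitted Emax or EC50 can move the CI by orders of magnitude, which is one way values above 10 or below 0.01 arise.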

Q2: When applying the Bliss Independence model, how should we handle single-agent effects that are very low (e.g., <10% inhibition) or zero? The expected effect (E_Bliss) calculation seems to break down.

A2: This is a known limitation when effects are near the bottom asymptote. The subtraction-based Bliss excess (ΔE = Eobs - EBliss) remains defined when a single-agent effect is zero, but ratio-based metrics (e.g., Eobs / EBliss) become unstable or undefined as EBliss approaches zero, and measurement noise can dominate very small effects.

Recommended Workaround & Protocol:

- Background Correction: Ensure all effect measurements (EA, EB, E_comb) are correctly normalized to negative (vehicle) and positive (full inhibition) controls.

- Use Absolute Effects: Express effects as a fraction of the total dynamic range (from 0 to 1). Apply a minimum floor value (e.g., 0.001) if a single-agent effect is precisely zero after correction, acknowledging this as a limitation in your analysis.

- Shift to Probabilistic Framework: For quantal data (e.g., cell death yes/no), use the original probabilistic Bliss formulation: Expected Fraction Affected = (FA + FB) - (FA * FB). This is more robust.

- Report with Confidence Intervals: Perform replicates and report the Bliss excess (ΔE) with standard deviations. A ΔE of 0.05 ± 0.08 is not statistically significant, even if the calculation is technically valid.
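A minimal sketch of the probabilistic Bliss calculation with replicate-based reporting follows. Effects are fractions affected on a 0 to 1 scale, and the replicate values are invented for illustration.

```python
from statistics import mean, stdev

def bliss_expected(fa, fb):
    """Expected fraction affected under independence: FA + FB - FA*FB."""
    return fa + fb - fa * fb

def bliss_excess(f_obs, fa, fb):
    """Observed minus expected effect; positive values suggest synergy."""
    return f_obs - bliss_expected(fa, fb)

fa, fb = 0.30, 0.40                        # hypothetical single-agent effects
observed_reps = [0.62, 0.70, 0.55, 0.66]   # hypothetical combination replicates

excess = [bliss_excess(obs, fa, fb) for obs in observed_reps]
print(f"expected = {bliss_expected(fa, fb):.2f}")
print(f"Bliss excess = {mean(excess):.3f} +/- {stdev(excess):.3f} (SD, n={len(excess)})")

# A single-agent effect of exactly zero reduces the expectation to the other drug's effect
print(f"expected with FA = 0: {bliss_expected(0.0, fb):.2f}")
```

In this invented example the standard deviation is larger than the mean excess, which is the situation the last bullet warns about.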

Q3: Our higher-order model (e.g., a 3-parameter synergy model) fails to converge during nonlinear regression fitting, or returns unrealistic parameter estimates. What steps can we take?

A3: Failure to converge in complex models is typically due to overparameterization, poor initial parameter guesses, or insufficient or noisy data.

Step-by-Step Debugging Protocol:

- Simplify the Model: Start by successfully fitting the simpler Loewe or Bliss null model to your combination data matrix. Use those residuals to assess if a more complex model is truly needed.

- Grid Search for Initial Parameters: Before regression, systematically calculate the model's sum-of-squares error across a wide "grid" of possible parameter values. Use the parameter set with the lowest error as the initial guess for the nonlinear regression algorithm.

- Increase Data Resolution: Ensure you have sufficient dose combinations, especially around the EC50 regions of each drug. A full factorial (checkerboard) design of at least 4x4 is more robust than a sparse design with only a few combination points.

- Implement Parameter Constraints: Physiologically realistic constraints are essential (e.g., a multiplicative interaction coefficient such as ψ must be > 0, Hill slopes between 0.5 and 4). Constraints keep the fitting algorithm within a plausible region of parameter space.
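The grid-search step in this protocol can be sketched for a single-agent Hill model as follows. The grids, the noise-free synthetic data, and the function names are assumptions for illustration; the same pattern extends to an interaction parameter by adding one more grid.

```python
import itertools

def hill(conc, emax, ec50, gamma):
    """Sigmoid Emax (Hill) model."""
    return emax * conc**gamma / (ec50**gamma + conc**gamma)

def sse(params, doses, effects):
    """Sum of squared errors of the Hill model for one parameter set."""
    emax, ec50, gamma = params
    return sum((hill(d, emax, ec50, gamma) - e) ** 2 for d, e in zip(doses, effects))

def grid_search(doses, effects, emax_grid, ec50_grid, gamma_grid):
    """Return the grid point with the lowest SSE, for use as an initial guess."""
    candidates = itertools.product(emax_grid, ec50_grid, gamma_grid)
    return min(candidates, key=lambda p: sse(p, doses, effects))

doses = [0.1, 0.3, 1.0, 3.0, 10.0, 30.0]
effects = [hill(d, 1.0, 3.0, 2.0) for d in doses]  # synthetic, noise-free data

start = grid_search(
    doses, effects,
    emax_grid=[0.8, 1.0, 1.2],
    ec50_grid=[0.1, 0.3, 1.0, 3.0, 10.0, 30.0],  # log-spaced, spanning the dose range
    gamma_grid=[0.5, 1.0, 2.0, 4.0],             # within the constraint range above
)
print("initial guess (Emax, EC50, gamma):", start)
```

The grid only needs to land in the right basin; the nonlinear regression then refines the estimate from that starting point.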

Research Reagent Solutions Toolkit

| Item | Function in Dual-Agent Kinetic Studies |

|---|---|

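| Fluorescent Viability/Proliferation Dyes (e.g., AlamarBlue/resazurin) | Enable repeated, kinetic monitoring of cell health in response to drug combinations without requiring cell lysis. Lytic endpoint reagents such as CellTiter-Glo (CTG) are not suitable for repeated reads of the same well. |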

| Real-Time Cell Analyzer (RTCA) / Impedance Systems | Label-free, dynamic tracking of cell number, adhesion, and morphology for temporal synergy/antagonism assessment. |

| FRET-Based Apoptosis Biosensors | Quantify the kinetics of apoptotic pathway activation (e.g., caspase-3 activity) in live cells under combination treatment. |

| Phospho-Specific Antibodies for Western Blot/Flow Cytometry | Map the temporal perturbation of key signaling nodes (e.g., p-AKT, p-ERK) to infer mechanism of interaction. |

| Stable Isotope Labeling (SILAC) Reagents | For global, time-resolved proteomics to identify downstream protein expression changes driven by drug interactions. |

| Multi-Drug Combination Software (Combenefit, SynergyFinder) | Provide validated computational pipelines for applying Loewe, Bliss, and higher-order models to dose-response matrices. |

Quantitative Model Comparison Table

| Framework | Core Principle | Interaction Metric | Key Assumptions | Best For |

|---|---|---|---|---|

| Loewe Additivity | Dose additivity. One drug's dose can be replaced by an equipotent dose of another. | Combination Index (CI): <1=Synergy, =1=Additive, >1=Antagonism | Mutual exclusivity. Requires well-fitted single-agent dose-response curves. | Drugs with similar or identical molecular targets/modes of action. |

| Bliss Independence | Statistical independence. Drugs act through non-interacting pathways. | Bliss Excess (ΔE): >0=Synergy, =0=Independence, <0=Antagonism | The drugs act independently; effects are probabilistic. | Drugs with distinct, parallel mechanisms of action. |

| Zero-Interaction Potency (ZIP) | Loewe additivity on the dose-potency curve, assuming no interaction. | Δβ (delta beta) score. Deviation from the expected dose-response surface. | Conserves the shape of single-agent dose-response curves. | General use; often performs well in benchmark studies. |

| Highest Single Agent (HSA) | The expected effect of a combination is the maximum effect of each drug alone. | Excess over HSA. | Very conservative null model. | Preliminary screening to identify strong combinatory effects. |

Experimental Protocol: Time-Resolved Dose-Response Matrix for Kinetic Model Fitting

Objective: To generate data suitable for fitting dynamic drug interaction models. Workflow:

- Plate Design: Seed cells in a 96-well plate. Use columns for a serial dilution of Drug A and rows for Drug B in a full factorial (e.g., 8x8) checkerboard format. Include single-agent gradients, positive/negative controls, and vehicle controls.

- Kinetic Dosing: Use a liquid handler to add drugs simultaneously at time T=0. For staggered dosing, add Drug B at a later time point (e.g., T=6h) to a plate pre-treated with Drug A.

- Continuous Monitoring: Place plate in a pre-warmed, CO2-equilibrated multimode reader. Acquire data from your assay (e.g., fluorescence from a viability dye) every 2-4 hours for 72-96 hours.

- Data Processing: At each time point, normalize raw readings: Effect(t) = (Ctrl_neg - Read_sample(t)) / (Ctrl_neg - Ctrl_pos). Generate a dose-effect matrix for key time points (e.g., 24h, 48h, 72h).

- Model Fitting: Input each time-point's matrix into analysis software. Fit Loewe or Bliss models independently per time point to observe how the interaction (CI or ΔE) evolves over time.

Title: Kinetic Drug Combination Assay Workflow

Title: Relationship Between Drug Interaction Models

Technical Support Center

FAQ & Troubleshooting Guide for Dual-Agent Kinetic Model Fitting

Q1: During model fitting for two synergistic drugs, our parameter estimates (e.g., the interaction coefficient ψ in the Bliss Independence model) show extreme variability between experimental replicates. What could be the cause and how can we stabilize the fit? A: High variability often stems from insufficient data density across the effect surface or from poorly constrained initial parameters. Implement a two-step protocol:

- Experimental Protocol (Dose-Response Surface Mapping):

- For Drug A and Drug B, use a minimum of 4 concentrations each, arranged in a full factorial matrix (e.g., 0, IC₂₅, IC₅₀, IC₇₅).

- Use at least 4 biological replicates per combination.

- Measure the system output (e.g., cell viability, phosphorylated protein level) at multiple time points (e.g., 0h, 6h, 12h, 24h) to capture dynamics.

- Fit a baseline Hill equation to each drug's single-agent time-course data to obtain prior estimates for Emax, EC50, and slope (m).

- Computational Protocol (Global Fitting with Regularization):

- Use a global fitting algorithm (e.g., in R with `nls.lm` or Python with `lmfit`) to fit the synergy model (e.g., Loewe Additivity, Bliss Independence) to the entire 4D dataset (Dose A, Dose B, Time, Effect) simultaneously.

- Apply Bayesian regularization or soft constraints to the single-agent parameters (Emax, EC50) to keep them within physiologically plausible ranges derived from step 1.

Q2: How do we formally distinguish between synergistic, additive, and antagonistic effects when our kinetic model outputs a continuous interaction parameter? A: Statistical comparison to a null additive model is required. Use the following workflow and decision table:

- Fit your data to both a null model (assuming additivity, e.g., Loewe's) and an interaction model (e.g., incorporating a synergy parameter, β).

- Perform a model comparison using the Extra Sum-of-Squares F-test or compare Akaike Information Criterion (AIC) values.

- Calculate confidence intervals for the interaction parameter.

| Comparison Result | Statistical Threshold | Conclusion |

|---|---|---|

| Interaction model fits significantly better than null model (p < 0.05). | β (or α) 95% CI > 0 | Synergy |

| Interaction model does NOT fit better than null model (p > 0.05). | β (or α) 95% CI overlaps 0 | Additivity |

| Interaction model fits significantly better than null model (p < 0.05). | β (or α) 95% CI < 0 | Antagonism |
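The decision table can be expressed as two small helpers: one for the extra sum-of-squares F statistic and one for the confidence-interval rule. The RSS values, degrees of freedom, and interval below are hypothetical, and the sign convention (positive means synergy) follows the table.

```python
def extra_ss_f(rss_null, df_null, rss_alt, df_alt):
    """F = ((RSS0 - RSS1) / (df0 - df1)) / (RSS1 / df1), with df = n - number of parameters."""
    return ((rss_null - rss_alt) / (df_null - df_alt)) / (rss_alt / df_alt)

def classify_interaction(ci_low, ci_high, null_value=0.0):
    """Apply the table's rule to the 95% CI of the interaction parameter."""
    if ci_low > null_value:
        return "synergy"
    if ci_high < null_value:
        return "antagonism"
    return "additivity"

# Hypothetical fits to 64 observations: null model with 6 parameters, interaction model with 7
f_stat = extra_ss_f(rss_null=0.80, df_null=58, rss_alt=0.70, df_alt=57)
print(f"F(1, 57) = {f_stat:.2f}")  # compare with an F table or a statistics package

print(classify_interaction(0.12, 0.45))
print(classify_interaction(-0.10, 0.20))
```

The p-value is left to a statistics package or an F table; only the statistic itself is computed here.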

Q3: What are the essential reagents and controls for a live-cell imaging experiment tracking signaling pathway dynamics under combinatorial treatment? A: Research Reagent Solutions Table:

| Item | Function & Rationale |

|---|---|

| FUCCI (Fluorescent Ubiquitination-based Cell Cycle Indicator) Cell Line | Reports cell cycle phase (G1: red, S/G2/M: green). Controls for cell cycle-dependent drug effects. |

| FRET-based Biosensor (e.g., for AKT or ERK activity) | Reports real-time, spatially resolved signaling dynamics in single cells upon treatment. |

| Cell Viability Dye (e.g., propidium iodide) | Distinguishes true signaling modulation from cytotoxicity artifacts. |

| Phenotypic Control Inhibitors (e.g., LY294002 for PI3K, U0126 for MEK) | Validates biosensor specificity and establishes expected single-agent dynamic profiles. |

| Matrigel or Collagen Matrix | For 3D culture experiments to model tissue-level context and penetration effects. |

Q4: Our workflow for analyzing dual-agent synergy is fragmented across multiple tools. What is a robust, integrated computational pipeline? A: Follow this standardized workflow for reproducibility.

Synergy Analysis Computational Pipeline

Q5: Can you diagram the key signaling crosstalk node often implicated in targeted therapy synergy? A: A common node is the reciprocal feedback between the MAPK and PI3K/AKT pathways.

MAPK-PI3K Crosstalk and Feedback

Welcome to the technical support center for foundational single-agent PK-PD concepts, designed to support your research in dual-agent kinetic model fitting parameters. Below are troubleshooting guides, FAQs, and essential resources.

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: During single-agent PK model fitting, my two-compartment model consistently fails to converge. What are the primary causes? A: Non-convergence in two-compartment models often stems from:

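- Poor Initial Parameter Estimates: The solver cannot find the optimum from a distant starting point. Obtain rough starting values first, for example from a naive-pooled fit (all subjects' data treated as coming from one individual) or from non-compartmental analysis.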

- Data Limitations: The sampling schedule may be insufficient to characterize the distribution (α) and elimination (β) phases. Ensure you have early, mid, and late time points.

- Model Misspecification: The data may follow a three-compartment model or a non-linear elimination process. Plot log(concentration) vs. time to visually inspect the number of phases.

Q2: What is the critical difference between EC₅₀ and IC₅₀ in PD models, and how does mis-specification impact a later dual-agent model? A: EC₅₀ (half-maximal effective concentration) is the concentration producing half of a stimulatory or agonist effect, while IC₅₀ (half-maximal inhibitory concentration) is the concentration producing half-maximal inhibition. Confusing them in the single-agent foundation misrepresents the direction of the effect, and that error carries into any dual-agent model of drug-drug interactions (e.g., synergy/antagonism) built on those parameters.

Q3: My direct-effect PD model shows large residuals at peak effect ("hysteresis"). What does this indicate and how do I proceed? A: Hysteresis—a loop in the plot of effect vs. plasma concentration—indicates a temporal dissociation between PK and PD. This is a key concept for dual-agent research. The solution is to use an effect-compartment model (link model) to account for the distribution delay to the site of action.

Q4: How do I practically distinguish between competitive and non-competitive antagonism from single-agent inhibition data for future combination modeling? A: Run a functional assay with varying agonist concentrations and multiple fixed levels of your inhibitor. Analyze the data with standard Emax models.

- Competitive Antagonism: Increasing inhibitor causes a rightward shift in the agonist dose-response curve (EC₅₀ increases) with no suppression of maximal efficacy (Emax).

- Non-competitive Antagonism: Increasing inhibitor suppresses the observed Emax, with possible changes to EC₅₀.

Q5: When fitting an Emax model, should I fix the baseline (E₀) and maximum (Emax) parameters or estimate them from the data? A: It depends on your experimental design:

- Fix E₀ and Emax if your data contains clear vehicle (baseline) and saturating agonist (maximum) control arms. This increases stability.

- Estimate them if your data range does not clearly define these plateaus. However, this requires data points spanning the entire effect range and can lead to unstable fitting if not present. Inconsistent handling here will propagate error into dual-agent response surface models.

Key Experimental Protocols

Protocol 1: Establishing a Baseline PK Model for a Novel Compound

Objective: To determine the fundamental pharmacokinetic (PK) parameters (Clearance-CL, Volume-V, Half-life) for a new chemical entity (NCE) in a preclinical species. Methodology:

- Administer the NCE intravenously (IV) to ensure complete bioavailability.

- Collect serial blood samples at pre-dose, 2, 5, 15, 30 min, 1, 2, 4, 8, 12, and 24 hours post-dose (n=6-8 animals).

- Quantify plasma drug concentration using a validated LC-MS/MS method.

- Perform non-compartmental analysis (NCA) using software (e.g., Phoenix WinNonlin) to obtain primary parameters: AUC, CL, Vss, t₁/₂.

- Use NCA parameters as initial estimates for fitting a 1-, 2-, or 3-compartment model via maximum likelihood or least squares regression.
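The NCA step in Protocol 1 can be sketched in a few lines. This is a simplified illustration, assuming an IV bolus, linear trapezoidal integration, a concentration at time zero supplied by the user (in practice it is back-extrapolated), and a terminal slope taken from the last few points. The function name `nca_iv_bolus` is hypothetical, and validated software would normally be used for reporting.

```python
import numpy as np

def nca_iv_bolus(time_h, conc, dose, n_terminal=3):
    """Basic NCA for an IV bolus profile (linear trapezoidal rule)."""
    t = np.asarray(time_h, dtype=float)
    c = np.asarray(conc, dtype=float)
    dt = np.diff(t)
    # AUC and AUMC from time zero to the last observation
    auc_last = np.sum(dt * (c[1:] + c[:-1]) / 2.0)
    aumc_last = np.sum(dt * (t[1:] * c[1:] + t[:-1] * c[:-1]) / 2.0)
    # Terminal rate constant from log-linear regression on the last points
    slope, _ = np.polyfit(t[-n_terminal:], np.log(c[-n_terminal:]), 1)
    lambda_z = -slope
    # Extrapolation to infinity
    auc_inf = auc_last + c[-1] / lambda_z
    aumc_inf = aumc_last + c[-1] * t[-1] / lambda_z + c[-1] / lambda_z**2
    cl = dose / auc_inf
    mrt = aumc_inf / auc_inf  # mean residence time (IV bolus)
    return {
        "AUC_inf": auc_inf,
        "CL": cl,
        "Vss": cl * mrt,
        "t_half": np.log(2.0) / lambda_z,
    }

# Synthetic mono-exponential profile (hypothetical: dose 100, V = 10, k = 0.2 per hour)
t_demo = np.linspace(0.0, 24.0, 241)
c_demo = (100.0 / 10.0) * np.exp(-0.2 * t_demo)
nca_demo = nca_iv_bolus(t_demo, c_demo, dose=100.0)
```

The resulting CL and Vss can then serve as starting values for the compartmental fit; with sparse sampling the linear trapezoidal rule overestimates AUC in the declining phase, so a log-linear rule is often preferred there.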

Protocol 2: Characterizing a Dose-Response Relationship for PD Modeling

Objective: To generate data for fitting a sigmoidal Emax pharmacodynamic (PD) model. Methodology:

- Design a study with at least 5 dose levels (including vehicle) of the test agent, spaced logarithmically.

- Include a positive control/reference compound at its known effective dose.

- Measure the relevant biomarker or functional endpoint (e.g., enzyme activity, receptor occupancy) at the predetermined time of peak effect (Tmax).

- Plot the mean response (±SEM) versus log(dose).

- Fit the data to the model:

Effect = E₀ + (Emax * Dose^γ) / (ED₅₀^γ + Dose^γ), where γ is the Hill coefficient. Estimate parameters using non-linear regression.
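As a minimal sketch of this fit, the sigmoidal Emax equation above can be estimated with `scipy.optimize.curve_fit`. The dose levels, "true" parameters, and noise level are synthetic assumptions chosen only to make the example runnable.

```python
import numpy as np
from scipy.optimize import curve_fit

def sigmoid_emax(dose, e0, emax, ed50, gamma):
    """Effect = E0 + Emax * Dose^gamma / (ED50^gamma + Dose^gamma)."""
    dose = np.asarray(dose, dtype=float)
    return e0 + emax * dose**gamma / (ed50**gamma + dose**gamma)

# Synthetic dose-response data (vehicle plus log-spaced doses); values are hypothetical
doses = np.array([0.0, 0.1, 0.3, 1.0, 3.0, 10.0, 30.0])
true_params = (5.0, 80.0, 2.0, 1.5)  # E0, Emax, ED50, gamma
rng = np.random.default_rng(0)
response = sigmoid_emax(doses, *true_params) + rng.normal(0.0, 1.0, doses.size)

# Data-driven starting values and loose physiological bounds
p0 = [response.min(), response.max() - response.min(), 1.0, 1.0]
bounds = ([-np.inf, 0.0, 1e-6, 0.1], [np.inf, np.inf, np.inf, 10.0])
popt, pcov = curve_fit(sigmoid_emax, doses, response, p0=p0, bounds=bounds)

# Relative standard error (%) of each estimate, from the covariance matrix
rse_percent = 100.0 * np.sqrt(np.diag(pcov)) / np.abs(popt)
```

Bounding the Hill coefficient is a design choice here: with few dose levels, γ is often poorly determined and an unbounded fit can drift to implausible values.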

Data Presentation

Table 1: Comparison of Common Single-Agent PK-PD Model Structures

| Model Type | Primary Use | Key Parameters | Common Cause of Fitting Failure |

|---|---|---|---|

| Direct Link (No Hysteresis) | PK and PD change in parallel | Emax, EC₅₀, E₀ | Presence of effect delay (hysteresis) |

| Effect Compartment (Link Model) | Accounts for hysteresis (effect delay) | ke₀ (effect site rate constant) | Inadequate early PK sampling to define ke₀ |

| Indirect Response I-IV | Models stimulation/inhibition of response production or loss | kin, kout, IC₅₀/EC₅₀ | Misidentification of whether drug affects production vs. loss of response |

| Sigmoidal Emax | Standard dose/conc-response relationship | Emax, EC₅₀, Hill Coefficient (γ) | Data does not span 20-80% of effect range |

Table 2: Essential PK Parameters from Non-Compartmental Analysis (NCA)

| Parameter | Symbol | Unit | Interpretation for Dual-Agent Research |

|---|---|---|---|

| Area Under Curve | AUC | ng·h/mL | Critical for estimating exposure for later interaction studies. |

| Clearance | CL | L/h | Determines dosing rate. Changes in dual therapy indicate PK interaction. |

| Volume of Distribution | Vss | L | Informs tissue penetration. Key for predicting effect-site concentrations. |

| Terminal Half-life | t₁/₂ | h | Determines dosing frequency and time to steady-state in combination regimens. |

| Maximum Concentration | Cmax | ng/mL | Often linked to efficacy/toxicity; additive effects in combos start here. |

Visualizations

Single-Agent PK-PD Modeling Workflow

Common Pharmacodynamic Response Models

The Scientist's Toolkit: Key Research Reagent Solutions

| Item/Category | Example(s) | Primary Function in Foundational PK-PD |

|---|---|---|

| Stable Isotope Labeled Internal Standards | d₃-, ¹³C-, ¹⁵N-labeled drug analogs | Essential for accurate, reproducible quantification of drug concentrations in biological matrices (plasma, tissue) via LC-MS/MS. |

| Recombinant Target Proteins & Enzymes | Human recombinant CYP450 enzymes, kinase domains | Used in vitro to characterize drug-target binding affinity (Kd), enzyme inhibition (IC₅₀), and mechanism of action. |

| Phospho-Specific Antibodies | Anti-pERK, Anti-pAKT, Anti-pSTAT | Enable quantification of target engagement and downstream pathway modulation in cell-based PD assays. |

| Validated Biomarker Assay Kits | ELISA for cytokines, ADP-Glo Kinase Assay | Provide robust, standardized methods to measure specific PD endpoints critical for establishing the exposure-response relationship. |

| PK Modeling Software | Phoenix WinNonlin, NONMEM, Monolix | Industry-standard platforms for performing NCA, fitting complex PK-PD models, and simulating profiles—the core of parameter estimation. |

From Theory to Practice: Step-by-Step Guide to Fitting Dual-Agent Model Parameters

FAQs & Troubleshooting Guides

Q1: During a dose-ratio experiment for two synergistic inhibitors, my model fitting yields highly variable estimates for the cooperativity coefficient (α). What are the primary sources of this instability? A: High variability in α often stems from an inadequate spread of dose combinations. If all tested points cluster near the IC50 of each drug, the response surface is poorly defined. Solution: Implement a checkerboard design that includes extreme ratios (e.g., 10:1, 1:10 of Drug A:Drug B) and doses spanning from 0.1x to 10x the estimated single-agent IC50. Ensure sufficient replication (n≥4) at the corner points of the design matrix to constrain the interaction surface.

Q2: In time-course experiments for kinetic parameter estimation, how do I determine the optimal sampling frequency? A: Insufficient sampling misses critical dynamics. A preliminary experiment is essential. Protocol:

- Treat cells with a single high-concentration bolus of each agent and the combination.

- Take dense, rapid samples (e.g., every 2-5 minutes) for the first hour post-treatment, then every 15-30 minutes for up to 8-12 hours.

- Plot the raw response (e.g., phosphorylated target). The derivative of this curve informs the minimum sampling rate; sample at least 3x more frequently than the fastest observed kinetic phase (e.g., rapid initial inhibition).

Q3: My fitted parameters for a dual-agent binding model are correlated (e.g., kon and koff trade off). How can my experimental design reduce this parameter correlation? A: Parameter identifiability issues are common. Incorporate a sequential dosing strategy into your time-course design. Protocol:

- Pre-treat cells with a saturating concentration of the slower-binding Drug B for 60 minutes.

- Without washing, add a range of concentrations of Drug A.

- Measure response kinetically. This "pre-equilibration" design decouples the association rates, providing independent information on Drug A's binding parameters against a Drug B-saturated target.

Q4: How many biological replicates are needed for robust parameter estimation in these complex designs? A: For model fitting with >4 parameters, we recommend a minimum of 3 to 4 independent experimental runs. The table below summarizes guidance based on design type:

Table 1: Replication Guidelines for Parameter Estimation Experiments

| Design Type | Minimum Independent Runs (N) | Key Rationale |

|---|---|---|

| Dose-Ratio (Synergy) | 3 | To reliably distinguish synergistic (α>1) from additive (α=1) models. |

| Full Time-Course | 4 | To account for variability in cell passage status and assay plating. |

| Sequential Dosing | 4 | Increased complexity of the protocol introduces more potential technical noise. |

Q5: What are the essential controls for validating a dual-agent kinetic model? A: The following control set is mandatory for each experiment:

- Vehicle Control (Time=0 & all time points): Baseline signal.

- Single-Agent Maximum Effect: High concentration of each drug alone, full time-course.

- "Additive Expectation" Control: Use a non-interacting agent pair (or a fixed-ratio combination calculated by Bliss Independence) to benchmark your analysis pipeline.

- Target Knockdown/KO Control: To confirm signal specificity, especially for downstream readouts.
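The "Additive Expectation" control relies on the Bliss Independence formula, which for fractional effects between 0 and 1 is E_AB = E_A + E_B - E_A·E_B. A minimal sketch follows; the function names and the example single-agent effects are illustrative assumptions.

```python
import numpy as np

def bliss_expected(frac_a, frac_b):
    """Expected combined fractional effect under Bliss Independence."""
    fa = np.asarray(frac_a, dtype=float)
    fb = np.asarray(frac_b, dtype=float)
    return fa + fb - fa * fb

def bliss_excess(observed, frac_a, frac_b):
    """Observed minus expected effect: > 0 suggests synergy, < 0 antagonism."""
    return np.asarray(observed, dtype=float) - bliss_expected(frac_a, frac_b)

# Hypothetical single-agent fractional effects at three dose levels each
fa = np.array([0.0, 0.2, 0.5])
fb = np.array([0.0, 0.3, 0.6])

# Expected effect for the full 3 x 3 checkerboard (rows: Drug A, columns: Drug B)
expected = bliss_expected(fa[:, None], fb[None, :])
```

Running a known non-interacting pair through the same pipeline should give excess values scattered around zero; a systematic offset points to a normalization or baseline problem rather than a real interaction.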

Research Reagent Solutions Toolkit

Table 2: Essential Reagents for Dual-Agent Kinetic Studies

| Item | Function & Critical Specification |

|---|---|

| Pathogenic Cell Line | Engineered to express the target(s) of interest at physiologically relevant, constant levels. |

| FRET or BRET Biosensors | For real-time, live-cell monitoring of target engagement or conformational change. |

| Phospho-Specific Antibodies | Validated for fixed-cell immunofluorescence or Western blotting at multiple time points. |

| IC50-Tracker Dyes | Cell-permeable, non-toxic viability dyes for continuous monitoring of cytotoxicity in long courses. |

| Low-Binding Microplates | Reduce non-specific adsorption of small-molecule drugs, ensuring accurate concentration in media. |

| Automated Liquid Handler | Critical for precise, rapid sequential dosing and generation of dose-ratio matrices. |

Experimental Workflows and Pathways

Diagram 1: Dose-Ratio vs. Time-Course Experimental Strategy

Diagram 2: Competitive Binding Pathway for Two TKIs

Detailed Experimental Protocol: Integrated Dose-Ratio Time-Course Experiment

Title: Protocol for Simultaneous Estimation of Synergy and Kinetic Parameters.

Objective: To collect data sufficient for fitting a dual-agent kinetic model with an interaction term, minimizing parameter covariance.

Materials: As per Table 2.

Procedure:

- Plate cells in 96-well imaging microplates. Incubate for 24h.

- Prepare Drug Stocks: Using an automated handler, prepare a 6x6 dose matrix in triplicate. Columns: 5 concentrations of Drug A (0.1x, 0.3x, 1x, 3x, 10x IC50) + vehicle. Rows: 5 concentrations of Drug B similarly scaled + vehicle.

- Dosing & Initiation: Using a multichannel pipette or dispenser, rapidly add 20µL of 6x drug/vehicle solutions to 100µL of media in corresponding wells to initiate treatment (Time=0). Record exact time for each row.

- Time-Course Acquisition:

- Place plate in live-cell imager or plate reader maintained at 37°C, 5% CO2.

- Image/Acquire every 5 minutes for the first 2 hours, then every 15 minutes for the next 10 hours.

- Measure fluorescence/FRET (for target engagement) and a reference viability dye channel.

- Termination: At 12h, for a subset of plates, lyse cells for phospho-protein immunoblot validation of key time points.

- Data Processing:

- Normalize signals to Time=0 vehicle control.

- For each well, extract the time-series trajectory.

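- Assemble a long-format dataset with four columns: [Drug A conc], [Drug B conc], [Time], [Response] (equivalently, a 3D response array indexed by Drug A concentration, Drug B concentration, and time).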

- Model Fitting: Fit the full array simultaneously to a system of ordinary differential equations describing competitive binding with a cooperative interaction term using nonlinear regression software (e.g., Monolix, NONMEM).

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: My kinetic fitting for a dual-agent inhibition model fails to converge. What are the primary data-related causes? A1: Non-convergence often stems from poor-quality input data. Key issues include:

- Insufficient Time Points: The reaction is not captured at a high enough temporal resolution, missing rapid initial binding events.

- Low Signal-to-Noise Ratio: Excessive noise obscures the true kinetic trajectory, causing the fitting algorithm to chase outliers.

- Incorrect Baseline/Offset: An improperly defined time-zero or unaccounted for signal drift invalidates the model's boundary conditions.

- Incomplete Equilibrium: Data collection stopped before the system reached steady-state, providing incomplete information for fitting dissociation constants (KD).

Q2: How do I preprocess SPR (Surface Plasmon Resonance) sensorgram data for robust dual-agent competition analysis? A2: Follow this standardized preprocessing workflow:

- Double-Reference Subtraction: Subtract both a buffer-only reference flow cell signal and an in-line blank injection (zero analyte concentration) from all sample sensorgrams.

- Align to Baseline: Align the pre-injection baseline to a consistent response value (often zero) for all curves.

- Filter High-Frequency Noise: Apply a Savitzky-Golay filter to smooth random noise without distorting the kinetic shape.

- Truncate and Combine: Ensure consistent start/stop times across replicates and average technical replicates before fitting.
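The first three preprocessing steps can be sketched with NumPy and SciPy. This is an illustration only, assuming all traces are sampled on the same time grid; the function name `preprocess_sensorgram` and its arguments are hypothetical, and dedicated evaluation software applies its own variants of these steps.

```python
import numpy as np
from scipy.signal import savgol_filter

def preprocess_sensorgram(time_s, active, reference, blank_active,
                          blank_reference, t_inject, window=5, polyorder=2):
    """Double-reference, baseline-align, and smooth one sensorgram."""
    t = np.asarray(time_s, dtype=float)
    # Step 1: double referencing. Subtract the reference flow cell,
    # then subtract the reference-subtracted blank (zero-analyte) injection.
    ref_subtracted = np.asarray(active, float) - np.asarray(reference, float)
    blank_subtracted = (np.asarray(blank_active, float)
                        - np.asarray(blank_reference, float))
    corrected = ref_subtracted - blank_subtracted
    # Step 2: align the mean pre-injection baseline to zero.
    aligned = corrected - corrected[t < t_inject].mean()
    # Step 3: Savitzky-Golay smoothing (window length must be odd
    # and larger than the polynomial order).
    return savgol_filter(aligned, window_length=window, polyorder=polyorder)

# Synthetic example: binding signal plus bulk shift, drift, and offsets (hypothetical)
t_demo = np.arange(0.0, 100.0, 1.0)
signal = np.where(t_demo >= 20.0, 50.0 * (1.0 - np.exp(-0.1 * (t_demo - 20.0))), 0.0)
bulk = np.where(t_demo >= 20.0, 8.0, 0.0)
drift = 0.02 * t_demo
clean = preprocess_sensorgram(
    t_demo,
    active=signal + bulk + drift + 3.0,
    reference=bulk + 1.0,
    blank_active=drift + 3.0,
    blank_reference=np.full_like(t_demo, 1.0),
    t_inject=20.0,
)
```

A short window keeps the sharp injection transition intact; wider windows smooth more but can blunt fast association phases.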

Q3: What are the critical negative control experiments for validating data in a dual-binding model? A3: Essential controls include:

- Single-Agent Saturation: Confirm each agent alone follows expected 1:1 Langmuir binding before testing in combination.

- Zero-Analyte Injections: Verify no significant bulk shift or non-specific binding to the sensor chip surface.

- Reference Surface: Test agents on a deactivated (no target) surface to quantify non-specific binding.

- Regeneration Efficiency: Demonstrate the surface can be fully regenerated between cycles without loss of ligand activity (>95% recovery).

Experimental Protocols

Protocol 1: SPR Preprocessing for Dual-Agent Kinetic Analysis

Objective: To generate clean, normalized sensorgram data for global fitting of competitive or cooperative binding models.

- Instrument: Biacore T200 or 8K series.

- Ligand Immobilization: Immobilize the target protein on a Series S CM5 chip via amine coupling to achieve a density of 50-100 RU. Include a reference flow cell subjected to activation and deactivation without ligand.

- Analyte Preparation: Prepare a 3-fold dilution series (e.g., 9 concentrations from 0.1 nM to 100 nM) for each agent (Agent A, Agent B) in running buffer. Prepare co-injection samples at fixed equimolar ratios.

- Data Acquisition: Inject each sample for 180s (association) followed by a 600s dissociation phase at a flow rate of 30 µL/min. Use a standardized regeneration condition (e.g., 10 mM Glycine-HCl, pH 2.0 for 30s).

- Preprocessing (in Scrubber2 or similar): Apply double referencing, align baselines, and smooth with a 5-point Savitzky-Golay filter. Export normalized data for fitting.

Protocol 2: QC for Kinetic Data Suitability

Objective: To quantitatively assess if a dataset is suitable for complex model fitting.

- Calculate the Chi² (Reduced Chi-Squared) value for a simple 1:1 model fit to single-agent data. A value >10 suggests poor data quality or an incorrect model.

- Perform a Residuals Analysis. Plot fitting residuals over time; they should be randomly distributed around zero. Systematic deviations indicate a poor fit.

- Check Parameter Confidence Intervals from the fitting software. Intervals spanning more than one order of magnitude (e.g., ka = 1e3 to 1e5 M⁻¹s⁻¹) indicate insufficient data constraints.
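The three QC checks can be expressed as small helper functions. This is a sketch under stated assumptions: the reduced chi-squared here is the textbook definition scaled by an assumed noise standard deviation `sigma` (instrument software may report an unscaled value in RU²), and lag-1 autocorrelation is used as one simple indicator of systematic residual structure. All function names are hypothetical.

```python
import numpy as np

def reduced_chi2(observed, fitted, n_params, sigma=1.0):
    """Sum of squared, noise-scaled residuals divided by degrees of freedom."""
    resid = (np.asarray(observed, float) - np.asarray(fitted, float)) / sigma
    return np.sum(resid**2) / (resid.size - n_params)

def lag1_autocorrelation(residuals):
    """Near 0 for random residuals; strongly positive for systematic trends."""
    r = np.asarray(residuals, dtype=float)
    r = r - r.mean()
    return np.sum(r[1:] * r[:-1]) / np.sum(r**2)

def ci_too_wide(lower, upper, max_decades=1.0):
    """True if a positive-valued confidence interval spans more than max_decades."""
    return np.log10(upper / lower) > max_decades

# The interval from the text, ka = 1e3 to 1e5 M^-1 s^-1, spans two decades
ka_flag = ci_too_wide(1e3, 1e5)
```

These checks are screening aids, not formal tests; a visual residual plot remains the primary diagnostic.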

Data Presentation

Table 1: Minimum Data Requirements for Reliable Dual-Agent Kinetic Parameter Estimation

| Parameter | Ideal Value Range | Impact of Deviation | QC Metric |

|---|---|---|---|

| Time Resolution | ≤ 0.1s (early phase), ≤ 5s (late phase) | Misses fast kinetics; overestimates ka | Visual inspection of association curve shape |

| Signal-to-Noise Ratio (SNR) | ≥ 20:1 | High parameter uncertainty; failed convergence | Calculate (Maximum Signal Amplitude) / (Baseline Noise Std Dev) |

| Ligand Immobilization Level | 50-100 RU (for ~50 kDa target) | Mass transport limitation if too high; low signal if too low | Keep Rmax theoretical / Rmax observed < 2 |

| Analyte Concentration Range | 0.1 * KD to 10 * KD | Cannot define curve asymptotes; poor KD precision | Span of sensorgrams should cover 5-95% of Rmax |

| Number of Replicates | n ≥ 3 (technical) | Unreliable parameter estimates; low statistical power | Coefficient of Variation (CV) for ka, kd should be < 15% |

Table 2: Common Data Artifacts and Correction Methods

| Artifact | Cause | Diagnostic | Correction |

|---|---|---|---|

| Bulk Shift | Refractive index mismatch between sample/running buffer | Parallel shift at injection start/stop | Double referencing |

| Carryover | Incomplete regeneration or sample residue | Elevated baseline, increasing with cycle | Optimize regeneration, include wash steps |

| Mass Transport Limitation | Ligand density too high; flow rate too low | Concave association phase | Lower immobilization level; increase flow rate |

| Non-Specific Binding | Analyte binds to chip matrix or reference surface | Signal on reference flow cell > 5 RU | Include blocker (e.g., BSA, carboxymethyl dextran) in buffer |

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Kinetic Studies

| Reagent / Material | Function in Dual-Agent Kinetic Experiments |

|---|---|

| CM5 Sensor Chip (Cytiva) | Gold surface with a carboxymethylated dextran matrix for covalent ligand immobilization. |

| Amine Coupling Kit (NHS/EDC) | Activates carboxyl groups on the chip surface to enable covalent capture of protein ligands. |

| HBS-EP+ Buffer (10mM HEPES, pH 7.4, 150mM NaCl, 3mM EDTA, 0.05% v/v P20) | Standard running buffer for SPR; provides ionic strength, pH control, and reduces non-specific binding. |

| Series S Capture Kit (Anti-His, Anti-GST) | For oriented, uniform capture of His- or GST-tagged ligands, improving data quality and reproducibility. |

| Regeneration Solution Scouting Kit | A panel of buffers (low pH, high pH, chaotropic, etc.) to identify optimal conditions for dissociating analyte without damaging the ligand. |

| Kinetic Evaluation Software (e.g., Biacore Insight, Scrubber2) | Specialized software for preprocessing sensorgrams, global fitting to complex models, and statistical analysis of fitted parameters. |

Mandatory Visualizations

Diagram 1: SPR Data Preprocessing Workflow

Diagram 2: Dual-Agent Competitive Binding Pathway

Technical Support Center

Frequently Asked Questions (FAQs) & Troubleshooting Guides

FAQ 1: I am fitting a dual-agent kinetic model with a complex interaction term. My parameter estimates are unstable and the solver often fails to converge. What are the primary causes and solutions?

- Answer: This is common in complex dual-agent models. Causes and solutions are toolkit-dependent:

- NONMEM/Monolix: Often due to over-parameterization or poor initial estimates. Use the `$PRIOR` statement (NONMEM) or Bayesian priors (Monolix) to stabilize estimation. Simplify the interaction model first, then gradually increase complexity. Check the correlation matrix of estimates; correlations >0.9 indicate poor parameter identifiability.

- R (`nlmixr`): The `focei` algorithm can be sensitive. Try the `saem` or `nlme` method for initial exploration. Use the `logit()` or `log()` transform to constrain parameters (e.g., fractions between 0-1). Ensure your data object is correctly formatted for `nlmixr` (see `vignette("nlmixr_data")`).

- Python (`PKPD`): Verify the gradient of your ODE system. Use the `scipy.integrate.solve_ivp` method with tighter error tolerances (`rtol`, `atol`) within the fitting loop. Consider using global optimization (e.g., differential evolution) to find better initial guesses before local refinement.

- ADAPT: Check the "Identifiability" module before fitting. Use the principal component analysis (PCA) option to detect unidentifiable parameters and reduce the model. Increase the number of restarts from different initial points.
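The Python advice above (tight ODE tolerances, a global search for starting values, then local refinement) can be sketched with SciPy alone. The one-compartment model, dose, sampling times, and parameter bounds are hypothetical choices made to keep the example small; a real dual-agent model would have more states and parameters.

```python
import numpy as np
from scipy.integrate import solve_ivp
from scipy.optimize import differential_evolution, least_squares

T_OBS = np.array([0.25, 0.5, 1.0, 2.0, 4.0, 8.0, 12.0])  # hours (assumed)
DOSE = 100.0  # IV bolus amount (assumed)

def simulate(log_params, t_obs=T_OBS):
    """Concentrations from a one-compartment ODE; parameters are log(CL), log(V)."""
    cl, v = np.exp(log_params)  # log transform keeps CL and V positive
    sol = solve_ivp(lambda t, a: [-(cl / v) * a[0]], (0.0, t_obs[-1]), [DOSE],
                    t_eval=t_obs, rtol=1e-8, atol=1e-10)  # tight tolerances
    return sol.y[0] / v

def residuals(log_params, conc_obs):
    """Relative (proportional-error) residuals."""
    return (simulate(log_params) - conc_obs) / conc_obs

# Synthetic, noise-free observations generated with CL = 2, V = 10
conc_obs = simulate(np.log([2.0, 10.0]))

# Stage 1: coarse global search over wide bounds on the log scale
bounds = [(np.log(0.1), np.log(20.0)), (np.log(1.0), np.log(50.0))]
coarse = differential_evolution(lambda p: np.sum(residuals(p, conc_obs) ** 2),
                                bounds, seed=1, maxiter=30, popsize=8, polish=False)

# Stage 2: local refinement; a larger finite-difference step avoids
# differentiating ODE solver noise
fit = least_squares(residuals, coarse.x, args=(conc_obs,), diff_step=1e-4)
cl_hat, v_hat = np.exp(fit.x)
```

The finite-difference step is deliberately much larger than the solver tolerance; when the two are of similar size, the numerical Jacobian becomes noisy and the local optimizer can stall.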

FAQ 2: How do I implement a covariate model (e.g., weight on clearance) differently across these toolkits?

- Answer: Implementation syntax varies significantly:

- NONMEM: In the `$PK` block: `CL = THETA(1) * (WT/70)**THETA(2) * EXP(ETA(1))`.

- Monolix: Use the graphical "Individual model" interface to drag-and-drop covariates or define directly in the structural model text: `CL = pop_CL * (WT/70)^beta_WT * exp(eta_CL)`.

- R (`nlmixr`): In the model specification: `model({ CL <- pop_cl * (WT/70)^beta_wt * exp(eta_cl); ... })`.

- Python (`PKPD`): Typically defined as part of the model function: `def pk_model(t, y, params, wt): cl = params['pop_cl'] * (wt/70)**params['beta_wt']; ...`.

FAQ 3: I'm getting different objective function values (OFV) or AIC/BIC for the same model and data when using different software. Why?

- Answer: Minor differences are expected, but large discrepancies signal issues.

- Algorithm & Objective: Confirm you are comparing the same estimation method (e.g., FOCE-I vs. SAEM). NONMEM's OFV is -2·log-likelihood, by default without the constant N·log(2π) term, so absolute values can differ from tools that include it. Monolix reports -2LL. `nlmixr` reports OBJF (equivalent to NONMEM's OFV) when using FOCE-I.

- Error Model: Ensure the residual error model (additive, proportional, combined) is implemented identically.

- Data Handling: Check for differences in data filtering, handling of BLQ (below limit of quantification) data, or rounding.

- Convergence: The run may not have converged to the true minimum in one or more tools. Always check convergence diagnostics.

FAQ 4: My visual predictive check (VPC) looks poor. How do I troubleshoot the simulation and binning process?

- Answer:

- Increase Simulations: Default is often 200-500. For dual-agent models with high variability, use 1000+ simulations.

- Binning Strategy: Avoid the default `centers`. Use `bins = 'equal'` (equal number of observations per bin) or `bins = 'kmeans'` (clustering) in `nlmixr`/`xpose`. In Monolix, manually define bin edges based on the independent variable (e.g., time).

- Check Model Predictions: First, plot individual fits and population predictions vs. observations. If the base prediction is wrong, the VPC cannot be correct.

- Python: With `PKPD` or `ArviZ`, ensure you are passing the correct posterior/parameter distribution for simulation.

Comparative Data Tables

Table 1: Core Technical Specifications & Support

| Feature | NONMEM | Monolix | R (nlmixr) | Python (PKPD) | ADAPT |
|---|---|---|---|---|---|
| License & Cost | Commercial, high cost | Commercial, tiered pricing | Open-source (R) | Open-source (Python) | Free academic |
| Primary Estimation Methods | FOCE, FOCE-I, SAEM, IMP | SAEM, Importance Sampling | FOCE-I, SAEM, FO, nlme | MCMC, NLME (via lmfit/emcee) | GLS, MAP, Maximum Likelihood |
| Covariate Modeling | Syntax-based ($PK) | GUI & Syntax | R formula-like | Manual function definition | GUI-driven |
| Stochastic Processes | Yes ($PRIOR, $MSFI) | Yes (Bayesian priors) | Yes (priors in saem) | Native via Bayesian libs | Limited |
| Diagnostic & VPC Plots | Via xpose (R) | Built-in (lixoftSuite) | Built-in (nlmixr2) | Requires matplotlib/seaborn | Basic built-in |
| Parallel Computing | Limited (PsN) | Built-in (GPU acceleration) | Via future/parallel | Native (multiprocessing, GPU libs) | No |
| Learning Curve | Steep | Moderate | Steep (R) | Very Steep | Moderate |

| Learning Curve | Steep | Moderate | Steep (R) | Very Steep | Moderate |

Table 2: Performance on a Dual-Agent Synergy Model (Hypothetical Benchmark)

| Metric | NONMEM (FOCE-I) | Monolix (SAEM) | nlmixr (SAEM) | ADAPT (MAP) | Recommendation for Dual-Agent Research |

|---|---|---|---|---|---|

| Run Time (min) | 45 | 22 | 65 | 15 | Monolix offers best speed/robustness balance. |

| Parameter Bias (%) | -1.2 to +2.1 | -0.8 to +1.7 | -2.5 to +3.3 | -5.1 to +8.9 | NONMEM/Monolix provide most accurate estimates. |

| Runtime Stability | High | High | Medium (R env.) | Low (complex models) | NONMEM is the industry gold standard. |

| Custom Model Flexibility | High (PREDPP) | High | Very High | Medium | nlmixr/Python for novel, highly custom mechanisms. |

| Identifiability Tools | Basic (corr matrix) | Advanced (Fisher Info) | Basic | Advanced (PCA) | ADAPT is excellent for initial model identifiability analysis. |

Experimental Protocol: Dual-Agent PK/PD Model Fitting Workflow

Title: Protocol for Fitting a Synergistic Dual-Agent Kinetic-Pharmacodynamic (K-PD) Model.

Objective: To estimate system-specific (e.g., tumor growth, drug interaction) and drug-specific (e.g., potency, rate constants) parameters from preclinical in vivo time-course data.

Materials & Reagent Solutions (The Scientist's Toolkit):

- In Vivo Tumor Volume Data: Longitudinal measurements from xenograft mice treated with Agent A, Agent B, and combination A+B.

- Plasma Concentration Data: Sparse or rich PK data for each agent (optional, allows full PKPD; otherwise, use K-PD).

- Software Toolkit: Installed and licensed (if applicable) copy of chosen software (e.g., Monolix 2024R1).

- Data Wrangling Tools: R (`dplyr`, `tidyr`) or Python (`pandas`) for formatting data to software requirements.

- Visualization Library: R (`ggplot2`) or Python (Matplotlib) for diagnostic plots.

Methodology:

- Data Assembly: Create a dataset with columns: `ID`, `TIME`, `AMT` (dose), `DV` (observed conc or effect), `EVID` (event type), `MDV` (missing data), `CMT` (compartment), `AGENT` (A, B, or COMBO).

- Structural Model Definition:

- PK Component: Use 1- or 2-compartment models for each agent if PK data exists.

- PD Component (Synergy Model): Implement an Indirect Response or Tumor Growth Inhibition model with an interaction term. Example (Emax-based synergy):

`EFFECT = E0 + (Emax_A*C_A/(EC50_A+C_A) + Emax_B*C_B/(EC50_B+C_B) + α*(Emax_A*C_A/(EC50_A+C_A))*(Emax_B*C_B/(EC50_B+C_B))) * f(t)`

- Where `α` is the synergy parameter (`α > 0` indicates synergy).
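The Emax-based synergy expression can be written as a small function for checking model behavior before moving to a population tool. This is a sketch: the function names are hypothetical, the time-dependent factor f(t) is passed in as a plain multiplier because the protocol does not define it further, and the numeric values are illustrative.

```python
import numpy as np

def emax_term(conc, emax, ec50):
    """Single-agent Emax contribution: Emax * C / (EC50 + C)."""
    conc = np.asarray(conc, dtype=float)
    return emax * conc / (ec50 + conc)

def combined_effect(c_a, c_b, e0, emax_a, ec50_a, emax_b, ec50_b, alpha, f_t=1.0):
    """EFFECT = E0 + (E_A + E_B + alpha * E_A * E_B) * f(t)."""
    e_a = emax_term(c_a, emax_a, ec50_a)
    e_b = emax_term(c_b, emax_b, ec50_b)
    return e0 + (e_a + e_b + alpha * e_a * e_b) * f_t

# Hypothetical parameters; both agents evaluated at their EC50
params = dict(e0=0.0, emax_a=0.6, ec50_a=1.0, emax_b=0.4, ec50_b=2.0)
no_interaction = combined_effect(1.0, 2.0, alpha=0.0, **params)  # plain sum of the two terms
with_synergy = combined_effect(1.0, 2.0, alpha=2.0, **params)    # adds alpha * E_A * E_B
```

With α = 0 the expression reduces to the sum of the two single-agent terms, which is the reference against which the interaction term is judged in this particular model.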

- Parameter Estimation:

- Load data and model definition into the software.

- Set initial estimates based on literature or single-agent fits.

- Run the estimation algorithm (e.g., SAEM followed by Importance Sampling in Monolix).

- Constrain parameters to physiologically plausible ranges (e.g., use logit transforms).

- Model Diagnostics:

- Examine convergence criteria (gradient, objective function stability).

- Generate goodness-of-fit plots: Observations vs. Population/Individual Predictions, Residuals vs. Time/Predictions.

- Calculate precision of parameter estimates (Relative Standard Error %).

- Model Qualification:

- Perform a Visual Predictive Check (VPC) with 1000 simulations.

- Conduct a likelihood ratio test (for nested models) or calculate AIC/BIC for model selection.

Visualizations

Diagram 1: Dual-Agent Synergy Model Fitting Workflow

Diagram 2: Software Selection Logic for Dual-Agent Models

Technical Support Center: Troubleshooting Guides & FAQs

Q1: During sequential PK/PD fitting, my estimated PD parameters are biologically implausible (e.g., EC50 > maximum observed concentration). What are the primary causes and solutions?

A: This indicates a failure in the initial PK step or a structural model mismatch.

- Cause 1: Poor PK model fit, especially at the tail phase, leading to inaccurate estimation of drug exposure at the PD site.

- Solution: Refit PK data with alternative structural models (e.g., add an extra compartment) or error models. Validate with diagnostic plots (Observed vs. Predicted, Residuals).

- Cause 2: Significant time delay between plasma concentration and effect not captured by a simple effect compartment model.

- Solution: Implement an indirect response (IDR) model. Begin with a basic IDR model (e.g., inhibition of kin or stimulation of kout) in the sequential step.

- Protocol for PK Model Diagnostic: 1) Fit candidate PK models (1-, 2-, 3-compartment) to concentration-time data using NLMEM. 2) Calculate AIC/BIC for model selection. 3) Perform visual predictive check (VPC) to assess predictive performance.

Q2: When switching from sequential to simultaneous fitting, the software fails to converge or yields large parameter standard errors. How should I proceed?

A: This is common due to increased model complexity and parameter identifiability issues.

- Cause 1: Poor initial parameter estimates for the simultaneous fit.

- Solution: Use the final parameter estimates from the robust sequential fit as initial estimates for the simultaneous fit. Fix parameters with high precision (low RSE%) from the sequential fit in the first simultaneous run.

- Cause 2: Over-parameterization or correlation between PK and PD parameters (e.g., between clearance and EC50).

- Solution: Perform a sensitivity analysis or eigenvalue analysis to identify non-identifiable parameters. Simplify the PD model if possible. Consider a sequential fitting approach with Bayesian priors from the PK step as an intermediate step.

- Protocol for Sequential-to-Simultaneous Transition: 1) From sequential fits, export PK and PD parameter estimates and variance-covariance matrix. 2) Use these as initial estimates and initial OMEGA matrix for simultaneous fit. 3) Run simultaneous estimation first with FOCE-I, then with importance sampling methods for final validation.

Q3: How do I statistically justify choosing a simultaneous over a sequential fitting approach for my dual-agent model?

A: A model comparison hypothesis test should be performed.

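- Method: Use the objective function value (OFV) from non-linear mixed-effects modeling (e.g., NONMEM). The simultaneous fit will have one combined OFV. For the sequential approach, sum the final OFVs from the independent PK and PD fits. Provided both approaches use the same observations and the models are nested, the difference in OFVs (ΔOFV) approximately follows a χ² distribution with degrees of freedom equal to the number of additional estimated parameters. For example, with one additional parameter, a ΔOFV > 3.84 (χ², df=1, p<0.05) suggests the simultaneous model provides a significantly better fit.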

- Critical Check: Ensure the simultaneous model accounts for all correlations between PK and PD random effects. The real advantage is in quantifying these correlations (e.g., between CL and Emax).

- Protocol for Model Comparison: 1) Perform final sequential PK and PD fits, note OFVPK and OFVPD. 2) Perform final simultaneous PK/PD fit, note OFVSIM. 3) Calculate ΔOFV = (OFVPK + OFVPD) - OFVSIM. 4) Compare ΔOFV to χ² critical value (df = difference in number of estimated covariance parameters).
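The comparison in steps 3 and 4 can be sketched with `scipy.stats.chi2`. The function name and the OFV values in the example are hypothetical; the test is only meaningful when the models are nested and fitted to the same observations.

```python
from scipy.stats import chi2

def ofv_comparison(ofv_pk, ofv_pd, ofv_sim, df, alpha=0.05):
    """Compare summed sequential OFVs with the simultaneous OFV."""
    delta_ofv = (ofv_pk + ofv_pd) - ofv_sim
    critical = chi2.ppf(1.0 - alpha, df)         # chi-squared critical value
    p_value = chi2.sf(max(delta_ofv, 0.0), df)   # upper-tail probability
    return {
        "delta_ofv": delta_ofv,
        "critical": critical,
        "p_value": p_value,
        "significant": delta_ofv > critical,
    }

# Hypothetical OFVs: sequential PK = 1250.4, PD = 830.1; simultaneous = 2072.3
result = ofv_comparison(1250.4, 830.1, 2072.3, df=1)
```

The critical value is recomputed from `df` rather than hard-coded, since the degrees of freedom depend on how many covariance parameters the simultaneous model adds.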

Q4: For a dual-agent study with interacting pathways, how do I design the stepwise fitting protocol to isolate agent-specific parameters?

A: A three-stage sequential protocol is recommended.

- Stage 1: Fit PK parameters for each agent separately using mono-agent data.

- Stage 2: Fit PD parameters for each agent separately using mono-agent PD data, fixing individual empirical Bayes estimates (EBEs) of PK parameters from Stage 1.

- Stage 3: Fit interaction parameters (e.g., synergy, antagonism) using combination therapy data, fixing or estimating with informative priors the agent-specific parameters from Stages 1 & 2.

- Protocol for Interaction Modeling: Use a response surface model (e.g., Greco model). Fix baseline, Emax, and EC50 for each agent from Stage 2. Estimate only the interaction parameter (α) and residual error using combination data in the final step.

Data Presentation

Table 1: Comparison of Sequential vs. Simultaneous Estimation Approaches

| Feature | Sequential Estimation | Simultaneous Estimation |

|---|---|---|

| Computational Complexity | Lower | Higher |

| Convergence Likelihood | Higher (per step) | Lower |

| Handling of PK-PD Feedback | Not possible | Possible |

| Parameter Identifiability | Easier for simple models | Can be challenging |

| Accounting for PK-PD Error Correlation | No | Yes |

| Optimal Use Case | Well-behaved data, simple link models | Complex models, sparse data, suspected correlations |

Table 2: Common Error Codes & Resolutions in NLMEM Software

| Error/Warning | Potential Cause | Troubleshooting Action |
|---|---|---|
| RMATRIX SINGULAR | Over-parameterized model, high parameter correlation. | Simplify model, fix correlated parameters, use Bayesian priors. |
| MINIMIZATION TERMINATED | Poor initial estimates, model too complex for data. | Use sequential estimates, try alternative optimization method. |
| LARGE GRADIENT | Model not fitting data well, local minimum. | Check data for outliers, refine structural model. |
| ETA-BAR/SIGMA RATIO > X | Potential model misspecification for random effects. | Re-evaluate OMEGA structure, consider additional IIV. |

Visualizations

Title: Sequential PK-PD Parameter Estimation Workflow

Title: Simultaneous PK-PD Parameter Estimation Workflow

Title: Thesis Dual-Agent Stepwise Fitting Protocol

The Scientist's Toolkit: Key Research Reagent Solutions

| Item/Category | Function in PK/PD Modeling | Example/Note |
|---|---|---|
| Non-Linear Mixed-Effects Modeling (NLMEM) Software | Gold-standard for population PK/PD analysis, handles sparse, unbalanced data. | NONMEM, Monolix, Phoenix NLME. Essential for both sequential & simultaneous estimation. |
| Diagnostic Plotting Toolkit | Visual assessment of model fit, detection of biases, outliers, and model misspecification. | R with ggplot2/xpose or Python with plotnine/matplotlib. Create Observed vs. Predicted, Residual, VPC plots. |
| Sensitivity & Identifiability Analysis Tool | Evaluates whether model parameters can be uniquely estimated from available data. | PE (Parameter Estimation) tool in Pirana, pksensi R package. Critical before simultaneous fitting. |
| Response Surface Model Library | Quantifies pharmacological interaction (synergy/additivity/antagonism) for dual agents. | Pre-coded scripts for Greco, Bliss, or Loewe models in R (Synergy) or MATLAB. |
| Bayesian Estimation Engine | Allows incorporation of prior knowledge (from sequential steps) into complex final models. | Implemented via the BAYES estimation method or $PRIOR in NONMEM, or using Stan/brms in R. Useful for stabilizing fits. |

Technical Support Center

Troubleshooting Guide

Q1: Why does my synergy model (e.g., Bliss Independence or Loewe Additivity) produce unrealistic synergy scores (>100% or <-100%) during parameter fitting for my dual-agent kinetic data?

A: This is often caused by parameter identifiability issues or data scaling problems within the context of your kinetic model fitting.

- Root Cause 1: Over-parameterization of the underlying dose-response curves for single agents. The model may be fitting noise.

- Solution: Implement a parameter constraint protocol: bound the Hill equation parameters during fitting, for example through the bounds argument of scipy.optimize.curve_fit.

- Root Cause 2: The observed combination effect exceeds the theoretical maximal effect defined by your single-agent model baselines (E₀) and minimal effects (E∞).

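- Solution: Re-normalize your response data. Ensure the control (0% effect) and maximal effect (100% inhibition) are robustly defined from plate controls in every experiment. Re-calculate using Effect_normalized = (Sample - Max_Control) / (Min_Control - Max_Control), where Max_Control is the mean signal of the 0%-effect control wells and Min_Control is the mean signal of the 100%-inhibition wells, so that the result runs from 0 (no effect) to 1 (full inhibition).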
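The bounded fit suggested under Root Cause 1 can be sketched as below. This is a minimal illustration: the data are simulated, the bound values are assumptions to be adapted to the assay, and the function name `hill` is hypothetical.

```python
import numpy as np
from scipy.optimize import curve_fit

def hill(dose, e0, einf, ic50, m):
    """Four-parameter Hill curve: effect runs from e0 (no drug) to einf (saturating dose)."""
    return e0 + (einf - e0) * dose**m / (ic50**m + dose**m)

# Hypothetical single-agent data on a normalized scale (0 = control, 1 = full effect)
dose = np.array([0.01, 0.03, 0.1, 0.3, 1.0, 3.0, 10.0, 30.0])
effect = hill(dose, 0.02, 0.95, 0.8, 1.3)

# Assumed bounds: E0 and Einf near the normalized range, IC50 inside the
# tested dose range, Hill slope within a plausible window.
lower = [-0.1, 0.5, dose.min(), 0.3]
upper = [0.1, 1.1, dose.max(), 5.0]
p0 = [0.0, 1.0, np.median(dose), 1.0]   # starting values must lie inside the bounds

params, cov = curve_fit(hill, dose, effect, p0=p0, bounds=(lower, upper))
e0_hat, einf_hat, ic50_hat, m_hat = params
print(dict(E0=e0_hat, Einf=einf_hat, IC50=ic50_hat, m=m_hat))
```

If an estimate lands exactly on a bound, that usually means the data do not constrain that parameter, which is worth reporting rather than hiding.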

Q2: My code for calculating the Combination Index (CI) from the Chou-Talalay method runs without error, but the CI values are consistently 1.0 across all effect levels. What is wrong?

A: This typically indicates an error in the data structure or an incorrect mapping of single-agent parameters to combination data points.

- Diagnosis Protocol:

- Verify Input Arrays: Ensure your arrays for D1 (dose of drug 1 in combo), D2, Dx1 (dose of drug 1 alone to achieve the combo effect), and Dx2 are NumPy arrays of floats, not integers or objects. Print dtype for each.

- Check the Effect Level Calculation: The Dx1 and Dx2 values must be calculated for the exact same effect level (fa) as produced by the combination (D1, D2). Debug by printing fa, D1, D2, Dx1, Dx2 for a single data point.

- Corrected Code Example:
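A minimal sketch of the calculation, assuming the single-agent median-effect parameters (Dm, m) have already been fitted. The function names and all numbers are illustrative and not taken from any particular package.

```python
import numpy as np

def dose_for_effect(fa, dm, m):
    """Median-effect equation solved for dose: Dx = Dm * (fa / (1 - fa))**(1/m)."""
    fa = np.asarray(fa, dtype=float)      # fa must lie strictly between 0 and 1
    return dm * (fa / (1.0 - fa)) ** (1.0 / m)

def combination_index(d1, d2, fa, dm1, m1, dm2, m2):
    """CI = D1/Dx1 + D2/Dx2, with Dx1 and Dx2 evaluated at the combination's own fa."""
    d1 = np.asarray(d1, dtype=float)      # force float arrays to avoid integer/object dtypes
    d2 = np.asarray(d2, dtype=float)
    dx1 = dose_for_effect(fa, dm1, m1)
    dx2 = dose_for_effect(fa, dm2, m2)
    return d1 / dx1 + d2 / dx2

# Hypothetical combination doses and the fraction affected observed for each pair
d1 = np.array([0.5, 1.0, 2.0])
d2 = np.array([1.0, 2.0, 4.0])
fa = np.array([0.40, 0.65, 0.85])
ci = combination_index(d1, d2, fa, dm1=2.0, m1=1.0, dm2=4.0, m2=1.0)
print(ci.dtype, ci)
```

A CI that stays at 1.0 regardless of the observed effect is a sign that Dx1 and Dx2 are not being recomputed from the combination's measured fa.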

Q3: When implementing a response surface model for synergy (e.g., Greco model), the optimization fails to converge. How can I improve stability?

A: This is common in nonlinear models with multiple interaction parameters (e.g., α in the Greco model). Use a two-stage fitting approach with careful initialization.

- Experimental Protocol for Stable Fitting:

- Stage 1 - Fit Single Agents: Independently fit the Hill model parameters (E₀, E∞, IC₅₀, m) for Drug A and Drug B using robust bounded fitting (as in Q1).

- Stage 2 - Fit Interaction Parameter(s): Hold single-agent parameters fixed. Fit only the interaction parameter (α) using combination data. Initialize α at 0 (additivity).

- Use a Global Optimizer: For Stage 2, use a differential evolution optimizer to avoid local minima.
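Stage 2 of this protocol can be sketched as below. The sketch assumes the Greco equation with Hill slopes and Emax fixed at 1, solves it numerically for the effect at each dose pair, and fits only α with SciPy's differential evolution. The single-agent values, the dose grid, and the α bounds are hypothetical.

```python
import math
import numpy as np
from scipy.optimize import brentq, differential_evolution

def greco_effect(d1, d2, ic50_1, m1, ic50_2, m2, alpha):
    """Fractional effect E (0..1, Emax = 1) that solves the Greco equation."""
    if d1 == 0 and d2 == 0:
        return 0.0
    a, b = d1 / ic50_1, d2 / ic50_2

    def g(log_u):  # u = E / (1 - E); solving in log space keeps u positive
        u = math.exp(log_u)
        return (a * u ** (-1.0 / m1) + b * u ** (-1.0 / m2)
                + alpha * a * b * u ** (-0.5 / m1 - 0.5 / m2) - 1.0)

    lo, hi = -30.0, 30.0
    if g(lo) * g(hi) > 0:          # no bracketed root for this alpha
        return float("nan")
    u = math.exp(brentq(g, lo, hi))
    return u / (1.0 + u)

# Stage 1 output (hypothetical values), held fixed during Stage 2
single = dict(ic50_1=1.0, m1=1.2, ic50_2=2.0, m2=0.8)

# Hypothetical combination data, simulated without noise using alpha = 2
grid = [(a, b) for a in (0.25, 0.5, 1.0, 2.0) for b in (0.5, 1.0, 2.0, 4.0)]
observed = np.array([greco_effect(a, b, alpha=2.0, **single) for a, b in grid])

def sse(theta):
    """Sum of squared errors as a function of alpha only."""
    pred = np.array([greco_effect(a, b, alpha=theta[0], **single) for a, b in grid])
    if np.any(np.isnan(pred)):
        return 1e6                 # penalize alphas with no valid solution
    return float(np.sum((pred - observed) ** 2))

result = differential_evolution(sse, bounds=[(-1.0, 10.0)], seed=0,
                                atol=1e-8, maxiter=200)
alpha_hat = result.x[0]
print(f"alpha = {alpha_hat:.3f}, SSE = {result.fun:.2e}")
```

Because differential evolution samples the whole bounded interval, the starting value matters less than the bounds; α = 0 (additivity) should still lie inside them.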

Frequently Asked Questions (FAQs)

Q: What is the most appropriate synergy framework for time-dependent (kinetic) dual-agent data from cell proliferation assays?

A: For kinetic data, the Zhao-Wilding Interaction Model or a Response Surface Model with a time parameter is most appropriate. The key is to fit the growth rate parameters (e.g., in a logistic or exponential growth model) for single agents and the combination, then test if an interaction term significantly improves the fit. Avoid static models like Bliss if the drug effects change markedly over the assay duration.

Q: How should I handle replicate data points when calculating synergy scores?

A: Never average replicates before fitting. Perform the synergy calculation (e.g., Bliss Excess) on each individual replicate data point from the combination and corresponding single-agent runs, then report the mean and confidence interval of the resulting synergy scores. This propagates experimental variability correctly.
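One way to follow this advice is sketched below. It assumes replicates are paired by index (for example, the same plate or run), uses a t-based confidence interval, and all effect values are made up.

```python
import numpy as np
from scipy import stats

def bliss_excess(e_a, e_b, e_ab):
    """Per-replicate Bliss excess: observed combination effect minus Bliss expectation."""
    e_a, e_b, e_ab = (np.asarray(x, dtype=float) for x in (e_a, e_b, e_ab))
    expected = e_a + e_b - e_a * e_b
    return e_ab - expected

def mean_ci(values, level=0.95):
    """Mean and t-based confidence interval of the replicate scores."""
    values = np.asarray(values, dtype=float)
    n = values.size
    mean = values.mean()
    sem = values.std(ddof=1) / np.sqrt(n)
    half = stats.t.ppf(0.5 + level / 2.0, df=n - 1) * sem
    return mean, mean - half, mean + half

# Hypothetical fractional effects (0-1), one entry per replicate
e_a = [0.30, 0.28, 0.33, 0.31]
e_b = [0.40, 0.42, 0.38, 0.41]
e_ab = [0.75, 0.72, 0.78, 0.74]

excess = bliss_excess(e_a, e_b, e_ab)
mean, ci_low, ci_high = mean_ci(excess)
print(f"Bliss excess = {mean:.3f} (95% CI {ci_low:.3f} to {ci_high:.3f})")
```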

Q: My combination data shows strong antagonism at low doses but synergy at high doses. Which model captures this?

A: The Loewe Additivity model or HSA model may fail here. A Sigmoid Emax Model with a variable interaction parameter (α) that is itself a function of dose (e.g., α = α_base + δ*D1*D2) can capture this crossover effect. Implement this by extending the Greco model.

Q: Are there recommended R or Python packages for implementing these models?

A: Yes. For R, use the BIGL and synergyfinder packages. For Python, custom implementations built on SciPy's optimizers are standard, with Stan or PyMC for Bayesian fitting; SynergyFinder is also available as a web tool. Always validate package outputs with a known dataset.

Table 1: Comparison of Common Synergy Frameworks for Kinetic Model Fitting

| Framework | Core Equation | Key Parameters | Data Type Required | Handles Time-Kinetics? | Output Metric |
|---|---|---|---|---|---|
| Bliss Independence | E_Bliss = E_A + E_B - E_A·E_B | E_A, E_B (effects) | Fractional Effect (0-1) | No (Static) | Bliss Excess (ΔE = E_obs - E_Bliss) |
| Loewe Additivity | 1 = D_A/Dx_A + D_B/Dx_B | Dx_A, Dx_B (iso-effective doses) | Dose-Response | No (Static) | Combination Index (CI) |
| Chou-Talalay | CI = (D)_1/(Dx)_1 + (D)_2/(Dx)_2 | (Dx)_1, (Dx)_2, m (Hill slope) | Dose-Response | No (Static) | Combination Index (CI) |
| Greco (ASM) | D_1/IC50_1 + D_2/IC50_2 + α·D_1·D_2/(IC50_1·IC50_2) = 1 | IC50, m, α (interaction) | Dose-Response Matrix | Partially (via α) | Interaction Coefficient (α) |
| Zhao-Wilding | dN/dt = r·N·(1 - N/K) - E(D_1, D_2, t)·N | r (growth rate), K (capacity), k (kill rate) | Time-course Cell Count | Yes | Interaction on r or k |

Experimental Protocols

Protocol 1: Dose-Response Matrix Assay for Synergy Screening

- Plate Setup: In a 96-well plate, serially dilute Drug A along the rows and Drug B along the columns to create an 8x8 matrix of combinations, including single-agent and control wells (n=4 replicates).

- Cell Seeding: Seed cells at optimal density (e.g., 2000 cells/well for a 72h assay) in full growth medium.

- Dosing: 24 hours post-seeding, add drugs using a liquid handler for precision. Include DMSO vehicle controls.

- Incubation & Assay: Incubate for desired duration (e.g., 72h). Measure cell viability using CellTiter-Glo luminescent assay.

- Data Processing: Normalize luminescence: %Viability = (RLU_sample - RLU_media) / (RLU_DMSO - RLU_media) * 100. Fit models using the normalized %Inhibition (100 - %Viability).
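The data-processing step of Protocol 1 can be sketched as follows. The function name and the RLU readings are hypothetical, and the sketch assumes control wells are averaged per plate before normalization.

```python
import numpy as np

def percent_inhibition(rlu_sample, rlu_dmso, rlu_media):
    """Convert raw luminescence to %Inhibition using plate controls."""
    rlu_sample = np.asarray(rlu_sample, dtype=float)
    dmso = float(np.mean(rlu_dmso))     # vehicle control: 100% viability
    media = float(np.mean(rlu_media))   # media-only wells: background signal
    viability = (rlu_sample - media) / (dmso - media) * 100.0
    return 100.0 - viability

# Hypothetical readings from one plate
inhib = percent_inhibition([52000, 10000, 100000],
                           rlu_dmso=[98000, 102000],
                           rlu_media=[1900, 2100])
print(np.round(inhib, 2))
```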

Protocol 2: Kinetic Live-Cell Imaging for Time-Resolved Synergy

- Cell Preparation: Seed cells expressing a fluorescent nuclear marker in a 96-well imaging plate.

- Dosing: Prepare a focused 4x4 combination matrix based on single-agent IC₃₀ and IC₇₀ values. Use an onboard injector for time-zero dosing during imaging.

- Imaging: Place plate in live-cell imager (e.g., Incucyte). Acquire phase and fluorescence images every 3-4 hours for 96 hours.

- Data Extraction: Use image analysis software to segment nuclei and count cell number/confluence per well over time.

- Model Fitting: Fit the logistic growth model N(t) = K / (1 + ((K-N0)/N0)*exp(-r*t)) to control wells to get baseline r and K. For drug-treated wells, fit a modified model where r or K is a function of drug concentrations (D1, D2) to estimate interaction parameters.
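The control-well fit in the last step can be sketched as below. The time grid follows the 4-hour imaging interval over 96 hours; the cell counts are simulated and the starting values are assumptions. The drug-dependent extension of r or K is not shown.

```python
import numpy as np
from scipy.optimize import curve_fit

def logistic(t, r, K, n0):
    """Logistic growth: N(t) = K / (1 + ((K - N0) / N0) * exp(-r * t))."""
    return K / (1.0 + ((K - n0) / n0) * np.exp(-r * t))

# Hypothetical control-well time course: one image every 4 h for 96 h
t = np.arange(0.0, 97.0, 4.0)
counts = logistic(t, 0.06, 5000.0, 200.0)

# Starting values (assumed): a rough rate, a capacity above the largest
# observed count, and the first observation as the initial cell number.
p0 = [0.05, counts.max() * 1.2, counts[0]]
(r_hat, K_hat, n0_hat), cov = curve_fit(logistic, t, counts, p0=p0)
print(f"r = {r_hat:.4f} per hour, K = {K_hat:.0f} cells, N0 = {n0_hat:.0f} cells")
```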

Visualizations

Kinetic Synergy Analysis Workflow

Dual-Agent Target Signaling Pathway

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for Dual-Agent Kinetic Studies

| Item | Function in Synergy Experiments | Example Product/Catalog |
|---|---|---|
| ATP-based Viability Assay | Quantifies metabolically active cells at endpoint. Essential for dose-response matrices. | CellTiter-Glo 2.0 (Promega, G9242) |
| Live-Cell Imaging Dye | Enables kinetic tracking of cell number/confluence without fixation. | Nuclight Lentivirus (Essen BioScience, 4475) |
| 384-Well Cell Culture Plate | High-throughput format for detailed combination matrices with low reagent volumes. | Corning 384-well, TC-treated (Corning, 3767) |
| Liquid Handling System | Ensures precision and reproducibility in serial dilution and compound transfer. | Echo 650 Liquid Handler (Labcyte) |
| DMSO Vehicle Control | Maintains consistent solvent concentration across all wells to avoid artifacts. | Hybri-Max sterile DMSO (Sigma, D2650) |
| Growth Medium | Optimized, serum-lot controlled medium for consistent baseline proliferation. | RPMI 1640 + 10% FBS + 1% Pen/Strep |
| Bayesian Fitting Software | For robust parameter estimation and uncertainty quantification in complex models. | Stan (mc-stan.org) / PyMC3 (pymc.io) |

Technical Support Center

Troubleshooting Guide

Q1: The model fails to converge when fitting the combined PD-1 inhibitor and carboplatin time-series tumor volume data. What are the primary checks? A1: Perform the following checks:

- Parameter Scaling: Ensure parameters are on a similar numerical scale. Normalize volume to initial volume (V0) and scale rate constants (e.g., k_growth, k_kill) to a [0,10] range.

- Initial Estimates: Verify initial parameter guesses are biologically plausible. Use monotherapy fits to inform combination model starting points.

- Identifiability: Check for parameter correlation >0.95. Consider fixing a poorly identifiable parameter (e.g., baseline immune cell count) to a literature value.

- Error Model: Switch from additive to proportional error model if residuals show increasing variance with tumor volume.

Q2: How should I handle censored data points (e.g., tumor volume below detection limit or animal death) in the kinetic fitting? A2: Implement a likelihood-based approach that accounts for censoring:

- For left-censored data (below limit of quantification, LOQ), use P(observation = LOQ) = P(true volume ≤ LOQ) in the likelihood function.

- For right-censored data (death), treat the final time point as an informative dropout. The model should predict tumor volume exceeding a lethal burden (e.g., 1500 mm³).
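The left-censoring rule can be sketched as a negative log-likelihood. This assumes an additive normal residual error with standard deviation sigma and that records below LOQ are stored as the LOQ value. The function name and numbers are illustrative, and the right-censored (dropout) part is not covered.

```python
import numpy as np
from scipy.stats import norm

def neg_log_likelihood(pred, obs, sigma, loq):
    """Negative log-likelihood with left-censoring at the LOQ."""
    pred = np.asarray(pred, dtype=float)
    obs = np.asarray(obs, dtype=float)
    censored = obs <= loq
    # Quantifiable observations contribute the usual normal density
    ll_obs = norm.logpdf(obs[~censored], loc=pred[~censored], scale=sigma)
    # Censored observations contribute P(true volume <= LOQ)
    ll_cens = norm.logcdf((loq - pred[censored]) / sigma)
    return -(ll_obs.sum() + ll_cens.sum())

# Hypothetical example: second observation is below an LOQ of 30 mm^3
nll = neg_log_likelihood(pred=[100.0, 20.0], obs=[110.0, 30.0], sigma=10.0, loq=30.0)
print(f"-log L = {nll:.4f}")
```

This function would replace a plain least-squares objective inside the optimizer, so that censored points inform the fit without being treated as exact measurements.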

Q3: The estimated synergy parameter (α) is not statistically significant (confidence interval includes 0). Is the combination merely additive? A3: Not necessarily. Consider:

- Model Misspecification: The hypothesized synergistic mechanism (e.g., chemotherapy-induced immunogenic cell death boosting T-cell infiltration) may be incorrectly represented. Explore alternative structural models.

- Data Insufficiency: The experiment may lack time-point density during the critical interaction period. Re-fit using only the data from the first 21 days post-treatment initiation.

- Covariates: Include animal weight or baseline lymphocyte count as a covariate on the synergy parameter to reduce unexplained variability.

Q4: When simulating the fitted model forward, the predicted tumor volume becomes negative. What is the root cause and fix? A4: This is often caused by an overly sensitive cell kill term.

- Root Cause: The chemotherapy-induced kill term (k_kill * C(t) * V) dominates when V is small, and a finite solver step can then overshoot zero and drive the volume negative.

- Fix: Implement a logistic or cell-quota growth term instead of exponential, or use a non-negative constraint in the ODE solver: dV/dt = max(-V, growth - kill).
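A literal sketch of the clamped right-hand side with SciPy's solve_ivp is shown below. The mono-exponential drug concentration C(t), the rate constants, and the time unit (days) are assumptions made only for illustration.

```python
import numpy as np
from scipy.integrate import solve_ivp

def conc(t, c0=10.0, ke=0.5):
    """Hypothetical drug concentration with first-order elimination."""
    return c0 * np.exp(-ke * t)

def rhs(t, y, k_growth=0.3, k_kill=0.5):
    """Exponential growth minus drug kill, clamped so that dV/dt >= -V."""
    v = y[0]
    growth = k_growth * v
    kill = k_kill * conc(t) * v
    return [max(-v, growth - kill)]   # lower bound limits decay to a rate of 1 per time unit

sol = solve_ivp(rhs, (0.0, 10.0), [100.0], rtol=1e-8, atol=1e-10, max_step=0.1)
v_min = sol.y[0].min()
print(f"minimum simulated volume: {v_min:.3f}")
```

Tight tolerances and a capped step size are used here because the clamp makes the right-hand side non-smooth.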

Frequently Asked Questions (FAQs)