Genetic Algorithms in Cluster Geometry Optimization: From Foundations to Biomedical Applications

Abstract

This article provides a comprehensive overview of genetic algorithms (GAs) for cluster geometry optimization, a crucial task in computational chemistry and materials science for predicting the most stable structures of atomic and molecular aggregates. We explore the foundational principles of GAs and their superiority in navigating complex potential energy surfaces compared to local optimization methods. The review details core algorithmic components—including representation schemes, genetic operators, and fitness evaluation—and highlights diverse applications from nanomaterial design to drug development. We further discuss advanced strategies for maintaining population diversity and avoiding premature convergence, present comparative analyses with other global optimization techniques, and conclude by examining the transformative potential of next-generation hybrid algorithms integrating machine learning and quantum computing for biomedical research.

The Challenge of Cluster Geometry and Why Genetic Algorithms Excel

Understanding the Global Optimization Problem on Potential Energy Surfaces

The potential energy surface (PES) is a fundamental concept in computational chemistry and materials science, representing the energy of a molecular system as a function of its nuclear coordinates. This multidimensional hypersurface contains critical topological features including local minima (representing stable structures), first-order saddle points (transition states), and the highly sought-after global minimum (GM)—the most thermodynamically stable configuration of a system [1]. The global optimization (GO) problem involves locating this GM among what is often an exponentially growing number of local minima as system size increases [1].

The challenge of GO is formidable. Theoretical models suggest that the number of minima on a PES grows approximately exponentially with the number of atoms N, \( N_{\min}(N) = \exp(\xi N) \), where \( \xi \) is a system-dependent constant [1]. This complex, high-dimensional landscape makes exhaustive search computationally intractable for all but the smallest systems, necessitating sophisticated algorithms that efficiently balance broad exploration of the PES with intensive exploitation of promising regions [1].
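To give a feel for this scaling, the short sketch below evaluates N_min(N) = exp(ξN) for a few cluster sizes. The value ξ = 1.0 is an illustrative assumption rather than a fitted constant for any real system, and `estimated_minima` is a hypothetical helper name.

```python
import math

def estimated_minima(n_atoms: int, xi: float) -> float:
    """Estimate the number of local minima from N_min(N) = exp(xi * N)."""
    return math.exp(xi * n_atoms)

# xi = 1.0 is an assumed, purely illustrative value.
for n in (5, 10, 20, 40):
    print(f"N = {n:2d}  ->  about {estimated_minima(n, xi=1.0):.3e} minima")
```

Whatever the value of ξ, adding a fixed number of atoms multiplies the estimate by a constant factor, which is why exhaustive enumeration stops being an option so quickly.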

Classification of Global Optimization Methods

Global optimization methods for PES exploration are broadly categorized into stochastic and deterministic approaches, each with distinct characteristics and algorithmic strategies [1].

Table 1: Classification of Global Optimization Methods for PES Exploration

| Category | Key Characteristics | Representative Algorithms | Typical Applications |

|---|---|---|---|

| Stochastic Methods | Incorporate randomness in structure generation and evaluation; population-based; non-deterministic search rules | Genetic Algorithms (GA), Artificial Bee Colony (ABC), Particle Swarm Optimization (PSO), Simulated Annealing (SA) | Molecular clusters, flexible biomolecules, complex materials |

| Deterministic Methods | Rely on analytical information (gradients, Hessians); follow defined physical principles; sequential evaluation | Molecular Dynamics (MD), Single-Ended methods, Global Reaction Route Mapping (GRRM) | Reaction pathway exploration, transition state location |

| Hybrid Methods | Combine exploration strengths of stochastic methods with exploitation capabilities of deterministic approaches | RANGE (ABC + GA), GOFEE (Gaussian Processes + local search) | Challenging systems requiring both breadth and depth of search |

Stochastic methods typically begin with random or probabilistically guided perturbations followed by local optimization to identify nearby minima [1]. Their non-deterministic nature allows broad sampling of complex, high-dimensional energy landscapes, which helps them avoid premature convergence. In contrast, deterministic methods follow defined trajectories based on physical principles and are often capable of precise convergence, though they can become computationally expensive for systems with numerous local minima [1].

Key Algorithmic Frameworks and Protocols

Genetic Algorithms and Swarm Intelligence

Genetic Algorithms (GAs), formalized in 1957, apply evolutionary strategies—selection, crossover, and mutation—to optimize structural populations over generations [1]. Each candidate structure represents an individual in a population, with fitness typically determined by its potential energy. Through successive generations, fitter individuals (lower energy structures) are selected and recombined to produce offspring, gradually evolving toward the global minimum.

The Artificial Bee Colony (ABC) algorithm, introduced in 2005, models the foraging behavior of honeybees to optimize structure discovery [1]. In this metaphor, employed bees exploit known food sources (promising regions of the PES), onlooker bees select promising sources based on shared information, and scout bees randomly explore new areas, providing a balance between exploration and exploitation.

The RANGE Framework: A Hybrid Protocol

Building on the efficiency of swarm intelligence, the RANGE (Robust Adaptive Nature-inspired Global Explorer) framework represents an advanced hybrid protocol that integrates the adaptive exploration capabilities of ABC with the exploitation strengths of GA [2].

Table 2: RANGE Framework Components and Functions

| Component | Function | Implementation Details |

|---|---|---|

| ABC Exploration Phase | Broad global search across PES | Employed and scout bees identify promising regions; avoids premature convergence |

| GA Exploitation Phase | Intensive local refinement | Selection, crossover, and mutation operations refine promising candidates |

| Python Implementation | Scalable, accessible architecture | Seamless interfaces to multiple potential energy evaluators (DFT, ML potentials) |

| HPC Compatibility | Handles computationally intensive systems | Designed for exascale computing environments |

Experimental Protocol for RANGE:

- Initialization: Generate initial population of random candidate structures within defined chemical constraints

- Energy Evaluation: Calculate potential energy for each candidate using interfaced electronic structure method (e.g., DFT) or machine-learned potential

- ABC Phase: Employed bees perform local searches around current solutions; onlooker bees probabilistically select promising solutions based on fitness; scout bees replace abandoned solutions with random explorations

- GA Phase: Apply tournament selection to choose parents for crossover; implement cut-and-splice crossover to create offspring; introduce random mutations to maintain diversity

- Local Refinement: Perform local geometry optimization on promising candidates to identify precise local minima

- Convergence Check: Evaluate whether the global minimum criteria are met; if not, return to step 3 (ABC Phase) with the updated population

- Validation: Confirm putative global minimum through multiple independent runs and frequency analysis [2]
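The cut-and-splice crossover named in the GA phase can be sketched as follows. This is a simplified illustration and not the RANGE implementation: it assumes a single-element cluster given as a list of (x, y, z) tuples, cuts along the z axis only, and omits the random rotation of the parents and the local relaxation of the child that practical codes normally apply. The function names are hypothetical.

```python
import random

def centered(cluster):
    """Shift a cluster so its geometric center sits at the origin."""
    n = len(cluster)
    cx = sum(p[0] for p in cluster) / n
    cy = sum(p[1] for p in cluster) / n
    cz = sum(p[2] for p in cluster) / n
    return [(x - cx, y - cy, z - cz) for x, y, z in cluster]

def cut_and_splice(parent_a, parent_b, rng):
    """Join the k highest atoms of parent A with the N - k lowest atoms of parent B."""
    n = len(parent_a)
    if len(parent_b) != n or n < 2:
        raise ValueError("parents must have the same size (at least 2 atoms)")
    top = sorted(centered(parent_a), key=lambda p: p[2], reverse=True)
    bottom = sorted(centered(parent_b), key=lambda p: p[2])
    k = rng.randint(1, n - 1)  # number of atoms inherited from parent A
    return top[:k] + bottom[:n - k]

rng = random.Random(0)
a = [(0.0, 0.0, float(i)) for i in range(4)]
b = [(1.0, 1.0, float(i)) for i in range(4)]
print(cut_and_splice(a, b, rng))
```

Taking atoms by rank along the cut axis keeps the atom count fixed, at the cost of possible close contacts near the cut plane; the subsequent local refinement step is what removes them.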

Basin Hopping Protocol

Basin Hopping (BH), introduced in 1997, transforms the PES into a discrete set of local minima, effectively simplifying the landscape for more efficient global exploration [3]. The algorithm combines Metropolis sampling with gradient-based local search, effectively sampling energy basins rather than the full configuration space.

Experimental Protocol for Basin Hopping:

- Initial Structure Generation: Create initial molecular cluster configuration through random sampling or known structural motifs

- Local Minimization: Perform thorough local geometry optimization to reach the nearest local minimum

- Monte Carlo Move: Apply random perturbation to current structure (atomic displacements, molecular rotations)

- Local Minimization: Re-optimize perturbed structure to new local minimum

- Metropolis Criterion: Accept or reject new structure based on energy difference and temperature factor: \( P_{\text{accept}} = \min\left(1, \exp(-\Delta E / kT)\right) \)

- Occasional Jumping: Implement jumping moves (MC moves without minimization at infinite temperature) to escape deep local minima when trapped [3]

- Iteration: Repeat steps 3-6 (Monte Carlo move through occasional jumping) for a defined number of MC steps and cycles

- Global Minimum Identification: Track lowest energy structure encountered during sampling
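The core loop of this protocol (perturb, minimize, apply the Metropolis test, track the best minimum) can be sketched on a toy problem. The one-dimensional energy function, the fixed-step gradient descent used as the local minimizer, and all parameter values below are assumptions chosen for illustration; the occasional jumping move is omitted, and this is not the Q-Chem implementation.

```python
import math
import random

def energy(x):
    """Toy rugged 1D landscape: shallow parabola plus an oscillation."""
    return 0.1 * x * x + math.sin(3.0 * x)

def gradient(x):
    return 0.2 * x + 3.0 * math.cos(3.0 * x)

def local_minimize(x, step=0.01, iters=2000):
    """Plain fixed-step gradient descent to the nearest local minimum."""
    for _ in range(iters):
        x -= step * gradient(x)
    return x

def basin_hopping(x0, n_steps=200, kT=0.5, max_move=2.0, seed=0):
    rng = random.Random(seed)
    x = local_minimize(x0)
    e = energy(x)
    best_x, best_e = x, e
    for _ in range(n_steps):
        # Monte Carlo move followed by local minimization
        trial = local_minimize(x + rng.uniform(-max_move, max_move))
        e_trial = energy(trial)
        delta = e_trial - e
        # Metropolis criterion: P_accept = min(1, exp(-dE / kT))
        if delta <= 0 or rng.random() < math.exp(-delta / kT):
            x, e = trial, e_trial
            if e < best_e:
                best_x, best_e = x, e
    return best_x, best_e

print(basin_hopping(4.0))
```

Because every trial point is relaxed before the Metropolis test, the walk effectively moves between basins rather than between raw configurations, which is the landscape transformation described above.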

Machine Learning-Enhanced Global Optimization

Recent advances integrate machine learning to accelerate PES exploration. The autoplex framework implements automated, iterative exploration and ML interatomic potential fitting through data-driven random structure searching [4]. The protocol involves:

- Initial Dataset Creation: Generate diverse initial structures through random sampling

- ML Potential Training: Train machine-learned interatomic potential (e.g., Gaussian Approximation Potential) on quantum mechanical reference data

- Random Structure Searching: Use the ML potential to drive extensive structure searches without expensive quantum calculations

- Quantum Validation: Select diverse promising structures for single-point DFT validation

- Active Learning: Incorporate validated structures into training set to improve ML potential

- Iterative Refinement: Repeat steps 2-5 (ML potential training through active learning) until the prediction accuracy converges [4]

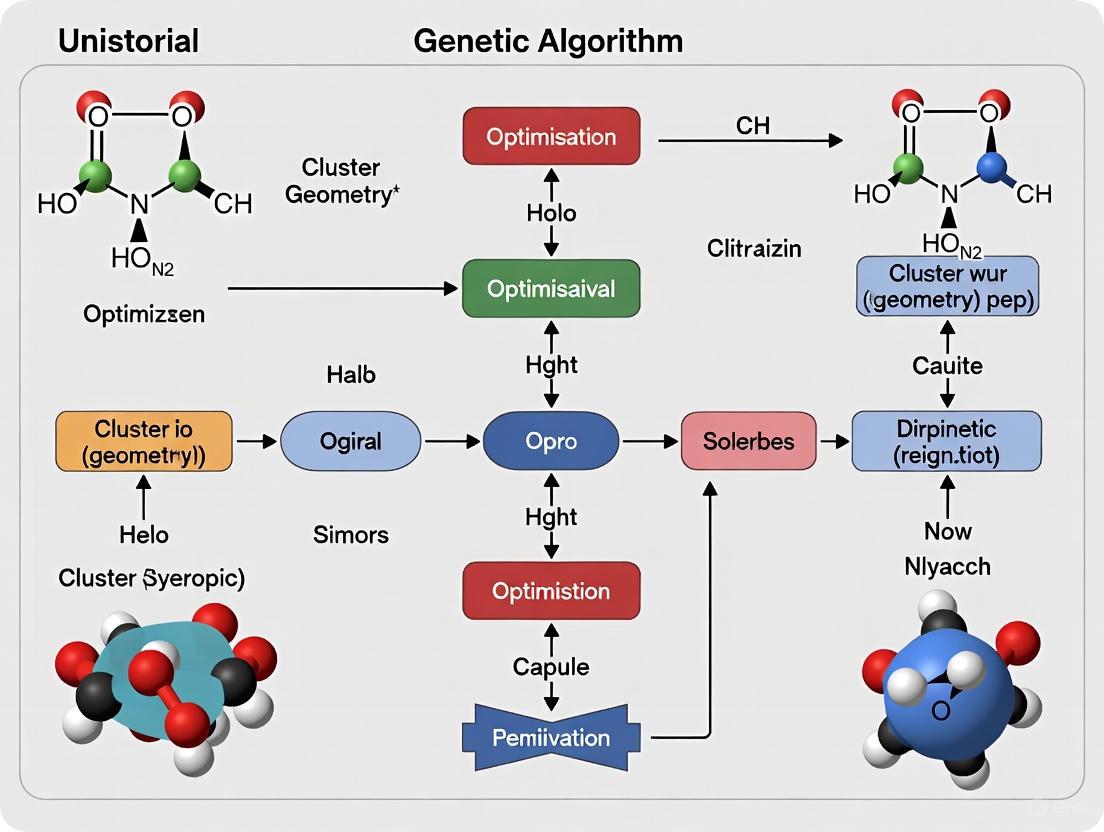

Visualization of Workflows

[Figure: Global Optimization Algorithm Workflow Comparison]

[Figure: RANGE Hybrid Algorithm Protocol]

Table 3: Essential Research Reagents and Computational Resources for Global Optimization

| Resource Category | Specific Tools/Software | Function in Global Optimization |

|---|---|---|

| Electronic Structure Codes | Q-Chem (JOBTYPE=RAND/BH) [3], DFT implementations | Provide accurate energy and force evaluations for candidate structures |

| Machine Learning Potentials | Gaussian Approximation Potentials (GAP) [4], Neural Network Potentials | Accelerate energy evaluations while maintaining quantum accuracy |

| Global Optimization Frameworks | RANGE [2], autoplex [4], BEACON [5] | Implement hybrid algorithms for efficient PES exploration |

| Structure Search Algorithms | Artificial Bee Colony (ABC) [2], Genetic Algorithms (GA) [1], Basin Hopping [3] | Core optimization routines for navigating complex energy landscapes |

| Automation Workflows | atomate2 [4], custom Python scripting | Enable high-throughput computation and iterative model refinement |

| High-Performance Computing | Exascale computing infrastructure [2], Parallel processing | Handle computationally intensive calculations for complex systems |

Application Notes for Specific Chemical Systems

The performance of global optimization algorithms varies significantly across different types of chemical systems. Here we present specific application notes for common scenarios:

Molecular Clusters: For atomic and molecular clusters, the RANGE framework has demonstrated particular efficiency, leveraging the ABC algorithm's exploration capabilities to navigate the numerous local minima typical of cluster PES [2]. Q-Chem's built-in random search (JOBTYPE = RAND) and basin hopping (JOBTYPE = BH) functionalities provide specialized tools for these systems [3].

Binary Material Systems: Complex binary systems such as titanium-oxygen present additional challenges due to varied stoichiometric compositions and electronic structures [4]. The autoplex framework has shown success in these systems by combining random structure searching with iterative ML potential refinement, accurately capturing polymorphs with different compositions like Ti₂O₃, TiO, and Ti₂O [4].

Reaction Pathway Mapping: For identifying reaction mechanisms and transition states, deterministic methods like single-ended approaches and global reaction route mapping (GRRM) offer advantages in precisely locating first-order saddle points connecting local minima [1].

Performance Metrics and Validation Protocols

Validating the success of global optimization requires rigorous performance assessment:

Convergence Metrics:

- Energy-based convergence: Track the lowest energy identified across algorithm iterations

- Structural diversity: Monitor structural similarity to ensure adequate sampling

- Prediction error: Calculate root mean square error (RMSE) between predicted and reference energies [4]

Validation Protocols:

- Multiple Independent Runs: Execute optimization from different initial conditions to verify consistency

- Frequency Analysis: Confirm putative minima through vibrational frequency calculations (no imaginary frequencies)

- Comparative Benchmarking: Test against known global minima for benchmark systems

- Experimental Validation: Where possible, compare predicted structures with experimental data
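The frequency-analysis check amounts to confirming that the Hessian at a stationary point has no negative eigenvalues. The sketch below illustrates the idea on a two-coordinate toy surface with a finite-difference Hessian. It is a simplification under stated assumptions: a real vibrational analysis uses the mass-weighted Hessian over all nuclear coordinates and projects out translations and rotations, and the helper names here are hypothetical.

```python
import math

def hessian_2d(f, x, y, h=1e-4):
    """Central finite-difference second derivatives of f(x, y)."""
    fxx = (f(x + h, y) - 2.0 * f(x, y) + f(x - h, y)) / (h * h)
    fyy = (f(x, y + h) - 2.0 * f(x, y) + f(x, y - h)) / (h * h)
    fxy = (f(x + h, y + h) - f(x + h, y - h)
           - f(x - h, y + h) + f(x - h, y - h)) / (4.0 * h * h)
    return fxx, fxy, fyy

def eigenvalues_sym2(a, b, c):
    """Eigenvalues of the symmetric matrix [[a, b], [b, c]]."""
    mean = 0.5 * (a + c)
    radius = math.hypot(0.5 * (a - c), b)
    return mean - radius, mean + radius

def is_true_minimum(f, x, y, tol=1e-6):
    """True if the smallest Hessian eigenvalue is positive (no imaginary mode)."""
    lowest, _ = eigenvalues_sym2(*hessian_2d(f, x, y))
    return lowest > tol

print(is_true_minimum(lambda x, y: x * x + y * y, 0.0, 0.0))  # bowl
print(is_true_minimum(lambda x, y: x * x - y * y, 0.0, 0.0))  # saddle
```

A negative eigenvalue corresponds to an imaginary frequency, so a candidate that fails this test is a saddle point rather than a minimum and should be displaced along that mode and re-optimized.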

For the RANGE framework, performance evaluations demonstrate superior efficiency compared to ABC- or GA-alone algorithms across various chemical systems including molecular clusters and heterogeneous surfaces [2]. The hybrid approach achieves robustness while maintaining broad applicability across challenging GO problems in computational chemistry and materials science [2].

Exponential Growth of Local Minima with System Size

In cluster geometry optimization, the potential energy landscape is rugged: it contains a multitude of local minima, saddle points, and deep energy wells [6]. A fundamental challenge is that the number of local minima grows exponentially with the number of particles (N) in the system [7]. This growth is a significant barrier to global optimization, because the search space becomes increasingly difficult to navigate with traditional methods [8]. For researchers who use genetic algorithms (GAs) to explore these landscapes, particularly in applications such as drug development where molecular configuration determines function, understanding this phenomenon is crucial for designing search strategies that avoid premature convergence on suboptimal solutions [9].

Quantitative Evidence of Exponential Growth

Documented Growth in Physical Systems

The exponential growth of local minima is empirically observed in several physical systems central to materials science and drug development research. The table below summarizes key findings from studies of classical particle clusters:

Table 1: Documented Growth of Local Minima in Physical Cluster Systems

| System Type | Potential Energy Function | Observed Range of N | Growth Characteristic | Primary Reference |

|---|---|---|---|---|

| 2D Uniformly Charged Particles | Coulomb & Logarithmic | 9 to 30 | Exponential growth with N [7] | [7] |

| Lennard-Jones Clusters | LJ Potential | Not Specified | Complex landscape with many minima [6] | [6] |

| General Molecular Systems | Varies (e.g., for drug-like molecules) | Up to 17 atoms (C, N, O, S, halogens) | Rugged landscape structure [6] | [6] |

Implications for Search Complexity

This exponential increase directly impacts computational feasibility. For a system of discrete variables, the size of the model structure search space grows exponentially, making an exhaustive search impractical for all but the smallest systems [8]. In the context of drug discovery, the chemical space of possible small organic molecules is astronomically large (e.g., on the order of 10^80 for molecules with 100 atoms), creating a similarly vast and multi-modal optimization landscape [9].

Genetic Algorithms as a Response to Rugged Landscapes

Limitations of Local Search Methods

Traditional "hill-climbing" algorithms, which start with a simple model and sequentially add single features, are highly susceptible to becoming trapped in local minima [8]. This approach is a greedy algorithm that rapidly proceeds to the nearest local optimum. Its success in finding the global minimum depends entirely on starting the search within a "basin of attraction" that is convex to the global minimum, with no intervening ridges [8]. On a landscape with exponentially many minima, the probability of this favorable starting position becomes vanishingly small.

The Genetic Algorithm Advantage

Genetic algorithms belong to a class of global search algorithms designed to be more robust to local minima than hill-climbing methods [8]. Their strength lies in maintaining a population of candidate solutions, rather than a single point, and using biologically inspired operators—selection, crossover, and mutation—to explore the search space concurrently [10] [11]. This population-based approach allows a GA to "jump" over barriers in the energy landscape that would trap a local search method, providing a much better chance of locating the global minimum or a very good near-optimal solution in a complex, multi-modal landscape [8].

Table 2: Comparison of Search Algorithm Strategies for Rugged Landscapes

| Algorithm Type | Key Mechanism | Robustness to Local Minima | Computational Burden | Key Assumption |

|---|---|---|---|---|

| Hill-Climbing (Local) | Sequential feature addition/removal | Low | Low (increases linearly) | Feature value is model-independent [8] |

| Exhaustive Search (Global) | Tests all possible combinations | High (guaranteed global optimum) | Prohibitive (increases exponentially) [8] | No assumption [8] |

| Genetic Algorithm (Global) | Population-based stochastic evolution | High | Moderate (configurable) | Features valuable in one model may be valuable in others [8] |

Protocol for GA-Based Cluster Geometry Optimization

This protocol details the application of a genetic algorithm for determining the ground-state geometric configuration of a cluster of N uniformly charged classical particles in 2D, a system known to exhibit an exponential number of local minima [7].

Research Reagent Solutions

Table 3: Essential Computational Reagents and Tools

| Item Name | Function/Description | Application Context |

|---|---|---|

| Potential Energy Function (U) | Defines the system's energy landscape; the function to be minimized. | Core objective function for fitness evaluation. Example: \( U = \sum_{i=1}^{N} \lvert \mathbf{r}_i \rvert^2 + \sum_{i=1}^{N-1}\sum_{j=i+1}^{N} \frac{q_i q_j}{\lvert \mathbf{r}_i - \mathbf{r}_j \rvert} \) for the Coulomb potential [7]. |

| Real-Number Encoding | Chromosomes are vectors of particle coordinates (e.g., [x1, y1, x2, y2, ..., xN, yN]). | Represents the genotype (solution) in the GA [7]. |

| Fitness Function | A function inversely related to the potential energy, U. For minimization, Fitness = -U, or 1/U when U is strictly positive. | Drives selection; higher fitness solutions are more likely to reproduce [11]. |

| Niche Mechanism (Sequential Niche Technique) | A heuristic that penalizes crossover between overly similar solutions. | Encourages population diversity and helps locate multiple minima (global and metastable) in a single run [7]. |

| Corina Classic | Converts textual molecular representations (e.g., SMILES) to 3D geometric coordinates. | Critical for applications in drug development and molecular geometry optimization [9] [12]. |

| CCDC GOLD / AutoDock Vina | Docking software used to evaluate ligand-protein binding interactions. | Provides fitness scores for drug discovery applications where binding affinity is the target [9]. |

Step-by-Step Workflow

Step 1: Problem Encoding

- Represent the geometry of an N-particle cluster as a single chromosome.

- Use a real-number coding scheme where the chromosome is a vector of concatenated 2D coordinates: [x1, y1, x2, y2, ..., xN, yN] [7].

- This direct encoding allows the genetic operators to act directly on the particle positions.
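A minimal sketch of this encoding, assuming particle positions are held as a list of (x, y) tuples; `encode` and `decode` are hypothetical helper names introduced for illustration.

```python
def encode(positions):
    """Flatten [(x1, y1), ..., (xN, yN)] into [x1, y1, ..., xN, yN]."""
    return [coord for xy in positions for coord in xy]

def decode(chromosome):
    """Rebuild the list of (x, y) positions from a flat chromosome."""
    if len(chromosome) % 2 != 0:
        raise ValueError("a 2D chromosome needs an even number of genes")
    return [(chromosome[i], chromosome[i + 1])
            for i in range(0, len(chromosome), 2)]

positions = [(0.0, 1.0), (-0.5, 0.25), (2.0, -1.5)]
chromosome = encode(positions)
print(chromosome)
print(decode(chromosome) == positions)
```

Keeping the genotype as a flat list means crossover and mutation can be written as ordinary list operations, with `decode` needed only when the energy is evaluated.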

Step 2: Initial Population Generation

- Generate an initial population of S chromosomes (individuals), where S is the population size (typically 200-500) [7].

- Initialization can be purely random within the confining domain or "seeded" with known reasonable configurations to accelerate convergence [10] [11].
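One way to realize this step is sketched below. The confining domain is assumed to be a disc of radius `radius` centered at the origin, which the source does not specify, and the population size in the example is reduced for brevity. Any seeded configurations are placed first and the remainder is filled with uniformly sampled individuals.

```python
import math
import random

def random_individual(n_particles, radius, rng):
    """Sample N points uniformly inside a disc and flatten them into a chromosome."""
    chromosome = []
    for _ in range(n_particles):
        r = radius * math.sqrt(rng.random())  # sqrt gives uniform area density
        theta = rng.uniform(0.0, 2.0 * math.pi)
        chromosome += [r * math.cos(theta), r * math.sin(theta)]
    return chromosome

def initial_population(size, n_particles, radius, rng, seeds=()):
    """Start from optional seeded chromosomes, then fill up with random ones."""
    population = [list(s) for s in seeds][:size]
    while len(population) < size:
        population.append(random_individual(n_particles, radius, rng))
    return population

rng = random.Random(42)
population = initial_population(size=20, n_particles=9, radius=3.0, rng=rng)
print(len(population), len(population[0]))
```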

Step 3: Fitness Evaluation

- For each individual in the population, calculate its fitness.

- The fitness function is defined as the negative of the total potential energy, U, of the cluster configuration. Therefore, the optimization goal is to maximize fitness, which corresponds to minimizing energy [7] [11].

- The potential energy U is calculated using the chosen potential (e.g., Coulomb or Lennard-Jones) as defined in the research reagents [7] [6].
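The fitness evaluation can be sketched for the confined Coulomb example from the reagents table, where U is the sum of squared distances from the origin plus the pairwise term q_i q_j / |r_i - r_j|. Equal charges and unit prefactors are assumptions made for illustration, and the function names are hypothetical.

```python
import math

def potential_energy(chromosome, charge=1.0):
    """U = sum_i |r_i|^2 + sum_{i<j} q_i q_j / |r_i - r_j| with equal charges."""
    points = [(chromosome[i], chromosome[i + 1])
              for i in range(0, len(chromosome), 2)]
    confinement = sum(x * x + y * y for x, y in points)
    coulomb = 0.0
    for i in range(len(points) - 1):
        for j in range(i + 1, len(points)):
            # Diverges if two particles coincide; callers should avoid that.
            coulomb += charge * charge / math.dist(points[i], points[j])
    return confinement + coulomb

def fitness(chromosome):
    """Fitness is the negative energy, so maximizing fitness minimizes U."""
    return -potential_energy(chromosome)

# Two unit charges at (-1, 0) and (1, 0): confinement 2.0, repulsion 0.5
print(potential_energy([-1.0, 0.0, 1.0, 0.0]))
```

The double loop costs O(N^2) per evaluation, which is usually the dominant expense of each generation for a pair potential.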

Step 4: Selection

- Select parent solutions for breeding based on their fitness.

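- Use a fitness-proportionate selection method (e.g., roulette wheel selection) where individuals with higher fitness have a proportionally higher probability of being selected [11]. Because selection probabilities must be non-negative, a fitness defined as -U has to be shifted or rescaled to non-negative values before it is used as a selection weight.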

- This step emulates natural selection, pushing the population toward more optimal regions of the energy landscape.
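A hedged sketch of roulette wheel selection follows. Since fitness values defined as negative energies cannot serve directly as selection weights, this version shifts them by the population minimum; that shift is one possible choice among several (ranking or exponential scaling are alternatives) and is an assumption here, not the source's prescription.

```python
import random

def roulette_select(population, fitnesses, k, rng):
    """Draw k parents with probability proportional to shifted fitness."""
    lowest = min(fitnesses)
    # Shift so all weights are non-negative; the tiny offset keeps the
    # total weight positive even when every fitness value is identical.
    weights = [f - lowest + 1e-12 for f in fitnesses]
    return rng.choices(population, weights=weights, k=k)

rng = random.Random(1)
parents = roulette_select(["A", "B", "C"], [-3.0, -2.0, -1.0], k=10, rng=rng)
print(parents)
```

One consequence of shifting by the minimum is that the least fit individual is almost never chosen, which increases selection pressure relative to a gentler rescaling.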

Step 5: Genetic Operations (Reproduction)

- Crossover (Recombination): For each pair of selected parents, create one or two offspring by combining their genetic material. Use a single-point crossover operator with a high probability (pc typically 0.7 - 0.9) [7]. This swaps segments of the coordinate vectors between parents.

- Mutation: Apply mutation to the offspring with a defined probability. A common method is to add a small random perturbation to a randomly selected coordinate (a gene). The probability of mutating a gene (pmg) can range from 0.05 to 0.35 [7]. Mutation introduces new genetic material and helps the population escape local minima.
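The two operators can be sketched on flat coordinate chromosomes as shown below. The default `pc` and `pmg` values fall within the ranges quoted above, while the Gaussian perturbation and its width `sigma` are assumptions, since the source only asks for a small random perturbation.

```python
import random

def single_point_crossover(parent_a, parent_b, rng, pc=0.8):
    """With probability pc, swap the tails of two parents at a random cut point."""
    if rng.random() >= pc or len(parent_a) < 2:
        return parent_a[:], parent_b[:]
    cut = rng.randrange(1, len(parent_a))
    child_1 = parent_a[:cut] + parent_b[cut:]
    child_2 = parent_b[:cut] + parent_a[cut:]
    return child_1, child_2

def mutate(chromosome, rng, pmg=0.1, sigma=0.05):
    """Perturb each gene independently with probability pmg."""
    return [g + rng.gauss(0.0, sigma) if rng.random() < pmg else g
            for g in chromosome]

rng = random.Random(0)
a, b = [0.0] * 6, [1.0] * 6
c1, c2 = single_point_crossover(a, b, rng, pc=1.0)
print(c1, c2)
print(mutate(c1, rng))
```

Note that a cut at an odd index separates the x and y coordinates of one particle; restricting `cut` to even values is a simple variant that keeps particles intact.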

Step 6: Replacement

- Form a new generation by replacing the least fit individuals in the current population with the newly created offspring.

- Alternatively, use a generational replacement strategy where the entire parent population is replaced by the offspring population.
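Both replacement strategies can be sketched in a few lines. The function names are hypothetical, and the sketch assumes fitness values have already been computed for parents and offspring.

```python
def steady_state_replace(population, fitnesses, offspring, offspring_fitnesses):
    """Replace the least fit members of the population with the offspring."""
    n_keep = max(len(population) - len(offspring), 0)
    ranked = sorted(zip(fitnesses, population), key=lambda t: t[0], reverse=True)
    survivors = ranked[:n_keep]
    newcomers = list(zip(offspring_fitnesses, offspring))[:len(population) - n_keep]
    merged = survivors + newcomers
    return [ind for _, ind in merged], [fit for fit, _ in merged]

def generational_replace(offspring, offspring_fitnesses):
    """Discard all parents; the offspring become the next generation."""
    return list(offspring), list(offspring_fitnesses)

pop, fit = steady_state_replace(
    ["a", "b", "c", "d"], [-1.0, -4.0, -2.0, -3.0], ["e"], [-2.5]
)
print(pop, fit)
```

Steady-state replacement never loses the current best individual, whereas the generational variant can, so the latter is often combined with explicit elitism.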

Step 7: Termination Check

- Repeat Steps 3-6 for a predetermined number of generations (Ng, often between 10^6 and 10^7 for complex landscapes) [7].

- Alternatively, terminate the algorithm if the highest fitness in the population shows no significant improvement over a large number of consecutive generations [10].
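The two stopping rules can be combined in a single check, sketched below. `best_history` is assumed to hold the best fitness of each generation so far, and `patience` and `tol` are hypothetical parameter names for the stagnation window and the minimum improvement that still counts as progress.

```python
def should_stop(best_history, generation, max_generations, patience, tol=1e-8):
    """Stop at the generation cap or when the best fitness has stagnated."""
    if generation >= max_generations:
        return True
    if len(best_history) <= patience:
        return False  # not enough history to judge stagnation yet
    improvement = best_history[-1] - best_history[-1 - patience]
    return improvement < tol

print(should_stop([1.0, 2.0, 3.0, 3.0, 3.0, 3.0], generation=5,
                  max_generations=100, patience=3))
print(should_stop([1.0, 2.0, 3.0, 4.0], generation=3,
                  max_generations=100, patience=3))
```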

Step 8: Configuration Recovery & Analysis

- Once terminated, the individual with the highest fitness in the final population represents the best-found estimate for the ground-state configuration.

- To also recover metastable configurations (local minima), the niche mechanism implemented during the run will have maintained sub-populations (species) in different regions of the landscape. These can be identified by clustering the final population based on structural similarity [7].
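A simple way to group the final population by structural similarity is sketched below. It assumes the sorted list of pairwise distances as a structural fingerprint, which is unchanged by rotation, translation, and particle relabeling, and a greedy pass in order of decreasing fitness. Both choices are illustrative assumptions rather than the method of [7], and distinct structures can occasionally share the same distance list.

```python
import math

def fingerprint(chromosome):
    """Sorted pairwise distances of a flat 2D chromosome."""
    pts = [(chromosome[i], chromosome[i + 1])
           for i in range(0, len(chromosome), 2)]
    return sorted(math.dist(p, q) for i, p in enumerate(pts) for q in pts[i + 1:])

def distinct_structures(population, fitnesses, tol=1e-2):
    """Keep the fittest representative of each structurally distinct group."""
    ranked = sorted(zip(fitnesses, population), key=lambda t: t[0], reverse=True)
    representatives = []  # tuples of (fitness, chromosome, fingerprint)
    for fit, chrom in ranked:
        fp = fingerprint(chrom)
        is_new = all(
            max((abs(a - b) for a, b in zip(fp, ref_fp)), default=0.0) > tol
            for _, _, ref_fp in representatives
        )
        if is_new:
            representatives.append((fit, chrom, fp))
    return [(fit, chrom) for fit, chrom, _ in representatives]

square = [0, 0, 1, 0, 1, 1, 0, 1]
moved_square = [5, 5, 6, 6, 6, 5, 5, 6]   # same shape, shifted and relabeled
line = [0, 0, 1, 0, 2, 0, 3, 0]
for fit, chrom in distinct_structures([line, square, moved_square], [-2.0, -1.0, -1.0]):
    print(fit, chrom)
```

The first entry returned is the ground-state estimate; the remaining entries are candidate metastable configurations, which should each be locally relaxed before being reported.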

Workflow Visualization

[Figure: GA Optimization Workflow]

Advanced Techniques for Complex Landscapes

Adaptive Network Embedding with Metadynamics

For extremely rugged landscapes, a more advanced technique involves combining GAs with network embedding and Metadynamics [6].

- The energy landscape is treated as a network where nodes are local minima and edges represent transition pathways.

- Network Embedding maps these nodes into a low-dimensional latent space, preserving kinetic relationships and facilitating visualization and clustering [6].

- Metadynamics is used to enhance sampling. It adds a history-dependent bias potential (e.g., Gaussian terms) to the energy landscape, discouraging the search from repeatedly visiting the same low-energy states and thus promoting exploration of new regions [6].
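The history-dependent bias used in Metadynamics can be sketched as a sum of Gaussians deposited at previously visited values of a collective variable. The one-dimensional collective variable `s`, the Gaussian `height` and `width`, and the class name are assumptions chosen for illustration and do not reproduce the scheme of [6].

```python
import math

class GaussianBias:
    """Sum of Gaussians centered on previously visited collective-variable values."""

    def __init__(self, height=0.2, width=0.3):
        self.height = height
        self.width = width
        self.centers = []

    def deposit(self, s):
        """Record a visit at s by adding one Gaussian hill there."""
        self.centers.append(s)

    def value(self, s):
        return sum(
            self.height * math.exp(-((s - c) ** 2) / (2.0 * self.width ** 2))
            for c in self.centers
        )

def biased_energy(energy, bias, s):
    """Energy seen by the search: true energy plus accumulated bias."""
    return energy(s) + bias.value(s)

bias = GaussianBias()
well = lambda s: s * s
for _ in range(5):
    bias.deposit(0.0)  # repeated visits to the same minimum
print(well(0.0), biased_energy(well, bias, 0.0))
```

Each repeated visit raises the effective energy of the visited state, so a search that ranks candidates by `biased_energy` is gradually pushed out of wells it has already explored.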

This combined approach allows for a hierarchical, multi-scale understanding of the energy landscape, revealing not just the global minimum but also the structure of metastable states and the funnels connecting them.

Adaptive Embedding Visualization

[Figure: Multiscale Landscape Analysis]

The exponential growth of local minima with system size is a fundamental characteristic of cluster geometry optimization problems that dictates the choice of optimization strategy. Traditional local search methods are inadequate for navigating these vast, complex landscapes. Genetic algorithms, with their population-based, stochastic global search approach, provide a robust and effective methodology for locating global minima. The successful application of GAs requires careful configuration, including real-number encoding, appropriate fitness functions, and mechanisms like niching to maintain diversity. For the most challenging problems in molecular design and drug discovery, integrating GAs with advanced techniques like network embedding and Metadynamics offers a powerful, multi-scale strategy for conquering the complexity of rugged energy landscapes and accelerating scientific discovery.

Stochastic vs. Deterministic Global Optimization Methods

Global optimization is a critical tool in scientific domains where researchers seek the best possible solution from a vast set of possibilities. For problems involving cluster geometry optimization—such as determining the most stable configuration of atoms in a nanoparticle or molecular cluster—the energy landscape is typically characterized by numerous local minima, making finding the global minimum exceptionally challenging. Optimization methods are broadly categorized into two paradigms: deterministic and stochastic approaches. Deterministic algorithms, such as DIRECT (Dividing RECTangles), follow a fixed set of rules and will always produce the same result given the same starting point. In contrast, stochastic algorithms, like Genetic Algorithms (GAs), incorporate elements of randomness to explore the search space and do not guarantee identical results across runs [13] [14].

The choice between these paradigms is not trivial and has significant implications for research outcomes, particularly in fields like drug development and materials science. Deterministic methods provide reliability and rigorous search patterns but may become computationally prohibitive for high-dimensional problems. Stochastic methods offer robustness and the ability to escape local minima, making them suitable for complex, noisy, or high-dimensional objective functions, albeit at the cost of guaranteed convergence [14] [15]. This document outlines the core principles, applications, and protocols for employing these methods, with a specific focus on genetic algorithms for cluster geometry optimization.

Theoretical Foundation and Comparative Analysis

Deterministic Global Optimization Methods

Deterministic optimization algorithms are characterized by their reproducible and rule-based search behavior. A prominent family of deterministic algorithms for derivative-free optimization is the DIRECT-type algorithms. The DIRECT algorithm systematically partitions the search domain into hyper-rectangles and samples at their centers, ensuring a balanced exploration of global and local search aspects. This method is particularly effective for bound-constrained problems where the objective function is black-box, meaning derivative information is unavailable or unreliable [14]. Other deterministic approaches include Lipschitzian optimization and branch-and-bound methods, which provide convergence guarantees under specific mathematical conditions [13].

The primary strength of deterministic methods lies in their comprehensive search strategy. They are designed to eventually locate the global optimum by systematically eliminating regions of the search space. However, this thoroughness can become a liability as the dimensionality of the problem increases, leading to an exponential growth in computational cost, a phenomenon often referred to as the "curse of dimensionality" [14].

Stochastic Global Optimization Methods

Stochastic methods utilize probabilistic elements to guide the search process. This category includes a wide range of algorithms, such as:

- Genetic Algorithms (GAs): Inspired by natural selection, GAs maintain a population of candidate solutions that undergo selection, crossover, and mutation to evolve toward better solutions over generations [16] [15].

- Particle Swarm Optimization (PSO): Models social behavior where a population of particles moves through the search space based on their own experience and the group's best-known position [13].

- Bayesian Optimization: Builds a probabilistic model of the objective function to decide where to sample next, making it highly efficient for expensive-to-evaluate functions [13].

- Differential Evolution and Artificial Bee Colony: Other population-based metaheuristics that have shown success in various global optimization problems [13].

The inherent randomness in these algorithms allows them to effectively explore complex search spaces with many local minima, making them less susceptible to being trapped. They are particularly well-suited for problems where the objective function landscape is rugged or poorly understood [15]. However, they do not offer absolute guarantees of finding the global optimum and often require careful parameter tuning to perform effectively.

Quantitative Benchmark Comparison

A large-scale numerical benchmark provides critical insights into the practical performance of these methods. The following table summarizes key findings from a study comparing 64 deterministic and numerous stochastic derivative-free algorithms over 1197 test problems [14].

Table 1: Benchmark Performance of Deterministic vs. Stochastic Solvers

| Metric | Deterministic Algorithms | Stochastic Algorithms |

|---|---|---|

| Typical Strengths | Excellent on low-dimensional problems; strong theoretical convergence guarantees. | Superior performance in higher dimensions; better at handling noisy, complex landscapes. |

| Performance on GKLS-type problems | Generally excellent. | Variable, often less efficient than deterministic solvers. |

| Performance in Higher Dimensions (>10D) | Efficiency and success rates tend to decrease significantly. | Generally more efficient and robust. |

| Computational Cost | Can be high for exhaustive search in high dimensions. | Often lower for finding good solutions in complex spaces. |

| Solution Guarantee | Provide rigorous bounds on solution quality. | Offer probabilistic convergence, no absolute guarantees. |

| Key Example Algorithms | DIRECT, Multilevel Coordinate Search, SNOBFIT. | Genetic Algorithms, Particle Swarm Optimization, Bayesian Optimization. |

This benchmark underscores that the performance of an optimizer is highly dependent on the problem's nature. Deterministic algorithms excel on structured, lower-dimensional problems, while stochastic algorithms show superior scalability and robustness in higher-dimensional, complex scenarios [14].

Application to Cluster Geometry Optimization

The Cluster Geometry Optimization Problem

Cluster geometry optimization is a central problem in chemical physics and materials science. It involves finding the atomic configuration of a cluster (a group of atoms or molecules) that corresponds to the global minimum on its potential energy surface (PES). The problem is NP-hard, and the number of possible stable isomers (local minima) grows exponentially with the number of atoms in the cluster. An exhaustive search is therefore intractable for all but the smallest systems [17] [15]. The problem is often compared to the Traveling Salesman Problem, another NP-hard problem, in which the task is to find the shortest route that visits every city exactly once and returns to the start [15].

Why Genetic Algorithms are a Preferred Stochastic Approach

Genetic Algorithms have emerged as a particularly powerful and popular stochastic method for tackling the cluster geometry optimization problem. Their success can be attributed to several factors:

- Robust Search in Complex Landscapes: GAs are less dependent on the smoothness or differentiability of the PES compared to gradient-based methods. They can effectively navigate a landscape filled with numerous local minima [15].

- Intelligent Search through Crossover: The crossover operation allows for the merging of promising structural motifs from different candidate solutions (parents), potentially creating novel and more stable offspring configurations. This is more efficient than purely random search [17] [15].

- Flexibility in Representation: The atomic coordinates of a cluster can be encoded in various ways within a GA. The most effective encoding is a direct floating-point representation of the atomic positions (the genotype), which is then relaxed to the nearest local minimum (the phenotype). This phenotype-based approach is significantly more efficient than older binary representation schemes [15].

The efficiency of a GA is heavily influenced by the "topology of the objective function." For problems with a highly complex, multi-modal PES like cluster geometry, GAs often outperform simpler local search or hill-climbing routines [15].

Comparative Performance on Real-World Problems

The applicability of these methods extends beyond benchmark functions to real-world scientific and engineering challenges.

Table 2: Application-Based Comparison of Optimization Methods

| Application Domain | Suitable Method Type | Specific Algorithms Used | Reported Outcome |

|---|---|---|---|

| Guidance Trajectory Generation | Hybrid (Stochastic + Deterministic) | PSO, Bayesian Optimization, DIRECT-type | Reliable real-time trajectory generation with diverse solutions was achieved when the optimizer was properly chosen [13]. |

| Nuclear Experiment Design | Stochastic | Genetic Algorithm (Gnowee_multi) | The GA successfully optimized a highly modular neutron source design, leading to a 15-20% predicted uncertainty reduction in a key reactor parameter [18]. |

| Nanoparticle Geometry Optimization | Stochastic | Genetic Algorithm (Phenotype operations) | GAs have been successfully applied to find global minima for model Morse clusters, ionic MgO clusters, and bimetallic "nanoalloy" clusters [17] [15]. |

| Fermentation Medium Development | Stochastic (Multi-objective) | Strength Pareto Evolutionary Algorithm (SPEA) | Effectively optimized 13 medium components with a reduced experimental effort compared to classical design methods [16]. |

Experimental Protocols and Workflows

Protocol 1: Genetic Algorithm for Cluster Geometry Optimization

This protocol details the application of a GA for finding the global minimum energy structure of an atomic or molecular cluster.

1. Problem Definition and Representation:

- Objective Function: Define the objective function, typically the potential energy of the cluster calculated using an empirical potential (e.g., Morse, Lennard-Jones) or a quantum mechanical method (e.g., Density Functional Theory for smaller clusters) [17] [15].

- Representation: Encode a candidate solution. The recommended modern approach is to use a floating-point vector directly representing the 3N Cartesian coordinates of the N atoms in the cluster. Initialization is done by generating a population of random cluster structures within a defined spatial boundary [15].

2. Algorithm Configuration:

- Genetic Operators: Implement phenotype-aware operators.

- Crossover: Cut-and-paste crossover, where a spatially defined segment of atoms from one parent is inserted into another parent's structure, followed by local relaxation [15].

- Mutation: Apply local perturbations, such as displacing a randomly selected atom or a small group of atoms, or performing a small rotation of a molecular subunit [15].

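- Selection: Use tournament selection or fitness-proportional selection to choose parents for the next generation. Because the goal is to minimize energy, the fitness is typically defined as the negative of the cluster's potential energy, shifted or scaled where necessary so that fitness-proportional selection receives non-negative values.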

- Local Relaxation (Lamarckian Learning): After each genetic operation, perform a local energy minimization (e.g., using conjugate gradient method) on the new offspring. The optimized coordinates (phenotype) are then passed on. This dramatically improves GA efficiency [15].

- Parameters: Set population size (e.g., 30-100), number of generations, crossover rate (e.g., 0.8-0.9), and mutation rate (e.g., 0.1-0.2 per individual). These may require tuning for the specific system.

3. Execution and Analysis:

- Run the GA for a predetermined number of generations or until convergence (e.g., no improvement in the best fitness for a certain number of generations).

- Track the lowest-energy structure found throughout the run.

- Perform multiple independent runs with different random seeds to increase confidence in having located the global minimum.
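The problem-definition step of this protocol can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: it assumes a pairwise Lennard-Jones potential in reduced units, a cubic box for random initialization, and hypothetical function names (`lennard_jones_energy`, `random_cluster`, `initial_population`, `fitness`) that do not come from the cited works.

```python
import math
import random


def lennard_jones_energy(coords, epsilon=1.0, sigma=1.0):
    """Total pairwise Lennard-Jones energy of a cluster (reduced units)."""
    energy = 0.0
    n = len(coords)
    for i in range(n - 1):
        for j in range(i + 1, n):
            r = math.dist(coords[i], coords[j])  # interatomic distance
            sr6 = (sigma / r) ** 6
            energy += 4.0 * epsilon * (sr6 * sr6 - sr6)
    return energy


def random_cluster(n_atoms, box_length, rng):
    """Place n_atoms uniformly at random inside a cubic box (assumed boundary)."""
    return [tuple(rng.uniform(0.0, box_length) for _ in range(3))
            for _ in range(n_atoms)]


def initial_population(pop_size, n_atoms, box_length, seed=0):
    """Generate pop_size random clusters; each is a list of (x, y, z) tuples."""
    rng = random.Random(seed)
    return [random_cluster(n_atoms, box_length, rng) for _ in range(pop_size)]


def fitness(coords):
    """Fitness as the negative potential energy, so lower energy is fitter."""
    return -lennard_jones_energy(coords)
```

Random placement can put two atoms almost on top of each other, which gives a very large energy. In practice this is handled by the local relaxation step or by rejecting structures whose shortest interatomic distance falls below a chosen cutoff.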

The following workflow diagram illustrates this protocol:

Protocol 2: Comparative Analysis of Optimizers

This protocol provides a methodology for comparing the performance of different deterministic and stochastic optimizers on a given problem, such as a known cluster geometry.

1. Benchmark Problem Selection:

- Select a set of test problems with known global minima. For clusters, this could include Lennard-Jones clusters (LJ₃, LJ₁₃, LJ₃₈) whose global minima are well-documented [17] [14].

- Alternatively, use a standard test function generator like the GKLS generator to create problems with known characteristics [14].

2. Experimental Setup:

- Algorithms: Choose a representative set of solvers (e.g., DIRECT, a GA, PSO, Bayesian Optimization).

- Parameter Tuning: For stochastic methods, perform a preliminary parameter tuning study to find reasonably good settings for each algorithm on a subset of problems.

- Performance Metrics: Define metrics for comparison:

- Success Rate: The proportion of runs that find the global minimum within a defined error tolerance.

- Average Number of Function Evaluations (NFE): The mean number of times the objective function was evaluated until success. This is a key measure of computational cost.

- Mean Best Fitness: The average of the best solutions found across all runs after a fixed NFE.

3. Execution and Data Collection:

- For each problem and each algorithm, perform a sufficient number of independent runs (e.g., 50-100 for stochastic methods) to gather statistically significant results.

- For deterministic methods, a single run per problem may suffice.

- Record the performance metrics for each run.

4. Data Analysis:

- Perform statistical analysis (e.g., mean, standard deviation) on the collected metrics.

- Use performance profiles or data tables to visually compare the efficiency and robustness of the different algorithms across the problem set.
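The metrics defined in this protocol are straightforward to compute once each run has been reduced to its best objective value and its number of function evaluations. The sketch below assumes that record format and uses a hypothetical helper name, `summarize_runs`; the numbers in the usage example are invented purely to show the calculation and are not measured results.

```python
from statistics import mean, stdev


def summarize_runs(runs, global_min, tol=1e-6):
    """Summarize independent optimizer runs.

    runs: list of (best_value, nfe) tuples, one per run (assumed format).
    global_min: known global minimum of the benchmark problem.
    tol: error tolerance that defines a successful run.
    """
    best_values = [best for best, _ in runs]
    nfe_of_successes = [nfe for best, nfe in runs
                        if abs(best - global_min) <= tol]
    return {
        "success_rate": len(nfe_of_successes) / len(runs),
        # Mean NFE is reported over successful runs only.
        "mean_nfe_success": mean(nfe_of_successes) if nfe_of_successes else None,
        "mean_best": mean(best_values),
        "std_best": stdev(best_values) if len(best_values) > 1 else 0.0,
    }


# Illustrative data only: four runs on a problem whose minimum is -10.0.
example_runs = [(-10.0, 1200), (-10.0, 1800), (-9.2, 5000), (-10.0, 1500)]
summary = summarize_runs(example_runs, global_min=-10.0)
```

Reporting the mean NFE over successful runs only is one common convention. Whichever convention is chosen, it should be stated together with the success rate, because an algorithm that rarely succeeds can otherwise appear cheap.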

The Scientist's Toolkit: Research Reagent Solutions

This section details the essential computational "reagents" and tools required to implement the optimization protocols described above.

Table 3: Essential Research Reagents and Tools for Optimization

| Tool / Resource | Type | Function in Research | Example Use Case |

|---|---|---|---|

| Potential Energy Function | Mathematical Model | Defines the energy of a cluster configuration as a function of atomic coordinates; serves as the objective function. | Morse potential for generic clusters; embedded-atom method (EAM) for metals; DFT for electronic structure accuracy [17] [15]. |

| Global Optimization Library | Software | Provides pre-implemented, tested algorithms for deterministic and stochastic optimization. | DIRECTGOLib for deterministic solvers; custom GA codes or general-purpose packages like Gnowee for stochastic optimization [14] [18]. |

| Local Optimizer | Algorithm | Used for local relaxation within a GA (Lamarckian learning) to quickly find the nearest local minimum from a perturbed structure. | Conjugate gradient method, L-BFGS, or simplex method [15]. |

| High-Performance Computing (HPC) Cluster | Hardware | Provides the computational power needed for expensive function evaluations (e.g., DFT) and for running multiple algorithm instances in parallel. | Parallel fitness evaluation in a GA; running multiple benchmark problems simultaneously [18] [15]. |

| Visualization & Analysis Suite | Software | Used to visualize final cluster geometries, plot convergence graphs, and analyze the results. | VMD or Ovito for molecular visualization; Python with Matplotlib or R for data plotting and analysis. |

The dichotomy between stochastic and deterministic global optimization methods presents researchers with a strategic choice. Deterministic methods offer rigor and reliability for structured, lower-dimensional problems, while stochastic methods, particularly Genetic Algorithms, provide the flexibility and power needed to tackle the complex, high-dimensional landscapes common in cluster geometry optimization and drug design. The extensive numerical benchmarks and real-world applications confirm that there is no single "best" method; the optimal choice is deeply contextual, depending on the problem's dimensionality, complexity, and available computational resources.

The future of optimization in scientific research likely lies in hybrid approaches that leverage the strengths of both paradigms. For instance, a stochastic GA can be used for broad global exploration, while a deterministic local solver refines promising candidates. Furthermore, the integration of machine learning models to create cheap surrogates for expensive objective functions is a growing area of research that can dramatically accelerate both stochastic and deterministic optimization processes. By understanding the principles and protocols outlined in this document, researchers can make informed decisions to effectively deploy these powerful tools in their pursuit of scientific discovery.

Genetic Algorithms (GAs) are sophisticated optimization techniques inspired by Charles Darwin's principle of natural selection [19]. They solve complex problems by simulating the evolutionary processes observed in nature, where populations of organisms adapt to their environment over successive generations through selection, crossover, and mutation. In computational terms, GAs maintain a population of candidate solutions that evolve toward better solutions through strategically applied genetic operators. This approach is particularly valuable for optimizing cluster geometries, where the goal is to find atomic or molecular configurations with minimal energy—a problem often characterized by complex, high-dimensional search spaces with numerous local minima that challenge traditional optimization methods [20].

The fundamental components of GAs (population initialization, fitness evaluation, selection, crossover, and mutation) correspond directly to biological evolutionary mechanisms. This correspondence enables GAs to explore vast and poorly understood search spaces efficiently, which makes them well suited to optimizing atomic clusters of up to a few hundred atoms described by interatomic potential functions [20]. Research has demonstrated that GAs generally outperform other optimization methods for determining minimum energy structures of clusters, including covalent carbon and silicon clusters, close-packed structures such as argon and silver, and complex two-component systems like C-H [20].

Core Operational Principles and Their Biological Correlates

The operational framework of GAs consists of five fundamental components that mirror biological evolution, each playing a critical role in the algorithm's effectiveness for cluster geometry optimization.

Population Initialization and Chromosome Encoding

In biological terms, a population represents a group of individuals within a species. In GAs, the population comprises a set of potential solutions to the optimization problem. Each individual solution is encoded as a chromosome—a string of genes representing the parameters being optimized [21]. For cluster geometry optimization, this typically involves representing the spatial coordinates of atoms within the cluster. The GA process begins with a randomly initialized population of candidate solutions, creating a diverse starting point for the evolutionary process [19].

Advanced implementations often employ domain-specific chromosome encoding schemes that incorporate problem constraints directly into the solution representation. In heterogeneous systems, specialized encoding can enforce compatibility constraints, such as robot-measurement compatibility in multi-robot systems or atomic position constraints in cluster optimization [21]. This targeted initialization ensures feasible solutions while maintaining sufficient diversity to explore the solution space effectively.

Fitness Evaluation

In natural selection, an organism's fitness determines its reproductive success. Similarly, in GAs, a fitness function quantifies how well each candidate solution performs relative to the optimization objective [19]. For cluster geometry optimization, the fitness function typically evaluates the potential energy of atomic configurations, with the objective being to identify structures with minimal energy [20].

The fitness function serves as the primary driver of evolutionary pressure, guiding the population toward optimal regions of the search space. In sophisticated implementations, the fitness evaluation process may be automated, particularly when precise mathematical descriptions of the optimization landscape are difficult to derive analytically [22]. The accuracy and computational efficiency of the fitness function are critical factors determining the overall performance of the GA approach.

Selection

Selection mechanisms in GAs emulate natural selection by favoring individuals with higher fitness scores for reproduction, thereby propagating beneficial traits to subsequent generations [19]. Common selection strategies include:

- Tournament selection: Randomly selects a subset of individuals from the population and chooses the best performer from this subset

- Roulette wheel selection: Assigns selection probabilities proportional to individual fitness scores

- Elitist selection: Automatically preserves a small number of the best-performing individuals unchanged in the next generation

Different selection methods significantly impact the stability and convergence behavior of the optimization process [19]. Elitist approaches, for instance, ensure that the best solutions are not lost between generations, so the best fitness in the population never worsens from one generation to the next, at the potential cost of reduced population diversity.
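The three strategies listed above can be sketched as follows for an energy-minimization problem. The function names are hypothetical, and the conversion from energy to a roulette weight (`E_max - E_i`) is one simple assumption among several possible scalings.

```python
import random


def tournament_select(population, energies, k, rng):
    """Draw k distinct individuals at random and return the lowest-energy one."""
    contenders = rng.sample(range(len(population)), k)
    winner = min(contenders, key=lambda i: energies[i])
    return population[winner]


def roulette_select(population, energies, rng):
    """Fitness-proportional selection with weights E_max - E_i (non-negative)."""
    e_max = max(energies)
    weights = [e_max - e for e in energies]
    if sum(weights) == 0.0:
        # All energies are equal, so fall back to a uniform choice.
        return rng.choice(population)
    return rng.choices(population, weights=weights, k=1)[0]


def elites(population, energies, n_elite):
    """Return the n_elite lowest-energy individuals, best first."""
    order = sorted(range(len(population)), key=lambda i: energies[i])
    return [population[i] for i in order[:n_elite]]
```

With this weighting the highest-energy individual receives zero weight and is never chosen by `roulette_select`. Tournament selection avoids such scaling decisions altogether because it depends only on the ranking of the contenders.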

Crossover (Recombination)

Crossover operations mimic biological reproduction by combining genetic information from two parent chromosomes to produce offspring with characteristics of both parents [19]. This operator enables the algorithm to explore new regions of the search space by recombining promising solution fragments. The crossover rate determines the frequency with which this operation occurs, balancing the exploitation of existing good solutions with the exploration of new combinations.

In cluster optimization, specialized crossover operators must account for the physical constraints of molecular structures, ensuring that offspring solutions represent valid atomic configurations. The design of problem-specific crossover operators is often crucial for achieving high-performance results in complex optimization domains.
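One operator of this kind that is widely used for clusters is cut-and-splice crossover. The sketch below is a simplified variant written for illustration: both parents are centered, a random cutting direction is drawn, and the child takes the atoms lying furthest along that direction from the first parent and the atoms lying furthest against it from the second. The function names and the rule used to keep the atom count fixed are assumptions, not a transcription of any published implementation.

```python
import math
import random


def center(coords):
    """Translate a cluster so that its geometric center is at the origin."""
    n = len(coords)
    c = [sum(atom[k] for atom in coords) / n for k in range(3)]
    return [tuple(atom[k] - c[k] for k in range(3)) for atom in coords]


def random_unit_vector(rng):
    """Draw a direction uniformly on the sphere from three Gaussian samples."""
    while True:
        v = [rng.gauss(0.0, 1.0) for _ in range(3)]
        norm = math.sqrt(sum(x * x for x in v))
        if norm > 1e-12:
            return [x / norm for x in v]


def cut_and_splice(parent_a, parent_b, rng):
    """Combine two parents of equal size (at least two atoms) into one child."""
    n = len(parent_a)
    if len(parent_b) != n or n < 2:
        raise ValueError("parents must have the same size, with at least 2 atoms")
    a, b = center(parent_a), center(parent_b)
    normal = random_unit_vector(rng)

    def height(atom):
        # Signed distance of the atom from the cutting plane through the origin.
        return sum(atom[k] * normal[k] for k in range(3))

    m = rng.randint(1, n - 1)                        # atoms taken from parent A
    top = sorted(a, key=height, reverse=True)[:m]    # upper part of A
    bottom = sorted(b, key=height)[:n - m]           # lower part of B
    return top + bottom
```

Atoms near the seam may end up unphysically close to one another, which is why the child is normally passed through a local relaxation before its fitness is evaluated.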

Mutation

Mutation introduces random modifications to individual chromosomes, maintaining population diversity and enabling the exploration of new solution regions beyond those represented in the initial population [19]. This operator helps prevent premature convergence to local optima by introducing novel genetic material. The mutation rate controls the frequency of these random changes, with appropriate settings balancing exploration and exploitation.

In advanced GA implementations, mutation strategies may evolve during the optimization process. For example, two-phase evolutionary strategies may begin with global mutations to identify promising regions in the search space, then transition to more focused optimizations through semantic mutations and gradient-based refinements [19]. For cluster geometry optimization, mutation operators must generate chemically plausible atomic displacements to maintain physical relevance.
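Two simple mutation operators for clusters are sketched below: a Gaussian displacement of atomic positions and an exchange of two unlike atoms in a multi-component cluster. The function names, the per-atom mutation probability `rate`, and the displacement width `sigma` are illustrative assumptions; suitable values depend on the system and on typical bond lengths.

```python
import random


def displace_atoms(coords, sigma, rate, rng):
    """Shift each atom with probability `rate` by a Gaussian step of width sigma."""
    mutated = []
    for atom in coords:
        if rng.random() < rate:
            atom = tuple(x + rng.gauss(0.0, sigma) for x in atom)
        mutated.append(atom)
    return mutated


def swap_species(species, rng):
    """Exchange the element labels of two atoms of different type, if any exist."""
    swapped = list(species)
    unlike_pairs = [(i, j)
                    for i in range(len(species))
                    for j in range(i + 1, len(species))
                    if species[i] != species[j]]
    if unlike_pairs:
        i, j = rng.choice(unlike_pairs)
        swapped[i], swapped[j] = swapped[j], swapped[i]
    return swapped
```

Keeping `sigma` to a fraction of the nearest-neighbor distance is a common way to keep mutated structures physically reasonable. Larger moves are usually left to crossover or to dedicated operators such as subunit rotation.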

Advanced Methodological Frameworks

Enhanced Genetic Algorithm (EGA) for Complex Optimization

Recent advances in GA methodologies have led to the development of sophisticated frameworks like the Enhanced Genetic Algorithm (EGA), which employs a two-phase optimization approach for complex problems such as multi-robot task allocation [21]. In this architecture:

- Phase 1 employs domain-specific chromosome encoding to assign tasks while enforcing compatibility constraints

- Phase 2 locally refines each robot's path to minimize travel distance and improve load balancing

This bifurcated strategy simultaneously addresses system-level scalability and local optimization, significantly enhancing convergence stability and solution robustness, especially in large-scale instances [21]. For cluster geometry optimization, this approach could be adapted with an initial phase focusing on global cluster topology and a second phase refining atomic positions within that topology.

Hybrid Optimization Frameworks

The GAAPO (Genetic Algorithm Applied to Prompt Optimization) framework demonstrates how GAs can integrate multiple specialized generation strategies within an evolutionary framework [19]. Unlike traditional genetic approaches that rely solely on mutation and crossover operations, hybrid frameworks capitalize on the strengths of diverse techniques, ensuring optimal performance while maintaining detailed records of strategy evolution. This approach highlights the importance of the tradeoff between population size and the number of generations, with both parameters significantly affecting optimization outcomes [19].

Application to Cluster Geometry Optimization

Experimental Protocol for Atomic Cluster Optimization

Objective: Determine the minimum energy structure of atomic clusters using Genetic Algorithms.

Materials and Computational Environment:

- High-performance computing cluster with parallel processing capabilities

- Quantum chemistry software (e.g., Gaussian, VASP) for energy calculations

- Interatomic potential functions specific to the atomic system under study

- Custom GA implementation with domain-specific genetic operators

Procedure:

- Problem Representation: Encode atomic coordinates as chromosomes using floating-point representation for Cartesian coordinates or internal coordinates

- Population Initialization: Generate initial population of cluster structures using:

- Random atomic positions within a defined spatial boundary

- Seed structures from known similar clusters or symmetric arrangements

- A combination of random and heuristic initialization methods

- Fitness Evaluation: For each cluster configuration in the population:

- Perform energy calculation using appropriate quantum mechanical or empirical methods

- Assign fitness score based on potential energy (lower energy = higher fitness)

- Implement parallel evaluation to reduce computational overhead

- Selection Operation: Apply tournament selection with size 3-5 to choose parents for reproduction while maintaining population diversity

- Crossover Operation: Implement geometric crossover operators such as:

- Cut-and-splice crossover combining portions of parent clusters

- Weighted average of atomic coordinates

- Rotationally invariant crossover preserving cluster symmetry

- Mutation Operation: Apply structural mutation operators including:

- Small random displacements of atomic positions (Gaussian perturbation)

- Rotation of cluster subunits

- Exchange of atom positions within the cluster

- Termination Check: Evaluate stopping criteria after each generation:

- Maximum number of generations reached (typically 500-5000)

- Convergence of fitness values (improvement < threshold over N generations)

- Computational budget exhausted

- Result Extraction: Identify the best-performing cluster structure from the final population and perform refined quantum mechanical analysis to confirm stability
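The termination check in the procedure above can be expressed as a small function that is called once per generation. The sketch assumes that the lowest energy found so far is appended to a list after every generation; the function name `should_stop` and its parameters are hypothetical.

```python
def should_stop(best_energy_history, max_generations, patience, threshold,
                evaluations_used=None, max_evaluations=None):
    """Return True when any of the three stopping criteria is met.

    best_energy_history: lowest energy found so far, one entry per generation.
    patience: number of generations over which improvement is measured.
    threshold: minimum energy decrease required over `patience` generations.
    """
    generation = len(best_energy_history)

    # Criterion 1: maximum number of generations reached.
    if generation >= max_generations:
        return True

    # Criterion 2: best energy improved by less than `threshold`
    # over the last `patience` generations.
    if generation > patience:
        improvement = best_energy_history[-patience - 1] - best_energy_history[-1]
        if improvement < threshold:
            return True

    # Criterion 3: computational budget (energy evaluations) exhausted.
    if max_evaluations is not None and evaluations_used is not None:
        if evaluations_used >= max_evaluations:
            return True

    return False
```

When energies come from an expensive method such as DFT, the evaluation budget is often the binding criterion. For cheap empirical potentials, the stagnation test usually triggers first.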

Parameters for Cluster Optimization:

Table 1: Typical Parameter Ranges for Cluster Geometry Optimization Using GAs

| Parameter | Recommended Range | Notes |

|---|---|---|

| Population Size | 50-200 individuals | Larger for more complex clusters |

| Number of Generations | 500-5000 | Depends on convergence behavior |

| Crossover Rate | 0.7-0.9 | Higher rates promote exploration |

| Mutation Rate | 0.01-0.1 per gene | Lower rates for fine-tuning |

| Selection Method | Tournament (size 3-5) | Balances selectivity and diversity |

| Elitism Rate | 1-5% | Preserves best solutions |

Research Reagent Solutions for Computational Experiments

Table 2: Essential Computational Tools and Resources for GA-based Cluster Optimization

| Research Reagent | Function in Experiment | Implementation Notes |

|---|---|---|

| Interatomic Potential Functions | Describes energy landscape of atomic interactions | Choose based on system: Lennard-Jones for noble gases, Tersoff for covalent systems |

| Quantum Chemistry and Simulation Software | Provides energy calculations for fitness evaluation | Gaussian, VASP, ORCA for high accuracy; LAMMPS (a classical molecular dynamics code) for empirical potentials |

| Parallel Computing Framework | Enables simultaneous fitness evaluation of population members | MPI or OpenMP implementation critical for computational efficiency |

| Domain-Specific Genetic Operators | Custom crossover and mutation for chemical structures | Ensures generated clusters remain physically plausible |

| Visualization Software | Analyzes and validates resulting cluster geometries | VMD, Jmol, or custom visualization tools |

| Statistical Analysis Package | Tracks convergence and performance metrics | Custom scripts to monitor diversity and fitness progression |

Visualization of Genetic Algorithm Workflow

Performance Analysis and Benchmarking

Experimental results across diverse optimization domains demonstrate that GAs consistently produce near-optimal solutions. In multi-robot task allocation problems, enhanced genetic algorithms have achieved average optimality gaps below 1.5% while reducing computation times by up to 90% compared to exact mixed integer linear programming approaches [21]. For atomic cluster optimization, GAs have proven to be highly effective tools for determining minimum energy structures, generally outperforming other optimization methods for this specific task [20].

The two-phase enhanced genetic algorithm architecture has shown significant improvements in convergence stability and solution robustness, particularly in large-scale instances [21]. This approach effectively addresses the exploration-exploitation tradeoff that is fundamental to evolutionary algorithms, with the first phase performing broad exploration of the solution space and the second phase focusing on localized refinement.

Genetic Algorithms provide a powerful and biologically-inspired framework for solving complex optimization problems, particularly in domains like cluster geometry optimization where traditional methods struggle with high-dimensional search spaces containing numerous local minima. By mimicking the fundamental principles of natural evolution—population dynamics, fitness-based selection, genetic recombination, and mutation—GAs can efficiently navigate these complex landscapes to identify optimal or near-optimal solutions.

The continuing development of enhanced genetic algorithms with specialized operators, hybrid strategies, and domain-specific implementations further expands the applicability and performance of these methods across scientific and engineering domains. For researchers in computational chemistry and materials science, GAs offer a robust methodology for predicting stable molecular configurations and understanding the fundamental principles governing molecular self-organization.

The Historical Development of GAs in Chemical Physics and Nanoscience

Genetic Algorithms (GAs) represent a powerful class of stochastic global optimization methods inspired by the principles of natural evolution and genetics. In chemical physics and nanoscience, GAs have become indispensable tools for solving one of the most challenging problems: predicting the most stable structures of atomic and molecular clusters. The exponential increase in possible configurations with system size renders this problem computationally intractable for exact methods, placing it among the NP-hard problems [15]. Since their formalization in the 1950s and popularization by John H. Holland in the 1970s, GAs have evolved from general optimization frameworks to sophisticated techniques specifically tailored for navigating the complex potential energy surfaces (PES) of nanoscale systems [1] [15]. This application note traces the historical development of GAs in these fields, provides detailed protocols for their implementation, and highlights key applications from foundational studies to contemporary research.

Historical Trajectory and Key Developments

The application of GAs to geometry optimization problems in chemical physics began in earnest in the 1990s, as researchers sought methods capable of locating global minima on high-dimensional PESs. The fundamental challenge stems from the exponential scaling of local minima with system size, formally described by the relation \( N_{\min}(N) = \exp(\xi N) \), where \( \xi \) is a system-dependent constant [1]. This complexity necessitates intelligent search strategies that balance exploration of the configuration space with exploitation of promising regions.
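Two direct consequences of this relation help convey how quickly the search space grows. They follow from the exponential form alone and require no particular value of the constant:

```latex
\begin{aligned}
N_{\min}(N+1) &= \exp\bigl(\xi (N+1)\bigr) = e^{\xi}\, N_{\min}(N), \\
N_{\min}(2N)  &= \exp(2 \xi N) = \bigl[ N_{\min}(N) \bigr]^{2}.
\end{aligned}
```

Each added atom multiplies the number of local minima by the same factor, and doubling the cluster size squares it. This is why enumeration strategies that are feasible for very small clusters fail rapidly as the size increases.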

Table 1: Historical Timeline of Key GA Developments in Chemical Physics

| Time Period | Key Development | Significance |

|---|---|---|

| 1950s-1970s | Formalization of Genetic Algorithms [15] | Established evolutionary principles as optimization strategy |

| 1990s | Application to Cluster Geometry Optimization [15] | Recognized NP-hard nature of cluster prediction; GA as solution |

| Late 1990s | Phenotype Genetic Operators [15] | Problem-specific operators considering cluster geometry improved efficiency |

| Early 2000s | Floating-Point Representation & Local Relaxation [15] | Enhanced computational efficiency and solution quality |

| 2000s-2010s | Parallelization & Lamarckian Evolution [15] | Enabled study of larger systems via distributed computing |

| 2010s-Present | Hybrid Algorithms (e.g., GA-PSO, GA-DFT) [1] | Combined strengths of multiple global optimization methods |

| 2020s-Present | Integration with Machine Learning & Chaos Theory [23] | Enhanced initial population diversity and search guidance |

A pivotal advancement was the shift from genotype operators (simple bit-string manipulations) to phenotype operators that incorporate physical and chemical insights about nanoparticle geometry. This transition significantly improved inheritance properties, ensuring that offspring structures meaningfully combine parental traits [15]. Subsequent innovations included floating-point representation for continuous variables, local relaxation to refine candidate structures and reduce computational cost, and parallelization strategies for high-performance computing environments [15].

The incorporation of Lamarckian evolution, where locally optimized geometries are encoded back into the genetic population, further enhanced convergence rates [15]. Recent trends focus on hybrid approaches, such as the 2025 New Improved Hybrid Genetic Algorithm (NIHGA) that integrates chaos theory using an improved Tent map to enhance initial population diversity and employs association rules to mine dominant blocks, thereby reducing problem complexity [23]. Similarly, the integration of machine learning techniques with traditional GA frameworks has demonstrated significant potential to guide exploration and accelerate convergence [1].

Core Methodologies and Experimental Protocols

Fundamental Workflow of a Genetic Algorithm for Cluster Optimization

The standard workflow for applying GAs to cluster geometry optimization follows a structured, iterative process designed to emulate natural selection.

Protocol 1: Standard GA for Cluster Geometry Optimization

Representation: Encode the cluster's geometry into a chromosome.

- Method A (Cartesian Coordinates): Directly use the (x, y, z) coordinates of all atoms. Requires careful handling of rotation/translation.

- Method B (Internal Coordinates): Use bond lengths, angles, and dihedrals. More chemically intuitive but more complex to implement.

- Method C (Direct Lattice Encoding): For crystalline nanoparticles, encode unit cell parameters and atomic basis.

Initial Population Generation: Create a diverse set of initial candidate structures (\( N \approx 50 \) to \( 100 \)).

- Pure Random: Place atoms randomly within a defined volume.

- Seeded with Known Motifs: Incorporate common structural motifs (e.g., icosahedral, face-centered cubic fragments for metals) to bias search towards chemically plausible regions.

Fitness Evaluation: Calculate the potential energy of each cluster in the population.

- Low-Cost Model Potentials (for large clusters/screening): Use empirical potentials (e.g., Lennard-Jones, Gupta, Embedded Atom Method).

- High-Accuracy Ab Initio Methods (for final validation/small clusters): Employ Density Functional Theory (DFT), Second-Order Møller–Plesset Perturbation Theory (MP2), or Local MP2 (LMP2) for BSSE-free results [24].

Selection: Choose parents for reproduction based on their fitness (lower energy = higher probability of selection).

- Tournament Selection: Randomly select k individuals and choose the best among them.

- Roulette Wheel / Fitness-Proportionate Selection: Probability of selection is proportional to fitness.

Genetic Operators:

- Crossover (Phenotype): Combine parts of two parent structures to create an offspring.

- Cut-and-Splice: A common method where two clusters are cut and recombined [15].

- Mutation (Phenotype): Introduce random changes to an individual's structure.

- Atom Displacement: Randomly perturb the position of one or more atoms.

- Rotation: Rotate a subgroup of atoms.

- Permutation: Swap the identities of different atoms in an alloy cluster.

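Local Optimization (Lamarckian Learning): Locally relax every new offspring structure using a local minimizer (e.g., Conjugate Gradient, BFGS) before evaluating its fitness. This crucial step simplifies the energy landscape: each candidate is mapped onto the minimum of its basin, so the GA effectively searches over local minima rather than arbitrary geometries [15].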

Replacement: Form the new generation by selecting individuals from the parent and offspring pools. Elitism (carrying the best individual(s) forward unchanged) is often used to preserve found minima.

Termination: Halt the algorithm when a convergence criterion is met (e.g., no improvement in best fitness for >100 generations, or a maximum number of generations is reached).
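The local optimization step of this protocol can be illustrated with a deliberately simple minimizer. The sketch below uses steepest descent with a backtracking step size on a Lennard-Jones cluster in reduced units, as a stand-in for the conjugate gradient or BFGS minimizers named above; the function names and default settings are assumptions chosen for readability, not performance.

```python
import math


def lj_energy_and_gradient(coords):
    """Lennard-Jones energy and its gradient (reduced units, epsilon = sigma = 1)."""
    n = len(coords)
    energy = 0.0
    grad = [[0.0, 0.0, 0.0] for _ in range(n)]
    for i in range(n - 1):
        for j in range(i + 1, n):
            d = [coords[i][k] - coords[j][k] for k in range(3)]
            r2 = sum(x * x for x in d)
            inv6 = 1.0 / r2 ** 3                       # r^-6
            energy += 4.0 * (inv6 * inv6 - inv6)
            # (dE/dr) / r = -24 (2 r^-14 - r^-8)
            coeff = -24.0 * (2.0 * inv6 * inv6 - inv6) / r2
            for k in range(3):
                grad[i][k] += coeff * d[k]
                grad[j][k] -= coeff * d[k]
    return energy, grad


def relax(coords, max_steps=2000, step=0.01, gtol=1e-8):
    """Steepest descent with backtracking; returns (relaxed coords, energy)."""
    x = [list(atom) for atom in coords]
    energy, grad = lj_energy_and_gradient(x)
    for _ in range(max_steps):
        gnorm = math.sqrt(sum(g * g for row in grad for g in row))
        if gnorm < gtol:
            break
        while step > 1e-12:
            trial = [[x[i][k] - step * grad[i][k] for k in range(3)]
                     for i in range(len(x))]
            e_trial, g_trial = lj_energy_and_gradient(trial)
            if e_trial < energy:          # accept only downhill moves
                x, energy, grad = trial, e_trial, g_trial
                step *= 1.2               # cautiously enlarge the step
                break
            step *= 0.5                   # reject and shrink the step
        else:
            break                         # no downhill step found; stop
    return [tuple(atom) for atom in x], energy
```

In a Lamarckian GA the relaxed coordinates returned by `relax` replace the offspring's original coordinates in the population, so later crossover and mutation act on local minima.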

Advanced and Hybrid Protocol: The NIHGA for Complex Systems

Recent research focuses on enhancing GA performance through hybridization. The following protocol is adapted from the 2025 New Improved Hybrid Genetic Algorithm (NIHGA) for complex manufacturing system layout, with principles applicable to chemical clusters [23].

Protocol 2: NIHGA with Chaos and Association Rules

Chaotic Initialization:

- Use an Improved Tent Map to generate the initial population. This chaotic system enhances diversity and distribution uniformity compared to pseudo-random number generators.

- Map the chaotic sequences to the parameter space defining cluster coordinates.

Dominant Block Mining via Association Rules:

- During the run, analyze the population of high-fitness (low-energy) individuals.

- Apply association rule theory to identify frequently occurring structural subunits or "dominant blocks" (e.g., a stable pentagonal ring in a water cluster [24]).

- Construct "artificial chromosomes" that preserve these dominant blocks, effectively reducing the dimensionality and complexity of the problem.

Matched Crossover and Mutation:

- Perform standard genetic operations on the chromosome (the layout string in the original NIHGA formulation), using operators designed to respect the integrity of identified dominant blocks where beneficial.

Adaptive Chaotic Perturbation:

- After the primary genetic operations, apply a small, adaptive perturbation using the chaotic map to the best solution found.

- This step helps the algorithm escape shallow local minima and explore the vicinity of high-quality solutions.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Computational Reagents and Resources for GA-Driven Cluster Optimization

| Item / Resource | Function / Description | Example Applications |

|---|---|---|

| Empirical Potentials (e.g., Lennard-Jones, EAM) | Fast, approximate energy evaluation for large clusters or initial screening. | Structure prediction of rare-gas (Ar, Xe) and metal (Au, Ni) clusters [15]. |

| Ab Initio Methods (DFT, MP2, LMP2) | High-accuracy energy and force calculation for electronic structure and final validation. | Prediction of accurate geometries and energies for water clusters [(H₂O)ₙ] and semiconductor clusters (SiGe) [24] [15]. |

| Local Optimizer (e.g., Conjugate Gradient, BFGS) | Performs local relaxation of candidate structures, a key step in the "Basin-Hopping" paradigm. | Used in every GA cycle to quench structures to the nearest local minimum [15]. |

| NEMO Potential | A refined model potential parameterized against high-level ab initio data. | Accurate modeling of intermolecular interactions in water clusters [24]. |

| Global Optimization Software (e.g., GASP, GMIN) | Pre-packaged software suites implementing various GA and other global optimization methods. | Accelerates protocol setup and provides tested implementations of genetic operators [15]. |

Application Case Studies

Case Study 1: Water Clusters (H₂O)ₙ

The search for the global minimum structures of water clusters is a benchmark problem in chemical physics. In a seminal 1998 study, a global search on a NEMO model potential was combined with LMP2 refinement to optimize the geometries of (H₂O)₅ and (H₂O)₆ [24].

Objective: Locate the global minimum and low-lying local minima of water pentamers and hexamers using a high-level ab initio method (LMP2).

Methodology:

- A NEMO model potential was used for the initial global search.

- The parameters of the NEMO potential were simultaneously optimized against LMP2 single-point energies at minimum structures found.

- The best geometries from this cycle were then used as starting points for full local optimization at the LMP2 level with analytical gradients.

Key Findings:

- For (H₂O)₅, the global minimum was a slightly puckered homodromic ring, with four oxygen atoms nearly coplanar and the fifth (the pivot) shifted out of the plane.

- For (H₂O)₆, both a cage structure and a ring structure were identified as low-energy minima, with the cage being the global minimum at the LMP2 level.

- This simultaneous optimization strategy successfully produced an improved NEMO potential that accurately reproduced the LMP2 minimum structures and their energy ordering.

Case Study 2: Carbon and SiGe Core-Shell Nanoparticles

GAs have been extensively applied to carbon-based systems and semiconductor nanomaterials. A notable application involved a single-parent Lamarckian GA [15].

Objective: Determine the most stable atomic arrangement of carbon clusters (Cₙ) and SiGe core-shell structures.

Methodology:

- A single-parent GA was employed, questioning the necessity of crossover for certain cluster problems.

- Lamarckian learning was implemented: every offspring was locally relaxed, and its optimized phenotype was encoded back into the genotype.

- Phenotype operators were used, including moves that specifically altered bond lengths and angles in a chemically meaningful way.

Key Findings:

- The algorithm successfully located known global minima for small carbon clusters, such as the ring structure for C₁₀.

- For SiGe core-shell clusters, the GA predicted stable configurations where the core and shell elements were segregated to minimize strain and surface energy.

- The study demonstrated that a single-parent GA with efficient phenotype operators could be highly effective, reducing computational overhead associated with managing a large population and crossover operations.

Comparative Performance Analysis

Successive GA variants, from standard genotype GAs to advanced hybrid models, trade implementation simplicity for search efficiency. Table 3 summarizes their reported strengths and limitations.

Table 3: Performance Comparison of GA Variants

| Algorithm Type | Key Strengths | Limitations / Challenges | Reported Efficacy |

|---|---|---|---|

| Standard GA (Genotype) | General-purpose, simple to implement. | Inefficient for complex PES; poor inheritance in bit representation. | Foundational but largely superseded by phenotype variants [15]. |

| Standard GA (Phenotype) | Chemically intuitive operators; higher inheritance fidelity. | Requires problem-specific knowledge to design operators. | Superior efficiency for atomic clusters compared to genotype GA [15]. |

| Lamarckian GA | Dramatically accelerated convergence. | Risk of losing genetic diversity prematurely. | Essential for efficient optimization of nanoparticles [15]. |

| Hybrid NIHGA (Chaos + Rules) | Enhanced diversity; reduces problem complexity. | Increased algorithmic complexity and parameter tuning. | Superior to traditional methods in both accuracy and efficiency [23]. |

| GA-ML Hybrids | Uses learned patterns to guide search; potential for transfer learning. | Requires large datasets for training; risk of bias. | Significant potential to enhance search performance and convergence [1]. |

The historical development of Genetic Algorithms in chemical physics and nanoscience showcases a trajectory of increasing sophistication, driven by the need to solve the computationally demanding problem of cluster geometry optimization. From their origins as general-purpose evolutionary algorithms, GAs have been refined through the introduction of phenotype operators, Lamarckian learning, and parallelization. The current state-of-the-art involves hybrid approaches that integrate chaos theory for initialization and machine learning or data-mining techniques like association rules to intelligently guide the search process. These advanced protocols, such as the NIHGA, demonstrate superior performance by more effectively balancing global exploration and local exploitation on complex potential energy surfaces. As computational power grows and algorithmic innovations continue, GAs are poised to remain a cornerstone method for predicting the structure and properties of matter at the nanoscale.

Building and Applying a Genetic Algorithm for Cluster Optimization

Application Note: Core Components for Cluster Geometry Optimization

This document details the essential components for implementing a Genetic Algorithm (GA) tailored for cluster geometry optimization in computational chemistry and drug development. The primary challenge in this field is efficiently locating the global minimum on a high-dimensional potential energy surface (PES), where the number of local minima grows exponentially with the number of atoms [1]. GAs excel in this domain by mimicking natural selection to evolve a population of candidate structures toward optimality [15]. The following sections elaborate on the critical triumvirate of representation, fitness function, and selection, providing a foundation for a robust GA framework.

Detailed Methodologies and Protocols

Genetic Representation of Molecular Structures

The representation, or encoding, defines how a candidate solution (e.g., a cluster geometry) is represented as an individual chromosome within the GA population. The choice of representation directly influences the design and efficiency of genetic operators [15].

Protocol: Real-Valued Coordinate Representation for Atomic Clusters

- Objective: To encode the 3D geometry of an N-atom cluster for use in a GA.

- Materials: A computational model for energy calculation (e.g., Brenner potential, Density Functional Theory).

- Procedure:

- Chromosome Structure: Represent an individual cluster as a single, one-dimensional array of length 3N.

- Data Encoding: Store the Cartesian coordinates of each atom sequentially in the array:

[x1, y1, z1, x2, y2, z2, ..., xN, yN, zN].

- Initialization: Generate the initial population by creating random arrays. Atomic positions can be randomized within a physically plausible sphere or cube to ensure diverse starting geometries.

- Genetic Operations:

- Phenotype Mutation: Apply small, random displacements to a subset of the atomic coordinates. This mimics atomic vibrations and leads to localized structural changes [15] [25].

- Phenotype Crossover: Implement a cut-and-splice operation between two parent clusters. Each parent is cut by a plane, typically through its center of mass, and complementary fragments from the two parents are joined to create a novel child structure. Atoms within each inherited fragment keep their relative positions, so structural motifs from both parents are combined [15] [25].

- Advantages: This direct, real-value encoding is intuitive and allows for the design of "phenotype" genetic operators that are geometrically meaningful, leading to higher efficiency compared to simple binary "genotype" operators [15].
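The protocol above can be sketched as follows. The helper names are hypothetical, and the crossover is a simplified cut-and-splice variant chosen for brevity: both parents are centered, atoms are ranked along a random direction, and the child takes the k highest-ranked atoms of one parent plus the N - k lowest-ranked atoms of the other, which keeps the atom count fixed. Other variants exist; this one is an assumption of the example.

```python
import math
import random


def encode(coords):
    """Flatten [[x1, y1, z1], ...] into [x1, y1, z1, x2, ...] (length 3N)."""
    return [c for atom in coords for c in atom]


def decode(chromosome):
    """Rebuild the list of [x, y, z] triples from a flat 3N chromosome."""
    return [chromosome[i:i + 3] for i in range(0, len(chromosome), 3)]


def random_cluster(n_atoms, radius, rng):
    """Random atoms inside a sphere, by rejection sampling from the enclosing cube."""
    coords = []
    while len(coords) < n_atoms:
        p = [rng.uniform(-radius, radius) for _ in range(3)]
        if sum(c * c for c in p) <= radius * radius:
            coords.append(p)
    return encode(coords)


def centered(coords):
    """Shift the cluster so that its geometric center sits at the origin."""
    n = len(coords)
    center = [sum(atom[k] for atom in coords) / n for k in range(3)]
    return [[atom[k] - center[k] for k in range(3)] for atom in coords]


def cut_and_splice(parent_a, parent_b, rng):
    """Simplified cut-and-splice for clusters with at least two atoms."""
    a, b = centered(decode(parent_a)), centered(decode(parent_b))
    n = len(a)
    # random unit vector: the normal of the cutting plane
    normal = [rng.gauss(0.0, 1.0) for _ in range(3)]
    length = math.sqrt(sum(c * c for c in normal))
    normal = [c / length for c in normal]

    def height(atom):
        return sum(atom[k] * normal[k] for k in range(3))

    k = rng.randint(1, n - 1)                       # atoms inherited from parent A
    top = sorted(a, key=height, reverse=True)[:k]   # upper fragment of A
    bottom = sorted(b, key=height)[:n - k]          # lower fragment of B
    return encode(top + bottom)
```

Because fragments from different parents may place atoms very close together, the child would normally be passed through a local relaxation before its fitness is evaluated.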

Table 1: Comparison of GA Representation Schemes for Cluster Optimization

| Representation Type | Description | Advantages | Disadvantages |

|---|---|---|---|

| Real-Valued Coordinate [15] [25] | Array of Cartesian coordinates (x,y,z) for each atom. | Intuitive; enables efficient phenotype operators. | May generate physically unrealistic structures during crossover. |

| Binary String [15] [10] | Classical GA representation using bits of 0s and 1s. | Simple to implement; standard operators. | Requires conversion; less efficient for continuous parameters. |

| Internal Coordinates | Based on bond lengths, angles, and dihedrals. | Reduces dimensionality; inherently preserves bonding. | More complex implementation; requires careful constraint handling. |

Designing the Fitness Function

The fitness function is the primary guidance mechanism for the GA, quantitatively evaluating the quality of each candidate solution in the population [26]. For cluster geometry optimization, the objective is to find the most stable structure, which corresponds to the global minimum on the PES [1].

Protocol: Defining a Potential Energy-Based Fitness Function

- Objective: To compute a fitness score that accurately reflects the stability of a candidate cluster geometry.

- Materials: An energy calculator (e.g., an empirical potential or a quantum mechanics code like DFT).

- Procedure:

- Energy Calculation: For a given chromosome (atomic coordinates), compute the total potential energy of the cluster, ( E_{\text{total}} ), using the chosen energy calculator.

- Fitness Assignment: Define the fitness score, ( F ), such that a lower energy corresponds to a higher fitness. A common formulation is ( F = -E_{\text{total}} ). Alternatively, for minimization-only GAs, the fitness can be directly set to ( E_{\text{total}} ), with the goal being its minimization.

- Validation: Ensure the fitness function is computed efficiently, as it is the most computationally expensive part of the algorithm. For complex systems, approximate methods or machine learning potentials may be integrated to speed up evaluation [1].

- Considerations: The function must be defined over the entire genetic representation and must be sensitive enough to distinguish between similar structures. In multi-objective optimization, the fitness function may be extended to a vector of objectives (e.g., energy and dipole moment), with solutions lying on a Pareto front [27].
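To make the energy-to-fitness mapping concrete, the sketch below evaluates a flat 3N chromosome with a Lennard-Jones pair potential and returns F = -E_total. The Lennard-Jones form, the reduced units (`epsilon = sigma = 1`), and the decision to treat coincident atoms as infinitely unfavorable are assumptions for illustration; any other energy calculator could be passed in through the hypothetical `energy_fn` parameter.

```python
import math


def lennard_jones_energy(chromosome, epsilon=1.0, sigma=1.0):
    """Total pair energy of a cluster stored as [x1, y1, z1, ..., xN, yN, zN]."""
    n = len(chromosome) // 3
    energy = 0.0
    for i in range(n):
        xi, yi, zi = chromosome[3 * i:3 * i + 3]
        for j in range(i + 1, n):
            xj, yj, zj = chromosome[3 * j:3 * j + 3]
            r2 = (xi - xj) ** 2 + (yi - yj) ** 2 + (zi - zj) ** 2
            if r2 == 0.0:
                return math.inf                  # coincident atoms: reject outright
            s6 = (sigma * sigma / r2) ** 3       # (sigma / r)^6
            energy += 4.0 * epsilon * (s6 * s6 - s6)
    return energy


def fitness(chromosome, energy_fn=lennard_jones_energy):
    """Higher fitness means lower energy: F = -E_total."""
    return -energy_fn(chromosome)


# A dimer at the pair-potential minimum r = 2^(1/6) * sigma
r_min = 2.0 ** (1.0 / 6.0)
dimer_fitness = fitness([0.0, 0.0, 0.0, r_min, 0.0, 0.0])
```

For the dimer at its equilibrium separation the pair energy is -epsilon, so `dimer_fitness` comes out as 1.0 in reduced units up to floating-point rounding.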

The following diagram illustrates the workflow for evaluating a candidate solution's fitness, which is a core part of the generational GA cycle.

Selection Operators for Maintaining Diversity and Pressure

The selection operator determines which individuals from the current generation are chosen to create the next generation. It applies evolutionary pressure by favoring fitter individuals, while also needing to maintain population diversity to avoid premature convergence [10] [28].

Protocol: Implementing Tournament Selection

- Objective: To stochastically select parent individuals for crossover based on their fitness.

- Materials: A population of candidate solutions with assigned fitness values.

- Procedure:

- Set Tournament Size: Choose a tournament size, ( k ) (typically between 2 and 7).

- Run Tournament: Randomly select ( k ) individuals from the population.

- Choose Winner: The individual with the best fitness among the ( k ) is selected as a parent.

- Repeat: Repeat the Run Tournament and Choose Winner steps until the desired number of parents is selected.

- Advantages: Tournament selection is easy to implement, parallelizable, and provides a tunable selection pressure through the parameter ( k ). A larger ( k ) increases the selection pressure, favoring the best individuals more aggressively.
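The tournament procedure above can be written compactly as shown below. The function names and defaults are illustrative assumptions. Contenders are drawn without replacement within each tournament, and fitness is treated as a quantity to maximize (for example F = -E_total).

```python
import random


def tournament_select(population, fitnesses, k, rng):
    """Draw k distinct individuals at random and return the fittest of them."""
    contenders = rng.sample(range(len(population)), k)
    winner = max(contenders, key=lambda i: fitnesses[i])
    return population[winner]


def select_parents(population, fitnesses, n_parents, k=3, rng=None):
    """Run independent tournaments until n_parents have been chosen."""
    rng = rng or random.Random()
    return [tournament_select(population, fitnesses, k, rng)
            for _ in range(n_parents)]


# Example with labels standing in for cluster geometries
population = ["a", "b", "c", "d", "e"]
fitnesses = [1.0, 5.0, 3.0, 2.0, 4.0]
parents = select_parents(population, fitnesses, n_parents=10, k=2,
                         rng=random.Random(42))
```

With `k` equal to the population size the best individual always wins, while `k = 1` reduces to uniform random selection, which is one way to see how `k` tunes the selection pressure.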

Table 2: Common Selection Operators in Genetic Algorithms

| Operator | Mechanism | Impact on Diversity | Best For |

|---|---|---|---|

| Tournament Selection [10] | Selects best from a random subset of size k. | Tunable diversity via k; generally good. | Most applications; easy parameter tuning. |