Cross-Modality Image Registration: Overcoming Challenges in Biomedical Research and Drug Development

This comprehensive article addresses the critical challenges and solutions in cross-modality medical image registration, targeting researchers, scientists, and drug development professionals.

Abstract

This comprehensive article addresses the critical challenges and solutions in cross-modality medical image registration, targeting researchers, scientists, and drug development professionals. It explores the fundamental hurdles caused by differing physical principles and image characteristics, details modern methodological approaches from traditional algorithms to AI-powered techniques, provides practical troubleshooting and optimization strategies, and establishes frameworks for robust validation and comparative analysis. The content synthesizes current best practices and future directions, essential for advancing multi-modal imaging in precision medicine and therapeutic development.

Understanding Cross-Modality Mismatch: The Core Challenges in Image Registration

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Why does my automated multimodal registration (e.g., MRI-PET) consistently fail with high residual error, even after trying multiple algorithms? A: This usually stems from a fundamental mismatch in intensity distributions. PET measures metabolic activity (a physiological property), while MRI measures proton density or relaxation times (anatomical and physicochemical properties), so there is no intrinsic linear correlation between their voxel intensities. Use a similarity metric designed for multi-modal data, such as mutual information (MI) or normalized mutual information (NMI). Check that the input images overlap sufficiently, and pre-align them manually if necessary. Verify that the optimizer converges by plotting the metric value against the iteration number. If you use a deep learning method, confirm that the training data distribution matches your test data.
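To make the MI and NMI recommendation concrete, here is a minimal numpy sketch that estimates both from a joint intensity histogram. The function name `mutual_information`, the bin count, and the synthetic "second modality" (a non-monotonic remapping of the first image) are illustrative assumptions, not part of any specific toolkit.

```python
import numpy as np

def mutual_information(fixed, moving, bins=32):
    """Estimate (MI, NMI) in nats from the joint intensity histogram."""
    joint, _, _ = np.histogram2d(fixed.ravel(), moving.ravel(), bins=bins)
    pxy = joint / joint.sum()          # joint probability
    px = pxy.sum(axis=1)               # marginal of the fixed image
    py = pxy.sum(axis=0)               # marginal of the moving image

    def entropy(p):
        p = p[p > 0]
        return -np.sum(p * np.log(p))

    hx, hy, hxy = entropy(px), entropy(py), entropy(pxy.ravel())
    mi = hx + hy - hxy
    nmi = (hx + hy) / hxy              # overlap-invariant normalized form
    return mi, nmi

# Synthetic example (assumption): the second image is a non-linear,
# non-monotonic function of the first, plus noise.
rng = np.random.default_rng(0)
anatomy = rng.random((64, 64))
other = np.cos(6.0 * anatomy) + 0.05 * rng.standard_normal(anatomy.shape)

mi_aligned, nmi_aligned = mutual_information(anatomy, other)
mi_shifted, nmi_shifted = mutual_information(anatomy, np.roll(other, 5, axis=0))
print(f"aligned: MI={mi_aligned:.3f}  shifted: MI={mi_shifted:.3f}")
```

In this toy case the metric is higher when the images are aligned than when one is shifted, even though the intensities are not linearly related, which is the property that makes MI-type metrics usable across modalities.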

Q2: During histology-to-in-vivo imaging registration, tissue deformation and tearing make landmarks unreliable. How can I proceed? A: Histological processing (sectioning, fixation, staining) introduces non-linear, non-uniform distortions. You must implement a multi-stage registration pipeline. First, correct intra-histology distortions using elastic registration between serial sections or to a blockface photo. Second, establish a landmark- or feature-based initial alignment to the in-vivo scan (e.g., MRI). Finally, refine with a deformable registration method that tolerates missing correspondences (e.g., SyN in Advanced Normalization Tools, ANTs). Consider using a biorubber embedding protocol to minimize initial physical deformation.

Q3: My CT-PET fusion is good anatomically, but when I add MRI, the alignment is off. What could be the cause? A: This is often due to different patient positioning between scanning sessions and geometric distortions inherent to MRI. The issue is compounded by the fact that PET is often acquired in the same session as CT, while MRI is acquired separately. First, ensure all modalities are registered to a common, high-contrast anatomical reference (typically CT, due to its geometric fidelity). Perform MRI-to-CT registration first, then apply the resulting transform to align PET and MRI. Pay special attention to correcting MR geometric distortion, which is most pronounced in sequences with low readout bandwidth (e.g., echo-planar imaging). Use phantom-based distortion correction maps if your scanner supports them.

Q4: What are the primary quantitative metrics for evaluating the success of cross-modality registration, and what are typical acceptable values? A: The metrics depend on the application (diagnostic vs. radiotherapy). See the table below for common benchmarks.

Table 1: Key Quantitative Metrics for Multi-modal Registration Validation

| Metric | Typical Calculation | Interpretation & Target Values | Best For Modalities |

|---|---|---|---|

| Target Registration Error (TRE) | Mean distance between corresponding target points (e.g., fiducial markers not used to drive the registration) after registration. | < 2 mm for intracranial; < 5 mm for abdominal. Gold standard but often requires invasive markers. | All, especially CT-MRI-PET. |

| Dice Similarity Coefficient (DSC) | Overlap of segmented structures: 2\|A∩B\| / (\|A\| + \|B\|) | 0.7-0.9 indicates good alignment. Requires accurate segmentation. | MRI-CT, MRI-PET (anatomical). |

| Mutual Information (MI) | Measures statistical dependency of voxel intensities. | Higher is better. No universal threshold; use relative improvement from initial alignment. | MRI-PET, CT-PET, Histology-MRI. |

| Mean Square Error (MSE) | Average squared intensity difference. | Only valid for mono-modal registration. Low value indicates good match. | Serial MRI, Histology sections. |
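Two of the metrics in Table 1 are simple enough to compute directly. The following is a minimal sketch, assuming binary masks on a common grid and landmark coordinates already expressed in millimetres; the helper names `dice` and `target_registration_error` are hypothetical.

```python
import numpy as np

def dice(mask_a, mask_b):
    """Dice similarity coefficient 2|A∩B| / (|A| + |B|) for binary masks."""
    a = np.asarray(mask_a, dtype=bool)
    b = np.asarray(mask_b, dtype=bool)
    denom = a.sum() + b.sum()
    if denom == 0:
        return 1.0  # both masks empty: treat as perfect agreement
    return 2.0 * np.logical_and(a, b).sum() / denom

def target_registration_error(points_fixed, points_mapped):
    """Per-landmark Euclidean distance after registration (same units as input)."""
    diff = np.asarray(points_fixed, float) - np.asarray(points_mapped, float)
    return np.linalg.norm(diff, axis=1)

# Toy masks: two 4x4 squares that overlap in half of their area.
a = np.zeros((10, 10), dtype=bool)
b = np.zeros((10, 10), dtype=bool)
a[2:6, 2:6] = True
b[4:8, 2:6] = True
dsc = dice(a, b)

# Toy landmarks (mm): positions in the fixed image and after mapping.
fixed_pts = [[0, 0, 0], [10, 0, 0]]
mapped_pts = [[0, 3, 4], [10, 0, 1]]
tre = target_registration_error(fixed_pts, mapped_pts)
print(dsc, tre, tre.mean())
```

Reporting the per-landmark distribution (mean and standard deviation) rather than only the mean makes outliers visible.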

Q5: Can you provide a standard experimental protocol for validating a new registration algorithm for histology-to-MRI fusion? A: Title: Protocol for Ex Vivo Histology and In Vivo MRI Co-Registration in Rodent Brain.

Objective: To achieve and validate accurate 3D reconstruction of 2D histological sections onto a pre-mortem MRI volume.

Materials: Perfusion setup, paraformaldehyde, sucrose, cryostat, slide scanner, MRI system (e.g., 7T), analysis workstation with Elastix/ANTs/ITK-SNAP.

Procedure:

- Pre-mortem MRI: Acquire a high-resolution T2-weighted 3D scan. Use a stereotactic fiducial system visible on MRI and histology.

- Perfusion & Extraction: Perfuse-fix the animal, extract the brain, and post-fix. Optionally, embed in a customized mold for blockface imaging.

- Cryosectioning: Freeze the tissue. Serially section (e.g., 40 µm thickness) using a cryostat. Collect every section on a slide.

- Staining & Digitization: Stain (e.g., Nissl). Use a high-resolution slide scanner to digitize each section at 1 µm/pixel.

- Pre-processing: (a) Histology stack reconstruction: Align 2D sections to each other using rigid + B-spline deformable registration, referencing the blockface image. (b) MRI pre-processing: Apply skull-stripping and bias field correction.

- Registration: Perform a multi-stage registration from the reconstructed 3D histology volume to the MRI. Start with an initial manual landmark alignment (using fiducials/ventricles). Follow with an affine registration. Finally, apply a high-dimensional deformable registration (e.g., SyN in ANTs) with a mutual information metric.

- Validation: Calculate the Dice coefficient on independently segmented structures (e.g., hippocampal subfields). Measure TRE if fiducial markers were implanted.

The Scientist's Toolkit: Research Reagent & Essential Materials

Table 2: Key Research Reagents & Materials for Multi-modal Registration Experiments

| Item Name | Category | Function / Purpose |

|---|---|---|

| ITK / SimpleITK | Software Library | Open-source toolkit for image registration and segmentation. Provides algorithmic backbone for many custom pipelines. |

| 3D Slicer | Software Platform | Open-source platform for visualization, processing, and multi-modal data fusion. Enables manual correction and plugin development. |

| Elastix / ANTs | Registration Software | Specialized, robust software packages for rigid, affine, and deformable image registration. Considered state-of-the-art for medical images. |

| Multi-modal Image Phantom | Physical Calibration | Physical object with features visible on multiple modalities (MRI, CT, PET). Used for validating scanner alignment and registration algorithms. |

| Radio-opaque Fiducial Markers | Experimental Material | Beads or clips visible on CT/MRI/histology. Implanted in tissue to provide ground truth landmarks for Target Registration Error (TRE) calculation. |

| Cryostat | Laboratory Equipment | For obtaining thin, serial tissue sections essential for creating a 3D volume from 2D histology slides. |

| Whole Slide Scanner | Laboratory Equipment | Digitizes histological slides at high resolution, enabling computational analysis and registration. |

| Paraformaldehyde (PFA) | Chemical Fixative | Preserves tissue structure during perfusion fixation, minimizing histological distortion that complicates registration. |

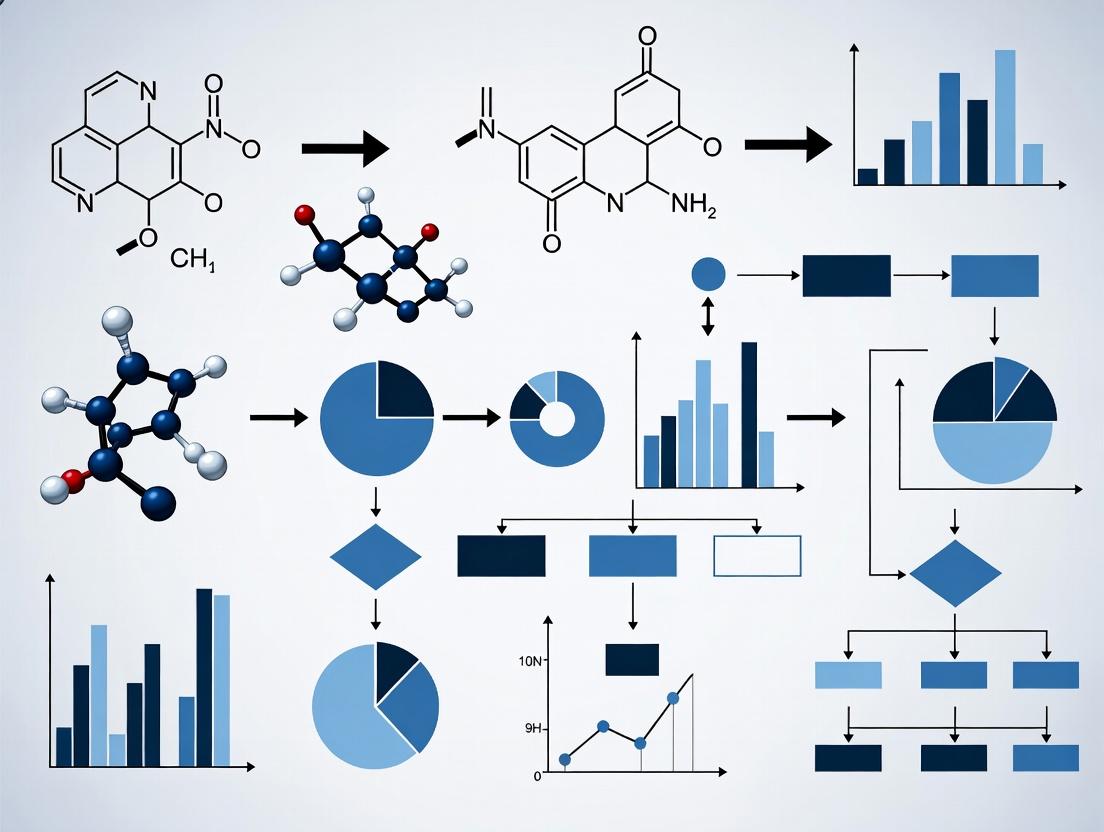

Experimental Workflow & Relationship Diagrams

Title: Core Challenges in Multi-modal Image Fusion

Title: Standard Multi-modal Registration & Validation Workflow

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During multimodal registration of fluorescent microscopy and MRI data, we observe persistent spatial mismatches in the 10-50 µm range, even after affine correction. What is the likely cause and how can we resolve it?

A: This is a classic manifestation of the Physics Gap. The mismatch stems from the fundamental difference in signal origin: fluorescent signals originate from specific molecular tags (e.g., GFP), while MRI signals (e.g., T2-weighted) originate from bulk water proton density and relaxation properties. The resulting images represent different biological and physical spaces. To resolve:

- Protocol: Perform a landmark-based registration using fiducial markers visible in both modalities (e.g., multi-modality fiducial beads filled with a contrast agent like Gadolinium and a fluorophore like Rhodamine).

- Methodology: Embed fiducials in your sample or phantom and acquire images in both modalities. Manually or algorithmically identify the centroids of at least 4 non-coplanar fiducials in each image set. When correspondences are known, compute a rigid or affine transform by least-squares point-based registration; use Iterative Closest Point only when correspondences are unknown. If residual mismatch remains and many well-distributed fiducials are available, add a non-rigid (e.g., B-spline) transformation model. Validate using Target Registration Error (TRE) on fiducials not used in the computation.
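As an illustration of point-based registration with known correspondences, the sketch below fits a least-squares rigid transform (SVD-based, Kabsch-style) to a subset of fiducials and reports TRE on held-out fiducials. The coordinates are synthetic, and the function name `fit_rigid`, the noise level, and the 7/3 split are assumptions chosen only for the example.

```python
import numpy as np

def fit_rigid(src, dst):
    """Least-squares rotation R and translation t such that dst ≈ src @ R.T + t."""
    src_c, dst_c = src.mean(axis=0), dst.mean(axis=0)
    H = (src - src_c).T @ (dst - dst_c)          # 3x3 cross-covariance
    U, _, Vt = np.linalg.svd(H)
    d = np.sign(np.linalg.det(Vt.T @ U.T))       # guard against reflections
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = dst_c - R @ src_c
    return R, t

# Synthetic fiducial centroids in micrometres (assumed values).
rng = np.random.default_rng(0)
fid_mri = rng.uniform(0.0, 1000.0, size=(10, 3))
ang = np.deg2rad(5.0)
R_true = np.array([[np.cos(ang), -np.sin(ang), 0.0],
                   [np.sin(ang),  np.cos(ang), 0.0],
                   [0.0,          0.0,         1.0]])
t_true = np.array([20.0, -10.0, 5.0])
fid_fluo = fid_mri @ R_true.T + t_true + rng.normal(0.0, 0.5, size=(10, 3))

# Fit on 7 fiducials, validate on the 3 that were held out.
R_est, t_est = fit_rigid(fid_mri[:7], fid_fluo[:7])
mapped = fid_mri[7:] @ R_est.T + t_est
tre = np.linalg.norm(mapped - fid_fluo[7:], axis=1)
print(f"hold-out TRE: {tre.mean():.2f} ± {tre.std():.2f} µm")
```

Keeping the validation fiducials out of the fit matters: the residual on the fitting points (fiducial registration error) is generally an optimistic estimate of accuracy elsewhere in the volume.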

Q2: When registering CT (bone structure) with optoacoustic imaging (vasculature), we struggle with intensity-based similarity metrics. Why do mutual information and normalized correlation fail?

A: Each metric assumes some relationship between intensities across modalities: normalized correlation assumes a linear one, and mutual information assumes at least a consistent statistical dependency. Neither holds when the underlying physics (X-ray attenuation vs. optical absorption) measures largely unrelated tissue properties. There is no consistent intensity relationship between bone density and hemoglobin concentration.

- Protocol: Utilize a feature-based registration approach.

- Methodology: For CT, apply a segmentation algorithm (e.g., region-growing or thresholding) to extract the bone surface. For optoacoustic data, apply a vesselness filter (e.g., Frangi filter) to extract the vascular network centerlines. Register the extracted 3D surface mesh to the 3D centerline model using a distance metric, such as minimizing the average distance from vessel points to the bone surface, employing an iterative optimizer like Powell's method.

Q3: In live-cell fluorescence to electron microscopy correlation, we encounter severe deformation between modalities due to sample preparation (chemical fixation, resin embedding, sectioning). How can we account for this?

A: This is a severe, non-uniform deformation introduced by the Physics Gap between live optical states and fixed EM structural states.

- Protocol: Implement a fiducial grid-based non-rigid registration protocol.

- Methodology: Culture cells on a gridded coverslip (e.g., FINDER grid). Acquire live fluorescence images. After chemical fixation, resin embedding, and sectioning, acquire EM images of the same grid coordinates. The grid provides a dense set of corresponding points. Use a thin-plate spline or B-spline transformation model based on these corresponding grid points to warp the fluorescence data onto the EM space. The accuracy is limited by the grid density and section thickness.
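The thin-plate spline step can be sketched in a few lines of numpy. This is a minimal 2D interpolating TPS under the assumption that grid correspondences are already matched one-to-one; `fit_tps` and `apply_tps` are hypothetical helper names, and the grid and displacements are synthetic.

```python
import numpy as np

def _tps_kernel(r):
    """Radial basis U(r) = r^2 log r, with U(0) = 0."""
    out = np.zeros_like(r)
    nz = r > 0
    out[nz] = r[nz] ** 2 * np.log(r[nz])
    return out

def fit_tps(src, dst):
    """Solve for TPS weights mapping 2D control points src onto dst."""
    n = src.shape[0]
    K = _tps_kernel(np.linalg.norm(src[:, None, :] - src[None, :, :], axis=2))
    P = np.hstack([np.ones((n, 1)), src])        # affine part [1, x, y]
    A = np.zeros((n + 3, n + 3))
    A[:n, :n], A[:n, n:], A[n:, :n] = K, P, P.T
    rhs = np.zeros((n + 3, 2))
    rhs[:n] = dst
    return np.linalg.solve(A, rhs)               # rows: n weights, then 3 affine

def apply_tps(params, src, pts):
    """Warp arbitrary 2D points with a fitted TPS."""
    n = src.shape[0]
    U = _tps_kernel(np.linalg.norm(pts[:, None, :] - src[None, :, :], axis=2))
    P = np.hstack([np.ones((pts.shape[0], 1)), pts])
    return U @ params[:n] + P @ params[n:]

# Synthetic 4x4 finder-grid corners (grid units) and their deformed positions.
gx, gy = np.meshgrid(np.linspace(0, 3, 4), np.linspace(0, 3, 4))
grid_lm = np.column_stack([gx.ravel(), gy.ravel()])
grid_em = grid_lm + 0.1 * np.column_stack([np.sin(grid_lm[:, 1]), np.cos(grid_lm[:, 0])])

params = fit_tps(grid_lm, grid_em)
warped_grid = apply_tps(params, grid_lm, grid_lm)
warped_cell = apply_tps(params, grid_lm, np.array([[1.3, 2.7]]))
print(warped_cell)
```

An interpolating spline reproduces every control point exactly, so localization noise in the grid points is passed straight into the warp; with noisy points a regularized (smoothing) variant is the usual alternative.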

Experimental Protocols Cited

Protocol 1: Fiducial-Based Multimodal Registration for Microscopy/MRI

- Sample Preparation: Prepare a phantom (or embed within sample) containing 1% agarose gel with 10 µm diameter multimodal fiducial beads (Gadolinium/Rhodamine) at a density of 50 beads/mL.

- Data Acquisition:

- MRI: Acquire T2-weighted image with 100 µm isotropic voxel size.

- Fluorescence Microscopy: Acquire confocal z-stack with 5 µm slice thickness and 1 µm in-plane resolution.

- Landmark Identification: Use 3D blob detection (LoG filter) to identify bead centroids in each modality. Manually verify correspondence.

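- Transformation Computation: Input the corresponding point sets into a point-based registration algorithm (least-squares fitting when correspondences have been verified; coherent point drift (CPD) or ICP otherwise) to compute a 3D affine transform, then fit a B-spline deformation field to the residual displacements.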

- Validation: Calculate TRE on a hold-out set of 30% of fiducials. Report mean ± std. dev. (e.g., 15.2 ± 4.3 µm).

Protocol 2: Feature-Based CT-Optoacoustic Registration

- Data Acquisition: Acquire whole-body mouse scan.

- CT: Isotropic voxel 100 µm, 80 kVp.

- Optoacoustic: 3D raster scan at 750 nm wavelength, 150 µm resolution.

- Feature Extraction:

- CT: Apply Otsu thresholding. Perform 3D morphological closing. Generate isosurface mesh of the skeleton.

- Optoacoustic: Apply 3D Hessian-based Frangi filter with scales 0.5-2.0 mm. Threshold to obtain binary vessel mask. Skeletonize to 1-voxel wide centerlines.

- Registration: Use an Iterative Closest Point variant (e.g., trimmed ICP) to align the vessel centerline point cloud to the bone surface mesh, allowing for rigid transformation initially, followed by affine.

- Validation: Qualitatively assess overlap of major vascular junctions (e.g., Circle of Willis) with cranial bone landmarks.
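The registration step of Protocol 2 relies on a trimmed ICP variant. The sketch below shows the core loop on synthetic point clouds: nearest-neighbour matching, discarding the worst matches, and a rigid least-squares update. It uses brute-force distances and generic random points rather than real vessel centerlines or a bone mesh, and the names `fit_rigid` and `trimmed_icp` and the 80% keep fraction are assumptions for illustration.

```python
import numpy as np

def fit_rigid(src, dst):
    """Least-squares R, t such that dst ≈ src @ R.T + t (SVD-based)."""
    src_c, dst_c = src.mean(axis=0), dst.mean(axis=0)
    U, _, Vt = np.linalg.svd((src - src_c).T @ (dst - dst_c))
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    return R, dst_c - R @ src_c

def trimmed_icp(moving, reference, keep=0.8, iterations=30):
    """Rigid ICP that uses only the best `keep` fraction of matches per iteration."""
    R_total, t_total = np.eye(3), np.zeros(3)
    current = moving.copy()
    n_keep = max(3, int(keep * len(moving)))
    for _ in range(iterations):
        d = np.linalg.norm(current[:, None, :] - reference[None, :, :], axis=2)
        nearest = d.argmin(axis=1)                       # closest reference point
        dist = d[np.arange(len(current)), nearest]
        best = np.argsort(dist)[:n_keep]                 # trim the worst matches
        R, t = fit_rigid(current[best], reference[nearest[best]])
        current = current @ R.T + t
        R_total, t_total = R @ R_total, R @ t_total + t  # accumulate the update
    return R_total, t_total

# Synthetic test: the moving cloud is the reference under a small rigid offset.
rng = np.random.default_rng(1)
reference = rng.uniform(-5.0, 5.0, size=(40, 3))
ang = np.deg2rad(1.0)
R_true = np.array([[np.cos(ang), -np.sin(ang), 0.0],
                   [np.sin(ang),  np.cos(ang), 0.0],
                   [0.0,          0.0,         1.0]])
t_true = np.array([0.1, -0.1, 0.1])
moving = (reference - t_true) @ R_true                   # inverse of the true map

R_est, t_est = trimmed_icp(moving, reference)
residual = np.linalg.norm(moving @ R_est.T + t_est - reference, axis=1).mean()
print(f"mean residual after ICP: {residual:.2e}")
```

ICP only converges from a reasonable starting pose, which is why the protocol begins with a rigid stage and why a manual or landmark-based pre-alignment is often needed in practice. For large point clouds, a k-d tree would replace the brute-force distance matrix.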

Table 1: Typical Spatial Resolution & Signal Origin by Modality

| Modality | Typical In-Plane Resolution | Signal Physical Origin | Biological Target Correlate |

|---|---|---|---|

| Clinical MRI (T2) | 0.5 - 1.0 mm | Proton density & relaxation (H2O) | Edema, bulk tissue |

| Confocal Fluorescence | 0.2 - 0.5 µm | Photon emission (fluorophore) | Specific protein (e.g., GFP-tagged) |

| Micro-CT | 5 - 50 µm | X-ray linear attenuation | Tissue density (bone > soft tissue) |

| Optoacoustic | 50 - 200 µm | Ultrasound from thermal expansion | Optical absorption (e.g., hemoglobin) |

| Electron Microscopy | 1 - 5 nm | Electron scattering | Ultra-structure |

Table 2: Registration Performance Comparison for Different Methods

| Registration Challenge | Method Used | Reported Target Registration Error (TRE) | Key Limitation |

|---|---|---|---|

| Fluorescence to EM (cell) | Fiducial Grid + Thin-Plate Spline | 70 ± 25 nm (post-sectioning) | Grid fabrication precision, sample deformation |

| MRI to Histology (mouse brain) | Landmark (Allen CCF) + Affine | 150 ± 100 µm | Tissue slicing distortion, contrast mismatch |

| CT to Optoacoustic (mouse) | Vessel-Bone Feature ICP | 0.3 ± 0.1 mm (vascular junctions) | Requires clear segmentable features |

Diagrams

Title: The Physics Gap in Signal Generation

Title: Multimodal Registration Decision Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Bridging the Physics Gap |

|---|---|

| Multi-Modality Fiducial Beads (e.g., Gd/Rhodamine, Quantum Dots) | Provide spatially identical, detectable landmarks across imaging modalities (e.g., MRI, Fluorescence, CT) to enable point-based correspondence. |

| Finder Grid Coverslips (Coordinate-etched) | Provide a physical coordinate system for relocating the same cell or region between light and electron microscopes, mitigating large-scale deformation. |

| Tissue Clearing Reagents (e.g., CUBIC, CLARITY) | Render tissue optically transparent while preserving fluorescence, improving imaging depth and enabling correlation with deep-tissue modalities like MRI. |

| Genetically Encoded EM Tags (e.g., miniSOG, APEX) | Catalyze local polymerization of diaminobenzidine (DAB) into an electron-dense precipitate (miniSOG upon illumination, APEX upon addition of hydrogen peroxide), creating an EM-visible signal at the location of the tagged protein and directly linking optical and structural data. |

| Anisotropic Phantoms | Calibration objects with known, measurable geometry across scales, used to quantify and correct for modality-specific distortions before registration. |

Technical Support Center: Troubleshooting Cross-Modality Image Registration

This support center addresses common issues encountered when registering images from modalities with significant disparities in intensity characteristics, resolution, and noise (e.g., MRI, CT, fluorescence microscopy, electron microscopy). The guidance is framed within research on cross-modality registration challenges.

Troubleshooting Guides

Guide 1: Poor Registration Due to Intensity/Contrast Mismatch

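- Problem: Intensity-based similarity metrics fail because the relationship between intensities in the two images is non-linear (which breaks Sum of Squared Differences and Cross-Correlation) or non-stationary, i.e., it varies across the image (which can break even Mutual Information).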

- Solution A (Preprocessing): Apply modality-specific normalization and intensity standardization. For structural modalities (MRI, CT), use histogram matching to a reference atlas. For functional modalities (fluorescence), apply percentile-based clipping (e.g., 0.5th to 99.5th percentile).

- Solution B (Algorithm Choice): Switch to a similarity metric designed for such disparities. Use Normalized Mutual Information (NMI) over Mutual Information for improved robustness, or employ Cross-Correlation for modalities with a linear intensity relationship. For deep learning-based registration, ensure the training dataset adequately represents the intensity range of your target images.

- Verification: Check the joint histogram of the two images after registration. A well-aligned but disparate pair will show a complex, non-diagonal but structured histogram.
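The percentile-based clipping from Solution A can be sketched as follows. The function name `percentile_normalize` and the synthetic image with a single saturated pixel are assumptions for illustration; the 0.5th and 99.5th percentiles are the values suggested above.

```python
import numpy as np

def percentile_normalize(image, lower=0.5, upper=99.5):
    """Clip intensities to the [lower, upper] percentiles and rescale to [0, 1]."""
    lo, hi = np.percentile(image, [lower, upper])
    if hi <= lo:
        return np.zeros_like(image, dtype=float)  # flat image: nothing to scale
    clipped = np.clip(image, lo, hi)
    return (clipped - lo) / (hi - lo)

# Synthetic fluorescence-like image with one saturated outlier pixel.
rng = np.random.default_rng(0)
raw = rng.normal(100.0, 10.0, size=(64, 64))
raw[0, 0] = 1e6
norm = percentile_normalize(raw)
print(norm.min(), norm.max(), float(np.median(norm)))
```

Without clipping, a min-max rescale of this image would compress almost all useful intensities into a tiny fraction of the range because of the single outlier.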

Guide 2: Resolution and Scale Disparities Causing Loss of Detail

- Problem: Registering a high-resolution image (e.g., confocal microscopy) to a low-resolution one (e.g., MRI) causes blurring or misalignment of fine structures.

- Solution: Implement a multi-resolution registration pyramid. Begin registration at the lowest resolution of both images to capture large-scale alignment, then progressively refine at higher resolutions. The downsampling factor should match the relative resolution ratio.

- Critical Parameter: The number of pyramid levels and the smoothing sigma at each level must be set appropriately. A common starting point is 3 levels with a smoothing sigma of 1.5, 1.0, and 0.5 pixels/voxels (from coarse to fine).

- Experimental Protocol (Multi-resolution):

1. Isotropically resample both images to have similar voxel spacing at the coarsest level.

2. Apply Gaussian smoothing with a kernel defined by the sigma for the current level.

3. Downsample the smoothed image by a factor of 2.

4. Perform rigid/affine registration at this level.

5. Propagate the transform to the next higher resolution level.

6. Repeat steps 2-5 until the original resolution is reached.

7. Optionally, perform a final deformable registration at the native resolution.
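The smoothing and downsampling part of this protocol can be sketched in plain numpy. The sketch builds a coarse-to-fine pyramid only and leaves the registration at each level to your toolkit. It assumes the sigmas (1.5, 1.0, 0.5) are expressed in voxels of each level and that each coarser level halves the resolution; `smooth` and `build_pyramid` are hypothetical helper names.

```python
import numpy as np

def gaussian_kernel(sigma):
    """Normalized 1D Gaussian kernel truncated at 3 sigma."""
    radius = max(1, int(np.ceil(3 * sigma)))
    x = np.arange(-radius, radius + 1)
    k = np.exp(-0.5 * (x / sigma) ** 2)
    return k / k.sum()

def smooth(image, sigma):
    """Separable Gaussian smoothing with edge padding (works for 2D or 3D)."""
    k = gaussian_kernel(sigma)
    pad = len(k) // 2

    def conv1d(v):
        return np.convolve(np.pad(v, pad, mode="edge"), k, mode="valid")

    out = image.astype(float)
    for axis in range(out.ndim):
        out = np.apply_along_axis(conv1d, axis, out)
    return out

def build_pyramid(image, sigmas=(1.5, 1.0, 0.5)):
    """Return pyramid levels ordered from coarse to fine."""
    n = len(sigmas)
    levels = []
    for i, sigma in enumerate(sigmas):
        factor = 2 ** (n - 1 - i)                 # 4, 2, 1 for three levels
        blurred = smooth(image, sigma * factor)   # sigma in full-resolution voxels
        levels.append(blurred[(slice(None, None, factor),) * image.ndim])
    return levels

rng = np.random.default_rng(0)
image = rng.random((64, 64))
pyramid = build_pyramid(image)
print([level.shape for level in pyramid])
```

In a full pipeline the transform estimated at one level initializes the optimizer at the next, so translations expressed in voxels must be rescaled by the downsampling factor (transforms expressed in physical units carry over unchanged).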

Guide 3: High Noise Levels in One Modality Degrading Alignment

- Problem: Noise in one image (e.g., low-light fluorescence, ultrasound) leads to unstable optimization and poor convergence of the registration algorithm.

- Solution A (Denoising): Apply an edge-preserving denoising filter before registration. For Gaussian-like noise, use a non-local means filter (a plain Gaussian filter also reduces noise but blurs edges). For speckle noise (ultrasound), use a median or speckle-reducing anisotropic diffusion filter.

- Solution B (Metric Robustness): Use a similarity metric less sensitive to noise. Normalized Gradient Fields (NGF) focuses on image edges and gradients, which can be more robust to intensity noise than pure intensity-based metrics.
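To show why a gradient-based metric is insensitive to contrast differences, here is a minimal sketch of a Normalized Gradient Fields similarity in numpy: gradients are normalized with a small edge parameter and the squared inner product is averaged over the image. The function names and the value of `eps` are assumptions; production implementations usually choose the edge parameter from the image noise level.

```python
import numpy as np

def normalized_gradient(image, eps=1e-2):
    """Gradient field divided by sqrt(|grad|^2 + eps^2); near zero in flat regions."""
    grads = np.stack(np.gradient(image.astype(float)), axis=0)
    norm = np.sqrt((grads ** 2).sum(axis=0) + eps ** 2)
    return grads / norm

def ngf_similarity(fixed, moving, eps=1e-2):
    """Mean squared inner product of normalized gradients (higher is better)."""
    nf = normalized_gradient(fixed, eps)
    nm = normalized_gradient(moving, eps)
    return float(np.mean((nf * nm).sum(axis=0) ** 2))

# Synthetic disk, its contrast-inverted copy, and a shifted copy.
yy, xx = np.mgrid[0:64, 0:64]
disk = ((yy - 32) ** 2 + (xx - 32) ** 2 < 15 ** 2).astype(float)
inverted = 1.0 - disk
shifted = np.roll(disk, 6, axis=1)

s_same = ngf_similarity(disk, disk)
s_inverted = ngf_similarity(disk, inverted)
s_shifted = ngf_similarity(disk, shifted)
print(s_same, s_inverted, s_shifted)
```

Because the inner product is squared, edges that coincide but have opposite polarity still count as aligned, which is the behaviour wanted when the same boundary is bright-to-dark in one modality and dark-to-bright in the other.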

Frequently Asked Questions (FAQs)

Q1: We are registering pre-clinical µCT (bone structure) to fluorescence imaging (tumor cells). The images share geometry but have no intensity correlation. Which similarity metric should we use? A1: Use Normalized Mutual Information (NMI). It is the standard choice for aligning images from different modalities, as it measures the statistical dependency between image intensities without assuming a linear relationship. Avoid Sum of Squared Differences (SSD) or Cross-Correlation.

Q2: Our deep learning registration model, trained on MRI-CT pairs, performs poorly on new MRI-ultrasound data. What's wrong? A2: This is a classic case of domain shift. The model has learned features specific to the intensity distributions and noise characteristics of the training data. You must fine-tune the model on a (smaller) dataset of MRI-ultrasound pairs or employ domain adaptation techniques during training.

Q3: How do we quantitatively evaluate registration success when there are no manual landmarks? A3: Use modality-independent overlap metrics on segmented structures if available. The Dice Similarity Coefficient (DSC) is most common. If no segmentation exists, use intensity-based metrics post-registration (e.g., NMI value) as an indirect measure, but be cautious as NMI can increase even with physically implausible deformations.

Table 1: Comparison of Similarity Metrics for Cross-Modality Registration

| Metric | Best For | Robust to Noise? | Sensitive to Intensity Contrast? | Computational Cost |

|---|---|---|---|---|

| Normalized Mutual Information (NMI) | Different modalities (e.g., MRI-PET) | Moderate | No | High |

| Mutual Information (MI) | Different modalities | Moderate | No | High |

| Normalized Gradient Fields (NGF) | Modalities with aligned edges | High | No (uses gradients) | Medium |

| Cross-Correlation (CC) | Modalities with linear intensity relationship | Low | Yes | Low |

| Sum of Squared Differences (SSD) | Same modality serial registration | Very Low | Yes | Low |

Table 2: Common Filtering Strategies for Preprocessing

| Filter Type | Primary Use Case | Key Parameter | Effect on Registration |

|---|---|---|---|

| Gaussian Smoothing | General noise reduction, multi-resolution pyramids | Sigma (kernel width) | Reduces noise & detail; stabilizes coarse alignment |

| Non-Local Means | Preserving edges while denoising (MRI, CT) | Filter strength (h) | Reduces noise while maintaining structures for metric calculation |

| Median Filter | Removing speckle noise (Ultrasound) | Kernel radius | Effective for salt-and-pepper/speckle noise without blurring edges excessively |

| Histogram Matching | Standardizing intensity ranges across subjects/modalities | Reference image histogram | Improves performance of intensity-based metrics across cohorts |

Experimental Protocol: Evaluating Metric Performance Under Noise

Objective: To determine the robustness of NMI, NGF, and CC when registering a simulated MRI to a CT image with increasing levels of Gaussian noise.

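Materials: Simulated T1-weighted MRI from a public database (e.g., BrainWeb) and a spatially corresponding CT volume. BrainWeb provides simulated MRI only, so the CT must come from a paired dataset or be synthesized from the tissue labels.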

Methodology:

- Data Preparation: Extract a 3D volume from both modalities. Rigidly misalign the CT volume by a known transform (e.g., 5mm translation, 5° rotation).

- Noise Introduction: Add zero-mean Gaussian noise to the CT image only at 6 levels: 0%, 1%, 3%, 5%, 7%, 10% (relative to max intensity).

- Registration: For each noise level, run a rigid registration (optimizer: gradient descent) to recover the original transform using three separate metrics: NMI, NGF, and CC.

- Evaluation: Record the Target Registration Error (TRE) calculated at 10 predefined landmark positions. Record the final metric value and number of iterations to convergence.

- Analysis: Plot TRE vs. Noise Level for each metric. Plot Iterations to Convergence vs. Noise Level.
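The noise-injection and evaluation steps of this protocol can be sketched without committing to a particular registration package. In the sketch below the registration itself is left out; `add_gaussian_noise`, `rigid_matrix`, and `tre_between` are hypothetical helpers, the volume is random data standing in for CT, and the "recovered" transform is a made-up example.

```python
import numpy as np

def add_gaussian_noise(image, percent, rng):
    """Add zero-mean Gaussian noise with sigma = percent % of the max intensity."""
    sigma = (percent / 100.0) * float(image.max())
    return image + rng.normal(0.0, sigma, size=image.shape)

def rigid_matrix(angle_deg, translation):
    """4x4 homogeneous matrix: rotation about z followed by a translation (mm)."""
    a = np.deg2rad(angle_deg)
    T = np.eye(4)
    T[:3, :3] = [[np.cos(a), -np.sin(a), 0.0],
                 [np.sin(a),  np.cos(a), 0.0],
                 [0.0,        0.0,       1.0]]
    T[:3, 3] = translation
    return T

def tre_between(T_true, T_est, landmarks):
    """Distance between landmark positions under the true and estimated transforms."""
    homog = np.c_[landmarks, np.ones(len(landmarks))]
    diff = (homog @ T_true.T - homog @ T_est.T)[:, :3]
    return np.linalg.norm(diff, axis=1)

rng = np.random.default_rng(0)
ct = rng.random((32, 32, 32)) * 1000.0            # stand-in volume (assumption)
noise_levels = [0, 1, 3, 5, 7, 10]                # percent of max intensity
noisy = {p: add_gaussian_noise(ct, p, rng) for p in noise_levels}

landmarks = rng.uniform(0.0, 100.0, size=(10, 3))  # 10 predefined positions (mm)
T_true = rigid_matrix(5.0, [5.0, 0.0, 0.0])
T_recovered = rigid_matrix(5.0, [5.0, 0.0, 1.0])   # pretend registration output
tre = tre_between(T_true, T_recovered, landmarks)
print(tre.mean())
```

In the actual experiment, `T_recovered` would come from running the registration on each `noisy[p]` with each metric, giving one TRE distribution per metric and noise level for the plots described in the Analysis step.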

Diagrams

Title: Multi-Resolution Registration Workflow

Title: Similarity Metric Decision Tree

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Cross-Modality Validation Experiments

| Item / Reagent | Function in Registration Research | Example Product / Specification |

|---|---|---|

| Multi-Modality Phantom | Provides ground truth data with known geometry and varying contrast for algorithm validation. | Credence Cartridge Radiophantom (for PET/CT/MRI), Microscopy calibration slides with fiducial grids. |

| Fiducial Markers (Implantable) | Creates unambiguous corresponding points in different modalities for calculating Target Registration Error (TRE). | Beckley Gold Fiducial Markers (for MRI/CT), Multi-spectral fluorescent beads (for microscopy). |

| Image Processing Library | Provides tested implementations of registration algorithms, filters, and metrics. | SimpleITK, Elastix, ANTs, ITK (in C++/Python). |

| Deep Learning Framework | Enables development and training of learning-based registration models (e.g., VoxelMorph). | PyTorch, TensorFlow, with add-ons like MONAI for medical imaging. |

| High-Performance Computing (HPC) Access | Necessary for processing large 3D/4D datasets and training deep learning models. | Cluster with GPUs (NVIDIA V100/A100), ≥64 GB RAM, and parallel computing toolkits. |

Technical Support Center: Troubleshooting Cross-Modality Image Registration

FAQs & Troubleshooting Guides

Q1: Why does my MR-to-histology registration fail due to severe intensity inhomogeneity in the MR image?

A: Intensity inhomogeneity (bias field), common in MRI, disrupts intensity-based similarity metrics. Implement a two-step protocol: 1) Apply N4 bias field correction, for example with the N4BiasFieldCorrection tool in ANTs. 2) Switch to the modality-independent neighborhood descriptor (MIND) as the similarity metric, which is robust to local intensity distortions.

Q2: How can I address the large field-of-view (FOV) mismatch between whole-body CT and a targeted PET scan? A: FOV mismatch calls for a masked registration approach. First, generate a body mask from the CT using thresholding and morphological closing. Then use this mask to restrict the region over which the similarity metric is evaluated. In Elastix, a fixed-image mask is supplied with the -fMask command-line option (and a moving-image mask with -mMask).

Q3: My non-rigid registration of ultrasound to MRI yields unrealistic organ deformations. How do I constrain the transformation?

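A: This indicates under-regularization: the transformation model is more flexible than the image information can constrain. Use a B-spline transformation model with explicit control of the penalty term, and increase the weight of the bending energy penalty. In Elastix, for example, this is the TransformBendingEnergyPenalty term added as a second metric, with its weight set through Metric1Weight. Start with a value of 0.01 and increase iteratively. Validate using biomechanically plausible landmark displacements.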
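To build intuition for what the bending energy penalty measures, the following sketch evaluates it numerically on 2D displacement fields using finite differences. It is a diagnostic illustration only, not how any registration package computes the term internally; the function name `bending_energy` and the synthetic fields are assumptions.

```python
import numpy as np

def bending_energy(displacement, spacing=1.0):
    """Mean of u_yy^2 + u_xx^2 + 2*u_xy^2 summed over both displacement components.

    `displacement` has shape (2, H, W): one 2D array per component.
    """
    total = 0.0
    for comp in displacement:
        gy, gx = np.gradient(comp, spacing)       # first derivatives
        gyy, _ = np.gradient(gy, spacing)         # second derivatives
        gxy, gxx = np.gradient(gx, spacing)
        total += np.mean(gyy ** 2 + gxx ** 2 + 2.0 * gxy ** 2)
    return float(total)

yy, xx = np.mgrid[0:64, 0:64].astype(float)
zeros = np.zeros_like(xx)

affine_field = np.stack([0.10 * yy, 0.05 * xx + 0.02 * yy])   # no curvature
smooth_field = np.stack([np.sin(2 * np.pi * xx / 64), zeros])  # gentle bending
wiggly_field = np.stack([np.sin(2 * np.pi * xx / 8), zeros])   # sharp bending

e_affine = bending_energy(affine_field)
e_smooth = bending_energy(smooth_field)
e_wiggly = bending_energy(wiggly_field)
print(e_affine, e_smooth, e_wiggly)
```

Affine motion costs nothing under this penalty, so raising its weight suppresses local wiggles without preventing global scaling or shearing.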

Q4: What is the primary cause of misalignment when registering a population atlas to a single-subject fMRI, and how is it fixed? A: The primary cause is high inter-subject anatomical variability, which a linear transformation cannot capture. Solution: after an initial rigid and affine alignment, employ a diffeomorphic registration such as SyN from ANTs, which preserves topology (for example via antsRegistration with a SyN stage, or the antsRegistrationSyN.sh wrapper script).

Q5: How do I validate registration accuracy for pre-operative MR to intra-operative ultrasound in neurosurgery without ground truth? A: Implement a target registration error (TRE) estimation using manually annotated, clinically relevant landmarks outside the tumor margin. Additionally, compute the mean surface distance (MSD) of segmented ventricles. A TRE < 2mm and MSD < 1.5mm is clinically acceptable for most applications.

| Validation Metric | Calculation | Acceptable Threshold (Neurosurgery) |

|---|---|---|

| Target Registration Error (TRE) | RMS distance of N landmark pairs | < 2.0 mm |

| Mean Surface Distance (MSD) | Average distance between segmented surfaces | < 1.5 mm |

| Dice Similarity Coefficient (DSC) | 2\|A∩B\| / (\|A\| + \|B\|) for binary masks | > 0.85 |
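The first two rows of the table can be computed as below. This is a minimal sketch that assumes surfaces are available as point sets in millimetres and uses a symmetric average for the surface distance; `rms_tre` and `mean_surface_distance` are hypothetical helper names, and brute-force distances are only practical for modest point counts.

```python
import numpy as np

def rms_tre(points_fixed, points_mapped):
    """Root-mean-square distance over N landmark pairs."""
    diff = np.asarray(points_fixed, float) - np.asarray(points_mapped, float)
    return float(np.sqrt(np.mean(np.sum(diff ** 2, axis=1))))

def mean_surface_distance(points_a, points_b):
    """Symmetric mean of nearest-neighbour distances between two surface point sets."""
    d = np.linalg.norm(points_a[:, None, :] - points_b[None, :, :], axis=2)
    return float(0.5 * (d.min(axis=1).mean() + d.min(axis=0).mean()))

# Toy landmarks (mm): errors of 3 mm and 4 mm.
tre_value = rms_tre([[0, 0, 0], [0, 0, 0]], [[3, 0, 0], [0, 4, 0]])

# Toy "ventricle" contours: concentric circles with radii 10 mm and 11 mm.
theta = np.linspace(0.0, 2.0 * np.pi, 90, endpoint=False)
circle = np.column_stack([np.cos(theta), np.sin(theta)])
msd_value = mean_surface_distance(10.0 * circle, 11.0 * circle)

print(f"RMS TRE = {tre_value:.3f} mm, MSD = {msd_value:.3f} mm")
```

Note that the RMS form weights large landmark errors more heavily than a plain mean, so the two should not be compared against the same threshold without saying which one was used.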

Experimental Protocols

Protocol 1: Multi-Modal Atlas Construction (Mouse Brain) Objective: Create a consensus anatomical atlas from serial two-photon (2P), micro-CT (μCT), and block-face histology images. Methodology:

- Sample Preparation: Perfuse-fix mouse brain with paraformaldehyde (PFA). Stain with eosin for μCT contrast.

- Imaging: Acquire μCT scan (isotropic 5μm voxel). Perform serial 2P imaging (ex: 488nm) of surface, then section and stain with Nissl. Image each histology slice.

- Registration Pipeline:

- (a) Histology Stack Co-registration: Align consecutive Nissl slices using rigid registration with normalized cross-correlation.

- (b) 2P-to-Histology: Perform affine then diffeomorphic (SyN) registration using ANTs, using cell bodies as key features.

- (c) μCT-to-Histology: Use landmark-based affine initialization (on vasculature/outline) followed by a symmetric diffeomorphic registration.

- (d) Population Averaging: Register all subjects' μCT data to a chosen template using groupwise registration. Apply the transforms to the histology and 2P data. Intensity-average all modalities.

- Validation: Calculate the Dice coefficient for major anatomical regions (cortex, hippocampus) across 5 subjects after registration.

Protocol 2: CT-PET Registration for Radiotherapy Planning Objective: Achieve accurate alignment of diagnostic CT, planning CT, and FDG-PET for gross tumor volume (GTV) delineation. Methodology:

- Data Acquisition: Acquire free-breathing diagnostic CT, 4D-CT (for motion modeling), and FDG-PET.

- Pre-processing: Reconstruct PET data using ordered-subset expectation maximization (OSEM) with CT-based attenuation correction. Resample all images to isotropic 1 mm voxels using B-spline interpolation.

- Multi-stage Registration:

- (a) Diagnostic CT to Planning CT: Use a deformable registration (e.g., Demons algorithm) to account for patient positioning and physiological differences.

- (b) PET to Diagnostic CT: Perform a rigid (6-degree-of-freedom) registration maximizing mutual information. The PET has low resolution, so non-rigid registration is typically avoided to prevent unrealistic tracer uptake deformation.

- Transform Propagation: Apply the composite transform (b + a) to the PET image to map it into the planning CT space.

- Validation: A physician reviews the fused images and contours the GTV on the planning CT using the PET metabolic information. As a quantitative check, compare standardized uptake values (SUV) in reference organs before and after registration.
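The order of composition in the transform propagation step is easy to get wrong. The sketch below chains two transforms with 4x4 homogeneous matrices and a point-mapping convention; since step (a) is deformable in the protocol, representing it as a matrix is a simplifying assumption made only to show the ordering. The matrix names are hypothetical.

```python
import numpy as np

def translation(tx, ty, tz):
    T = np.eye(4)
    T[:3, 3] = [tx, ty, tz]
    return T

def rotation_z(angle_deg):
    a = np.deg2rad(angle_deg)
    T = np.eye(4)
    T[:2, :2] = [[np.cos(a), -np.sin(a)],
                 [np.sin(a),  np.cos(a)]]
    return T

def map_point(T, point):
    """Apply a 4x4 homogeneous transform to a 3D point."""
    return (T @ np.append(point, 1.0))[:3]

# (b) PET -> diagnostic CT, then (a) diagnostic CT -> planning CT.
T_pet_to_diag = translation(10.0, 0.0, 0.0)
T_diag_to_plan = rotation_z(90.0)

# The transform applied first sits on the right of the matrix product.
T_pet_to_plan = T_diag_to_plan @ T_pet_to_diag
T_wrong_order = T_pet_to_diag @ T_diag_to_plan

p_pet = np.array([1.0, 0.0, 0.0])
p_plan = map_point(T_pet_to_plan, p_pet)
p_wrong = map_point(T_wrong_order, p_pet)
print(p_plan, p_wrong)
```

Many toolkits define image transforms in the opposite direction (from fixed-image coordinates to moving-image coordinates), in which case the composition order for resampling is reversed; check the convention of the package in use before chaining transforms.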

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Material | Function in Cross-Modality Registration |

|---|---|

| Eosin Y Stain | Provides soft-tissue X-ray attenuation for micro-CT, enabling alignment of μCT to optical histology. |

| Gadolinium-based MR Contrast Agent | Enhances vascular and pathological tissue contrast in T1-weighted MRI, improving landmark identification for registration to angiography or histology. |

| DFO-chelated Radioisotopes (e.g., ⁸⁹Zr) | Enables long-half-life PET imaging, allowing serial scans to be registered to a single high-resolution anatomical CT/MR template over time. |

| Optical Clearing Agents (e.g., CUBIC, CLARITY) | Renders tissue transparent for light-sheet or two-photon microscopy, creating 3D volumes that can be registered to pre-clearing MRI/CT data. |

| Fiducial Markers (e.g., ZnS:Ag) | Implantable or surface markers visible across CT, MRI, and PET. Provide ground truth landmarks for validation of registration accuracy. |

Visualizations

Title: General Cross-Modality Registration Workflow

Title: MR-US Registration for Neurosurgical Guidance

The Impact of Poor Registration on Quantitative Analysis and Biomarker Discovery

Technical Support Center: Troubleshooting Poor Image Registration

FAQs & Troubleshooting Guides

Q1: What are the primary quantitative errors introduced by misaligned multi-modal images (e.g., MRI-PET) in a tumor volume study? A1: Poor registration leads to significant errors in standardized uptake value (SUV) calculations and volumetric discrepancies. Key metrics affected include:

- SUVmax Error: Can be overestimated by 25-40% in poorly defined tumor boundaries.

- Tumor Volume Discrepancy: Manual vs. "registered" automated segmentation can vary by 30-50%.

- Spatial Overlap (Dice Score): Dice coefficient can drop below 0.6 with >3mm misalignment, indicating poor overlap.

Q2: Our histology-to-in vivo MRI registration failed. The cellular biomarker patterns do not match the radiomic features. Where did we go wrong? A2: This is a classic cross-modality registration challenge. The failure likely stems from:

- Non-linear Tissue Deformation: Histological processing (fixation, sectioning) causes severe tissue shrinkage and distortion (often 15-30% linear deformation).

- Landmark Paucity: Lack of corresponding, unambiguous anatomical landmarks between the 2D histology slide and the 3D MRI volume.

- Protocol Issue: You may have used a rigid transformation where a non-rigid, deformable model (e.g., B-spline, diffeomorphic) was necessary. Follow Experimental Protocol 1 below.

Q3: After registering longitudinal micro-CT scans of a bone metastasis model, our quantitative bone density measurements are inconsistent. What should we check? A3: Inconsistent voxel intensity values post-registration are common. Troubleshoot in this order:

- Interpolation Artifact: The resampling during transformation can alter original Hounsfield Unit (HU) values. Always perform density measurements on the original native image using the transformation matrix, not on the resampled image.

- Incorrect Similarity Metric: For serial mono-modal CT, use Mean Squared Error (MSE) or Normalized Correlation Coefficient (NCC) as the metric for intensity-based registration. Mutual Information is better for multi-modal cases.

- Background Inclusion: Ensure your region of interest (ROI) mask excludes background/air, which can skew intensity statistics.

Q4: In a multiplex immunofluorescence (mIF) to H&E whole-slide image registration for spatial phenotyping, cell counts are mislocalized. How can we improve accuracy? A4: This is a multi-channel 2D-to-2D registration problem. Implement this check:

- Channel Selection: Use the DAPI channel from mIF and the hematoxylin channel from H&E for registration. They both represent nuclear stains and provide the best feature correlation.

- Control Point Validation: Manually identify at least 10-15 corresponding control points (cell nuclei, vessel junctions) across the entire slide. After automated registration, calculate the Target Registration Error (TRE). If TRE > 2 cell diameters (≈15-20μm), reject the result and use a landmark-guided approach.

- Run Experimental Protocol 2 below.
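
The TRE check described above can be sketched as follows. The control-point coordinates, the estimated transform, and the function name `target_registration_error` are hypothetical; the 15 μm threshold is the lower end of the "2 cell diameters" range quoted in the answer.

```python
import numpy as np

def target_registration_error(fixed_pts, moving_pts, affine):
    """Per-landmark distance after mapping moving points with a 3x3 homogeneous affine."""
    moving_pts = np.asarray(moving_pts, dtype=float)
    homog = np.hstack([moving_pts, np.ones((len(moving_pts), 1))])
    mapped = (homog @ affine.T)[:, :2]
    return np.linalg.norm(mapped - np.asarray(fixed_pts, dtype=float), axis=1)

# Hypothetical control points on the H&E slide, in micrometres.
fixed = np.array([[100.0, 200.0], [850.0, 120.0], [400.0, 900.0], [700.0, 650.0]])
# The same points as seen on the mIF slide, offset by (-30, +40) um.
moving = fixed + np.array([-30.0, 40.0])

# An estimated transform that recovers most, but not all, of the offset.
estimated = np.array([[1.0, 0.0, 25.0],
                      [0.0, 1.0, -28.0],
                      [0.0, 0.0, 1.0]])

tre = target_registration_error(fixed, moving, estimated)
mean_tre = tre.mean()
threshold_um = 15.0  # assumption: lower bound of the quoted 15-20 um range
accept = mean_tre <= threshold_um
print(f"mean TRE = {mean_tre:.1f} um, accept = {accept}")
```

The control points used for this check should be kept separate from any landmarks that drive the registration itself; otherwise the TRE underestimates the true error.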

Summarized Quantitative Data on Registration Impact

Table 1: Impact of Registration Error on Key Quantitative Metrics

| Metric | Good Registration (Dice >0.9) | Poor Registration (Dice <0.7) | Error Magnitude |

|---|---|---|---|

| Tumor Volume (MRI) | 152.3 ± 12.5 mm³ | 108.7 ± 25.1 mm³ | Up to -28.6% |

| SUVmean (PET) | 4.2 ± 0.8 | 5.5 ± 1.3 | Up to +31.0% |

| Radiomic Feature Stability (ICC) | >0.85 (Excellent) | <0.5 (Poor) | High Variability |

| Spatial Transcriptomics Correlation (r) | 0.92 | 0.61 | -33.7% |

Table 2: Recommended Similarity Metrics by Modality Pair

| Modality Pair (Fixed → Moving) | Recommended Similarity Metric | Use Case |

|---|---|---|

| CT → CT (Longitudinal) | Mean Squared Error (MSE) | Bone density tracking |

| MRI (T1) → MRI (T2) | Normalized Cross-Correlation (NCC) | Multi-parametric analysis |

| MRI → PET | Normalized Mutual Information (NMI) | Metabolic-anatomical fusion |

| Histology (H&E) → mIF | Advanced MI or Landmark-based | Cellular spatial analysis |

Experimental Protocols

Experimental Protocol 1: Robust Non-linear Histology-to-MRI Registration Objective: Align a 2D histology section with its corresponding slice from a 3D ex vivo MRI scan.

- Sample Preparation: Embed tissue in agarose for ex vivo MRI. After scanning, section tissue at same plane. Perform H&E staining.

- Preprocessing: Extract hematoxylin channel from H&E slide (color deconvolution). Apply anisotropic diffusion filter to both H&E channel and MRI slice to reduce noise while preserving edges.

- Feature Enhancement: Use vesselness filter or edge detector (e.g., Canny) on both images to highlight tubular structures and boundaries.

- Coarse Alignment (Landmark-based): Manually select 6-8 corresponding landmarks (vessel bifurcations, tissue corners). Perform an affine transformation.

- Fine Alignment (Deformable): Use a B-spline free-form deformation model with Normalized Mutual Information (NMI) as the similarity metric. Optimize with a gradient descent algorithm.

- Validation: Calculate the Target Registration Error (TRE) on a set of validation landmarks not used in step 4. Accept if mean TRE < 100μm.
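
The coarse landmark-based step of this protocol can be sketched as a least-squares affine fit. The landmark coordinates and the "true" transform below are synthetic, and `fit_affine_2d` is a hypothetical helper name; real landmarks carry placement noise, so the fit will not be exact.

```python
import numpy as np

def fit_affine_2d(src, dst):
    """Least-squares 2D affine (3x3 homogeneous matrix) mapping src landmarks onto dst."""
    src = np.asarray(src, dtype=float)
    dst = np.asarray(dst, dtype=float)
    design = np.hstack([src, np.ones((len(src), 1))])       # N x 3
    params, *_ = np.linalg.lstsq(design, dst, rcond=None)   # 3 x 2
    affine = np.eye(3)
    affine[:2, :] = params.T
    return affine

# Synthetic ground truth: mild scaling, shear, and translation.
true_affine = np.array([[1.10, 0.05, 12.0],
                        [-0.03, 0.95, -7.0],
                        [0.0, 0.0, 1.0]])

# Six hypothetical histology landmarks (pixels); they must not be collinear.
hist_pts = np.array([[10, 15], [200, 30], [50, 220],
                     [180, 190], [120, 100], [30, 120]], dtype=float)
mri_pts = (np.hstack([hist_pts, np.ones((6, 1))]) @ true_affine.T)[:, :2]

estimated = fit_affine_2d(hist_pts, mri_pts)
print(np.round(estimated, 3))
```

A 2D affine has six free parameters, so three non-collinear landmark pairs are the bare minimum; using 6-8 pairs, as the protocol suggests, averages out placement error.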

Experimental Protocol 2: Multiplex IF to H&E Registration for Spatial Phenotyping Objective: Accurately map multiplex immunofluorescence (mIF) cell phenotypes onto H&E morphology.

- Image Acquisition: Scan the sequential tissue section for mIF (with DAPI) and the adjacent H&E slide at the same resolution (e.g., 0.5μm/px).

- Nuclear Segmentation (DAPI/Hematoxylin): Segment nuclei from both the DAPI channel (mIF) and the deconvolved hematoxylin channel (H&E) using a watershed or deep learning model (e.g., StarDist).

- Initial Transformation: Calculate the centroids of all nuclei in both images. Use a coherent point drift (CPD) or RANSAC algorithm to find the global affine transform aligning the two point clouds.

- Elastic Refinement: Treat the DAPI image as the moving image and the hematoxylin image as fixed. Employ an elastic registration algorithm (e.g., the Demons algorithm) using the affine result as initialization.

- Apply Transformation: Apply the final composite (affine + elastic) transformation to all mIF channels (CD8, PD-L1, etc.) and their associated single-cell segmentation masks.

- QC & Analysis: Overlay transformed cell phenotype maps onto H&E. Quantify cell densities within histopathologically annotated regions (tumor, stroma, immune clusters).

Visualizations

Title: Registration Quality Impact on Biomarker Pipeline

Title: Error Types and Their Analytical Consequences

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Cross-modality Registration Experiments

| Item | Function & Rationale |

|---|---|

| Agarose (Low-melt) | For embedding tissue samples for ex vivo MRI to maintain anatomical shape and prevent dehydration, creating a stable bridge to histology. |

| Multi-modality Phantom | Physical calibration device with features visible in multiple modalities (e.g., MRI, CT, PET) to validate and tune registration algorithms. |

| DAPI (4',6-diamidino-2-phenylindole) | Nuclear counterstain in fluorescence microscopy; provides the primary channel for alignment to hematoxylin in brightfield histology. |

| Histology Registration Landmark Kit | Contains micro-injection dyes or implantable fiducial markers (e.g., MRI-visible ink) to create artificial, corresponding landmarks between live imaging and histology. |

| Elastix / ANTs Software | Open-source software suites providing a comprehensive collection of advanced, deformable image registration algorithms for research. |

| Whole-Slide Image Aligner | Specialized software (e.g., ASHLAR, QuPath) designed for non-rigid stitching and registration of multi-channel fluorescence and brightfield whole-slide images. |

Bridging the Modality Gap: Modern Techniques and Real-World Applications

Welcome to the Technical Support Center for Cross-Modality Image Registration. This resource, framed within a broader thesis on Cross-Modality Image Registration Challenges, provides troubleshooting and methodological guidance for researchers, scientists, and drug development professionals.

Frequently Asked Questions & Troubleshooting Guides

Q1: In feature-based registration between MRI and histological slices, my extracted feature sets (SIFT, SURF) have extremely low matching rates. What could be the cause? A: This is a common challenge due to the fundamentally different intensity profiles of the modalities. The issue likely stems from the feature descriptor's inability to find consistent gradients/textures across modalities.

- Troubleshooting Steps:

- Preprocessing Check: Apply modality-specific normalization and edge enhancement. For histology, correct for staining artifacts.

- Descriptor Validation: Switch to modality-invariant descriptors like MIND (Modality Independent Neighbourhood Descriptor) or Self-Similarity Context (SSC).

- Metric Evaluation: Use the Inlier Ratio (IR) instead of just match count. A match is correct if it aligns within a defined pixel tolerance (e.g., 5px) after an initial geometric transform estimate (e.g., RANSAC). An IR < 5% indicates descriptor failure.

- Protocol - MIND Descriptor Extraction:

- For each pixel in the fixed (MRI) and moving (histology) image, define a local 6x6 patch.

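- Compute the sum of squared differences (SSD) between the central patch and the patches centred on its four nearest neighbours (up, down, left, right) within a 9x9 search region.

- Convert the four SSD values into the MIND descriptor as exp(-SSD/V), where V is a local variance estimate (e.g., the mean of the four SSDs), and normalize so that the largest entry is 1. This normalization makes the descriptor robust to local contrast changes.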
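
A simplified 2D sketch of this protocol is shown below. It follows the usual MIND formulation (patch SSDs turned into exp(-SSD/V) and normalized by the maximum), but several choices are assumptions made for brevity: a 3x3 patch instead of 6x6, a four-neighbour layout, wrap-around borders via `np.roll`, and the function names themselves.

```python
import numpy as np

def box_sum(arr, radius):
    """Sum over a (2*radius+1)^2 patch around each pixel (wrap-around borders)."""
    out = np.zeros_like(arr)
    for dy in range(-radius, radius + 1):
        for dx in range(-radius, radius + 1):
            out += np.roll(arr, (dy, dx), axis=(0, 1))
    return out

def mind_descriptor(img, radius=1, eps=1e-6):
    """Simplified 4-neighbour MIND descriptor, shape (H, W, 4)."""
    img = np.asarray(img, dtype=np.float64)
    offsets = [(0, 1), (0, -1), (1, 0), (-1, 0)]
    # Patch-wise SSD between the image and each shifted copy.
    ssd = np.stack(
        [box_sum((img - np.roll(img, off, axis=(0, 1))) ** 2, radius) for off in offsets],
        axis=-1,
    )
    variance = ssd.mean(axis=-1, keepdims=True) + eps  # local variance estimate V
    desc = np.exp(-ssd / variance)
    return desc / desc.max(axis=-1, keepdims=True)      # largest entry becomes 1

rng = np.random.default_rng(0)
img = rng.random((32, 32))
desc = mind_descriptor(img)
desc_inverted = mind_descriptor(1.0 - img)  # contrast-inverted copy
print(desc.shape, np.allclose(desc, desc_inverted))
```

Because the descriptor depends only on squared differences inside the image, inverting the contrast leaves it unchanged, which is the property that makes it usable across modalities.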

Q2: My intensity-based registration (using Mutual Information) for CT-MRI alignment converges to a clearly wrong local optimum. How can I improve optimization? A: Local optima occur when the similarity metric's landscape is too complex or the initialization is poor.

- Troubleshooting Steps:

- Initialization Error: Quantify the initial misalignment as the root-mean-square (RMS) distance between 5-10 manually placed landmark pairs. If the initial RMS distance exceeds 15 mm, your initial transform guess is insufficient.

- Multi-Resolution Strategy: Implement a 3-level Gaussian pyramid. Begin registration on the coarsest level (downsampled by a factor of 4), then use the result to initialize the next level.

- Optimizer Tuning: For a standard gradient descent optimizer, reduce the learning rate by a factor of 10 (e.g., from 0.1 to 0.01) and increase the number of iterations per level by 50%.

- Protocol - Multi-Resolution Mutual Information Registration:

- Level 3 (Coarsest): Downsample images to 25% original size. Use a B-spline grid spacing of 20mm. Run optimizer for 100 iterations.

- Level 2: Downsample to 50% original size. Initialize with Level 3 transform. Use a 10mm grid spacing. Run for 150 iterations.

- Level 1 (Original): Use original images. Initialize with Level 2 transform. Use a 5mm grid spacing. Run for 200 iterations.
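
The three-level schedule above can be sketched as a simple image pyramid. For brevity this example downsamples by block averaging rather than true Gaussian smoothing, which is a simplification; the image is random test data and `block_mean_downsample` is a hypothetical helper.

```python
import numpy as np

def block_mean_downsample(img, factor):
    """Downsample a 2D image by averaging non-overlapping factor x factor blocks."""
    img = np.asarray(img, dtype=float)
    if factor == 1:
        return img
    h = (img.shape[0] // factor) * factor
    w = (img.shape[1] // factor) * factor
    cropped = img[:h, :w]
    return cropped.reshape(h // factor, factor, w // factor, factor).mean(axis=(1, 3))

# (downsample factor, B-spline grid spacing in mm, iterations), coarsest first,
# taken from the protocol above.
schedule = [(4, 20, 100), (2, 10, 150), (1, 5, 200)]

image = np.random.default_rng(0).random((256, 256))
pyramid = [block_mean_downsample(image, factor) for factor, _, _ in schedule]

for (factor, spacing_mm, iterations), level in zip(schedule, pyramid):
    print(f"factor {factor}: shape {level.shape}, grid {spacing_mm} mm, {iterations} iterations")
```

In a full pipeline, the transform found at each level would be passed on as the starting point for the next, finer level.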

Q3: My deep learning model for ultrasound-MRI registration generalizes poorly to a new patient dataset, showing high Target Registration Error (TRE). How do I diagnose this? A: This typically indicates domain shift between your training and new data.

- Troubleshooting Steps:

- Quantify Domain Shift: Calculate the Fréchet Inception Distance (FID) between the new ultrasound images and your training dataset. An FID increase > 10 points suggests significant shift.

- Check Data Normalization: Ensure the new data is normalized using the mean and standard deviation from your training set, not the new dataset's statistics.

- Feature Analysis: Use a trained model to extract feature maps from the new data. If activations are saturated (all zeros or max values), the network is seeing an out-of-distribution input.

- Protocol - FID Calculation for Domain Shift:

- Extract feature activations from a pre-trained layer (e.g., from a VGG network) for 2000 random patches from both your training US images and the new US dataset.

- Model the features of each set as multivariate Gaussians (calculate mean μ and covariance Σ).

- Compute FID = ||μ₁ - μ₂||² + Tr(Σ₁ + Σ₂ - 2*sqrt(Σ₁Σ₂)).
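
The formula in the last step can be sketched with NumPy alone. The feature vectors here are random stand-ins for network activations (an assumption; no network is run), and the trace of the matrix square root is obtained from the eigenvalues of Σ₁Σ₂ rather than from an explicit matrix square root.

```python
import numpy as np

def frechet_distance(feats_a, feats_b):
    """Frechet distance between Gaussians fitted to two feature sets (rows = samples)."""
    mu_a, mu_b = feats_a.mean(axis=0), feats_b.mean(axis=0)
    cov_a = np.cov(feats_a, rowvar=False)
    cov_b = np.cov(feats_b, rowvar=False)
    # Tr(sqrt(cov_a cov_b)) equals the sum of the square roots of the eigenvalues
    # of cov_a @ cov_b, which are real and non-negative up to round-off.
    eigvals = np.linalg.eigvals(cov_a @ cov_b)
    trace_sqrt = np.sqrt(np.clip(eigvals.real, 0.0, None)).sum()
    diff = mu_a - mu_b
    return float(diff @ diff + np.trace(cov_a) + np.trace(cov_b) - 2.0 * trace_sqrt)

rng = np.random.default_rng(0)
train_feats = rng.normal(size=(2000, 8))   # stand-in for training-set activations
shifted_feats = train_feats + 0.5          # same spread, shifted mean

fid_same = frechet_distance(train_feats, train_feats)
fid_shifted = frechet_distance(train_feats, shifted_feats)
print(f"identical sets: {fid_same:.4f}, shifted set: {fid_shifted:.4f}")
```

With identical covariances the distance reduces to the squared mean offset, here 8 × 0.5² = 2.0, which gives a quick sanity check before applying the function to real activations.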

Quantitative Performance Comparison of Registration Methods

Table 1: Representative Performance Metrics Across Modalities and Methods (Synthetic & Clinical Data).

| Registration Method | Modality Pair | Mean Target Registration Error (TRE) | Dice Similarity Coefficient (DSC) | Runtime (sec) | Key Limitation |

|---|---|---|---|---|---|

| Feature-Based (SIFT+RANSAC) | MRI - Histology | 2.4 ± 1.1 mm | 0.45 ± 0.12 | ~15 | Poor performance with non-discriminative textures. |

| Intensity-Based (Mutual Info + B-spline) | CT - MRI | 1.8 ± 0.7 mm | 0.78 ± 0.08 | ~120 | Susceptible to local minima, slow. |

| Deep Learning (VoxelMorph) | Ultrasound - MRI | 1.5 ± 0.9 mm | 0.82 ± 0.07 | ~0.5 | Requires large, paired datasets for training. |

| Deep Learning (CycleMorph) | MRI T1w - T2w | 1.2 ± 0.5 mm | 0.89 ± 0.05 | ~0.7 | Complex training, potential for unrealistic deformations. |

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Cross-Modality Registration Experiments.

| Item / Solution | Function / Application |

|---|---|

| ANTs (Advanced Normalization Tools) | Open-source software suite offering state-of-the-art intensity-based (SyN) and multivariate registration. |

| Elastix Toolbox | Modular toolbox for intensity-based medical image registration, featuring extensive parameter optimization. |

| SimpleITK | Simplified layer for the Insight Segmentation and Registration Toolkit (ITK), ideal for prototyping pipelines in Python. |

| VoxelMorph (PyTorch/TF) | Deep learning library for unsupervised deformable image registration; a standard baseline for learning-based methods. |

| 3D Slicer with SlicerElastix | GUI platform integrating Elastix for intuitive experimentation, visualization, and result analysis. |

| MIRTK (Medical Image Registration ToolKit) | Toolkit useful for population-level registration and atlas construction, often used in developmental studies. |

| Histology Registration Toolbox (HIST) | Specialized MATLAB-based tools for non-rigid registration of 2D histology to 3D medical images. |

Experimental Workflows & Methodological Diagrams

Troubleshooting Guides & FAQs

Q1: During multimodal registration, my mutual information (MI) metric plateaus at a low value and does not improve with further optimization steps. What could be wrong? A: This is often caused by insufficient overlap between the source and target image intensities in the joint histogram. Verify your initial alignment. If the misalignment is extreme, the joint histogram becomes sparse, causing MI estimation to fail. Solution: Implement a multi-resolution (coarse-to-fine) registration pyramid. Begin registration on heavily downsampled images to capture gross alignment, then refine at higher resolutions. Ensure your histogram uses a sufficient number of bins (typically 64-128) and that Parzen windowing is applied for robust density estimation.

Q2: My elastic deformation model produces physically unrealistic, non-smooth transformations (e.g., "folding" or "tearing" of the grid). How can I constrain it? A: This indicates that the transformation is no longer diffeomorphic (smooth and invertible). Solution: Incorporate a regularization term directly into your cost function. A common choice is a bending energy penalty, which integrates the squared second derivatives of the displacement field. Adjust the weight (λ) of this penalty term. Start with a high value (e.g., λ=0.5) to enforce very smooth deformations, then gradually reduce it in subsequent optimization rounds if needed. Monitor the Jacobian determinant of the deformation field; negative values indicate folding.
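
A minimal sketch of the Jacobian check, assuming a 2D displacement field stored as two arrays in pixel units (the function name and the test fields are illustrative):

```python
import numpy as np

def jacobian_determinant_2d(disp_y, disp_x):
    """Jacobian determinant of phi(p) = p + u(p) for a 2D displacement field."""
    duy_dy, duy_dx = np.gradient(disp_y)   # derivatives along rows (y), then columns (x)
    dux_dy, dux_dx = np.gradient(disp_x)
    return (1.0 + duy_dy) * (1.0 + dux_dx) - duy_dx * dux_dy

yy, xx = np.mgrid[0:32, 0:32].astype(float)
zeros = np.zeros_like(xx)

det_identity = jacobian_determinant_2d(zeros, zeros)          # no deformation
det_scaled = jacobian_determinant_2d(0.1 * yy, 0.1 * xx)      # 10% uniform expansion
det_folded = jacobian_determinant_2d(zeros, -2.0 * xx)        # x-axis mirrored: folding

folding_fraction = float((det_folded <= 0).mean())
print(det_identity.mean(), det_scaled.mean(), folding_fraction)
```

Values near 1 indicate local volume preservation, values between 0 and 1 compression, and values at or below 0 folding; reporting the fraction of non-positive voxels gives a single number to track while tuning λ.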

Q3: When registering histological (2D) slices to MRI (3D) volumes, the MI algorithm seems insensitive to large contrast inversions. Is this expected? A: Yes, this is a key strength of MI. It measures the statistical dependence between intensities, not their direct correlation. If one image's white matter is bright and the other's is dark, MI can still find the correct alignment because the intensity relationship is consistent across the image. If registration fails despite this, check for non-stationary biases (e.g., staining variations in histology) that break this consistent relationship. Pre-processing with adaptive histogram equalization or N4 bias field correction may be required.

Q4: The computational time for B-spline based elastic registration is prohibitively high for my high-resolution 3D micro-CT images. Any optimization strategies? A: Performance scales with the number of B-spline control points and image voxels. Solutions: 1) Use a multi-resolution approach for the control point grid itself (start with a coarse grid spacing, e.g., 32 voxels, then refine to 16, 8). 2) Restrict computation to a region of interest (ROI) mask. 3) Use stochastic gradient descent (SGD) for optimization, which uses random subsets of voxels per iteration. 4) Leverage GPU acceleration if your registration toolkit (like elastix or ANTs) supports it.
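
To see why coarse-to-fine grid refinement helps, the sketch below counts B-spline coefficients for a hypothetical 512³ volume at the three grid spacings mentioned above. It assumes `ceil(n / spacing) + order` control points per axis, a common convention for cubic B-splines; toolkits differ in how they pad the grid.

```python
import math

def bspline_parameter_count(shape_voxels, spacing_voxels, order=3):
    """Number of B-spline coefficients for a control grid covering the image.

    Assumes ceil(n / spacing) + order control points per axis (toolkit-dependent).
    """
    per_axis = [math.ceil(n / spacing_voxels) + order for n in shape_voxels]
    # One displacement component per spatial axis at every control point.
    return math.prod(per_axis) * len(shape_voxels)

volume_shape = (512, 512, 512)  # hypothetical micro-CT volume
counts = {spacing: bspline_parameter_count(volume_shape, spacing) for spacing in (32, 16, 8)}
for spacing, n_params in counts.items():
    print(f"grid spacing {spacing:2d} voxels -> {n_params:,} parameters")
```

Under these assumptions, halving the spacing multiplies the parameter count by roughly six to seven in 3D, so most iterations are best spent on the coarse grids.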

Q5: How do I choose between Mutual Information (MI) and Normalized Mutual Information (NMI) for my registration? A: NMI is generally preferred as it is more robust to changes in the overlap region. MI's value can fluctuate with the size of the overlapping area, making optimization unstable. NMI is defined as the ratio of the sum of the marginal entropies to the joint entropy, [H(A) + H(B)] / H(A, B), which makes its value far less dependent on overlap size. Use NMI as your default similarity metric for multimodal registration.

Experimental Protocols & Data

Protocol 1: Multi-resolution Mutual Information Registration (Brain MRI to Histology)

- Image Pre-processing: For the MRI (moving image), apply N4 bias field correction. For the histology slide (fixed image), perform color deconvolution (if stained) to extract a single channel of interest, then convert it to a pseudo-density map.

- Pyramid Creation: Create 3 resolution levels for both images. Level 1: Downsample by a factor of 8. Level 2: Downsample by a factor of 4. Level 3: Original resolution.

- Initialization: Perform manual landmark-based affine registration on the coarsest level (Level 1).

- Registration: For each level L from 1 to 3: a. Compute the joint histogram using 64 bins with Parzen windowing. b. Use the L-BFGS-B optimizer to maximize NMI. c. Use the resulting transform as the initial guess for level L+1.

- Evaluation: Compute the Target Registration Error (TRE) at manually annotated landmark pairs not used in initialization.

Protocol 2: Regularized Elastic Registration using B-splines

- Input: Affine-registered images from Protocol 1.

- Parameter Grid: Define a control point grid over the fixed image with an initial spacing of 20 pixels.

- Cost Function: Define the cost function C as: C = -NMI(Ifixed, Iwarped) + λ * R, where R is the bending energy penalty, R = ∫∫ [ (∂²u/∂x²)² + 2(∂²u/∂x∂y)² + (∂²u/∂y²)² ] dx dy, summed over the components of the displacement field u, and λ is the regularization weight.

- Optimization: Use an adaptive stochastic gradient descent optimizer for 500 iterations per resolution level.

- Grid Refinement: After convergence, refine the control point grid spacing by a factor of 2 (e.g., 20px -> 10px), and repeat step 4 with a reduced λ.

- Validation: Visually inspect the deformation field for smoothness and check that the Jacobian determinant remains positive (>0.01) everywhere.
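
The regularized cost in this protocol can be sketched as follows, using the standard thin-plate form of the bending energy evaluated with finite differences. The displacement fields, the NMI value of 1.5, and the function names are illustrative assumptions; in practice the NMI term comes from the similarity metric at each iteration.

```python
import numpy as np

def bending_energy_2d(disp_y, disp_x):
    """Mean squared second derivatives of a 2D displacement field (finite differences)."""
    total = 0.0
    for comp in (disp_y, disp_x):
        d_dy, d_dx = np.gradient(comp)
        d_dyy, d_dyx = np.gradient(d_dy)
        _, d_dxx = np.gradient(d_dx)
        total += np.mean(d_dyy ** 2 + 2.0 * d_dyx ** 2 + d_dxx ** 2)
    return float(total)

def regularized_cost(nmi_value, disp_y, disp_x, lam):
    """C = -NMI + lambda * R, to be minimized."""
    return -nmi_value + lam * bending_energy_2d(disp_y, disp_x)

yy, xx = np.mgrid[0:32, 0:32].astype(float)

# An affine field has no curvature, so it is not penalized.
be_affine = bending_energy_2d(-0.02 * xx, 0.1 * xx + 0.05 * yy)
# A quadratic field bends the grid and is penalized.
be_quadratic = bending_energy_2d(np.zeros_like(xx), 0.01 * xx ** 2)

cost_affine = regularized_cost(1.5, -0.02 * xx, 0.1 * xx + 0.05 * yy, lam=0.1)
print(be_affine, be_quadratic, cost_affine)
```

Because affine motion carries zero bending energy, this penalty only resists local warping, which is why it pairs naturally with an affine pre-alignment.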

Table 1: Comparison of Similarity Metrics for Multimodal Registration

| Metric | Formula | Robust to Contrast Inversion? | Sensitive to Overlap Size? | Typical Use Case |

|---|---|---|---|---|

| Mutual Information (MI) | H(Ifixed) + H(Imoving) - H(Ifixed, Imoving) | Yes | High | General multimodal |

| Normalized MI (NMI) | [H(Ifixed) + H(Imoving)] / H(Ifixed, Imoving) | Yes | Low | Recommended Default |

| Correlation Ratio | 1 - Var[Ifixed - T(Imoving)] / Var[Ifixed] | No | Medium | Mono-modal, different contrasts |
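
The MI and NMI formulas in the table can be sketched from a joint histogram as shown below. This version uses plain histogram binning without Parzen windowing, and the 8-level integer test images are synthetic; both are simplifying assumptions.

```python
import numpy as np

def _entropy(p):
    """Shannon entropy (nats) of a probability array, ignoring empty bins."""
    p = p[p > 0]
    return float(-(p * np.log(p)).sum())

def mi_and_nmi(fixed, moving, bins=64):
    """Return (MI, NMI) computed from the joint intensity histogram."""
    joint, _, _ = np.histogram2d(np.ravel(fixed), np.ravel(moving), bins=bins)
    p_joint = joint / joint.sum()
    h_fixed = _entropy(p_joint.sum(axis=1))
    h_moving = _entropy(p_joint.sum(axis=0))
    h_joint = _entropy(p_joint)
    return h_fixed + h_moving - h_joint, (h_fixed + h_moving) / h_joint

rng = np.random.default_rng(0)
fixed = rng.integers(0, 8, size=(64, 64))
inverted = 7 - fixed                          # same structure, inverted contrast
unrelated = rng.integers(0, 8, size=(64, 64)) # no shared structure

mi_self, nmi_self = mi_and_nmi(fixed, fixed, bins=8)
mi_inv, nmi_inv = mi_and_nmi(fixed, inverted, bins=8)
mi_noise, nmi_noise = mi_and_nmi(fixed, unrelated, bins=8)
print(f"self: {mi_self:.3f}, inverted: {mi_inv:.3f}, unrelated: {mi_noise:.3f}")
```

The inverted image scores the same as the original, illustrating the "robust to contrast inversion" column, while the unrelated image scores close to zero.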

Table 2: Effect of Regularization Weight (λ) on Deformation Field Quality

| λ Value | Mean TRE (pixels) | Max Jacobian | Min Jacobian | Visual Quality | Comment |

|---|---|---|---|---|---|

| 0.01 | 3.2 ± 1.1 | 5.7 | -0.8 | Unrealistic, folded | Under-regularized |

| 0.1 | 3.5 ± 1.0 | 3.2 | 0.12 | Good, some local extremes | Optimal for high detail |

| 0.5 | 4.1 ± 1.3 | 1.8 | 0.45 | Very smooth, blurred | Over-regularized |

| 1.0 | 5.0 ± 1.5 | 1.5 | 0.65 | Too rigid, detail lost | Over-regularized |

Visualizations

Title: Multi-resolution MI Registration Workflow

Title: Elastic Registration Cost Function

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Software for Cross-modality Image Registration

| Item | Function/Description | Example/Tool |

|---|---|---|

| High-Fidelity Scanners | Acquire source images for registration with minimal distortion and calibrated intensities. | Slide Scanner (Histology), Clinical MRI/CT, Micro-CT, Confocal Microscope. |

| N4 Bias Field Corrector | Algorithm to correct low-frequency intensity non-uniformity (shading) in MRI and other modalities, crucial for stable MI calculation. | Implemented in ANTs, SimpleITK, ITK-SNAP. |

| B-spline Interpolation Library | Provides the mathematical backbone for representing smooth, elastic deformation fields. | ITK (C++), SimpleITK (Python), elastix Library. |

| Optimization Solver | Numerical optimization package to maximize MI or minimize the composite cost function. | NLopt (L-BFGS-B, MMA), SciPy (L-BFGS-B), elastix's internal SGD. |

| Digital Phantom Data | Simulated image pairs with known ground-truth deformation. Used for algorithm validation and parameter tuning. | BrainWeb (MRI), DIRLAB (CT Lung), custom synthetic deformations. |

| Visualization Suite | Software to visually inspect registration results, overlay images, and visualize vector deformation fields. | ITK-SNAP, 3D Slicer, ParaView, MATLAB with custom scripts. |

Technical Support Center: Troubleshooting Cross-Modality Image Registration with Deep Learning

This support center provides targeted guidance for researchers implementing CNN and Transformer-based models for cross-modality image registration (e.g., MRI to CT, histology to MRI) within a thesis or drug development context.

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: My CNN-based registration network (e.g., VoxelMorph) fails to align edges between MRI and Ultrasound images. The deformation field appears overly smooth and ignores key boundaries. What could be the cause? A: This is a common issue in cross-modality registration due to intensity inversion or non-correlation. CNNs initially rely on pixel-intensity similarity, which can fail across modalities.

- Troubleshooting Steps:

- Loss Function Check: Ensure the similarity loss is suited to multi-modal data. Replace intensity-difference losses (like MSE) with a mutual information (MI) loss or, where the intensity relationship is at least locally linear, a local normalized cross-correlation (NCC) loss in your training loop.

- Architecture Review: Implement a multi-scale network architecture. Train the network to learn features at progressively finer resolutions. This helps capture large deformations first, then refine edges.

- Regularization Tuning: The weight (λ) of the smoothness regularizer (often on the deformation field) may be too high. Gradually reduce λ in subsequent experiments and monitor the Jacobian determinant for unrealistic folding.

Q2: When training a Transformer-based registration model (e.g., TransMorph), I encounter "CUDA out of memory" errors, even with small batch sizes. How can I proceed? A: Transformers have quadratic computational complexity with respect to the number of input tokens (image patches), making them memory-intensive for 3D volumes.

- Troubleshooting Steps:

- Input Size Reduction: Downsample your training volumes more aggressively (e.g., to 128x128x128). You can use a multi-stage approach where a coarse Transformer alignment is refined by a lighter CNN.

- Gradient Accumulation: Use a batch size of 1, but accumulate gradients over 4 or 8 steps before updating weights. This simulates a larger batch size without the memory cost.

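- Model Variant: If your model uses global self-attention, switch to a windowed variant such as the Swin Transformer, which uses shifted windows to limit self-attention computation. TransMorph already builds on Swin blocks, so in that case reduce the window size or embedding dimension instead.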
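
A back-of-the-envelope estimate shows why global attention exhausts GPU memory on 3D volumes. The patch size of 4, the 8×8×8 window, and float32 storage are assumptions for illustration; the figure covers only one attention-score matrix for a single head, layer, and sample.

```python
def attention_matrix_bytes(volume_shape, patch_size, window_tokens=None, bytes_per_value=4):
    """Token count and memory for one attention-score matrix (one head, one layer, batch 1)."""
    tokens = 1
    for n in volume_shape:
        tokens *= n // patch_size
    if window_tokens is None:
        entries = tokens * tokens          # global: every token attends to every token
    else:
        entries = tokens * window_tokens   # windowed: each token attends within its window
    return tokens, entries * bytes_per_value

tokens, global_bytes = attention_matrix_bytes((128, 128, 128), patch_size=4)
_, windowed_bytes = attention_matrix_bytes((128, 128, 128), patch_size=4, window_tokens=8 ** 3)

print(f"{tokens} tokens")
print(f"global attention:   {global_bytes / 1024 ** 3:.1f} GiB")
print(f"windowed attention: {windowed_bytes / 1024 ** 2:.1f} MiB")
```

Under these assumptions the global score matrix alone needs 4 GiB per head, before activations and gradients, whereas the windowed variant needs 64 MiB.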

Q3: My trained model performs well on validation data from the same scanner but poorly on external test data from a different clinical site. How do I improve model generalization? A: This indicates overfitting to site-specific noise and intensity distributions.

- Troubleshooting Steps:

- Data Augmentation: Drastically increase the diversity of your training data using robust, physics-informed augmentations. See the Experimental Protocol section below for a detailed method.

- Domain Randomization: During training, randomly modify intensity histograms, simulate different noise levels (Rician, Gaussian), and add random low-resolution slices to mimic artifacts.

- Implementation of Instance Normalization: Replace Batch Normalization layers with Instance Normalization. This makes the network's statistics independent of batch characteristics, improving generalization across domains.

Q4: How do I quantitatively know if my AI-driven registration is successful for my drug development study, beyond visual inspection? A: You must use a battery of metrics, each reported in a structured table. Below is a standard evaluation table.

Table 1: Quantitative Metrics for Evaluating Cross-Modality Registration

| Metric Category | Specific Metric | Ideal Value | Interpretation for Drug Studies |

|---|---|---|---|

| Overlap | Dice Similarity Coefficient (DSC) | 1.0 | Measures alignment of segmented structures (e.g., tumors, organs). Critical for longitudinal treatment assessment. |

| Distance | Hausdorff Distance (HD95) | 0 mm | Measures the 95th-percentile segmentation boundary error, a robust stand-in for the maximum boundary distance. Guards against large local misalignments. |

| Deformation Quality | % of Negative Jacobian Determinants | 0% | Indicates physically implausible folding in the deformation field. Must be near zero. |

| Intensity Correlation | Normalized Mutual Information (NMI) | Higher is better | Measures the information shared between modalities post-registration. Validates alignment without segmentation. |
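
As a sketch of the distance metric in the table, HD95 can be computed from two sets of boundary points. Taking the larger of the two directed 95th percentiles is one common convention (implementations vary); the contours below are synthetic and the function name is hypothetical.

```python
import numpy as np

def hd95(points_a, points_b):
    """95th-percentile symmetric Hausdorff distance between two boundary point sets."""
    a = np.asarray(points_a, dtype=float)
    b = np.asarray(points_b, dtype=float)
    dists = np.linalg.norm(a[:, None, :] - b[None, :, :], axis=2)  # all pairwise distances
    a_to_b = dists.min(axis=1)   # nearest point in B for each point in A
    b_to_a = dists.min(axis=0)   # nearest point in A for each point in B
    return float(max(np.percentile(a_to_b, 95), np.percentile(b_to_a, 95)))

# Synthetic boundary: ten points on a vertical line, coordinates in mm.
contour_fixed = np.array([[float(y), 0.0] for y in range(10)])
contour_warped = contour_fixed + np.array([0.0, 3.0])  # residual 3 mm offset

hd95_same = hd95(contour_fixed, contour_fixed)
hd95_offset = hd95(contour_fixed, contour_warped)
print(hd95_same, hd95_offset)
```

The pairwise distance matrix grows with the product of the two point counts, so large 3D surfaces are usually subsampled or handled with distance transforms instead.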

Experimental Protocol: Training a Generalizable CNN-Transformer Hybrid Model

Objective: Train a robust model for MRI (moving) to CT (fixed) image registration resilient to scanner variation.

Detailed Methodology:

- Data Preprocessing:

- Re-sampling: Isotropically re-sample all volumes to 1mm³.

- Intensity Normalization: For each modality, perform a per-image normalization to the [0, 1] range based on the 1st and 99th intensity percentiles. Do not use global statistics.

- Skull Stripping (Neuro): Apply a pretrained model (e.g., HD-BET) to remove the skull and focus on brain tissue.
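
The per-image percentile normalization step can be sketched as below. The synthetic volume and the function name are assumptions; values outside the 1st-99th percentile range are clipped so that isolated outliers do not compress the useful intensity range.

```python
import numpy as np

def percentile_normalize(volume, low=1.0, high=99.0):
    """Rescale one volume to [0, 1] using its own low/high intensity percentiles."""
    vol = np.asarray(volume, dtype=float)
    p_low, p_high = np.percentile(vol, [low, high])
    if p_high <= p_low:
        return np.zeros_like(vol)  # constant image: nothing to rescale
    return np.clip((vol - p_low) / (p_high - p_low), 0.0, 1.0)

rng = np.random.default_rng(0)
volume = rng.normal(loc=300.0, scale=50.0, size=(16, 16, 16))
volume[0, 0, 0] = 1e5  # a single bright outlier, e.g. a metal artifact

normalized = percentile_normalize(volume)
print(normalized.min(), normalized.max(), round(float(normalized.mean()), 3))
```

With plain min-max scaling, the single outlier would squeeze almost all tissue intensities into a narrow band near zero; percentile clipping avoids that.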

Augmentation Pipeline (Critical for Generalization):

- Apply the following in real-time during training:

- Random anisotropic scaling (up to ±10% per axis).

- Random additive Rician noise (σ from 0% to 2% of max intensity).

- Random Gaussian blur (σ from 0 to 1.5 mm).

- Random intensity shift and scaling (±0.1 multiplier, ±0.05 offset).

- Simulated random occlusions (drop out random 3D cubes).
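
Three of the augmentations listed above (Rician noise, intensity shift and scaling, cube dropout) can be sketched with NumPy alone. Anisotropic scaling and Gaussian blur are omitted because they need an interpolation or filtering library; the cube size and function names are assumptions.

```python
import numpy as np

def add_rician_noise(vol, sigma, rng):
    """Magnitude of a signal with Gaussian noise on the real and imaginary channels."""
    real = vol + rng.normal(0.0, sigma, vol.shape)
    imag = rng.normal(0.0, sigma, vol.shape)
    return np.sqrt(real ** 2 + imag ** 2)

def random_intensity_transform(vol, rng, scale_range=0.1, shift_range=0.05):
    """Random multiplicative (1 +/- 0.1) and additive (+/- 0.05) intensity change."""
    scale = 1.0 + rng.uniform(-scale_range, scale_range)
    shift = rng.uniform(-shift_range, shift_range)
    return vol * scale + shift

def random_cube_dropout(vol, rng, cube_size=8):
    """Zero out one randomly placed cube to mimic an occlusion."""
    out = vol.copy()
    starts = [rng.integers(0, max(1, n - cube_size + 1)) for n in vol.shape]
    out[tuple(slice(s, s + cube_size) for s in starts)] = 0.0
    return out

def augment(vol, rng):
    sigma = rng.uniform(0.0, 0.02) * vol.max()  # 0-2% of max intensity
    vol = add_rician_noise(vol, sigma, rng)
    vol = random_intensity_transform(vol, rng)
    return random_cube_dropout(vol, rng)

rng = np.random.default_rng(42)
volume = rng.random((32, 32, 32))  # stand-in for a normalized [0, 1] volume
augmented = augment(volume, rng)
print(augmented.shape, float(augmented.min()), float(augmented.max()))
```

For registration training, spatial augmentations must be applied consistently to any segmentation labels that accompany each image, whereas intensity augmentations apply to the images only.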

Model Architecture (Example - Coarse-to-Fine):

- Stage 1 (Coarse): A lightweight Swin Transformer takes downsampled images (64x64x64). Outputs a low-resolution deformation field.

- Stage 2 (Refinement): A U-Net style CNN takes the upsampled Stage 1 field and the original resolution images. It outputs the final, high-resolution deformation field.

Loss Function:

Total Loss = λ1 * L_NCC(Local Patches) + λ2 * L_DSC(Segmentation Label) + λ3 * BendingEnergyPenalty, where L_NCC = 1 - NCC and L_DSC = 1 - DSC so that better alignment lowers the loss.

Start with λ1=1.0, λ2=0.5, λ3=0.05. Adjust based on validation.

Training Specifications:

- Optimizer: AdamW (weight decay=1e-4)

- Learning Rate: 1e-4, with cosine annealing decay.

- Batch Size: 1 (use gradient accumulation over 4 iterations).

- Epochs: 1000, with early stopping patience of 100 epochs on validation loss.
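
The cosine annealing schedule and the effective batch size implied by these settings can be sketched in a few lines. The minimum learning rate of 0 and the per-epoch update granularity are assumptions; deep learning frameworks provide equivalent built-in schedulers.

```python
import math

def cosine_annealed_lr(step, total_steps, base_lr=1e-4, min_lr=0.0):
    """Cosine decay from base_lr at step 0 to min_lr at total_steps."""
    progress = min(max(step / total_steps, 0.0), 1.0)
    return min_lr + 0.5 * (base_lr - min_lr) * (1.0 + math.cos(math.pi * progress))

total_epochs = 1000
lr_start = cosine_annealed_lr(0, total_epochs)
lr_mid = cosine_annealed_lr(500, total_epochs)
lr_end = cosine_annealed_lr(1000, total_epochs)

batch_size, accumulation_steps = 1, 4
effective_batch_size = batch_size * accumulation_steps

print(f"lr: {lr_start:.1e} -> {lr_mid:.1e} -> {lr_end:.1e}")
print(f"effective batch size: {effective_batch_size}")
```

If early stopping ends training well before epoch 1000, the learning rate will still be high at that point, so it can be worth setting the schedule length closer to the expected number of epochs.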

Visualizations

AI-Driven Cross-Modality Registration Workflow

Registration Loss Function Composition

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software & Libraries for AI-Powered Registration Research

| Tool Name | Category | Primary Function in Registration | Key Consideration |

|---|---|---|---|

| ANTs (Advanced Normalization Tools) | Traditional Baseline | Provides state-of-the-art SyN algorithm for a non-DL benchmark. | Use ants.registration for rigorous comparative evaluation. |

| VoxelMorph | CNN Framework | A well-established DL baseline for unsupervised deformable registration. | Easily modifiable; ideal for prototyping custom loss functions. |

| TransMorph | Transformer Framework | Implements a hybrid architecture (Swin Transformer encoder with a convolutional decoder) for capturing long-range dependencies. | Computationally heavy; requires significant GPU memory for 3D. |

| MONAI (Medical Open Network for AI) | PyTorch Ecosystem | Provides essential medical imaging transforms, losses, and network layers. | Critical for building reproducible data loading and training pipelines. |

| ITK-SNAP / 3D Slicer | Visualization & Annotation | Visualize 3D registration results, segment ground truth labels, and compute metrics. | Essential for qualitative validation and correcting automated segmentations. |

| SimpleITK | Image Processing | Robust library for basic I/O, re-sampling, and intensity normalization operations. | More reliable than standard scipy for medical image formats and metadata. |

Technical Support Center

FAQs & Troubleshooting for Cross-Modality Imaging in Drug Development

Q1: In our PET-MRI co-registration for pharmacokinetic (PK) modeling, we observe poor spatial alignment between the dynamic PET signal and the anatomical MRI, leading to inaccurate region-of-interest (ROI) analysis. What are the primary causes and solutions?

- A: This is a core challenge in cross-modality registration. Primary causes include:

- Subject Motion: Differences in scan timing and duration cause intra- and inter-modality movement.

- Differences in Resolution & Contrast: PET's low structural resolution vs. MRI's high resolution.

- Intrinsic Parameter Mismatch: Imperfect scanner calibration or coordinate system offsets.

- Troubleshooting Guide:

- Protocol Refinement: Implement a consistent patient positioning protocol using immobilization devices.

- Software Correction: Use mutual information-based algorithms (e.g., in SPM, FSL), which are robust for multi-modal data. First motion-correct the dynamic PET frames by registering each frame to the mean PET image, then register the mean PET image to the MRI.

- Validation: Always visually inspect overlay images and plot correlation of signals across a boundary region to quantify registration error.

Q2: When using fluorescence molecular tomography (FMT) with micro-CT to assess target engagement in vivo, the reconstructed fluorescent probe distribution appears superficially displaced from the expected tumor location on CT. How can we improve accuracy?

- A: This often stems from the "ill-posed" nature of FMT reconstruction and imperfect optical-to-CT registration.

- Troubleshooting Guide:

- Utilize Anatomical Priors: Use the CT-derived 3D surface contour as a hard constraint during the FMT inverse problem solving. This confines the reconstruction to the animal's volume.

- Multi-Modal Fiducials: Implant or use fiducial markers visible in both modalities (e.g., hollow capillary tubes filled with a contrast agent for CT and a fluorescent dye). These provide ground truth for rigid registration.

- Sequential Workflow: Always acquire the CT scan immediately after the optical scan without moving the animal, using a combined FMT-CT system if available.

Q3: We are correlating ex vivo autoradiography (AR) images with histology (IHC) for efficacy assessment of a novel CNS drug. The manual landmark-based registration is labor-intensive and inconsistent. What is a robust methodological pipeline?

- A: A semi-automated, intensity-based pipeline is recommended.

- Experimental Protocol:

- Tissue Processing: Section sequentially. Mount adjacent sections for AR and IHC on conductive slides.

- Digitization: Scan AR plate at high resolution (e.g., 10 µm). Digitize IHC slides with a whole-slide scanner.

- Pre-processing: Invert AR image intensities if needed. Apply histogram matching or normalization.

- Registration: Use an open-source tool like Elastix. Perform a multi-resolution affine registration followed by a B-spline deformable transformation. Use mutual information as the cost metric.

- Validation: Define a set of anatomical landmarks (e.g., ventricular boundaries, specific cell layers) on both images by a second independent researcher and calculate the Target Registration Error (TRE).

Data Summary Table: Common Imaging Modalities in Drug Development

| Modality | Primary PK/TE/Efficacy Use | Typical Resolution | Key Quantitative Outputs | Core Registration Challenge |
|---|---|---|---|---|
| PET | PK (whole-body distribution), TE (receptor occupancy) | 1-4 mm | Standardized Uptake Value (SUV), Binding Potential (BP) | Low resolution, poor soft-tissue contrast for alignment. |
| MRI | Anatomical context, Efficacy (tumor volume, functional readouts) | 50-500 µm | Volume, Relaxation times (T1, T2), Diffusion coefficients | Geometric distortion, different sequence contrasts. |
| FMT / BLI | TE, Efficacy (longitudinal therapy response) | 1-3 mm (reconstructed) | Radiant Efficiency ((p/s/cm²/sr)/(µW/cm²)) | Scattering, absorption, limited depth resolution. |
| Micro-CT | Anatomical context (bone, lung), Efficacy (morphometry) | 10-100 µm | Hounsfield Units, Volumetric density | Very different contrast mechanism from optical/PET. |
| Autoradiography | High-resolution PK & TE (tissue distribution) | 10-100 µm | Digital Light Units per mm² (DLU/mm²) | 2D, requires correlation with adjacent histology. |

Experimental Protocol: Integrated PK/TE/Efficacy Workflow Using Cross-Modality Imaging

Title: Longitudinal Assessment of an Oncology Drug Candidate in a Murine Xenograft Model.

Objective: To non-invasively correlate drug pharmacokinetics, target engagement (TE), and antitumor efficacy.

Methodology:

- Model Establishment: Implant tumor cells expressing a luciferase reporter subcutaneously in athymic nude mice.

- Imaging Schedule (Days 0, 3, 7, 14):

- BLI (Efficacy Proxy): Acquire baseline and longitudinal bioluminescence signal to monitor tumor burden.

- PET (PK & TE): Administer a PET tracer matched to the readout (e.g., [¹⁸F]FDG for glucose metabolism, or a [⁸⁹Zr]-labeled drug analogue for target-specific uptake). Perform a 60-minute dynamic PET scan under isoflurane anesthesia.

- MRI (Anatomical & Efficacy): Immediately following PET, acquire T2-weighted MRI for precise tumor volumetry and anatomical localization.

- Data Processing & Analysis:

- Registration: Co-register all PET frames to the mean PET image (motion correction). Rigidly register the mean PET image to the T2-MRI using mutual information. Apply the same transformation to all PET data.

- Analysis: Draw ROIs on the MRI-defined tumor volume. Extract PET time-activity curves for PK modeling (e.g., a two-tissue compartment model). Calculate tumor volumes from MRI. Plot longitudinal changes in tumor volume, BLI signal, and PET tracer uptake (SUV) for correlation.
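The SUV used in the analysis step is the ROI activity concentration divided by the injected dose per unit body weight. The sketch below assumes a tissue density of 1 g/mL and that the injected dose is decay-corrected to the same reference time as the image; the function names and the example numbers are illustrative, not study values.

```python
F18_HALF_LIFE_MIN = 109.77  # physical half-life of fluorine-18, in minutes

def decay_corrected_dose(injected_kbq, minutes_elapsed, half_life_min=F18_HALF_LIFE_MIN):
    """Injected activity remaining after `minutes_elapsed` of physical decay."""
    return injected_kbq * 2 ** (-minutes_elapsed / half_life_min)

def suv(tissue_kbq_per_ml, injected_kbq, body_weight_g,
        minutes_elapsed=0.0, half_life_min=F18_HALF_LIFE_MIN):
    """Body-weight SUV: tissue concentration / (dose / weight), assuming 1 g/mL tissue."""
    dose = decay_corrected_dose(injected_kbq, minutes_elapsed, half_life_min)
    return tissue_kbq_per_ml / (dose / body_weight_g)

# Illustrative mouse: 25 g body weight, 7400 kBq injected, tumor ROI at 600 kBq/mL
tumor_suv = suv(tissue_kbq_per_ml=600.0, injected_kbq=7400.0, body_weight_g=25.0)
print(round(tumor_suv, 3))
```

If the reconstruction software already decay-corrects images to the injection time, leave `minutes_elapsed` at zero so the correction is not applied twice.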

Visualizations

Diagram Title: Integrated PK/TE/Efficacy Imaging Workflow

Diagram Title: Impact of Registration Failure on Drug Development

The Scientist's Toolkit: Research Reagent & Software Solutions

| Item Name | Category | Function in Experiment |
|---|---|---|
| Isoflurane/Oxygen Mix | Anesthetic | Maintains consistent animal immobilization during scanning to minimize motion artifacts. |
| [¹⁸F]FDG or [⁸⁹Zr]-mAb | Radiotracer | Enables quantification of metabolic activity (PK) or specific target engagement via PET. |
| D-Luciferin (Potassium Salt) | Bioluminescent Substrate | Activates luciferase reporter in engineered cells for BLI-based efficacy monitoring. |
| MRI Contrast Agent (e.g., Gd-DOTA) | Contrast Media | Enhances soft-tissue or vascular contrast in MRI for better anatomical segmentation. |
| Multi-Modal Fiducial Markers | Calibration Tool | Contains substances visible in >1 modality (CT+Optical) to validate image co-registration. |
| Elastix / ANTs Software | Registration Algorithm | Provides robust, parameter-optimizable platforms for deformable cross-modality image registration. |
| PMOD / Amide | Image Analysis Suite | Allows visualization, registration, ROI definition, and kinetic modeling of multi-modal PET/MRI data. |
| Immobilization Device | Hardware | Custom-made bed or cradle that fits both PET and MRI scanners, improving consistency. |

Troubleshooting Guides & FAQs

Q1: My multi-modal registration (e.g., MRI to histology) is failing due to severe intensity inhomogeneity in one modality. What are the first steps to correct this? A: Preprocessing is critical. First, apply a bias field correction algorithm (e.g., N4ITK for MRI). Then, use a feature-based or mutual information-based similarity metric instead of simple intensity correlation. Ensure your registration algorithm is robust to local intensity variations by testing advanced methods like modality-independent neighborhood descriptors (MIND).

Q2: During automated batch registration of a large cohort, several image pairs produce extreme, non-physical deformations. How can I automate the detection of these failures? A: Implement a post-registration quality control (QC) pipeline. Key metrics to calculate and flag include:

- Jacobian Determinant: Flag any transformations where the determinant is ≤ 0 (folding) or > 3 (extreme expansion).

- Image Similarity Change: If the final similarity metric (e.g., NCC, MI) is worse than the initial similarity, flag the pair.

- Boundary Displacement: Check if the deformation field magnitude exceeds a realistic threshold (e.g., > 20% of image dimensions).

Automated scripts should move flagged results to a separate location for manual review.
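The three QC checks above can be sketched for a 2D displacement field as follows. This is a simplified illustration: the `(2, H, W)` field layout, the function names, and the assumption that a higher similarity value means a better match (as with NCC or MI) are choices made for this example, and the thresholds simply mirror the values quoted in the list.

```python
import numpy as np

def jacobian_determinant_2d(disp):
    """Jacobian determinant of x -> x + u(x) for disp of shape (2, H, W) = (dy, dx)."""
    duy_dy, duy_dx = np.gradient(disp[0])
    dux_dy, dux_dx = np.gradient(disp[1])
    return (1.0 + duy_dy) * (1.0 + dux_dx) - duy_dx * dux_dy

def qc_flags(disp, metric_before, metric_after, max_jac=3.0, max_disp_frac=0.2):
    """Return a dict of failure flags for one registration result."""
    jac = jacobian_determinant_2d(disp)
    magnitude = np.sqrt(disp[0] ** 2 + disp[1] ** 2)
    limit = max_disp_frac * max(disp.shape[1:])
    return {
        "folding": bool((jac <= 0).any()),
        "extreme_expansion": bool((jac > max_jac).any()),
        "similarity_worse": metric_after < metric_before,  # assumes higher is better
        "excessive_displacement": bool((magnitude > limit).any()),
    }

# Identity field: nothing should be flagged
identity = np.zeros((2, 16, 16))
ok_flags = qc_flags(identity, metric_before=0.5, metric_after=0.8)

# Field that mirrors the x axis (x -> -x): the determinant is -1 everywhere
folded = np.zeros((2, 16, 16))
folded[1] = -2.0 * np.arange(16)[None, :]
bad_flags = qc_flags(folded, metric_before=0.5, metric_after=0.4)
print(ok_flags, bad_flags)
```

In a batch pipeline, any result with at least one flag set would be routed to the manual review queue.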

Q3: I am registering 2D whole-slide images (WSI) to in vivo 3D ultrasound. The scale and resolution differences are immense. What strategy should guide my approach? A: Adopt a multi-resolution and multi-scale strategy. For the workflow, see Diagram 1. Begin by extracting a 2D slice from the 3D volume that best corresponds to the WSI plane (often a manual or landmark-guided step). Then, perform pyramid-based registration: start at a very coarse scale (heavily downsampled images) to solve large-scale translation, rotation, and scaling. Progressively refine through finer resolutions. Use a similarity metric robust to the modality gap, such as Mutual Information or a learned deep feature distance.
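A reduced version of the pyramid idea is sketched below. To stay short it estimates translation only, uses normalized cross-correlation on same-modality test data, and applies circular shifts with `np.roll`; rotation, scaling, and a modality-robust metric would be needed in practice. All function names are hypothetical.

```python
import numpy as np

def downsample2(img):
    """Halve the resolution by averaging 2x2 blocks (odd edges are cropped)."""
    h, w = (img.shape[0] // 2) * 2, (img.shape[1] // 2) * 2
    return img[:h, :w].reshape(h // 2, 2, w // 2, 2).mean(axis=(1, 3))

def ncc(a, b):
    """Normalized cross-correlation of two equally sized images."""
    a = a - a.mean()
    b = b - b.mean()
    denom = np.sqrt((a * a).sum() * (b * b).sum())
    return float((a * b).sum() / denom) if denom > 0 else 0.0

def best_shift(fixed, moving, center, radius):
    """Exhaustive integer-shift search in a window around `center`."""
    best, best_score = center, -np.inf
    for dy in range(center[0] - radius, center[0] + radius + 1):
        for dx in range(center[1] - radius, center[1] + radius + 1):
            score = ncc(fixed, np.roll(moving, (dy, dx), axis=(0, 1)))
            if score > best_score:
                best, best_score = (dy, dx), score
    return best

def coarse_to_fine_shift(fixed, moving, levels=3, radius=2):
    """Estimate the shift at the coarsest level, then double and refine it."""
    pyramid = [(fixed, moving)]
    for _ in range(levels - 1):
        f, m = pyramid[-1]
        pyramid.append((downsample2(f), downsample2(m)))
    shift = (0, 0)
    for level, (f, m) in enumerate(reversed(pyramid)):
        if level > 0:
            shift = (2 * shift[0], 2 * shift[1])   # rescale to the finer grid
        shift = best_shift(f, m, shift, radius)
    return shift

rng = np.random.default_rng(1)
fixed = rng.random((64, 64))
moving = np.roll(fixed, (8, -8), axis=(0, 1))      # simulated misalignment
shift = coarse_to_fine_shift(fixed, moving)
print(shift)
```

The search window is only five pixels wide at each level, yet the three-level pyramid recovers an eight-pixel offset because the coarsest level sees it as a two-pixel offset.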

Q4: My deep learning-based registration model works perfectly on the training/validation set but generalizes poorly to new data from a different scanner. How can I improve robustness? A: This is a common cross-modality and domain shift challenge. Improve your toolkit:

- Data Augmentation: Aggressively augment training data with simulated intensity variations, noise, and random, realistic deformations.

- Domain Randomization: Train the model on data from multiple scanners, protocols, and institutions.

- Training Strategy: Employ a self-supervised or unsupervised learning strategy that does not rely on ground-truth deformations, which are rarely available and tend to be specific to the dataset they were derived from.

- Input Normalization: Implement advanced normalization techniques (e.g., histogram matching, z-score per modality) as a preprocessing step within the network pipeline.
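The intensity augmentation and per-image normalization steps might look like the sketch below. It covers only intensity changes (random gamma, random contrast inversion, additive Gaussian noise) followed by z-scoring; spatial deformations are omitted, and the parameter ranges are illustrative assumptions rather than recommended values.

```python
import numpy as np

def zscore(img, eps=1e-8):
    """Zero-mean, unit-variance normalization of a single image."""
    return (img - img.mean()) / (img.std() + eps)

def augment_intensity(img, rng, gamma_range=(0.7, 1.5), noise_sd=0.05):
    """Random gamma, optional contrast inversion, and additive noise, then z-score."""
    lo, hi = img.min(), img.max()
    scaled = (img - lo) / (hi - lo + 1e-8)         # rescale to [0, 1]
    gamma = rng.uniform(*gamma_range)
    warped = scaled ** gamma                       # non-linear intensity remapping
    if rng.random() < 0.5:
        warped = 1.0 - warped                      # simulate inverted contrast
    noisy = warped + rng.normal(0.0, noise_sd, size=img.shape)
    return zscore(noisy)

rng = np.random.default_rng(7)
image = rng.random((32, 32)) * 4000.0              # synthetic image, arbitrary units
augmented = augment_intensity(image, rng)
print(augmented.mean(), augmented.std())
```

Calling `augment_intensity` with a fresh random draw for every training sample exposes the network to many contrast variants of the same anatomy.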

Q5: When integrating a registration step into my automated analysis pipeline, should I run it on the raw images or after other preprocessing steps (denoising, skull-stripping)? What is the best order of operations? A: A standardized preprocessing workflow before registration is essential for reproducibility. The recommended order is outlined in Diagram 2. Always perform modality-specific corrections (bias field, gradient distortion) first. Then, apply steps that define the registration space (e.g., skull-stripping for neuroimaging) to the reference image. The moving image should be registered to this processed reference. Final analysis-specific preprocessing (denoising, enhancement) should be applied after registration and resampling to avoid introducing artifacts that confound the alignment process.

Experimental Protocol: Validating Registration Accuracy in Preclinical Studies

Objective: To quantitatively evaluate the accuracy of a CT (reference) to micro-PET (moving) registration algorithm in a murine model.

Materials: See "Research Reagent Solutions" table.

Method:

- Animal Preparation & Imaging: Fit each of N=8 tumor-bearing mice with a fiducial marker visible in both CT and PET (e.g., a sealed ²²Na point source; note that ¹²⁵I seeds are not positron emitters and suit SPECT/CT rather than PET/CT). After 24 h, acquire a high-resolution whole-body CT scan (anatomical), immediately followed by a micro-PET scan (functional).

- Ground Truth Establishment: Manually identify the 3D centroid of the fiducial marker in both the CT and PET image volumes using a validated software tool (e.g., 3D Slicer). Record coordinates (x, y, z). This serves as the independent ground truth landmark.

- Registration Execution: Apply the registration pipeline (e.g., affine + B-spline deformable using Mutual Information) to align the PET volume to the CT volume. Run it twice for each animal: once with and once without the proposed preprocessing (bias correction and histogram matching).

- Accuracy Calculation: Apply the computed transform to the PET fiducial coordinates. Calculate the Euclidean distance (in mm) between the transformed PET landmark and the CT landmark. This is the Target Registration Error (TRE).

- Statistical Analysis: Compare mean TRE and standard deviation for the preprocessed vs. non-preprocessed groups using a paired t-test (α=0.05).
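The accuracy calculation and the paired comparison can be sketched as below. The 4x4 homogeneous matrix, the landmark coordinates, and the per-animal TRE values are made-up placeholders for illustration and are not the results reported in Table 1; the helper computes only the paired t statistic and degrees of freedom, so the p-value would still come from a statistics package or a t table.

```python
import math
import numpy as np

def apply_affine(points, matrix4x4):
    """Map Nx3 points through a 4x4 homogeneous transform."""
    pts = np.asarray(points, dtype=float)
    homog = np.hstack([pts, np.ones((len(pts), 1))])
    return (homog @ np.asarray(matrix4x4, dtype=float).T)[:, :3]

def target_registration_error(moving_pts, fixed_pts, matrix4x4):
    """Per-landmark Euclidean distance (same units as the coordinates)."""
    mapped = apply_affine(moving_pts, matrix4x4)
    return np.linalg.norm(mapped - np.asarray(fixed_pts, dtype=float), axis=1)

def paired_t_statistic(a, b):
    """t statistic and degrees of freedom for paired samples a and b."""
    d = np.asarray(a, dtype=float) - np.asarray(b, dtype=float)
    n = len(d)
    return float(d.mean() / (d.std(ddof=1) / math.sqrt(n))), n - 1

# Placeholder transform: pure translation of 1 mm along x
transform = np.eye(4)
transform[0, 3] = 1.0

pet_fiducial = [[10.0, 20.0, 30.0]]                # placeholder PET centroid (mm)
ct_fiducial = [[11.0, 20.0, 32.0]]                 # placeholder CT centroid (mm)
tre = target_registration_error(pet_fiducial, ct_fiducial, transform)

# Placeholder per-animal TRE values (mm) for the two pipelines
tre_raw = [2.0, 3.0, 2.5, 3.5]
tre_preprocessed = [1.0, 1.5, 1.0, 2.5]
t_stat, dof = paired_t_statistic(tre_raw, tre_preprocessed)
print(tre, t_stat, dof)
```

A paired test is appropriate here because both pipelines are run on the same animals, so each difference removes the between-animal variability.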

Table 1: Registration Accuracy Results (Mean TRE ± SD, mm)

| Group (N=8) | Mean Target Registration Error (TRE) | Standard Deviation | p-value (vs. No Preprocessing) |
|---|---|---|---|
| No Preprocessing | 2.34 mm | ± 0.87 mm | -- |
| With Bias Correction & Histogram Matching | 1.12 mm | ± 0.41 mm | < 0.01 |
| Acceptance Threshold (Typical) | < 2.0 mm | -- | -- |

Research Reagent Solutions

Table 2: Essential Materials for Cross-Modality Registration Validation

| Item | Function in Experiment | Example/Supplier |
|---|---|---|
| Multi-Modality Fiducial Markers | Provide ground-truth landmarks visible across imaging modalities (CT, PET, MRI) for validation. | Sealed ²²Na point sources (CT/PET); Gadolinium-based markers (MRI/CT); Multimodal imaging beads (e.g., BioPal). |
| Standardized Imaging Phantoms | Calibrate scanners and provide known geometries/contrasts to test registration algorithms. | Micro Deluxe Phantom (Caliper Life Sciences); Multi-modality geometric phantoms. |
| Image Processing & Registration Software | Platform for executing, testing, and comparing registration algorithms. | 3D Slicer (open-source), Elastix (open-source), ANTs, Advanced MD Studio. |
| High-Performance Computing (HPC) Cluster Access | Enables batch processing of large cohorts and resource-intensive deformable registration. | Local institutional HPC or cloud computing services (AWS, GCP). |

Visualization: Diagrams

Diagram 1: Multi-Scale Strategy for 2D-3D Registration

Diagram 2: Standardized Preprocessing Workflow for Registration

Solving Common Pitfalls: A Practical Guide to Optimizing Registration Accuracy

Troubleshooting Guides & FAQs

Q1: During multi-modal registration (e.g., MRI to histology), my alignment fails with severe localized stretching artifacts. What is the likely cause and solution? A: This is often caused by non-uniform tissue deformation during histology processing (e.g., slicing, mounting). The cost function gets trapped in a local minimum optimizing for a local match while distorting overall geometry.

- Solution Protocol: Implement a multi-scale, feature-based initialization.

- Pre-processing: Extract robust, modality-invariant features (e.g., vessel junctions, tissue boundary corners) using a SIFT-like algorithm or a pre-trained deep feature extractor.

- Coarse Alignment: Perform RANSAC (Random Sample Consensus) on the matched features to compute an affine or low-order spline transform. This provides a robust global initialization.

- Refinement: Use a deformable registration (e.g., Demons, B-spline) with a Normalized Mutual Information (NMI) metric, starting from the coarse transform. Regularize strongly initially, then reduce regularization weight across iterations.

- Validation: Quantify the target registration error (TRE) on a set of manually annotated, distinct fiducial points not used in registration.
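The coarse alignment step can be illustrated with a small RANSAC loop that fits a 2D affine transform to matched feature coordinates. This sketch assumes the feature matching has already been done and works on synthetic correspondences with a known share of gross mismatches; the function names, tolerance, and iteration count are illustrative choices.

```python
import numpy as np

def fit_affine_2d(src, dst):
    """Least-squares 2D affine A (shape 3x2) such that [src, 1] @ A approximates dst."""
    X = np.hstack([src, np.ones((len(src), 1))])
    A, *_ = np.linalg.lstsq(X, dst, rcond=None)
    return A

def apply_affine_2d(A, pts):
    return np.hstack([pts, np.ones((len(pts), 1))]) @ A

def ransac_affine(src, dst, n_iter=200, tol=2.0, seed=0):
    """Fit on random 3-point samples, keep the largest consensus set, then refit."""
    rng = np.random.default_rng(seed)
    best_inliers = np.zeros(len(src), dtype=bool)
    for _ in range(n_iter):
        sample = rng.choice(len(src), size=3, replace=False)
        A = fit_affine_2d(src[sample], dst[sample])
        residual = np.linalg.norm(apply_affine_2d(A, src) - dst, axis=1)
        inliers = residual < tol
        if inliers.sum() > best_inliers.sum():
            best_inliers = inliers
    if best_inliers.sum() < 3:
        raise ValueError("RANSAC found no usable consensus set")
    return fit_affine_2d(src[best_inliers], dst[best_inliers]), best_inliers

# Synthetic matches: 22 consistent pairs plus 8 gross mismatches
rng = np.random.default_rng(42)
src = rng.uniform(0, 100, size=(30, 2))
A_true = np.array([[1.1, 0.1], [-0.1, 0.9], [5.0, -3.0]])
dst = apply_affine_2d(A_true, src) + rng.normal(0, 0.05, size=(30, 2))
dst[22:] = rng.uniform(0, 100, size=(8, 2))

A_est, inliers = ransac_affine(src, dst)
print(A_est.round(2), int(inliers.sum()))
```

The resulting affine matrix would then serve as the starting transform for the deformable refinement stage.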

Q2: My intensity-based registration algorithm converges prematurely, resulting in a significant misalignment. How can I diagnose and escape this local minimum? A: Premature convergence indicates that the optimizer is exploring the cost landscape poorly and has settled in a local optimum near the starting point.

- Diagnostic & Solution Protocol:

- Visualize the Cost Function: Perturb the initial transform parameters (translation, rotation) around the starting guess and compute the similarity metric (e.g., NMI) at each point to create a 2D/3D landscape plot.

- Employ Stochastic Optimization: Switch from gradient-descent to a population-based method like Covariance Matrix Adaptation Evolution Strategy (CMA-ES) for the initial stages. It is less likely to get stuck.

- Multi-Start Optimization: Run the registration from multiple, randomly perturbed starting positions. Analyze the distribution of final transforms and metric values.

- Pyramid Scheme Check: Ensure your image pyramid (multi-resolution) is properly constructed. If down-sampled too aggressively, critical structural information may be lost, leading to false minima. Use a moderate down-sampling factor (e.g., 2x) and more pyramid levels.
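The landscape visualization step can be prototyped by evaluating the similarity metric over a grid of perturbed translations and listing its local peaks. The sketch below uses normalized cross-correlation and circular shifts on a synthetic striped image, which is an assumption made to produce a rugged landscape; for real cross-modality data the metric would be replaced by NMI or similar.

```python
import numpy as np

def ncc(a, b):
    """Normalized cross-correlation of two equally sized images."""
    a = a - a.mean()
    b = b - b.mean()
    denom = np.sqrt((a * a).sum() * (b * b).sum())
    return float((a * b).sum() / denom) if denom > 0 else 0.0

def translation_landscape(fixed, moving, radius):
    """Similarity for every integer shift (dy, dx) in [-radius, radius]^2."""
    shifts = range(-radius, radius + 1)
    return np.array([[ncc(fixed, np.roll(moving, (dy, dx), axis=(0, 1)))
                      for dx in shifts] for dy in shifts])

def local_maxima(landscape):
    """Interior grid points that are strictly greater than their 8 neighbours."""
    peaks = []
    for i in range(1, landscape.shape[0] - 1):
        for j in range(1, landscape.shape[1] - 1):
            patch = landscape[i - 1:i + 2, j - 1:j + 2]
            if landscape[i, j] == patch.max() and (patch == patch.max()).sum() == 1:
                peaks.append((i, j))
    return peaks

# Synthetic image: periodic stripes (source of false optima) plus one blob
yy, xx = np.mgrid[0:64, 0:64]
fixed = np.sin(2 * np.pi * xx / 8.0) + 3.0 * np.exp(
    -((yy - 20) ** 2 + (xx - 40) ** 2) / (2 * 5.0 ** 2))
moving = np.roll(fixed, (3, -2), axis=(0, 1))      # simulated misalignment

radius = 8
landscape = translation_landscape(fixed, moving, radius)
peaks = local_maxima(landscape)
best_index = np.unravel_index(np.argmax(landscape), landscape.shape)
best_shift = (int(best_index[0]) - radius, int(best_index[1]) - radius)
print(best_shift, len(peaks))
```

Passing `landscape` to a heat-map plot shows at a glance whether a gradient-based optimizer starting at zero shift can reach the global optimum or will stall on a neighbouring peak.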

Q3: I observe periodic grid-like artifacts in my registered 3D microscopy volume. What generates these artifacts and how are they removed? A: These are typically interpolation artifacts from repeated application of transformation fields or from imperfect sensor calibration.

- Solution Protocol: Mitigating Interpolation Artifacts

- Source Identification: