Biased Data, Skewed Results: Mitigating Training Set Bias in Biomedical AI and ML Optimization

Abstract

Training data bias presents a critical, often hidden, challenge in machine learning for drug discovery and biomedical research, leading to models with poor generalizability and clinical translatability. This article provides a comprehensive framework for researchers and drug development professionals to understand, identify, and remediate bias. We explore the origins and typologies of bias in biomedical datasets, detail methodological strategies for bias-aware model development and data curation, offer troubleshooting protocols for diagnosing and optimizing biased models, and present robust validation frameworks to assess model fairness and comparative performance. The goal is to equip practitioners with the tools to build more reliable, equitable, and effective AI-driven solutions.

The Bias Blind Spot: Understanding the Sources and Impact of Training Data Bias in Biomedical ML

Technical Support Center: Troubleshooting Data Bias in Biomedical ML

Frequently Asked Questions (FAQs)

Q1: During model development for patient stratification, my algorithm shows high accuracy on our hospital's data but fails on external validation. What specific bias might be the cause? A1: This is a classic case of Site-Specific or Cohort Bias. Your training data likely contains artifacts specific to your institution's patient demographics, local diagnostic protocols, imaging equipment, or lab assay kits. The model has learned these site-specific "shortcuts" rather than generalizable biomedical patterns.

Q2: Our drug response prediction model, trained on cell line data, does not translate to patient-derived xenograft models. What went wrong? A2: This indicates a Biological Context Bias. Immortalized cell lines often have different genetic and phenotypic profiles (e.g., faster doubling, adapted to plastic) compared to primary tissues or in vivo models. Your training data lacks the biological complexity and tumor microenvironment present in the target application.

Q3: The performance of our diagnostic AI for skin lesions degrades significantly when used on patients with darker skin tones. How do I diagnose this bias? A3: This is Demographic Representation Bias or Phenotypic Spectrum Bias. Your training dataset is almost certainly under-representative of darker skin tones. You must audit your dataset's Fitzpatrick skin type distribution and likely augment it with data from diverse populations.

Q4: We trained a model to identify a disease biomarker from electronic health records (EHR). It appears to be correlating with billing codes rather than pathophysiology. What is this bias? A4: This is a form of Measurement or Proxy Bias. In EHR data, the recorded variables (like diagnostic codes, frequency of visits, or specific test orders) are often imperfect proxies for the true biological state. The model may latch onto administrative or socioeconomic patterns instead of the intended biomedical signal.

Troubleshooting Guides

Issue: Suspected Batch Effect Bias in Genomic Data

Symptoms: Model performance is perfect within a single sequencing run but drops on data from other batches or labs.

Diagnostic Steps:

- Perform Principal Component Analysis (PCA) on your normalized feature data.

- Color the PCA plot by experimental batch (e.g., sequencing date, lab site, reagent lot).

- Identification: If samples cluster strongly by batch rather than by disease/control status, batch effect bias is present.

Mitigation Protocol:

- Experimental Design: Randomize samples across batches.

- Bioinformatic Correction: Apply batch effect correction algorithms (e.g., ComBat, limma's `removeBatchEffect`). Note: Split the data into train and test sets first, then estimate the correction parameters on the training data only, to avoid data leakage.

- Validation: Always hold out an entire batch as an external validation set to test generalizability.
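The PCA check in the diagnostic steps can also be quantified rather than judged by eye. The following is a minimal sketch, assuming scikit-learn is available and using synthetic data; the helper name `batch_vs_biology_silhouette` is hypothetical. It compares how well samples cluster by batch versus by disease label in PCA space.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.metrics import silhouette_score


def batch_vs_biology_silhouette(X, batch, label, n_components=2, seed=0):
    """Return (silhouette by batch, silhouette by label) in PCA space."""
    pcs = PCA(n_components=n_components, random_state=seed).fit_transform(X)
    return silhouette_score(pcs, batch), silhouette_score(pcs, label)


# Synthetic demo (hypothetical data): strong batch shift, weak disease signal.
rng = np.random.default_rng(0)
batch = np.repeat([0, 1], 50)   # 50 samples per batch
label = np.tile([0, 1], 50)     # disease status, balanced within each batch
X = rng.normal(size=(100, 20))
X[:, 0] += 6.0 * batch          # technical shift dominates the variance
X[:, 1] += 0.5 * label          # biological signal is comparatively small

s_batch, s_label = batch_vs_biology_silhouette(X, batch, label)
print(f"silhouette by batch={s_batch:.2f}, by label={s_label:.2f}")
```

A batch silhouette well above the label silhouette is the numeric counterpart of samples clustering by batch rather than by disease/control status.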

Issue: Label Noise Bias in Histopathology Image Analysis

Symptoms: Model predictions are inconsistent, and expert pathologists disagree with a subset of the model's training labels.

Diagnostic Steps:

- Conduct a label audit. Have multiple board-certified pathologists re-annotate a random subset (e.g., 10%) of your training images independently.

- Calculate the inter-rater agreement (e.g., Cohen's Kappa) between the original labels and the new consensus.

Table: Label Audit Results Example

| Dataset Subset | Original Labels | Consensus Review Labels | Agreement (Kappa) | Implication |

|---|---|---|---|---|

| NSCLC (n=100) | 75% Adenocarcinoma | 82% Adenocarcinoma | 0.71 | Moderate label noise |

| Melanoma (n=50) | 90% Malignant | 70% Malignant | 0.45 | Severe label noise |
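As an illustration of the agreement calculation, here is a small sketch using scikit-learn's `cohen_kappa_score`. The eight labels are made up for the example and are not taken from the table above.

```python
from sklearn.metrics import cohen_kappa_score

# Hypothetical audit subset: original training labels vs. consensus re-annotation.
original = ["adeno", "adeno", "squamous", "adeno", "squamous", "adeno", "adeno", "squamous"]
consensus = ["adeno", "adeno", "squamous", "squamous", "squamous", "adeno", "adeno", "adeno"]

# Kappa corrects raw agreement (6 of 8 here) for agreement expected by chance.
kappa = cohen_kappa_score(original, consensus)
print(f"Cohen's kappa = {kappa:.2f}")
```

Raw agreement here is 75%, yet kappa is much lower because both label sets are dominated by one class, which is why kappa is preferred over percent agreement for audits.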

Mitigation Protocol:

- Label Consensus: Implement a multi-reader adjudication process for training data.

- Algorithmic Robustness: Use loss functions robust to label noise (e.g., symmetric cross-entropy, generalized cross-entropy).

- Data Curation: Consider removing samples with persistent label disagreement from the training set.
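To make the "robust loss" option concrete, the sketch below implements generalized cross-entropy in NumPy. The default `q=0.7` is an assumption chosen for illustration; in practice the loss would be written in your training framework rather than NumPy.

```python
import numpy as np


def generalized_cross_entropy(probs, labels, q=0.7):
    """GCE loss: mean of (1 - p_y**q) / q for 0 < q <= 1.

    probs:  (n_samples, n_classes) predicted class probabilities.
    labels: (n_samples,) integer class indices.
    """
    p_true = probs[np.arange(len(labels)), labels]  # probability of the labeled class
    return float(np.mean((1.0 - p_true ** q) / q))


probs = np.array([[0.9, 0.1], [0.2, 0.8]])
labels = np.array([0, 1])
loss = generalized_cross_entropy(probs, labels, q=1.0)  # q=1 reduces to mean(1 - p_y)
```

Unlike standard cross-entropy, the per-sample loss is bounded by 1/q, so a confidently "wrong" prediction on a mislabeled sample cannot dominate the gradient.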

Detailed Experimental Protocol: Auditing for Demographic Bias

Objective: Quantify representation gaps in a training dataset for a chest X-ray diagnosis model.

Materials: Dataset metadata including patient age, sex, and self-reported race/ethnicity.

Methodology:

- Calculate the frequency distribution of each demographic variable in your training set (`P_train`).

- Obtain the frequency distribution of the same variables in the target patient population (`P_target`). This could be from national health statistics or a large, multi-institutional registry.

- Compute the Representation Disparity Ratio (RDR) for each demographic subgroup i: `RDR_i = P_train_i / P_target_i`.

- Set an operational threshold (e.g., RDR < 0.8 or > 1.25) to flag significant under- or over-representation.

Table: Example Demographic Audit

| Demographic Subgroup | Training Set % (P_train) | Target Population % (P_target) | RDR | Status |

|---|---|---|---|---|

| Female, 20-40 | 15% | 25% | 0.60 | Under-represented |

| Male, 20-40 | 20% | 20% | 1.00 | Adequate |

| Female, 60+ | 30% | 28% | 1.07 | Adequate |

| Male, 60+ | 35% | 27% | 1.30 | Over-represented |
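The audit table can be reproduced with a few lines of Python. This is a sketch only: the function name `representation_disparity` is hypothetical, and the thresholds are the example values (0.8 and 1.25) from the methodology.

```python
def representation_disparity(p_train, p_target, low=0.8, high=1.25):
    """Return {subgroup: (RDR rounded to 2 decimals, status)}."""
    report = {}
    for group, target_share in p_target.items():
        rdr = p_train[group] / target_share
        if rdr < low:
            status = "Under-represented"
        elif rdr > high:
            status = "Over-represented"
        else:
            status = "Adequate"
        report[group] = (round(rdr, 2), status)
    return report


# Proportions taken from the example demographic audit table.
p_train = {"Female, 20-40": 0.15, "Male, 20-40": 0.20, "Female, 60+": 0.30, "Male, 60+": 0.35}
p_target = {"Female, 20-40": 0.25, "Male, 20-40": 0.20, "Female, 60+": 0.28, "Male, 60+": 0.27}

audit = representation_disparity(p_train, p_target)
for group, (rdr, status) in audit.items():
    print(f"{group}: RDR={rdr:.2f} ({status})")
```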

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials for Mitigating Data Bias

| Item | Function in Bias Mitigation |

|---|---|

| Synthetic Minority Oversampling (SMOTE) | Algorithmically generates synthetic samples for under-represented classes to address class imbalance bias. |

| ComBat Harmonization Tool | A statistical method used to adjust for batch effects in genomic and imaging data, removing technical artifacts. |

| DICOM Metadata Anonymizer & Auditor | Software to safely audit and balance metadata (age, sex, scanner type) in medical imaging datasets. |

| Cell-Line Authentication Kit (STR Profiling) | Essential to confirm the identity of biological samples and prevent contamination bias in preclinical studies. |

| Multi-Institutional Data Sharing Agreement Templates | Legal frameworks to enable pooling of diverse datasets, crucial for improving demographic and technical diversity. |

| Inter-Rater Reliability Software (e.g., Cohen's Kappa Calculator) | Quantifies label consistency among human annotators, diagnosing label noise bias. |

Visualizations

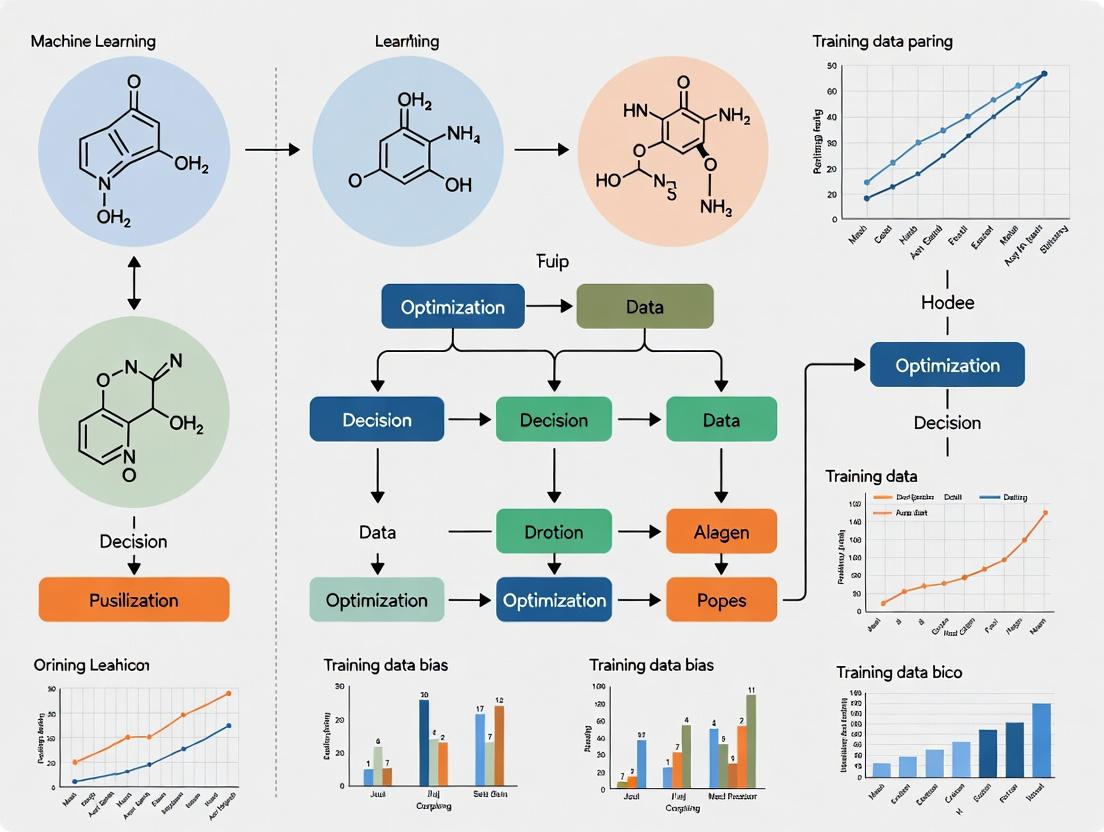

Title: Sources and Impacts of Training Data Bias in Biomedicine

Title: Bias Mitigation Workflow for Researchers

Technical Support Center: Troubleshooting Data Bias in ML Research

FAQs & Troubleshooting Guides

Q1: Our model performs well on internal validation but fails on external patient cohorts. What is the likely source of bias? A: This is a classic sign of Cohort Selection Bias. Internal validation sets often share latent features (e.g., imaging machine type, hospital-specific protocols) with the training set. To diagnose:

- Perform Stratified Analysis: Compare the distributions of key covariates (age, sex, ethnicity, disease severity, sample collection date) between your internal and external cohorts. Use statistical tests (Chi-square, t-test, KS test) to quantify differences.

- Check Data Provenance: Audit how subjects were recruited for the internal cohort (e.g., all from a single clinic, all volunteers) versus the external cohort.

Mitigation Protocol:

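- Algorithm: Use reweighting techniques like inverse probability weighting (the propensity-score approach behind Inverse Probability of Treatment Weighting, IPTW, with cohort membership taking the place of treatment) to balance cohorts.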

- Steps:

- Pool internal and external cohort data with a source indicator.

- Fit a logistic regression model to predict the source from covariates.

- Calculate the propensity score (probability of being in the internal cohort).

- Assign a weight of `1/propensity_score` for internal cohort samples and `1/(1-propensity_score)` for external cohort samples during training.

- Retrain the model using these weights.
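The reweighting steps can be sketched as follows, assuming scikit-learn and a single synthetic covariate. The function name `cohort_balancing_weights` and the clipping bounds are illustrative assumptions; clipping is a common safeguard against extreme weights and is not part of the protocol above.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression


def cohort_balancing_weights(X, is_internal, clip=(0.05, 0.95)):
    """Weights of 1/ps (internal) and 1/(1-ps) (external), ps = P(internal | X)."""
    model = LogisticRegression(max_iter=1000).fit(X, is_internal)
    ps = np.clip(model.predict_proba(X)[:, 1], *clip)  # clipping limits extreme weights
    return np.where(is_internal == 1, 1.0 / ps, 1.0 / (1.0 - ps))


# Hypothetical pooled data: one standardized covariate (age); internal cohort is older.
rng = np.random.default_rng(0)
age = np.concatenate([rng.normal(60, 10, 500), rng.normal(50, 10, 500)])
X = ((age - age.mean()) / age.std()).reshape(-1, 1)
is_internal = np.repeat([1, 0], 500)

w = cohort_balancing_weights(X, is_internal)


def mean_gap(weights):
    """Absolute difference in weighted covariate means between the two cohorts."""
    internal = np.average(X[is_internal == 1, 0], weights=weights[is_internal == 1])
    external = np.average(X[is_internal == 0, 0], weights=weights[is_internal == 0])
    return abs(internal - external)


gap_before = mean_gap(np.ones_like(w))
gap_after = mean_gap(w)
print(f"covariate gap before={gap_before:.2f}, after={gap_after:.2f}")
```

Most scikit-learn estimators accept these values through the `sample_weight` argument of `fit`, which is one way to carry out the retraining step.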

Q2: Our NLP model for extracting adverse events from clinical notes seems to be learning phrasing shortcuts instead of medical concepts. How can we confirm this? A: This is likely Annotation Artifact Bias, where spurious correlations in the text (e.g., the phrase "was administered" always preceding a drug name in your notes) are learned as rules.

Diagnostic Experiment:

- Create a "Flipped Label" test set. Manually or semi-automatically modify sentences so that they still contain the artifact but carry the opposite label (e.g., "The patient was administered saline, and no [DRUG_NAME] was given.", labeled 'No Adverse Event').

- If model performance drops precipitously on this adversarial set (>30% drop in F1-score), it confirms reliance on artifacts.

Mitigation Protocol:

- Method: Adversarial Data Augmentation.

- Steps:

- Identify common n-gram or syntactic artifacts in your training data (e.g., "no evidence of", "ruled out").

- Use a template-based or generative model to create new training examples where these artifacts are paired with contrary labels.

- Add these counterexamples to your training set and retrain.

Q3: How can we measure and correct for label noise bias introduced by overworked annotators? A: Label noise is a critical Annotation Bias. Implement a Label Quality Audit.

Audit Protocol:

- Re-annotation: Select a random subset (e.g., 10%) of your training data. Have it re-annotated by expert annotators (the "gold standard") and a sample of the original annotators.

- Calculate Metrics: Compute agreement statistics.

- Analysis: Use the table below to summarize findings and guide correction.

Table 1: Label Noise Audit Metrics for Annotator Performance

| Annotator ID | Samples Annotated | Agreement with Gold Standard (%) | Cohen's Kappa (κ) | Major Error Rate* |

|---|---|---|---|---|

| A-101 | 1,200 | 94.2 | 0.88 | 1.5% |

| A-102 | 1,150 | 85.7 | 0.71 | 5.2% |

| A-103 | 1,180 | 98.1 | 0.96 | 0.8% |

| Pooled Avg | 3,530 | 92.7 | 0.85 | 2.5% |

*Major Error: An error that would critically change the clinical interpretation.

Correction Method: For annotators with kappa < 0.75, flag their labeled data for review or exclude it. Implement Confidence-Weighted Learning where samples with low annotator agreement are given less weight during training.
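One simple way to realize both parts of the correction method is sketched below. The kappa values come from Table 1; the per-sample votes and the function name `agreement_weights` are hypothetical, and weighting by majority share is only one of several reasonable confidence schemes.

```python
from collections import Counter

# Kappa per annotator, taken from Table 1.
annotator_kappa = {"A-101": 0.88, "A-102": 0.71, "A-103": 0.96}
flagged = [a for a, kappa in annotator_kappa.items() if kappa < 0.75]


def agreement_weights(votes_per_sample):
    """Weight each sample by the share of annotators who chose the majority label."""
    weights = []
    for votes in votes_per_sample:
        top_count = Counter(votes).most_common(1)[0][1]
        weights.append(top_count / len(votes))
    return weights


# Hypothetical votes for three samples.
votes = [["AE", "AE", "AE"], ["AE", "none", "AE"], ["AE", "none"]]
weights = agreement_weights(votes)
print(flagged, weights)
```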

Q4: What is a standard workflow to systematically audit a dataset for multiple biases? A: Implement a Bias Audit Pipeline. The following diagram outlines the key stages and checks.

Title: Systematic Bias Audit Pipeline for ML Datasets

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Bias Detection & Mitigation Experiments

| Tool / Reagent | Function in Bias Research | Example Vendor/Platform |

|---|---|---|

| Synthetic Data Generators (e.g., CTGAN, SYN) | Creates balanced counterfactual data to augment under-represented cohorts or break spurious correlations. | Mostly open-source (SDV), Gretel.ai |

| Annotation Platform with QA Dashboards | Provides metrics on annotator agreement, speed, and flags inconsistencies for review during label creation. | Labelbox, Scale AI, Prodigy |

| Model Explainability Suites (SHAP, LIME) | Identifies which features (potentially artifacts) the model is relying on for predictions, revealing shortcuts. | Open-source libraries |

| Statistical Analysis Software (R, Python Pandas) | Performs distribution comparison tests (KS, Chi-square) and calculates propensity scores for reweighting. | Open-source, SAS, JMP |

| Adversarial Testing Frameworks | Automates the creation of "flipped label" or perturbed test sets to stress-test model robustness. | TextAttack (NLP), CleverHans (CV) |

| Cohort Sourcing & Management Platform | Tracks patient/donor metadata comprehensively to audit provenance and enable stratified sampling. | Medidata Rave, Castor EDC, REDCap |

Q5: How do we visualize and correct for a confounding variable in a cohort? A: Use Causal Graph Diagrams to map relationships and apply stratification or matching.

Title: Causal Graph Showing a Confounding Variable and Correction

Technical Support Center

FAQ & Troubleshooting for Bias Mitigation Experiments

Q1: During dataset auditing, our model performance metrics (e.g., accuracy) are high across all pre-defined test sets, yet qualitative review reveals clear stereotyping in outputs for underrepresented subgroups. What is the issue and how do we troubleshoot? A: This is a classic symptom of Underrepresentation Bias compounded by Measurement Bias. High aggregate metrics mask poor performance on small subgroups. The test set itself likely suffers from the same underrepresentation.

- Troubleshooting Protocol:

- Disaggregated Evaluation: Calculate performance metrics (accuracy, F1-score, precision, recall) separately for each identifiable demographic or phenotypic subgroup (see Table 1).

- Slice Discovery Tools: Use tools like `SMASH` (Slice Metrics Based on Automated Slice Discovery) or manual error analysis to identify "hidden" subgroups where performance degrades.

- Bias Auditing: Apply quantitative bias metrics such as Equalized Odds Difference or Demographic Parity Difference to your model's predictions (see Table 2).

- Root Cause: If bias metrics confirm disparity, augment your training data for underrepresented slices using techniques like SMOTE (Synthetic Minority Over-sampling Technique) or targeted data collection.

Q2: Our drug-target interaction model, trained on high-quality bioassay data, fails to generalize to novel protein classes. We suspect Historical Legacy Bias in the available data. How can we diagnose and address this? A: Historical Legacy Bias arises when available data reflects past research focus (e.g., well-studied protein families like kinases) rather than the true biological landscape.

- Troubleshooting Protocol:

- Data Provenance Analysis: Map the taxonomic and protein family distribution of your training data against a reference database like UniProt. Visualize the over- and under-representation.

- Clustering & Out-of-Distribution (OOD) Detection: Cluster your training data by protein sequence or structural features. Test your model on data from low-density clusters; poor performance indicates legacy bias.

- Mitigation Strategy: Employ domain adaptation techniques or invariant risk minimization (IRM) to learn features that are stable across different protein families. Prioritize experimental validation for predictions on under-represented families.

Q3: When correcting for Measurement Bias in clinical phenotype data, what are the standard methodologies to adjust for inconsistent diagnostic criteria across different source cohorts? A: Measurement bias occurs when the data collection process systematically distorts the true value.

- Experimental Protocol for Harmonization:

- Phenotype Algorithm Audit: Document the specific ICD codes, lab values, and inclusion/exclusion criteria used to define each phenotype in each source cohort.

- Cross-Validation with Gold Standards: For a subset of records, compare the algorithmic phenotype label against a clinician-adjudicated "gold standard" label. Calculate Cohen's Kappa for agreement.

- Statistical Harmonization: Apply methods like Calibration (using Platt scaling or isotonic regression per cohort) or more advanced Data Harmonization via Generative Models (e.g., using VAEs) to align feature distributions across cohorts before model training.

Table 1: Hypothetical Disaggregated Model Performance

| Subgroup | Sample Count (N) | Accuracy | F1-Score | Disparity vs. Majority |

|---|---|---|---|---|

| Majority Group A | 10,000 | 0.92 | 0.91 | Baseline |

| Underrep. Group B | 150 | 0.89 | 0.87 | -0.03 / -0.04 |

| Underrep. Group C | 75 | 0.67 | 0.62 | -0.25 / -0.29 |

Table 2: Common Bias Metrics for Binary Classifiers

| Metric | Formula | Ideal Value | Interpretation |

|---|---|---|---|

| Demographic Parity Difference | P(Ŷ=1 \| Group1) - P(Ŷ=1 \| Group2) | 0 | Equal acceptance rates across groups. |

| Equalized Odds Difference | [(FPR_Group1 - FPR_Group2) + (TPR_Group1 - TPR_Group2)] / 2 | 0 | Equal false positive and true positive rates. |
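Both metrics are straightforward to compute by hand. The sketch below uses NumPy and toy predictions, with the equalized odds value taken as the average of the FPR and TPR gaps; the function names are hypothetical. Fairness libraries may define this metric differently (fairlearn, for instance, reports the larger of the two gaps), so check the definition before comparing numbers across tools.

```python
import numpy as np


def group_rates(y_true, y_pred):
    """Return (selection rate, TPR, FPR) for binary labels and predictions."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    return y_pred.mean(), y_pred[y_true == 1].mean(), y_pred[y_true == 0].mean()


def bias_metrics(y_true, y_pred, group, g1, g2):
    """Signed differences (g1 minus g2) in selection rate and averaged FPR/TPR."""
    y_true, y_pred, group = map(np.asarray, (y_true, y_pred, group))
    sel1, tpr1, fpr1 = group_rates(y_true[group == g1], y_pred[group == g1])
    sel2, tpr2, fpr2 = group_rates(y_true[group == g2], y_pred[group == g2])
    return {
        "demographic_parity_difference": sel1 - sel2,
        "equalized_odds_difference": ((fpr1 - fpr2) + (tpr1 - tpr2)) / 2,
    }


# Toy example: four samples per group.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
group = ["g1"] * 4 + ["g2"] * 4
metrics = bias_metrics(y_true, y_pred, group, "g1", "g2")
print(metrics)
```

Because the differences are signed, opposite-direction gaps in FPR and TPR can cancel; reporting the two gaps separately alongside the average avoids that blind spot.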

Experimental Protocols

Protocol 1: Disaggregated Error Analysis & Slice Discovery

- Partition Data: Split your validation/test data by protected attributes (e.g., ethnicity, gender, age decile) and/or phenotypic clusters.

- Run Inference: Generate model predictions for each partition.

- Compute Metrics: Calculate key performance indicators (KPIs) for each slice independently.

- Identify Underperforming Slices: Flag slices where KPIs fall below a predefined threshold (e.g., >10% drop in F1-score) or where bias metrics exceed a fairness threshold.

- Root Cause Analysis: Manually inspect false positives/negatives in underperforming slices to identify common failure modes.
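Protocol 1 maps naturally onto a pandas group-by. The following sketch assumes a DataFrame with hypothetical columns `y_true`, `y_pred`, and a slice column, and flags slices whose F1-score falls more than 10% (relative) below the overall F1-score; the relative interpretation of the threshold is an assumption.

```python
import pandas as pd
from sklearn.metrics import f1_score


def slice_report(df, slice_col, max_relative_drop=0.10):
    """Per-slice F1 with a flag for slices far below the overall F1."""
    overall = f1_score(df["y_true"], df["y_pred"])
    rows = []
    for name, part in df.groupby(slice_col):
        f1 = f1_score(part["y_true"], part["y_pred"], zero_division=0)
        rows.append({
            "slice": name,
            "n": len(part),
            "f1": f1,
            "flagged": bool(f1 < overall * (1 - max_relative_drop)),
        })
    return pd.DataFrame(rows)


# Toy validation set: subgroup A is predicted perfectly, subgroup B is not.
df = pd.DataFrame({
    "subgroup": ["A"] * 6 + ["B"] * 4,
    "y_true": [1, 1, 1, 0, 0, 0, 1, 1, 0, 0],
    "y_pred": [1, 1, 1, 0, 0, 0, 0, 1, 1, 0],
})
report = slice_report(df, "subgroup")
print(report)
```

The overall F1 here is 0.8, which looks acceptable, while subgroup B sits at 0.5; that is the masking effect the disaggregated analysis is designed to expose.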

Protocol 2: Bias Mitigation via Reweighting (Pre-processing)

- Calculate Weights: For each training sample i belonging to group a and class y, compute weight: `w_i = (P_group(a) * P_class(y)) / P_group,class(a, y)`, where P denotes probability in the target fair distribution (often the overall dataset proportions).

- Apply Weights: Use these weights during model training (e.g., in the loss function: `Loss = Σ w_i * L(y_i, ŷ_i)`).

- Validate: Evaluate the retrained model using the disaggregated analysis from Protocol 1.
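A minimal pandas sketch of the weight calculation in Protocol 2 follows, using the overall dataset proportions as the reference distribution. The column names and the function name `reweighing_weights` are hypothetical.

```python
import pandas as pd


def reweighing_weights(df, group_col, label_col):
    """w = P(group) * P(label) / P(group, label), estimated from df itself."""
    n = len(df)
    p_group = df[group_col].value_counts() / n
    p_label = df[label_col].value_counts() / n
    p_joint = df.groupby([group_col, label_col]).size() / n
    weights = [
        p_group[g] * p_label[y] / p_joint[(g, y)]
        for g, y in zip(df[group_col], df[label_col])
    ]
    return pd.Series(weights, index=df.index)


# Toy data: positives are common in group "a" and rare in group "b".
df = pd.DataFrame({
    "group": ["a"] * 4 + ["b"] * 4,
    "label": [1, 1, 1, 0, 1, 0, 0, 0],
})
df["w"] = reweighing_weights(df, "group", "label")

# After weighting, the positive rate is the same in both groups.
weighted_pos_rate = (
    (df["w"] * df["label"]).groupby(df["group"]).sum() / df["w"].groupby(df["group"]).sum()
)
print(weighted_pos_rate)
```

Under-represented (group, class) combinations receive weights above 1 and over-represented ones below 1, so group and label become statistically independent in the weighted data.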

Diagrams

Title: Bias Identification & Mitigation Workflow

Title: Legacy Bias Causing Model Failure on Novel Targets

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Bias Mitigation Experiments |

|---|---|

| Fairness Metrics Library (e.g., fairlearn, AI Fairness 360) | Provides standardized implementations of quantitative bias metrics (e.g., demographic parity, equalized odds) for model audit. |

| Slice Discovery Tool (e.g., SMASH, Domino) | Automatically identifies coherent subgroups (slices) of data where model underperforms. |

| Data Augmentation Tool (e.g., imbalanced-learn, nlpaug) | Generates synthetic samples for underrepresented classes/subgroups via SMOTE or back-translation. |

| Invariant Risk Minimization (IRM) Framework | A training paradigm that encourages learning of features causally related to the outcome, stable across environments (domains). |

| Cohort Harmonization Pipeline (e.g., sva R package, ComBat) | Adjusts for batch effects and systematic measurement differences across data collection sites. |

| Interpretability Toolkit (e.g., SHAP, LIME) | Explains individual predictions to diagnose failure modes in underperforming slices. |

Technical Support Center: Troubleshooting Guides & FAQs

Q1: Our model for predicting novel oncology targets shows high validation accuracy, but all top candidates are proteins highly expressed in male-derived cell lines. How can we diagnose and correct for sex bias in our training data? A1: This indicates a sampling bias where the training data over-represents male biology. Follow this diagnostic protocol:

- Audit Data Provenance: Tabulate the sex origin of all cell lines or tissue samples in your training set.

- Perform Stratified Analysis: Re-run your prediction pipeline on male-only and female-only data subsets separately. Significant performance disparity confirms the bias.

- Corrective Action - Data Rebalancing: Actively source or generate (via techniques like SMOTE) data from under-represented groups. Use a weighted loss function during training to penalize errors on the minority class (e.g., female-derived data) more heavily.

- Validation: Validate final model performance using balanced hold-out sets and external datasets with known sex distribution.

Q2: During clinical trial participant selection using an NLP model on EHR notes, we are inadvertently excluding eligible elderly patients. What is the likely cause and a step-by-step fix? A2: The bias likely stems from the model associating specific linguistic patterns or comorbidities common in elderly patients with ineligibility. Troubleshooting guide:

- Root Cause Analysis: Use SHAP or LIME explainability tools on your model to identify which words or phrases (e.g., "frail," "history of falls," "polypharmacy") are most strongly driving the exclusion classification.

- Protocol for De-biasing:

- Retrain with Fairness Constraints: Implement a fairness penalty (e.g., demographic parity or equalized odds) during model optimization to reduce correlation between the prediction and the protected attribute (age >65).

- Post-processing: Adjust the classification threshold for the elderly subgroup to achieve parity in selection rates, provided predictive performance remains acceptable.

- Continuous Monitoring: Deploy the model with a real-time dashboard tracking selection rates by age decade.
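The post-processing step can be sketched with per-group score quantiles. This is an illustration only: the scores are synthetic, the group labels and the function name `parity_thresholds` are hypothetical, and a real deployment would also need the performance check mentioned in the protocol.

```python
import numpy as np


def parity_thresholds(scores, group, target_rate):
    """Per-group score thresholds so each group is selected at about target_rate."""
    return {
        g: float(np.quantile(scores[group == g], 1.0 - target_rate))
        for g in np.unique(group)
    }


# Synthetic eligibility scores: the model scores the older group systematically lower.
scores = np.concatenate([np.linspace(0.1, 1.0, 10), np.linspace(0.05, 0.5, 10)])
group = np.array(["under_65"] * 10 + ["65_plus"] * 10)

thresholds = parity_thresholds(scores, group, target_rate=0.3)
selected = scores > np.array([thresholds[g] for g in group])
rates = {str(g): float(selected[group == g].mean()) for g in np.unique(group)}
print(thresholds, rates)
```

A single global threshold would select far fewer patients from the lower-scoring group; group-specific thresholds equalize the selection rates at the cost of treating identical scores differently, which is why the protocol ties this step to an acceptable-performance condition.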

Q3: Our multi-omics drug response predictor fails to generalize to patients of non-European ancestry. What experimental workflow can identify the layer in our pipeline where this racial/ethnic bias is introduced? A3: Implement a bias audit workflow at each stage.

Diagram Title: Bias Audit Workflow for Multi-Omics Pipeline

Detailed Protocol:

- Data Source Audit: Quantify the ancestral composition of your training data using genomic PCA against reference panels (e.g., 1000 Genomes).

- Preprocessing Check: Cluster your normalized data. If clusters separate strongly by ancestry, batch correction methods (e.g., ComBat) may be needed.

- Feature Selection Analysis: Test if selected biomarker genes are evenly distributed across ancestry groups or are disproportionately population-specific.

- Model Evaluation: Use ancestry-stratified cross-validation. Performance drops in specific groups indicate biased model generalization.
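One way to run the model evaluation step is leave-one-ancestry-out cross-validation, sketched below with scikit-learn's `LeaveOneGroupOut`. This is one interpretation of ancestry-stratified cross-validation; the data are synthetic and the logistic regression model is a stand-in for your actual predictor.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import LeaveOneGroupOut


def leave_one_ancestry_out_auc(X, y, ancestry):
    """Train on all other ancestry groups, then report AUC on the held-out group."""
    scores = {}
    for train_idx, test_idx in LeaveOneGroupOut().split(X, y, groups=ancestry):
        model = LogisticRegression(max_iter=1000).fit(X[train_idx], y[train_idx])
        held_out = str(ancestry[test_idx][0])
        scores[held_out] = roc_auc_score(y[test_idx], model.predict_proba(X[test_idx])[:, 1])
    return scores


# Synthetic demo: the outcome depends on the first feature in every group.
rng = np.random.default_rng(0)
X = rng.normal(size=(180, 5))
y = (X[:, 0] > 0).astype(int)
ancestry = np.repeat(["AFR", "EAS", "EUR"], 60)

auc_by_group = leave_one_ancestry_out_auc(X, y, ancestry)
print(auc_by_group)
```

In this synthetic case the signal is shared across groups, so every held-out AUC is high; on real data, a markedly lower AUC for one held-out group is the pattern the protocol describes as biased generalization.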

Summarized Quantitative Data from Recent Studies

Table 1: Documented Bias in Biomedical AI Training Datasets

| Data Type | Biased Variable | Under-Represented Group | Representation % | Study/Year |

|---|---|---|---|---|

| Genomic Data (GWAS) | Genetic Ancestry | Non-European | < 20% | Nature (2023) |

| Cell Line Databases | Sex | Female-derived | ~30% | Cell (2024) |

| Clinical Trial Images | Skin Tone | Fitzpatrick V-VI | < 10% | NEJM AI (2024) |

| EHR Data for NLP | Socioeconomic Status | Low-Income Zip Codes | Variable, often under-coded | JAMA Network (2023) |

Table 2: Impact of Bias on Model Performance Disparity

| Prediction Task | Performance Metric | Majority Group Performance | Minority Group Performance | Performance Gap |

|---|---|---|---|---|

| Diabetic Retinopathy Detection | AUC | 0.95 (Light Skin) | 0.75 (Dark Skin) | 0.20 |

| Polygenic Risk Scores (CHD) | Odds Ratio (Top Decile) | 4.2 (European Ancestry) | 1.5 (African Ancestry) | 2.7 |

| Drug Target Gene Prediction | Precision @ 10 | 0.80 (Male Cell Lines) | 0.45 (Female Cell Lines) | 0.35 |

Experimental Protocols

Protocol: Mitigating Ancestry Bias in GWAS-Based Target Discovery

- Data Collection: Aggregate GWAS summary statistics from diverse cohorts (e.g., All of Us, UK Biobank, BioBank Japan).

- Stratified QC: Perform quality control (imputation quality, MAF) separately per ancestry group.

- Meta-Analysis: Use a random-effects, trans-ancestry meta-analysis model (e.g., MR-MEGA) that includes principal components of genetic variation as covariates to distinguish shared from population-specific effects.

- Polygenic Scoring: Compute ancestry-adjusted polygenic scores using methods like PRS-CSx, which leverages a global prior and shared effects across populations.

- Validation: Test identified targets in in vitro models derived from diverse iPSC lines.

Diagram Title: Trans-Ancestry Target Discovery Workflow

Protocol: Auditing NLP Models for Clinical Trial Criteria

- Synthetic Cohort Generation: Use a large language model (LLM) with strict guidelines to generate synthetic patient profiles that vary systematically across protected attributes (age, race, sex) while keeping eligibility factors constant.

- Model Probing: Pass the synthetic profiles through your trial selection NLP classifier.

- Disparity Measurement: Calculate the odds ratio for selection between groups. A ratio significantly different from 1.0 indicates algorithmic bias.

- Adversarial De-biasing: Use the synthetic profiles as a fairness-aware training set to fine-tune the classifier, minimizing prediction difference across groups.
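For the disparity measurement step, the odds ratio and an approximate confidence interval can be computed with the standard library alone. The counts below are hypothetical, and the Woolf (log-scale) interval is one common choice rather than a method prescribed by the protocol.

```python
import math


def selection_odds_ratio(sel_a, n_a, sel_b, n_b, z=1.96):
    """Odds ratio of selection (group A vs. B) with a Woolf (log-scale) 95% CI."""
    a, b = sel_a, n_a - sel_a   # group A: selected, not selected
    c, d = sel_b, n_b - sel_b   # group B: selected, not selected
    odds_ratio = (a / b) / (c / d)
    se = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    low = math.exp(math.log(odds_ratio) - z * se)
    high = math.exp(math.log(odds_ratio) + z * se)
    return odds_ratio, (low, high)


# Hypothetical probe: 100 synthetic profiles per group, identical eligibility factors.
odds_ratio, (ci_low, ci_high) = selection_odds_ratio(60, 100, 40, 100)
print(f"OR={odds_ratio:.2f}, 95% CI=({ci_low:.2f}, {ci_high:.2f})")
```

An interval that excludes 1.0, as here, suggests that the classifier treats the two groups differently even though eligibility was held constant.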

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Bias-Aware Biomedical AI Research

| Item | Function | Example/Supplier |

|---|---|---|

| Diverse Reference Panels | Provides ancestral context for genomic analysis and correction. | 1000 Genomes Project, gnomAD, All of Us Researcher Workbench. |

| Ancestry-Informative Markers (AIMs) | A validated set of SNPs to genetically confirm or estimate population ancestry in cell lines/tissues. | Precision AIMs Panel (Thermo Fisher). |

| Commercially Diverse Cell Lines | Pre-characterized cell lines from various ethnicities and sexes for in vitro validation. | ATCC Human Primary Cell Diversity Panel. |

| Synthetic Data Generation Tools | Creates balanced datasets for stress-testing and de-biasing models without privacy concerns. | Mostly AI, Syntegra, or carefully prompted LLMs (e.g., GPT-4 with guardrails). |

| Fairness & Bias Audit Libraries | Open-source code for detecting and mitigating bias in ML models. | IBM AI Fairness 360 (AIF360), Facebook's Fairness Flow, Google's ML-Fairness-Gym. |

| Explainability (XAI) Suites | Identifies which input features drive biased predictions. | SHAP, LIME, Captum (for PyTorch). |

Technical Support Center

FAQs & Troubleshooting Guides

Q1: Our model shows excellent performance on our internal validation set but fails catastrophically on a new, external patient cohort. What could be the cause? A: This is a classic symptom of dataset bias. Your training and internal validation data likely lack representational diversity, causing the model to learn spurious correlations specific to that dataset.

- Troubleshooting Steps:

- Audit Cohort Demographics: Compare the distributions of key variables between your training set and the new cohort. Use the following table as a guide:

Q2: How can we formally test for racial/ancestral bias in our genomic risk prediction model before clinical deployment? A: A rigorous bias assessment protocol is mandatory.

- Experimental Protocol: Bias Disparity Audit

- Stratify Test Data: Partition your held-out test set into subgroups based on genetically inferred ancestry (e.g., AFR, EAS, EUR, SAS) using standardized PCA or ADMIXTURE analysis.

- Calculate Performance Metrics Per Subgroup: Compute key metrics—AUC, Sensitivity, Specificity, Positive Predictive Value (PPV)—for each ancestral subgroup independently.

- Quantify Disparity: Establish disparity thresholds before looking at the results. There is no universally mandated numeric cutoff; as a working example, a difference in AUC of >0.05 or a difference in sensitivity/specificity of >0.10 between subgroups can be treated as potentially problematic.

- Mitigation Experiment: If disparity exceeds thresholds, retrain using fairness-constrained optimization (e.g., imposing a penalty on performance variance across subgroups) or use adversarial debiasing to strip away ancestry-correlated features not causally linked to the outcome.
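The stratify, score, and compare steps of the audit can be sketched as below, using scikit-learn's `roc_auc_score` on toy data. The 0.05 AUC gap is the example threshold from the protocol; the function name and the choice of a reference group are assumptions.

```python
import numpy as np
from sklearn.metrics import roc_auc_score


def subgroup_auc_gaps(y_true, scores, ancestry, reference, max_gap=0.05):
    """Per-subgroup AUC plus the subgroups trailing the reference by more than max_gap."""
    y_true, scores, ancestry = map(np.asarray, (y_true, scores, ancestry))
    aucs = {
        str(g): roc_auc_score(y_true[ancestry == g], scores[ancestry == g])
        for g in np.unique(ancestry)
    }
    flagged = [g for g, auc in aucs.items() if aucs[reference] - auc > max_gap]
    return aucs, flagged


# Toy test set: scores rank EUR cases perfectly but misorder one AFR case/control pair.
y_true = [0, 0, 1, 1, 0, 0, 1, 1]
scores = [0.1, 0.2, 0.8, 0.9, 0.1, 0.6, 0.4, 0.9]
ancestry = ["EUR"] * 4 + ["AFR"] * 4

aucs, flagged = subgroup_auc_gaps(y_true, scores, ancestry, reference="EUR")
print(aucs, flagged)
```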

Q3: We suspect batch effects and site-specific protocols are introducing bias into our multi-omics data. How can we diagnose and correct this? A: Technical bias is a major confounder in translational research.

- Diagnostic Workflow:

- Principal Component Analysis (PCA): Plot the first two principal components of your data, colored by collection site/batch. Clear clustering by site indicates strong batch effect.

- Statistical Testing: Perform PERMANOVA on the data matrix using 'Site' as the grouping variable. A significant p-value confirms the effect.

- Correction Methodology:

- ComBat or ComBat-GP: Use these empirical Bayes methods to harmonize data across batches while preserving biological signal. They are standard for genomic and proteomic data.

- Protocol: Normalize data → Identify batch covariates → Apply ComBat adjustment → Validate by re-running PCA to show batch clustering has dissipated.
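To illustrate the fit-then-apply pattern behind batch adjustment, the sketch below implements a deliberately simplified location-only correction (per-batch mean centering). It is not ComBat, which additionally adjusts scale and uses empirical Bayes shrinkage; the class name `BatchCenterer` is hypothetical.

```python
import numpy as np


class BatchCenterer:
    """Simplified stand-in for batch correction: removes per-batch mean offsets."""

    def fit(self, X, batch):
        # Estimate offsets from the data passed here (ideally the training split only).
        self.global_mean_ = X.mean(axis=0)
        self.batch_means_ = {b: X[batch == b].mean(axis=0) for b in np.unique(batch)}
        return self

    def transform(self, X, batch):
        out = X.astype(float).copy()
        for b in np.unique(batch):
            # Raises KeyError for a batch that was not seen during fit.
            out[batch == b] -= self.batch_means_[b] - self.global_mean_
        return out


# Synthetic demo: the second run carries a constant technical offset.
rng = np.random.default_rng(0)
batch_train = np.repeat(["run1", "run2"], 30)
X_train = rng.normal(size=(60, 5)) + np.where(batch_train == "run2", 3.0, 0.0)[:, None]

centerer = BatchCenterer().fit(X_train, batch_train)
X_adj = centerer.transform(X_train, batch_train)
```

Keeping `fit` and `transform` separate makes it possible to estimate offsets on training samples only and reuse them on held-out samples from the same batches. A completely unseen batch has no estimated offset, which is a real limitation of this kind of correction.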

Visualizations

Diagram: ML Bias Mitigation Workflow

Diagram: Sources of Technical Batch Effect

The Scientist's Toolkit: Research Reagent Solutions

| Item / Reagent | Function in Bias-Aware Research |

|---|---|

| Standardized Reference Cell Lines (e.g., from Cell Line Genomics Consortium) | Provides genetically characterized, common baselines across labs to control for experimental variability. |

| Multiplex Immunoassay Kits with Pre-Mixed Panels (e.g., Olink, MSD) | Minimizes protocol deviation and batch variation in protein biomarker quantification across sites. |

| Synthetic Data Generation Tools (e.g., SynthVAE, CTGAN) | Generates realistic data for underrepresented subgroups to augment training sets without privacy concerns. |

| Algorithmic Fairness Libraries (e.g., fairlearn, AIF360) | Provides pre-implemented metrics (disparate impact, equalized odds) and mitigation algorithms for bias auditing. |

| Bioinformatics Pipelines with ComBat/Harmonization (e.g., sva R package, `scanpy.external.pp.harmony_integrate` in Python) | Critical for removing technical batch effects from genomic and transcriptomic data prior to analysis. |

| Ancestry Inference Tools (e.g., PLINK, EIGENSOFT) | Enables genetic ancestry stratification of cohorts to assess and correct for ancestral bias in models. |

Building Better Datasets: Proactive Methodologies for Bias-Aware Model Development

Technical Support Center: Troubleshooting Data Curation & Assembly

FAQs & Troubleshooting Guides

Q1: During cohort identification from electronic health records (EHR), my dataset shows a significant demographic skew (e.g., age, ethnicity) compared to the underlying patient population. How can I diagnose and correct this? A: This indicates a sampling bias in your data extraction query or source system enrollment. Follow this protocol:

- Diagnosis: Calculate the prevalence of your condition of interest in your curated dataset across key demographic strata (see Table 1). Compare these proportions to gold-standard epidemiological data (e.g., CDC, WHO) or a prior, comprehensive institutional audit using a Chi-squared test.

- Correction Protocol: Implement stratified sampling or propensity score weighting.

- Stratified Sampling: Re-sample your EHR extract, setting quotas for each demographic subgroup (e.g., 50% female, 30% from Ethnicity A) based on the reference population's distribution.

- Propensity Score Weighting: Build a logistic regression model predicting inclusion in your dataset based on demographics. Use the inverse of the predicted probability (the propensity score) as a weight for each sample in subsequent analyses.
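The stratified sampling option can be sketched with pandas. The column name, quotas, and function name `stratified_resample` are hypothetical; strata that are too small for their quota are sampled with replacement, which is an assumption you may prefer to replace with targeted data collection.

```python
import pandas as pd


def stratified_resample(df, col, target_props, n_total, seed=0):
    """Draw n_total rows so that `col` follows the reference proportions."""
    parts = []
    for level, prop in target_props.items():
        pool = df[df[col] == level]
        k = round(n_total * prop)
        # Sample with replacement only when the stratum is smaller than its quota.
        parts.append(pool.sample(n=k, replace=len(pool) < k, random_state=seed))
    return pd.concat(parts, ignore_index=True)


# Hypothetical EHR extract: 20% female, while the reference population is 50% female.
ehr = pd.DataFrame({"patient_id": range(100), "sex": ["F"] * 20 + ["M"] * 80})
balanced = stratified_resample(ehr, "sex", {"F": 0.5, "M": 0.5}, n_total=40)
counts = balanced["sex"].value_counts().to_dict()
print(counts)
```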

Q2: My image dataset (e.g., histopathology, retinal scans) contains batch effects from different scanner models or staining protocols, causing model overfitting. What is the standard mitigation workflow? A: Batch effects are a common technical bias. Employ this pre-processing pipeline:

- Experimental Protocol for Combating Batch Effects:

a. Metadata Logging: For every image, log critical technical covariates: scanner manufacturer/model, staining lot number, slide thickness, imaging date.

b. Quality Control: Apply a standardized filter (e.g., remove out-of-focus images using Laplacian variance).

c. Normalization: Apply a stain normalization algorithm (e.g., Structure-Preserving Color Normalization (SPCN) or Macenko) for histology images. For other modalities, use platform-specific calibration.

d. Harmonization: Use a computational tool like ComBat (empirical Bayes) or its extensions (ComBat-GAM) to harmonize feature distributions across logged batches, adjusting for known biological variables (e.g., disease stage) to preserve signal.
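
The Laplacian-variance focus filter mentioned in the quality control step can be sketched with NumPy alone. The threshold value, the synthetic images, and the helper names are assumptions for illustration; a real pipeline would calibrate the threshold on manually reviewed slides.

```python
import numpy as np

def laplacian_variance(img):
    """Variance of a 4-neighbour discrete Laplacian over the image interior.
    Sharp images have strong local contrast and therefore a high variance."""
    img = np.asarray(img, dtype=float)
    lap = (img[:-2, 1:-1] + img[2:, 1:-1] + img[1:-1, :-2] + img[1:-1, 2:]
           - 4.0 * img[1:-1, 1:-1])
    return float(lap.var())

def box_blur(img):
    """3x3 mean filter (interior only), used here only to simulate defocus."""
    img = np.asarray(img, dtype=float)
    h, w = img.shape
    acc = np.zeros((h - 2, w - 2))
    for dy in range(3):
        for dx in range(3):
            acc += img[dy:dy + h - 2, dx:dx + w - 2]
    return acc / 9.0

rng = np.random.default_rng(0)
sharp = rng.random((64, 64))   # synthetic stand-in for an in-focus tile
blurry = box_blur(sharp)       # synthetic stand-in for an out-of-focus tile

FOCUS_THRESHOLD = 0.1          # hypothetical cut-off; tune per scanner and stain
sharp_score = laplacian_variance(sharp)
blurry_score = laplacian_variance(blurry)
keep_sharp = sharp_score >= FOCUS_THRESHOLD
keep_blurry = blurry_score >= FOCUS_THRESHOLD
```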

Q3: When curating genomic data from public repositories like GEO or TCGA, how do I ensure consistency in genomic build alignment and annotation to prevent label leakage? A: Inconsistent genomic coordinates are a source of hidden bias.

- Troubleshooting Guide:

- Symptom: Poor model generalization; high performance on one dataset fails on another.

- Root Cause: Features (e.g., gene expressions, SNP positions) are mapped inconsistently.

- Solution Protocol:

a. Standardize Genome Build: Use CrossMap or the liftOver tool to convert all genomic coordinates (e.g., SNP arrays, variant calls) to a single reference build (e.g., GRCh38/hg38).

b. Re-annotate Uniformly: For gene expression matrices, re-annotate all probe sets to a current gene annotation database (e.g., GENCODE) using the platform-specific annotation files. Do not rely on provided annotations.

c. Version Control: Document the exact versions of all reference files used (e.g., GTF, FASTA).

Q4: How can I assess whether my curated "control" group is actually representative for the specific disease context, or if it introduces negative set bias? A: This is a critical validity check. Implement the following methodological review:

- Define "Appropriateness": List the confounding variables that must be balanced (e.g., age, sex, BMI, smoking status, comorbid conditions like hypertension).

- Comparative Analysis: For each potential control source (e.g., general population biobank, "healthy" volunteers, non-diseased tissue from adjacent site), tabulate the distributions of these confounders against your case group.

- Statistical Matching: Use propensity score matching (1:1 nearest neighbor, caliper=0.2) to select the most appropriate control subset from a larger pool. Assess balance post-matching using standardized mean differences (<0.1 indicates good balance).
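
A minimal sketch of the matching and balance check, assuming propensity scores have already been estimated. The scores, ages, and function names are hypothetical, and the caliper here is in raw score units, whereas in practice it is often expressed as a fraction of the standard deviation of the logit propensity score.

```python
import numpy as np

def standardized_mean_difference(cases, controls):
    """(mean_cases - mean_controls) divided by the pooled standard deviation."""
    cases = np.asarray(cases, dtype=float)
    controls = np.asarray(controls, dtype=float)
    pooled_sd = np.sqrt((cases.var(ddof=1) + controls.var(ddof=1)) / 2.0)
    return float((cases.mean() - controls.mean()) / pooled_sd)

def greedy_caliper_match(case_scores, control_scores, caliper):
    """Greedy 1:1 nearest-neighbour matching without replacement.
    Cases with no unused control inside the caliper stay unmatched."""
    available = set(range(len(control_scores)))
    pairs = []
    for i, score in enumerate(case_scores):
        if not available:
            break
        j = min(sorted(available), key=lambda k: abs(control_scores[k] - score))
        if abs(control_scores[j] - score) <= caliper:
            pairs.append((i, j))
            available.remove(j)
    return pairs

# Hypothetical propensity scores and one confounder (age) per subject
case_scores = [0.30, 0.60, 0.90]
control_scores = [0.32, 0.58, 0.10, 0.61]
case_age = np.array([60, 62, 80])
control_age = np.array([60, 70, 30, 62])

pairs = greedy_caliper_match(case_scores, control_scores, caliper=0.05)
smd_before = standardized_mean_difference(case_age, control_age)
matched_cases = case_age[[i for i, _ in pairs]]
matched_controls = control_age[[j for _, j in pairs]]
smd_after = standardized_mean_difference(matched_cases, matched_controls)
```

Unmatched cases (the third case here) are dropped, so the matched analysis describes a narrower population than the original case group.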

Table 1: Common Demographic Disparities in Public Biomedical Datasets (Illustrative)

| Dataset / Biobank | Primary Focus | Reported Demographic Skew (vs. US Population) | Potential Bias Risk |

|---|---|---|---|

| UK Biobank | Genomics, Imaging | Higher proportion of older, less ethnically diverse, healthier volunteers | Socioeconomic, "healthy volunteer" bias |

| The Cancer Genome Atlas (TCGA) | Oncology | Underrepresentation of racial/ethnic minority groups, particularly for certain cancers | Limited generalizability of molecular subtypes |

| ADNI (Alzheimer's Disease) | Neuroimaging | Predominantly Non-Hispanic White, highly educated cohort | Skewed model predictions for disease progression |

| GWAS Catalog Summary Stats | Genetics | ~79% of participants are of European ancestry | Reduced predictive utility in non-European populations |

Table 2: Impact of Data Curation Interventions on Model Performance

| Intervention Method | Application Scenario | Reported Effect on Test Set Performance (Generalizability) | Key Metric Change |

|---|---|---|---|

| Stratified Sampling by Demographics | EHR Cohort for Disease Prediction | Increased AUC from 0.72 to 0.78 in underrepresented group | Reduction in AUC disparity from 0.15 to 0.05 |

| Batch Effect Harmonization (ComBat) | Multi-site MRI Study | Improved cross-site classification accuracy by 18% | Decrease in batch-associated variance from 40% to <5% |

| Active Learning for Rare Class | Histopathology (Rare Cancer) | F1-score for rare class improved from 0.31 to 0.65 | Required 60% fewer labeled samples to achieve baseline |

| Synthetic Minority Oversampling (SMOTE) | Imbalanced Molecular Subtypes | Reduced false negative rate by 22% | Precision maintained within 3% of original |

Experimental Protocols

Protocol 1: Implementing Stratified Sampling for EHR Data Extraction

Objective: To assemble a cohort from an EHR that mirrors the demographic distribution of a target population.

Materials: SQL/OHDSI OMOP CDM access, statistical software (R/Python).

Procedure:

- Define the clinical phenotype for cases using ICD-10 codes, lab values, and medication records with expert validation.

- Query the reference population demographics (e.g., from institutional demographic database) to obtain target proportions for strata: [Sex] x [Age Group] x [Race/Ethnicity].

- From the pool of identified cases, calculate the current proportion in each stratum.

- For strata where current proportion > target, randomly subsample cases. For strata where current proportion < target, note the deficit (this may require expanded querying or indicate true underrepresentation).

- Apply the same stratified sampling logic to select controls from patients not meeting case criteria, matching on key confounders like enrollment period.
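
The subsampling step of this protocol can be sketched as follows. The function name, the record layout, and the target proportions are illustrative assumptions; the sketch only down-samples over-represented strata and does not address deficits that require expanded querying.

```python
import random
from collections import Counter, defaultdict

def stratified_subsample(records, stratum_of, target_props, seed=0):
    """Subsample records so stratum proportions follow target_props.
    The total size is limited by the scarcest stratum relative to its target."""
    by_stratum = defaultdict(list)
    for rec in records:
        by_stratum[stratum_of(rec)].append(rec)
    # Largest cohort size for which no quota exceeds the available records
    total = min(len(by_stratum[s]) / p for s, p in target_props.items() if p > 0)
    quotas = {s: min(len(by_stratum[s]), round(total * p))
              for s, p in target_props.items()}
    rng = random.Random(seed)
    sample = []
    for s, quota in quotas.items():
        sample.extend(rng.sample(by_stratum[s], quota))
    return sample, quotas

# Hypothetical pool of identified cases: (stratum label, patient index)
records = ([("A", i) for i in range(600)]
           + [("B", i) for i in range(300)]
           + [("C", i) for i in range(100)])
target_props = {"A": 0.5, "B": 0.3, "C": 0.2}   # hypothetical reference distribution

sample, quotas = stratified_subsample(records, lambda r: r[0], target_props)
sample_counts = Counter(r[0] for r in sample)
```

Here stratum C is the binding constraint: all 100 of its cases are kept and the other strata are reduced to match, which is why the resulting cohort has 500 rather than 1,000 records.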

Protocol 2: Computational Batch Effect Correction for Transcriptomics Data

Objective: Remove technical variation from multiple sequencing batches while preserving biological variation.

Materials: Gene expression matrix (log2 counts), batch metadata file, R with sva package.

Procedure:

- Model Design: Create a full model matrix for biological conditions of interest (e.g., ~ disease_state + age). Create a null model matrix for covariates to preserve (e.g., ~ age).

- Identify Surrogate Variables: Use the num.sv function to estimate the number of hidden batch effects.

- Run ComBat: Execute ComBat(dat = expression_matrix, batch = batch_vector, mod = full_model, par.prior = TRUE, prior.plots = FALSE).

- Validation: Perform Principal Component Analysis (PCA) on corrected data. Visualize: PC1 vs. PC2 colored by batch (should show mixing) and by disease_state (should show separation).
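
As a numeric complement to the PCA check, the share of variance attributable to batch can be computed before and after correction. The sketch below is in Python rather than R and deliberately uses a simple per-batch mean-centering as a stand-in for ComBat: it has no empirical Bayes shrinkage and does not protect biological covariates, so it would also remove real signal if batch and disease state were confounded. The data are simulated and all names are illustrative.

```python
import numpy as np

def batch_variance_fraction(X, batch):
    """Mean over features of (between-batch sum of squares / total sum of squares).
    X has shape (samples, features)."""
    X = np.asarray(X, dtype=float)
    batch = np.asarray(batch)
    grand_mean = X.mean(axis=0)
    ss_total = ((X - grand_mean) ** 2).sum(axis=0)
    ss_between = np.zeros(X.shape[1])
    for b in np.unique(batch):
        Xb = X[batch == b]
        ss_between += len(Xb) * (Xb.mean(axis=0) - grand_mean) ** 2
    return float(np.mean(ss_between / ss_total))

def center_per_batch(X, batch):
    """Location-only adjustment: shift every batch to the overall per-feature mean."""
    X = np.asarray(X, dtype=float)
    batch = np.asarray(batch)
    adjusted = X.copy()
    grand_mean = X.mean(axis=0)
    for b in np.unique(batch):
        rows = batch == b
        adjusted[rows] += grand_mean - X[rows].mean(axis=0)
    return adjusted

rng = np.random.default_rng(0)
batch = np.array([0] * 20 + [1] * 20)
expression = rng.normal(size=(40, 50))   # simulated log2 expression
expression[batch == 1] += 3.0            # simulated additive batch shift

fraction_before = batch_variance_fraction(expression, batch)
fraction_after = batch_variance_fraction(center_per_batch(expression, batch), batch)
```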

Diagrams

Diagram 1: Bias-Aware Data Curation Workflow

Diagram 2: Common Sources of Bias in Biomedical ML

The Scientist's Toolkit: Research Reagent Solutions

| Item / Resource | Function in Strategic Curation | Example / Note |

|---|---|---|

| OHDSI OMOP CDM | Standardized data model to convert disparate EHR databases into a common format, enabling reproducible cohort identification queries. | Essential for multi-site studies. |

| Phenotype Libraries | Pre-validated, computable definitions for diseases and conditions (e.g., PheCODE, HPO). Reduces label noise and variability in case/control assignment. | Use from reputable consortia. |

| Bioconductor sva | R package containing ComBat and other algorithms for batch effect correction in genomic and other high-dimensional data. | Industry standard for harmonization. |

| Synthetic Data Generators (e.g., CTGAN, Synthetic Minority Oversampling) | Tools to generate realistic synthetic samples for rare classes or to balance datasets, mitigating class imbalance bias. | Use with caution; evaluate fidelity. |

| Labeling Platforms with QA (e.g., Labelbox, CVAT) | Centralized platforms for expert annotation with built-in quality assurance (IQC/EQC), reducing annotation bias and noise. | Critical for imaging/nlp tasks. |

| Fairness Toolkits (e.g., AIF360, Fairlearn) | Libraries to calculate fairness metrics (demographic parity, equalized odds) and apply post-processing bias mitigations to trained models. | Integrate into evaluation pipeline. |

| Liftover Tools (UCSC, CrossMap) | Utilities to convert genomic coordinates between different assembly builds, ensuring consistent feature space across datasets. | Mandatory for integrative genomics. |

Troubleshooting Guides & FAQs

Q1: My bias metrics (e.g., Demographic Parity Difference, Equalized Odds) show high values for a protected attribute (e.g., race, sex), but my overall model accuracy remains high. Is this acceptable for regulatory submission in healthcare? A: No. High accuracy with high bias metrics is a critical failure for regulatory science and ethical deployment. Regulatory bodies like the FDA emphasize fairness. A biased model can perpetuate health disparities. You must mitigate bias even at a potential minor cost to aggregate accuracy. Proceed to debiasing techniques (pre-processing, in-processing, post-processing) and document all steps.

Q2: During adversarial debiasing, my model fails to converge or the fairness-performance trade-off is worse than reported in literature. What are common pitfalls? A: This often stems from incompatible hyperparameters or gradient conflict. Follow this protocol:

- Stabilize Training: Use a smaller learning rate for the adversary (e.g., 0.001) than the predictor (e.g., 0.01).

- Gradient Reversal Tuning: Implement gradient reversal layer with a scaling factor (lambda). Start with lambda = 0.1 and incrementally increase.

- Architecture Check: Ensure the adversary network is simpler (e.g., 1 hidden layer) than the predictor to prevent it from overpowering the primary task.

- Monitor Logs: Track loss for both predictor and adversary separately to diagnose collapse.

Q3: When applying reweighting (pre-processing bias mitigation), my model's performance on the minority subgroup decreases further. Why? A: This may indicate intersectional bias or flawed weight calculation. Do not compute weights solely on a single protected attribute (e.g., sex). Instead, compute for intersections (e.g., sex × age group). Use the formula: Weight = (Expected Probability of Subgroup) / (Observed Probability in Training Data). Validate weights on a held-out sample.
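
The weight formula above, applied to intersectional subgroups, can be sketched in a few lines. The group labels, counts, and uniform target proportions are hypothetical, as is the function name.

```python
from collections import Counter

def intersectional_weights(groups, expected_props):
    """Per-sample weight = expected subgroup probability / observed subgroup probability."""
    n = len(groups)
    observed = {g: count / n for g, count in Counter(groups).items()}
    return [expected_props[g] / observed[g] for g in groups]

# Hypothetical training set: subgroup = (sex, age group)
groups = ([("F", "<50")] * 10 + [("F", "50+")] * 30
          + [("M", "<50")] * 20 + [("M", "50+")] * 40)
# Hypothetical target: each intersection should carry equal mass
expected_props = {g: 0.25 for g in set(groups)}

weights = intersectional_weights(groups, expected_props)
```

Because each subgroup's weights sum to its expected share of the data, the weights sum to the sample size, which is a quick sanity check before passing them to a model's sample-weight argument.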

Q4: The "Fairness Through Awareness" approach requires a similarity metric. What is a robust choice for high-dimensional biomedical data? A: For clinical or omics data, a carefully calibrated Mahalanobis distance is recommended. Ensure you:

- Standardize Features: Use RobustScaler to minimize outlier influence.

- Regularize Covariance: Compute the covariance matrix with Ledoit-Wolf shrinkage to avoid singularity.

- Validate: Check that the resulting distances correlate with domain expert assessments of similarity for a small sample.

Q5: How do I validate that bias mitigation for a drug response predictor generalizes to a new patient population not seen during training? A: Implement a rigorous external validation protocol:

- Split by Site/Study: Partition data so that entire clinical trial sites or distinct biogeographic groups are in the external test set.

- Stratified Audit: Evaluate all performance and bias metrics (see table below) on each distinct subgroup within the external set.

- Threshold Calibration: Recalibrate decision thresholds on the external set's majority subgroup if necessary, then re-audit fairness metrics.

Table 1: Comparative Performance of Bias Mitigation Techniques on the TOX21 Dataset (Hypothetical Results)

| Mitigation Technique | Overall AUC | Subgroup A AUC | Subgroup B AUC | Demographic Parity Difference | Equalized Odds Difference | Computational Overhead |

|---|---|---|---|---|---|---|

| Baseline (No Mitigation) | 0.89 | 0.92 | 0.81 | 0.18 | 0.15 | Low |

| Reweighting (Pre-Processing) | 0.87 | 0.90 | 0.85 | 0.08 | 0.09 | Low |

| Adversarial Debiasing | 0.86 | 0.88 | 0.86 | 0.05 | 0.06 | High |

| Reduction Post-Processing | 0.88 | 0.91 | 0.84 | 0.04 | 0.10 | Very Low |

Table 2: Common Fairness Metrics Formulas & Interpretation

| Metric | Formula (Simplified) | Ideal Value | Interpretation in Clinical Context |

|---|---|---|---|

| Demographic Parity | P(Ŷ=1 \| A=0) = P(Ŷ=1 \| A=1) | 0 | Equal rate of positive prediction across groups. |

| Equalized Odds | P(Ŷ=1 \| A=0, Y=y) = P(Ŷ=1 \| A=1, Y=y) for y∈{0,1} | 0 | Equal TPR and TNR across groups. Critical for diagnostic fairness. |

| Predictive Parity | P(Y=1 \| A=0, Ŷ=1) = P(Y=1 \| A=1, Ŷ=1) | 0 | Equal PPV across groups. Ensures positive predictions are equally reliable. |
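
The first two metrics in the table can be computed directly from predictions, as in the NumPy sketch below. The arrays are toy data and the function names are illustrative; the equalized odds gap is taken as the larger of the TPR and FPR gaps, which is one common convention. Toolkits such as aif360 or fairlearn provide audited implementations.

```python
import numpy as np

def demographic_parity_difference(y_pred, group):
    """Largest gap in positive-prediction rate between groups."""
    rates = [y_pred[group == g].mean() for g in np.unique(group)]
    return float(max(rates) - min(rates))

def equalized_odds_difference(y_true, y_pred, group):
    """Larger of the between-group TPR gap (y=1) and FPR gap (y=0)."""
    gaps = []
    for y in (0, 1):
        rates = [y_pred[(group == g) & (y_true == y)].mean()
                 for g in np.unique(group)]
        gaps.append(max(rates) - min(rates))
    return float(max(gaps))

# Toy data: protected attribute, true labels, binary predictions
group = np.array([0, 0, 0, 0, 1, 1, 1, 1])
y_true = np.array([1, 1, 0, 0, 1, 1, 0, 0])
y_pred = np.array([1, 1, 1, 0, 1, 0, 0, 0])

dp_diff = demographic_parity_difference(y_pred, group)
eo_diff = equalized_odds_difference(y_true, y_pred, group)
```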

Experimental Protocols

Protocol 1: Benchmarking Bias Metrics in a Drug Toxicity Classification Pipeline

- Data Partitioning: Split dataset into Train (60%), Validation (20%), Test (20%) by patient ID, ensuring no patient leakage. Stratify splits by protected attribute (e.g., genetic ancestry PCA group).

- Baseline Model Training: Train a standard Random Forest or GBDT model on the Train set. Optimize hyperparameters for AUC on the Validation set.

- Bias Audit: On the held-out Test set, calculate all metrics in Table 2 for each protected subgroup. Use the aif360 or fairlearn Python toolbox.

- Documentation: Record results in a table formatted like Table 1.
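
The patient-level partitioning in the first step can be sketched as below. The patient IDs and the function name are hypothetical, and the sketch covers only the leakage constraint; stratifying by a protected attribute would require assigning patients within each attribute group separately.

```python
import random

def split_by_patient(patient_ids, fractions=(0.6, 0.2, 0.2), seed=0):
    """Assign every sample to train/val/test so that all samples from one
    patient land in the same split (no patient leakage)."""
    unique_ids = sorted(set(patient_ids))
    random.Random(seed).shuffle(unique_ids)
    n_train = round(fractions[0] * len(unique_ids))
    n_val = round(fractions[1] * len(unique_ids))
    assignment = {}
    for rank, pid in enumerate(unique_ids):
        if rank < n_train:
            assignment[pid] = "train"
        elif rank < n_train + n_val:
            assignment[pid] = "val"
        else:
            assignment[pid] = "test"
    return [assignment[pid] for pid in patient_ids]

# Hypothetical dataset: 50 patients with 4 samples each
patient_ids = [f"P{i % 50}" for i in range(200)]
splits = split_by_patient(patient_ids)

# Collect the set of splits each patient appears in (should always be size 1)
splits_per_patient = {}
for pid, split in zip(patient_ids, splits):
    splits_per_patient.setdefault(pid, set()).add(split)
```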

Protocol 2: Implementing and Evaluating Adversarial Debiasing

- Architecture Setup: Implement a predictor network (2-3 hidden layers) and an adversary network (1-2 hidden layers) using PyTorch/TensorFlow. The adversary takes the predictor's penultimate layer as input and predicts the protected attribute.

- Gradient Reversal: Insert a Gradient Reversal Layer (GRL) between the predictor and adversary. The GRL acts as identity during forward pass and negates gradients during backward pass.

- Alternating Training: In each epoch:

- Step 1: Freeze adversary, train predictor to minimize prediction loss.

- Step 2: Freeze predictor, train adversary to minimize protected attribute prediction loss.

- Step 3: Joint training with GRL active to maximize adversary loss while minimizing predictor loss.

- Evaluation: Follow Protocol 1, Step 4, on the Test set.
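
A minimal PyTorch sketch of the Gradient Reversal Layer described in this protocol, assuming PyTorch is installed. The class name `GradientReversal` and the helper `grad_reverse` are illustrative names, not part of any library.

```python
import torch

class GradientReversal(torch.autograd.Function):
    """Identity in the forward pass; multiplies the gradient by -lambd on the way back."""

    @staticmethod
    def forward(ctx, x, lambd):
        ctx.lambd = lambd
        return x.view_as(x)

    @staticmethod
    def backward(ctx, grad_output):
        # One gradient per forward input: reversed for x, None for lambd
        return -ctx.lambd * grad_output, None

def grad_reverse(x, lambd=1.0):
    return GradientReversal.apply(x, lambd)

# Check both properties on a toy tensor
features = torch.ones(3, requires_grad=True)
reversed_features = grad_reverse(features, lambd=0.5)
reversed_features.sum().backward()
```

In a full model the adversary would consume `grad_reverse(encoder_output, lambd)`, so minimizing the adversary's loss pushes the encoder toward representations from which the protected attribute is harder to predict.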

The Scientist's Toolkit: Research Reagent Solutions

| Item/Resource | Function in Algorithmic Fairness Research |

|---|---|

| AI Fairness 360 (aif360) | Open-source Python toolkit containing a comprehensive set of fairness metrics, bias mitigation algorithms, and explainability tools for benchmarking. |

| Fairlearn | Python package focused on assessing and improving fairness of AI systems, offering reduction algorithms and interactive dashboards for visualization. |

| SHAP (SHapley Additive exPlanations) | Game-theoretic approach to explain model predictions, crucial for identifying feature contributions to bias and ensuring interpretability. |

| MLflow | Platform to track experiments, parameters, metrics (including fairness metrics), and models to maintain rigorous audit trails for regulatory compliance. |

| Synthetic Data Generators (e.g., SDV, Gretel) | Tools to generate bias-controlled or augmented synthetic datasets for stress-testing fairness methods when real-world data is limited or highly sensitive. |

| Protected Attribute Ontologies | Standardized vocabularies (e.g., from NCI Thesaurus, CDISC) for defining race, ethnicity, sex, and genetic ancestry to ensure consistent subgroup analysis. |

Troubleshooting Guides & FAQs

FAQ 1: Why does my SMOTE-augmented dataset lead to overfitting and poor generalization on the external test set?

- Answer: This is a common issue where synthetic samples are generated without considering the underlying data manifold, creating unrealistic or noisy examples. Specifically for biomedical data, SMOTE can create implausible biological states in high-dimensional feature spaces (e.g., gene expression, molecular descriptors). The synthetic points may lie in regions of feature space that do not correspond to viable biological mechanisms, leading the model to learn artificial decision boundaries.

- Solution: Implement Manifold-aware or boundary-focused variants like Borderline-SMOTE or ADASYN, which focus synthesis on harder-to-learn samples. For highly structured data (e.g., images, spectra), consider switching to model-based synthesis (e.g., using VAEs or GANs) that learn the data distribution. Always validate synthesized samples with domain experts (e.g., a medicinal chemist for compound data) for plausibility.
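
The plausibility problem described in this answer is easy to see on binary fingerprints. The sketch below re-implements the core SMOTE interpolation step in NumPy for illustration only (it is not the imbalanced-learn implementation), on randomly generated 16-bit fingerprints; the function name and parameters are assumptions.

```python
import numpy as np

def smote_like(X_min, n_new, k=3, seed=0):
    """Interpolate between each sampled minority point and one of its
    k nearest minority neighbours (the core idea behind SMOTE)."""
    rng = np.random.default_rng(seed)
    X_min = np.asarray(X_min, dtype=float)
    dist = ((X_min[:, None, :] - X_min[None, :, :]) ** 2).sum(axis=2)
    np.fill_diagonal(dist, np.inf)                 # a point is not its own neighbour
    neighbours = np.argsort(dist, axis=1)[:, :k]
    base = rng.integers(0, len(X_min), size=n_new)
    chosen = neighbours[base, rng.integers(0, k, size=n_new)]
    gap = rng.random((n_new, 1))                   # interpolation factor in [0, 1)
    return X_min[base] + gap * (X_min[chosen] - X_min[base])

rng = np.random.default_rng(1)
fingerprints = rng.integers(0, 2, size=(10, 16))   # toy binary fingerprints
synthetic = smote_like(fingerprints, n_new=20)

# Share of synthetic entries that are neither 0 nor 1
fractional_share = float(np.mean((synthetic != 0) & (synthetic != 1)))
```

Every bit on which the two parents disagree becomes a fractional value, which corresponds to no real substructure; this is one concrete reason to have synthesized compounds reviewed for plausibility.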

FAQ 2: My CTGAN model for generating synthetic patient cohorts collapses, producing identical samples. How do I fix this?

- Answer: Mode collapse in GANs is often due to an imbalance in the discriminator and generator training dynamics or an overly powerful discriminator.

- Solution:

- Adjust Training Hyperparameters: Use the Wasserstein GAN with Gradient Penalty (WGAN-GP) loss, which provides more stable training signals.

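- Monitor Metrics: Track a sample-quality metric during training to quantitatively assess diversity and quality. For tabular cohorts, the Classifier Two-Sample Test (C2ST) is the natural choice; the Frechet Inception Distance (FID) relies on an image feature extractor and applies only when the generated data are images.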

- Architecture Checks: Ensure your generator network has sufficient capacity (e.g., number of layers, nodes) to model the complex distribution of your underrepresented population.

- Data Preprocessing: Normalize continuous variables and use appropriate encoding for mixed data types (continuous and categorical).

FAQ 3: After using augmentation, my model's performance metrics improve on validation data but degrade in real-world deployment for the target minority subgroup.

- Answer: This indicates a domain shift introduced by the augmentation technique. The synthesized data may not accurately represent the true conditional distribution P(X|Y) of the minority class in the deployment environment, especially if the original training data for that subgroup was biased or too small to estimate the distribution.

- Solution: Implement a two-stage validation pipeline:

- Hold out a completely untouched sample of the rare population from the original data as a "sanity test" set.

- Use domain adaptation techniques post-augmentation to align the feature distributions of the synthetic minority data and the held-out real minority data.

- Report performance on the held-out real minority set as the key metric, not just on the overall augmented validation set.

FAQ 4: How do I choose between oversampling (like SMOTE) and undersampling for my highly imbalanced biomedical dataset?

- Answer: The choice depends on dataset size and computational cost.

- See the comparative table below:

| Technique | Recommended Scenario | Primary Risk | Typical Use-Case in Drug Development |

|---|---|---|---|

| Oversampling (SMOTE & variants) | Total dataset size is small to moderate. | Creating unrealistic samples; overfitting. | Augmenting rare adverse event reports or patients with a specific genetic biomarker. |

| Undersampling (Random, Tomek Links) | The majority class is very large, and computational efficiency is critical. | Loss of potentially useful information from the majority class. | Pre-processing large-scale phenotypic screening data before focused model training. |

| Hybrid (SMOTE + Tomek) | Dataset is of medium size and you want to clean the decision boundary. | Increased complexity in pipeline tuning. | Balancing cell image datasets for classification of rare morphological phenotypes. |

| Synthesis (VAE/GAN) | Data has complex, high-dimensional structure (images, sequences). | High computational demand; risk of generating nonsensical data. | Generating synthetic compound structures or histopathology images for rare cancer subtypes. |

Detailed Experimental Protocol: Evaluating SMOTE vs. CTGAN for Imbalanced Assay Data

Objective: To compare the efficacy of SMOTE and CTGAN in improving model performance on an underrepresented "active" class in a high-throughput screening assay.

Materials & Reagents:

- Original Dataset: assay_data.csv containing 10,000 compounds (features: 2048-bit Morgan fingerprints, target: binary activity with 1% positive rate).

- Software: Python 3.9, imbalanced-learn 0.10.1, SDV 0.17.1, scikit-learn 1.3.0.

- Validation Set: 20% of original data, stratified by activity, held out before any augmentation.

Methodology:

- Data Splitting: Split original data into 80% training (n=8000) and 20% held-out test (n=2000). The training set contains ~80 active compounds.

- Baseline Training: Train a Random Forest classifier on the original imbalanced training set. Evaluate on the held-out test set, recording Precision, Recall, and F1-score for the active class.

- SMOTE Augmentation:

- Apply SMOTE to the training set only, balancing the active:inactive ratio to 1:1.

- Train an identical Random Forest classifier on the SMOTE-augmented dataset.

- Evaluate on the same, original held-out test set.

- CTGAN Synthesis:

- Train a CTGAN model (default parameters, 100 epochs) exclusively on the minority class data (n=80) from the training set.

- Generate about 7,840 synthetic active compound fingerprints (the ~7,920 inactives minus the ~80 real actives) to create a 1:1 balanced training set.

- Train the Random Forest classifier on this combined dataset.

- Evaluate on the original held-out test set.

- Statistical Validation: Repeat steps 2-4 with 10 different random seeds. Perform a paired t-test on the F1-scores across runs to determine if improvements from either method are statistically significant (p < 0.05).

Research Reagent Solutions Toolkit

| Item/Category | Function & Relevance to Bias Mitigation |

|---|---|

| Synthetic Minority Oversampling Technique (SMOTE) | Algorithmic reagent to generate interpolated samples for minority classes, directly addressing population imbalance in training data. |

| Conditional Tabular GAN (CTGAN) | Deep learning-based reagent for generating synthetic, realistic tabular data (e.g., patient records, compound features) conditioned on class labels. |

| Wasserstein GAN with Gradient Penalty (WGAN-GP) | A stabilized GAN variant used as a "reagent" to improve the training stability and output quality of synthetic data generators. |

| Frechet Inception Distance (FID) / Classifier Two-Sample Test (C2ST) | Quantitative assay reagents to measure the quality and diversity of generated synthetic data compared to real data. |

| Domain Adaptation Algorithms (e.g., CORAL, DANN) | Reagents to align the feature distributions between source (augmented) and target (real-world) data, mitigating introduced domain shift. |

Workflow & Pathway Visualizations

Title: Comparative Evaluation Workflow for Augmentation Techniques

Title: Risk-Aware Pathway for Bias Mitigation via Augmentation

Technical Support & Troubleshooting Center

Frequently Asked Questions (FAQs)

Q1: During adversarial debiasing, my adversary network collapses, predicting all outputs as a constant regardless of input. What is the cause and how do I fix it?

A1: This is a common failure mode known as "adversary collapse." It typically occurs when the primary predictor becomes too strong too quickly, providing no useful signal to the adversary. To resolve this:

- Implement Gradient Reversal Correctly: Ensure the gradient reversal layer (GRL) is placed between the shared representation and the adversary, not the predictor. Verify the gradient scaling factor (λ) is correctly applied and reversed.

- Adjust Training Dynamics: Use a two-step or alternating training schedule. First, train the predictor for k steps to learn a useful representation. Then, freeze the predictor and train the adversary for 1 step to catch up. Alternate.

- Tune Hyperparameters: Reduce the learning rate of the main predictor or increase the adversary's learning rate. Start with a small adversary weight (λ) and gradually increase it (annealing schedule).

- Strengthen the Adversary: Increase the capacity of the adversary network (more layers/units) to make it more competitive.

Q2: My fair representation learning model successfully reduces demographic parity disparity but causes a significant drop in overall predictive accuracy. Is this expected?

A2: Yes, there is often a trade-off between fairness and accuracy, formalized as the fairness-accuracy Pareto frontier. Your observation is expected, but the drop may be mitigated.

- Diagnose the Trade-off: Plot accuracy vs. your fairness metric (e.g., demographic parity difference) for different adversary weights (λ). This reveals your model's specific frontier.

- Re-evaluate the Fairness Metric: Ensure demographic parity is the correct objective for your application. For drug development, equalized odds might be more appropriate if false positives/false negatives have different clinical implications across groups.

- Inspect Representation: Use t-SNE or PCA to visualize the learned fair representation. Check if it has become too compressed, losing predictive information. Consider increasing the dimensionality of the encoded representation.
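
Once accuracy and the fairness gap have been recorded for each adversary weight, the frontier can be extracted with a simple dominance check. The sweep values below are made up for illustration and the function name is an assumption.

```python
def pareto_front(points):
    """Return labels of non-dominated runs.
    points: list of (label, accuracy, fairness_gap);
    higher accuracy and lower fairness gap are both better."""
    front = []
    for name, acc, gap in points:
        dominated = any(
            (a >= acc and g <= gap) and (a > acc or g < gap)
            for _, a, g in points
        )
        if not dominated:
            front.append(name)
    return front

# Hypothetical sweep over the adversary weight (lambda)
sweep = [
    ("lambda=0", 0.89, 0.18),
    ("lambda=0.1", 0.88, 0.10),
    ("lambda=1", 0.86, 0.05),
    ("lambda=10", 0.80, 0.06),   # worse on both axes than lambda=1
]
frontier = pareto_front(sweep)
```

Runs that fall off the frontier (here the largest weight) can be discarded outright; choosing among the remaining runs is a clinical and regulatory judgment rather than a numerical one.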

Q3: How do I choose between adversarial debiasing and a fair representation learning approach like variational fair autoencoders (VFAE) for my biomedical dataset?

A3: The choice depends on your data structure and fairness goal.

| Criterion | Adversarial Debiasing | Fair Representation Learning (e.g., VFAE) |

|---|---|---|

| Data Type | Best for structured tabular data or learned representations. | Excellent for high-dimensional, complex data (images, sequences). |

| Fairness Objective | Directly optimizes a defined fairness metric (DP, EO). | Often focuses on independence (Z ⊥ S). |

| Interpretability | Lower; the debiasing process is implicit in the gradient battle. | Higher; you can inspect the disentangled latent space. |

| Primary Use Case | When you need a performant predictor with a fairness constraint. | When you need a reusable, fair data representation for multiple downstream tasks. |

| Implementation Complexity | Moderate (requires careful balancing of two networks). | High (requires probabilistic model design & tuning). |

Q4: I am getting NaN losses when implementing adversarial debiasing with PyTorch/TensorFlow. What are the likely culprits?

A4: NaN losses usually stem from exploding gradients or numerical instability.

- Gradient Reversal Layer Implementation: Check your custom GRL code. The forward pass should return the input unchanged, but the backward pass should negate and scale the gradient. A sign error here causes instability.

- Loss Functions: Use stable implementations of cross-entropy or log loss. Add a small epsilon (e.g., 1e-8) to log arguments to prevent log(0).

- Gradient Clipping: Implement gradient clipping (e.g., torch.nn.utils.clip_grad_norm_) for both the predictor and adversary networks to cap exploding gradients.

- Optimizer: Switch from Adam to SGD or lower Adam's beta1/beta2 parameters to reduce the chance of instability from moving variance estimates.
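
To illustrate the loss-function point, the NumPy sketch below contrasts a naive binary cross-entropy with a formulation computed directly from logits. The function names are illustrative; deep learning frameworks ship equivalent "with logits" losses that should be preferred in practice.

```python
import numpy as np

def naive_bce(logits, targets):
    """Sigmoid followed by log: produces NaN once the sigmoid saturates at 0 or 1."""
    logits = np.asarray(logits, dtype=float)
    targets = np.asarray(targets, dtype=float)
    with np.errstate(over="ignore", divide="ignore", invalid="ignore"):
        p = 1.0 / (1.0 + np.exp(-logits))
        loss = -(targets * np.log(p) + (1.0 - targets) * np.log(1.0 - p))
    return float(loss.mean())

def bce_with_logits(logits, targets):
    """Algebraically equivalent form: max(z, 0) - z*y + log(1 + exp(-|z|)).
    The exponent is never positive, so nothing overflows."""
    logits = np.asarray(logits, dtype=float)
    targets = np.asarray(targets, dtype=float)
    loss = (np.maximum(logits, 0.0) - logits * targets
            + np.log1p(np.exp(-np.abs(logits))))
    return float(loss.mean())

extreme_logits = [1000.0, -1000.0]   # confident and correct predictions
extreme_targets = [1.0, 0.0]

naive_value = naive_bce(extreme_logits, extreme_targets)
stable_value = bce_with_logits(extreme_logits, extreme_targets)
```

The naive version fails even though both predictions are correct, because a saturated probability leads to a 0 × log(0) term; this is a frequent source of NaN in adversarial setups where one network becomes very confident.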

Experimental Protocols

Protocol 1: Standard Adversarial Debiasing Experiment

- Objective: Train a classifier where predictions (Ŷ) are independent of a sensitive attribute (S), measured by Demographic Parity.

- Dataset Split: 60/20/20 (Train/Validation/Test). Ensure stratified splits on S and label Y.

- Architecture:

- Shared Encoder (h): 3 fully-connected layers with ReLU activations.

- Predictor (p): 2 layers taking h(X) as input, outputting Ŷ.

- Adversary (a): 2 layers taking GRL(h(X)) as input, outputting Ŝ.

- Training:

- Loss Functions:

- Predictor Loss: L_p = CrossEntropy(Ŷ, Y)

- Adversary Loss: L_a = CrossEntropy(Ŝ, S)

- Combined Loss: L = L_p - λ * L_a (note the negative sign on the adversarial term). This explicit-sign form assumes the adversary's gradient reaches the encoder unmodified; if the GRL already negates that gradient, sum the two losses instead so the reversal is not applied twice.

- Procedure: Use alternating optimization. In each epoch:

- Step 1: Update predictor parameters (θ_h, θ_p) to minimize L.

- Step 2: Update adversary parameters (θ_a) to minimize L_a.

- Hyperparameter Tuning: Sweep λ ∈ {0.1, 1.0, 10.0, 100.0}. Use validation set to select the model with the best fairness-accuracy trade-off.

Protocol 2: Variational Fair Autoencoder (VFAE) for Fair Representation

- Objective: Learn a latent representation Z independent of S, useful for downstream prediction tasks.

- Architecture:

- Encoder (q): Inference network mapping X, S to parameters of Gaussian posterior q(Z|X, S).

- Decoder (p): Generative network reconstructing X from Z.

- Prior: Standard Gaussian p(Z) = N(0,I).

- Maximum Mean Discrepancy (MMD): Penalty applied to match moments of q(Z|S=0) and q(Z|S=1).

- Training:

- Loss Function (ELBO with MMD penalty): L_vfae = E[log p(X|Z)] - β * KL(q(Z|X,S) || p(Z)) - α * MMD(q(Z|S=0), q(Z|S=1)). As written this is an objective to maximize; in practice, minimize its negative.

- Procedure: Train end-to-end via stochastic gradient descent with reparameterization trick.

- Tuning: Sweep α (MMD weight) to control fairness-independence vs. reconstruction quality. A higher α forces greater independence.
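
The MMD penalty used in this protocol can be sketched in NumPy with a Gaussian RBF kernel. This is the biased (V-statistic) estimator on simulated latent codes; the kernel bandwidth `gamma`, the sample sizes, and the function names are illustrative assumptions, and a trainable version would be written in the same framework as the autoencoder.

```python
import numpy as np

def rbf_kernel(A, B, gamma):
    """Gaussian RBF kernel matrix exp(-gamma * ||a - b||^2)."""
    sq_dist = ((A ** 2).sum(axis=1)[:, None]
               + (B ** 2).sum(axis=1)[None, :]
               - 2.0 * A @ B.T)
    return np.exp(-gamma * np.maximum(sq_dist, 0.0))

def mmd2_rbf(Z0, Z1, gamma=0.5):
    """Biased estimate of squared MMD between two sets of latent codes."""
    return float(rbf_kernel(Z0, Z0, gamma).mean()
                 + rbf_kernel(Z1, Z1, gamma).mean()
                 - 2.0 * rbf_kernel(Z0, Z1, gamma).mean())

rng = np.random.default_rng(0)
z_group0 = rng.normal(size=(100, 2))     # simulated latents for S=0
z_group1 = rng.normal(size=(100, 2))     # simulated latents for S=1, same distribution
z_group1_shifted = z_group1 + 2.0        # simulated latents that still encode S

mmd_matched = mmd2_rbf(z_group0, z_group1)
mmd_shifted = mmd2_rbf(z_group0, z_group1_shifted)
mmd_identical = mmd2_rbf(z_group0, z_group0)
```

A latent space that still separates the two groups yields a clearly larger value than one where the group distributions overlap, which is the signal the α-weighted penalty acts on.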

Mandatory Visualizations

Adversarial Debiasing Training Workflow

Variational Fair Autoencoder (VFAE) Architecture

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Experiment | Example / Specification |

|---|---|---|

| Gradient Reversal Layer (GRL) | A "pseudo-function" that acts as the identity in the forward pass but negates and scales the gradient during backpropagation. Enables adversarial training. | Custom layer in PyTorch/TensorFlow. Key parameter: lambda (scaling factor). |

| Maximum Mean Discrepancy (MMD) | A kernel-based statistical test used to measure the distance between two probability distributions. Used as a loss to enforce similarity of latent distributions across groups. | Use a Gaussian RBF kernel. Implementations in torch_two_sample or Alibi. |

| Variational Autoencoder (VAE) Framework | Provides the scaffolding for probabilistic encoder-decoder models, necessary for implementing VFAE and similar fair representation methods. | Libraries: Pyro (PyTorch), TensorFlow Probability, or custom implementations. |

| Fairness Metric Libraries | Pre-built functions to calculate key fairness metrics (Demographic Parity, Equalized Odds, etc.) on model outputs, essential for evaluation. | AI Fairness 360 (IBM), Fairlearn (Microsoft), scikit-lego. |

| Sensitive Attribute Encoder | A method to incorporate sensitive attribute S into the model input or loss, often via one-hot encoding or embedding, without allowing direct leakage. | Standard one-hot encoding for categorical S. For VFAE, S is an input to the encoder. |

Technical Support Center: Troubleshooting Guides & FAQs

This support center addresses common technical challenges in bias-aware omics and screening pipelines, framed within the thesis context: Addressing training data bias in machine learning optimization research.

Frequently Asked Questions (FAQs)

Q1: During bulk RNA-seq analysis, my ML model for patient stratification shows high performance on the training cohort but fails on external validation. What bias could be at play? A1: This is a classic sign of batch effect or cohort composition bias. The training data likely contains technical (sequencing platform, lab protocol) or biological (age, ethnicity, sample collection site) artifacts that the model learned as predictive. Solution: Implement rigorous batch correction (e.g., ComBat, Harmony, or SVA) before model training. To assess generalization, split data into training/validation sets by batch or cohort rather than randomly.

Q2: In high-throughput compound screening, hit rates differ drastically between plates, confounding the identification of true bioactive compounds. How do I mitigate this? A2: This indicates positional or plate-level bias, often from edge effects or liquid handling inconsistencies. Solution:

- Use control compounds (positive/negative) distributed across all plates.

- Apply plate-level normalization (e.g., Z-score or B-score normalization) to remove row/column effects.

- For ML analysis, include plate ID and well position as potential confounder variables in the model to debias the feature set.
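
A minimal NumPy sketch of B-score normalization, which removes additive row and column effects by median polish and scales the residuals by their median absolute deviation. The plate is simulated (16 x 24 wells with a positional gradient and one planted hit), and the function names and iteration count are illustrative assumptions.

```python
import numpy as np

def median_polish_residuals(plate, n_iter=10):
    """Iteratively subtract row and column medians, leaving positional-effect-free residuals."""
    resid = np.asarray(plate, dtype=float).copy()
    for _ in range(n_iter):
        resid -= np.median(resid, axis=1, keepdims=True)   # row effects
        resid -= np.median(resid, axis=0, keepdims=True)   # column effects
    return resid

def b_score(plate):
    """Median-polish residuals divided by their scaled median absolute deviation."""
    resid = median_polish_residuals(plate)
    mad = np.median(np.abs(resid - np.median(resid)))
    return resid / (1.4826 * mad)

rng = np.random.default_rng(0)
row_effect = np.linspace(0.0, 2.0, 16)[:, None]    # simulated gradient down the rows
col_effect = np.linspace(0.0, 3.0, 24)[None, :]    # simulated gradient across the columns
plate = row_effect + col_effect + rng.normal(scale=0.1, size=(16, 24))
plate[5, 7] += 2.0                                  # one planted true hit

scores = b_score(plate)
raw_top = tuple(int(i) for i in np.unravel_index(np.argmax(plate), plate.shape))
bscore_top = tuple(int(i) for i in np.unravel_index(np.argmax(scores), scores.shape))
```

On the raw readout the strongest well sits in the corner where both gradients peak; after B-scoring the planted hit ranks first, which is the positional confounding the normalization is meant to remove.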

Q3: When integrating multi-omics datasets (e.g., proteomics + transcriptomics) from public repositories for ML, how do I handle missing data without introducing bias? A3: Naive imputation (e.g., mean-filling) can create artificial signals. Solution: Use bias-aware imputation:

- For Missing Completely at Random (MCAR) data, use k-NN or matrix factorization methods.

- For Missing Not at Random (MNAR) data (common in proteomics), employ methods like left-censored imputation or incorporate detection probability models.

- Always document the imputation method and percentage of missing values per feature, as this is a source of potential bias.
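As a rough illustration of the MNAR advice in A3, the sketch below fills missing intensities with a fraction of each feature's minimum observed value, one simple form of left-censored imputation, and reports the percentage missing per feature. The function name, the 0.5 fraction, and the toy matrix are assumptions made for this example; it presumes positive intensities with None marking missing values.

```python
def impute_left_censored(matrix, fraction=0.5):
    """Fill None entries in each feature column with fraction * column minimum.

    Returns (imputed_matrix, pct_missing_per_feature). The input is not modified.
    """
    n_rows = len(matrix)
    n_cols = len(matrix[0])
    imputed = [list(row) for row in matrix]
    pct_missing = []
    for j in range(n_cols):
        observed = [row[j] for row in matrix if row[j] is not None]
        pct_missing.append(100.0 * (n_rows - len(observed)) / n_rows)
        if not observed:
            continue  # no observed value to anchor on; leave the column as is
        fill = fraction * min(observed)
        for row in imputed:
            if row[j] is None:
                row[j] = fill
    return imputed, pct_missing

# Toy data: rows are samples, columns are two protein features.
intensities = [[10.0, None], [4.0, 8.0], [None, 6.0], [6.0, None]]
filled, pct = impute_left_censored(intensities)
print(filled)
print(pct)
```

Returning the missingness percentages alongside the imputed values makes it easy to record them, as the last bullet recommends.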

Q4: My deep learning model trained on TCGA data performs poorly on data from younger patient cohorts. What's the issue? A4: This is population or sampling bias. TCGA data has known under-representation of certain demographic groups. Solution: Apply algorithmic fairness techniques during model optimization:

- Pre-processing: Re-sample the training data to better match the target population's distribution.

- In-processing: Use adversarial debiasing where the model learns features predictive of the outcome but not the protected attribute (e.g., age group).

- Post-processing: Calibrate decision thresholds per subgroup.
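The pre-processing option above can be approximated with sample weights instead of physically re-sampling rows. The sketch below is one possible formulation under stated assumptions: the function name, the group labels, and the 50/50 target mix are hypothetical.

```python
from collections import Counter

def reweight_to_target(groups, target_props):
    """Per-sample weights so the weighted group mix matches target_props."""
    counts = Counter(groups)
    n = len(groups)
    # weight = (share wanted in the target population) / (share seen in training)
    return [target_props[g] / (counts[g] / n) for g in groups]

# Hypothetical training cohort: 80% older, 20% younger patients.
train_groups = ["older"] * 8 + ["younger"] * 2
weights = reweight_to_target(train_groups, {"older": 0.5, "younger": 0.5})
younger_share = sum(w for w, g in zip(weights, train_groups) if g == "younger") / sum(weights)
print(round(younger_share, 3))
```

Weights like these can be handed to any estimator that accepts per-sample weights during fitting. They change the emphasis of the loss, not the information content, so a subgroup with very few samples remains poorly characterized.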

Key Experimental Protocols for Bias Mitigation

Protocol 1: Bias-Aware Preprocessing for Transcriptomic Data

- Quality Control & Trimming: Use FastQC and Trimmomatic. Discard samples with >30% low-quality bases.

- Alignment & Quantification: Align to reference genome with STAR. Quantify reads per gene using featureCounts.

- Batch Effect Diagnosis: Perform PCA on normalized counts. Color samples by batch (e.g., sequencing run). If batches cluster separately, proceed to correction.

- Bias Correction: Apply the removeBatchEffect function from limma (for known batches) or run Harmony integration (for more complex batch structure where batch labels are still known). When the sources of unwanted variation are unknown, estimate them with SVA.

- Validation: Post-correction, re-run PCA. Batch clusters should be mixed. Train ML models on corrected data.
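To make the idea behind the correction step concrete, here is a deliberately simplified stand-in: for a single feature, each batch is shifted so that its mean matches the overall mean. This is not limma's or Harmony's implementation, only a location adjustment written for illustration, and the function name and values are hypothetical.

```python
from collections import defaultdict
from statistics import mean

def center_batches(values, batches):
    """Shift each batch so its mean equals the overall mean (single feature)."""
    overall = mean(values)
    by_batch = defaultdict(list)
    for v, b in zip(values, batches):
        by_batch[b].append(v)
    batch_mean = {b: mean(vs) for b, vs in by_batch.items()}
    return [v - batch_mean[b] + overall for v, b in zip(values, batches)]

# Hypothetical expression values for one gene measured in two sequencing runs.
expr = [1.0, 2.0, 3.0, 11.0, 12.0, 13.0]
runs = ["a", "a", "a", "b", "b", "b"]
print(center_batches(expr, runs))
```

If batch is confounded with biology (for example, all cases sequenced in one run), this kind of adjustment removes real signal along with the technical offset, which is why the PCA check before and after correction matters.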

Protocol 2: Normalization for High-Throughput Screening (HTS) Data

- Plate Layout: Include 32 negative controls (e.g., DMSO) and 16 positive controls distributed across each 384-well plate.

- Raw Data Acquisition: Measure raw fluorescence or absorbance.

- Background Subtraction: Subtract the median signal of negative controls on a per-plate basis.

- Normalization:

- For % Inhibition: Normalized_Value = (Median_Negative - Raw) / (Median_Negative - Median_Positive) * 100. Assuming the positive control gives full inhibition, wells at the negative-control median score 0% and wells at the positive-control median score 100%.

- For Z-score: Z = (Raw - Plate_Median) / Plate_MAD.

- For B-score: Fit a two-way robust median polish to remove row and column effects.

- Hit Calling: Define hits as compounds with |Z| > 3 (more than 3 MADs from the plate median, consistent with the Z-score definition above) or >50% inhibition.
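The three normalizations in Protocol 2 can be sketched with the standard library alone. The code assumes that the positive control represents full inhibition (0% at the negative-control median, 100% at the positive-control median), uses the unscaled MAD, and represents a plate as a list of rows. All function names are illustrative.

```python
from statistics import median

def percent_inhibition(raw, neg_median, pos_median):
    """0% at the negative-control median, 100% at the positive-control median."""
    return (neg_median - raw) / (neg_median - pos_median) * 100.0

def robust_z(values):
    """Z = (x - plate median) / plate MAD, with the MAD left unscaled."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    return [(v - med) / mad for v in values]

def median_polish_residuals(plate, n_iter=10):
    """Two-way median polish: repeatedly subtract row medians, then column medians."""
    resid = [list(row) for row in plate]
    for _ in range(n_iter):
        for row in resid:
            m = median(row)
            for j in range(len(row)):
                row[j] -= m
        for j in range(len(resid[0])):
            m = median(row[j] for row in resid)
            for row in resid:
                row[j] -= m
    return resid

def b_score(plate, n_iter=10):
    """Median-polish residuals divided by the MAD of all residuals on the plate."""
    resid = median_polish_residuals(plate, n_iter)
    flat = [v for row in resid for v in row]
    med = median(flat)
    mad = median(abs(v - med) for v in flat)
    if mad == 0:
        raise ValueError("MAD of residuals is zero; B-score is undefined")
    return [[v / mad for v in row] for row in resid]

# Toy 3x3 plate with additive row and column offsets plus one strong well.
plate = [[0, 1, 2], [10, 61, 12], [20, 21, 22]]
print(median_polish_residuals(plate))
```

On the toy plate the polish strips the row and column offsets and leaves only the strong well with a nonzero residual, which is the behavior that makes B-scores less sensitive to edge and gradient effects than plate-wide Z-scores.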

Summarized Quantitative Data

Table 1: Impact of Batch Correction on ML Model Generalizability

| Correction Method | Internal AUC (95% CI) | External Validation AUC | Residual Batch Association (p-value) |

|---|---|---|---|

| None (Raw Data) | 0.98 (0.96-0.99) | 0.61 | 1.2e-10 |

| ComBat | 0.95 (0.92-0.97) | 0.83 | 0.32 |

| Harmony | 0.94 (0.91-0.96) | 0.85 | 0.45 |

Table 2: Effect of Normalization on HTS False Discovery Rate (FDR)

| Normalization Method | Initial Hit Count | Confirmed Hits (Secondary Assay) | FDR |

|---|---|---|---|

| Raw Intensity | 450 | 45 | 90.0% |

| Plate Mean & SD (Z-score) | 210 | 63 | 70.0% |

| B-score (Row/Column) | 185 | 111 | 40.0% |
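The FDR column in Table 2 follows directly from the two count columns, taking FDR as the fraction of primary-screen hits that were not confirmed in the secondary assay. A short check of that arithmetic (the function name is illustrative):

```python
def false_discovery_rate(initial_hits, confirmed_hits):
    """Fraction of primary-screen hits that failed confirmation."""
    return (initial_hits - confirmed_hits) / initial_hits

# Counts copied from Table 2.
table_2 = [
    ("Raw Intensity", 450, 45),
    ("Plate Mean & SD (Z-score)", 210, 63),
    ("B-score (Row/Column)", 185, 111),
]
for method, initial, confirmed in table_2:
    print(f"{method}: {false_discovery_rate(initial, confirmed):.1%}")
# Raw Intensity: 90.0%
# Plate Mean & SD (Z-score): 70.0%
# B-score (Row/Column): 40.0%
```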

Visualizations

Bias Mitigation & ML Training Workflow

HTS Plate Bias & Correction Logic

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Bias Mitigation |

|---|---|

| UMI (Unique Molecular Identifier) Adapters | During RNA/DNA library prep, UMIs tag each original molecule, allowing bioinformatic correction for PCR amplification bias, ensuring quantitative accuracy. |

| Spike-in Controls (e.g., ERCC RNA) | Known quantities of exogenous RNA/DNA added to samples pre-processing. Used to normalize for technical variation and detect batch effects in sequencing efficiency. |

| Control Compounds (Agonist/Inhibitor/DMSO) | Essential in HTS to map systematic plate bias (positional effects) and to define the dynamic range for normalizing compound response data. |

| Reference Standard Cell Lines (e.g., MAQC/SEQC) | Genomically characterized cell lines used across labs and experiments to benchmark platform performance and align data, mitigating inter-study bias. |

| Polystyrene Bead Sets (for Cytometry) | Beads with known fluorescence intensity used to calibrate flow cytometers daily, preventing instrumental drift from biasing cell population quantification. |

| DNA Methylation Control Standards | Fully methylated and unmethylated DNA samples used as standards in bisulfite sequencing to calibrate conversion efficiency and prevent coverage bias. |

Diagnosing and Correcting Bias: A Troubleshooting Guide for Underperforming ML Models

Technical Support Center

Issue: Suspected Demographic Bias in Predictive Model Performance

User Query: "My model for predicting clinical trial enrollment likelihood shows high overall accuracy but when I check performance by race subgroup, the false positive rate is significantly higher for one group. What steps should I take to investigate this bias?"

Troubleshooting Guide & FAQ

Q1: What are the primary quantitative red flags for bias in model performance? A1: Significant disparities in key performance metrics across protected subgroups (e.g., race, sex, age) are the primary red flags. Investigate if these disparities exceed your predefined fairness thresholds.

Table 1: Key Performance Metrics to Stratify and Compare

| Metric | Formula | Red Flag Threshold (Example) |

|---|---|---|

| False Positive Rate (FPR) | FP / (FP + TN) | Difference > 0.1 between subgroups |

| False Negative Rate (FNR) | FN / (FN + TP) | Difference > 0.1 between subgroups |

| Positive Predictive Value (PPV) | TP / (TP + FP) | Ratio < 0.8 between subgroups |

| Recall (Sensitivity) | TP / (TP + FN) | Difference > 0.15 between subgroups |

| Area Under the ROC Curve (AUC) | Area under ROC plot | Difference > 0.05 between subgroups |

Q2: How do I properly stratify my evaluation to detect such bias? A2: Implement a rigorous subgroup analysis protocol.

- Pre-define Subgroups: Identify legally protected attributes (e.g., self-reported race, ethnic category, biological sex) and medically relevant attributes (e.g., genetic ancestry, disease subtype) before looking at any results.

- Calculate Metrics Per Subgroup: Compute all performance metrics from Table 1 separately for each subgroup.

- Statistical Testing: Use statistical tests (e.g., bootstrap confidence intervals, permutation tests) to determine if observed disparities are significant and not due to random chance. A p-value < 0.05 typically indicates significance.
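A minimal sketch of the per-subgroup calculation, using binary labels and predictions held in plain lists. The function names and toy data are hypothetical; the 0.1 cut-off is the example threshold for FPR from Table 1.

```python
def _ratio(num, den):
    """Safe division; an undefined rate is reported as NaN."""
    return num / den if den else float("nan")

def subgroup_metrics(y_true, y_pred, groups):
    """Confusion-matrix metrics computed separately for each subgroup."""
    results = {}
    for g in sorted(set(groups)):
        pairs = [(t, p) for t, p, gg in zip(y_true, y_pred, groups) if gg == g]
        tp = sum(1 for t, p in pairs if t == 1 and p == 1)
        fp = sum(1 for t, p in pairs if t == 0 and p == 1)
        tn = sum(1 for t, p in pairs if t == 0 and p == 0)
        fn = sum(1 for t, p in pairs if t == 1 and p == 0)
        results[g] = {
            "FPR": _ratio(fp, fp + tn),
            "FNR": _ratio(fn, fn + tp),
            "PPV": _ratio(tp, tp + fp),
            "Recall": _ratio(tp, tp + fn),
        }
    return results

def max_min_difference(results, metric):
    """Largest minus smallest value of one metric across subgroups."""
    values = [m[metric] for m in results.values()]
    return max(values) - min(values)

# Toy test set with two subgroups of six patients each.
y_true = [0, 0, 0, 0, 1, 1] + [0, 0, 0, 0, 1, 1]
y_pred = [0, 0, 0, 1, 1, 1] + [1, 1, 0, 0, 1, 0]
groups = ["A"] * 6 + ["B"] * 6
metrics = subgroup_metrics(y_true, y_pred, groups)
fpr_gap = max_min_difference(metrics, "FPR")
print(metrics)
print("FPR gap:", fpr_gap, "red flag" if fpr_gap > 0.1 else "ok")
```

With subgroups this small the point estimates are very noisy, which is why the statistical testing step should accompany any threshold comparison.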

Q3: Beyond performance metrics, how can I detect bias in the predictions themselves? A3: Examine the distribution of prediction scores (e.g., probabilities) across subgroups.

- Calibration Check: A model is well-calibrated if its predicted probabilities match observed frequencies: for example, among patients assigned a risk score of 0.7, about 70% truly have the outcome. This should hold within every subgroup, not only overall. Use calibration plots per subgroup.

- Score Distribution Analysis: Plot density histograms of prediction scores for each subgroup. Look for systematic shifts in the distributions.

Table 2: Analysis of Prediction Score Distributions

| Subgroup | Mean Prediction Score | Score Variance | Calibration Error (ECE) |

|---|---|---|---|

| Subgroup A | 0.45 | 0.12 | 0.02 |

| Subgroup B | 0.62 | 0.09 | 0.15 |

| Disparity | 0.17 | 0.03 | 0.13 |
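The calibration error column in Table 2 can be computed per subgroup with a binned estimate. The sketch below uses ten equal-width bins, which is one common convention and an assumption here; the function names and toy inputs are illustrative.

```python
def expected_calibration_error(y_true, y_prob, n_bins=10):
    """Weighted average of |observed positive rate - mean predicted score| over bins."""
    bins = [[] for _ in range(n_bins)]
    for t, p in zip(y_true, y_prob):
        k = min(int(p * n_bins), n_bins - 1)  # a score of 1.0 falls in the last bin
        bins[k].append((t, p))
    n = len(y_true)
    ece = 0.0
    for b in bins:
        if not b:
            continue
        mean_score = sum(p for _, p in b) / len(b)
        positive_rate = sum(t for t, _ in b) / len(b)
        ece += len(b) / n * abs(positive_rate - mean_score)
    return ece

def ece_by_group(y_true, y_prob, groups, n_bins=10):
    """ECE computed separately within each subgroup."""
    out = {}
    for g in sorted(set(groups)):
        yt = [t for t, gg in zip(y_true, groups) if gg == g]
        yp = [p for p, gg in zip(y_prob, groups) if gg == g]
        out[g] = expected_calibration_error(yt, yp, n_bins)
    return out

# Toy example: both groups receive a score of 0.7, but outcomes differ.
y_true = [1] * 7 + [0] * 3 + [1] * 2 + [0] * 8
y_prob = [0.7] * 20
groups = ["A"] * 10 + ["B"] * 10
print(ece_by_group(y_true, y_prob, groups))
```

In the toy data the score of 0.7 is accurate for group A and far too high for group B, the same pattern as a subgroup with a higher mean score and a larger calibration error.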

Experimental Protocol: Subgroup Performance Disparity Assessment

Objective: To empirically measure and test for significant performance disparities across demographic subgroups.

Materials: Held-out test set with ground truth labels and protected attributes; trained model.

Procedure:

- Generate predictions for the entire test set.

- Partition the test set D into subsets D_g for each subgroup g in G (e.g., G = {Race1, Race2}).

- For each subset D_g, compute the confusion matrix and derive all metrics in Table 1.

- For each metric M, compute the disparity ΔM = max_{g in G}(M_g) - min_{g in G}(M_g).

- Null Hypothesis Significance Testing (NHST):

a. Define test statistic T as the observed disparity ΔM_obs.

b. For i = 1 to N iterations (e.g., N = 1000), permute the protected attribute labels in the test set, breaking any link between subgroup and outcome.

c. Recompute ΔM_i for each permuted dataset.

d. The p-value is (count(ΔM_i >= ΔM_obs) + 1) / (N + 1).

e. A p-value < 0.05 rejects the null hypothesis that the disparity is due to chance.

- Report all per-subgroup metrics, disparities, and p-values.
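The permutation procedure can be sketched for a single metric, here the false positive rate. The function names, the fixed seed, and the handling of subgroups with no actual negatives (they are skipped) are choices made for this illustration.

```python
import random

def false_positive_rate(y_true, y_pred):
    """FP / (FP + TN); returns None when the subset has no actual negatives."""
    negatives = [p for t, p in zip(y_true, y_pred) if t == 0]
    if not negatives:
        return None
    return sum(negatives) / len(negatives)

def fpr_disparity(y_true, y_pred, groups):
    """max_g(FPR_g) - min_g(FPR_g) over subgroups with a defined FPR."""
    rates = []
    for g in set(groups):
        yt = [t for t, gg in zip(y_true, groups) if gg == g]
        yp = [p for p, gg in zip(y_pred, groups) if gg == g]
        rate = false_positive_rate(yt, yp)
        if rate is not None:
            rates.append(rate)
    return max(rates) - min(rates) if len(rates) > 1 else 0.0

def permutation_p_value(y_true, y_pred, groups, n_iter=1000, seed=0):
    """Return (observed disparity, p-value) from shuffling the group labels."""
    rng = random.Random(seed)
    observed = fpr_disparity(y_true, y_pred, groups)
    shuffled = list(groups)
    at_least_as_large = 0
    for _ in range(n_iter):
        rng.shuffle(shuffled)  # breaks any link between subgroup and outcome
        if fpr_disparity(y_true, y_pred, shuffled) >= observed:
            at_least_as_large += 1
    return observed, (at_least_as_large + 1) / (n_iter + 1)

# Toy test set: 100 true negatives, flagged only in group B.
y_true = [0] * 100
y_pred = [0] * 50 + [1] * 50
groups = ["A"] * 50 + ["B"] * 50
print(permutation_p_value(y_true, y_pred, groups, n_iter=200))
```

Adding 1 to both the count and the denominator keeps the p-value strictly above zero, so the smallest reportable value is 1 / (N + 1). When several metrics are tested, a multiple-comparison correction is worth considering.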

Title: Workflow for Statistical Detection of Model Bias

The Scientist's Toolkit: Research Reagent Solutions for Bias Audits

Table 3: Essential Software & Libraries for Bias Detection

| Tool / Library | Primary Function | Application in Bias Detection |

|---|---|---|

| AI Fairness 360 (AIF360) | Open-source toolkit for fairness metrics and algorithms. | Calculate 70+ fairness metrics, run bias mitigation algorithms. |

| Fairlearn | Python package for assessing and improving fairness. | Compute disparity metrics, create visual dashboards for assessment. |

| SHAP (SHapley Additive exPlanations) | Game theory-based model explanation. | Identify feature contributions to predictions per subgroup to locate bias source. |

| Scikit-learn | Core machine learning library. | Stratified sampling, performance metric calculation, permutation testing. |

| Matplotlib / Seaborn | Data visualization libraries. | Create calibration plots, score distribution histograms, disparity bar charts. |

Title: Bias Detection Loop within ML Optimization Research

Technical Support Center

Troubleshooting Guides & FAQs

Q1: Why does the AI Fairness 360 (AIF360) toolkit's mitigation algorithm fail to run on my dataset, returning "ValueError: Could not find a non-trivial projection"?

A: This error typically occurs when the DisparateImpactRemover algorithm cannot compute a repair transformation. Follow this protocol:

- Check Dataset Scale: Ensure your dataset has more than 50 samples per protected group category. The algorithm requires sufficient data for reliable statistical estimation.

- Verify Protected Attribute: Confirm the protected attribute (e.g., race, gender) is encoded as a binary (0/1) or categorical integer field. One-hot encoded vectors will cause failure.

- Preprocessing Step: Before mitigation, run the following sanity check code:
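The original snippet for this step is not reproduced here. As a stand-in, the hedged sketch below checks the two conditions listed above (group size and encoding) on a plain Python list of protected-attribute values. It does not call AIF360, and the function name and messages are hypothetical.

```python
from collections import Counter

def check_protected_attribute(values, min_exclusive=50):
    """Return a list of problems found; an empty list means the checks passed.

    Assumes plain Python values, one entry per sample.
    """
    if any(isinstance(v, (list, tuple)) for v in values):
        return ["attribute looks one-hot encoded; collapse it to one integer code per sample"]
    problems = []
    if any(isinstance(v, bool) or not isinstance(v, int) for v in values):
        problems.append("attribute should be integer-coded (e.g., 0/1)")
    counts = Counter(values)
    if len(counts) < 2:
        problems.append("only one group is present")
    for level, n in sorted(counts.items(), key=lambda kv: str(kv[0])):
        if n <= min_exclusive:
            problems.append(f"group {level!r} has {n} samples; more than {min_exclusive} are needed")
    return problems

# Hypothetical protected attribute: 60 samples coded 0, 10 samples coded 1.
print(check_protected_attribute([0] * 60 + [1] * 10))
```

Running the check before any mitigation step turns a cryptic failure inside the toolkit into an explicit message about which group or encoding needs attention.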

Q2: When using Fairlearn's GridSearch with a RandomForestClassifier, the optimization runs indefinitely. How do I fix this?

A: This is often due to an excessively large search space. Implement the following constrained experimental protocol:

- Limit Hyperparameter Grid: Use the provided configuration.

- Enable Early Stopping: Wrap your estimator to use warm_start.

- Set a Maximum Grid Size: Fairlearn evaluates all combinations. The number of constraints (constraints) multiplied by the hyperparameter combinations (param_grid) must be kept below 100 for reasonable runtime. Use this calculation:
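The original calculation is not reproduced here. A minimal sketch of the budget check described above multiplies the number of hyperparameter combinations by the number of constraint settings and compares the product with the limit of 100 from the text. The function name, the example grid, and the way these counts map onto Fairlearn's arguments are assumptions.

```python
from math import prod

def total_model_fits(param_grid, n_constraint_settings):
    """Hyperparameter combinations multiplied by the number of constraint settings."""
    n_combinations = prod(len(options) for options in param_grid.values())
    return n_combinations * n_constraint_settings

# Hypothetical RandomForestClassifier grid: 2 x 3 = 6 combinations.
param_grid = {"n_estimators": [50, 100], "max_depth": [3, 5, None]}
fits = total_model_fits(param_grid, n_constraint_settings=10)
print(fits, "within budget" if fits < 100 else "too large; shrink the grid")
```

Because the total grows multiplicatively, removing one option from a single hyperparameter usually saves more runtime than any other change.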

Q3: How do I interpret a "0.0" fairness metric score from the Fairness Indicators TensorFlow widget? Does it indicate perfect fairness?

A: No, a score of 0.0 does not inherently indicate perfect fairness. It indicates no measured disparity given your current setup. Follow this diagnostic protocol:

- Check Metric Type: Identify if the metric is a difference or ratio.

- For difference metrics (e.g., Equal Opportunity Difference, Demographic Parity Difference), 0.0 means the performance (like true positive rate) is identical across groups.

- For ratio metrics (e.g., Equal Opportunity Ratio, Demographic Parity Ratio), 1.0 means perfect parity, while 0.0 indicates one group has a rate of zero.

- Verify Slicing: A 0.0 difference can occur if the evaluation slices (subpopulations) were not correctly configured. Re-run evaluation ensuring your protected feature is included in the slicing_features list.

- Examine Base Rates: Use the widget's visualization to check if any subgroup has an extremely small sample size (<50), which can lead to unreliable metric calculation.

Q4: The Aequitas audit toolkit reports a high "False Positive Rate Disparity" for my model. What are the immediate next steps to diagnose the source of this bias?

A: A high FPR disparity means one group is disproportionately incorrectly flagged as positive. Execute this root-cause analysis protocol:

- Isolate the Error: Use the Aequitas Group() function to generate the following table for your protected attribute.

- Examine Feature Distributions: For the group with high FPR, use SHAP or partial dependence plots to analyze if a specific feature value is causing the false alarms.
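Before reaching for SHAP or partial dependence plots, a quick first pass is to break the flagged group's false positive rate down by the values of one candidate feature. The sketch below does this for a categorical feature. It is a simpler stand-in for the attribution methods named above, and the function name, feature, and data are hypothetical.

```python
from collections import defaultdict

def fpr_by_feature_value(y_true, y_pred, groups, feature, target_group):
    """FPR within target_group, split by the value of one categorical feature."""
    counts = defaultdict(lambda: [0, 0])  # value -> [false positives, actual negatives]
    for t, p, g, f in zip(y_true, y_pred, groups, feature):
        if g == target_group and t == 0:
            counts[f][1] += 1
            if p == 1:
                counts[f][0] += 1
    return {value: fp / neg for value, (fp, neg) in counts.items()}

# Toy data: eight true negatives in group B, recruited at two sites.
y_true = [0] * 8
y_pred = [1, 1, 1, 0, 0, 0, 0, 0]
groups = ["B"] * 8
site = ["siteX"] * 4 + ["siteY"] * 4
print(fpr_by_feature_value(y_true, y_pred, groups, site, target_group="B"))
```

A feature value whose FPR is far above the rest of the group, like the first site in the toy data, is a natural candidate for closer inspection with the attribution tools mentioned above.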