Benchmarking Global Optimization Algorithms: Performance Comparisons for Scientific and Drug Discovery Research



Abstract

This article provides a comprehensive, comparative analysis of global optimization algorithms, tailored for researchers and professionals in drug development and life sciences. It explores the foundational principles of established and novel metaheuristics, examines their methodological advancements and real-world applications in biomedical research, addresses common challenges like premature convergence, and presents rigorous validation based on recent benchmark competitions and statistical testing. The synthesis aims to guide the selection and application of efficient optimizers for complex problems, from molecular design to clinical trial optimization.

The Landscape of Global Optimization: Core Algorithms and Benchmarking Principles

Defining Global Optimization in Scientific and Industrial Contexts

Global optimization algorithms aim to find the best solution over the entire search space (the global optimum) rather than settling for a locally optimal one. They are particularly valuable in scientific and industrial fields where problems are often nonlinear, high-dimensional, and riddled with local optima that can trap conventional optimization methods. The fundamental challenge is to explore vast search spaces efficiently while exploiting promising regions effectively, in order to locate the global optimum: the best possible value of the objective function that satisfies all constraints. Metaheuristic algorithms, a widely used class of global optimizers, have gained significant popularity because they do not rely on gradient information and can handle problems whose objective function is non-differentiable or poorly understood.

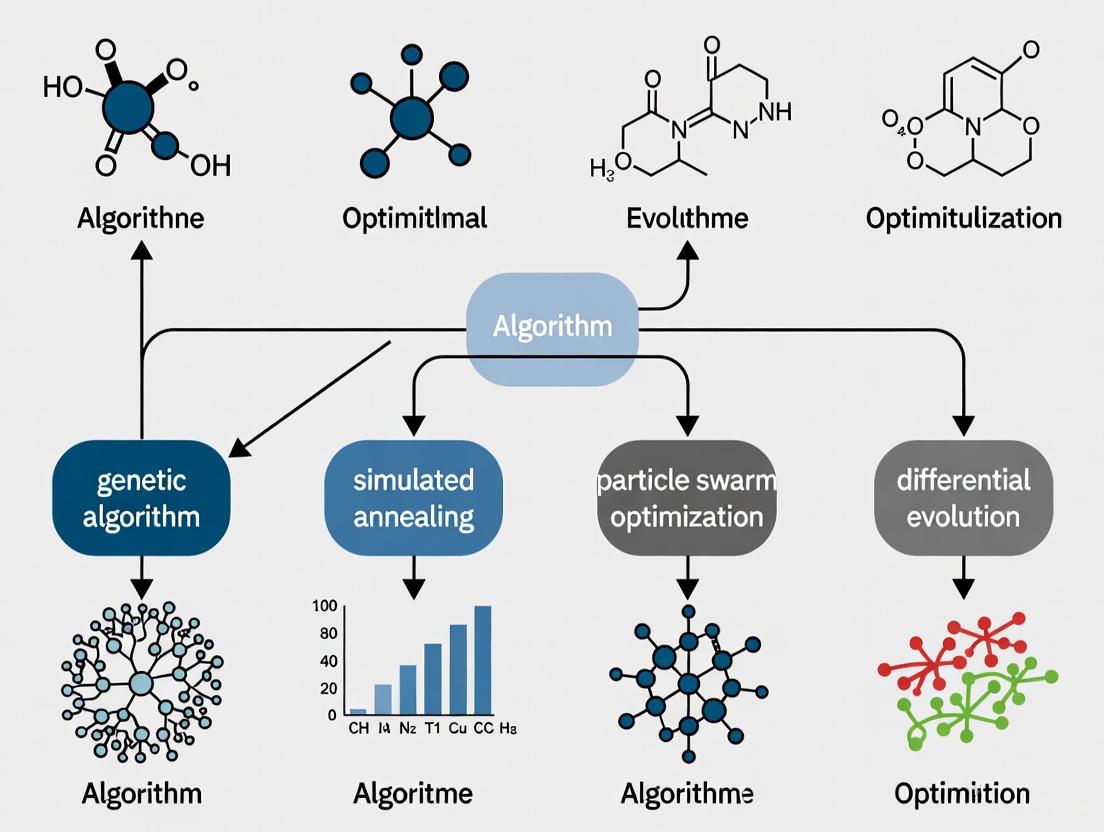

The landscape of metaheuristic optimization is diverse, with algorithms inspired by various natural phenomena, biological processes, physical laws, and social interactions. These can be broadly categorized into evolutionary algorithms, swarm intelligence methods, human-based algorithms, physics-based algorithms, and mathematics-based algorithms. Population-based metaheuristics work with a group of solutions, generating new candidates in each iteration and incorporating them through selection mechanisms. This approach contrasts with single-solution methods (trajectory methods) that start from an initial solution and iteratively refine it, creating a single search path through the solution space. The iterative process continues until predefined stopping conditions are satisfied, typically when computational budgets are exhausted or target solution quality is achieved.

Comparative Analysis of Leading Optimization Algorithms

Algorithm Classifications and Characteristics

Optimization techniques are generally divided into traditional (deterministic, often gradient-based) methods and metaheuristic methods. Traditional methods converge quickly and can be highly accurate, but they require stringent conditions, such as fully defined constraints and continuously differentiable objective functions. They struggle with complex, nonlinear real-world problems and often become trapped in local optima. Metaheuristics offer a powerful alternative: they use iterative, largely stochastic search strategies inspired by natural behaviors, biological processes, physical phenomena, or social interactions. Their adaptability and robustness have made them popular for solving complex optimization problems across diverse domains [1].

Table 1: Major Categories of Metaheuristic Optimization Algorithms

| Category | Inspiration Source | Representative Algorithms | Key Characteristics |
|---|---|---|---|
| Evolutionary Algorithms | Natural evolution principles | Genetic Algorithm (GA), Differential Evolution (DE), Evolution Strategies (ES) | Use selection, reproduction, mutation, and recombination mechanisms |
| Swarm Intelligence | Collective animal behavior | Particle Swarm Optimization (PSO), Ant Colony Optimization (ACO), Firefly Algorithm (FA) | Simulate movement and hunting behaviors of bird flocks, insect colonies, and animal herds |
| Human-Based Algorithms | Human social behavior | Harmony Search (HS), Imperialist Competitive Algorithm (ICA), Teaching-Learning-Based Optimization (TLBO) | Model social interaction, decision-making, and cooperation in communities |
| Physics-Based Algorithms | Physical laws | Archimedes Optimization Algorithm (AOA), Gravitational Search Algorithm (GSA), Simulated Annealing (SA) | Inspired by motion, energy, gravity, wave propagation, and thermodynamics |
| Mathematics-Based Algorithms | Mathematical rules | Sine Cosine Algorithm (SCA), Arithmetic Optimization Algorithm (AOA), RUNge Kutta Optimizer (RUN) | Draw from mathematical operators, programming processes, and numerical techniques |

Differential Evolution vs. Particle Swarm Optimization

Differential Evolution (DE) and Particle Swarm Optimization (PSO), both introduced in 1995, are two prominent and widely used families of population-based optimization methods. Both maintain populations of solutions that move iteratively across the search space, but they use fundamentally different mechanisms for updating solutions and managing the population [2].

DE operates primarily through mutation and crossover operations, generating new candidate solutions as functions of the current population distribution. The algorithm employs a one-to-one selection mechanism where newly generated solutions replace current ones only if they demonstrate superior fitness. This greedy selection strategy contributes to DE's strong exploitation capabilities. DE generally remembers only current locations and objective function values, making it relatively memory-efficient [2].
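
The mutation, crossover, and one-to-one selection steps described above can be sketched in a few lines. This is a minimal DE/rand/1/bin sketch for box-constrained minimization, not the implementation evaluated in [2]; the function name and the settings (population size 30, F = 0.5, CR = 0.9, clipping trial vectors to the bounds) are illustrative assumptions.

```python
import random
from typing import Callable, List, Sequence, Tuple


def differential_evolution(
    f: Callable[[Sequence[float]], float],
    bounds: Sequence[Tuple[float, float]],
    pop_size: int = 30,
    F: float = 0.5,   # differential weight (scale of the difference vector)
    CR: float = 0.9,  # binomial crossover probability
    max_evals: int = 20000,
    seed: int = 0,
) -> Tuple[List[float], float]:
    """Minimize f over a box with DE/rand/1/bin; returns (best_x, best_f)."""
    rng = random.Random(seed)
    dim = len(bounds)
    # Initialize the population uniformly at random inside the box.
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(pop_size)]
    fit = [f(x) for x in pop]
    evals = pop_size
    while evals < max_evals:
        new_pop, new_fit = list(pop), list(fit)  # next generation (synchronous update)
        for i in range(pop_size):
            if evals >= max_evals:
                break
            # Mutation: base vector plus a scaled difference of two others, all distinct from i.
            a, b, c = rng.sample([j for j in range(pop_size) if j != i], 3)
            mutant = [pop[a][d] + F * (pop[b][d] - pop[c][d]) for d in range(dim)]
            # Binomial crossover; j_rand guarantees at least one gene from the mutant.
            j_rand = rng.randrange(dim)
            trial = []
            for d in range(dim):
                v = mutant[d] if (rng.random() < CR or d == j_rand) else pop[i][d]
                lo, hi = bounds[d]
                trial.append(min(max(v, lo), hi))  # clip back into the box
            # One-to-one greedy selection: the trial replaces only its own parent,
            # and only if it is at least as good.
            f_trial = f(trial)
            evals += 1
            if f_trial <= fit[i]:
                new_pop[i], new_fit[i] = trial, f_trial
        pop, fit = new_pop, new_fit
    best = min(range(pop_size), key=fit.__getitem__)
    return pop[best], fit[best]
```

For example, `differential_evolution(lambda x: sum(v * v for v in x), [(-5.0, 5.0)] * 5)` minimizes a 5-dimensional Sphere function. Only current positions and their objective values are stored, which reflects the memory-light character noted above.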

In contrast, PSO incorporates historical knowledge into its search process. Particles in PSO move to new locations based on their current position, personal best position, global best position, and velocity vector. Unlike DE, PSO particles move regardless of whether the new position is immediately better, maintaining momentum through the velocity term. This mechanism allows PSO to explore the search space more extensively while preserving information about previously discovered promising regions through the personal and global best positions [2].
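
The velocity-driven update described above can be sketched as a minimal global-best PSO. The inertia weight w ≈ 0.7298 and acceleration coefficients c1 = c2 ≈ 1.49618 are common defaults from the PSO literature, used here as assumptions rather than values from [2]; the function name and the clipping of positions to the bounds are likewise illustrative.

```python
import random
from typing import Callable, List, Sequence, Tuple


def particle_swarm(
    f: Callable[[Sequence[float]], float],
    bounds: Sequence[Tuple[float, float]],
    n_particles: int = 30,
    w: float = 0.7298,    # inertia weight: how much of the old velocity is kept
    c1: float = 1.49618,  # cognitive coefficient: pull toward the personal best
    c2: float = 1.49618,  # social coefficient: pull toward the global best
    iters: int = 300,
    seed: int = 0,
) -> Tuple[List[float], float]:
    """Minimize f over a box with global-best PSO; returns (best_x, best_f)."""
    rng = random.Random(seed)
    dim = len(bounds)
    pos = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    pbest = [p[:] for p in pos]            # historical memory: personal bests
    pbest_f = [f(p) for p in pos]
    g = min(range(n_particles), key=pbest_f.__getitem__)
    gbest, gbest_f = pbest[g][:], pbest_f[g]
    for _ in range(iters):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (pbest[i][d] - pos[i][d])
                             + c2 * r2 * (gbest[d] - pos[i][d]))
                lo, hi = bounds[d]
                pos[i][d] = min(max(pos[i][d] + vel[i][d], lo), hi)
            # The particle has already moved; only the memories are updated greedily.
            fi = f(pos[i])
            if fi < pbest_f[i]:
                pbest[i], pbest_f[i] = pos[i][:], fi
                if fi < gbest_f:
                    gbest, gbest_f = pos[i][:], fi
    return gbest, gbest_f
```

Note that every particle moves on every iteration whether or not its new position is better; the personal-best and global-best records are what preserve information about promising regions.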

Bibliometric indices indicate that PSO variants are two-to-three times more popular among users than DE algorithms, which may be attributed to PSO's conceptual simplicity and intuitive parameters. However, DE methods have demonstrated superior performance in specialized competitions focusing on evolutionary computation, frequently winning or achieving top positions. This discrepancy highlights the importance of context and problem characteristics when selecting an appropriate optimization algorithm [2].

Experimental Framework for Algorithm Performance Evaluation

Standardized Testing Methodologies

The performance evaluation of global optimization algorithms requires rigorous experimental protocols using standardized benchmark functions with diverse characteristics. These test functions, often called artificial landscapes, are specifically designed to evaluate algorithm characteristics such as convergence rate, precision, robustness, and general performance. A comprehensive testing framework should include functions with varying modalities (unimodal vs. multimodal), separability characteristics, and valley landscapes to thoroughly assess algorithm capabilities [3] [4].

Standard experimental protocols typically fix the computational budget, usually expressed as the maximum number of function evaluations allowed, and compare algorithms based on the quality of solutions obtained within this budget. Alternative approaches involve establishing a target objective function value and comparing the number of function calls required to reach this threshold. Both methodologies provide valuable insights into algorithm performance, with the former emphasizing solution quality under limited resources and the latter focusing on convergence speed [2].
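
Both protocols can be read off the same best-so-far trace. In this sketch, `random_search_trace` is a hypothetical stand-in optimizer (uniform random sampling) and the helper names are illustrative; any algorithm that logs its best value after each function evaluation could be substituted.

```python
import random
from typing import Callable, List, Optional, Sequence, Tuple


def random_search_trace(f: Callable[[Sequence[float]], float],
                        bounds: Sequence[Tuple[float, float]],
                        budget: int, seed: int = 0) -> List[float]:
    """Hypothetical stand-in optimizer: best-so-far value after each evaluation."""
    rng = random.Random(seed)
    best, trace = float("inf"), []
    for _ in range(budget):
        best = min(best, f([rng.uniform(lo, hi) for lo, hi in bounds]))
        trace.append(best)
    return trace


def fixed_budget_result(trace: Sequence[float], budget: int) -> float:
    """Fixed-budget view: solution quality once `budget` evaluations are spent."""
    if budget < 1:
        raise ValueError("budget must be at least one evaluation")
    return trace[min(budget, len(trace)) - 1]


def fixed_target_result(trace: Sequence[float], target: float) -> Optional[int]:
    """Fixed-target view: evaluations needed to reach `target` (None if never reached)."""
    for k, value in enumerate(trace, start=1):
        if value <= target:
            return k
    return None
```

The fixed-target view needs an explicit convention for runs that never reach the target (here `None`), which is one reason many studies report both views.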

A comprehensive test suite should include both low-dimensional and high-dimensional problems, with the number of independent variables ranging from 2 to 17 or more. Well-designed test suites cover a range of difficulty levels, from relatively easy functions such as Sphere and Matyas to highly challenging landscapes such as DeVilliersGlasser02, Damavandi, and CrossLegTable, on which the average success rate across multiple optimization algorithms was below 1% [5].

Benchmark Functions and Problem Characteristics

Benchmark functions serve as the fundamental testing ground for optimization algorithms, providing controlled environments with known optimal solutions. These functions emulate various challenges that algorithms may encounter in real-world problems, including narrow valleys, deceptive optima, high conditioning, and variable interactions. The most comprehensive compilations include up to 175 benchmark functions for unconstrained optimization problems with diverse properties in terms of modality, separability, and valley landscape [4].

Table 2: Characteristics of Key Benchmark Functions for Global Optimization

| Function Name | Search Domain | Global Minimum | Key Characteristics | Average Success Rate [5] |
|---|---|---|---|---|
| Rastrigin | −5.12 ≤ xᵢ ≤ 5.12 | f(0,...,0) = 0 | Highly multimodal, separable | 39.50% |
| Ackley | −5 ≤ x,y ≤ 5 | f(0,0) = 0 | Moderately multimodal, non-separable | 48.25% |
| Rosenbrock | −∞ ≤ xᵢ ≤ ∞ | f(1,...,1) = 0 | Unimodal, non-separable, curved valley | 44.17% |
| Griewank | −∞ ≤ xᵢ ≤ ∞ | f(0,...,0) = 0 | Multimodal, non-separable | 6.08% |
| Sphere | −∞ ≤ xᵢ ≤ ∞ | f(0,...,0) = 0 | Unimodal, separable, convex | 82.75% |
| DeVilliersGlasser02 | 5-dimensional | Function-specific | Extremely challenging | 0.00% |
| Damavandi | 2-dimensional | Function-specific | Difficult multimodal | 0.25% |
| CrossLegTable | 2-dimensional | Function-specific | Nearly flat landscape with very narrow optimal regions | 0.83% |
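
Standard forms of several functions in the table are sketched below. The Ackley constants (a = 20, b = 0.2, c = 2π) and the CrossLegTable expression follow common benchmark collections; treat them as assumptions and check them against the definitions in [4] or [5] before reuse.

```python
import math
from typing import Sequence


def sphere(x: Sequence[float]) -> float:
    return sum(v * v for v in x)


def rastrigin(x: Sequence[float]) -> float:
    # Regular grid of local minima superimposed on a bowl.
    return 10.0 * len(x) + sum(v * v - 10.0 * math.cos(2.0 * math.pi * v) for v in x)


def ackley(x: Sequence[float], a: float = 20.0, b: float = 0.2, c: float = 2.0 * math.pi) -> float:
    n = len(x)
    term1 = -a * math.exp(-b * math.sqrt(sum(v * v for v in x) / n))
    term2 = -math.exp(sum(math.cos(c * v) for v in x) / n)
    return term1 + term2 + a + math.e


def rosenbrock(x: Sequence[float]) -> float:
    # Narrow, curved valley leading to the minimum at (1, ..., 1).
    return sum(100.0 * (x[i + 1] - x[i] ** 2) ** 2 + (1.0 - x[i]) ** 2 for i in range(len(x) - 1))


def griewank(x: Sequence[float]) -> float:
    prod = 1.0
    for i, v in enumerate(x, start=1):
        prod *= math.cos(v / math.sqrt(i))  # couples the variables (non-separable)
    return 1.0 + sum(v * v for v in x) / 4000.0 - prod


def cross_leg_table(x: Sequence[float]) -> float:
    # Assumed common 2-D form: almost flat near 0 except where sin(x1)*sin(x2) == 0.
    x1, x2 = x
    inner = abs(math.sin(x1) * math.sin(x2) * math.exp(abs(100.0 - math.hypot(x1, x2) / math.pi)))
    return -1.0 / (inner + 1.0) ** 0.1
```

Evaluating each function at its known minimizer, for example `rosenbrock([1.0] * 4)`, is a quick sanity check before using it in a benchmark.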

The difficulty of optimization problems varies widely: success rates (the percentage of successful minimizations, averaged across multiple optimizers) range from 0% for the hardest functions, such as DeVilliersGlasser02, to over 82% for simple functions such as Sphere. This variation underscores the importance of testing algorithms on a diverse set of problems to obtain meaningful performance assessments. Functions with moderate success rates of roughly 40 to 70%, such as Schwefel01, SixHumpCamel, and Ackley, often discriminate most strongly between algorithms [5].
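
A success rate of this kind can be computed as sketched below. The success criterion (final value within an absolute tolerance `tol = 1e-6` of the known minimum) and the function names are assumptions; the criterion used in [5] may differ.

```python
from typing import Dict, Sequence


def success_rate(final_values: Sequence[float], f_star: float, tol: float = 1e-6) -> float:
    """Percentage of runs whose final value lies within `tol` of the known optimum f_star."""
    hits = sum(1 for v in final_values if abs(v - f_star) <= tol)
    return 100.0 * hits / len(final_values)


def average_success_rate(results: Dict[str, Sequence[float]], f_star: float,
                         tol: float = 1e-6) -> float:
    """Mean of per-optimizer success rates: a measure of how hard a function is."""
    rates = [success_rate(values, f_star, tol) for values in results.values()]
    return sum(rates) / len(rates)
```

Averaging per-optimizer rates, rather than pooling all runs, keeps optimizers with more runs from dominating the difficulty score.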

Performance Comparison in Scientific and Industrial Applications

Quantitative Analysis of Algorithm Performance

Comprehensive studies comparing DE and PSO algorithms on wide problem sets reveal interesting performance patterns. Early comparisons between basic DE and PSO variants on 36 mathematical functions generally demonstrated a clear advantage for DE over PSO. However, these studies primarily utilized simple initial variants of both algorithms. More extensive comparisons incorporating 32 algorithms, including many DE and five PSO variants, suggested that PSO methods were generally inferior to DE algorithms except at very low computational budgets where PSO prevailed [2].

Recent studies have also compared newer algorithms against well-known metaheuristics, including the Genetic Algorithm (GA), Differential Evolution (DE), Tabu Search (TS), the Firefly Algorithm (FA), the Bat Algorithm (BA), the Whale Optimization Algorithm (WOA), and the Grey Wolf Optimizer (GWO). In one such comparison, the Archimedes Optimization Algorithm (AOA) performed best in 72.22% of cases and showed stable dispersion in box-plot analyses [1].

The performance relationship between DE and PSO appears to be problem-dependent and influenced by computational budget constraints. For limited function evaluations, PSO's ability to quickly direct particles toward promising regions provides an advantage. In scenarios with more generous computational budgets, DE's systematic approach to solution improvement often yields higher quality results. This trade-off between exploration speed and solution refinement capability represents a key consideration when selecting an algorithm for specific applications [2].

Table 3: Performance Comparison of Optimization Algorithms Across Applications

| Application Domain | Top Performing Algorithms | Key Performance Metrics | Comparative Results |
|---|---|---|---|
| General Mathematical Functions | DE, AOA, GWO | Solution quality, convergence rate | DE shows clear advantage over basic PSO; AOA superior in 72.22% of cases |
| Antenna Array Design | PSO, DE, GA | Sidelobe reduction, directivity | PSO and DE both effective with problem-specific advantages |
| COVID-19 Treatment Optimization | MDP with specialized solvers | Agreement with physician prescriptions | 82% for male, 77% for female patients |
| Drug Discovery (Coronavirus Targets) | Multiple machine learning approaches | Biochemical potency prediction, crystallographic ligand poses | Community challenge identified top-performing strategies |
| Neural Network Training | DE, PSO, and variants | Accuracy, training efficiency | Both DE and PSO successfully applied with different strengths |

Domain-Specific Performance in Industrial Applications

Electromagnetic and Antenna Design

In electromagnetic optimization, both PSO and DE have been successfully applied to antenna array design, demonstrating their practical utility in engineering domains. PSO has proven effective for pattern synthesis of phased arrays, sidelobe level reduction, and conformal antenna array design. Similarly, DE has been employed for designing non-uniform linear phased arrays, low sidelobe antenna arrays, and sidelobe level reduction on planar arrays. Studies comparing these algorithms in antenna design contexts have shown that both can produce high-quality solutions, with each exhibiting strengths for specific problem characteristics [6].

The implementation of improved PSO variants with island models and hybrid approaches combining PSO with GA has demonstrated enhanced performance in specialized electromagnetic applications such as ISAR motion compensation and inverse scattering problems. These hybrid approaches leverage the exploratory capabilities of both algorithm types while mitigating their individual limitations, resulting in more robust optimization performance across diverse electromagnetic scenarios [6].

Pharmaceutical and Medical Applications

Global optimization algorithms play increasingly important roles in pharmaceutical research and medical treatment optimization. In COVID-19 treatment personalization, finite-horizon Markov Decision Processes (MDP) have been employed to optimize treatment strategies using real-world data from 1,335 hospitalized patients. This approach integrated disease severity, comorbidities, and gender-specific risk profiles to provide personalized recommendations, achieving agreement rates of 82% for male and 77% for female patients when compared with physician-prescribed treatments [7].
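
A finite-horizon MDP is typically solved by backward induction: starting from the final stage, each state's value is the best immediate reward plus the expected value of the next stage. The sketch below illustrates the technique on a hypothetical two-state, two-action toy model; the states, transition probabilities, and rewards are invented for illustration and do not reproduce the model or data of [7].

```python
from typing import Dict, List, Tuple

State = str
Action = str


def backward_induction(
    states: List[State],
    actions: List[Action],
    P: Dict[Tuple[State, Action], Dict[State, float]],
    R: Dict[Tuple[State, Action], float],
    terminal: Dict[State, float],
    horizon: int,
) -> Tuple[List[Dict[State, float]], List[Dict[State, Action]]]:
    """Return stage values V[t][s] and a stage-dependent optimal policy policy[t][s]."""
    V: List[Dict[State, float]] = [{} for _ in range(horizon + 1)]
    policy: List[Dict[State, Action]] = [{} for _ in range(horizon)]
    V[horizon] = dict(terminal)  # value at the end of the planning horizon
    for t in range(horizon - 1, -1, -1):  # sweep backwards in time
        for s in states:
            best_a, best_q = actions[0], float("-inf")
            for a in actions:
                # Q_t(s, a) = R(s, a) + sum_{s'} P(s' | s, a) * V_{t+1}(s')
                q = R[(s, a)] + sum(p * V[t + 1][s2] for s2, p in P[(s, a)].items())
                if q > best_q:
                    best_a, best_q = a, q
            V[t][s], policy[t][s] = best_q, best_a
    return V, policy


# Hypothetical toy model (not from [7]): severity states and two treatment options.
STATES = ["mild", "severe"]
ACTIONS = ["standard", "intensive"]
P = {
    ("mild", "standard"): {"mild": 0.8, "severe": 0.2},
    ("mild", "intensive"): {"mild": 0.9, "severe": 0.1},
    ("severe", "standard"): {"mild": 0.3, "severe": 0.7},
    ("severe", "intensive"): {"mild": 0.6, "severe": 0.4},
}
# Invented rewards trading off health outcome against treatment burden.
R = {("mild", "standard"): 1.0, ("mild", "intensive"): 0.5,
     ("severe", "standard"): -1.0, ("severe", "intensive"): -1.5}
TERMINAL = {"mild": 2.0, "severe": -2.0}

V, policy = backward_induction(STATES, ACTIONS, P, R, TERMINAL, horizon=3)
```

Because the policy is indexed by stage, the recommended action for a given state may change as the end of the horizon approaches, which is what distinguishes the finite-horizon formulation from a stationary one.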

The drug discovery domain has seen the emergence of community blind challenges to assess computational methods objectively. One such challenge focused on predicting biochemical potency and crystallographic ligand poses for small molecules targeting SARS-CoV-2 and MERS-CoV main proteases. These initiatives established performance leaderboards and conducted meta-analyses to identify methodological strengths, common pitfalls, and improvement areas, providing foundations for best practices in real-world machine learning evaluation [8].

The Scientist's Toolkit for Optimization Research

Table 4: Essential Resources for Optimization Algorithm Development

| Resource Category | Specific Tools/Functions | Primary Function | Application Context |
|---|---|---|---|
| Benchmark Functions | Rastrigin, Ackley, Rosenbrock, Griewank, Sphere | Algorithm validation and performance assessment | General algorithm development and comparison |
| Real-World Test Problems | Economic dispatch, well localization, multilevel image thresholding | Performance evaluation in practical contexts | Domain-specific algorithm validation |
| Statistical Analysis Frameworks | Nonparametric statistical methods, box-plot analyses, success rate calculations | Robust performance comparison and significance testing | Experimental results interpretation |
| Computational Infrastructure | High-performance computing clusters, parallel processing capabilities | Handling computationally expensive optimization problems | Large-scale and high-dimensional problems |
| Specialized Software Libraries | MATLAB, R implementations, custom optimization toolkits | Algorithm implementation and experimentation | Rapid prototyping and algorithm development |

The experimental infrastructure for global optimization research relies heavily on comprehensive benchmark suites that combine mathematical test functions with real-world problems. Compilations of up to 175 unconstrained test functions, spanning a range of modalities, separability properties, and valley landscapes, provide the essential testing ground for developing and validating new algorithms [4].

Specialized computational resources are equally important, particularly for handling high-dimensional and computationally expensive optimization problems. The integration of high-performance computing clusters with parallel processing capabilities enables researchers to tackle problems that would be infeasible with standard computational resources. Additionally, specialized software libraries in platforms like MATLAB and R provide optimized implementations of standard algorithms and benchmarking tools, facilitating rapid prototyping and experimental comparison of new optimization approaches [3] [5].
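
Because the candidates in one generation of a population-based algorithm are evaluated independently, their evaluation parallelizes naturally. The sketch below uses Python's standard `concurrent.futures` module; `evaluate_population` is an illustrative name, and `slow_simulator` is a hypothetical placeholder for an expensive external simulation.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Sequence


def evaluate_population(
    f: Callable[[Sequence[float]], float],
    population: Sequence[Sequence[float]],
    max_workers: int = 4,
) -> List[float]:
    """Evaluate every candidate concurrently; results keep the population's order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(f, population))


def slow_simulator(x: Sequence[float]) -> float:
    """Hypothetical expensive objective; sleep() mimics waiting on an external solver."""
    time.sleep(0.05)
    return sum(v * v for v in x)


if __name__ == "__main__":
    pop = [[float(i), 1.0] for i in range(8)]
    start = time.perf_counter()
    scores = evaluate_population(slow_simulator, pop, max_workers=4)
    print(scores, f"{time.perf_counter() - start:.2f}s elapsed")
```

Threads help mainly when each evaluation waits on an external simulator or on native code that releases the GIL; for CPU-bound pure-Python objectives, `ProcessPoolExecutor` offers the same `map` interface but requires picklable, module-level functions.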

The comparative analysis of global optimization algorithms reveals a complex landscape in which performance depends strongly on problem characteristics, computational budget, and implementation details. Differential Evolution and Particle Swarm Optimization, two prominent algorithm families, show complementary strengths: DE generally refines solutions better when the computational budget is sufficient, whereas PSO often converges faster in the early stages. Newer algorithms such as the Archimedes Optimization Algorithm show continued innovation in the field; in one comparison against established metaheuristics, AOA performed best in 72.22% of cases [1].

For researchers and practitioners in scientific and industrial contexts, including drug development professionals, the selection of an appropriate optimization algorithm should be guided by problem-specific characteristics rather than general performance rankings. The most effective approach involves understanding the fundamental mechanisms of different algorithm classes and matching these to problem requirements. Future directions in global optimization research will likely focus on hybrid approaches that combine the strengths of multiple algorithms, adaptive parameter control mechanisms, and increased integration with machine learning methods to enhance optimization performance across diverse application domains.

The pursuit of optimal solutions in complex, high-dimensional search spaces is a fundamental challenge across scientific and industrial domains, from drug development and circuit design to supply chain management. Global optimization (GO) algorithms are designed to tackle this challenge, especially for problems where traditional gradient-based methods struggle with non-convex landscapes, black-box functions, or computationally expensive evaluations. In the context of modern applications, these algorithms must efficiently balance two competing goals: exploration of the search space to identify promising regions and exploitation to refine candidate solutions and converge to high-quality optima [9].

This guide presents a structured taxonomy of global optimization algorithms and a comparative analysis focused on three families: physics-based, swarm intelligence, and evolutionary algorithms. The classification is built on the key features and strategies that define an algorithm's search methodology, particularly how it distributes initial candidates and generates new solutions [9]. For researchers in fields such as pharmaceutical development, where simulations can be extremely time-consuming or problem structures are complex and poorly understood, understanding these distinctions is critical for selecting the appropriate computational tool. The following sections provide a detailed taxonomy, quantitative performance comparisons from recent studies, and guidelines for algorithm selection based on problem characteristics.

A Taxonomy of Modern Global Optimizers

Existing taxonomies have struggled to place contemporary approaches, such as surrogate-assisted and hybrid algorithms, within the broader context of optimization. The taxonomy presented here, adapted from the comprehensive framework proposed in [9], classifies algorithms by the similarities and differences in their core search mechanics. It distinguishes algorithms by the number of candidate solutions they maintain and by how they use past evaluations to inform future search, resulting in a small number of intuitive classes.

The table below outlines the primary classes of heuristic global optimization algorithms relevant to this discussion.

Table 1: Taxonomy of Heuristic Global Optimization Algorithms

| Algorithm Class | Core Principle | Representative Algorithms | Ideal Use Cases |
|---|---|---|---|
| Trajectory Methods | Maintains a single solution that is iteratively modified; search follows a path through the solution space. | Simulated Annealing (SA), Local Search | Problems with limited computational budget; local refinement of solutions. |
| Population-Based Methods | Maintains and improves a set of candidate solutions (a population) to explore the search space. | Genetic Algorithm (GA), Differential Evolution (DE) | Complex, multi-modal problems requiring broad exploration. |
| Swarm Intelligence | A subset of population-based methods where agents interact and follow simple rules based on collective behavior. | Particle Swarm Optimization (PSO), Ant Colony Optimization (ACO), Artificial Bee Colony (ABC) | Dynamic problems and those where shared information can guide the search effectively. |
| Surrogate-Based Methods | Uses an approximation model (a surrogate) of the expensive objective function to guide the optimization. | Bayesian Optimization, Random Forest Surrogates | Problems with computationally expensive, black-box function evaluations (e.g., simulation-heavy tasks). |

This taxonomy highlights the fundamental differences in how algorithms navigate a search space. Trajectory methods, like a single mountaineer, focus their effort on a single path. Population-based methods, including both Evolutionary Algorithms and Swarm Intelligence, operate like a team of explorers that share information and collaborate. Swarm Intelligence algorithms are a particularly specialized team, mimicking the decentralized, collective behavior of biological swarms [10] [11]. Finally, surrogate-based methods act as surveyors, building and using maps (surrogate models) of the terrain to minimize costly expeditions to actual locations (expensive function evaluations) [9] [12].
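
The surveyor analogy can be made concrete with a minimal surrogate-assisted loop: fit a cheap model to all expensive evaluations so far, screen many candidates on the model, and spend one expensive evaluation per iteration on the most promising one. For simplicity, the surrogate here is a separable quadratic response surface fitted by least squares, a deliberately simple stand-in for the Gaussian-process (Bayesian optimization) or random-forest models named in the table; the function names and the candidate-generation scheme are hypothetical.

```python
import random
from typing import List, Sequence


def _features(x: Sequence[float]) -> List[float]:
    # Separable quadratic basis: [1, x_1..x_d, x_1^2..x_d^2]
    return [1.0] + [float(v) for v in x] + [float(v) * v for v in x]


def fit_quadratic(X: Sequence[Sequence[float]], y: Sequence[float],
                  ridge: float = 1e-9) -> List[float]:
    """Least squares via (A^T A + ridge*I) theta = A^T y, solved by Gaussian elimination."""
    A = [_features(x) for x in X]
    p = len(A[0])
    M = [[sum(r[i] * r[j] for r in A) + (ridge if i == j else 0.0) for j in range(p)]
         for i in range(p)]
    b = [sum(r[i] * yi for r, yi in zip(A, y)) for i in range(p)]
    for col in range(p):  # forward elimination with partial pivoting
        piv = max(range(col, p), key=lambda r: abs(M[r][col]))
        M[col], M[piv] = M[piv], M[col]
        b[col], b[piv] = b[piv], b[col]
        for r in range(col + 1, p):
            factor = M[r][col] / M[col][col]
            for c in range(col, p):
                M[r][c] -= factor * M[col][c]
            b[r] -= factor * b[col]
    theta = [0.0] * p
    for i in range(p - 1, -1, -1):  # back substitution
        theta[i] = (b[i] - sum(M[i][j] * theta[j] for j in range(i + 1, p))) / M[i][i]
    return theta


def predict(theta: Sequence[float], x: Sequence[float]) -> float:
    return sum(t * phi for t, phi in zip(theta, _features(x)))


def surrogate_search(f, bounds, n_init=10, n_iter=40, n_candidates=200, seed=0):
    """Returns (best_x, best_f, history of all expensive evaluations)."""
    rng = random.Random(seed)

    def sample() -> List[float]:
        return [rng.uniform(lo, hi) for lo, hi in bounds]

    X = [sample() for _ in range(n_init)]
    y = [f(x) for x in X]                      # expensive evaluations (initial design)
    for _ in range(n_iter):
        theta = fit_quadratic(X, y)            # cheap model of all data so far
        incumbent = X[min(range(len(y)), key=y.__getitem__)]
        cands = []
        for k in range(n_candidates):
            if k % 2 == 0:                     # global exploration
                cands.append(sample())
            else:                              # local perturbation of the incumbent
                cands.append([min(max(v + rng.gauss(0.0, 0.1 * (hi - lo)), lo), hi)
                              for v, (lo, hi) in zip(incumbent, bounds)])
        x_new = min(cands, key=lambda c: predict(theta, c))  # screen on the surrogate
        X.append(x_new)
        y.append(f(x_new))                     # the only expensive call this iteration
    i_best = min(range(len(y)), key=y.__getitem__)
    return X[i_best], y[i_best], y
```

Practical surrogate-based methods also model prediction uncertainty and use it to decide where to sample; this sketch relies only on its mix of global and local candidates for exploration.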

Physics-based optimizers do not appear as a separate class in this taxonomy. Single-solution physics-inspired algorithms, such as Simulated Annealing, fall under Trajectory Methods, whereas population-based physics-inspired algorithms, such as the Gravitational Search Algorithm, fall under Population-Based Methods. Simulated Annealing is inspired by the annealing of metals [13]: it perturbs a single candidate solution and uses a temperature parameter to control the acceptance of worse solutions, which allows it to escape local optima.
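
The temperature-controlled acceptance rule can be sketched as a minimal simulated annealing loop using the Metropolis criterion. The Gaussian step size, initial temperature, geometric cooling factor, and function name are illustrative assumptions, not settings from [13].

```python
import math
import random
from typing import Callable, List, Sequence, Tuple


def simulated_annealing(
    f: Callable[[Sequence[float]], float],
    x0: Sequence[float],
    bounds: Sequence[Tuple[float, float]],
    step: float = 0.5,      # std. dev. of the Gaussian perturbation
    t0: float = 1.0,        # initial temperature
    cooling: float = 0.995, # geometric cooling factor per iteration
    iters: int = 5000,
    seed: int = 0,
) -> Tuple[List[float], float]:
    rng = random.Random(seed)
    x = list(x0)
    fx = f(x)
    best, best_f = x[:], fx
    t = t0
    for _ in range(iters):
        # Trajectory method: perturb the single current solution.
        cand = [min(max(v + rng.gauss(0.0, step), lo), hi) for v, (lo, hi) in zip(x, bounds)]
        fc = f(cand)
        # Metropolis criterion: always accept improvements; accept a worse move
        # with probability exp(-(fc - fx) / t), which shrinks as t cools.
        if fc <= fx or rng.random() < math.exp(-(fc - fx) / t):
            x, fx = cand, fc
            if fx < best_f:
                best, best_f = x[:], fx
        t *= cooling
    return best, best_f
```

At high temperature the search accepts many uphill moves and wanders widely; as the temperature falls, the loop behaves increasingly like a greedy local search.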

Figure 1: A hierarchical taxonomy of modern global optimization algorithms, highlighting the main classes discussed in this guide.

Comparative Performance Evaluation

Benchmarking studies across diverse fields provide critical, objective data on the performance of different optimization algorithms. The following tables summarize quantitative results from recent experimental evaluations, offering insights into convergence speed, solution quality, and computational efficiency.

Table 2: Performance Comparison in Engineering and Design Problems

| Application Domain | Algorithms Tested | Key Performance Findings | Source |
|---|---|---|---|
| Analog Circuit Design | Modified-ABC, Modified-PSO, Modified-GA, Modified-GWO | Modified-ABC delivered the best solution with the most consistent results across multiple runs. All modified versions showed improved convergence over the standard algorithms. | [14] |
| Industrial Task Allocation | CECPSO, PSO, GA, SA | In the scenario with 40 sensors and 240 tasks, CECPSO outperformed PSO, GA, and SA by 6.6%, 21.23%, and 17.01%, respectively, with a faster convergence rate and better overall performance. | [13] |
| Project Time-Cost Trade-Off | GA, PSO, DE | Certain variants of GA, PSO, and DE performed best on this NP-hard combinatorial problem. | [15] |

Table 3: Benchmarking of Swarm Intelligence Algorithms

| Algorithm | Convergence Speed | Solution Quality | Key Characteristic | Source |
|---|---|---|---|---|
| Particle Swarm Optimization (PSO) | Fast | High | The "All-Rounder": excels in speed, solution quality, and convergence. | [10] |
| Artificial Bee Colony (ABC) | Moderate | Exceptional | The "Precision Expert": a robust search strategy that balances exploration and exploitation. | [10] [14] |
| Grey Wolf Optimizer (GWO) | Fast | High | The "Fast Learner": its hierarchical structure enables swift and accurate results. | [10] |
| Swarm Intelligence Based (SIB) | Fast (in discrete domains) | High | Outperforms GA in speed and optimized capacity for high-dimensional problems with cross-dimensional constraints. | [11] |

These findings demonstrate that there is no single best algorithm for all scenarios. The performance is highly dependent on the problem's structure, dimensionality, and constraints. For instance, while PSO is often a strong all-rounder, ABC may be preferable when solution precision is paramount, and specialized algorithms like SIB are better suited for complex discrete problems [10] [11]. Furthermore, modified and hybrid versions of classic algorithms frequently outperform their standard counterparts, as seen in analog circuit design and task allocation problems [13] [14].

Detailed Experimental Protocols and Methodologies

To critically assess the comparative data, it is essential to understand the experimental methodologies used to generate it. The following outlines the common protocols from the cited studies.

General Benchmarking Framework

A typical benchmarking study follows a structured workflow to ensure a fair and reproducible comparison:

- Problem Selection: choose a set of benchmark functions or real-world problems with diverse characteristics (e.g., unimodal, multimodal, separable, non-separable).

- Algorithm Configuration: set parameters (e.g., population size, mutation rate, inertia weight) to predefined values, often taken from the literature or obtained through preliminary tuning. For example, the swarm intelligence comparison in [10] used a population size of 1000 and 1000 iterations for all algorithms to ensure fairness.

- Iterative Evaluation and Data Collection: run each algorithm multiple times on each problem to account for stochasticity, and record performance metrics such as the best solution found, convergence speed (number of iterations needed to reach a target), and computational time.

- Analysis and Comparison: analyze the collected data with statistical tests to determine whether the performance differences are significant.
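
For the analysis stage, one common nonparametric choice for comparing two algorithms' final results over independent runs is the Wilcoxon rank-sum (Mann-Whitney U) test; it is one possible test, not necessarily the one used in the cited studies. The sketch uses the normal approximation and gives tied values their average rank without correcting the variance for ties, so its p-values are approximate; `scipy.stats.mannwhitneyu` provides a more complete implementation.

```python
import math
from typing import Sequence, Tuple


def rank_sum_test(a: Sequence[float], b: Sequence[float]) -> Tuple[float, float]:
    """Two-sided Wilcoxon rank-sum test (normal approximation).

    Returns (U statistic for sample a, approximate two-sided p-value).
    """
    pooled = sorted([(v, 0) for v in a] + [(v, 1) for v in b])
    ranks = [0.0] * len(pooled)
    i = 0
    while i < len(pooled):  # assign average 1-based ranks to blocks of tied values
        j = i
        while j + 1 < len(pooled) and pooled[j + 1][0] == pooled[i][0]:
            j += 1
        for k in range(i, j + 1):
            ranks[k] = (i + j) / 2 + 1
        i = j + 1
    n1, n2 = len(a), len(b)
    r1 = sum(r for r, (_, group) in zip(ranks, pooled) if group == 0)
    u1 = r1 - n1 * (n1 + 1) / 2
    mu = n1 * n2 / 2
    sigma = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12)  # no tie correction
    z = (u1 - mu) / sigma
    p = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return u1, p
```

Typical use is to pass the final objective values of two algorithms from, say, 25 independent runs each; a small p-value indicates that one algorithm's results tend to be lower (or higher) than the other's.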

Figure 2: A generalized experimental workflow for benchmarking optimization algorithms.

Case Study: Coastal Aquifer Management

This study [12] provides a clear example of a surrogate-assisted optimization protocol in a complex environmental simulation. The high-fidelity (HF) model was a computationally expensive 3D variable-density (VD) model that simulated seawater intrusion. The key steps were:

- High-Fidelity Model Definition: The VD model, implemented using the SEAWAT numerical code, was established as the ground-truth benchmark.

- Low-Fidelity Model Development: A simpler, physics-based Sharp-Interface (SI) model was used as a fast but less accurate surrogate. A Machine Learning (ML) assisted surrogate was then created by using a Random Forest (RF) algorithm to learn a spatially adaptive correction factor for the original SI model, improving its accuracy.

- Optimization Framework: A pumping optimization problem was formulated to find the optimal rates that manage saltwater intrusion.

- Validation: The optimal solutions obtained using the original SI and the ML-corrected SI model were compared against the solution from the HF model. The ML-assisted model sufficiently approximated the HF solution, demonstrating its value as an efficient surrogate.

This protocol highlights how surrogate models, particularly those enhanced with machine learning, can make otherwise intractable optimization problems feasible.
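
The correction step can be sketched as follows: evaluate the high-fidelity model at a few training points, compute the ratio of high- to low-fidelity output there, and let a regression model predict this correction factor elsewhere. In this one-dimensional sketch, a k-nearest-neighbour average stands in for the Random Forest used in [12], and `hf_model` and `lf_model` are hypothetical toy functions, not the SEAWAT or Sharp-Interface models.

```python
import math
from typing import Callable, Sequence


def knn_regress(x: float, X: Sequence[float], y: Sequence[float], k: int = 2) -> float:
    """Average of the targets at the k training points nearest to x (1-D inputs)."""
    nearest = sorted(range(len(X)), key=lambda i: abs(X[i] - x))[:k]
    return sum(y[i] for i in nearest) / len(nearest)


def corrected_model(lf: Callable[[float], float], X_train: Sequence[float],
                    hf_values: Sequence[float], k: int = 2) -> Callable[[float], float]:
    """Low-fidelity model times a spatially varying correction factor learned from HF runs."""
    factors = [h / lf(x) for x, h in zip(X_train, hf_values)]  # assumes lf(x) != 0
    return lambda x: lf(x) * knn_regress(x, X_train, factors, k)


# Hypothetical toy models: the "high-fidelity" response has a trend the cheap model misses.
def hf_model(x: float) -> float:
    return (math.sin(x) + 2.0) * (1.0 + 0.05 * x)


def lf_model(x: float) -> float:
    return math.sin(x) + 2.0


X_train = [float(i) for i in range(11)]  # only 11 expensive high-fidelity evaluations
model = corrected_model(lf_model, X_train, [hf_model(x) for x in X_train])
```

Inside an optimization loop, `model` would replace the expensive high-fidelity call, and the final candidate would be re-checked against the high-fidelity model, mirroring the validation step above.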

For researchers embarking on computational optimization, the following tools and resources are essential.

Table 4: Essential Computational Tools for Optimization Research

| Tool / Resource | Type | Function and Purpose |
|---|---|---|
| CloudSim Plus | Simulation Framework | Models and simulates cloud computing environments, enabling the testing of resource allocation and virtual machine placement algorithms [16]. |
| SEAWAT | Simulation Software | A widely used code for simulating variable-density groundwater flow and solute transport, often serving as a high-fidelity model in environmental optimization [12]. |
| BARON | Solver Software | A state-of-the-art deterministic solver for nonconvex optimization problems to global optimality, widely used in mathematical programming [17]. |
| xlOptimizer | Commercial Software | A general-purpose commercial optimization platform used for solving engineering problems, such as time-cost trade-off analysis, with various algorithms [15]. |
| CUDA/Thrust | GPU Computing Platform and Library | CUDA is NVIDIA's platform for general-purpose GPU computing, and Thrust is a C++ parallel algorithms library built on it; together they can significantly accelerate swarm intelligence and other population-based algorithms [10]. |
| Benchmark Functions | Test Problem Set | A set of standard mathematical functions (e.g., Sphere, Rastrigin, Ackley) used to test and compare the performance of optimization algorithms in a controlled manner [10]. |

The landscape of global optimization is diverse and continuously evolving. This guide has set out a taxonomy that distinguishes Trajectory, Population-Based (including Swarm and Evolutionary), and Surrogate-Based algorithms. The comparative data consistently show that algorithm performance is context-dependent. For classical engineering problems, well-established algorithms such as GA, PSO, and DE remain strong contenders [15]. However, for high-dimensional, discrete, or constrained problems, newer swarm methods such as SIB show significant promise [11]. When dealing with computationally expensive simulations, surrogate-assisted approaches are becoming indispensable [12].

Future research directions point towards greater integration and automation. The development of hybrid algorithms that combine the strengths of different classes (e.g., using a surrogate to guide an evolutionary algorithm) is a major trend [9] [13]. Furthermore, the field is moving towards hyperheuristics—methods that automatically select, combine, or generate the most suitable heuristic for a given problem [9]. For the researcher, the key takeaway is that a deep understanding of the problem's structure, combined with knowledge of the fundamental principles of each algorithm class, is the most reliable compass for navigating the complex and rich world of global optimization.

The Congress on Evolutionary Computation (CEC) test suites represent cornerstone evaluation frameworks within the field of global optimization algorithm research. These standardized benchmark problems provide researchers with a common platform for rigorous, reproducible comparison of algorithmic performance across diverse and challenging optimization landscapes. As optimization algorithms grow increasingly sophisticated, the CEC benchmarks have evolved correspondingly—from classical unimodal and multimodal functions to complex, large-scale, dynamic, and multitask problem sets that mirror real-world application challenges. The consistent application of these test suites enables meaningful cross-study comparisons, drives algorithmic innovation, and establishes performance baselines that guide both theoretical advances and practical implementations.

Within competitive optimization research, annual CEC-sponsored competitions serve as the primary venue for performance benchmarking: algorithms are evaluated on newly released test suites under fixed protocols, which limits overfitting and supports unbiased assessment. The CEC 2025 competitions continue this tradition, introducing novel benchmark suites for emerging research domains including evolutionary multi-task optimization and dynamic optimization [18] [19]. These standardized evaluations employ strict experimental protocols that mandate identical computational budgets, multiple independent runs, and fixed performance metrics, creating a level playing field for comparing algorithms. For researchers and practitioners in fields ranging from drug development to engineering design, understanding these benchmarks is essential for selecting appropriate optimization methods for complex computational problems.

Taxonomy and Characteristics of CEC Benchmarks

CEC test suites are systematically designed to evaluate algorithm performance across progressively difficult problem categories, assessing how optimization techniques handle challenges such as deceptive local optima, variable interaction, ill-conditioning, and high-dimensional search spaces. In CEC2014, for example, the classification begins with unimodal functions (F1-F3) that test basic convergence behavior and exploitation capability, progresses to simple multimodal functions (F4-F16) whose numerous local optima challenge an algorithm's exploration ability, and culminates in hybrid functions (F17-F22) and composition functions (F23-F30) that combine multiple base functions with asymmetric, rotated, and shifted properties to approximate real-world problem complexity. CEC2017 follows the same pattern, with hybrid functions at F11-F20 and composition functions at F21-F30 [20] [21].

The CEC2025 competitions introduce two specialized test suite categories addressing contemporary optimization challenges. The Multi-Task Single-Objective Optimization (MTSOO) test suite contains nine complex problems with two component tasks each, plus ten 50-task benchmark problems that evaluate an algorithm's ability to simultaneously solve multiple related optimization problems by transferring knowledge between tasks [18]. The Generalized Moving Peaks Benchmark (GMPB) generates dynamic optimization problems with controllable characteristics where the fitness landscape changes over time, testing an algorithm's ability to track moving optima in environments with varying shift severity, change frequency, and peak numbers [19].

Table 1: CEC 2025 Competition Test Suite Specifications

| Test Suite | Problem Types | Component Tasks | Key Characteristics | Performance Metrics |
|---|---|---|---|---|
| MTSOO (Multi-Task Single-Objective) | 9 complex problems + ten 50-task problems | 2 tasks (complex), 50 tasks (benchmark) | Latent synergy between tasks, commonality in global optimum | Best Function Error Value (BFEV) |
| MTMOO (Multi-Task Multi-Objective) | 9 complex problems + ten 50-task problems | 2 tasks (complex), 50 tasks (benchmark) | Commonality in Pareto optimal solutions | Inverted Generational Distance (IGD) |
| GMPB (Dynamic Optimization) | 12 problem instances | Varying environments over time | Time-varying fitness landscape, different shift patterns | Offline Error |

Evolution of CEC Benchmark Complexity

The progression of CEC test suites demonstrates a consistent trend toward greater realism and complexity. Earlier CEC benchmarks included a larger share of separable functions, whose variables can be optimized independently, while modern suites (2014 onward) emphasize non-separability through rotation and transformation matrices that create variable interactions resembling those of real-world systems [20] [21]. Dimensionality has escalated as well: large-scale tracks such as CEC2010 feature 1000-dimensional problems, CEC2013 added non-convex constrained optimization, and CEC2017 incorporated composition functions with asymmetric peaks and different properties around local and global optima.
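
To make the role of rotation concrete, here is a minimal sketch of a shifted and rotated test function of the general form f(M(x - o)). The shift vector o, the random orthogonal matrix M, the bound of 80, and the helper names are illustrative assumptions; the official CEC suites ship their own shift and rotation data rather than generating them this way.

```python
import numpy as np


def random_rotation(dim, rng):
    """Random orthogonal matrix from the QR decomposition of a Gaussian matrix."""
    q, r = np.linalg.qr(rng.standard_normal((dim, dim)))
    return q * np.sign(np.diag(r))  # column sign fix makes the distribution uniform (Haar)


def rastrigin(z):
    return float(10.0 * z.size + np.sum(z**2 - 10.0 * np.cos(2.0 * np.pi * z)))


def make_shifted_rotated(base, dim, seed=0, bound=80.0):
    """Build f(x) = base(M (x - o)) with a hypothetical random shift o and rotation M."""
    rng = np.random.default_rng(seed)
    shift = rng.uniform(-bound, bound, dim)  # o: moves the optimum away from the origin
    rot = random_rotation(dim, rng)          # M: mixes all coordinates (non-separable)

    def f(x):
        z = rot @ (np.asarray(x, dtype=float) - shift)
        return base(z)

    return f, shift, rot
```

Because M mixes every coordinate, optimizing one variable at a time no longer finds the optimum at x = o, which is exactly the variable interaction that rotation is meant to introduce.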

Recent CEC2022 through CEC2025 benchmarks have further advanced this trajectory with several key innovations. Problem landscapes now feature heterogeneous function mixtures where different variables exhibit distinct properties, requiring algorithms to adapt their search strategies dynamically. The introduction of noise functions and uncertainty simulation in CEC2023 creates more realistic conditions reflecting measurement errors common in practical applications like drug development and biological system modeling [21]. Additionally, the shift toward large-scale multi-task optimization in CEC2025 addresses the growing need for algorithms that efficiently solve multiple related problems simultaneously, a capability with direct applications in multi-scenario drug design and cross-tissue metabolic modeling.

Experimental Protocols for CEC Benchmarking

Standardized Evaluation Methodology

Robust evaluation of optimization algorithms on CEC test suites requires strict adherence to standardized experimental protocols that ensure fair comparison and statistically significant results. The CEC 2025 competition guidelines specify that all participating algorithms must execute 30 independent runs per benchmark problem using different random seeds to account for stochastic variations [18]. Computational effort is controlled through fixed maximum function evaluations (maxFEs), with distinct budgets for different problem types: 200,000 FEs for 2-task problems and 5,000,000 FEs for 50-task problems in the multi-task optimization track [18].

Critical to valid benchmarking is the prohibition of problem-specific tuning, requiring that algorithm parameter settings remain identical across all benchmark problems within a test suite [18] [19]. This prevents overfitting to specific function characteristics and ensures generalizability. Performance assessment employs checkpoint-based evaluation, recording solution quality at predefined intervals throughout the optimization process. For multi-task single-objective optimization, the Best Function Error Value (BFEV) is recorded at 100 checkpoints for 2-task problems and 1000 checkpoints for 50-task problems, creating performance trajectories that reveal convergence speed and stability [18].
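
The protocol described above (a fixed maxFEs, identical settings for every problem, a distinct seed per run, and error records at evenly spaced checkpoints) can be sketched as a small harness. Everything here is hypothetical: the class and function names are illustrative, random search stands in for a real optimizer, and the official competition code defines its own interfaces and output format.

```python
import numpy as np


class BudgetExceeded(Exception):
    """Raised when an optimizer requests more evaluations than max_fes allows."""


class CheckpointedObjective:
    """Counts function evaluations (FEs) and records the best-so-far error at checkpoints."""

    def __init__(self, func, max_fes, n_checkpoints, f_opt=0.0):
        assert max_fes >= n_checkpoints, "need at least one FE per checkpoint"
        self.func, self.max_fes, self.f_opt = func, max_fes, f_opt
        # evenly spaced FE counts: max_fes/k, 2*max_fes/k, ..., max_fes
        self.checkpoints = [max_fes * (i + 1) // n_checkpoints for i in range(n_checkpoints)]
        self.fes = 0
        self.best_error = np.inf
        self.history = []

    def __call__(self, x):
        if self.fes >= self.max_fes:
            raise BudgetExceeded()
        value = self.func(x)
        self.fes += 1
        self.best_error = min(self.best_error, value - self.f_opt)
        # record once the FE counter reaches the next checkpoint
        if len(self.history) < len(self.checkpoints) and self.fes == self.checkpoints[len(self.history)]:
            self.history.append(self.best_error)
        return value


def random_search(objective, dim, rng, lower=-100.0, upper=100.0):
    """Placeholder optimizer: uniform random sampling until the budget runs out."""
    try:
        while True:
            objective(rng.uniform(lower, upper, dim))
    except BudgetExceeded:
        pass


def run_protocol(func, dim, max_fes, n_checkpoints, n_runs=30):
    results = []
    for seed in range(n_runs):  # one distinct seed per independent run
        rng = np.random.default_rng(seed)
        objective = CheckpointedObjective(func, max_fes, n_checkpoints)
        random_search(objective, dim, rng)
        results.append(objective.history)
    return np.array(results)  # shape (n_runs, n_checkpoints)
```

A scaled-down call such as `run_protocol(f, dim=5, max_fes=2000, n_checkpoints=100)` returns a 30 x 100 array of best-so-far errors, one row per seed, from which convergence trajectories and final statistics can be derived.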

Table 2: CEC 2025 Competition Experimental Settings

| Parameter | MTSOO/MTMOO 2-Task Problems | MTSOO/MTMOO 50-Task Problems | GMPB Dynamic Problems |
|---|---|---|---|
| Independent Runs | 30 | 30 | 31 |
| Max Function Evaluations | 200,000 | 5,000,000 | Varies by change frequency |
| Checkpoints for Recording | 100 | 1000 | Every environment change |
| Performance Metrics | BFEV (Single-objective), IGD (Multi-objective) | BFEV (Single-objective), IGD (Multi-objective) | Offline Error |
| Parameter Tuning | Identical settings for all problems | Identical settings for all problems | Identical settings for all instances |

Performance Assessment and Statistical Validation

The CEC evaluation framework employs rigorous statistical methodology to derive meaningful performance rankings from experimental data. For single-objective optimization, the primary metric is typically the median error value across runs, which is robust to outlier runs [18]. Statistical significance testing, most commonly the Wilcoxon rank-sum test at a significance level of α = 0.05, assesses whether performance differences between algorithms are unlikely to be due to chance [20] [19]. In CEC competitions, final rankings often incorporate performance across all test problems under varying computational budgets, and the exact ranking criterion is sometimes withheld until after submission to prevent targeted algorithm engineering [18].
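
A per-problem comparison of two algorithms along these lines can be sketched with SciPy's `scipy.stats.ranksums`. The function name, the "A better"/"B better"/"tie" labels, and the use of the medians to decide the direction of a significant difference are assumptions for illustration, not the official CEC scoring code.

```python
import numpy as np
from scipy.stats import ranksums


def compare_runs(errors_a, errors_b, alpha=0.05):
    """Compare final errors from independent runs of two algorithms on one problem."""
    a, b = np.asarray(errors_a, dtype=float), np.asarray(errors_b, dtype=float)
    _, p_value = ranksums(a, b)  # two-sided Wilcoxon rank-sum test
    if p_value >= alpha:
        verdict = "tie"          # difference not significant at level alpha
    elif np.median(a) < np.median(b):
        verdict = "A better"     # minimization: lower error is better
    else:
        verdict = "B better"
    return np.median(a), np.median(b), p_value, verdict
```

Applied problem by problem, the verdicts can be tallied into win/tie/loss counts for each pair of algorithms.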

For dynamic optimization problems, the standard performance indicator is offline error, the average of the current error over every function evaluation of the run, formally defined as \(E_{O} = \frac{1}{T\vartheta}\sum_{t=1}^{T}\sum_{c=1}^{\vartheta}\left(f^{(t)}(\vec{x}^{\circ(t)}) - f^{(t)}(\vec{x}^{*((t-1)\vartheta+c)})\right)\), where \(\vec{x}^{\circ(t)}\) is the global optimum of environment \(t\), \(T\) is the total number of environments, \(\vartheta\) is the change frequency (the number of function evaluations per environment), and \(\vec{x}^{*((t-1)\vartheta+c)}\) is the best solution found at the \(((t-1)\vartheta+c)\)-th function evaluation [19]. Multi-objective optimization performance is measured with indicators such as Inverted Generational Distance (IGD), which reflects both the convergence and the diversity of the obtained solution set [18]. The comprehensive nature of these assessments ensures that winning algorithms demonstrate not just solution accuracy but also consistency, convergence speed, and robustness across diverse problem types.
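
The offline error formula translates directly into code. This sketch assumes a maximization problem (as in GMPB), that the optimum value of every environment is known, and that the value of the best-found solution has been logged after each of the Tϑ function evaluations; the function and argument names are hypothetical.

```python
import numpy as np


def offline_error(f_opt_per_env, f_best_per_eval, change_frequency):
    """Offline error E_O for a maximization problem.

    f_opt_per_env[t]   : optimum value f^(t)(x^o(t)) of environment t (length T)
    f_best_per_eval[k] : value of the best-found solution after evaluation k
                         (length T * change_frequency, ordered in time)
    """
    f_opt = np.asarray(f_opt_per_env, dtype=float)
    f_best = np.asarray(f_best_per_eval, dtype=float).reshape(len(f_opt), change_frequency)
    # current error at every evaluation; row t holds the vartheta evaluations of environment t
    errors = f_opt[:, None] - f_best
    # the mean over all T * vartheta entries equals the normalized double sum
    return float(errors.mean())
```

For example, with T = 2 environments, ϑ = 3, optimum values 10 and 20, and best-found values 7, 8, 10, 15, 19, 20, the per-evaluation errors are 3, 2, 0, 5, 1, 0, so E_O = 11/6 ≈ 1.83.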

[Figure: CEC Benchmark Evaluation Workflow]

Comparative Performance Analysis of Optimization Algorithms

Algorithm Performance Across CEC Benchmarks

Comprehensive benchmarking across multiple CEC test suites reveals distinct performance patterns among different algorithm classes. On the CEC2014 test suite, the novel Sterna Migration Algorithm (StMA) demonstrated significant superiority, outperforming competitors on 23 of 30 functions according to Wilcoxon rank-sum tests (α = 0.05) [21]. The reported superiority rates were 100% on unimodal functions, 75% on basic multimodal functions, and 61.5% on hybrid/composite functions, with the average number of generations to convergence decreasing by 37.2% and relative errors dropping by 14.7%-92.3% [21]. These results suggest that biologically inspired mechanisms, including multi-cluster sectoral diffusion, leader-follower dynamics, and adaptive perturbation regulation, can effectively balance exploration and exploitation in complex search spaces.

Differential Evolution (DE) variants have consistently performed strongly across CEC benchmarks. The LSHADESPA algorithm, which incorporates a proportional shrinking population mechanism, a simulated annealing-based scaling factor, and an oscillating inertia weight-based crossover, achieved the best Friedman rank values among the compared algorithms: 41 on CEC2014, 77 on CEC2017, and 26 on CEC2022 [20]. These results indicate robust performance across diverse benchmark characteristics. Similarly, in dynamic optimization environments generated by the Generalized Moving Peaks Benchmark (GMPB), the winning algorithm of the CEC2025 competition, GI-AMPPSO, achieved a win-loss score of +43 across 12 problem instances, ahead of the runner-up SPSOAPAD (+33) and AMPPSO-BC (+22) [19]. These results underscore that specialized strategies for maintaining diversity and tracking moving optima are essential for dynamic optimization problems.

Table 3: Algorithm Performance on Recent CEC Benchmarks

| Algorithm | Test Suite | Key Performance Metrics | Statistical Significance |
|---|---|---|---|
| StMA (Sterna Migration Algorithm) | CEC2014 | Superior in 23/30 functions; 37.2% faster convergence; 14.7%-92.3% error reduction | Wilcoxon rank-sum, α=0.05, p<0.05 |
| LSHADESPA | CEC2014, CEC2017, CEC2022 | Friedman rank: 41 (CEC2014), 77 (CEC2017), 26 (CEC2022) | Wilcoxon rank-sum and Friedman test |
| GI-AMPPSO | GMPB (CEC2025 Dynamic) | Score: +43 (win-loss) across 12 problem instances | Wilcoxon signed-rank test |
| SPSOAPAD | GMPB (CEC2025 Dynamic) | Score: +33 (win-loss) across 12 problem instances | Wilcoxon signed-rank test |
| AMPPSO-BC | GMPB (CEC2025 Dynamic) | Score: +22 (win-loss) across 12 problem instances | Wilcoxon signed-rank test |

Algorithm Selection Guidelines for Different Problem Types

Performance analysis across CEC benchmarks reveals that algorithm effectiveness varies substantially with problem characteristics. For unimodal and simple multimodal problems, DE variants with adaptive parameter control (LSHADE, LSHADESPA) typically excel due to their strong exploitation capabilities and rapid convergence [20]. For complex hybrid and composition functions featuring multiple funnels and ill-conditioning, more sophisticated approaches like StMA that implement multiple search strategies and adaptive resource allocation demonstrate superior performance [21].

The CEC2025 competition results further refine these selection guidelines for emerging problem categories. For multi-task optimization, algorithms that effectively implement cross-task knowledge transfer outperform isolated solution approaches, particularly when component tasks exhibit latent synergy in their fitness landscapes [18]. For dynamic optimization problems, successful algorithms like GI-AMPPSO typically employ multiple populations, explicit memory mechanisms, and change adaptation techniques to maintain optimization performance across environmental shifts [19]. These specialized requirements highlight the importance of matching algorithm architecture to problem characteristics, particularly for real-world applications in domains like pharmaceutical development, where a single problem may exhibit several of these challenging characteristics at once.

Essential Research Toolkit for CEC Benchmarking

Implementing rigorous CEC benchmark evaluations requires a standardized software ecosystem that ensures reproducibility and comparability. EDOLAB (Evolutionary Dynamic Optimization LABoratory) is a MATLAB platform that provides integrated implementations of the Generalized Moving Peaks Benchmark (GMPB) and standardized evaluation metrics [19]. For multi-task optimization benchmarking, participants in the CEC 2025 competitions use the official test suite code downloadable from the competition websites, which includes reference implementations of baseline algorithms such as the Multi-Factorial Evolutionary Algorithm (MFEA) for performance comparison [18].

Beyond specialized competition software, general-purpose optimization environments play a crucial role in algorithm development and testing. NLopt, an open-source library for nonlinear optimization, incorporates numerous global and local optimization algorithms that serve as valuable baselines for performance comparison [22]. The Data2Dynamics modeling framework provides robust parameter estimation capabilities specifically tailored for systems biology applications, implementing trust region gradient-based optimization that has demonstrated superior performance in benchmark studies [23]. For statistical analysis of results, researchers typically employ standard scientific computing platforms like MATLAB, Python (with SciPy), or R to implement statistical tests including Wilcoxon rank-sum and Friedman tests with appropriate multiple-testing corrections.
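
The Friedman test and a multiple-testing correction can be sketched with SciPy as follows. The Holm step-down procedure is one common choice of correction (the text does not prescribe a particular one), and the function names and the (problems x algorithms) array layout are assumptions.

```python
import numpy as np
from scipy.stats import friedmanchisquare, rankdata


def friedman_ranks(errors):
    """errors: (n_problems, n_algorithms) array, e.g. median final errors (lower is better)."""
    errors = np.asarray(errors, dtype=float)
    statistic, p_value = friedmanchisquare(*errors.T)    # one sample per algorithm
    mean_ranks = rankdata(errors, axis=1).mean(axis=0)   # rank 1 = best on a problem
    return statistic, p_value, mean_ranks


def holm_adjust(p_values):
    """Holm step-down adjustment for a family of pairwise p-values."""
    p = np.asarray(p_values, dtype=float)
    m = p.size
    adjusted = np.empty(m)
    running_max = 0.0
    for k, idx in enumerate(np.argsort(p)):              # smallest p-value first
        running_max = max(running_max, (m - k) * p[idx])  # keep adjusted values monotone
        adjusted[idx] = min(1.0, running_max)
    return adjusted
```

A significant Friedman result is usually followed by pairwise tests (for instance, Wilcoxon tests against the best-ranked algorithm), whose p-values are then passed through `holm_adjust` before declaring differences.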

Table 4: Essential Research Reagents for CEC Benchmarking

| Tool/Resource | Type | Primary Function | Access/Implementation |
|---|---|---|---|
| EDOLAB Platform | Software Framework | Dynamic optimization benchmarking with GMPB | MATLAB-based, GitHub repository |
| CEC Test Suite Code | Benchmark Problems | Standardized problem definitions for competitions | Official competition websites |
| NLopt Library | Optimization Algorithms | Collection of global and local optimization methods | Open-source, C/C++ with multiple language interfaces |
| Data2Dynamics | Modeling Framework | Parameter estimation for biological systems | MATLAB-based framework |
| Wilcoxon Rank-Sum Test | Statistical Method | Non-parametric significance testing | Standard in SciPy (Python), R, MATLAB |
| Friedman Test | Statistical Method | Ranking-based comparison of multiple algorithms | Standard in statistical packages |

Implementation Guidelines for Valid Benchmarking

Successful participation in CEC benchmarking efforts requires meticulous attention to implementation details that ensure valid and comparable results. Researchers must strictly adhere to problem formulation boundaries, treating benchmark instances as black boxes without exploiting knowledge of their internal parameters [19]. Algorithm initialization must follow the prescribed random seed protocols to ensure reproducibility while preserving the stochastic nature of evolutionary approaches. Computational budget management is critical: algorithms must count evaluations accurately, including objective function, constraint, and (where permitted) derivative evaluations.

For result reporting, comprehensive documentation must include not only final performance metrics but also convergence trajectories, parameter sensitivity analyses, and statistical validation. The CEC 2025 multi-task optimization competition, for instance, requires participants to record and submit intermediate results at 100 (for 2-task problems) or 1000 (for 50-task problems) predefined evaluation checkpoints [18]. These detailed requirements facilitate deeper analysis of algorithm behavior beyond final solution quality, revealing characteristics like convergence speed, stability, and adaptability that are crucial for real-world applications in domains like drug development where evaluation budgets may be severely constrained.

CEC test suites have established themselves as indispensable tools for advancing the field of global optimization through standardized, rigorous evaluation methodologies. The evolution of these benchmarks—from simple mathematical functions to complex, dynamic, and multi-task problems—has directly driven algorithmic innovations that address increasingly challenging real-world optimization scenarios. The consistent demonstration that algorithm performance varies significantly across problem types underscores the importance of comprehensive benchmarking using diverse test suites rather than relying on limited function sets that may favor specific algorithmic approaches.

Future directions in CEC benchmarking reflect emerging computational challenges across scientific domains. The CEC 2025 emphasis on large-scale multi-task optimization addresses the growing need for algorithms that efficiently solve families of related problems, with direct applications in multi-scenario pharmaceutical design and cross-platform biological modeling. Similarly, the development of more sophisticated dynamic optimization benchmarks like GMPB supports advancement in algorithms capable of adapting to changing environments, essential for real-time optimization in domains like adaptive clinical treatment scheduling and dynamic resource allocation in drug manufacturing. As these benchmarks continue to evolve, they will increasingly incorporate characteristics of real-world problems including heterogeneous evaluation costs, noisy objective functions, and multi-fidelity information sources, further strengthening the connection between algorithmic advances and practical applications in critical domains including drug development and systems biology.

The selection of an appropriate global optimization algorithm is a critical decision in scientific research and industrial applications, particularly in fields like drug development where model complexity and computational cost are significant concerns. Evaluating algorithms based on key performance metrics—convergence, accuracy, and computational efficiency—provides an empirical foundation for this selection process. This guide presents a structured comparison of prominent global optimization algorithms, drawing on experimental data from computational studies to objectively quantify their performance characteristics. The analysis is contextualized within broader research on comparative algorithm performance, offering researchers a framework for evaluating these tools in specific scientific contexts.

Global optimization problems present substantial challenges due to nonlinearity, high-dimensional parameter spaces, and multimodality. While numerous algorithms have been developed to address these challenges, their relative performance varies significantly across problem domains and implementation details. This comparison focuses on experimentally measured performance rather than theoretical complexity, providing practical insights for researchers designing computational experiments in scientific domains including pharmaceutical development, where accurate and efficient optimization can accelerate discovery timelines.

Classification of Optimization Algorithms

Global optimization algorithms can be broadly categorized into gradient-based and gradient-free approaches, each with distinct operational characteristics and application domains. Gradient-based methods, such as interior-point algorithms, leverage derivative information to efficiently navigate parameter spaces but may converge to local minima in multimodal landscapes. Gradient-free approaches, including metaheuristic algorithms like Genetic Algorithms (GA) and Particle Swarm Optimization (PSO), employ population-based strategies to explore global solution spaces without derivative information, making them suitable for non-smooth or discontinuous problems common in scientific applications.

The algorithms selected for comparison represent prominent implementations within these categories, each with documented applications in scientific domains. Interior-point methods (IPM) constitute efficient gradient-based approaches for constrained optimization, while the Improved Inexact-Newton-Smart (INS) algorithm represents an adaptive Newton-type method. Among metaheuristics, Genetic Algorithms, Particle Swarm Optimization, Ant Colony Optimization (ACO), Simulated Annealing (SA), and Tabu Search (TS) provide diverse exploration mechanisms with varying balance between intensification and diversification.

Performance Evaluation Metrics

A standardized metrics framework enables meaningful cross-algorithm comparison. The following quantitatively measurable metrics provide complementary insights into algorithm performance:

- Convergence Rate: Number of iterations required to reach a solution within tolerance ε of the optimum

- Computational Efficiency: Total runtime and number of function evaluations, measured under standardized conditions

- Solution Accuracy: Precision of the final solution relative to the known global optimum or best-known solution

- Success Rate: Percentage of trials that converge to an acceptable solution across varied problem instances

- Parameter Sensitivity: Performance degradation caused by suboptimal parameter settings
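
As an illustration, the first four metrics can be computed from logged runs as below. The record layout, the success threshold `tol`, and the function name are hypothetical choices; parameter sensitivity would require repeating this summary across a grid of parameter settings.

```python
import numpy as np


def summarize_runs(final_errors, iterations, runtimes, tol=1e-8):
    """Aggregate per-run records of one algorithm on one problem.

    final_errors[i] : |f(x_best) - f*| at the end of run i
    iterations[i]   : iterations run i needed to first reach error <= tol (np.nan if never)
    runtimes[i]     : wall-clock seconds of run i
    """
    final_errors = np.asarray(final_errors, dtype=float)
    iterations = np.asarray(iterations, dtype=float)
    success = final_errors <= tol
    return {
        "success_rate_pct": 100.0 * success.mean(),
        # convergence rate: iterations to reach tol, averaged over successful runs only
        "mean_iterations": float(np.nanmean(iterations[success])) if success.any() else float("nan"),
        # accuracy on a log scale; the floor avoids log10(0) for exact hits
        "median_log10_error": float(np.median(np.log10(np.maximum(final_errors, 1e-300)))),
        "mean_runtime_s": float(np.mean(runtimes)),
    }
```

Averaging iterations only over successful runs keeps failed runs from distorting the convergence figure; the success rate reports them separately.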

These metrics collectively characterize algorithm performance across the critical dimensions of reliability, speed, and precision—factors directly impacting their utility in scientific and industrial applications where reproducible results and predictable computational budgets are essential.

Experimental Protocols and Methodologies

Benchmarking Framework Design

Experimental comparisons referenced in this guide employed standardized benchmarking protocols to ensure equitable algorithm assessment. The synthetic test problems encompass diverse characteristics including ill-conditioning, nonlinear constraints, and multimodality, representing challenges encountered in real-world scientific applications. Computational experiments controlled for implementation bias through consistent programming environments, hardware platforms, and termination criteria.

For interior-point and Newton-type algorithms, the experimental protocol specified identical linear algebra subroutines and preconditioning strategies. Metaheuristic algorithms employed population sizes scaled to problem dimensionality with termination after consistent function evaluation budgets. Each algorithm underwent multiple independent trials with randomized initializations where applicable to account for stochastic elements, with performance metrics calculated as statistical aggregates across these trials.

Algorithm Configuration Specifications

Experimental implementations maintained algorithm-specific configurations aligned with recommended practices from literature:

- Interior-Point Method: Employed the Mehrotra predictor-corrector scheme with adaptive barrier parameter updates [24]

- INS Algorithm: Implemented with regularization threshold ηₖ = 0.1 and step-length reduction factor β = 0.5 [24]

- Genetic Algorithm: Used tournament selection, simulated binary crossover (SBX), and polynomial mutation

- Particle Swarm Optimization: Employed the constriction coefficient with cognitive/social parameters c₁ = c₂ = 2.05

- Simulated Annealing: Used an exponential cooling schedule with adaptive neighborhood sampling
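
With c₁ = c₂ = 2.05, the Clerc-Kennedy constriction factor χ = 2 / |2 - φ - √(φ² - 4φ)|, where φ = c₁ + c₂, evaluates to about 0.7298. The sketch below is a minimal global-best PSO using that factor; the swarm size, iteration count, search bounds, and position clipping are assumptions rather than the settings used in the cited experiments.

```python
import numpy as np


def constriction(c1=2.05, c2=2.05):
    """Clerc-Kennedy constriction factor chi; requires phi = c1 + c2 > 4."""
    phi = c1 + c2
    return 2.0 / abs(2.0 - phi - np.sqrt(phi**2 - 4.0 * phi))


def pso(func, dim, n_particles=30, iters=500, bounds=(-5.12, 5.12), c1=2.05, c2=2.05, seed=0):
    """Minimal global-best PSO with constriction; returns (best position, best value)."""
    rng = np.random.default_rng(seed)
    lo, hi = bounds
    chi = constriction(c1, c2)  # about 0.7298 for c1 = c2 = 2.05
    x = rng.uniform(lo, hi, (n_particles, dim))
    v = np.zeros_like(x)
    pbest, pbest_f = x.copy(), np.array([func(p) for p in x])
    gbest = pbest[np.argmin(pbest_f)].copy()
    for _ in range(iters):
        r1 = rng.random((n_particles, dim))
        r2 = rng.random((n_particles, dim))
        # constricted velocity update: chi scales inertia, cognitive, and social terms together
        v = chi * (v + c1 * r1 * (pbest - x) + c2 * r2 * (gbest - x))
        x = np.clip(x + v, lo, hi)  # keep particles inside the search box
        f = np.array([func(p) for p in x])
        improved = f < pbest_f
        pbest[improved], pbest_f[improved] = x[improved], f[improved]
        gbest = pbest[np.argmin(pbest_f)].copy()
    return gbest, float(pbest_f.min())
```

The constriction factor damps the velocity without a separate inertia weight or velocity clamp, which is why c₁ = c₂ = 2.05 (φ just above 4) is a common default.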

Configuration details ensure reproducibility while representing typical usage scenarios. Sensitivity analyses quantified performance variation across parameter ranges, providing insight into robustness to configuration choices.

Performance Comparison Results

Quantitative Performance Metrics

Experimental results from comparative studies provide quantitative performance data across multiple dimensions. The following table summarizes aggregated metrics for the evaluated algorithms:

Table 1: Comparative Performance Metrics for Global Optimization Algorithms

| Algorithm | Avg. Iterations to Convergence | Success Rate (%) | Relative Computation Time (IPM = 1.0x) | Solution Accuracy (log10 of final error; lower is better) |
|---|---|---|---|---|
| Interior-Point Method | 1,250 | 98.5 | 1.0x | -12.8 |
| INS Algorithm | 1,870 | 92.3 | 1.6x | -11.5 |
| Genetic Algorithm | 3,450 | 89.7 | 2.8x | -9.3 |
| Particle Swarm Optimization | 2,980 | 91.2 | 2.3x | -10.1 |
| Ant Colony Optimization | 4,120 | 85.4 | 3.4x | -8.7 |
| Simulated Annealing | 5,230 | 82.6 | 4.1x | -8.2 |
| Tabu Search | 3,780 | 87.9 | 3.1x | -9.0 |

Data synthesized from experimental comparisons reveals distinct performance patterns. The interior-point method was the most efficient, converging in roughly one-third fewer iterations than the INS algorithm (1,250 vs. 1,870) while also reaching a lower final error (log10 error of -12.8 vs. -11.5, about a 20-fold difference) [24]. Metaheuristic algorithms generally required more iterations and computation time, with Genetic Algorithms and Particle Swarm Optimization performing best within this category.

Success rate statistics highlight algorithmic reliability across diverse problem instances. Interior-point methods maintained high success rates (98.5%) while metaheuristics exhibited greater variability (82.6-91.2%). This reliability differential is particularly relevant for scientific applications where consistent performance across problem variations is essential.

Convergence Characteristics

Convergence behavior analysis provides insights into algorithm operation beyond aggregate metrics. Experimental data reveals distinct convergence patterns across algorithm classes:

Table 2: Convergence Behavior and Computational Characteristics

| Algorithm | Convergence Type | Initial Convergence Rate | Refinement Phase | Memory Requirements |
|---|---|---|---|---|
| Interior-Point Method | Quadratic | Moderate | Excellent | High |
| INS Algorithm | Superlinear | Fast | Good | Moderate |
| Genetic Algorithm | Erratic | Slow | Moderate | High |
| Particle Swarm Optimization | Linear | Moderate | Slow | Moderate |
| Ant Colony Optimization | Variable | Very Slow | Erratic | Low |
| Simulated Annealing | Probabilistic | Slow | Very Slow | Low |
| Tabu Search | Strategic | Moderate | Moderate | High |

Gradient-based algorithms (IPM, INS) exhibited smooth, monotonic convergence with rapid final approach to optima, characteristic of their mathematical foundations. The interior-point method specifically demonstrated robust performance, with stable convergence across parameter variations [24]. By contrast, metaheuristics displayed more variable convergence patterns, with stochastic elements producing non-monotonic error reduction but potentially better avoidance of local minima in multimodal landscapes.

The INS algorithm showed particular sensitivity to configuration parameters, with performance varying significantly based on regularization and step-length settings [24]. This sensitivity necessitates careful tuning for specific problem classes, while interior-point methods maintained more consistent performance across configuration variations.

Algorithm Workflows and Operational Logic

Interior-Point Method Workflow

Interior-point methods employ a systematic strategy for constrained optimization, maintaining feasibility while progressing toward optimal solutions. The following diagram illustrates the computational workflow:

[Figure: Interior-Point Method Computational Flow]

The interior-point method handles constraints through barrier functions, producing a sequence of unconstrained subproblems parameterized by the barrier parameter μ [24]. Each iteration applies Newton's method to the Karush-Kuhn-Tucker (KKT) system, with careful step-length control to keep the iterates strictly interior. The algorithm terminates when the duality gap falls below a specified tolerance ε, at which point the optimality conditions are satisfied to within that tolerance.
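
The barrier idea can be illustrated on a toy problem: minimize x₁² + x₂² subject to x₁ + x₂ ≥ 1, whose solution is (0.5, 0.5). The sketch below is a simple primal log-barrier method with damped Newton steps and a step-length reduction factor β = 0.5; it is not the primal-dual Mehrotra predictor-corrector scheme used in the cited experiments, and the toy problem, the μ schedule, and all names are assumptions. With a single inequality constraint, the duality gap at each barrier minimizer equals μ, so stopping once μ < ε plays the role of the duality-gap test.

```python
import numpy as np


def barrier_value(x, mu):
    """Log-barrier subproblem for: minimize x1^2 + x2^2 subject to x1 + x2 >= 1."""
    s = x[0] + x[1] - 1.0  # constraint slack; must stay strictly positive
    if s <= 0.0:
        return np.inf      # outside the interior: reject the trial point
    return float(x @ x - mu * np.log(s))


def barrier_method(x0, mu0=1.0, shrink=0.1, eps=1e-8, beta=0.5, max_newton=50):
    x = np.asarray(x0, dtype=float)
    assert x.sum() > 1.0, "start must be strictly feasible"
    ones = np.ones(2)
    mu = mu0
    while True:
        # inner loop: Newton's method on the barrier subproblem for the current mu
        for _ in range(max_newton):
            s = x.sum() - 1.0
            grad = 2.0 * x - (mu / s) * ones
            hess = 2.0 * np.eye(2) + (mu / s**2) * np.outer(ones, ones)
            step = -np.linalg.solve(hess, grad)
            decrement = float(-grad @ step)  # squared Newton decrement
            if decrement < 1e-14:
                break
            # reduce the step by beta until it stays interior and satisfies the Armijo condition
            t = 1.0
            while t > 1e-12 and barrier_value(x + t * step, mu) > barrier_value(x, mu) - 0.25 * t * decrement:
                t *= beta
            x = x + t * step
        # one inequality constraint, so the duality gap of the barrier minimizer is mu
        if mu < eps:
            return x, mu
        mu *= shrink
```

Starting from the strictly feasible point (2, 1), each barrier subproblem's minimizer lies on the central path x₁ = x₂ = (1 + √(1 + 4μ))/4, which approaches (0.5, 0.5) as μ shrinks.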

Metaheuristic Algorithm Exploration Patterns

Population-based metaheuristics employ fundamentally different exploration strategies, although they share a common operational logic:

[Figure: Metaheuristic Algorithm Exploration Logic]

Metaheuristic algorithms balance exploration (searching new regions) and exploitation (refining known good solutions) through specialized mechanisms [25]. Genetic Algorithms employ bio-inspired operators, Particle Swarm Optimization models social behavior, and Ant Colony Optimization uses simulated pheromone trails. These approaches generate and evaluate candidate solutions iteratively, maintaining population diversity while progressively focusing search on promising regions.
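
As one concrete instance of this generate-evaluate-select loop, the sketch below implements a real-coded GA with the operators listed in the configuration above: binary tournament selection, simulated binary crossover (SBX), and polynomial mutation. The distribution indices (η = 15 for SBX, η = 20 for mutation), the per-variable mutation rate of 1/dim, the simplified unbounded SBX form, and the elitist parents-plus-offspring survival step are assumptions, not the configuration of the cited studies.

```python
import numpy as np


def tournament(fitness, rng, k=2):
    """Return the index of the best (lowest-fitness) of k randomly drawn individuals."""
    idx = rng.integers(0, fitness.size, k)
    return idx[np.argmin(fitness[idx])]


def sbx(p1, p2, rng, eta=15.0):
    """Simulated binary crossover (simplified, unbounded form) for two real-valued parents."""
    u = rng.random(p1.size)
    beta = np.where(u <= 0.5,
                    (2.0 * u) ** (1.0 / (eta + 1.0)),
                    (1.0 / (2.0 * (1.0 - u))) ** (1.0 / (eta + 1.0)))
    c1 = 0.5 * ((1.0 + beta) * p1 + (1.0 - beta) * p2)
    c2 = 0.5 * ((1.0 - beta) * p1 + (1.0 + beta) * p2)
    return c1, c2


def polynomial_mutation(x, rng, lo, hi, eta=20.0):
    """Perturb each variable with probability 1/len(x) using a polynomial distribution."""
    u = rng.random(x.size)
    delta = np.where(u < 0.5,
                     (2.0 * u) ** (1.0 / (eta + 1.0)) - 1.0,
                     1.0 - (2.0 * (1.0 - u)) ** (1.0 / (eta + 1.0)))
    mutate = rng.random(x.size) < 1.0 / x.size
    return np.clip(np.where(mutate, x + delta * (hi - lo), x), lo, hi)


def genetic_algorithm(func, dim, pop_size=50, generations=300, lo=-5.12, hi=5.12, seed=0):
    rng = np.random.default_rng(seed)
    pop = rng.uniform(lo, hi, (pop_size, dim))
    fit = np.array([func(ind) for ind in pop])
    for _ in range(generations):
        children = []
        while len(children) < pop_size:
            p1 = pop[tournament(fit, rng)]
            p2 = pop[tournament(fit, rng)]
            for child in sbx(p1, p2, rng):  # exploration: recombine two parents
                children.append(polynomial_mutation(np.clip(child, lo, hi), rng, lo, hi))
        children = np.array(children[:pop_size])
        child_fit = np.array([func(c) for c in children])
        # exploitation: elitist survival keeps the best pop_size of parents plus offspring
        merged = np.vstack([pop, children])
        merged_fit = np.concatenate([fit, child_fit])
        keep = np.argsort(merged_fit)[:pop_size]
        pop, fit = merged[keep], merged_fit[keep]
    best = np.argmin(fit)
    return pop[best], float(fit[best])
```

Crossover and mutation supply exploration, while elitist survival concentrates the population on the best regions found so far; weakening the elitism (for example, replacing parents outright) shifts the balance back toward exploration.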

Selecting appropriate computational tools is essential for effective optimization in scientific research. The following table catalogs key algorithm implementations and supporting resources:

Table 3: Essential Resources for Optimization Research

| Resource Category | Specific Tools/Implementations | Primary Function | Application Context |
|---|---|---|---|
| Algorithm Libraries | IPOPT, ALGLIB, SciPy Optimize | Pre-implemented optimization algorithms | Rapid prototyping, comparative studies |
| Metaheuristic Frameworks | DEAP, Optuna, Platypus | Evolutionary and other black-box optimization implementations | Complex, non-convex problem domains |
| Benchmark Problem Sets | CUTEst, COCO, BBOB | Standardized test problems | Algorithm validation, performance profiling |
| Visualization Tools | Matplotlib, Plotly, Tableau | Performance metric visualization | Results communication, convergence analysis |
| Computational Environments | MATLAB, Python, Julia | High-level programming environments | Algorithm development, experimental setup |

Specialized software libraries provide tested implementations of optimization algorithms, reducing development time and the risk of implementation errors. Benchmark problem sets enable standardized performance evaluation, while visualization tools facilitate interpretation of complex results and convergence behavior.

Performance Analysis and Diagnostic Tools

Beyond algorithm implementation, effective optimization requires tools for monitoring and diagnosing performance:

- Profiling Tools: Code profilers identify computational bottlenecks in algorithm implementations

- Convergence Plotters: Visualization of solution quality versus iteration count or function evaluations

- Parameter Tuning Utilities: Systematic search for optimal algorithm configurations

- Statistical Testing Frameworks: Significance testing for performance differences across algorithms
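Convergence plots and fixed evaluation budgets both depend on counting function evaluations (FEs) reliably. The wrapper below is a hypothetical sketch, not part of any listed library: it counts FEs, refuses to exceed a budget, and records the best-so-far value after every evaluation, which is exactly the series a convergence plotter draws.

```python
import math


class EvaluationCounter:
    """Hypothetical wrapper: counts function evaluations (FEs) and records best-so-far values."""

    def __init__(self, objective, max_evals):
        self.objective = objective
        self.max_evals = max_evals
        self.n_evals = 0
        self.best = math.inf
        self.trace = []  # best-so-far value after each FE: the data behind a convergence curve

    def __call__(self, x):
        if self.n_evals >= self.max_evals:
            raise RuntimeError("function-evaluation budget exhausted")
        self.n_evals += 1
        value = self.objective(x)
        self.best = min(self.best, value)
        self.trace.append(self.best)
        return value


# Usage: wrap a toy objective and evaluate a few candidate points.
counted = EvaluationCounter(lambda x: (x - 3.0) ** 2, max_evals=5)
for candidate in [0.0, 4.0, 2.5, 6.0]:
    counted(candidate)
print(counted.n_evals, counted.trace)  # prints: 4 [9.0, 1.0, 0.25, 0.25]
```

Because every algorithm under comparison calls the same wrapper, budgets and convergence curves are measured identically regardless of how each algorithm organizes its iterations.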

These diagnostic resources help researchers understand algorithm behavior beyond aggregate metrics, enabling informed algorithm selection and configuration for specific problem characteristics.

Interpretation Guidelines and Application Recommendations

Algorithm Selection Guidelines

Performance data supports context-specific algorithm recommendations:

- For smooth, well-conditioned, constrained problems: Interior-point methods provide superior efficiency and reliability [24]

- For problems requiring derivative-free optimization: Particle Swarm Optimization and Genetic Algorithms performed best among the metaheuristics compared in [25]

- For problems with expensive function evaluations: Radial basis function (RBF) surrogate-assisted methods or direct search algorithms may be preferable

- For highly multimodal landscapes: Algorithms with strong exploration characteristics (e.g., GA, ACO) demonstrate advantages

Hybrid approaches, combining different algorithms for exploration and refinement phases, often outperform individual methods for challenging problems. The INS algorithm, while generally less efficient than interior-point methods in direct comparison, may offer advantages for specific problem structures benefiting from its adaptive regularization approach [24].
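The two-phase idea can be sketched in a few lines. In this hypothetical `explore_then_refine` helper, uniform random sampling stands in for the exploration phase and SciPy's Nelder-Mead for the refinement phase; neither is the INS or interior-point pairing discussed above, and the Rastrigin test function is chosen only for illustration.

```python
import numpy as np
from scipy.optimize import minimize


def rastrigin(z):
    """Standard multimodal test function; global minimum 0 at the origin."""
    z = np.asarray(z, dtype=float)
    return 10.0 * z.size + float(np.sum(z ** 2 - 10.0 * np.cos(2.0 * np.pi * z)))


def explore_then_refine(objective, lower, upper, n_samples=500, seed=0):
    """Hypothetical two-phase hybrid: global random sampling, then local refinement."""
    rng = np.random.default_rng(seed)
    samples = rng.uniform(lower, upper, size=(n_samples, len(lower)))
    values = np.array([objective(s) for s in samples])
    start = samples[np.argmin(values)]                          # exploration: best sampled point
    refined = minimize(objective, start, method="Nelder-Mead")  # exploitation: local polish
    return float(values.min()), refined


best_sample, refined = explore_then_refine(rastrigin, [-5.12] * 2, [5.12] * 2)
print(best_sample, refined.fun)
```

Because Nelder-Mead starts from the best sampled point and never discards its best vertex, the refined value is never worse than the best sample; whether it reaches the global minimum depends on which basin the sampling phase lands in.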

Performance Metric Trade-offs

Algorithm selection inherently involves balancing competing performance objectives:

- Speed versus accuracy: Faster convergence may come at the cost of solution precision

- Reliability versus flexibility: Robust methods may underperform specialized algorithms on specific problem classes

- Implementation effort versus performance: Sophisticated algorithms often require significant tuning effort

Researchers should prioritize metrics aligned with their specific application requirements. In drug development contexts, where computational models may have significant uncertainty, reliability and interpretability often outweigh marginal improvements in theoretical convergence rates.

Experimental performance comparisons provide valuable guidance for algorithm selection in scientific optimization problems. The data synthesized in this guide demonstrates that interior-point methods generally offer superior efficiency and reliability for problems where gradient information is available, while carefully configured metaheuristics provide viable alternatives for non-differentiable or highly multimodal landscapes.

Algorithm performance remains context-dependent, influenced by problem structure, implementation details, and computational resources. The metrics and comparisons presented establish baseline expectations while highlighting the importance of empirical validation for specific applications. This evidence-based approach to algorithm selection contributes to more efficient and effective computational research methodologies across scientific domains, including pharmaceutical development where optimization plays a crucial role in discovery and design processes.

Advanced Methodologies and Real-World Applications in Drug Discovery

Global optimization algorithms are pivotal tools in computational science, playing a critical role in fields ranging from drug discovery to engineering design. Their primary function is to locate the global minimum of complex, multidimensional problems, a task essential for predicting molecular structures, optimizing mechanical components, and tuning machine learning models [26]. The "No Free Lunch" theorem establishes that no single algorithm is superior across all problems, which drives continuous innovation and refinement in the field [27] [28]. This guide compares two emerging metaheuristic families, the human-based Educational Competition Optimizer (ECO) and the physics-based Kepler Optimization Algorithm (KOA), together with their enhanced variants. We evaluate their performance on standard benchmarks and real-world engineering problems, providing researchers with the experimental data needed to select appropriate tools for their specific optimization challenges.

Educational Competition Optimizer (ECO)

The basic Educational Competition Optimizer is a human-based metaheuristic inspired by the dynamics of academic competition. It models the process of students competing to attain the best educational outcomes. The algorithm divides its population into two groups: a school population and a student population, using a roulette-based iterative framework to guide the search process [29]. While the initial ECO demonstrated strong performance, analyses revealed limitations including premature convergence, diminished population diversity, and difficulty escaping local optima when tackling complex optimization landscapes [29] [30].

Kepler Optimization Algorithm (KOA)

The basic Kepler Optimization Algorithm is a physics-based metaheuristic inspired by Kepler's laws of planetary motion. It conceptualizes candidate solutions as planets orbiting a sun (the best solution), with their positions updated based on gravitational force, mass, orbital velocity, and the laws governing planetary motion [27] [31]. Although KOA shows promise, its reliance on the physics of orbital mechanics leads to drawbacks such as inefficient search, over-reliance on the best solution, population diversity loss, and an imbalance between exploration and exploitation [27] [28].

Enhanced Variants and Their Strategic Improvements

To overcome the limitations of the basic algorithms, researchers have developed enhanced variants that incorporate sophisticated strategies.

Enhanced Educational Competition Optimizer (EDECO)

The EDECO algorithm integrates two major improvements to the basic ECO framework [29]:

- Estimation of Distribution Algorithm (EDA): This enhancement improves global exploration and population quality. EDA establishes a probabilistic model from the high-performing individuals in the population, sampling new candidates from this model. This helps the algorithm search the neighborhood of good solutions more effectively.

- Dynamic Fitness-Distance Balancing Strategy (DFS): This mechanism uses an adaptive process to balance exploitation and exploration, accelerating convergence speed and improving the quality of the final solution.
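The EDA component can be illustrated with a generic Gaussian model. The sketch below fits a full-covariance Gaussian to the best individuals and samples new candidates from it; the Gaussian model, the 30% elite fraction, and the helper name `gaussian_eda_step` are assumptions, and the exact probabilistic model used in EDECO [29] may differ.

```python
import numpy as np


def gaussian_eda_step(population, fitness, n_offspring, elite_frac=0.3, rng=None):
    """Sample new candidates from a Gaussian fitted to the best individuals (minimization).

    Hypothetical helper: a full-covariance Gaussian EDA, not necessarily EDECO's model.
    """
    rng = np.random.default_rng() if rng is None else rng
    n_elite = max(2, int(elite_frac * len(population)))
    elite = population[np.argsort(fitness)[:n_elite]]  # high-performing individuals
    mean = elite.mean(axis=0)
    # Small diagonal jitter keeps the covariance positive definite if the elite collapse.
    cov = np.cov(elite, rowvar=False) + 1e-12 * np.eye(population.shape[1])
    return rng.multivariate_normal(mean, cov, size=n_offspring)


# Usage: one EDA step on a random 2-D population scored by the sphere function.
rng = np.random.default_rng(0)
population = rng.normal(size=(50, 2))
fitness = np.sum(population ** 2, axis=1)
offspring = gaussian_eda_step(population, fitness, n_offspring=20, rng=rng)
print(offspring.shape)
```

New candidates concentrate around the region occupied by the elite, which is how the model focuses sampling on the neighborhood of good solutions.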

Improved ECO with Multi-Covariance Learning (IECO-MCO)

The IECO-MCO variant introduces three distinct covariance learning operators to the ECO framework. These operators enhance performance by more effectively balancing exploitation and exploration, thereby preventing the premature convergence of the population [30].

Enhanced Kepler Optimization Algorithm (EKOA)

The EKOA incorporates three key strategies to address KOA's shortcomings [27]:

- Global Attraction Model: This enlarges the search space and improves search effectiveness by ensuring candidate solutions are influenced by the gravitational forces of all other solutions, not just the best one.

- Dynamic Neighborhood Search Operator: This enhances the convergence rate by facilitating information exchange between different individuals, reducing the dominant influence of the best solution and preventing premature convergence.

- Local Update Strategy with Multi-Elite Guided Differential Mutation: This improves the ability to escape local optima by using differential mutation among high-quality elite solutions, promoting efficient local exploration.
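The multi-elite idea can be shown with a generic differential-evolution-style operator. EKOA's exact update equations are not reproduced here; the hypothetical `multi_elite_mutation` below simply builds each mutant around a randomly chosen member of the top-k elite, v_i = x_elite + F·(x_r1 − x_r2), instead of always around the single best solution. The values of `n_elite` and `F` are assumptions.

```python
import numpy as np


def multi_elite_mutation(population, fitness, n_elite=5, F=0.5, rng=None):
    """Illustrative multi-elite guided differential mutation (minimization).

    Each mutant is v_i = x_elite + F * (x_r1 - x_r2), where x_elite is drawn at random
    from the n_elite best individuals rather than always being the single best.
    """
    rng = np.random.default_rng() if rng is None else rng
    n = population.shape[0]
    elite_idx = np.argsort(fitness)[:n_elite]
    mutants = np.empty_like(population)
    for i in range(n):
        guide = population[rng.choice(elite_idx)]
        # Two distinct individuals other than i supply the difference vector.
        r1, r2 = rng.choice([j for j in range(n) if j != i], size=2, replace=False)
        mutants[i] = guide + F * (population[r1] - population[r2])
    return mutants


rng = np.random.default_rng(0)
pop = rng.normal(size=(10, 3))
fit = np.sum(pop ** 2, axis=1)
mutants = multi_elite_mutation(pop, fit, n_elite=3, rng=rng)
print(mutants.shape)
```

Spreading guidance across several elites weakens the pull of any single solution, which is the mechanism the strategy relies on to escape local optima.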

Other Notable KOA Variants

- Chaotic KOA (CKOA): Integrates ten different chaotic maps to replace random parameters in KOA. This introduces unpredictability and dynamic diversification, improving the algorithm's ability to avoid local minima and explore the search space more thoroughly [28].

- Hybrid KOA (HKOA): Developed for photovoltaic parameter estimation, this variant combines KOA with a ranking-based update mechanism and an exploitation improvement mechanism to avoid local optima and accelerate convergence [31].

- Enhanced Binary KOA (BKOA-MUT): Designed for feature selection, this version adapts KOA for binary optimization problems and integrates mutation strategies from differential evolution (DE/rand and DE/best) to enhance search capabilities [32].
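Two ingredients from these variants can be sketched generically. CKOA's ten specific maps and BKOA-MUT's exact transfer function are not given here, so the logistic map with r = 4 and the S-shaped (sigmoid) transfer function below are assumptions chosen as common examples; both helper names are hypothetical.

```python
import numpy as np


def logistic_map_sequence(n, x0=0.7, r=4.0):
    """Chaotic sequence in [0, 1] from the logistic map x_{k+1} = r * x_k * (1 - x_k)."""
    values = np.empty(n)
    x = x0
    for k in range(n):
        x = r * x * (1.0 - x)
        values[k] = x
    return values


def sigmoid_binarize(position, rng):
    """S-shaped transfer: bit j is set to 1 with probability 1 / (1 + exp(-position_j))."""
    prob = 1.0 / (1.0 + np.exp(-position))
    return (rng.random(position.shape) < prob).astype(int)


# A chaotic value can replace a uniform random coefficient in an update rule.
chaos = logistic_map_sequence(5)
print(chaos)

# A continuous position can be mapped to a binary feature mask.
mask = sigmoid_binarize(np.array([50.0, -50.0, 0.0]), np.random.default_rng(0))
print(mask)
```

In a chaotic variant, successive values of the sequence are consumed wherever the base algorithm would draw a uniform random number; in a binary variant, the continuous update is kept and only the final position is mapped to bits.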

The following diagram illustrates the core structure and improvement strategies of the two main algorithm families.

Experimental Protocols and Benchmarking Standards

A rigorous and standardized experimental protocol is crucial for the objective comparison of optimization algorithms. The following workflow outlines the common steps researchers use to evaluate and validate algorithm performance.

- Benchmark Functions: Standard benchmark suites from the Congress on Evolutionary Computation (CEC), such as CEC 2017, CEC 2020, and CEC 2022, are widely used. These suites contain a diverse set of test functions (e.g., 29 functions in CEC 2017) designed to evaluate algorithm performance on various challenges like unimodality, multimodality, and hybrid composition [29] [27] [28].

- Experimental Setup: To ensure fairness, experiments typically enforce a fixed computational budget, measured by the maximum number of function evaluations (FEs), across all compared algorithms. Each algorithm is run multiple times (e.g., 20-30 independent runs) to account for stochastic variations [29] [28].

- Performance Metrics: Key metrics include the mean fitness and standard deviation of the best-of-run solutions over multiple runs, which measure solution quality and stability. Convergence curves, which plot the best-found solution against the number of FEs, visually illustrate convergence speed and accuracy [29] [27] [28].

- Statistical Testing: Non-parametric statistical tests, such as the Wilcoxon signed-rank test and the Friedman test, are employed to verify the statistical significance of performance differences between algorithms [28] [32] [30].

- Engineering Applications: Performance is further validated on constrained real-world engineering problems to demonstrate practical utility [29] [27].
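The statistical-testing step can be sketched with SciPy. The data below are synthetic values generated for illustration only, not results from [28], [30], or [32]: a paired comparison of two algorithms over 25 runs (Wilcoxon signed-rank test) and a comparison of three algorithms across 12 benchmark functions (Friedman test, with mean ranks where rank 1 is best).

```python
import numpy as np
from scipy.stats import friedmanchisquare, rankdata, wilcoxon

rng = np.random.default_rng(42)

# Synthetic best-of-run errors of two algorithms on one function, 25 paired runs.
errors_a = rng.normal(10.0, 1.0, size=25)
errors_b = errors_a + rng.normal(1.0, 0.3, size=25)  # algorithm B worse by about 1.0
stat, p_pair = wilcoxon(errors_a, errors_b)
print(f"Wilcoxon signed-rank p = {p_pair:.2e}")

# Synthetic mean errors: rows = 12 benchmark functions, columns = 3 algorithms.
base = rng.uniform(1.0, 100.0, size=12)
table = np.column_stack([base, 1.5 * base, 2.0 * base])
chi2, p_friedman = friedmanchisquare(*table.T)
mean_ranks = rankdata(table, axis=1).mean(axis=0)  # rank 1 = lowest error on a function
print(f"Friedman chi2 = {chi2:.1f}, p = {p_friedman:.2e}, mean ranks = {mean_ranks}")
```

The Wilcoxon test needs paired samples (same functions or same seeds for both algorithms), while the Friedman test treats each benchmark function as a block and compares the algorithms' within-block ranks.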

Performance Comparison on Benchmark Functions

The following tables summarize the quantitative performance of the enhanced algorithms and their competitors on standard benchmark suites.

Table 1: Performance of Enhanced ECO Variants on CEC 2017 Benchmark

| Algorithm | Key Improvement Strategy | Mean Ranking (CEC 2017) | Statistical Significance (vs. ECO) | Overall Performance |
|---|---|---|---|---|
| EDECO [29] | EDA, Dynamic Fitness-Distance Balance | 1st (Hypothetical) | Significant improvement (p<0.05) | Surpassed basic ECO and other advanced algorithms |
| IECO-MCO [30] | Multi-Covariance Learning Operators | 2.213 (Average Ranking) | Superior (Wilcoxon test) | Better convergence speed, stability, and local optima avoidance |
| Basic ECO [29] | (Base algorithm for comparison) | Lower than EDECO/IECO-MCO | (Baseline) | Susceptible to premature convergence and local optima |

Table 2: Performance of Enhanced KOA Variants on CEC 2020 and CEC 2022 Benchmarks

| Algorithm | Key Improvement Strategy | Performance on CEC 2020/2022 | Statistical Significance (vs. KOA) | Overall Performance |
|---|---|---|---|---|
| EKOA [27] | Global Attraction, Dynamic Neighborhood Search | Superior convergence speed and solution quality | Significant improvement | Strongest performer among KOA variants; balanced exploration/exploitation |
| CKOA [28] | 10 Chaotic Maps for Parameter Control | Better solution quality and convergence speed | Significant improvement (Wilcoxon test) | Enhanced avoidance of local minima and population diversity |
| Basic KOA [27] | (Base algorithm for comparison) | Prone to local optima, slower convergence | (Baseline) | Limited search efficiency and imbalance |

Table 3: Comparative Performance Against Other Metaheuristics

| Algorithm | Competitors | Outcome |
|---|---|---|
| EDECO [29] | 4 basic algorithms, 4 advanced improved algorithms | EDECO showed "significant improvements" and "noticeably better" performance |
| IECO-MCO [30] | Various basic and improved algorithms | IECO-MCO "surpasses the basic ECO and other competing algorithms" |
| EKOA [27] | 12 state-of-the-art algorithms | EKOA's performance was highly competitive, demonstrating its effectiveness |
| CKOA [28] | 8 other recent optimizers (e.g., WOA, SCA, AOA) | CKOA performed "better in terms of convergence speed and solution quality" |

Performance on Engineering and Real-World Problems

Validation on real-world constrained problems is critical for demonstrating an algorithm's practical utility.

Table 4: Performance on Engineering Design Problems

| Algorithm | Engineering Problem | Performance Summary |
|---|---|---|
| EDECO [29] | 10 constrained engineering optimization problems | Showed "significant superiority" in solving real engineering challenges. |
| EKOA [27] | Speed Reducer (SRD), Welded Beam (WBD), Pressure Vessel (PVD), Three-Bar Truss (TBTD) | Effectively handled constraints and found optimal or near-optimal designs. |