Basin-Hopping Algorithm: A Complete Guide to Mapping Potential Energy Surfaces for Drug Discovery



Abstract

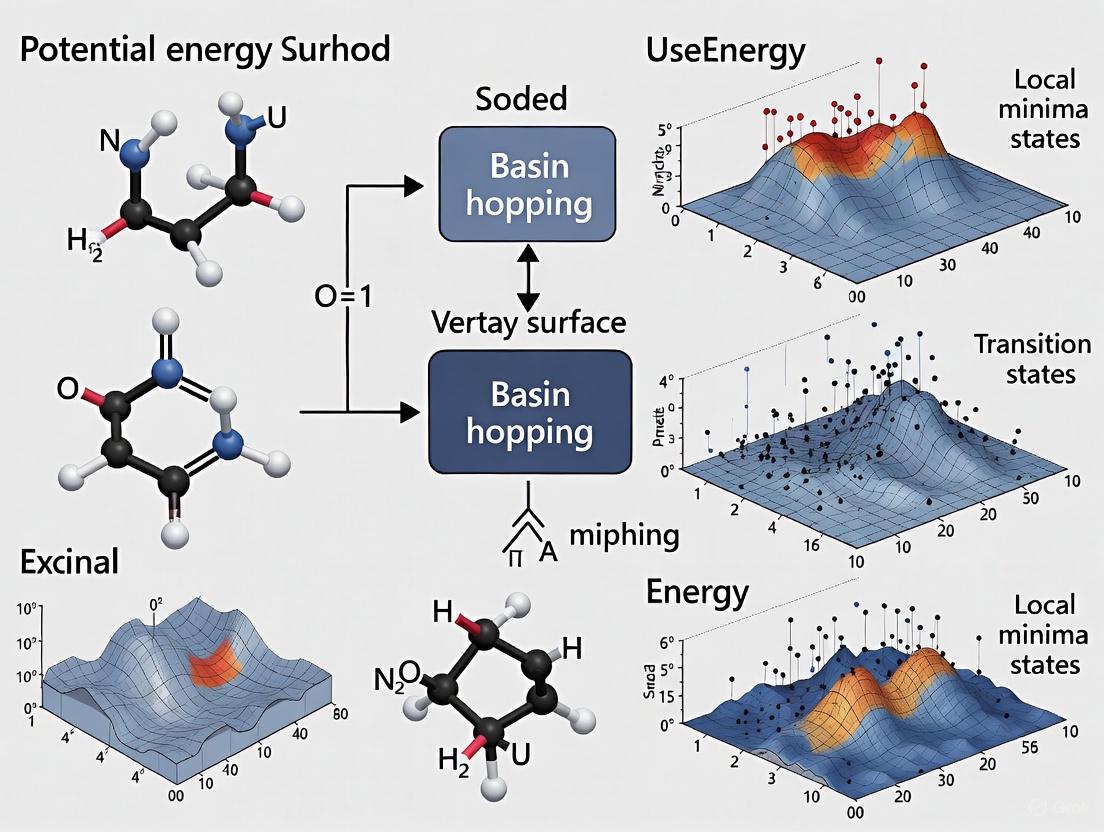

This comprehensive guide explores the basin-hopping algorithm for mapping complex potential energy surfaces (PES), with direct applications in computational drug discovery and materials science. Covering foundational principles to advanced implementations, we detail how this global optimization technique efficiently navigates rugged energy landscapes to identify low-energy molecular configurations. The article examines adaptive and parallel computing approaches that accelerate convergence, validates performance against established metaheuristics, and demonstrates practical applications in pharmaceutical research including conformational analysis of drug molecules like Resveratrol. Essential reading for researchers seeking robust PES exploration methods for predicting drug-target interactions and reaction mechanisms.

Understanding Potential Energy Surfaces and the Basin-Hopping Algorithm

What are Potential Energy Surfaces and Why They Matter in Drug Design

PES & Basin-Hopping FAQs for Computational Researchers

What is a Potential Energy Surface (PES) and why is it fundamental to my research?

A Potential Energy Surface (PES) is a multi-dimensional landscape that describes the energy of a molecular system as a function of the positions of its atoms [1] [2]. Imagine a geographical map where the longitude and latitude represent different molecular structures, and the altitude represents the system's energy. Each point on this surface corresponds to a specific atomic arrangement and its associated energy [3]. This is foundational because it allows researchers to explore the stability of molecular structures, predict reaction pathways, and understand the mechanisms of chemical reactions, all of which are crucial for rational drug design [2].

How does the Born-Oppenheimer approximation make PES computation feasible?

The Born-Oppenheimer approximation is a key simplification that separates the motion of electrons from the much heavier, slower-moving nuclei [4]. This allows for the treatment of nuclear positions as fixed parameters when calculating the electronic energy for a given molecular geometry. Consequently, the total energy of the molecule can be computed for an infinite variety of nuclear arrangements, creating the smooth "surface" we study [4]. Without this approximation, the coupled quantum mechanical equations for electrons and nuclei would be prohibitively difficult to solve for all but the smallest systems.

What are "stationary points," and why do I keep looking for them?

Stationary points are specific geometries on the PES where the energy gradient (the first derivative of energy with respect to nuclear position) is zero [1] [4]. They are critical for understanding molecular behavior:

- Minima correspond to stable molecular structures, such as the lowest-energy shape of a drug molecule or a protein-ligand complex. A PES can have multiple local minima, representing different stable conformers or isomers [4].

- Saddle Points (or Transition States) are the highest-energy points along the lowest-energy pathway connecting two minima. They represent the energy barrier for a molecular transformation, such as a bond rotation or a chemical reaction, and directly determine the reaction rate [1] [4]. Identifying these points allows you to map out the possible conformational changes and reactivity of a drug candidate.
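The distinction between minima and saddle points can be checked numerically from the gradient and the Hessian (the matrix of second derivatives). The sketch below is illustrative only: it assumes a hypothetical two-dimensional model surface, E(x, y) = (x² − 1)² + y², which has two minima at (±1, 0) separated by a first-order saddle at (0, 0). The function names and tolerance are choices made for this example, not part of any cited method.

```python
import numpy as np

def energy(p):
    """Hypothetical 2D model surface: double well along x, harmonic along y."""
    x, y = p
    return (x**2 - 1.0)**2 + y**2

def gradient(p):
    """Analytic first derivatives of the model surface."""
    x, y = p
    return np.array([4.0 * x * (x**2 - 1.0), 2.0 * y])

def hessian(p):
    """Analytic second derivatives of the model surface."""
    x, _ = p
    return np.array([[12.0 * x**2 - 4.0, 0.0],
                     [0.0, 2.0]])

def classify(p, tol=1e-8):
    """Label a point using the gradient norm and the signs of the Hessian eigenvalues."""
    if np.linalg.norm(gradient(p)) > tol:
        return "not stationary"
    eigenvalues = np.linalg.eigvalsh(hessian(p))
    n_negative = int(np.sum(eigenvalues < -tol))
    if n_negative == 0:
        return "minimum"
    if n_negative == 1:
        return "first-order saddle (transition state)"
    return "higher-order saddle or maximum"

for point in [(-1.0, 0.0), (0.0, 0.0), (1.0, 0.0), (0.5, 0.0)]:
    print(point, classify(np.array(point)), energy(point))
```

For a real molecule the Hessian would come from the quantum chemistry or force field package, and the zero eigenvalues associated with overall translation and rotation would need to be set aside before counting negative ones.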

My basin-hopping simulations are slow to converge. What could be the issue?

Basin Hopping is a metaheuristic global optimization algorithm that "walks" over the PES by iteratively taking random steps and then performing local minimizations [5]. Slow convergence can stem from several factors:

- Poor Step Size: The magnitude of the random "step" is critical. A step that is too small will keep the simulation trapped in a local region of the PES, while a step that is too large will prevent it from efficiently finding deeper minima.

- Insufficient Sampling: The algorithm may require a large number of iterations to adequately explore a complex, high-dimensional PES, especially for flexible drug-like molecules. Increasing the number of independent basin-hopping runs can help.

- Expensive Local Minimizations: Each step involves a local energy minimization, which can be computationally costly. Using efficient quantum chemical methods (e.g., semi-empirical methods like GFN2-xTB for pre-optimization) can speed this up [4].

How can I distinguish a true global minimum from a low-lying local minimum?

This is a central challenge in PES exploration. No algorithm can provide a 100% guarantee for a complex system, but you can improve confidence by:

- Multiple Runs: Execute many independent basin-hopping simulations from different, randomly generated starting geometries. The lowest energy structure found consistently across multiple runs is a strong candidate for the global minimum [5].

- Population-Based Methods: Use variants of basin-hopping that maintain a population of candidate solutions (e.g., BHPOP) [5]. These methods explore multiple minima simultaneously and are often more robust.

- Comparing Algorithms: Cross-validate your results with a different global optimization metaheuristic, such as Covariance Matrix Adaptation Evolution Strategy (CMA-ES) or Differential Evolution (DE) [5].

What is the connection between PES, basin-hopping, and drug design?

- PES provides the fundamental map of a molecule's stability and reactivity.

- Basin-Hopping is an efficient computational method for exploring this map to find the most stable structures (global minima) and important low-energy alternatives [5]. In drug design, this is applied to:

- Identifying the most stable 3D shape (conformation) of a drug candidate, which dictates its ability to bind to a biological target.

- Predicting protein-ligand binding poses by exploring the PES of the complex.

- Understanding drug metabolism by mapping the PES for potential chemical reactions.

Table 1: Key Computational Tools for PES Mapping in Drug Discovery

| Item/Resource | Function in PES Research |
|---|---|
| Quantum Chemistry Software (e.g., Gaussian, ORCA, Q-Chem) | Performs the core quantum mechanical calculations to compute the energy and gradient for a given molecular geometry [6]. |
| Force Field Packages (e.g., AMOEBA in TINKER, Amber) | Provides a cheaper, classical approximation of the PES for larger systems or longer simulations. Advanced polarizable force fields like AMOEBA offer improved accuracy over fixed-charge models [6]. |
| Global Optimization Algorithms (e.g., Basin-Hopping, CMA-ES, DE) | Navigates the PES to locate global minima and other important stationary points without being trapped in local minima [5]. |
| Visualization Software (e.g., PyMOL, VMD) | Helps researchers visualize optimized molecular structures, conformational changes, and docking poses derived from PES exploration. |
| The xtb Software (GFN2-xTB) | A semi-empirical quantum mechanical method that provides a good balance of speed and accuracy, excellent for initial geometrical optimizations and screening before more expensive calculations [4]. |

Experimental Protocols for PES Mapping

Standard Workflow for a Geometrical Optimization

A geometrical optimization finds a local minimum on the PES, which is a subroutine in basin-hopping [4].

- Initial Structure: Start with a reasonable 3D molecular structure, either drawn in a molecular builder or obtained from a database.

- Software & Method Selection: Choose a computational chemistry package (e.g., TINKER, Amber, Gaussian) and an appropriate level of theory (e.g., a force field for speed, a density functional theory (DFT) method for accuracy).

- Calculation Setup: Specify the calculation type as "Geometrical Optimization." The software will then iteratively:

- Calculate the energy and the energy gradient (first derivative) at the current geometry.

- Use the gradient to determine a direction that leads downhill in energy.

- Displace the atoms slightly in that direction to a new geometry with lower energy.

- Repeat until the gradient is near zero, indicating a stationary point has been found [4].

- Analysis: The output provides the optimized 3D structure and its energy. The success of the optimization can be verified by checking that the gradient norm converges to zero.
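The workflow above can be sketched with a toy system. The example below is a minimal illustration, not a production setup: it assumes a three-atom Lennard-Jones cluster in reduced units as a stand-in for a real level of theory, uses SciPy's `minimize` with the BFGS method and finite-difference gradients, and starts from an arbitrary distorted triangle. The starting coordinates and the `gtol` value are assumptions made for this example.

```python
import numpy as np
from scipy.optimize import minimize

def lj_energy(flat_coords):
    """Lennard-Jones energy (reduced units) of a cluster given as a flat coordinate array."""
    xyz = flat_coords.reshape(-1, 3)
    i, j = np.triu_indices(len(xyz), k=1)          # all unique atom pairs
    r = np.linalg.norm(xyz[i] - xyz[j], axis=1)    # pair distances
    return float(np.sum(4.0 * (r**-12 - r**-6)))

# Initial structure: an arbitrary, slightly distorted triangle.
start = np.array([[0.0, 0.0, 0.0],
                  [1.3, 0.0, 0.0],
                  [0.4, 1.2, 0.1]])

# Iteratively follow the gradient downhill until it is close to zero.
result = minimize(lj_energy, start.ravel(), method="BFGS", options={"gtol": 1e-5})

# Analysis: optimized energy and the norm of the final (numerical) gradient.
grad_norm = np.linalg.norm(result.jac)
print("energy:", result.fun, "gradient norm:", grad_norm)
```

For this cluster the optimizer should settle on the equilateral triangle, whose energy in reduced units is -3 (three pairs, each at the pair-potential minimum). A small gradient norm confirms a stationary point; it does not by itself rule out a saddle point.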

Protocol for a Basin-Hopping Simulation

This protocol is adapted from global optimization studies in chemical physics [5].

- Initialization: Generate a random initial molecular geometry, x.

- Local Minimization: Perform a local energy minimization starting from x to find the corresponding local minimum, y.

- Perturbation (Hop): Apply a random perturbation to the coordinates of y (e.g., an atomic displacement or a rotation around a bond) to create a new structure, z.

- Local Minimization: Perform a local minimization starting from z to find a new local minimum, y'.

- Acceptance/Rejection: Apply a criterion (e.g., the Metropolis criterion from Monte Carlo, based on the energy difference between y' and y) to decide whether to accept the new minimum and set y = y' for the next cycle, or reject it and retain y.

- Iteration: Repeat the perturbation, local minimization, and acceptance steps for a predefined number of cycles or until convergence is achieved. The following diagram illustrates this iterative process:

Performance & Technical Data

Table 2: Comparison of Global Optimization Metaheuristics for PES Exploration

| Algorithm | Key Principle | Typical Use Case in PES | Reported Performance Notes |
|---|---|---|---|
| Basin Hopping (BH) | Iterative random steps followed by local minimization [5]. | Locating low-energy isomers of molecular clusters and biomolecules [5]. | Simple, effective, and often performs comparably to more complex algorithms on difficult real-world problems [5]. |
| Differential Evolution (DE) | A population-based algorithm that creates new candidates by combining existing ones [5]. | General-purpose global optimization for molecular geometry. | A widely used and robust metaheuristic. |
| Covariance Matrix Adaptation Evolution Strategy (CMA-ES) | A population-based method that adapts an internal model of the search distribution [5]. | High-precision optimization on challenging synthetic benchmark functions [5]. | Often considered state-of-the-art for numerical optimization; can be highly effective but may be complex to tune [5]. |
| BHPOP (Population-based BH) | A variant of BH that maintains and evolves a population of solutions [5]. | Enhanced exploration of complex, multimodal PES. | Can outperform standard BH and CMA-ES on difficult cluster energy minimization problems [5]. |

Basin-hopping is a powerful global optimization algorithm that has proven particularly effective for solving challenging problems in chemical physics and materials science, especially those involving complex potential energy surfaces with multiple local minima [7] [8]. At its core, basin-hopping is a two-phase method that combines global exploration through Monte Carlo moves with local exploitation via deterministic minimization [9]. This combination allows the algorithm to efficiently escape local minima while still converging to low-energy regions, making it ideal for mapping potential energy surfaces in computational chemistry and drug development research [10] [11].

The algorithm transforms the original energy landscape into a collection of interpenetrating staircases, where each plateau corresponds to the energy of a local minimum [12]. This transformation effectively removes transition state regions from consideration while preserving the global minimum, significantly simplifying the optimization landscape [12].
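The staircase picture can be made concrete by evaluating the transformed energy, that is, the energy of the local minimum reached from each point, on a grid. The sketch below assumes a hypothetical rugged one-dimensional test function and uses SciPy's BFGS minimizer as the local optimizer; both are illustrative choices rather than anything prescribed by the cited work.

```python
import numpy as np
from scipy.optimize import minimize

def energy(x):
    """Hypothetical rugged 1D test function: a cosine ripple on a parabola."""
    x = np.atleast_1d(x)[0]
    return np.cos(14.5 * x - 0.3) + (x + 0.2) * x

def transformed_energy(x0):
    """Energy of the local minimum reached by minimizing from x0."""
    return minimize(energy, x0=[x0], method="BFGS").fun

grid = np.linspace(-1.0, 1.0, 41)
raw = [energy(x) for x in grid]                   # original landscape
stairs = [transformed_energy(x) for x in grid]    # transformed landscape

# Many grid points collapse onto a handful of plateau energies.
plateaus = sorted(set(np.round(stairs, 4)))
print(len(grid), "grid points map onto", len(plateaus), "plateau energies")
print(plateaus)
```

Every transformed value is at most the raw energy at the same point, and the lowest plateau coincides with the global minimum of the original function, which is the property the algorithm relies on.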

Frequently Asked Questions (FAQs)

Q1: What is the fundamental principle behind basin-hopping optimization?

Basin-hopping, also known as Monte Carlo Minimization, is a global optimization technique that iterates through a cycle of three main steps [10] [9]:

- Random perturbation of the current coordinates

- Local minimization from the perturbed coordinates

- Acceptance or rejection of the new coordinates based on the minimized function value

The key innovation is that the energy of each configuration is taken to be the energy of its local minimum, effectively transforming the potential energy surface into a series of interpenetrating staircases where each plateau represents a local minimum [7] [12]. This approach allows the algorithm to "hop" between different basins of attraction, hence the name "basin-hopping."
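The three-step cycle described above can be written in a few lines. The following is a minimal sketch under stated assumptions, not a reference implementation: the two-dimensional test surface, the uniform displacement, the L-BFGS-B local minimizer, and all parameter values (`n_steps`, `step_size`, `temperature`, `seed`) are illustrative choices.

```python
import numpy as np
from scipy.optimize import minimize

def energy(x):
    """Hypothetical 2D test surface with many local minima."""
    return (np.cos(14.5 * x[0] - 0.3) + (x[0] + 0.2) * x[0]
            + (x[1] + 0.2) * x[1])

def basin_hopping(x0, n_steps=200, step_size=0.5, temperature=1.0, seed=0):
    """Minimal basin-hopping loop: perturb, minimize, Metropolis accept/reject."""
    rng = np.random.default_rng(seed)
    current = minimize(energy, x0, method="L-BFGS-B")
    best = current
    for _ in range(n_steps):
        # 1. Random perturbation of the current minimum's coordinates.
        trial_x = current.x + rng.uniform(-step_size, step_size,
                                          size=current.x.shape)
        # 2. Local minimization from the perturbed coordinates.
        trial = minimize(energy, trial_x, method="L-BFGS-B")
        # 3. Metropolis test on the minimized energies, not the raw ones.
        delta = trial.fun - current.fun
        if delta <= 0 or rng.random() < np.exp(-delta / temperature):
            current = trial
        # Keep track of the lowest minimum seen so far.
        if current.fun < best.fun:
            best = current
    return best

best = basin_hopping(np.array([1.0, 1.0]))
print(best.x, best.fun)
```

Note that the acceptance test compares minimized energies only, which is what turns the landscape into the staircase described above. The lowest minimum is stored separately because the walker is allowed to move uphill.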

Q2: How do I choose appropriate parameters for my basin-hopping experiments?

Selecting proper parameters is crucial for successful basin-hopping optimization. The table below summarizes key parameters and their recommended settings based on research literature:

Table 1: Key Basin-Hopping Parameters and Recommended Settings

| Parameter | Description | Recommended Setting | Scientific Rationale |
|---|---|---|---|
| Temperature (T) | Controls acceptance probability of uphill moves | Comparable to typical function value difference between local minima [9] | Higher T accepts more uphill moves for better exploration [9] |
| Step Size | Maximum displacement for random perturbations | Comparable to typical separation between local minima in parameter space [9] | Too small limits exploration; too large reduces efficiency [9] |
| Iterations (niter) | Number of basin-hopping steps | 100-10,000+ depending on problem complexity [8] | More iterations increase probability of finding global minimum [8] |
| Local Minimizer | Algorithm for local minimization | L-BFGS-B, BFGS, Nelder-Mead, or Powell; in SciPy, the default is BFGS unless bounds (L-BFGS-B) or constraints (SLSQP) are supplied [9] [13] | Should be efficient for your specific landscape [9] |
| Target Acceptance Rate | Desired ratio of accepted steps | 0.5 (default) [9] | Balances exploration and exploitation [9] |

For the temperature parameter, Wales and Doye recommend setting T to be "comparable to the typical difference in function values between local minima" rather than being concerned with the height of barriers between them [9]. The step size should be "comparable to the typical separation between local minima in argument space" [9].
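As an illustration of how these parameters map onto a call to `scipy.optimize.basinhopping`, the sketch below uses a hypothetical one-dimensional test function. The specific values of `niter`, `T`, and `stepsize` are assumptions chosen for this toy problem and would need to be re-tuned for a real energy function.

```python
import numpy as np
from scipy.optimize import basinhopping

def energy(x):
    """Hypothetical rugged 1D test function."""
    return np.cos(14.5 * x[0] - 0.3) + (x[0] + 0.2) * x[0]

result = basinhopping(
    energy,
    x0=[1.0],
    niter=200,                      # number of basin-hopping steps
    T=1.0,                          # comparable to energy gaps between minima
    stepsize=0.5,                   # comparable to spacing between minima
    minimizer_kwargs={"method": "L-BFGS-B"},  # local minimizer
    interval=50,                    # how often the step size is re-tuned
    target_accept_rate=0.5,         # acceptance rate the tuning aims for
)
print(result.x, result.fun)
```

Here neighbouring minima are roughly 0.4 apart and differ in energy by a few tenths, which is why a step size of 0.5 and a temperature of order 1 are reasonable starting points for this particular function.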

Q3: Why does my basin-hopping simulation get trapped in local minima?

Basin-hopping can become trapped for several scientific and technical reasons:

Insufficient thermal energy: If the temperature parameter T is set too low, the algorithm cannot accept uphill moves needed to escape deep local minima [9]. The probability of accepting an uphill move is given by exp(-ΔE/T), where ΔE is the energy difference and T is the temperature [9].

Inadequate step size: If the maximum displacement is too small, perturbations may not be sufficient to move the system to a new basin of attraction [9].

Limited iterations: Complex energy landscapes with many minima may require thousands of iterations to locate the global minimum [8].

Kinetic trapping: Certain systems have inherent energy barriers that are difficult to cross, such as proton transfer in molecular systems [10].

Advanced solution: Implement the "Basin Hopping with Occasional Jumping" (BHOJ) algorithm, which introduces random jumping processes after a specified number of consecutive rejections to mitigate stagnation [7] [11].
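To make the temperature dependence of the acceptance probability exp(-ΔE/T) concrete, the short sketch below evaluates it for one hypothetical uphill step at three temperatures. The function name and the numbers are illustrative assumptions; ΔE and T must be in the same units.

```python
import math

def metropolis_probability(delta_e, temperature):
    """Probability of accepting a move that changes the minimized energy by delta_e."""
    if delta_e <= 0.0:
        return 1.0  # downhill or equal-energy moves are always accepted
    return math.exp(-delta_e / temperature)

delta_e = 0.5  # hypothetical uphill step
for temperature in (0.1, 0.5, 2.0):
    probability = metropolis_probability(delta_e, temperature)
    print(f"T = {temperature}: accept with probability {probability:.4f}")
```

For this step the probabilities are approximately 0.0067, 0.3679, and 0.7788. At T = 0.1 the walker is effectively frozen in its current basin, while at T = 2.0 most uphill moves of this size are accepted.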

Q4: How can I implement constraints and bounds in basin-hopping?

Standard basin-hopping does not natively support constraints, but researchers have developed several effective workarounds:

Custom step functions: Create a specialized step routine that respects your boundaries. The RandomDisplacementBounds class provides a template that ensures new positions stay within specified limits [14].

Local minimizer constraints: Use constrained local minimizers (e.g., L-BFGS-B with bounds) through the minimizer_kwargs parameter [14] [9].

Acceptance tests: Implement custom acceptance criteria to reject steps that violate constraints [9].

Penalty functions: Modify your objective function to include penalty terms for constraint violations.

For molecular systems, common constraints include minimum interatomic distances (e.g., push_apart_distance = 0.4) and preservation of chemical connectivity [7] [10].
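The first three workarounds can be combined in one call to `scipy.optimize.basinhopping`. The sketch below is an illustration under assumptions: `BoundedDisplacement` and `in_bounds` are hypothetical helpers written for this example (they are not the class from [14]), the objective is a toy one-dimensional function, and the box [0, 2] is arbitrary.

```python
import numpy as np
from scipy.optimize import basinhopping

class BoundedDisplacement:
    """Hypothetical take_step callable: random displacement clipped to a box."""

    def __init__(self, lower, upper, stepsize=0.5, seed=0):
        self.lower = np.asarray(lower, dtype=float)
        self.upper = np.asarray(upper, dtype=float)
        self.stepsize = stepsize  # basinhopping adjusts this attribute if present
        self.rng = np.random.default_rng(seed)

    def __call__(self, x):
        trial = x + self.rng.uniform(-self.stepsize, self.stepsize,
                                     size=np.shape(x))
        return np.clip(trial, self.lower, self.upper)

def in_bounds(lower, upper):
    """Build an accept_test that rejects minima lying outside the box."""
    def accept_test(**kwargs):
        x_new = kwargs["x_new"]
        return bool(np.all(x_new >= lower) and np.all(x_new <= upper))
    return accept_test

def energy(x):
    """Hypothetical rugged 1D test function."""
    return np.cos(14.5 * x[0] - 0.3) + (x[0] + 0.2) * x[0]

lower, upper = np.array([0.0]), np.array([2.0])
result = basinhopping(
    energy,
    x0=[1.0],
    niter=200,
    take_step=BoundedDisplacement(lower, upper),
    accept_test=in_bounds(lower, upper),
    minimizer_kwargs={"method": "L-BFGS-B", "bounds": [(0.0, 2.0)]},
)
print(result.x, result.fun)
```

With a bounded local minimizer the acceptance test is redundant here; it is included to show the hook, which becomes useful when the local minimizer cannot enforce the constraint itself.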

Q5: What are the best practices for verifying that I've found the global minimum?

Because basin-hopping is stochastic, there is no guarantee that the true global minimum has been found [9]. These verification strategies are recommended:

Multiple random starts: Execute the algorithm from different initial points and compare results [9].

Consistency checks: Ensure physical reasonableness of the solution based on chemical intuition and experimental data [10].

Algorithm comparison: Validate against other global optimization methods (e.g., Genetic Algorithms) [11].

Experimental correlation: Compare computational predictions with spectroscopic or ion mobility data [10].

Extended runs: Increase iteration count (niter) to thousands of steps and check for consistency [8].

Research by Röder and Wales emphasizes that "confidence in BH search results come from a satisfactory agreement with experimental observations and/or the consistency of results from several parallel simulations with different initial conditions" [10].
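The multiple-starts check can be automated in a few lines. The sketch below assumes a hypothetical two-dimensional test surface, five random starting points, and an energy tolerance of 1e-4 for deciding that two runs found the same minimum; all of these are illustrative choices.

```python
import numpy as np
from scipy.optimize import basinhopping

def energy(x):
    """Hypothetical 2D test surface with many local minima."""
    return (np.cos(14.5 * x[0] - 0.3) + (x[0] + 0.2) * x[0]
            + (x[1] + 0.2) * x[1])

# This generator only controls where the independent runs start.
start_rng = np.random.default_rng(42)
starts = start_rng.uniform(-2.0, 2.0, size=(5, 2))

finals = []
for x0 in starts:
    res = basinhopping(energy, x0, niter=200, stepsize=0.5,
                       minimizer_kwargs={"method": "L-BFGS-B"})
    finals.append(res.fun)

finals = np.array(finals)
lowest = finals.min()
# Count how many independent runs ended at the lowest energy found.
n_agree = int(np.sum(np.isclose(finals, lowest, atol=1e-4)))
print(f"lowest energy {lowest:.4f}; {n_agree} of {len(finals)} runs agree")
```

Agreement between runs raises confidence but is not proof. If only one run reaches the lowest energy, longer runs or more starting points are warranted.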

Troubleshooting Guides

Problem: Poor Acceptance Rate

Symptoms:

- Most trial moves are rejected

- Little improvement over many iterations

- Algorithm appears "stuck"

Diagnosis and Solutions:

Table 2: Troubleshooting Poor Acceptance Rates

| Cause | Diagnosis | Solution |
|---|---|---|
| Step size too large | Large energy increases after perturbation | Reduce step size by 20-50% [9] |
| Step size too small | Minimal energy change after perturbation | Increase step size; enable adjust_displacement [7] |
| Temperature too low | Nearly all uphill moves rejected | Increase T to match typical energy differences between minima [9] |
| Rugged landscape | High sensitivity to small coordinate changes | Implement custom step routines or use delocalized internal coordinates [11] |

Several implementations adjust the step size automatically to maintain a desired acceptance rate. SciPy's basinhopping re-tunes stepsize every interval iterations (50 by default) toward target_accept_rate (0.5 by default), scaling it by stepwise_factor [9]. Other codes expose the same idea through options such as adjust_displacement=true with a target_ratio of 0.5 [7].
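An acceptance-driven step-size update can be sketched as a single function. This is a minimal illustration of the general idea rather than the code of any particular package; the function name, the target of 0.5, and the scaling factor of 0.9 are assumptions for this example.

```python
def adapt_step_size(step_size, n_accepted, n_trials, target=0.5, factor=0.9):
    """Return a new step size based on the recent acceptance rate.

    If more moves than the target fraction were accepted, the steps are
    probably too timid, so the step size grows. Otherwise it shrinks.
    """
    rate = n_accepted / n_trials
    if rate > target:
        return step_size / factor   # accept rate too high: take larger steps
    return step_size * factor       # accept rate too low: take smaller steps

# Example: 8 of the last 10 moves accepted, then 2 of the last 10.
print(adapt_step_size(0.5, n_accepted=8, n_trials=10))
print(adapt_step_size(0.5, n_accepted=2, n_trials=10))
```

The update would be applied once per monitoring window (for example every 50 steps), with the acceptance counters reset afterwards.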

Problem: Excessive Computational Time

Symptoms:

- Each iteration takes too long

- Slow progress in finding lower minima

- Local minimization dominates runtime

Optimization Strategies:

Choose efficient local minimizer: For your specific problem, test different algorithms (L-BFGS-B, Powell, Nelder-Mead) to find the best trade-off between speed and reliability [9] [13].

Implement callback with stopping criteria: Use the callback function to stop early when a satisfactory solution is found [15].

Relax convergence criteria: Loosen tolerance settings in the local minimizer for faster execution, then re-minimize the final low-energy candidates with tighter settings.

Use machine learning augmentation: Employ similarity indices and clustering to avoid re-exploring similar regions [10].

Parallelize independent runs: Instead of one long run, execute multiple shorter runs from different starting points.
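The callback-based early stop can be sketched with `scipy.optimize.basinhopping`, whose `callback` receives each minimum found and halts the run when it returns True. The threshold `TARGET_ENERGY` and the test function below are hypothetical values chosen for this example.

```python
import numpy as np
from scipy.optimize import basinhopping

def energy(x):
    """Hypothetical rugged 1D test function."""
    return np.cos(14.5 * x[0] - 0.3) + (x[0] + 0.2) * x[0]

TARGET_ENERGY = -1.0   # hypothetical "good enough" threshold
visited = []           # minimized energies seen by the callback

def stop_when_good_enough(x, f, accept):
    """Record each minimum and request a stop once the target is reached."""
    visited.append(f)
    return bool(f < TARGET_ENERGY)

result = basinhopping(
    energy,
    x0=[1.0],
    niter=500,
    stepsize=0.5,
    minimizer_kwargs={"method": "L-BFGS-B"},
    callback=stop_when_good_enough,
)
print(f"stopped after {len(visited)} of 500 steps; energy {result.fun:.4f}")
```

A fixed energy threshold presupposes some knowledge of the target. When that is unavailable, SciPy's `niter_success` argument, which stops after a given number of steps without improvement, is an alternative.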

Problem: Handling Molecular Systems with Multiple Protonation States

Challenge: Standard basin-hopping may miss important minima when molecular protonation can change [10].

Solution Approach:

- Treat charge-carrying protons as separate moieties during the simulation [10]

- Conduct separate BH searches for each plausible prototropic isomer [10]

- Augment BH framework with chemical intuition (manually identify different isomers) [10]

As noted in Frontiers in Chemistry, "If one were to assume that the protonation site of para-aminobenzoic acid were the nitrogen center... the O-protonated isomer (which is the gas phase global minimum) would not be identified without modifying the atomic connectivity during the BH search" [10].

Basin-Hopping Workflow Visualization

Basin-Hopping Algorithm Flow: This diagram illustrates the iterative process of basin-hopping optimization, showing how Monte Carlo perturbations are combined with local minimization and acceptance criteria to navigate complex energy landscapes.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Computational Tools for Basin-Hopping Research

| Tool/Reagent | Function | Application Context |
|---|---|---|
| Similarity Indices | Quantifies geometric similarity between structures [10] | Avoids redundant exploration; identifies unique minima [10] |
| Hierarchical Clustering | Groups related conformations [10] | Maps potential energy surface; identifies funnels [10] |
| Delocalized Internal Coordinates (DICs) | Preserves favorable structural motifs [11] | Enhances efficiency for covalent systems [11] |
| Metropolis Criterion | Stochastic acceptance of uphill moves [9] | Enables escape from local minima [9] |
| L-BFGS-B Minimizer | Efficient local optimization with bounds [9] [13] | Default local minimization workhorse [9] |
| Multi-dimensional Scaling | Visualizes high-dimensional conformation space [10] | Interprets and rationalizes search results [10] |

Advanced Techniques and Recent Developments

Machine Learning Augmentation

Recent research has successfully integrated unsupervised machine learning techniques with basin-hopping to significantly improve its efficiency [10]. These approaches include:

- Similarity functions and hierarchical clustering to assess uniqueness of local minima and guide PES searches [10]

- Multidimensional scaling to visualize and interpret high-dimensional conformational spaces [10]

- Interpolation of geometries to identify intermediate local minima and create guess geometries for transition state searches [10]

As described in Frontiers in Chemistry, these machine learning techniques "can be used as tools for interpreting and rationalizing experimental results from spectroscopic and ion mobility investigations" [10].

Comparison with Genetic Algorithms

Systematic comparisons between basin-hopping and genetic algorithms for complex optimization problems like surface reconstruction have revealed important insights [11]:

- Genetic Algorithms tend to be "more robust with respect to parameter choice and in success rate" [11]

- Basin-Hopping can exhibit "advantages in speed of convergence" in certain applications [11]

- Both methods benefit from "feature-preserving trial moves" that maintain favorable structural motifs [11]

The efficiency of both approaches is "strongly influenced by how much the trial moves tend to preserve favorable bonding patterns once these appear" [11].

Basin-hopping remains a powerful and versatile algorithm for global optimization challenges, particularly in computational chemistry and drug development research. Its core strength lies in the elegant combination of Monte Carlo global exploration with efficient local minimization, creating an effective approach for mapping complex potential energy surfaces. By understanding the algorithm's principles, properly configuring its parameters, and implementing appropriate troubleshooting strategies, researchers can successfully apply basin-hopping to a wide range of scientific problems, from predicting molecular structures to optimizing drug candidates.

Historical Context and Core Algorithm

The Basin Hopping (BH) algorithm, established in the seminal work of Wales and Doye, is a global optimization technique designed to navigate complex potential energy surfaces (PES) with numerous local minima [16]. Its primary goal is to locate the global minimum of a system, a crucial task in fields like drug development and materials science.

The algorithm owes its efficiency to transforming the high-dimensional PES into a collection of "basins of attraction", which focuses the search on energetically relevant regions [16]. The classic Basin Hopping algorithm follows an iterative cycle:

- Perturbation: The current atomic configuration is randomly displaced [16].

- Local Minimization: The perturbed structure undergoes local energy minimization [16].

- Acceptance/Rejection: The minimized structure is accepted or rejected based on the Metropolis criterion, which allows for occasional acceptance of higher-energy configurations to escape local minima [16].

The table below summarizes the core components of the classic Wales and Doye algorithm and its modern extensions.

Table: Evolution of the Basin Hopping Algorithm

| Aspect | Classic Implementation (Wales and Doye) | Modern Enhancements |
|---|---|---|
| Core Cycle | Perturbation → Local Minimization → Metropolis Acceptance [16] | Same core cycle, enhanced with automation and parallelism [16]. |
| Step Size | Fixed or manually tuned [16]. | Adaptive step size: Dynamically adjusted based on recent acceptance rates to maintain an optimal ~50% target [16]. |
| Key Parameters | Step size, temperature (Metropolis criterion) [16]. | Same, with automated control systems [16]. |
| Computational Focus | Serial execution; one candidate per step [16]. | Parallel local minimization: Multiple trial structures are evaluated concurrently per step, drastically reducing wall-clock time [16]. |

Modern Implementations and Technical Specifications

Contemporary research has built upon the foundational BH algorithm to improve its efficiency and practicality for high-performance computing (HPC) environments, such as in the adaptive and parallel Python implementation discussed by C. et al. [16].

Table: Technical Specifications of a Modern Basin Hopping Implementation

| Component | Specification / Methodology | Purpose / Rationale |
|---|---|---|
| Local Optimizer | L-BFGS-B algorithm [16]. | Efficient local minimization for bounded problems. |
| Perturbation Type | Random uniform displacement within a cube of side 2 × stepsize [16]. | Explores configuration space around the current structure. |
| Metropolis Temperature | T = 1.0 (in reduced units for the LJ potential) [16]. | Controls the probability of accepting uphill moves. |
| Adaptation Frequency | Step size adjustment every 10 BH steps [16]. | Ensures responsive yet stable adaptation of the step size. |
| Parallel Model | Python's multiprocessing.Pool to minimize n candidates concurrently [16]. | Enables simultaneous evaluation of multiple trial structures. |
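A step that minimizes several trial structures concurrently might look like the sketch below. It is an illustration under several assumptions, not the implementation from [16]: it uses `multiprocessing.pool.ThreadPool` as a stand-in for `multiprocessing.Pool` (same `map` interface, but it runs in any context and gives no real speed-up for pure-Python energy functions), a toy two-dimensional surface instead of a Lennard-Jones cluster, and a "keep the lowest candidate" rule before the Metropolis test.

```python
import numpy as np
from multiprocessing.pool import ThreadPool
from scipy.optimize import minimize

def energy(x):
    """Hypothetical 2D test surface with many local minima."""
    return (np.cos(14.5 * x[0] - 0.3) + (x[0] + 0.2) * x[0]
            + (x[1] + 0.2) * x[1])

def minimize_candidate(x0):
    """Local minimization of one trial structure."""
    return minimize(energy, x0, method="L-BFGS-B")

def parallel_bh_step(current_x, step_size, n_candidates, rng, pool):
    """Perturb n_candidates times, minimize all concurrently, return the lowest."""
    trials = [current_x + rng.uniform(-step_size, step_size,
                                      size=current_x.shape)
              for _ in range(n_candidates)]
    results = pool.map(minimize_candidate, trials)
    return min(results, key=lambda r: r.fun)

rng = np.random.default_rng(0)
temperature = 1.0
current = minimize_candidate(np.array([1.0, 1.0]))
best = current
with ThreadPool(4) as pool:
    for _ in range(50):
        trial = parallel_bh_step(current.x, 0.5, 4, rng, pool)
        delta = trial.fun - current.fun
        # Metropolis test applied to the best of the concurrent candidates.
        if delta <= 0 or rng.random() < np.exp(-delta / temperature):
            current = trial
        if current.fun < best.fun:
            best = current
print(best.x, best.fun)
```

With an expensive energy function evaluated in separate processes, the wall-clock cost of one step approaches that of a single minimization, which is where the speed-up comes from.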

The following diagram illustrates the workflow of this modern, accelerated Basin Hopping algorithm:

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational Components for Basin Hopping Experiments

| Component / Software | Function / Application |
|---|---|
| Lennard-Jones Potential | A prototypical model potential used for method development and benchmarking, e.g., for challenging systems like LJ38 clusters [16]. |
| Python Ecosystem | The primary programming environment for modern implementations; key libraries include SciPy (for optimizers like L-BFGS-B) and NumPy for numerical operations [16]. |
| Machine-Learned Potentials (MLIPs) | Advanced potentials, such as Gaussian Approximation Potentials (GAP), trained on quantum mechanical data to enable exploration with near-DFT accuracy at lower computational cost [17]. |
| Automated Frameworks (e.g., autoplex) | Software packages that automate the iterative process of exploration and fitting of potential-energy surfaces, reducing manual effort [17]. |
| .molden File Format | A common file format used for input (initial geometry) and output (accepted structures) in computational chemistry simulations [16]. |

Troubleshooting Guide and FAQs

Q1: My Basin Hopping simulation is trapped in a high-energy local minimum and cannot escape. What steps can I take?

- Increase Step Size: A larger perturbation displacement helps the search escape the current funnel. Modern implementations often include an adaptive step size that automatically adjusts to maintain a healthy acceptance rate (~50%) [16].

- Adjust Metropolis Temperature: Temporarily increasing the temperature parameter in the Metropolis criterion will increase the probability of accepting uphill moves, facilitating escape from deep local minima [16].

- Utilize Parallel Candidates: Employ a modern implementation that uses parallel local minimization. Generating and evaluating multiple candidates per step significantly improves the odds of finding a productive path away from the current minimum [16].

Q2: The computational cost of my energy evaluations (e.g., using DFT) is prohibitively high for long Basin Hopping runs. What are my options?

- Adopt a Multi-Stage Strategy: Use a fast but approximate method (like a classical force field or a machine-learned potential) for the initial, broad exploration. Then, refine the low-energy candidates using high-level DFT calculations [16].

- Leverage Machine-Learned Interatomic Potentials (MLIPs): Replace expensive DFT calculations with a MLIP like a Gaussian Approximation Potential (GAP) during the search. MLIPs can provide near-DFT accuracy at a fraction of the cost, making extensive sampling feasible [17].

- Implement Strict Convergence Criteria: Ensure your local minimizer (e.g., L-BFGS-B) uses tight convergence tolerances to avoid unnecessary cycles and wasted computations on insufficiently minimized structures [16].

Q3: How do I enforce constraints, such as fixed bond lengths or a required sum of weights, in a Basin Hopping simulation?

The standard Basin Hopping algorithm and its common implementations (like scipy.optimize.basinhopping) do not natively support constraints beyond simple bounds [14]. To handle constraints:

- Use a Custom Step Function: Implement a tailored step function that generates new trial structures which inherently satisfy your constraints.

- Penalty Functions: Modify your objective function to include a large penalty term for structures that violate the constraints, guiding the minimization towards feasible regions.

- Explore Alternative Optimizers: Consider other global optimization algorithms in the scipy suite (like differential evolution) or specialized libraries like NLopt that may offer better support for specific constraint types [14].
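The penalty-function approach can be sketched for the "sum of weights" case. Everything in this example is an assumption made for illustration: the multimodal objective, the requirement that three weights sum to 1, and the penalty weight of 1e3.

```python
import numpy as np
from scipy.optimize import basinhopping

def raw_objective(w):
    """Hypothetical multimodal objective over three weights."""
    return float(np.sum(np.cos(5.0 * w)) + np.sum(w**2))

def penalized(w, penalty_weight=1e3):
    """Objective plus a quadratic penalty for violating sum(w) == 1."""
    violation = np.sum(w) - 1.0
    return raw_objective(w) + penalty_weight * violation**2

result = basinhopping(
    penalized,
    x0=np.full(3, 1.0 / 3.0),
    niter=100,
    stepsize=0.3,
    minimizer_kwargs={"method": "L-BFGS-B"},
)
print("weights:", result.x, "sum:", result.x.sum())
```

A quadratic penalty only enforces the constraint approximately: the residual violation shrinks as the penalty weight grows, at the cost of a stiffer landscape for the local minimizer. When the constraint must hold exactly, a custom step function or a constrained local minimizer is the safer route.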

Q4: My parallel Basin Hopping code shows diminishing returns when I use more than 8-16 concurrent processes. Why?

This is a classic feature of parallel scaling. The overhead from process synchronization, communication, and data aggregation begins to outweigh the benefits of adding more workers, especially when the number of concurrent evaluations exceeds the number of truly independent, productive search directions available in a single step [16]. The performance gains are typically near-linear up to a certain point (e.g., 8 cores), after which they become modest [16].

Welcome to the Technical Support Center

This resource provides targeted troubleshooting guides and frequently asked questions (FAQs) for researchers employing the Basin-Hopping (BH) algorithm in computational chemistry and physics, particularly for mapping potential energy surfaces (PES) and locating global minima in complex systems like atomic clusters and biomolecules. The guidance is framed within the context of advanced thesis research, focusing on practical experimental issues and their solutions.

Frequently Asked Questions (FAQs)

Q1: What is the Basin-Hopping algorithm, and why is it particularly useful for potential energy surface mapping?

Basin-Hopping is a global optimization technique that transforms the potential energy surface into a collection of interpenetrating staircases. It alternates between random perturbation of coordinates, local minimization, and acceptance or rejection of the new coordinates based on the minimized function value using a Metropolis criterion [9] [18]. This process effectively allows the algorithm to "hop" between different basins of attraction, making it exceptionally capable of navigating rugged, high-dimensional energy landscapes, such as those encountered in molecular cluster optimization [19] [5].

Q2: How does Basin-Hopping compare to other global optimization methods like Simulated Annealing?

While both are metaheuristics, Basin-Hopping is often more efficient for problems with "funnel-like, but rugged" energy landscapes [9]. A key performance analysis found that Basin-Hopping and its population-based variants are highly competitive, performing almost as well as the Covariance Matrix Adaptation Evolution Strategy (CMA-ES) on standard benchmark functions and even better on difficult real-world cluster energy minimization problems [5]. Notably, SciPy has deprecated its Simulated Annealing implementation in favor of Basin-Hopping and dual_annealing [20].

Q3: My research involves expensive ab initio calculations. Can Basin-Hopping be adapted for such costly energy evaluations?

Yes. The standard Basin-Hopping algorithm can serve as a foundation for integration with first-principles methods like Density Functional Theory (DFT) [16]. Furthermore, to reduce computational cost, a promising strategy is to use Basin-Hopping with machine-learned potentials, such as neural networks trained on quantum mechanical data, which can provide near-DFT accuracy at a fraction of the computational cost [16].

Troubleshooting Guides

Issue: Poor Acceptance Rate and Inefficient Sampling

A poorly tuned step size is a common culprit for an acceptance rate that is either too high (leading to random wandering) or too low (causing trapping in a single basin).

Diagnosis:

- Monitor your acceptance rate over a window of iterations (e.g., 50 steps). The target acceptance rate for optimal performance is typically around 0.5 (50%) [9].

- An acceptance rate consistently below 0.4 or above 0.6 indicates a suboptimal step size.

Solution: Implement an Adaptive Step Size. Dynamically adjust the perturbation step size to approach the target acceptance rate [16] [9].

- Protocol: Every `interval` iterations (e.g., 50 steps), calculate the recent acceptance rate.
  - If the acceptance rate is greater than the target (e.g., 0.5), increase the step size to encourage broader exploration.
  - If the acceptance rate is lower than the target, decrease the step size to refine sampling in the current region.

- Implementation: The step size is typically multiplied or divided by a `stepwise_factor` (e.g., 0.9) during this adjustment [9]. Most modern implementations, including `scipy.optimize.basinhopping`, have this feature built-in.
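The update rule can be sketched in a few lines. The function name `adapt_stepsize` and its arguments are hypothetical and chosen for illustration; the logic follows the protocol described here (divide by the factor to grow the step, multiply to shrink it).

```python
def adapt_stepsize(stepsize, n_accepted, n_trials,
                   target_accept_rate=0.5, stepwise_factor=0.9):
    """Return an updated step size from the acceptance rate of the last window."""
    accept_rate = n_accepted / n_trials
    if accept_rate > target_accept_rate:
        # Too many acceptances: enlarge the step to explore more broadly.
        return stepsize / stepwise_factor
    # Too few acceptances: shrink the step to sample the current region.
    return stepsize * stepwise_factor


# Example windows of 50 trial steps each (assumed counts).
print(adapt_stepsize(0.5, n_accepted=40, n_trials=50))  # step grows
print(adapt_stepsize(0.5, n_accepted=10, n_trials=50))  # step shrinks
```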

Issue: Slow Convergence and Prolonged Trapping in Local Minima

This often occurs on highly complex energy surfaces with high barriers between low-lying minima.

Diagnosis:

- The algorithm fails to find a lower energy minimum for a large number of consecutive iterations.

- The search appears to be stuck in a specific region of the configuration space.

Solution 1: Utilize Parallel Evaluation of Candidates. Instead of generating and minimizing one trial structure per step, generate several and minimize them concurrently [16].

- Protocol:
  - At each BH step, create `n` independent perturbed structures from the current minimum.
  - Distribute these `n` candidates to multiple CPU cores for simultaneous local minimization using a method like L-BFGS-B.
  - Select the lowest-energy structure from the `n` minimized candidates and subject it to the standard Metropolis acceptance test.

- Expected Outcome: This approach can significantly reduce wall-clock time and improve convergence by increasing the probability of finding a lower-energy basin at each step, thereby reducing the chance of trapping [16]. Performance gains of nearly linear speedup for up to eight concurrent minimizations have been reported [16].
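A minimal sketch of one such parallel step follows. The toy energy surface, the helper names `relax` and `parallel_bh_step`, and all parameter values are assumptions for illustration. The sketch uses `multiprocessing.pool.ThreadPool` so that it runs in any environment; for CPU-bound energy functions, `multiprocessing.Pool` exposes the same `map` interface and would be the more natural choice.

```python
import numpy as np
from multiprocessing.pool import ThreadPool
from scipy.optimize import minimize


def energy(x):
    """Toy surface with many local minima (illustrative only)."""
    return float(np.sum(x**2 - 2.0 * np.cos(2.0 * np.pi * x)))


def relax(x_start):
    """Locally minimize one candidate; returns (energy, coordinates)."""
    res = minimize(energy, x_start, method="L-BFGS-B")
    return res.fun, res.x


def parallel_bh_step(x_cur, e_cur, stepsize, T, n_candidates, pool, rng):
    # Generate n independent perturbations of the current minimum.
    trials = [x_cur + rng.uniform(-stepsize, stepsize, x_cur.shape)
              for _ in range(n_candidates)]
    # Relax all candidates concurrently and keep the lowest-energy one.
    e_new, x_new = min(pool.map(relax, trials), key=lambda pair: pair[0])
    # Standard Metropolis test on the best candidate.
    if e_new <= e_cur or rng.random() < np.exp(-(e_new - e_cur) / T):
        return x_new, e_new
    return x_cur, e_cur


rng = np.random.default_rng(0)
e, x = relax(np.array([2.3, -1.7]))
with ThreadPool(4) as pool:
    for _ in range(20):
        x, e = parallel_bh_step(x, e, stepsize=0.7, T=0.5,
                                n_candidates=4, pool=pool, rng=rng)
print("current minimum:", x, "energy:", e)
```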

Solution 2: Employ a Population-Based Basin-Hopping Variant (BHPOP). Introducing a population of walkers can enhance the exploration of the energy landscape [5].

- Protocol: Maintain a population of independent BH runs or a population that interacts by exchanging information. This allows simultaneous exploration of multiple funnels on the PES.

- Expected Outcome: A population-based variant has been shown to be highly effective, outperforming standard BH and other metaheuristics on some challenging real-world problems [5].

Issue: Algorithm Fails to Locate the Known Global Minimum

If the algorithm consistently misses the global minimum on a benchmark system (e.g., LJ38), the problem may lie in the search parameters or the landscape's difficulty.

Diagnosis:

- Validation against known benchmark systems (e.g., Lennard-Jones clusters with fewer than 100 atoms) fails.

- The final result is highly dependent on the starting geometry.

Solution:

- Adjust the "Temperature" (`T`): The parameter `T` in the Metropolis criterion controls the likelihood of accepting uphill moves. If `T` is too low, the algorithm cannot escape deep local minima. If it's too high, it behaves like a random search. For best results, `T` should be comparable to the typical energy difference between local minima [9].

- Run from Multiple Starting Points: As a consistency check, always run the BH algorithm from several different, randomly generated initial structures. This helps verify that the lowest minimum found is consistent and not a result of a fortunate initial guess [9].

- Verify the Local Minimizer: Ensure your local minimization routine (e.g., L-BFGS-B) is robust and converges properly. The performance of BH is highly dependent on the efficiency and reliability of the local minimization step [16] [5].
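To make the temperature guidance concrete, the sketch below evaluates the Metropolis acceptance probability for one uphill move at three temperatures. The list of minima energies is hypothetical, and `metropolis_probability` is an illustrative helper rather than part of any library.

```python
import math


def metropolis_probability(e_new, e_old, T):
    """Probability of accepting a move from e_old to e_new at temperature T."""
    if e_new <= e_old:
        return 1.0
    return math.exp(-(e_new - e_old) / T)


# Hypothetical energies of neighbouring local minima (arbitrary units).
minima = [-10.0, -9.6, -9.1, -8.9]
gaps = [b - a for a, b in zip(minima, minima[1:])]
typical_gap = sum(gaps) / len(gaps)

# Compare a temperature far below, equal to, and far above the typical gap.
for T in (0.01 * typical_gap, typical_gap, 100.0 * typical_gap):
    p = metropolis_probability(minima[1], minima[0], T)
    print(f"T = {T:8.4f}  P(accept uphill move) = {p:.3f}")
```

With `T` far below the typical gap, the uphill move is essentially never accepted; with `T` far above it, nearly every move is accepted and the search loses its bias toward low energies.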

Experimental Protocols & Workflows

Standard Basin-Hopping Workflow for Cluster Optimization

The following diagram illustrates the core workflow of the Basin-Hopping algorithm, integrating the troubleshooting solutions mentioned above.

Diagram Title: Basin-Hopping Algorithm with Adaptive Parallel Search

Detailed Step-by-Step Protocol:

1. Initialization: Read the initial molecular geometry from a file (e.g., a .molden file containing Cartesian coordinates). Flatten the coordinates into a 1D array [16].

2. Perturbation: Apply a random displacement to all atoms. The maximum displacement is controlled by the `stepsize` parameter. For example, atoms might be displaced within a cube of side `2 * stepsize` [16] [9].

3. Parallel Local Minimization: Take the perturbed structure and create `n` independent copies (or generate `n` different perturbations). Use a local minimizer (e.g., L-BFGS-B from `scipy.optimize.minimize`) to relax each structure to its nearest local minimum. This step is distributed across multiple CPU cores using a parallelization tool like Python's `multiprocessing.Pool` [16].

4. Selection & Metropolis Criterion: From the `n` minimized candidates, select the one with the lowest energy. This candidate is compared to the current minimum structure.
   - If the new energy is lower, accept the new structure.
   - If the new energy is higher, accept it with a probability given by the Metropolis criterion: `exp( -(E_new - E_old) / T )`, where `T` is the "temperature" parameter [9].

5. Adaptation: Every `interval` steps (e.g., 10 or 50), calculate the recent acceptance rate. Adjust the `stepsize` to bring the rate closer to the target (e.g., 0.5). Multiply or divide the step size by a `stepwise_factor` (e.g., 0.9) [16] [9].

6. Termination: Repeat steps 2-5 for a specified number of iterations (`niter`) or until the global minimum candidate remains unchanged for a number of iterations (`niter_success`). Output the lowest-energy structure found [16] [9].
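The protocol can be sketched end to end as follows. This is a simplified, serial illustration under stated assumptions, not the implementation from the cited work: it minimizes one candidate per step, uses a Lennard-Jones potential in reduced units (epsilon = sigma = 1), and starts from a hard-coded four-atom geometry instead of a file. The function names are hypothetical.

```python
import numpy as np
from scipy.optimize import minimize


def lj_energy(flat):
    """Lennard-Jones energy of a flattened N x 3 geometry (reduced units)."""
    pos = flat.reshape(-1, 3)
    i, j = np.triu_indices(len(pos), k=1)
    r2 = np.sum((pos[i] - pos[j]) ** 2, axis=1)
    inv6 = 1.0 / r2**3
    return float(np.sum(4.0 * (inv6**2 - inv6)))


def basin_hopping(x0, niter=100, T=1.0, stepsize=0.4, interval=10,
                  target=0.5, factor=0.9, niter_success=None, seed=0):
    rng = np.random.default_rng(seed)

    def relax(x):
        return minimize(lj_energy, x, method="L-BFGS-B")

    res = relax(np.asarray(x0, dtype=float).ravel())          # step 1
    x, e = res.x, res.fun
    best_x, best_e = x.copy(), e
    accepted = stale = 0
    for k in range(1, niter + 1):
        trial = x + rng.uniform(-stepsize, stepsize, x.size)  # step 2
        res = relax(trial)                                    # step 3
        de = res.fun - e
        if de <= 0 or rng.random() < np.exp(-de / T):         # step 4
            x, e = res.x, res.fun
            accepted += 1
        if e < best_e - 1e-8:
            best_x, best_e, stale = x.copy(), e, 0
        else:
            stale += 1
        if k % interval == 0:                                 # step 5
            rate = accepted / interval
            stepsize = stepsize / factor if rate > target else stepsize * factor
            accepted = 0
        if niter_success is not None and stale >= niter_success:  # step 6
            break
    return best_x, best_e


# Assumed starting geometry for a four-atom cluster.
start = [[0.0, 0.0, 0.0], [1.2, 0.0, 0.0], [0.0, 1.2, 0.0], [0.0, 0.0, 1.2]]
best_x, best_e = basin_hopping(start, niter=30)
print(f"lowest energy found: {best_e:.4f}")
```

Tracking the best structure separately from the current one matters because the Metropolis test can accept uphill moves, so the walker's current position is not necessarily the lowest minimum visited.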

Key Parameters for Basin-Hopping Experiments

The table below summarizes critical parameters for the BH algorithm, their typical values, and their impact on the search.

| Parameter | Description | Typical Value / Range | Function in Optimization |
|---|---|---|---|
| Step Size | Maximum displacement for random atom perturbations. | System-dependent; often ~0.5 [9] | Controls exploration breadth. Too small causes trapping; too large prevents refinement. |
| Temperature (T) | Parameter in Metropolis acceptance criterion. | Comparable to energy difference between local minima [9] | Controls probability of accepting uphill moves. T=0 leads to Monotonic Basin-Hopping. |
| Number of Iterations (niter) | Total number of basin-hopping steps. | 100+ [9] | Determines the total computational budget for the search. |
| Target Accept Rate | Desired acceptance rate for adaptive step size. | 0.5 (50%) [9] | Allows the algorithm to automatically balance exploration and exploitation. |
| Concurrent Candidates (n) | Number of trial structures minimized in parallel per step. | 2-8 [16] | Reduces wall-clock time and improves convergence by testing multiple paths simultaneously. |

The Scientist's Toolkit: Essential Research Reagents

This table lists key software components and their roles in constructing a Basin-Hopping research pipeline.

| Item | Category | Function in Research |

|---|---|---|

| L-BFGS-B Optimizer | Local Minimization Algorithm | A quasi-Newton method that efficiently finds the local minimum of a basin from a starting point. It is the workhorse for the local minimization phase [16]. |

| Lennard-Jones Potential | Model Potential Energy Function | A simple, widely used pair potential for modeling atomic interactions in clusters. Serves as a benchmark system for testing and validating BH algorithms [16] [19]. |

| Multiprocessing Pool (Python) | Parallelization Tool | Manages the distribution of multiple local minimization tasks across available CPU cores, enabling the parallel evaluation of candidate structures [16]. |

| Molden File Format | Data Structure Format | A common file format for inputting initial molecular coordinates and outputting accepted structures for visualization and further analysis [16]. |

| Neural Network Potentials | Machine-Learned Force Field | Surrogate models trained on ab initio data used to approximate the potential energy surface, dramatically reducing computational cost compared to direct DFT calculations [16]. |

Frequently Asked Questions (FAQs)

Q1: My basin-hopping simulation is trapped in a local minimum and fails to explore the global landscape. What parameters should I adjust?

This is typically addressed by optimizing your step size and temperature parameters [21]. The step size should be comparable to the typical separation between local minima in your system. If it's too small, the algorithm cannot escape local funnels; if too large, it becomes random sampling. Simultaneously, the temperature parameter T should be comparable to the typical function value difference between local minima [21]. Enable the adjust_displacement option (if available in your implementation) to automatically adjust the step size toward a target acceptance ratio, often set near 0.5 [7]. For particularly rugged landscapes, consider implementing an "occasional jumping" strategy after a set number of consecutive rejections to force exploration [7].

Q2: How do I balance computational cost with solution quality in high-dimensional systems like molecular clusters?

Utilize a hybrid approach that combines stochastic global search with efficient local refinement [22]. For the local minimization step within each basin-hopping cycle, choose fast but reliable methods like L-BFGS-B [21]. For molecular systems, initial optimization with force-field methods before switching to more accurate quantum chemical methods can reduce cost [22]. Implement parallel evaluation of trial structures, as modern implementations can achieve nearly linear speedup for concurrent local minimizations, dramatically reducing wall-clock time [23].

Q3: What convergence criteria should I use to determine when to stop my basin-hopping simulation?

The niter_success parameter will stop the run if the global minimum candidate remains unchanged for a specified number of iterations [21]. For molecular systems, you can also use structure-based convergence by monitoring the root-mean-square deviation (RMSD) of unique low-energy structures found [7]. Set write_unique=true to output all unique geometries, then analyze when new structurally distinct minima cease to appear [7]. There is no universal criterion for the true global minimum in stochastic methods, so multiple runs from different starting points provide confirmation [21].

Q4: How can I incorporate domain knowledge about my molecular system to improve basin-hopping efficiency?

Customize the step-taking routine (take_step parameter) to make chemically intelligent moves rather than purely random displacements [21]. For multi-element clusters, implement swap moves where atoms of different elements exchange positions with a defined probability [7]. Use the accept_test parameter to enforce chemical constraints, such as rejecting steps that create unrealistic bond lengths or atomic clashes [21]. For reaction pathway searches, you can bias steps along suspected reaction coordinates while allowing relaxation in other dimensions [24].
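A minimal sketch of both hooks with `scipy.optimize.basinhopping` is shown below. The class `ScaledDisplacement`, the function `within_bounds`, the toy energy function, and the box limit of 3.0 are all hypothetical stand-ins for chemically motivated moves and constraints.

```python
import numpy as np
from scipy.optimize import basinhopping


def energy(x):
    """Toy 2D surface (illustrative only)."""
    return np.cos(14.5 * x[0] - 0.3) + (x[0] + 0.2) * x[0] + (x[1] + 0.2) * x[1]


class ScaledDisplacement:
    """Hypothetical step taker: larger random moves along the first coordinate."""

    def __init__(self, stepsize=0.5, seed=0):
        self.stepsize = stepsize  # basinhopping adapts this attribute if present
        self.rng = np.random.default_rng(seed)

    def __call__(self, x):
        scale = np.ones_like(x)
        scale[0] = 2.0  # assumed "soft" coordinate that deserves bigger moves
        return x + scale * self.rng.uniform(-self.stepsize, self.stepsize, x.shape)


def within_bounds(f_new, x_new, f_old, x_old):
    """Reject any minimum outside an assumed box |x_i| <= 3."""
    return bool(np.all(np.abs(x_new) <= 3.0))


result = basinhopping(
    energy,
    x0=[1.0, 1.0],
    niter=100,
    take_step=ScaledDisplacement(),
    accept_test=within_bounds,
    minimizer_kwargs={"method": "L-BFGS-B"},
)
print(result.x, result.fun)
```

In a molecular setting, the step taker would be the place for swap or rotation moves, and the acceptance test would be the place for bond-length or clash checks.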

Troubleshooting Guides

Poor Acceptance Rates

Table: Troubleshooting Low Acceptance Rates

| Symptom | Possible Cause | Solution |

|---|---|---|

| Acceptance rate too low (<0.2) | Step size too large | Reduce stepsize parameter; enable automatic adjustment [7] |

| Acceptance rate too high (>0.8) | Step size too small | Increase stepsize parameter; enables better exploration [21] |

| Erratic acceptance | Temperature parameter mismatch with energy landscape | Set T comparable to typical energy barriers between minima [21] |

| Consistent rejection of lower energy structures | Custom accept_test too restrictive | Review constraint logic; consider "force accept" for escape [21] |

Diagnostic Steps:

- Monitor acceptance ratio output throughout simulation [7]

- Plot energy trajectory to visualize exploration patterns

- Check if `target_accept_rate` is set appropriately (default 0.5) [21]

- Verify local minimizer is converging properly in each step

Performance and Scaling Issues

Slow Runtime:

- Use efficient local minimizers (BFGS, L-BFGS-B) in `minimizer_kwargs` [21]

- For calculations >2 minutes, use remote compute resources rather than localhost [25]

- Implement parallel basin-hopping with simultaneous trial structure evaluation [23]

- Reduce accuracy requirements for early iterations; tighten during refinement

Memory Problems:

- Limit history tracking for very large systems

- Use structure comparison methods that don't require storing all visited minima

- For molecular systems, employ rotation-invariant structure descriptors [7]

Experimental Protocols

Standard Basin-Hopping Implementation

Key Parameters:

- `niter`: Number of basin-hopping iterations (100-1000+ depending on system)

- `T`: "Temperature" for Metropolis criterion (set to energy barrier scale)

- `stepsize`: Maximum random displacement (start with 0.1-0.5 times system scale)

- `niter_success`: Stop after this many iterations without improvement [21]

Advanced Configuration for Molecular Clusters

For complex systems like Lennard-Jones clusters or molecular aggregates [23]:

- Custom Step Taking: Implement cluster-specific moves like particle swaps, rotation moves, or bond-length biased steps

- Structure Comparison: Use geometric hashing or RMSD with alignment to identify unique minima

- Parallel Evaluation: Use multiprocessing or MPI for simultaneous local minimization of multiple trial structures

- Adaptive Step Sizes: Implement `adjust_displacement=true` with `target_ratio=0.5` [7]

Workflow Visualization

Basin-Hopping Algorithm Workflow

Research Reagent Solutions

Table: Essential Computational Tools for Basin-Hopping Research

| Tool/Category | Specific Examples | Function/Purpose |

|---|---|---|

| Optimization Frameworks | SciPy optimize.basinhopping [21], GMIN [21] | Core basin-hopping algorithm implementation |

| Local Minimizers | L-BFGS-B, BFGS, conjugate gradient [21] | Local relaxation at each basin-hopping step |

| Energy Calculators | Density Functional Theory, Molecular Mechanics [22] | Potential Energy Surface evaluation |

| Structure Analysis | RMSD calculators, graph-based descriptors [7] | Identify unique minima and track progress |

| Specialized Implementations | EON [7], Custom Python codes [23] | Domain-specific optimizations |

| Visualization Tools | Matplotlib, VMD, PyMOL | Analyze results and molecular structures |

Implementing Basin-Hopping: Methods and Real-World Applications in Biomedicine

# Frequently Asked Questions (FAQs)

Q1: What is the core principle behind the basin-hopping algorithm? The basin-hopping algorithm is a global optimization method designed to explore complex potential energy surfaces (PES) by transforming the landscape into a collection of basins of attraction. It operates through a cyclic two-phase process: a random perturbation of the current atomic coordinates, followed by a local minimization of the perturbed structure. A key feature is the Metropolis criterion, which is used to stochastically accept or reject the new minimized structure based on its energy and a temperature parameter. This allows the algorithm to escape local minima and progressively find lower-energy configurations [26] [16] [8].

Q2: Why is the Metropolis criterion crucial in this algorithm? The Metropolis criterion introduces a probabilistic element for accepting solutions that have a higher energy than the current one. This is essential for escaping local minima. At higher temperatures, the algorithm is more likely to accept such uphill moves, enabling a broader exploration of the PES. In standard basin-hopping the "temperature" parameter is held fixed; in variants that lower it over the course of the simulation, the algorithm becomes more selective, favoring downhill moves and converging towards a low-energy minimum [26] [27].

Q3: How do I choose an appropriate step size for the perturbation? The step size, which controls the maximum displacement of atoms during perturbation, is a critical parameter. It should be comparable to the typical distance between local minima on your PES.

- Too small a step size leads to insufficient exploration, trapping the search in the current basin.

- Too large a step size may overshoot nearby minima and reduce efficiency [26].

A best practice is to use an adaptive step size that is dynamically adjusted to maintain a target acceptance rate (e.g., 50%) [26] [16].

Q4: What are the advantages of using parallel evaluation in basin-hopping? In a standard basin-hopping step, one candidate structure is generated and minimized. With parallel evaluation, multiple perturbed candidates are generated and minimized simultaneously at each step. The lowest-energy candidate is then selected for the Metropolis test. This approach:

- Reduces wall-clock time significantly by leveraging multiple CPU cores.

- Improves convergence and reduces the probability of being trapped in suboptimal minima by evaluating a wider range of possibilities at each iteration [16].

Q5: My calculation is not finding new minima. What could be wrong? This is a common issue that can stem from several sources:

- Incorrect step size: Your perturbation magnitude may be too small to move the system into a new basin of attraction. Try increasing the step size or implementing an adaptive scheme [16].

- Temperature too low: A very low temperature will cause the algorithm to reject almost all uphill moves, preventing it from escaping the current local minimum. Consider increasing the initial temperature [26].

- Insufficient iterations: The algorithm may require more iterations (`niter`) to sufficiently explore the complex energy landscape, especially for systems with many atoms [8].

# Troubleshooting Guides

# Problem: Poor Acceptance Rate

A very high (>90%) or very low (<10%) acceptance rate indicates that the balance between exploration and exploitation is suboptimal.

| Symptom | Potential Cause | Solution |

|---|---|---|

| Acceptance rate is too high | Step size is too small; perturbations are not generating new basins. | Increase the stepsize parameter. Monitor the energy difference between accepted steps; if it's consistently small, the search is not exploring widely enough [26]. |

| Acceptance rate is too low | Step size is too large or temperature is too low. | Decrease the stepsize or increase the T (temperature) parameter. Implement an adaptive step size control to automatically maintain a ~50% acceptance rate [26] [16]. |

Diagnostic Steps:

- Plot the acceptance rate over the last 50-100 steps.

- If the rate is consistently outside the 20-60% range, adjust the `stepsize` parameter as indicated in the table above.

- For adaptive control, update the step size every `interval` steps (e.g., every 50 steps) by multiplying or dividing it by a `stepwise_factor` (e.g., 0.9) based on whether the current acceptance rate is above or below the `target_accept_rate` (e.g., 0.5) [26].

# Problem: Algorithm is Stagnating in a Local Minimum

The algorithm fails to find a lower energy minimum even after many iterations.

Resolution Protocol:

- Verify Local Optimizer: Ensure your local minimization algorithm (e.g., L-BFGS-B) is converging correctly. Check its output for failures [26] [8].

- Increase Perturbation Strength: Temporarily increase the `stepsize` to encourage larger jumps to new regions of the PES [8].

- Adjust Temperature Schedule: If using a cooling schedule, ensure the initial `T` is high enough to allow escape from deep local minima. The temperature should be comparable to the energy differences between local minima you expect to find [26].

- Implement Parallel Candidates: Use parallel evaluation of multiple trial structures per step. This increases the chance that at least one perturbation will find a path to a lower minimum [16].

- Restart from a New Point: The current basin may be very large. Consider restarting the entire simulation from a different, randomly generated initial structure.

# Problem: Unacceptable Computational Cost

The simulation is taking too long to complete.

Optimization Strategies:

| Strategy | Description | Implementation |

|---|---|---|

| Parallelization | Distribute the local minimization of multiple trial structures across CPU cores. | Use Python's multiprocessing.Pool to minimize several candidates concurrently. This can achieve near-linear speedup [16]. |

| Optimize Engine Settings | Reduce the cost of each single-point energy and force calculation. | In ab initio calculations, use a faster but less accurate method for the initial search, then refine low-energy minima with a higher-level method. |

| Tune Local Minimizer | Make the local optimization more efficient. | Use a fast, reliable algorithm like L-BFGS-B. Adjust its tolerance settings (ftol, gtol) to avoid over-converging high-energy intermediates [8]. |

The following data illustrates the wall-time reduction achieved by evaluating multiple candidates in parallel during a basin-hopping run for a Lennard-Jones cluster.

| Number of Concurrent Candidates | Relative Speedup | Cumulative Wall Time (Arbitrary Units) |

|---|---|---|

| 1 | 1.0x | 100 |

| 4 | ~3.8x | 26 |

| 8 | ~7.1x | 14 |

| 16 | ~10.5x | 9.5 |
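The diminishing returns in these figures are easier to see as parallel efficiency (speedup divided by the number of concurrent candidates). The short calculation below uses only the speedups listed in the table; the variable names are illustrative.

```python
# Relative speedups taken from the table (number of candidates -> speedup).
speedups = {1: 1.0, 4: 3.8, 8: 7.1, 16: 10.5}

# Parallel efficiency: fraction of the ideal linear speedup actually achieved.
efficiency = {n: s / n for n, s in speedups.items()}
# Wall time relative to a serial run normalized to 100 units.
wall_time = {n: 100.0 / s for n, s in speedups.items()}

for n in speedups:
    print(f"{n:2d} candidates: efficiency {efficiency[n]:.0%}, "
          f"wall time {wall_time[n]:.1f}")
```

Efficiency stays at about 95% for 4 candidates and about 89% for 8, then drops to about 66% for 16, which matches the near-linear scaling up to eight workers described earlier.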

These parameters provide a starting point for configuring your basin-hopping calculation.

| Parameter | Description | Recommended Range / Value |
|---|---|---|
| niter | Number of basin-hopping iterations. | 100 - 10,000+ (problem-dependent) |
| T (Temperature) | Energy scale for Metropolis criterion. | Comparable to expected energy differences between local minima. |
| stepsize | Maximum displacement for random perturbation. | Problem-specific; often 0.5 is a default. Use adaptive control. |
| interval | Number of steps between step size updates. | 50 - 100 steps |
| target_accept_rate | Desired acceptance rate for adaptive step size. | 0.5 (50%) |

# Workflow Visualization

Diagram Title: Basin-Hopping Algorithm Workflow

# The Researcher's Toolkit

# Essential Computational Components

| Tool / Component | Function | Example/Note |

|---|---|---|

| Local Optimizer | Performs local minimization on perturbed structures to find the nearest local minimum. | L-BFGS-B, Nelder-Mead, or conjugate gradient methods [26] [16]. |

| Perturbation Function | Generates new trial configurations by randomly displacing atoms. | Uniform or Gaussian random moves; step size is critical [16] [27]. |

| Metropolis Criterion | Stochastically accepts or rejects new structures based on energy change and temperature. | P_accept = min(1, exp(-(E_new - E_old) / T)) [26] [27]. |

| Temperature (T) | Controls the probability of accepting uphill moves. Higher T increases exploration [26]. | Should be set comparable to the energy barrier between minima [26]. |

| Potential Energy Function | Computes the energy of a given atomic configuration. | Lennard-Jones potential, force fields, or ab initio quantum mechanics [16]. |

Python Implementation with Adaptive Step-Size Control

Frequently Asked Questions (FAQs)

General Concepts

Q1: What is adaptive step-size control and why is it important in computational research?

Adaptive step-size control is a computational technique that dynamically adjusts the integration step size during the numerical solution of differential equations. It aims to maintain a specified error tolerance while optimizing computational efficiency. The method automatically takes smaller steps in regions where the solution changes rapidly and larger steps in smoother regions, providing an optimal balance between accuracy and computational cost. This is particularly crucial in potential energy surface mapping where systems can exhibit vastly different timescales and stiffness behaviors [28] [29] [30].

Q2: How does adaptive step-size control specifically benefit basin-hopping algorithms for potential energy surface mapping?

In basin-hopping algorithms for potential energy surface mapping, adaptive step-size control applies to the perturbation (step-taking) phase rather than to the local minimizer: the size of the random displacement is tuned so that trial moves neither stay in the current basin nor overshoot into random sampling. The temperature parameter controls acceptance of new configurations, while the adapted step size governs how far each hop reaches across the landscape. Together they determine how thoroughly the basins are explored, which is essential for identifying the global minimum in Lennard-Jones clusters and other molecular systems [9] [8] [23].

Implementation Issues

Q3: How can I access the actual adaptive time steps used by scipy.integrate.ode solvers rather than just fixed-interval output?

There are several approaches to access adaptive time steps in SciPy:

- Use the `solout` callback function available with the `dopri5` and `dop853` solvers (SciPy 0.13.0+) [31]

- Employ the `nsteps=1` hack with a warning suppressor, though this generates UserWarning at each step [31]

- For newer SciPy versions (1.0.0+), utilize `scipy.integrate.solve_ivp` with the `dense_output=True` parameter [31]

- Use the `vode` solver with the `step=True` parameter in the integrate method [31]
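As a minimal sketch of the `solve_ivp` route: when no `t_eval` is supplied, the returned `sol.t` holds the time points the solver actually chose, so the adaptive step sizes can be read off directly. The right-hand side and tolerances below are illustrative assumptions.

```python
import numpy as np
from scipy.integrate import solve_ivp


def rhs(t, y):
    """Illustrative ODE whose solution relaxes quickly toward cos(t)."""
    return -50.0 * (y - np.cos(t))


# No t_eval: sol.t contains the solver's own accepted time points.
sol = solve_ivp(rhs, t_span=(0.0, 2.0), y0=[0.0], method="RK45",
                rtol=1e-6, atol=1e-9)

steps = np.diff(sol.t)
print(f"{len(steps)} accepted steps, "
      f"smallest {steps.min():.2e}, largest {steps.max():.2e}")
```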

Q4: Why does my implementation of an adaptive Runge-Kutta method (e.g., Dormand-Prince 5(4)) produce different results compared to SciPy's solve_ivp even with identical parameters?

Discrepancies in adaptive Runge-Kutta implementations can arise from several factors:

- Error norm calculation: Ensure you're using the correct RMS norm with both absolute and relative tolerances: `err = sqrt(1/dim * sum((|y_new_i - y_star_i| / (atol + max(|y_new_i|, |y_old_i|) * rtol))^2))` [32]

- Step size adjustment factor: Verify the safety factor (typically 0.9) and the exponent calculation: `factor = safety * (tol/err)^(1/(q+1))` where `q` is the lower order method [32] [28]

- Coefficient accuracy: Double-check Butcher tableau coefficients for both main and embedded methods [32]

- Step size bounding: Implement proper minimum and maximum step size limits (e.g., `min_factor=0.2`, `max_factor=10.0`) [32]
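The two formulas above can be sketched as small helpers for checking a custom implementation. The function names are hypothetical. Because the error norm is already divided by the tolerance scale, the target tolerance in the factor formula becomes 1.

```python
import math


def error_norm(y_new, y_star, y_old, atol, rtol):
    """RMS norm of the scaled difference between the two embedded solutions."""
    total = 0.0
    for yn, ys, yo in zip(y_new, y_star, y_old):
        scale = atol + rtol * max(abs(yn), abs(yo))
        total += ((yn - ys) / scale) ** 2
    return math.sqrt(total / len(y_new))


def step_factor(err, q, safety=0.9, min_factor=0.2, max_factor=10.0):
    """Bounded step-size multiplier; q is the order of the lower-order method."""
    if err == 0.0:
        return max_factor  # no measurable error: grow as much as allowed
    raw = safety * (1.0 / err) ** (1.0 / (q + 1))
    return min(max_factor, max(min_factor, raw))


# A step whose scaled error is exactly at tolerance keeps roughly its size.
print(step_factor(1.0, q=4))
# Very small and very large errors hit the upper and lower bounds.
print(step_factor(1e-10, q=4), step_factor(1e10, q=4))
```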

Q5: How does basin-hopping's default step-taking mechanism work, and is it adaptive by default?

Yes, basin-hopping uses adaptive step sizing by default through the AdaptiveStepSize class, which wraps the default RandomDisplacement step-taking routine. The algorithm automatically adjusts the step size based on acceptance rates: if many steps are accepted, it increases the step size; if many are rejected, it decreases it. This adaptation occurs at regular intervals (default: every 50 iterations) to optimize the search efficiency [33].

Performance and Optimization

Q6: How can I improve the performance and convergence of my basin-hopping implementation for complex potential energy surfaces?

Performance optimization strategies include:

- Step size tuning: Set an appropriate initial `stepsize` parameter (typically 0.5) based on your problem domain characteristics [9] [8]

- Temperature adjustment: Choose temperature `T` comparable to the typical function value difference between local minima [9]

- Custom step takers: Implement problem-specific displacement routines with `take_step.stepsize` attribute for finer control [9] [33]

- Parallel evaluation: Utilize parallel processing for multiple trial structures, achieving near-linear speedup for concurrent local minimizations [23]

- Local minimizer selection: Experiment with different local optimization algorithms (`L-BFGS-B`, `Nelder-Mead`, etc.) via the `minimizer_kwargs` parameter [8]

Q7: What are the key differences between fixed-step and adaptive Runge-Kutta methods in terms of computational efficiency and accuracy?

Table 1: Fixed-Step vs. Adaptive Runge-Kutta Methods Comparison

| Aspect | Fixed-Step Methods | Adaptive Methods |

|---|---|---|

| Step Size | Constant throughout integration | Dynamically adjusted based on local error estimates |

| Error Control | Global error bounds, requires manual tuning | Local error estimates, automatic control to user-defined tolerance |

| Computational Efficiency | May require excessively small steps for stability | Optimized step sizes reduce total function evaluations |

| Handling Stiff Problems | Poor without manual intervention | Automatically reduces step size in rapidly changing regions |

| Implementation Complexity | Simpler to implement | Requires error estimation and step size control logic |

| Performance on Varying Timescales | Inefficient for problems with multiple timescales | Particularly efficient for problems with varying timescales [29] |

Troubleshooting Guides

Problem: Inconsistent or Unreliable Results with Adaptive ODE Solvers

Symptoms: Solution diverges from expected values, different results between runs with same parameters, or error estimates that don't correlate with actual accuracy.

Diagnosis and Solutions:

Verify Error Estimation Implementation

- For embedded Runge-Kutta methods (e.g., RKF45, Dormand-Prince), ensure you're using the correct difference between higher and lower order solutions: `err = |y_high - y_low|` [28] [29]

- Implement proper error norm calculation that combines absolute and relative tolerances: `error_norm = sqrt(mean((err / (atol + rtol * max(|y_old|, |y_new|)))^2))` [32]

Check Step Size Adjustment Logic

The safety factor (typically 0.8-0.9) prevents excessive step size oscillations [28] [30].

Validate Butcher Tableau Coefficients

Problem: Basin-Hopping Algorithm Trapped in Local Minima

Symptoms: Algorithm fails to find global minimum, consistently returns same suboptimal solution, or shows poor exploration of configuration space.

Diagnosis and Solutions:

Adjust Algorithm Parameters

Table 2: Basin-Hopping Parameter Optimization Guide

| Parameter | Default Value | Optimization Strategy | Effect on Search |
|---|---|---|---|
| T (Temperature) | 1.0 | Set comparable to typical function value differences between minima | Higher T accepts more uphill moves for better exploration |
| stepsize | 0.5 | Choose based on problem domain scale (e.g., 2.5-5% of domain size) | Larger steps facilitate basin escaping, smaller steps refine local search |
| niter | 100 | Increase to 1000+ for complex energy landscapes | More iterations improve probability of finding global minimum |
| interval | 50 | Adjust based on problem dimensionality and complexity | Controls how frequently step size is adapted |
| niter_success | None | Set to stop if no improvement after N iterations | Prevents wasted computation on stagnant searches [9] [8] |

Implement Custom Step Taking Routine

Custom step takers can incorporate domain knowledge for more effective exploration [9].
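As one possible sketch, the step taker below displaces each coordinate by a different length scale, which is a simple way to encode that some degrees of freedom are softer than others. The class name `ScaledStep`, the `scales` values, and the test surface `energy` are illustrative assumptions. Because the object exposes a `stepsize` attribute, `basinhopping` can still adapt the overall step size during the run.

```python
import numpy as np
from scipy.optimize import basinhopping


def energy(x):
    # Hypothetical 2-D test surface with many local minima (not from the source).
    return np.cos(14.5 * x[0] - 0.3) + (x[1] + 0.2) * x[1] + (x[0] + 0.2) * x[0]


class ScaledStep:
    """Random displacement with a separate length scale per coordinate."""

    def __init__(self, scales, stepsize=0.5, seed=None):
        self.scales = np.asarray(scales, dtype=float)
        # basinhopping adjusts this attribute when it is present.
        self.stepsize = stepsize
        self.rng = np.random.default_rng(seed)

    def __call__(self, x):
        half_width = self.stepsize * self.scales
        # Uniform displacement in [-half_width, +half_width] per coordinate.
        return x + self.rng.uniform(-half_width, half_width)


x0 = np.array([1.0, 1.0])
step = ScaledStep(scales=[2.0, 1.0], stepsize=0.5, seed=0)
result = basinhopping(
    energy,
    x0,
    niter=50,
    take_step=step,
    minimizer_kwargs={"method": "L-BFGS-B"},
)
```

For molecular systems, the same pattern could apply larger moves to torsional coordinates than to bond lengths, though the right scales depend on the system.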

Enhance with Parallel Processing

- Implement parallel evaluation of trial structures using multiprocessing or MPI

- Achieve nearly linear speedup for up to 8 concurrent local minimizations [23]

- Particularly beneficial for expensive energy calculations (e.g., DFT, molecular mechanics)

Experimental Protocols & Methodologies

Protocol 1: Implementing Adaptive Runge-Kutta Methods for Molecular Dynamics

Purpose: Accurate integration of equations of motion in molecular dynamics simulations with varying timescales.

Materials:

- System of ordinary differential equations (ODEs) describing molecular motion

- Initial atomic positions and velocities

- Potential energy function and force field parameters

Procedure:

- Select Appropriate Embedded RK Method: Choose based on accuracy requirements and computational constraints (e.g., RKF45 for general use, Cash-Karp for better error control) [29]

- Implement Error Estimation: Use embedded formulas to compute both higher and lower order solutions from shared function evaluations [30]

- Configure Tolerance Parameters: Set `atol` (absolute tolerance) and `rtol` (relative tolerance) based on desired precision [32]

- Initialize Step Size: Choose conservative initial step size, typically h = 0.01 * tol^(1/(p+1)) where p is method order [30]

- Iterate with Adaptive Control:

Validation:

- Compare with analytical solutions for simple systems

- Verify energy conservation in conservative systems

- Test on benchmark problems with known solutions
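The first two validation checks can be sketched with `scipy.integrate.solve_ivp` on a unit harmonic oscillator, where the analytical solution is x(t) = cos(t) and the total energy should stay at 0.5. The test system and tolerance values are illustrative assumptions, not settings from the protocol.

```python
import numpy as np
from scipy.integrate import solve_ivp


def harmonic_oscillator(t, y):
    # y = [position, velocity] for unit mass and unit force constant.
    x, v = y
    return [v, -x]


t_end = 10.0
sol = solve_ivp(
    harmonic_oscillator,
    (0.0, t_end),
    [1.0, 0.0],
    method="RK45",   # embedded Dormand-Prince 5(4) pair
    rtol=1e-8,
    atol=1e-10,
)

# Check 1: compare the final position with the analytical solution cos(t).
position_error = abs(sol.y[0, -1] - np.cos(t_end))

# Check 2: total energy should stay constant for this conservative system.
energy = 0.5 * (sol.y[0] ** 2 + sol.y[1] ** 2)
energy_drift = float(np.max(np.abs(energy - energy[0])))
```

Adaptive Runge-Kutta methods are not symplectic, so a slow energy drift is expected over long trajectories. Tightening `rtol` and `atol` should reduce it.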

Protocol 2: Basin-Hopping for Potential Energy Surface Mapping

Purpose: Locate global minimum and important local minima on potential energy surfaces for molecular systems.

Materials:

- Energy evaluation function (empirical potential, DFT, etc.)

- Initial molecular configuration

- Gradient computation method (analytical or numerical)

Procedure:

Algorithm Configuration:

- Set temperature `T` based on typical energy barriers (often 0.1-10 kJ/mol for molecular systems)

- Choose initial step size appropriate for coordinate scales (typically 0.1-1.0 Å for atomic displacements)

- Select local minimizer (`L-BFGS-B` for efficiency with gradients, `Nelder-Mead` for derivative-free optimization) [8]

Iteration Cycle:

- Perturbation: Randomly displace current coordinates using adaptive step size

- Local Minimization: Apply local optimization to find nearest minimum

- Acceptance Test: Use Metropolis criterion with current temperature to accept or reject new minimum

- Step Size Adaptation: Periodically adjust perturbation size based on acceptance rate [9] [33]
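The four steps of the cycle can be written out directly. The sketch below is a from-scratch illustration, not SciPy's implementation: the function name, the one-dimensional double-well test energy, and the adaptation rule (rescale by 0.9 around a 50% acceptance target every `interval` iterations) are assumptions chosen for clarity.

```python
import numpy as np
from scipy.optimize import minimize


def basin_hopping_sketch(energy, x0, niter=200, T=1.0, stepsize=0.5,
                         interval=50, seed=0):
    rng = np.random.default_rng(seed)
    start = minimize(energy, x0, method="L-BFGS-B")
    x_cur, e_cur = start.x, start.fun
    x_best, e_best = x_cur.copy(), e_cur
    accepted = 0
    for it in range(1, niter + 1):
        # 1. Perturbation: random displacement of the current minimum.
        x_trial = x_cur + rng.uniform(-stepsize, stepsize, size=x_cur.shape)
        # 2. Local minimization: relax to the bottom of the new basin.
        trial = minimize(energy, x_trial, method="L-BFGS-B")
        # 3. Acceptance test: Metropolis criterion on the minimized energies.
        delta = trial.fun - e_cur
        if delta <= 0 or rng.random() < np.exp(-delta / T):
            x_cur, e_cur = trial.x, trial.fun
            accepted += 1
            if e_cur < e_best:
                x_best, e_best = x_cur.copy(), e_cur
        # 4. Step size adaptation: grow if accepting often, shrink otherwise.
        if it % interval == 0:
            stepsize = stepsize / 0.9 if accepted / interval > 0.5 else stepsize * 0.9
            accepted = 0
    return x_best, e_best


def double_well(x):
    # Hypothetical test energy: two minima, the one near x = -1 is lower.
    return (x[0] ** 2 - 1.0) ** 2 + 0.3 * x[0]


x_best, e_best = basin_hopping_sketch(double_well, np.array([1.0]), stepsize=2.0)
```

Starting in the higher well near x = +1, the search needs a perturbation large enough to cross the barrier, which is why the example uses a step size comparable to the distance between the two minima.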

Convergence Detection:

- Monitor lowest energy found over iterations

- Use `niter_success` to stop after no improvement for specified iterations

- Implement callback function to track progress and collect minima [9]
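A minimal sketch of these convergence tools with `scipy.optimize.basinhopping` follows. The test surface `energy`, the list name `found`, and the parameter values are illustrative assumptions. The callback receives each minimum's coordinates, its energy, and whether it was accepted.

```python
import numpy as np
from scipy.optimize import basinhopping


def energy(x):
    # Hypothetical 2-D test surface with many local minima (not from the source).
    return np.cos(14.5 * x[0] - 0.3) + (x[1] + 0.2) * x[1] + (x[0] + 0.2) * x[0]


found = []  # (energy, coordinates, accepted) for every minimum visited


def record_minimum(x, f, accepted):
    # Copy x because the optimizer may reuse the array between calls.
    found.append((float(f), np.array(x, copy=True), bool(accepted)))
    # Returning None (or False) lets the search continue.


x0 = np.array([1.0, 1.0])
result = basinhopping(
    energy,
    x0,
    niter=200,
    T=1.0,
    stepsize=0.5,
    minimizer_kwargs={"method": "L-BFGS-B"},
    callback=record_minimum,
    niter_success=50,  # stop if the best minimum is unchanged for 50 iterations
)
lowest_seen = min(f for f, _, _ in found)
```

The `found` list can then be passed to the analysis stage, while `result.x` and `result.fun` hold the lowest minimum the run kept.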

Analysis:

- Cluster identical minima using structural similarity measures

- Analyze transition states between important minima

- Calculate thermodynamic properties from minima distribution
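For the clustering step, one simple approach is to treat two minima as identical when both their energies and their coordinates agree within tolerances. The sketch below assumes raw coordinate vectors that are directly comparable. Real molecular structures would first need alignment and handling of atom permutations, which this example omits. The function name and tolerance values are illustrative.

```python
import numpy as np


def unique_minima(minima, energy_tol=1e-4, coord_tol=1e-2):
    """Group (energy, coords) pairs into distinct minima, sorted by energy."""
    clusters = []
    for e, coords in sorted(minima, key=lambda m: m[0]):
        coords = np.asarray(coords, dtype=float)
        for c in clusters:
            # Root-mean-square deviation between the two coordinate vectors.
            rmsd = np.sqrt(np.mean((coords - c["coords"]) ** 2))
            if abs(e - c["energy"]) < energy_tol and rmsd < coord_tol:
                c["count"] += 1
                break
        else:
            # No existing cluster matched: this is a new distinct minimum.
            clusters.append({"energy": e, "coords": coords, "count": 1})
    return clusters


visited = [(-1.0, [0.0, 0.0]), (-1.00001, [0.001, 0.0]), (0.5, [1.0, 1.0])]
clusters = unique_minima(visited)
```

The `count` field records how often each minimum was visited, which gives a rough picture of how the search distributed its effort.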

Visualization of Computational Workflows

Adaptive Runge-Kutta Integration Process

Diagram 1: Adaptive RK Integration Workflow

Basin-Hopping Algorithm Structure

Diagram 2: Basin-Hopping Algorithm Flow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Potential Energy Surface Research

| Tool/Component | Function/Purpose | Implementation Example |
|---|---|---|
| Adaptive ODE Solvers | Numerical integration of equations of motion with error control | scipy.integrate.solve_ivp, scipy.integrate.ode with dopri5/dop853 [31] |
| Embedded RK Methods | Efficient error estimation for step size control | Dormand-Prince 5(4), Runge-Kutta-Fehlberg (RKF45), Cash-Karp [29] |
| Butcher Tableaux | Coefficient sets for Runge-Kutta methods | Pre-defined coefficients for various order methods [32] [29] |
| Global Optimization | Locating global minima on complex energy landscapes | scipy.optimize.basinhopping with adaptive step size [9] [8] |
| Local Minimizers | Efficient local optimization within basins | L-BFGS-B, Nelder-Mead, BFGS algorithms [8] |
| Error Norm Calculators | Combined absolute and relative error estimation | RMS norm with tolerance blending: `error = sqrt(mean((err/(atol+rtol*\|y\|))^2))` [32] |
| Step Size Controllers | Adaptive step size adjustment logic | Proportional control with safety factors and bounds [28] [30] |
| Parallel Evaluation | Concurrent processing of multiple configurations | Multiprocessing, MPI for parallel basin-hopping [23] |

Parallel Computing Strategies for Accelerated Structure Evaluation

Frequently Asked Questions (FAQs)

Q1: What is the primary benefit of using parallel computing in my Basin Hopping experiments?

Parallel computing primarily accelerates the most computationally expensive part of the Basin Hopping algorithm: the local minimization of multiple candidate structures. By evaluating several candidates simultaneously, you can achieve a significant reduction in wall-clock time, allowing you to perform more iterations or tackle larger systems within a feasible timeframe [16].

Q2: My parallel Basin Hopping code is not yielding a linear speedup. What could be the cause?

Perfect linear speedup is often not achieved due to overhead. Common causes include:

- Synchronization Overhead: The parallel processes must synchronize at the end of each Basin Hopping step. As the number of parallel evaluations increases, this overhead becomes more pronounced, leading to diminishing returns [16].

- Load Imbalance: If the local minimization of different candidate structures takes varying amounts of time, some processes will finish earlier and remain idle, wasting computational resources.

- Hardware Limitations: Contention for shared resources like memory bandwidth or cache can limit performance gains.

Q3: How do I choose the number of parallel candidates to evaluate per step?

The optimal number is system-dependent. Start with a number equal to the available physical CPU cores. Research suggests that evaluating up to 8 candidates in parallel often yields near-linear speedups, but performance gains typically plateau beyond this point due to synchronization overhead [16]. We recommend empirical testing to find the sweet spot for your specific problem.

Q4: Can I combine parallel candidate evaluation with an adaptive step size?

Yes, and this is a highly recommended strategy. The two techniques are complementary. The adaptive step size manages the exploration of the potential energy surface, while parallel evaluation accelerates the process. The SciPy basinhopping function allows you to use a custom take_step function to implement adaptive step-size control while leveraging parallel evaluations for the local minimization phase [34] [16].

Q5: I am getting different results each time I run my parallel Basin Hopping code. Is this a bug?

Not necessarily. The Basin Hopping algorithm is inherently stochastic due to its random perturbation step [5]. Different random number sequences will lead to different trajectories on the potential energy surface. You should run multiple independent runs (with different random seeds) to build confidence that you have located the global minimum or a good low-energy structure [10].

Troubleshooting Guides

Problem: The algorithm is converging to a local minimum too quickly. The algorithm appears to be "trapped" and is not exploring the energy landscape effectively.

- Potential Cause 1: Step size is too small.

- Solution: Increase the `stepsize` parameter. A larger step size allows the algorithm to make bigger jumps, potentially escaping the current funnel of attraction. SciPy's `basinhopping` has a default `stepsize` of 0.5 [34]. Implement an adaptive step size mechanism that increases the step size if the acceptance rate is too high [16].

- Potential Cause 2: Temperature parameter is too low.

- Potential Cause 3: Insufficient exploration.

- Solution: Increase the number of iterations (`niter`). For complex landscapes, more sampling is required. Use the parallel framework to perform more iterations in the same amount of time. Alternatively, run multiple independent parallel searches from different starting points [10].

Problem: The computation is still too slow, even with parallelization. The parallel efficiency is low, or the local minimizations themselves are the bottleneck.

- Potential Cause 1: The local minimizer is inefficient for your system.

- Potential Cause 2: The cost of inter-process communication is too high.

- Solution: Ensure that you are not moving large amounts of data (like entire trajectory histories) between processes during each evaluation. The function being minimized should be designed to be as lightweight as possible for the parallel tasks. Profiling your code can help identify these bottlenecks.

- Potential Cause 3: The problem dimension is very high.

- Solution: Consider using a population-based variant of Basin Hopping (BHPOP), which maintains and evolves multiple solutions in parallel. This can sometimes provide better exploration in high-dimensional spaces compared to the standard single-walker approach [5].

Problem: The algorithm is not accepting any new structures. The acceptance rate is zero or very close to zero.

- Potential Cause 1: Step size is too large.

- Solution: Reduce the `stepsize`. An excessively large step size may be perturbing candidates into regions of the potential energy surface that are not energetically favorable, leading to rejections. The adaptive step size mechanism should also decrease the step size if the acceptance rate is too low [16].

- Potential Cause 2: The system is frozen at a very deep minimum.

- Solution: This can occur with "funnel-like" landscapes. Use the `niter_success` parameter, or a callback function, to stop the routine after a number of iterations without improvement [34]. You can also implement a more complex acceptance test (`accept_test`) to force acceptance under certain conditions to escape the trap [34].
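One way to implement such an acceptance test is sketched below. SciPy calls `accept_test` with the keyword arguments `f_new`, `x_new`, `f_old`, and `x_old`, and treats the return value `"force accept"` as an override of the Metropolis test. The class name `EscapeTrap`, the `patience` threshold, and the test surface are illustrative assumptions. The counter tracks consecutive non-improving proposals as a simple proxy for being stuck.

```python
import numpy as np
from scipy.optimize import basinhopping


class EscapeTrap:
    """Force-accept a move after `patience` consecutive non-improving proposals."""

    def __init__(self, patience=20):
        self.patience = patience
        self.stalled = 0

    def __call__(self, f_new, x_new, f_old, x_old):
        if f_new < f_old:
            self.stalled = 0
            return True
        self.stalled += 1
        if self.stalled >= self.patience:
            self.stalled = 0
            return "force accept"  # overrides the Metropolis criterion
        return True  # otherwise defer to the Metropolis criterion


def energy(x):
    # Hypothetical 2-D test surface with many local minima (not from the source).
    return np.cos(14.5 * x[0] - 0.3) + (x[1] + 0.2) * x[1] + (x[0] + 0.2) * x[0]


result = basinhopping(
    energy,
    np.array([1.0, 1.0]),
    niter=100,
    T=0.01,  # deliberately low so uphill moves are rarely accepted
    accept_test=EscapeTrap(patience=10),
    minimizer_kwargs={"method": "L-BFGS-B"},
)
```

Forced moves can land on higher-energy minima, but the returned `result` still reports the lowest minimum found during the run.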

Experimental Protocols & Performance Data

Protocol: Implementing Parallel Basin Hopping with SciPy

This protocol outlines how to set up a basic parallel Basin Hopping search using Python's SciPy library.

- Define the Objective Function: The function to minimize, typically the potential energy of the molecular system.

- Configure the Local Minimizer: Create a `minimizer_kwargs` dictionary. This is where you specify the local optimization method and any additional arguments.

- Set Up Parallel Processing (Embarrassing Parallelism): To parallelize the evaluation of multiple trial candidates, you would typically use a Python multiprocessing `Pool` outside the `basinhopping` call. The SciPy `basinhopping` function itself does not directly handle parallelism for candidate evaluation. A common pattern is to run several independent `basinhopping` instances in parallel with different random seeds, or to customize the `take_step` function to generate several candidates that are then minimized in parallel using a pool, as demonstrated in recent research [16].

- Execute the Search: Call the `basinhopping` function with your parameters.

- Analyze Results: The `result` object contains the optimized coordinates and the value of the objective function at the minimum.
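The first pattern, several independent searches with different seeds, can be sketched as follows. The names `run_search` and `best_of`, the test surface, and the parameter values are illustrative assumptions. The example runs serially through the built-in `map` so that it works anywhere, and the closing comment shows how a `multiprocessing.Pool` could be substituted. The `seed` keyword is the long-standing name for this argument, and newer SciPy releases may document it as `rng`.

```python
import numpy as np
from scipy.optimize import basinhopping


def energy(x):
    # Hypothetical 2-D test surface with many local minima (not from the source).
    return np.cos(14.5 * x[0] - 0.3) + (x[1] + 0.2) * x[1] + (x[0] + 0.2) * x[0]


def run_search(seed):
    """One independent basin-hopping run with its own start point and seed."""
    rng = np.random.default_rng(seed)
    x0 = rng.uniform(-1.0, 1.0, size=2)
    result = basinhopping(
        energy,
        x0,
        niter=50,
        minimizer_kwargs={"method": "L-BFGS-B"},
        seed=seed,
    )
    return result.fun, result.x


def best_of(seeds, map_fn=map):
    """Run one search per seed via map_fn and keep the lowest-energy result."""
    results = list(map_fn(run_search, seeds))
    return min(results, key=lambda r: r[0])


best_f, best_x = best_of(range(4))

# To spread the runs over worker processes, pass a pool's map instead:
#   from multiprocessing import Pool
#   if __name__ == "__main__":
#       with Pool(4) as pool:
#           best_f, best_x = best_of(range(8), map_fn=pool.map)
```

Because the runs never communicate, this pattern has no per-step synchronization cost, at the price of not sharing information between walkers.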

Performance Analysis of Parallel Evaluation

The following table summarizes quantitative data on the speedup achieved by evaluating multiple candidate structures in parallel within a Basin Hopping run, as reported in recent literature [16].

Table 1: Speedup Achieved by Parallel Candidate Evaluation in Basin Hopping

| Number of Concurrent Candidates | Relative Wall-clock Time | Observed Speedup | Notes |

|---|---|---|---|

| 1 | 1.0x | 1.0x | Baseline (serial execution) |

| 2 | ~0.55x | ~1.8x | |

| 4 | ~0.28x | ~3.6x | |

| 8 | ~0.14x | ~7.1x | Close to linear (ideal) speedup |

| 16 | ~0.09x | ~11.1x | Diminishing returns due to synchronization and communication overhead |
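Parallel efficiency, defined as speedup divided by the number of concurrent candidates, makes the diminishing returns easier to see. The short calculation below uses the approximate speedups from Table 1. The efficiency figures are derived here and are not reported in the cited source.

```python
# Approximate speedups from Table 1, keyed by number of concurrent candidates.
speedup = {1: 1.0, 2: 1.8, 4: 3.6, 8: 7.1, 16: 11.1}

# Efficiency = speedup / number of concurrent candidates (1.0 is ideal).
efficiency = {n: s / n for n, s in speedup.items()}

for n, e in efficiency.items():
    print(f"{n:>2} candidates: efficiency {e:.2f}")
```

Efficiency stays near 0.9 up to 8 candidates and falls to about 0.69 at 16, consistent with the plateau described in the notes column.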

Workflow Visualization