AI and Machine Learning in Small Molecule Discovery: Revolutionizing Drug Development for Researchers

This article provides a comprehensive analysis of how Artificial Intelligence (AI) and Machine Learning (ML) are transforming small molecule drug discovery.

Abstract

This article provides a comprehensive analysis of how Artificial Intelligence (AI) and Machine Learning (ML) are transforming small molecule drug discovery. Tailored for researchers, scientists, and drug development professionals, it explores the foundational concepts, key methodologies, and practical applications of AI/ML in identifying and optimizing novel therapeutics. The article details common computational and data challenges, offers strategies for model optimization, and critically examines validation frameworks and comparative performance against traditional methods. By synthesizing current trends and real-world case studies, it serves as an essential guide for integrating AI-driven approaches into the preclinical pipeline.

From Hype to Hypothesis: Understanding the Core AI/ML Paradigms in Small Molecule Discovery

Within the broader thesis on AI and ML in small molecule discovery, it is critical to delineate the technological landscape. AI in drug discovery refers to computational systems that perform tasks normally requiring human intelligence. Machine Learning (ML) is its core subset, in which algorithms learn patterns from data rather than following explicitly programmed rules. This application note details key methodologies and experimental protocols for implementing ML in small molecule discovery pipelines.

Table 1: Core AI/ML Approaches in Small Molecule Discovery

| Paradigm | Sub-category | Primary Application in Drug Discovery | Typical Model/Algorithm Examples | Reported Performance Metrics (Representative) |
|---|---|---|---|---|
| Supervised Learning | Regression | Quantitative Structure-Activity Relationship (QSAR) modeling for potency prediction. | Random Forest, Gradient Boosting Machines (GBM), Support Vector Regression (SVR) | R²: 0.6-0.8 on curated bioactivity datasets (e.g., ChEMBL). |
| Supervised Learning | Classification | Binary classification of molecules as active/inactive, or for ADMET property prediction. | Deep Neural Networks (DNNs), XGBoost, Random Forest | AUC-ROC: 0.8-0.9 for hERG toxicity classification. |
| Unsupervised Learning | Clustering & Dimensionality Reduction | Compound library exploration, hit series identification, chemical space visualization. | t-SNE, UMAP, K-Means Clustering | Enables visualization of high-dimensional chemical descriptors in 2D. |
| Generative AI | Deep Generative Models | De novo molecule generation, library design, molecular optimization. | Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), Transformer-based (e.g., GPT for molecules) | Generates >95% valid and novel molecules; can optimize multiple properties simultaneously. |
| Reinforcement Learning | Model-based Optimization | Multi-objective molecular optimization (potency, solubility, synthesizability). | Policy Networks, Q-Learning | Successfully navigates chemical space to propose molecules with improved property profiles over initial leads. |

Detailed Protocols

Protocol 1: Building a Supervised Learning Model for Activity Prediction

Objective: To train a binary classifier predicting biological activity for a given target using public bioactivity data.

- Data Curation: Source IC50/Ki data for a target (e.g., kinase) from a database like ChEMBL. Apply a threshold (e.g., IC50 < 1 µM = Active, > 10 µM = Inactive). Remove ambiguous middle-range values. Ensure chemical standardization (e.g., using RDKit: canonical SMILES, removal of salts, tautomer normalization).

- Feature Representation: Compute molecular descriptors (e.g., RDKit 2D descriptors) or hashed circular fingerprints (e.g., ECFP4, 1024-bit). Split data into training (70%), validation (15%), and test (15%) sets using stratified splitting.

- Model Training: Implement a Gradient Boosting Classifier (e.g., XGBoost). Use the validation set for hyperparameter optimization (grid search or random search) over `max_depth`, `learning_rate`, and `n_estimators`. Monitor AUC-ROC.

- Evaluation: Apply the final model to the held-out test set. Report AUC-ROC, Precision-Recall AUC, and F1-score. Use permutation importance to assess feature importance.
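
The labeling rule and the stratified 70/15/15 split from this protocol can be sketched in plain Python. This is a minimal illustration under stated assumptions: the function names `label_by_ic50` and `stratified_split` and the IC50 values are hypothetical, and a real pipeline would more likely rely on RDKit for standardization and scikit-learn for splitting.

```python
import random

def label_by_ic50(ic50_um, active_below=1.0, inactive_above=10.0):
    """Return 1 (active), 0 (inactive), or None for the ambiguous middle range."""
    if ic50_um < active_below:
        return 1
    if ic50_um > inactive_above:
        return 0
    return None

def stratified_split(labels, fractions=(0.70, 0.15, 0.15), seed=0):
    """Split indices into train/validation/test while preserving class ratios."""
    rng = random.Random(seed)
    train, valid, test = [], [], []
    for cls in sorted(set(labels)):
        idx = [i for i, y in enumerate(labels) if y == cls]
        rng.shuffle(idx)
        n_train = round(fractions[0] * len(idx))
        n_valid = round(fractions[1] * len(idx))
        train.extend(idx[:n_train])
        valid.extend(idx[n_train:n_train + n_valid])
        test.extend(idx[n_train + n_valid:])
    return train, valid, test

# Hypothetical IC50 values in µM; the 1-10 µM range is dropped as ambiguous.
ic50_values = [0.05, 0.4, 0.9, 3.0, 7.5, 12.0, 50.0, 0.2, 25.0, 100.0]
labels = [y for y in map(label_by_ic50, ic50_values) if y is not None]

# Hypothetical imbalanced dataset: 20 actives followed by 80 inactives.
train_idx, val_idx, test_idx = stratified_split([1] * 20 + [0] * 80)
```

Splitting per class keeps the active fraction roughly equal across the three sets, which matters when actives are rare.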

Protocol 2: De Novo Molecule Generation Using a VAE

Objective: To generate novel, target-focused molecules using a conditioned Variational Autoencoder.

- Dataset Preparation: Assemble a dataset of SMILES strings (e.g., known actives for a target and a large background set like ZINC). Tokenize SMILES strings into characters or use Byte Pair Encoding (BPE).

- Model Architecture: Construct a VAE with an encoder (RNN or Transformer) mapping SMILES to a latent vector (z) and a decoder reconstructing SMILES from z. Include a conditional layer that accepts a target property or activity label as input.

- Training: Train the model to minimize reconstruction loss (cross-entropy) and KL-divergence loss. Use teacher forcing for the decoder. Condition the model on the "active" label for the target of interest.

- Sampling & Post-processing: Sample random latent vectors and decode them into novel SMILES. Filter generated molecules for validity (RDKit), uniqueness, and chemical feasibility. Score them with a separate activity prediction model (see Protocol 1).
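
The validity, uniqueness, and novelty checks in the last step can be expressed as simple ratios. The sketch below is illustrative only: `generation_metrics` is a hypothetical helper, and `toy_is_valid` is a placeholder that checks parentheses rather than chemistry. In practice the validity check would be an RDKit parse, and SMILES would be canonicalized before comparison.

```python
def generation_metrics(generated, training_set, is_valid):
    """Compute validity, uniqueness, and novelty for a list of generated SMILES."""
    valid = [s for s in generated if is_valid(s)]
    unique = set(valid)
    novel = unique - set(training_set)
    return {
        "validity": len(valid) / len(generated) if generated else 0.0,
        "uniqueness": len(unique) / len(valid) if valid else 0.0,
        "novelty": len(novel) / len(unique) if unique else 0.0,
    }

def toy_is_valid(smiles):
    """Placeholder check (balanced parentheses only); not a real chemistry parser."""
    depth = 0
    for ch in smiles:
        depth += (ch == "(") - (ch == ")")
        if depth < 0:
            return False
    return depth == 0 and len(smiles) > 0

# Hypothetical generated batch and training set.
generated = ["CCO", "CCO", "CC(C)O", "CC(C", "c1ccccc1"]
metrics = generation_metrics(generated, {"CCO"}, toy_is_valid)
```

Each ratio uses the previous stage as its denominator, so uniqueness is measured among valid molecules and novelty among unique ones.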

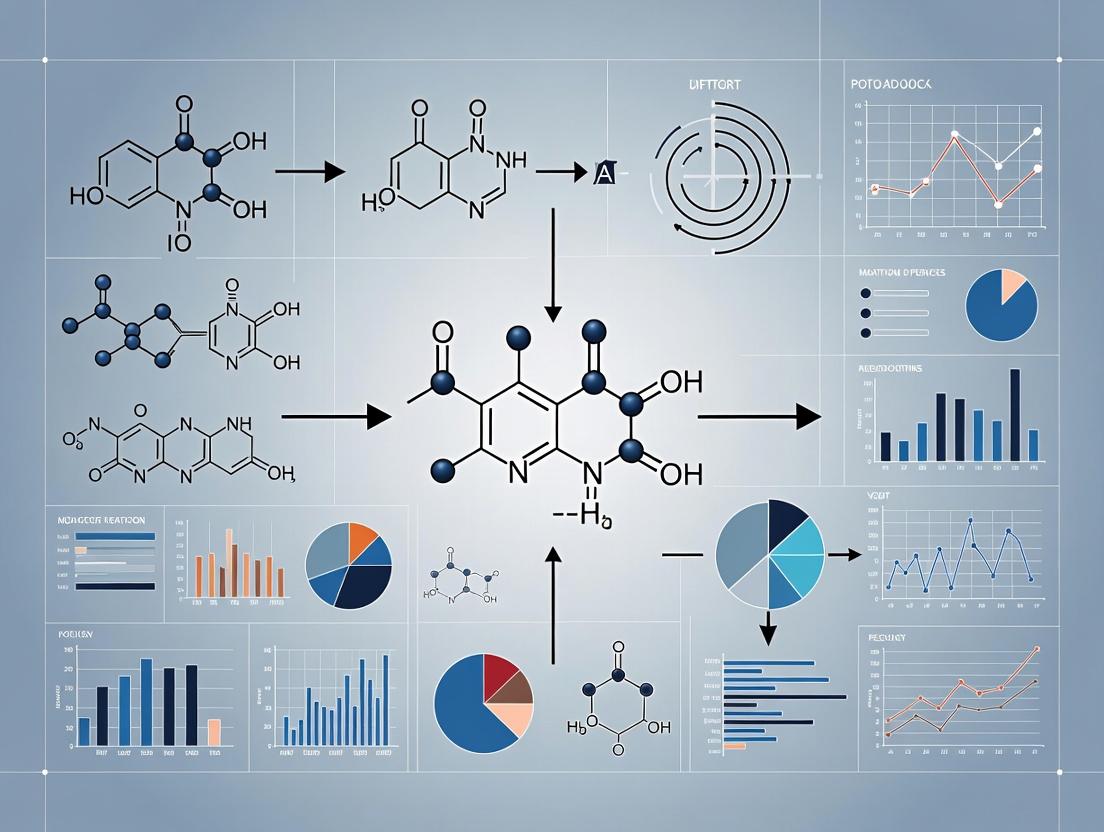

Diagrams

Title: AI/ML Workflow in Small Molecule Discovery

Title: Conditional VAE for Molecule Generation

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for AI/ML-Enabled Drug Discovery

| Item/Category | Function/Description | Example Tools/Libraries |
|---|---|---|
| Chemical Databases | Provide structured, annotated bioactivity and molecular structure data for model training and validation. | ChEMBL, PubChem, BindingDB, ZINC |
| Cheminformatics Toolkits | Enable chemical standardization, descriptor calculation, fingerprint generation, and basic molecular operations. | RDKit, OpenBabel, CDK (Chemistry Development Kit) |
| ML/DL Frameworks | Provide the foundational libraries for building, training, and deploying machine learning and deep learning models. | PyTorch, TensorFlow, scikit-learn, XGBoost |
| Specialized ML Libraries | Offer pre-built models and utilities specifically for chemical and biological data. | DeepChem, Chemprop, DGL-LifeSci |
| High-Performance Computing (HPC) | Infrastructure to handle computationally intensive model training, particularly for deep learning and large-scale virtual screening. | GPU clusters (NVIDIA), Cloud platforms (AWS, GCP, Azure) |
| Experiment Management | Track experiments, hyperparameters, and results to ensure reproducibility and efficient collaboration. | Weights & Biases (W&B), MLflow, TensorBoard |
| Visualization Software | Analyze and interpret model results, chemical space, and structural data. | Matplotlib, Seaborn, Plotly, RDKit molecular visualizer |

The computational discovery of small molecules has undergone a revolutionary transformation, driven by advancements in artificial intelligence (AI) and machine learning (ML). This evolution represents a core pillar of modern AI-driven molecular discovery research, moving from simple statistical correlations to the autonomous generation of novel molecular entities.

Key Historical Milestones:

- 1960s: Advent of Quantitative Structure-Activity Relationships (QSAR), establishing the principle that biological activity can be correlated with calculable molecular descriptors.

- 1990s-2000s: Rise of ligand- and structure-based virtual screening, utilizing molecular docking and pharmacophore models.

- 2010s: Proliferation of deep learning (DL) for molecular property prediction (e.g., using graph neural networks).

- 2020s: Dominance of deep generative models (e.g., VAEs, GANs, Transformers, Diffusion Models) for de novo molecular design.

Quantitative Data & Performance Comparison

Table 1: Evolution of Key Paradigms in Computational Molecular Design

| Paradigm (Era) | Core Methodology | Typical Molecular Representation | Key Advantage | Primary Limitation | Benchmark (DRD2 Actives)* Hit Rate (%) |
|---|---|---|---|---|---|
| Classical QSAR (1960-1990) | Multivariate Linear Regression | Hand-crafted 2D Descriptors (e.g., logP, MW) | Interpretable, simple models | Limited to congeneric series, poor extrapolation | < 5% |
| Virtual Screening (1990-2010) | Molecular Docking / Pharmacophore | 3D Conformations & Chemical Features | Leverages protein structure, broader scope | Dependent on accuracy of scoring functions | 5-15% |
| Deep Learning (Predictive) (2010-Present) | Graph Neural Networks (GNNs) | Atom/Bond Graph | Superior predictive accuracy on complex data | Requires large labeled datasets; not generative | 10-25% (for classification) |
| Deep Generative Models (2018-Present) | VAEs, GANs, Transformers, Diffusion | SMILES Strings, Graphs, 3D Point Clouds | De novo design, exploration of vast chemical space | Complex training, potential for invalid structures | 20-40% |

*Note: DRD2 (Dopamine Receptor D2) is a common benchmark for generative model validation. Reported hit rates are approximate and synthesized from recent literature (e.g., datasets from GuacaMol, MOSES).

Table 2: Comparison of Contemporary Deep Generative Model Architectures

| Model Type | Example Architectures | Representation | Training Mechanism | Key Strength | Challenge |
|---|---|---|---|---|---|
| Chemical Language Models | SMILES-based RNNs, Transformers (e.g., MolGPT) | SMILES String | Autoregressive prediction | Captures syntactic rules; scales to large corpora | Invalid SMILES generation, sequence bias |
| Graph-Based Generative | GraphVAE, MolGAN, JT-VAE | Molecular Graph | Variational Inference / Adversarial | Native representation; validity can be enforced by construction (e.g., JT-VAE) | Computational complexity, scalability |
| 3D & Geometry-Aware | Equivariant GNNs, Diffusion Models | 3D Coordinates / Surfaces | Score-based generative modeling | Explicit modeling of 3D interactions, crucial for docking | High data/compute requirements |

Experimental Protocols

Protocol 3.1: Classical QSAR Model Development (A Historical Baseline)

Objective: To build a predictive QSAR model for a congeneric series of inhibitors. Materials: See "Scientist's Toolkit" (Section 5). Procedure:

- Dataset Curation: Assemble a homogeneous set of 50-100 molecules with measured biological activity (e.g., IC50). Ensure a congeneric core structure.

- Descriptor Calculation: Using RDKit or MOE, compute a set of 200+ molecular descriptors (e.g., topological, electronic, hydrophobic).

- Data Preprocessing: a) Convert IC50 to pIC50 (-log10 of the IC50 expressed in mol/L). b) Remove near-constant descriptors. c) Scale remaining descriptors (Z-score normalization).

- Feature Selection: Apply a feature selection algorithm (e.g., Genetic Algorithm, Stepwise Regression) to reduce descriptors to 3-5 most relevant.

- Model Building: Perform Multiple Linear Regression (MLR) using the selected descriptors: `pIC50 = k1*Desc1 + k2*Desc2 + ... + C`.

- Validation: Use Leave-One-Out (LOO) or 5-fold cross-validation. Report key metrics: R², Q² (cross-validated R²), and root mean square error (RMSE).

- Interpretation: Analyze coefficient signs and magnitudes to propose a physicochemical profile for optimal activity.
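
The pIC50 conversion and the leave-one-out Q² from this procedure can be illustrated with a deliberately reduced case. The sketch below assumes a single descriptor instead of the 3-5 used in the protocol, and uses one common definition of Q² (1 minus PRESS over the total sum of squares). The function names are hypothetical.

```python
import math

def pic50_from_nm(ic50_nm):
    """Convert an IC50 given in nM to pIC50 = -log10(IC50 in mol/L)."""
    return -math.log10(ic50_nm * 1e-9)

def fit_line(x, y):
    """Ordinary least squares for y = k*x + c with a single descriptor."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    k = sxy / sxx
    return k, mean_y - k * mean_x

def q2_loo(x, y):
    """Leave-one-out Q^2: refit without each point, then predict that point."""
    press = 0.0
    for i in range(len(x)):
        k, c = fit_line(x[:i] + x[i + 1:], y[:i] + y[i + 1:])
        press += (y[i] - (k * x[i] + c)) ** 2
    mean_y = sum(y) / len(y)
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return 1.0 - press / ss_tot

# Hypothetical descriptor values (e.g., logP) and IC50 measurements in nM.
descriptor = [1.0, 1.8, 2.5, 3.1, 3.9, 4.4]
pic50 = [pic50_from_nm(v) for v in [5000.0, 2100.0, 800.0, 420.0, 90.0, 60.0]]
q2 = q2_loo(descriptor, pic50)
```

A Q² well below the fitted R² is the usual warning sign of overfitting in small congeneric series.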

Protocol 3.2: Training a Modern Molecular Generative Model (VGAE Example)

Objective: To train a Variational Graph Autoencoder (VGAE) for generating novel molecules with targeted properties. Materials: See "Scientist's Toolkit" (Section 5). Procedure:

- Dataset Preparation: Download a large, curated dataset (e.g., ZINC250k, ~250,000 drug-like molecules). Preprocess: a) Remove duplicates and inorganic compounds. b) Standardize tautomers/charges. c) Convert all molecules to canonical SMILES and then to graph representations (nodes=atoms, edges=bonds).

- Model Architecture Definition:

- Encoder: A Graph Convolutional Network (GCN) maps the input graph to a latent distribution. It outputs two vectors for each graph: mean (μ) and log-variance (logσ²) defining a Gaussian in latent space.

- Sampler: The reparameterization trick: `z = μ + ε * exp(0.5 * logσ²)`, where ε ~ N(0, I). The factor 0.5 converts the log-variance into the standard deviation σ.

- Decoder: A multi-layer perceptron (MLP) maps the latent vector `z` to a probabilistic fully-connected graph. A following network (e.g., another GNN) refines this into a final molecular graph.

- Training Loop: Train for 100-200 epochs.

- Loss Function: Total Loss = Reconstruction Loss (cross-entropy on bonds/atoms) + β * KL Divergence Loss (between latent distribution and N(0,1)).

- Optimization: Use Adam optimizer (lr=0.001), with mini-batch training.

- Conditional Generation: To bias generation towards a property (e.g., high solubility):

- Append a Property Predictor network (a classifier/regressor) to the encoder output.

- During training, include the property prediction loss.

- For generation, sample a latent vector `z` and use the decoder, or perform gradient ascent in latent space to maximize the predicted property.

- Post-Generation Processing & Validation:

- Use RDKit to convert generated graphs to SMILES and sanitize them.

- Filter for chemical validity, synthetic accessibility (SA Score), and drug-likeness (QED).

- Validate novelty (not in training set) and diversity of generated structures.
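
The sampler and the β-weighted loss from the procedure above can be written out numerically. This is a framework-free sketch for a single latent vector, assuming a diagonal Gaussian posterior and a standard normal prior. The function names are hypothetical, and a real model would implement the same arithmetic with PyTorch or TensorFlow tensors.

```python
import math
import random

def reparameterize(mu, logvar, rng):
    """Sample z = mu + eps * sigma, where sigma = exp(0.5 * logvar) and eps ~ N(0, 1)."""
    return [m + rng.gauss(0.0, 1.0) * math.exp(0.5 * lv) for m, lv in zip(mu, logvar)]

def kl_to_standard_normal(mu, logvar):
    """Closed-form KL(N(mu, sigma^2) || N(0, 1)), summed over latent dimensions."""
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, logvar))

def vae_loss(reconstruction_loss, mu, logvar, beta=1.0):
    """Total loss = reconstruction loss + beta * KL divergence."""
    return reconstruction_loss + beta * kl_to_standard_normal(mu, logvar)

# Hypothetical encoder outputs for one molecule with a 3-dimensional latent space.
mu = [0.3, -1.2, 0.0]
logvar = [-0.5, 0.1, 0.0]
z = reparameterize(mu, logvar, random.Random(42))
loss = vae_loss(reconstruction_loss=2.0, mu=mu, logvar=logvar, beta=0.5)
```

Sampling through `mu` and `logvar` rather than drawing `z` directly is what lets gradients reach the encoder during training.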

Visualization: Key Workflows and Relationships

Diagram 1: Evolution of Molecular AI Paradigms

Diagram 2: VGAE Training & Generation Workflow

Diagram 3: Conditional Generation via Latent Space Optimization

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions & Software for AI-Driven Molecular Discovery

| Category | Item / Software | Primary Function & Explanation |
|---|---|---|
| Core Cheminformatics | RDKit (Open Source) | Fundamental library for molecular manipulation, descriptor calculation, SMILES I/O, and substructure searching. |
| Classical Modeling | MOE, Schrödinger Suite | Commercial software for comprehensive molecular modeling, QSAR, pharmacophore design, and docking studies. |
| Deep Learning Frameworks | PyTorch, TensorFlow | Flexible open-source frameworks for building and training deep neural networks, including GNNs and generative models. |
| GNN & Generative Libraries | PyTorch Geometric (PyG), DGL | Specialized libraries built on PyTorch/TF for efficient implementation of Graph Neural Networks. |
| Molecular Generation | GuacaMol, MOSES | Benchmarking frameworks and baselines for evaluating generative models (provides datasets, metrics, and reference models). |
| Datasets | ZINC, ChEMBL, PubChem | Large-scale, publicly available databases of molecules and associated bioactivity data for training and testing models. |
| Synthetic Assessment | SA Score, RA Score, ASKCOS | Tools to estimate the synthetic accessibility (SA) or propose retrosynthetic pathways for generated molecules. |
| Property Prediction | ADMET Predictors (e.g., ADMETlab, pkCSM) | Web servers or standalone tools to predict pharmacokinetic and toxicity profiles of generated molecules in silico. |

Application Notes

Core Conceptual Framework in Small Molecule Discovery

The systematic application of AI in drug discovery hinges on a clear understanding of learning paradigms and model objectives. Supervised Learning requires labeled datasets (e.g., molecules annotated with binding affinity or toxicity) to train models for Predictive AI tasks, such as quantitative structure-activity relationship (QSAR) modeling. Unsupervised Learning identifies inherent patterns in unlabeled data (e.g., chemical libraries) and is foundational for Generative AI, which creates novel molecular structures. The integration of these approaches accelerates the hit-to-lead process by predicting properties of known chemical spaces and generating optimized candidates for novel targets.

Quantitative Performance Comparison

Recent benchmark studies (2023-2024) highlight the performance of different AI approaches in standard small molecule discovery tasks.

Table 1: Performance Metrics of AI Approaches in Virtual Screening

| AI Approach | Primary Learning Type | Typical Use Case | Avg. Enrichment Factor (EF₁%) | Avg. AUC-ROC | Key Advantage |
|---|---|---|---|---|---|
| Graph Neural Network (GNN) | Supervised/Predictive | Activity Prediction | 28.4 | 0.82 | High accuracy for labeled data |
| Variational Autoencoder (VAE) | Unsupervised/Generative | De novo Molecule Generation | N/A | N/A | High novelty & synthetic accessibility |
| Reinforcement Learning (RL) | Hybrid/Generative | Multi-parameter Optimization | 19.7* | 0.75* | Optimizes for complex reward functions |
| Random Forest (RF) | Supervised/Predictive | Early-stage ADMET Prediction | N/A | 0.79 | Interpretability, handles small datasets |
| Generative Adversarial Network (GAN) | Unsupervised/Generative | Scaffold Hopping | 22.1* | 0.78* | Generates diverse, realistic structures |

*Metrics for RL and GAN are from conditional generation tasks in which the model is guided towards a target property and its output is then evaluated by a predictive model. EF₁% = Enrichment Factor at the top 1% of the ranked database; AUC-ROC = Area Under the Receiver Operating Characteristic Curve.
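
For readers unfamiliar with the two metrics defined above, the following sketch computes them from a ranked list. It is illustrative only: the function names and the toy scores are hypothetical, and the pairwise AUC formulation is chosen for clarity rather than speed (library implementations such as scikit-learn's are preferable for large sets).

```python
import math

def enrichment_factor(scores, labels, fraction=0.01):
    """EF = (hit rate in the top fraction of the ranking) / (hit rate overall)."""
    n = len(scores)
    n_top = max(1, math.ceil(fraction * n))
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits_top = sum(label for _, label in ranked[:n_top])
    return (hits_top / n_top) / (sum(labels) / n)

def auc_roc(scores, labels):
    """Probability that a random active outscores a random inactive (ties count 0.5)."""
    actives = [s for s, y in zip(scores, labels) if y == 1]
    inactives = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((a > b) + 0.5 * (a == b) for a in actives for b in inactives)
    return wins / (len(actives) * len(inactives))

# Hypothetical model scores and activity labels for eight compounds.
scores = [0.95, 0.90, 0.70, 0.65, 0.40, 0.30, 0.20, 0.10]
labels = [1, 0, 1, 0, 0, 1, 0, 0]
ef_25 = enrichment_factor(scores, labels, fraction=0.25)
auc = auc_roc(scores, labels)
```

An EF of 1 means the ranking is no better than random selection, while the maximum possible EF at 1% is 100.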

Integrated Workflow for Lead Compound Identification

The most effective contemporary protocols employ a cyclic workflow: 1) Unsupervised/Generative models explore vast chemical space to propose novel scaffolds, 2) Supervised/Predictive models filter and prioritize these candidates based on predicted properties, and 3) experimental validation provides new labels to refine the supervised models, closing the loop. This synergy reduces the empirical screening burden by over 50% compared to high-throughput screening (HTS) alone, as reported in recent kinase inhibitor discovery campaigns.

Experimental Protocols

Protocol: Supervised Learning for Activity Prediction (QSAR Model)

Objective: Train a predictive model to classify active vs. inactive compounds against a target protein. Materials: See Scientist's Toolkit (Section 3).

Methodology:

- Dataset Curation: Assemble a dataset of SMILES strings and binary activity labels (e.g., IC₅₀ < 10 µM = 1). Use public sources (ChEMBL, BindingDB) or proprietary assays. Apply rigorous curation: standardization, duplicate removal, and chemical space analysis.

- Descriptor Calculation & Splitting: Compute molecular descriptors (e.g., RDKit descriptors, ECFP4 fingerprints). Split data into training (70%), validation (15%), and test (15%) sets using scaffold splitting to assess generalization.

- Model Training: Train a supervised algorithm (e.g., Gradient Boosting Machine, Graph Neural Network). Use the training set to minimize cross-entropy loss. Validate hyperparameters (learning rate, depth) on the validation set.

- Evaluation & Interpretation: Evaluate final model on the held-out test set. Report AUC-ROC, precision, recall, and confusion matrix. Use SHAP (SHapley Additive exPlanations) analysis to identify key structural features contributing to activity.

Protocol: Unsupervised & Generative AI for De Novo Design

Objective: Generate novel, synthetically accessible molecules with desired property profiles. Materials: See Scientist's Toolkit (Section 3).

Methodology:

- Chemical Space Representation: Compile a large, diverse set of SMILES (e.g., from ZINC15) as training data. No activity labels are required.

- Model Training: Train a generative model (e.g., VAE, GAN, or Transformer). The model learns the probability distribution of the chemical space and the grammatical rules of SMILES notation.

- Latent Space Exploration & Conditional Generation: For unconditional generation, sample random points from the model's latent space and decode to SMILES. For conditional generation, couple the generative model with a predictive model. Use Bayesian optimization or gradient-based methods to traverse the latent space towards regions that maximize a predicted property (e.g., high predicted binding affinity, desirable QED).

- Post-generation Filtering & Analysis: Filter generated molecules using rule-based filters (PAINS, REOS), synthetic accessibility score (SAscore), and predictive models for ADMET. Cluster remaining candidates and select diverse representatives for in silico docking or synthesis.
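
The final step asks for diverse representatives. One simple way to pick them, used here in place of an explicit clustering step, is greedy MaxMin selection on Tanimoto distance. The sketch below assumes fingerprints are already available as sets of on-bit indices (for example exported from RDKit); the function names and the toy fingerprints are hypothetical.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)

def maxmin_pick(fingerprints, n_pick, first=0):
    """Greedy MaxMin: repeatedly add the candidate farthest from all picked items."""
    picked = [first]
    # Distance from every item to its nearest already-picked item.
    min_dist = [1.0 - tanimoto(fp, fingerprints[first]) for fp in fingerprints]
    while len(picked) < min(n_pick, len(fingerprints)):
        candidates = [i for i in range(len(fingerprints)) if i not in picked]
        nxt = max(candidates, key=lambda i: min_dist[i])
        picked.append(nxt)
        for i, fp in enumerate(fingerprints):
            min_dist[i] = min(min_dist[i], 1.0 - tanimoto(fp, fingerprints[nxt]))
    return picked

# Hypothetical fingerprints as sets of on-bit indices.
fps = [{1, 2, 3}, {1, 2, 4}, {7, 8, 9}, {1, 2, 3, 4}]
selection = maxmin_pick(fps, n_pick=3)
```

MaxMin tends to cover the edges of the candidate set first, so it is worth combining with a score threshold to avoid selecting diverse but unpromising molecules.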

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for AI-Driven Small Molecule Discovery

| Item (Software/Library) | Function in Research | Typical Use Case |
|---|---|---|
| RDKit | Open-source cheminformatics | Molecule standardization, descriptor calculation, substructure search |
| DeepChem | Deep learning library for chemistry | Building and training GNNs and other molecular ML models |
| PyTorch / TensorFlow | Core ML frameworks | Custom model development for generative and predictive tasks |
| Orion AI Platform (BenevolentAI) | Commercial discovery platform | Integrated target identification and molecule generation |
| Schrödinger Suite | Molecular modeling & simulation | High-fidelity physics-based scoring (Glide, FEP+) for AI-generated hits |
| AutoDock Vina / GNINA | Open-source molecular docking | Rapid in silico screening of generated compounds |
| MOSES Benchmarking Platform | Evaluation framework | Standardized assessment of generative model performance |
| Oracle Crystal Ball | Statistical & predictive analytics | Analyzing HTS data trends and model confidence intervals |

Visualizations

Diagram 1: AI Learning Paradigms in Drug Discovery

Diagram 2: AI-Driven Molecule Discovery Workflow

The integration of Artificial Intelligence and Machine Learning (AI/ML) into small molecule discovery represents a paradigm shift, accelerating the transition from hypothesis to candidate. This thesis posits that the predictive power of AI models is fundamentally constrained by the quality, scale, and integration of the primary data sources upon which they are trained. The core triumvirate of data—Chemical Libraries, Bioactivity Datasets, and Protein Structures—provides the essential ingredients for modern computational drug discovery. Chemical libraries define the explorable chemical space; bioactivity datasets map the biological landscape of these compounds; and protein structures offer a mechanistic, three-dimensional understanding of interactions. Effective AI-driven research requires not just access to these repositories, but also standardized protocols for their curation, integration, and application in predictive modeling.

The following tables summarize the current scale and key attributes of major public data sources, providing a basis for dataset selection.

Table 1: Major Public Chemical & Bioactivity Databases (as of 2024)

| Database | Primary Focus | Approximate Scale (Compounds) | Key Bioactivity Metrics | Update Frequency | Primary Access Method |
|---|---|---|---|---|---|
| PubChem | Compound information & screening data | 114+ million compounds | BioAssay results (IC50, Ki, EC50, etc.) from HTS | Continuous | Web portal, FTP, API (PUG-REST, PUG-View) |
| ChEMBL | Curated bioactive drug-like molecules | 2.4+ million compounds | 19+ million bioactivity data points (Ki, IC50, etc.) | Periodic versioned releases | Web portal, FTP, API (REST), Python client (chembl_webresource_client) |
| BindingDB | Measured binding affinities | 2.7+ million data points | Ki, Kd, IC50 for protein targets | Regularly | Web portal, downloadable data files |
| DrugBank | FDA-approved & investigational drugs | 16,000+ drug entries | Drug-target interactions, pharmacology data | Major version releases | Web portal, downloadable XML/TSV |

Table 2: Major Protein Structure Databases

| Database | Primary Focus | Approximate Scale (Structures) | Key Features | Relevance to AI/ML |
|---|---|---|---|---|
| PDB (RCSB) | Experimental 3D structures | 220,000+ entries | X-ray, Cryo-EM, NMR; ligands, co-factors | Training structure-based models (docking, affinity prediction) |
| AlphaFold DB | Predicted protein structures | 200+ million (proteome-scale) | High-accuracy models for uncharacterized proteins | Enabling target feasibility for novel proteins, filling structural gaps |
| PED | Conformational ensembles | 1,400+ proteins | Multiple functional states per protein | Capturing protein flexibility for more realistic docking |

Application Notes & Detailed Protocols

Protocol: Constructing a Curated Bioactivity Dataset from ChEMBL for ML Model Training

Objective: To extract, filter, and standardize bioactivity data for a specific protein target (e.g., Kinase X) to create a high-quality dataset for training a quantitative structure-activity relationship (QSAR) or classification model.

Research Reagent Solutions (Digital Tools):

| Item | Function & Example |
|---|---|
| ChEMBL Web Interface/API | Primary data extraction tool. Allows targeted querying via target name, UniProt ID, or assay parameters. |
| RDKit (Python) | Open-source cheminformatics toolkit for standardizing molecules (tautomer normalization, salt stripping), calculating descriptors, and filtering by properties. |
| Pandas (Python) | Data manipulation library for handling tabular data, merging datasets, and applying logical filters. |
| KNIME or Orange | Visual programming platforms for creating reproducible, GUI-based data curation workflows. |

Methodology:

Target Identification & Data Retrieval:

- Identify the canonical UniProt accession ID for the target protein (e.g., `PXXXXX` for Kinase X).

- Using the ChEMBL web interface or the `chembl_webresource_client` Python library, query for all bioactivities associated with this UniProt ID.

- Download data including: ChEMBL Compound ID, Standardized SMILES, Standard Type (e.g., 'IC50', 'Ki'), Standard Relation (e.g., '=', '<'), Standard Value, Standard Units, Assay Description.

Data Curation & Standardization:

- Filter by Measurement Type: Retain only data points for desired activity types (e.g., IC50, Ki). Convert all values to nM (nanomolar) for consistency.

- Handle Inequalities: Cautiously process data with relations like '>' or '<'. A common practice is to set '>10000' to 10000 (or a high constant) for modeling, noting the censored nature.

- Compound Standardization: Use RDKit to:

- Remove salts and solvents from the SMILES strings.

- Generate canonical tautomers.

- Check and remove invalid SMILES.

- Deduplication: For compounds with multiple measurements, calculate the mean or median pActivity (-log10(Standard Value in M)). Apply a consensus threshold (e.g., keep compounds where measurements fall within 1 log unit).
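
The unit conversion and deduplication steps above can be sketched without any external dependency. This is a simplified illustration: the record layout, the `UNIT_TO_NM` table, and the function names are assumptions, and censored measurements (relations other than '=') are simply set aside here rather than capped as described in the inequality-handling step.

```python
import math
from statistics import median

# Conversion factors to nM for a few common concentration units (assumed spellings).
UNIT_TO_NM = {"pM": 1e-3, "nM": 1.0, "uM": 1e3, "mM": 1e6, "M": 1e9}

def p_activity(value, units):
    """Return -log10 of the activity value expressed in mol/L."""
    value_nm = value * UNIT_TO_NM[units]
    return -math.log10(value_nm * 1e-9)

def aggregate(records, max_spread=1.0):
    """Median pActivity per compound; drop compounds whose values disagree too much.

    records: iterable of (compound_id, value, units, relation) tuples.
    """
    by_compound = {}
    for compound_id, value, units, relation in records:
        if relation != "=":
            continue  # Simplification: censored values are excluded in this sketch.
        by_compound.setdefault(compound_id, []).append(p_activity(value, units))
    return {
        cid: median(vals)
        for cid, vals in by_compound.items()
        if max(vals) - min(vals) <= max_spread
    }

# Hypothetical measurements for three compounds.
records = [
    ("A", 100.0, "nM", "="),
    ("A", 0.2, "uM", "="),
    ("B", 10.0, "nM", "="),
    ("B", 10.0, "uM", "="),      # 3 log units away from the other value for B
    ("C", 10000.0, "nM", ">"),   # censored measurement
]
curated = aggregate(records)
```

Compound B is dropped because its two measurements differ by three log units, which usually signals an assay or annotation problem worth inspecting by hand.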

Property Filtering & Preparation:

- Calculate key molecular properties (Molecular Weight, LogP, Number of H-Bond Donors/Acceptors, Rotatable Bonds) using RDKit.

- Apply "drug-like" filters (e.g., Lipinski's Rule of Five) if relevant to the project scope.

- Create a final binary or continuous activity label. For classification, a threshold is applied (e.g., pIC50 > 6.0 = "Active", pIC50 < 5.0 = "Inactive").
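
The drug-likeness filter and the labeling threshold can be expressed as two small functions. The sketch assumes the four properties have already been computed (for example with RDKit); the function names and the example values are hypothetical.

```python
def lipinski_violations(mol_weight, logp, h_donors, h_acceptors):
    """Count Rule-of-Five violations from precomputed molecular properties."""
    return sum([mol_weight > 500, logp > 5, h_donors > 5, h_acceptors > 10])

def activity_label(pic50, active_above=6.0, inactive_below=5.0):
    """Label by pIC50; values between the two thresholds are left unlabeled."""
    if pic50 > active_above:
        return "Active"
    if pic50 < inactive_below:
        return "Inactive"
    return None

# Hypothetical compounds: (id, MW, logP, HBD, HBA, pIC50).
compounds = [
    ("cpd-1", 350.4, 2.1, 2, 5, 7.2),
    ("cpd-2", 720.0, 6.3, 6, 12, 6.8),
    ("cpd-3", 410.5, 3.3, 1, 6, 5.5),
]
# Keep compounds with at most one violation and an unambiguous label.
dataset = [
    (cid, activity_label(p))
    for cid, mw, logp, hbd, hba, p in compounds
    if lipinski_violations(mw, logp, hbd, hba) <= 1 and activity_label(p) is not None
]
```

Allowing one violation follows the usual reading of the rule; a stricter or looser cutoff is a project-level choice.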

Dataset Splitting: Perform a time-split or scaffold-based split (using Bemis-Murcko scaffolds via RDKit) to ensure the training set is structurally distinct from the test/validation sets, preventing data leakage and providing a more realistic estimate of model performance on novel chemotypes.
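
A scaffold-based split keeps every molecule that shares a scaffold in the same partition. The sketch below assumes the scaffold of each molecule has already been computed as a string key (for example a Bemis-Murcko scaffold SMILES from RDKit); the single-letter keys and the function name are hypothetical.

```python
from collections import defaultdict

def scaffold_split(scaffolds, frac_train=0.7, frac_valid=0.15):
    """Assign whole scaffold groups to train/valid/test, largest groups first."""
    groups = defaultdict(list)
    for index, scaffold in enumerate(scaffolds):
        groups[scaffold].append(index)
    # Largest groups first; ties are broken by first occurrence for determinism.
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    n = len(scaffolds)
    train, valid, test = [], [], []
    for group in ordered:
        if len(train) + len(group) <= frac_train * n:
            train.extend(group)
        elif len(valid) + len(group) <= frac_valid * n:
            valid.extend(group)
        else:
            test.extend(group)
    return train, valid, test

# Hypothetical scaffold keys for eight molecules.
scaffolds = ["A", "B", "A", "C", "A", "B", "A", "D"]
train, valid, test = scaffold_split(scaffolds, frac_train=0.75, frac_valid=0.125)
```

Because groups are never divided, the realized split sizes only approximate the requested fractions, and rare scaffolds end up in the validation and test sets.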

Visualization: Workflow for ML-Ready Dataset Creation

Diagram Title: Workflow for Curating an ML-Ready Bioactivity Dataset

Protocol: Integrating a Chemical Library with a Protein Structure for Virtual Screening

Objective: To prepare a corporate or purchasable compound library and a target protein structure for a high-throughput virtual screening (HTVS) campaign to identify potential hits.

Research Reagent Solutions (Digital Tools):

| Item | Function & Example |
|---|---|
| ZINC20/Enamine REAL | Source of commercially available, purchasable compounds for screening libraries (millions to billions of molecules). |
| Open Babel/ RDKit | Tool for converting chemical file formats (SDF, SMILES) and generating 3D conformers. |
| AutoDock Tools, UCSF Chimera | Software for preparing protein structures: removing water, adding hydrogens, assigning charges (e.g., Kollman/Gasteiger). |
| AutoDock Vina, DOCK6, Glide | Molecular docking software suites for performing the computational screening. |

Methodology:

Library Preparation:

- Source: Download a subset (e.g., "lead-like" or "fragment-like") from ZINC20 or select a library from a vendor like Enamine.

- Format Conversion & Standardization: Convert the library to a single format (e.g., SDF). Use RDKit/Open Babel to standardize structures (neutralize, remove salts, generate canonical tautomers).

- 3D Conformer Generation: For docking programs requiring 3D inputs, generate low-energy 3D conformers for each molecule. Tools like RDKit's `EmbedMolecule` or OMEGA are suitable.

- Energy Minimization: Minimize the generated 3D structures using a force field (e.g., MMFF94) to remove steric clashes.

Protein Structure Preparation:

- Source Selection: Retrieve the highest-resolution crystal structure of the target with a relevant bound ligand from the PDB. Alternatively, use a high-confidence AlphaFold2 model.

- Preprocessing (in UCSF Chimera/AutoDock Tools):

- Remove all water molecules, except those critical for binding (e.g., catalytic water).

- Add all hydrogen atoms.

- Assign partial charges (e.g., using Gasteiger-Marsili method).

- Define the binding site. This can be based on the co-crystallized ligand's location or a known catalytic site. Save the protein in the required format (e.g., PDBQT for Vina).

Docking Grid/Box Definition:

- Using the prepared protein file, define a 3D grid box that encompasses the binding site. The box center should be on the centroid of the known ligand or active site residues. Set box dimensions large enough to allow ligand movement (e.g., 25Å x 25Å x 25Å).
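
Centering the box on the ligand centroid is straightforward to compute once the ligand coordinates are extracted. The sketch below assumes an unweighted geometric centroid and sizes the box as the ligand extent plus padding, with an optional minimum edge length; these choices, the function names, and the coordinates are illustrative. The output keys follow the `center_x` / `size_x` naming used in AutoDock Vina configuration files.

```python
def docking_box(ligand_coords, padding=8.0, min_size=0.0):
    """Return (center, size) of a box around the ligand, in the input units (Å)."""
    n = len(ligand_coords)
    center = tuple(sum(atom[k] for atom in ligand_coords) / n for k in range(3))
    size = tuple(
        max(
            min_size,
            max(atom[k] for atom in ligand_coords)
            - min(atom[k] for atom in ligand_coords)
            + 2 * padding,
        )
        for k in range(3)
    )
    return center, size

def vina_box_lines(center, size):
    """Format the box as key = value lines for a Vina-style configuration file."""
    keys = ("center_x", "center_y", "center_z", "size_x", "size_y", "size_z")
    return [f"{key} = {value:.3f}" for key, value in zip(keys, center + size)]

# Hypothetical ligand heavy-atom coordinates in Å.
coords = [(0.0, 0.0, 0.0), (2.0, 4.0, 6.0), (4.0, 2.0, 0.0)]
center, size = docking_box(coords)
config_lines = vina_box_lines(center, size)
```

Passing `min_size=25.0` reproduces the fixed 25 Å cube suggested above for small ligands while still growing the box for larger ones.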

Virtual Screening Execution:

- Configure the docking software (e.g., Vina) with the prepared protein, ligand library, and grid parameters.

- Run the docking job on a high-performance computing cluster. The output is a ranked list of compounds by predicted binding affinity (docking score).

Visualization: Virtual Screening Workflow Integration

Diagram Title: Integrated Virtual Screening Pipeline from Library and PDB

Thesis Context: The AI/ML Data Pipeline

The protocols above feed into the core AI/ML pipeline of the thesis. The curated bioactivity dataset from ChEMBL is used to train a ligand-based model (e.g., Graph Neural Network). Simultaneously, the virtual screening protocol provides a structure-based approach. The next critical step is data fusion. The predictions from both ligand-based and structure-based models can be combined, and the most promising virtual hits can be procured for experimental validation. This creates a feedback loop where new experimental data further enriches the primary datasets, iteratively improving the AI models. This cyclical integration of chemical, biological, and structural data is the engine of modern AI-driven discovery.

Application Note: AI-Driven Virtual Screening and Lead Optimization

Exhaustively exploring drug-like chemical space is intractable with traditional experimental methods. This application note details an integrated AI/ML and experimental protocol for efficient navigation, focusing on a kinase target of interest.

Table 1: Comparison of Generative AI Models for De Novo Molecule Design

| Model Name | Type | Generated Molecules Evaluated | % with Valid Chemical Structures | % Predicted Active (pIC50 > 7) | Synthesis Success Rate (Experimental) |
|---|---|---|---|---|---|
| REINVENT 4.0 | Reinforcement Learning | 10,000 | 99.8% | 12.5% | 85% (20 selected) |
| GPT-based Generative | Transformer | 15,000 | 98.5% | 8.7% | 78% (18 selected) |
| VAE (Conditional) | Variational Autoencoder | 8,000 | 95.2% | 15.1% | 82% (17 selected) |
| DiffLinker | Diffusion Model | 12,000 | 99.9% | 10.3% | 91% (22 selected) |

Table 2: Virtual Screening Funnel Metrics (Representative Campaign)

| Screening Stage | Compounds Processed | Computational Cost (GPU-hr) | Output for Next Stage | Attrition Rate |

|---|---|---|---|---|

| Ultra-Large Library Docking (Ultra-fast) | 1 x 10^9 | 5,000 | 500,000 | 99.95% |

| ML QSAR Filter (Activity/Property) | 500,000 | 200 | 5,000 | 99.0% |

| High-Fidelity Docking with MM/GBSA Rescoring | 5,000 | 1,500 | 250 | 95.0% |

| In Silico ADMET & Synthetic Accessibility | 250 | 10 | 25 | 90.0% |
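The attrition rates in the funnel follow directly from the input and output counts of each stage. The short check below recomputes them from the table values. The variable names are illustrative.

```python
# (stage, compounds in, compounds out, GPU-hours) taken from the funnel table.
funnel = [
    ("Ultra-large library docking", 1_000_000_000, 500_000, 5_000),
    ("ML QSAR filter", 500_000, 5_000, 200),
    ("High-fidelity docking", 5_000, 250, 1_500),
    ("In silico ADMET & synthetic accessibility", 250, 25, 10),
]


def attrition_pct(n_in, n_out):
    """Percentage of compounds removed by a stage."""
    return 100.0 * (1 - n_out / n_in)


rates = [round(attrition_pct(n_in, n_out), 2) for _, n_in, n_out, _ in funnel]
total_gpu_hours = sum(cost for *_, cost in funnel)
overall_survival = funnel[-1][2] / funnel[0][1]  # fraction of the library that survives
```

With these numbers the whole campaign costs 6,710 GPU-hours and passes 25 of 10^9 compounds, so most of the compute is spent on the first and third stages.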

Experimental Protocols

Protocol 1: Active Learning-Driven Hit Identification Cycle

Objective: To iteratively refine a predictive model and select compounds for testing from a multi-million-member commercial library.

Materials: See "The Scientist's Toolkit" below.

Method:

- Initial Model Training: Train a graph neural network (GNN) activity predictor using 500-1000 known active/inactive compounds for the target.

- Initial Prediction & Diversity Selection: Use the model to predict activity for 5 million purchasable compounds (e.g., ZINC20). Select a diverse set of 1000 compounds using k-means clustering on molecular fingerprints.

- Primary Biochemical Assay: Test the 1000 selected compounds using the assay in Protocol 2. Define actives as compounds with >50% inhibition at 10 µM.

- Model Retraining: Add the new experimental data (labels) to the training set. Retrain the GNN model.

- Bayesian Optimization for Selection: Apply Bayesian optimization to the model's predictions over the remaining library to select the next 500 compounds, balancing exploration (diverse structures) and exploitation (high predicted activity).

- Iteration: Repeat the assay, retraining, and selection steps (steps 3-5) for 3-5 cycles, or until the desired number of confirmed hits (e.g., 50 potent actives) is obtained.
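The selection step of this cycle can be illustrated with an upper-confidence-bound (UCB) acquisition score. This is a simplified stand-in for full Bayesian optimization, under the assumption that uncertainty is estimated from the spread of an ensemble of models. The function names, the `kappa` parameter, and the toy predictions are all hypothetical.

```python
from statistics import mean, pstdev


def ucb_scores(ensemble_predictions, kappa=1.0):
    """Score = mean prediction (exploitation) + kappa * spread (exploration).

    ensemble_predictions: {compound_id: [prediction from each ensemble member]}
    """
    return {
        cid: mean(preds) + kappa * pstdev(preds)
        for cid, preds in ensemble_predictions.items()
    }


def select_batch(ensemble_predictions, already_tested, batch_size, kappa=1.0):
    """Pick the highest-scoring untested compounds for the next assay round."""
    scores = ucb_scores(ensemble_predictions, kappa)
    candidates = [cid for cid in scores if cid not in already_tested]
    candidates.sort(key=lambda cid: (-scores[cid], cid))
    return candidates[:batch_size]


# Toy predicted activity probabilities from a three-member ensemble.
predictions = {
    "z1": [0.8, 0.8, 0.8],  # confident, high
    "z2": [0.5, 0.9, 0.7],  # uncertain, moderately high
    "z3": [0.2, 0.2, 0.2],  # confident, low
    "z4": [0.9, 0.9, 0.9],  # already assayed
}
exploit_only = select_batch(predictions, {"z4"}, batch_size=2, kappa=0.0)
balanced = select_batch(predictions, {"z4"}, batch_size=2, kappa=1.0)
```

With `kappa=0` the ranking is purely greedy. Raising `kappa` promotes the uncertain compound `z2` above the confidently predicted `z1`, which is the exploration behavior the protocol asks for.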

Protocol 2: Biochemical Inhibition Assay (Kinase Example)

Objective: To determine the half-maximal inhibitory concentration (IC50) of compounds from virtual screening.

Method:

- Prepare an 11-point, 3-fold serial dilution of test compounds in DMSO (e.g., from 10 mM down to approximately 0.17 µM; ten 3-fold steps span a 59,049-fold range).

- In a 384-well plate, add 2 µL of compound/DMSO to each well. Include controls (DMSO only for 0% inhibition, control inhibitor for 100% inhibition).

- Add 18 µL of kinase reaction mixture (containing kinase, ATP at Km concentration, and buffer) to all wells. Pre-incubate for 15 minutes at room temperature.

- Initiate the reaction by adding 5 µL of substrate/cofactor solution. Incubate for 60 minutes under kinetic linearity conditions.

- Stop the reaction with EDTA, then detect product formation by time-resolved fluorescence resonance energy transfer (TR-FRET), developing with detection reagents per the manufacturer's instructions.

- Read plates on a compatible plate reader (e.g., excitation 340 nm, emission 495/520 nm).

- Analyze data: Plot fluorescence ratio vs. log10[compound]. Fit a 4-parameter logistic curve to calculate IC50 values.
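The curve-fitting step can be illustrated with a dependency-free sketch. It defines the 4-parameter logistic (4PL) model and fits log IC50 and the Hill slope by coarse grid search, with the top and bottom plateaus fixed from the controls. That last point is a simplifying assumption: in practice all four parameters are usually fitted with a nonlinear least-squares routine. The concentrations and signals below are synthetic.

```python
import math


def four_pl(log_conc, bottom, top, log_ic50, hill):
    """Signal vs. log10 concentration; falls from `top` to `bottom` with inhibition."""
    return bottom + (top - bottom) / (1 + 10 ** ((log_conc - log_ic50) * hill))


def dilution_series(top_conc_molar, fold=3.0, points=11):
    """Concentrations of a serial dilution, highest first."""
    return [top_conc_molar / fold ** i for i in range(points)]


def fit_ic50(log_concs, signals, bottom, top):
    """Grid search over log IC50 (-10 to -2, step 0.01) and a few Hill slopes."""
    best = None
    for i in range(-1000, -199):
        log_ic50 = i / 100
        for hill in (0.5, 0.75, 1.0, 1.25, 1.5, 2.0):
            sse = sum(
                (four_pl(x, bottom, top, log_ic50, hill) - y) ** 2
                for x, y in zip(log_concs, signals)
            )
            if best is None or sse < best[0]:
                best = (sse, log_ic50, hill)
    return best[1], best[2]


# Synthetic, noise-free data: hypothetical final assay concentrations from 10 uM,
# generated with IC50 = 100 nM and Hill slope 1.
concs = dilution_series(1e-5)
log_concs = [math.log10(c) for c in concs]
signals = [four_pl(x, bottom=0.2, top=1.0, log_ic50=-7.0, hill=1.0) for x in log_concs]

log_ic50_fit, hill_fit = fit_ic50(log_concs, signals, bottom=0.2, top=1.0)
ic50_nm = 10 ** log_ic50_fit * 1e9
```

On real plate data the residuals will not vanish, so it is worth inspecting the fitted curve against the points and flagging wells where the plateaus are not reached.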

Diagrams

Diagram 1: AI-Driven Drug Discovery Workflow

Diagram 2: Active Learning Cycle for Hit Finding

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for AI-Integrated Discovery

| Item | Function & Application | Example Vendor/Product |

|---|---|---|

| Ultra-Large Screening Library | Digital library of purchasable or synthesizable compounds for virtual screening. Provides the initial search space. | Mcule Ultimate, ZINC20, Enamine REAL Space |

| High-Throughput Assay Kit | Validated biochemical assay for rapid experimental validation of hundreds of predicted compounds. | Cisbio Kinase TR-FRET Assay Kits, Promega ADP-Glo |

| ML-Ready Chemical Database | Curated database with standardized structures and linked bioactivity data for training AI models. | ChEMBL, PubChem, BindingDB |

| Automated Synthesis Platform | Enables rapid synthesis of AI-designed molecules not available commercially. | ChemSpeed SWING, Opentrons OT-2 |

| Cloud Computing Credits | Access to scalable GPU/CPU resources for running large-scale molecular docking and model training. | Google Cloud TPUs, AWS EC2 P4 instances, Azure NDv4 |

| ADMET Prediction Software | In silico tools to predict pharmacokinetic and toxicity properties prior to synthesis. | Schrödinger QikProp, Simulations Plus ADMET Predictor |

Why Now? The Convergence of Big Data, Computational Power, and Algorithmic Advances

Application Notes: The Enabling Triad for AI-Driven Small Molecule Discovery

The recent acceleration in AI-driven small molecule discovery is not attributable to a single breakthrough, but to the synergistic convergence of three critical elements. This triad has transitioned from sequential bottlenecks to concurrent enablers, creating a fertile ground for revolutionary research protocols.

Table 1: Quantitative Evolution of the Enabling Triad (2012-2024)

| Factor | Metric | ~2012 Benchmark | ~2024 Benchmark | Approx. Increase | Impact on Small Molecule Discovery |

|---|---|---|---|---|---|

| Big Data | Publicly Available Chemical/Bioactivity Compounds (e.g., ChEMBL) | ~1.2 Million | >20 Million | >16x | Enables training of robust, generalizable models for binding affinity & synthesis prediction. |

| Computational Power | FP32 Performance (Top-end GPU, e.g., NVIDIA) | ~1.5 TFLOPS (K10) | ~330 TFLOPS (H100) | ~220x | Allows training of deep neural networks (100M+ parameters) on billion-scale datasets in feasible time. |

| Algorithmic Advances | Model Performance (Protein-Ligand Pose Prediction, RMSD) | >2.0 Å (Docking) | <1.0 Å (AlphaFold3/DiffDock) | >50% Accuracy Gain | Shift from rigid docking to physics-informed & diffusion-based generative models. |

Detailed Experimental Protocols

Protocol 1: Training a Ligand-Based Bioactivity Prediction Model Using a Graph Neural Network (GNN)

Objective: To create a predictive model for compound activity against a target of interest using publicly available bioactivity data.

Materials & Reagents:

- Dataset: Curated bioactivity data (e.g., Ki, IC50) from ChEMBL or BindingDB.

- Software: Python with libraries: PyTorch Geometric, RDKit, Scikit-learn, Pandas.

- Hardware: GPU with ≥8GB VRAM (e.g., NVIDIA RTX 3080/A100).

Procedure:

- Data Curation: Query ChEMBL for a specific target (e.g., EGFR kinase). Extract SMILES strings and corresponding IC50 values. Convert IC50 to pIC50 (-log10 of the IC50 expressed in mol/L). Apply thresholds (e.g., pIC50 > 6.0 = active, < 5.0 = inactive) for classification; compounds falling between the two thresholds are left out as ambiguous.

- Featurization: Use RDKit to convert each SMILES string into a molecular graph. Nodes represent atoms (featurized with atomic number, degree, hybridization). Edges represent bonds (featurized with bond type, conjugation).

- Data Split: Partition the dataset into training (70%), validation (15%), and test (15%) sets using stratified splitting based on activity class.

- Model Architecture: Implement a 5-layer Graph Convolutional Network (GCN) or Message Passing Neural Network (MPNN). Follow convolutions with a global mean pooling layer and a final fully connected layer with a sigmoid output.

- Training: Train for 200 epochs using the Adam optimizer and Binary Cross-Entropy loss. Monitor validation loss for early stopping.

- Evaluation: Apply the trained model to the held-out test set. Calculate AUC-ROC, precision, recall, and F1-score.
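The unit conversion and labeling in the data curation step are easy to get wrong, so a small sketch may help. It assumes IC50 values arrive in nanomolar (a common reporting unit) and uses the example thresholds from the protocol. The function names and the sample records are hypothetical.

```python
import math


def pic50_from_nm(ic50_nm):
    """pIC50 = -log10(IC50 in mol/L). For nanomolar input this is 9 - log10(IC50_nM)."""
    if ic50_nm <= 0:
        raise ValueError("IC50 must be positive")
    return 9.0 - math.log10(ic50_nm)


def activity_label(pic50, active_above=6.0, inactive_below=5.0):
    """Return 1 (active), 0 (inactive), or None for the ambiguous middle band."""
    if pic50 > active_above:
        return 1
    if pic50 < inactive_below:
        return 0
    return None


# Hypothetical (SMILES, IC50 in nM) records.
records = [("CCO", 10.0), ("CCN", 1_000.0), ("CCC", 20_000.0)]
labeled = [
    (smiles, pic50_from_nm(ic50), activity_label(pic50_from_nm(ic50)))
    for smiles, ic50 in records
]
training_rows = [row for row in labeled if row[2] is not None]
```

Here 10 nM maps to pIC50 8 (active), 1 µM maps to pIC50 6 (ambiguous, dropped), and 20 µM maps to roughly 4.7 (inactive).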

Protocol 2: Generative Molecular Design with a Diffusion Model

Objective: To generate novel, synthetically accessible small molecules with high predicted affinity for a target protein pocket.

Materials & Reagents:

- Dataset: 3D protein-ligand complex structures from PDBbind. Ligand scaffolds from REAL database.

- Software: Python with PyTorch, RDKit, Open Babel. Access to a pretrained model like DiffDock or a framework like MolDiff.

- Hardware: High-performance GPU (≥24GB VRAM, e.g., NVIDIA A100/RTX 4090).

Procedure:

- Target Preparation: Obtain the 3D structure of the target protein (e.g., from AlphaFold DB or PDB). Define the binding pocket coordinates using a tool like fpocket or from a reference co-crystal ligand.

- Conditioning: Encode the protein pocket as a 3D graph or volumetric grid, representing amino acid types, charges, and hydrophobicity at each node/voxel.

- Generative Process:

a. Forward Diffusion: Start from a known ligand pose (x_0). Iteratively add Gaussian noise over T steps (e.g., 1000) to obtain a fully noised state (x_T).

b. Reverse Diffusion (Training): Train a neural network (e.g., an SE(3)-equivariant network) to predict the noise added at each step, conditioned on the protein pocket representation.

c. Sampling (Inference): Start from random noise (x_T). Use the trained network to iteratively denoise for T steps, generating a novel 3D molecular structure (x_0) within the pocket.

- Post-Processing & Filtering: Convert generated 3D structures to SMILES. Filter for synthetic accessibility (SA Score), drug-likeness (QED), and predicted affinity using a rapid scoring function (e.g., CNN-based or MM/GBSA).
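The forward-diffusion step of the generative process can be made concrete with the standard closed-form noising rule, x_t = sqrt(ᾱ_t) · x_0 + sqrt(1 − ᾱ_t) · ε, where ᾱ_t is the cumulative product of (1 − β_s). The sketch below is an illustration under assumptions: it uses a linear β schedule, noises only atom coordinates (atom types are ignored), and uses toy coordinates. A real pocket-conditioned model would add the network and the conditioning described above.

```python
import math
import random


def linear_beta_schedule(T=1000, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances, increasing linearly (an assumed schedule)."""
    return [beta_start + (beta_end - beta_start) * t / (T - 1) for t in range(T)]


def cumulative_alphas(betas):
    """alpha_bar_t = product over s <= t of (1 - beta_s)."""
    out, prod = [], 1.0
    for beta in betas:
        prod *= 1.0 - beta
        out.append(prod)
    return out


def noise_coordinates(x0, t, alpha_bars, rng):
    """Sample x_t from q(x_t | x_0) in one shot; also return the noise used.

    The returned noise `eps` is the regression target for the denoising network.
    """
    a = alpha_bars[t]
    eps = [[rng.gauss(0.0, 1.0) for _ in atom] for atom in x0]
    x_t = [
        [math.sqrt(a) * c + math.sqrt(1.0 - a) * e for c, e in zip(atom, e_atom)]
        for atom, e_atom in zip(x0, eps)
    ]
    return x_t, eps


rng = random.Random(0)
alpha_bars = cumulative_alphas(linear_beta_schedule())

# Toy ligand pose: three atoms with (x, y, z) coordinates in angstroms.
x0 = [[0.0, 0.0, 0.0], [1.5, 0.0, 0.0], [2.2, 1.2, 0.0]]
x_mid, eps_mid = noise_coordinates(x0, 499, alpha_bars, rng)
x_last, _ = noise_coordinates(x0, 999, alpha_bars, rng)
```

By the final step ᾱ_T is close to zero, so `x_last` is essentially pure Gaussian noise, which is why sampling can start from random noise at inference time.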

Visualizations

Diagram 1: AI-Driven Small Molecule Discovery Workflow

Diagram 2: Key Signaling Pathways in Modern ML for Drug Discovery

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Resources for AI/ML-Enabled Small Molecule Discovery

| Resource Category | Specific Tool / Database / Platform | Primary Function in Research |

|---|---|---|

| Chemical & Bioactivity Data | ChEMBL, BindingDB, PubChem | Provides large-scale, annotated chemical structures and bioactivity measurements for model training and validation. |

| Protein Structure Data | Protein Data Bank (PDB), AlphaFold Protein Structure Database | Sources of 3D protein structures (experimental & predicted) for structure-based design and complex modeling. |

| Generative & Modeling Software | RELAX, DiffDock, OpenFold, NVIDIA BioNeMo | Specialized software frameworks and pre-trained models for generative chemistry, molecular docking, and protein folding. |

| Cheminformatics & Featurization | RDKit, Open Babel, DeepChem | Open-source libraries for manipulating chemical structures, calculating molecular descriptors, and preparing ML-ready datasets. |

| Machine Learning Frameworks | PyTorch, PyTorch Geometric, JAX | Core programming frameworks for building, training, and deploying custom deep learning models, especially on GPU hardware. |

| High-Performance Compute (HPC) | NVIDIA DGX Cloud, Google Cloud A3 VMs, AWS EC2 P5 Instances | Cloud-based platforms offering on-demand access to state-of-the-art GPU clusters (e.g., H100) for training large models. |

| Synthetic Accessibility | AiZynthFinder, ASKCOS, Retrosim | Tools for predicting or planning synthetic routes for AI-generated molecules, ensuring practical feasibility. |

The AI/ML Toolkit: Key Algorithms and Their Practical Application in the Discovery Pipeline

Within the broader thesis of AI-driven small molecule discovery, Virtual Screening 2.0 represents a paradigm shift from traditional physics-based docking to machine learning (ML)-enhanced workflows. This evolution is critical for interrogating vast chemical spaces, such as ultra-large libraries exceeding billions of molecules, where classical methods are computationally intractable. The core thesis posits that integrating deep learning models for binding affinity prediction, molecular generation, and ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) profiling early in the screening funnel accelerates the identification of viable lead compounds with optimized polypharmacology and developability profiles.

Core ML Model Architectures and Performance Data

Current ML models for virtual screening leverage diverse architectures trained on large-scale bioactivity data. Performance is benchmarked on standard datasets like DUD-E, LIT-PCBA, and PDBbind.

Table 1: Performance Comparison of Key ML Model Architectures for Virtual Screening

| Model Architecture | Typical Use Case | Key Benchmark Dataset | Average Enrichment Factor (EF1%) | AUC-ROC | Key Advantage |

|---|---|---|---|---|---|

| Graph Neural Networks (GNNs) | Binding affinity prediction | PDBbind Core Set | ~25-35* | 0.85-0.92 | Learns directly from molecular graph; captures topology. |

| 3D Convolutional Neural Networks (3D-CNNs) | Structure-based screening (Pocket-specific) | DUD-E | ~30-40* | 0.80-0.90 | Incorporates explicit 3D spatial/electrostatic features. |

| Transformer-based (e.g., BERT-like) | Ligand-based screening & QSAR | LIT-PCBA | N/A | 0.75-0.88 | Excellent for large, sparse bioactivity data. |

| Equivariant Neural Networks | Pose scoring & affinity | PDBbind | N/A | 0.87-0.94 | Rotation- and translation-equivariant by construction; robust to pose alignment. |

| Random Forest / XGBoost | Initial library triage | Various PubChem assays | ~15-25* | 0.70-0.82 | Interpretable; low computational cost for training. |

*EF1% values are model and target-dependent; ranges represent high-performing examples from recent literature.

Application Notes & Detailed Protocols

Protocol 3.1: Structure-Based Virtual Screening with a Pre-Trained GNN

Objective: To prioritize compounds from a 10-million-molecule library for a defined protein target (e.g., KRAS G12C) using a pre-trained graph-based affinity prediction model.

Materials: See "Scientist's Toolkit" below. Software: Python (>=3.8), PyTorch or TensorFlow, RDKit, PyMOL/Open Babel, MPI for distributed computing (optional).

Procedure:

- Target Preparation: Using PyMOL, prepare the protein structure (PDB ID: 6OIM). Remove water molecules, add missing hydrogens, and assign correct protonation states at pH 7.4. Define the binding site as all residues within 8Å of the native ligand.

- Compound Library Preprocessing: Load the SMILES strings of the library. Use RDKit to generate canonical SMILES, strip salts, and apply standard curation (remove metals, correct valencies). Generate 3D conformers for each molecule (max 5 conformers per molecule using the ETKDG method).

- Molecular Featurization: For the GNN input, convert each molecule into a graph representation. Nodes represent atoms, featurized with atomic number, degree, hybridization, formal charge, and aromaticity. Edges represent bonds, featurized with bond type, conjugation, and stereochemistry.

- Docking (Optional but Recommended for 3D Context): Perform rapid docking (e.g., using Vina or QuickVina 2) of all preprocessed compounds into the defined binding site to generate an initial pose. This pose provides the spatial context for structure-based GNNs.

- Model Inference: Load the pre-trained GNN model (e.g., a modified AttentiveFP or PotentialNet architecture). Input the featurized molecular graphs and, if applicable, the protein pocket graph or docking pose coordinates. Run batch inference on a GPU cluster to generate a predicted binding score (pKi or pIC50) for each molecule.

- Post-processing and Prioritization: Rank all compounds by their predicted score. Apply a simple pharmacophore filter or rough PAINS (Pan-Assay Interference Compounds) filter to remove obvious false positives. Select the top 50,000 compounds for the next stage.

- Validation: If available, use known active and decoy molecules for the target to calculate the enrichment factor (EF1%) of the prioritized list to benchmark model performance on this specific task.
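The enrichment factor used in the validation step compares the hit rate in the top-ranked fraction with the hit rate in the whole list: EF = (actives in top x% / compounds in top x%) / (total actives / total compounds). A minimal sketch follows, using a made-up ranking in which 5 of 10 actives land in the top 1% of 1,000 compounds.

```python
def enrichment_factor(scores, is_active, fraction=0.01):
    """Enrichment factor at the given top fraction (higher score = better)."""
    n = len(scores)
    total_actives = sum(is_active)
    if n == 0 or total_actives == 0:
        raise ValueError("need at least one compound and one active")
    n_top = max(1, round(n * fraction))
    order = sorted(range(n), key=lambda i: scores[i], reverse=True)
    hits_top = sum(is_active[i] for i in order[:n_top])
    return (hits_top / n_top) / (total_actives / n)


# Synthetic benchmark: scores decrease with index; actives sit at
# positions 0-4 (top-ranked) and 500-504 (mid-ranked).
scores = [1000 - i for i in range(1000)]
is_active = [1 if i < 5 or 500 <= i < 505 else 0 for i in range(1000)]
ef1 = enrichment_factor(scores, is_active, fraction=0.01)
```

In this example the top 1% holds 10 compounds, 5 of them active, against a 1% base rate, so EF1% is 50. The maximum achievable value here is 100, reached when all of the top 10 are active.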

Protocol 3.2: Ligand-Based Similarity Searching with a Transformer Model

Objective: To identify novel chemotypes active against a target using only known active compounds (e.g., 5-10 reference actives).

Procedure:

- Reference Set Compilation: Curate a set of 5-10 known active compounds with confirmed potency (e.g., IC50 < 100 nM). Ensure chemical diversity within the set.

- Model Fine-Tuning (Optional): If a large, related bioactivity dataset exists, fine-tune a pre-trained molecular Transformer model (e.g., ChemBERTa, MoLFormer) on this auxiliary task to improve its representation for the target class.

- Embedding Generation: Use the (fine-tuned) Transformer to generate a continuous vector embedding (e.g., 512-dimensional) for each reference active and for every molecule in the screening library (from their SMILES strings).

- Similarity Calculation: Calculate the cosine similarity between the embedding of each library molecule and the centroid of the reference actives' embeddings.

- Diversity Selection: Rank by similarity score. Apply a maximum common substructure (MCS) or Tanimoto similarity (on fingerprints) filter to the top 10,000 compounds to ensure the final prioritized set of 1,000 molecules contains diverse scaffolds while remaining within the relevant chemical space.
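The similarity calculation in this protocol reduces to a cosine similarity between each library embedding and the centroid of the reference embeddings. The sketch below uses toy 3-dimensional vectors in place of real Transformer embeddings. The identifiers and values are illustrative only.

```python
import math


def centroid(vectors):
    """Component-wise mean of equal-length vectors."""
    return [sum(component) / len(vectors) for component in zip(*vectors)]


def cosine_similarity(u, v):
    """Cosine of the angle between u and v."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def rank_library(reference_embeddings, library_embeddings):
    """Return (molecule id, similarity) pairs, most similar first."""
    ref_center = centroid(reference_embeddings)
    sims = {
        mol_id: cosine_similarity(vec, ref_center)
        for mol_id, vec in library_embeddings.items()
    }
    return sorted(sims.items(), key=lambda item: item[1], reverse=True)


# Toy embeddings standing in for model outputs.
reference = [[1.0, 0.0, 0.0], [0.8, 0.2, 0.0]]
library = {
    "lib_1": [2.0, 0.2, 0.0],   # nearly parallel to the reference centroid
    "lib_2": [0.0, 0.0, 1.0],   # orthogonal
    "lib_3": [-1.0, 0.0, 0.0],  # pointing away
}
ranked = rank_library(reference, library)
```

A single centroid can blur distinct chemotypes in the reference set. Taking the maximum similarity to any individual reference is a common alternative when the actives are structurally diverse.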

Visualizations

Diagram Title: VS 2.0: ML-Accelerated Virtual Screening Workflow

Diagram Title: Molecular Graph Neural Network Featurization

The Scientist's Toolkit

Table 2: Essential Research Reagents & Solutions for Virtual Screening 2.0

| Item Name | Category | Function & Relevance |

|---|---|---|

| Curated Benchmark Datasets (DUD-E, LIT-PCBA, PDBbind) | Data | Standardized datasets for training and fair benchmarking of ML models, containing known actives, decoys, and binding affinities. |

| Ultra-Large Chemical Libraries (e.g., Enamine REAL, ZINC20) | Compound Library | Source of billions of purchasable molecules for virtual screening, providing the search space for AI-driven discovery. |

| RDKit | Software/Chemoinformatics | Open-source toolkit for cheminformatics; used for molecule manipulation, descriptor calculation, fingerprint generation, and conformer generation. |

| PyTorch Geometric / DGL | Software/ML Framework | Specialized libraries for building and training Graph Neural Networks (GNNs) directly on molecular graph data. |

| Pre-Trained Molecular Language Models (e.g., ChemBERTa, MoLFormer) | ML Model | Transformer models pre-trained on millions of SMILES strings, providing powerful molecular representations for transfer learning. |

| High-Performance Computing (HPC) Cluster with GPU Nodes | Hardware | Essential for training large ML models and running inference on billion-molecule libraries in a feasible timeframe. |

| Automated Cloud Pipelines (e.g., Kubernetes on AWS/GCP) | Infrastructure | Orchestrates scalable, reproducible virtual screening workflows, managing data flow and distributed computation. |

| QSAR-ready Curated Corporate/Bioassay Databases | Proprietary Data | High-quality, internally consistent bioactivity data crucial for fine-tuning general ML models to specific target classes or therapeutic areas. |

Within the broader thesis of AI-driven small molecule discovery, de novo molecular design represents a paradigm shift from virtual screening to generative creation. Generative Adversarial Networks (GANs) and Variational Autoencoders (VAEs) are two foundational deep learning architectures that enable the generation of novel, synthetically accessible, and biologically relevant chemical structures. These models learn the underlying probability distribution of known chemical space from datasets like ChEMBL or ZINC and sample new molecules from this learned distribution, optimizing for desired properties.

Comparative Framework: GANs vs. VAEs in Molecular Generation

Table 1: Architectural & Performance Comparison of GANs and VAEs for Molecular Design

| Feature | Generative Adversarial Network (GAN) | Variational Autoencoder (VAE) |

|---|---|---|

| Core Principle | Two-player game: Generator vs. Discriminator | Probabilistic encoder-decoder with latent space regularization |

| Training Stability | Can be unstable; prone to mode collapse | Generally more stable and predictable |

| Latent Space | Often discontinuous; difficult for interpolation | Continuous and smooth, enabling easy interpolation |

| Example Output Diversity (Valid/Unique %)* | ~95% / ~85% (ORGAN, 2017) | ~95% / ~80% (Gómez-Bombarelli, 2018) |

| Explicit Probability Model | No | Yes (approximate posterior) |

| Primary Strength | High-quality, sharp molecular structures | Structured latent space for optimization |

| Key Challenge | Training difficulty, evaluation of convergence | Can produce blurry/over-regularized outputs |

| Typical SMILES Representation | Sequential (character-by-character) | Sequential or continuous (via tokenization) |

*Note: Representative benchmark values from seminal papers; actual performance is dataset and implementation-dependent.

Experimental Protocols

Protocol 3.1: Training a VAE for Molecular Generation

This protocol outlines the steps for training a VAE on a SMILES dataset to generate novel molecules.

Materials & Software:

- Hardware: GPU (e.g., NVIDIA V100, A100) with ≥16GB VRAM.

- Dataset: Preprocessed SMILES strings (e.g., from ChEMBL, ~1-2 million compounds).

- Libraries: PyTorch or TensorFlow, RDKit, NumPy, Pandas.

- Preprocessing Scripts: For SMILES canonicalization, tokenization, and dataset splitting.

Procedure:

- Data Preprocessing:

a. Canonicalize all SMILES strings using RDKit and remove duplicates.

b. Apply a length filter (e.g., keep molecules with 40-120 characters).

c. Split data into training, validation, and test sets (80/10/10).

d. Create a character vocabulary (all unique characters in SMILES) and tokenize each SMILES string into integer indices.

e. Pad sequences to a fixed maximum length.

- Model Architecture Definition (PyTorch-like pseudocode):

- Training Loop:

a. Initialize model, optimizer (Adam), and loss functions (Reconstruction: Cross-Entropy, KL Divergence).

b. For each epoch:

i. Pass a batch of tokenized SMILES through the encoder.

ii. Sample latent vector z using the reparameterization trick: z = mu + epsilon * exp(0.5 * logvar).

iii. Decode z to reconstruct the input sequence.

iv. Calculate total loss: Loss = CE_Reconstruction + β * KL_Loss (β can be annealed).

v. Perform backpropagation and update weights.

c. Monitor validation loss and apply early stopping.

- Generation:

a. Sample a random vector z from the standard normal distribution N(0, 1).

b. Pass z through the decoder autoregressively to generate a token sequence.

c. Convert tokens to characters to obtain a SMILES string.

d. Validate chemical validity using RDKit.
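The reparameterization trick and the β-weighted loss from the training loop can be checked numerically without a deep learning framework. The sketch below is plain Python for a single latent vector. The linear warm-up used for β annealing and all function names are assumptions made for illustration, and the reconstruction loss is passed in as a precomputed number.

```python
import math
import random


def reparameterize(mu, logvar, rng):
    """z = mu + epsilon * exp(0.5 * logvar), with epsilon ~ N(0, 1) per dimension."""
    return [m + rng.gauss(0.0, 1.0) * math.exp(0.5 * lv) for m, lv in zip(mu, logvar)]


def kl_divergence(mu, logvar):
    """KL(N(mu, sigma^2) || N(0, I)), summed over latent dimensions."""
    return -0.5 * sum(1 + lv - m * m - math.exp(lv) for m, lv in zip(mu, logvar))


def beta_schedule(epoch, warmup_epochs=10, beta_max=1.0):
    """Linear warm-up of the KL weight (one possible annealing scheme)."""
    return beta_max * min(1.0, epoch / warmup_epochs)


def vae_loss(reconstruction_loss, mu, logvar, beta):
    """Total loss = reconstruction term + beta * KL term."""
    return reconstruction_loss + beta * kl_divergence(mu, logvar)


rng = random.Random(0)
mu, logvar = [1.0, -0.5], [0.0, -2.0]
z = reparameterize(mu, logvar, rng)
loss_epoch_5 = vae_loss(2.0, mu, logvar, beta_schedule(5))
```

Starting with a small β lets the decoder learn to reconstruct before the KL term pulls the latent codes toward the prior, which helps avoid the decoder ignoring the latent vector.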

Protocol 3.2: Training a Conditional GAN (cGAN) for Property-Guided Generation

This protocol describes training a GAN conditioned on a molecular property (e.g., LogP, QED) to bias generation.

Materials & Software: As in Protocol 3.1, with additional property calculation routines (e.g., RDKit's Descriptors).

Procedure:

- Data Preparation & Conditioning:

a. Follow Step 1 from Protocol 3.1.

b. Calculate target properties for all molecules in the training set.

c. Discretize the continuous property value into n condition labels (e.g., low, medium, high LogP).

- Model Architecture (Generator & Discriminator):

- Adversarial Training:

a. Initialize Generator (G), Discriminator (D), and two optimizers.

b. For each training iteration:

i. Train D: Sample real SMILES with their conditions. Generate fake SMILES from G using random noise and target conditions. Update D to correctly classify real and fake.

ii. Train G: Generate fake SMILES. Update G to maximize the probability that D classifies them as real given the condition (minimize adversarial loss).

iii. Incorporate an auxiliary reconstruction loss (e.g., teacher forcing) for stability.

- Conditional Generation:

a. Define a target condition (e.g., "high QED").

b. Sample noise z and embed the condition.

c. Input the concatenated vector to the trained Generator to produce novel molecules with the desired property bias.
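The conditioning mechanics (discretizing a property and concatenating it with noise) can be sketched independently of any network. The example assumes three tertile-based classes and a one-hot condition vector in place of a learned embedding. The LogP values and helper names are hypothetical.

```python
import random


def tertile_edges(values):
    """Cut points that split the sorted values into three roughly equal bins."""
    ordered = sorted(values)
    n = len(ordered)
    return ordered[n // 3], ordered[(2 * n) // 3]


def condition_label(value, edges):
    """0 = low, 1 = medium, 2 = high."""
    low_cut, high_cut = edges
    if value < low_cut:
        return 0
    if value < high_cut:
        return 1
    return 2


def one_hot(label, n_classes=3):
    return [1.0 if i == label else 0.0 for i in range(n_classes)]


def generator_input(label, noise_dim, rng, n_classes=3):
    """Concatenate Gaussian noise z with the one-hot condition vector."""
    z = [rng.gauss(0.0, 1.0) for _ in range(noise_dim)]
    return z + one_hot(label, n_classes)


# Hypothetical LogP values for a small training set.
logp_values = [0.5, 1.2, 1.9, 2.4, 3.1, 3.8, 4.4, 5.0, 5.6]
edges = tertile_edges(logp_values)
labels = [condition_label(v, edges) for v in logp_values]

rng = random.Random(0)
high_logp_input = generator_input(2, noise_dim=8, rng=rng)
```

The discriminator receives the same condition vector alongside each real or generated sequence, which is what forces the generator to respect the requested property class.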

Visualization of Architectures & Workflows

Diagram 1: VAE for Molecular Design Workflow

Diagram 2: Conditional GAN Training Cycle

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools & Resources for Generative Molecular Design Experiments

| Item | Function & Purpose | Example/Provider |

|---|---|---|

| Chemical Databases | Provide large-scale, annotated molecular structures for training. | ChEMBL, PubChem, ZINC, GOSTAR |

| Cheminformatics Toolkit | Handles molecule I/O, standardization, descriptor calculation, and validity checks. | RDKit (Open-Source), Open Babel |

| Deep Learning Framework | Provides flexible environment for building and training GAN/VAE models. | PyTorch, TensorFlow/Keras, JAX |

| Molecular Representation | Defines how molecules are encoded as model inputs/outputs. | SMILES, SELFIES, DeepSMILES, Graph (w/ node/edge features) |

| GPU Computing Resource | Accelerates model training, which is computationally intensive. | NVIDIA DGX Stations, Cloud GPUs (AWS, GCP), Colab Pro |

| Training Benchmark Datasets | Standardized datasets for fair model comparison. | MOSES, GuacaMol benchmarking suites |

| Evaluation Metrics | Quantify performance of generative models (beyond validity). | Validity, Uniqueness, Novelty, Frechet ChemNet Distance (FCD), SAScore distributions |

| Automated Validation Pipeline | Scripts to filter, deduplicate, and assess generated molecules. | Custom scripts using RDKit, MolVS (standardizer) |

The central thesis of modern computational drug discovery posits that the integration of artificial intelligence (AI) and machine learning (ML) can drastically reduce the cost, time, and attrition rates of small molecule therapeutic development. A critical pillar of this thesis is the accurate in silico prediction of key molecular properties, namely bioactivity against intended targets and ADMET profiles. Early and reliable prediction of these properties allows for the virtual screening of vast chemical libraries, prioritizing only the most promising candidates for synthesis and in vitro testing. This Application Note details current methodologies, protocols, and resources for implementing AI/ML models in ADMET and bioactivity prediction workflows.

Core Data & Benchmark Performance

Current state-of-the-art models leverage large, curated biochemical and pharmacokinetic datasets. Performance is typically measured via metrics such as Area Under the Receiver Operating Characteristic Curve (AUC-ROC), Mean Absolute Error (MAE), or Concordance Index (C-index). The table below summarizes benchmark performance for selected key properties on common test sets.

Table 1: Benchmark Performance of Contemporary AI/ML Models for Key Property Prediction

| Property Category | Specific Endpoint | Exemplary Model Type | Typical Dataset Size | Benchmark Performance (AUC-ROC/MAE) | Primary Data Source |

|---|---|---|---|---|---|

| Bioactivity | Inhibitory Concentration (IC50) | Graph Neural Network (GNN) | >500,000 compounds | MAE: 0.5 - 0.7 pIC50 | ChEMBL, PubChem BioAssay |

| Absorption | Human Intestinal Absorption (HIA) | Random Forest / XGBoost | ~1,000 compounds | AUC-ROC: 0.90 - 0.95 | ChEMBL, DrugBank |

| Distribution | Volume of Distribution (Vd) | Gradient Boosting Machines | ~1,200 clinical drugs | MAE: 0.3 - 0.4 log L/kg | Obach et al. (2008) Dataset |

| Metabolism | Cytochrome P450 Inhibition (CYP3A4) | Deep Neural Network (DNN) | >50,000 compounds | AUC-ROC: 0.85 - 0.90 | PubChem BioAssay |

| Excretion | Clearance (CL) | Multitask Neural Network | ~800 clinical drugs | MAE: 0.3 - 0.35 log mL/min/kg | AstraZeneca's Open Data |

| Toxicity | hERG Channel Inhibition | Attention-Based GNN | >12,000 compounds | AUC-ROC: 0.88 - 0.93 | ChEMBL, Tox21 |

Experimental Protocols

Protocol 1: Building a Graph Neural Network (GNN) for Bioactivity Prediction

Objective: To train a GNN model capable of predicting pIC50 values for compounds against a specified protein target.

Materials: See "The Scientist's Toolkit" below.

Methodology:

- Data Curation: Query the ChEMBL database for a target of interest (e.g., kinase, GPCR). Extract SMILES strings and associated bioactivity measurements (IC50, Ki). Convert all values to a negative log molar scale (pIC50 = -log10 of the IC50 in mol/L; Ki values are converted to pKi in the same way).

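- Data Preparation: Split the data into training (70%), validation (15%), and hold-out test (15%) sets using stratified splitting based on activity brackets. Then standardize the pIC50 values using the mean and standard deviation of the training set only, so that no information from the validation or test sets leaks into training.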

- Molecular Graph Representation: For each SMILES string, use RDKit to generate a molecular graph. Nodes represent atoms, encoded with features like atom type, degree, hybridization. Edges represent bonds, encoded with type and conjugation.

- Model Architecture: Implement a GNN using a framework like PyTorch Geometric. A standard architecture includes:

- Three Message Passing Neural Network (MPNN) layers to aggregate atomic neighbor information.

- A global mean pooling layer to generate a single molecular fingerprint vector from the updated atom embeddings.

- Two fully connected (dense) layers with ReLU activation and dropout (rate=0.2) to map the fingerprint to the final pIC50 prediction.

- Training: Use Mean Squared Error (MSE) as the loss function and the Adam optimizer. Train for a fixed number of epochs (e.g., 300), evaluating the model on the validation set after each epoch. Employ early stopping if validation loss does not improve for 30 consecutive epochs.

- Evaluation: Apply the best model (lowest validation loss) to the hold-out test set. Report MAE, Root Mean Squared Error (RMSE), and R².
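The three metrics named in the evaluation step are simple to compute by hand, which is useful for sanity-checking a framework's output. A minimal sketch follows, using made-up pIC50 values. Predictions should be transformed back to the pIC50 scale before scoring if the targets were standardized.

```python
import math


def regression_metrics(y_true, y_pred):
    """Return MAE, RMSE, and R^2 for paired true/predicted values."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]
    mae = sum(abs(e) for e in errors) / n
    ss_res = sum(e * e for e in errors)
    rmse = math.sqrt(ss_res / n)
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    r2 = 1.0 - ss_res / ss_tot
    return {"mae": mae, "rmse": rmse, "r2": r2}


# Hypothetical hold-out pIC50 values and model predictions.
y_true = [5.0, 6.0, 7.0, 8.0]
y_pred = [5.5, 6.0, 6.5, 8.0]
metrics = regression_metrics(y_true, y_pred)
```

For this toy set the MAE is 0.25 pIC50 units and R² is 0.9. RMSE (about 0.35) exceeds MAE because it penalizes the two larger errors more heavily.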

Protocol 2: Implementing a Multitask DNN for ADMET Profiling

Objective: To train a single Deep Neural Network (DNN) that predicts multiple ADMET endpoints simultaneously, leveraging shared feature representations.

Methodology:

- Dataset Assembly: Compile a unified dataset where each compound (represented by a molecular fingerprint) has labels for multiple ADMET tasks (e.g., HIA, CYP3A4 inhibition, hERG inhibition). Use -999 as a placeholder for missing labels for any compound-task pair.

- Feature Generation: Generate ECFP4 (Extended Connectivity Fingerprint) fingerprints (2048 bits, radius 2) for all compounds using RDKit.

- Model Architecture: Build a multitask DNN.

- Shared Bottom Layers: Three dense layers (1024, 512, and 256 neurons) with ReLU activation and Batch Normalization. This section learns a general molecular representation.

- Task-Specific Heads: For each ADMET endpoint, create a separate branch originating from the last shared layer. Each branch consists of two dense layers (128 and 64 neurons) culminating in a single output neuron (with sigmoid for classification, linear for regression).

- Training with Masked Loss: Use a weighted sum of task-specific losses. For each batch, compute the loss only for tasks where the label is not -999. This allows training on datasets with partial annotations.

- Validation & Interpretation: Monitor individual task performance on a validation set. Use permutation feature importance over the input fingerprint bits to identify molecular substructures that are important across ADMET tasks.
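The masked loss is the key mechanism of this protocol, so a framework-free sketch may help. It averages each task's loss over only the compounds that have a label for that task, then takes a weighted sum across tasks. The two-task setup (one classification, one regression), the weights, and the numbers are illustrative assumptions.

```python
import math

MISSING = -999  # placeholder for an absent compound-task label


def bce(y, p, eps=1e-7):
    """Binary cross-entropy for one label/probability pair."""
    p = min(max(p, eps), 1.0 - eps)
    return -(y * math.log(p) + (1 - y) * math.log(1.0 - p))


def mse(y, p):
    """Squared error for one regression target/prediction pair."""
    return (y - p) ** 2


def masked_multitask_loss(labels, preds, task_losses, task_weights):
    """Weighted sum of per-task mean losses, skipping missing labels.

    labels, preds: one row per compound, one column per task.
    """
    total = 0.0
    for t, (loss_fn, weight) in enumerate(zip(task_losses, task_weights)):
        terms = [
            loss_fn(row_y[t], row_p[t])
            for row_y, row_p in zip(labels, preds)
            if row_y[t] != MISSING
        ]
        if terms:  # a task with no labels in this batch contributes nothing
            total += weight * sum(terms) / len(terms)
    return total


# Task 0: binary endpoint (e.g., an inhibition flag); task 1: a regression endpoint.
labels = [[1, MISSING], [0, 0.5], [MISSING, 1.0]]
preds = [[0.9, 0.3], [0.2, 0.7], [0.6, 1.0]]
loss = masked_multitask_loss(labels, preds, [bce, mse], [1.0, 1.0])
```

Because masked entries never enter the sum, predictions for unlabeled compound-task pairs receive no gradient from that task, while the shared layers still learn from whichever tasks are labeled.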

Visualizing the AI-Driven Discovery Workflow

(Diagram Title: AI-Driven Small Molecule Screening and Optimization Workflow)

(Diagram Title: Architecture of a Multitask Neural Network for ADMET)

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions for AI/ML in ADMET & Bioactivity Prediction

| Tool/Resource | Type | Primary Function in Workflow |

|---|---|---|

| RDKit | Open-Source Cheminformatics Library | Converts SMILES to molecular graphs, generates fingerprints (ECFP, MACCS), calculates molecular descriptors, and handles substructure searching. |

| PyTorch Geometric / Deep Graph Library (DGL) | Deep Learning Framework Extension | Specialized libraries for building and training Graph Neural Networks (GNNs) on molecular graph data. |

| ChEMBL Database | Public Bioactivity Database | Provides a vast, curated source of bioactive molecules with drug-like properties, including binding data and ADMET information. |

| Tox21 Challenge Data | Public Toxicology Dataset | Offers a standardized set of ~12,000 compounds tested across 12 quantitative high-throughput screening (qHTS) assays for nuclear receptor and stress response toxicity. |

| OCHEM Platform | Web-Based Modeling Platform | Allows users to upload datasets, generate multiple machine learning models using various descriptors and algorithms, and perform predictions for ADMET endpoints. |

| SwissADME / pkCSM | Web-Based Prediction Tool | Provides rapid, rule-based and ML-powered predictions for key ADME parameters (absorption, metabolism) and toxicity, useful for initial screening and model comparison. |

| MolBERT or ChemBERTa | Pre-trained Chemical Language Model | Transformer-based models pre-trained on large corpora of SMILES strings, providing powerful molecular representations that can be fine-tuned for specific prediction tasks. |

Application Notes

Within the AI-driven small molecule discovery thesis, Reinforcement Learning (RL) provides a framework for navigating the vast chemical space by sequentially building molecules to optimize multiple, often competing, objectives. This approach moves beyond simple generative models by implementing a reward function that explicitly balances the key drug discovery parameters of potency (biological activity against a target), selectivity (minimizing off-target effects), and synthesizability (ease of chemical synthesis). Recent advancements in 2023-2024 highlight the integration of policy-based RL (e.g., Proximal Policy Optimization) with deep molecular generators (e.g., Graph Neural Networks) to produce novel, synthetically accessible leads with validated multi-parameter profiles.

Quantitative Data Summary

Table 1: Comparison of RL Agent Architectures for Multi-Objective Molecule Generation (2023-2024 Benchmarks)

| RL Agent Type | Molecular Representation | Average Potency (pIC50) | Selectivity Index (vs. Kinome) | Synthesizability Score (SAscore 1-10) | Diversity (Tanimoto) | Reference Dataset |

|---|---|---|---|---|---|---|

| PPO + GNN | Graph | 8.2 ± 0.5 | 42.5 | 3.1 | 0.71 | ChEMBL, ZINC |

| DQN + SMILES LSTM | String (SMILES) | 7.8 ± 0.7 | 28.3 | 4.5 | 0.65 | ChEMBL |

| SAC + Fragment | Fragment-based | 7.5 ± 0.6 | 35.1 | 2.8 | 0.82 | CASF |

| Multi-Task PPO | Graph + 3D Pharmacophore | 8.5 ± 0.4 | 50.2 | 3.4 | 0.68 | PDBbind, ChEMBL |
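
The table does not state how the diversity column was computed. One common convention, assumed here purely for illustration, is internal diversity: one minus the mean pairwise Tanimoto similarity over the generated set. The sketch below represents each fingerprint as a set of on-bit indices; the function names and toy fingerprints are hypothetical.

```python
from itertools import combinations


def tanimoto(a, b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def internal_diversity(fingerprints):
    """1 - mean pairwise Tanimoto similarity (requires at least two fingerprints)."""
    pairs = list(combinations(fingerprints, 2))
    mean_similarity = sum(tanimoto(a, b) for a, b in pairs) / len(pairs)
    return 1.0 - mean_similarity


# Three toy fingerprints; only the first two share any bits.
toy_fps = [{1, 2, 3}, {2, 3, 4}, {5, 6}]
diversity = internal_diversity(toy_fps)
print(diversity)
```

Under this convention, values closer to 1 indicate a more structurally varied set of generated molecules.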

Table 2: Key Reward Function Components and Their Weighting Ranges

| Objective | Typical Metric | Reward Component Formula (Simplified) | Reported Weight (λ) Range |

|---|---|---|---|

| Potency | Docking Score / pIC50 Prediction | R_pot = -log(IC50) or -Docking Score | 0.4 - 0.6 |

| Selectivity | Off-target Prediction (e.g., for kinase A vs B) | R_sel = Activity_A / Σ Activity_off-target | 0.2 - 0.3 |

| Synthesizability | SAscore, RAscore, Retro* Success Rate | R_syn = 10 - SAscore or Binary(Retro* success) | 0.1 - 0.3 |

| Drug-Likeness | QED, Lipinski's Rule of 5 | R_drug = QED × (1 - RuleOf5Violations) | 0.05 - 0.1 |
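
The simplified formulas in the table can be combined into a single scalar reward. The following is a minimal sketch, not the implementation used in any cited benchmark: the function name, argument names, and the default weights (picked from within the reported ranges so that they sum to 1) are assumptions for illustration.

```python
def total_reward(pic50, on_target, off_targets, sa_score, qed, ro5_violations,
                 weights=(0.5, 0.25, 0.2, 0.05)):
    """Weighted sum of the four simplified reward components from the table.

    weights: (potency, selectivity, synthesizability, drug-likeness);
    the defaults are hypothetical values inside the reported ranges.
    """
    w_pot, w_sel, w_syn, w_drug = weights
    r_pot = pic50                                     # potency as predicted pIC50
    r_sel = on_target / max(sum(off_targets), 1e-9)   # guard against division by zero
    r_syn = 10.0 - sa_score                           # lower SAscore gives higher reward
    r_drug = qed * (1 - ro5_violations)
    return w_pot * r_pot + w_sel * r_sel + w_syn * r_syn + w_drug * r_drug


example = total_reward(pic50=8.0, on_target=0.9, off_targets=[0.1, 0.2],
                       sa_score=3.0, qed=0.8, ro5_violations=0)
print(example)
```

The raw components sit on different scales (pIC50 near 8, drug-likeness at most 1), so a practical reward would likely normalize each term before weighting; the table does not specify a normalization scheme.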

Experimental Protocols

Protocol 1: Training a Multi-Objective RL Agent for De Novo Design

Objective: To train a Proximal Policy Optimization (PPO) agent coupled with a Graph Neural Network (GNN) policy network to generate molecules optimizing the combined reward R_total = λ1·R_pot + λ2·R_sel + λ3·R_syn.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Environment Setup: Configure the molecular generation environment. The state (s_t) is the current partial molecular graph. The action (a_t) is the addition of a specific atom/bond or attachment of a validated fragment from a predefined library.

- Reward Calculation: At each step, compute intermediate rewards. Upon episode termination (molecule completion), calculate final rewards:

- R_pot: Input the final SMILES string into a pre-trained, validated pIC50 predictor model (e.g., ChemProp model on ChEMBL data) for the primary target.

- R_sel: Input the SMILES into a separate off-target activity predictor (e.g., a multi-task kinase inhibitor model). Calculate the ratio of predicted primary target activity to the sum of the top 5 off-target activities.

- R_syn: Compute the Synthetic Accessibility score (SAscore) using the RDKit implementation. Reward = 10 - SAscore, so that molecules that are easier to synthesize (lower SAscore) receive a higher reward.

- Agent Training:

- Initialize the PPO agent with a GNN-based actor and critic network.

- For 1,000,000 episodes:

  a. Let the agent interact with the environment, collecting trajectories (s_t, a_t, r_t, s_{t+1}).

  b. Every 5,000 episodes, update the policy network using the PPO clipping objective, maximizing the expected cumulative reward.

  c. Validate generated molecules every 25,000 episodes by docking a subset (e.g., the 100 top-reward molecules) against the target protein structure (specified by its PDB ID).

- Evaluation: After training, sample 10,000 molecules from the trained policy. Filter for compounds with predicted pIC50 > 8.0, selectivity index > 30, and SAscore < 4. Select top 50 candidates for in silico synthesis planning via a retrosynthesis tool (e.g., AiZynthFinder).
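
Step b of the agent-training loop refers to the PPO clipping objective. As a minimal sketch of that objective, the code below evaluates the standard clipped surrogate for individual samples and averages it over a batch. The function names are hypothetical, and the clipping parameter ε = 0.2 is a commonly used default that is assumed here, not a value taken from the protocol.

```python
def ppo_clipped_surrogate(ratio, advantage, epsilon=0.2):
    """Clipped surrogate term for one (state, action) sample.

    ratio: pi_new(a|s) / pi_old(a|s)
    advantage: estimated advantage of the action taken
    """
    clipped_ratio = max(1.0 - epsilon, min(ratio, 1.0 + epsilon))
    # Taking the minimum removes any incentive to move the ratio outside the clip range.
    return min(ratio * advantage, clipped_ratio * advantage)


def ppo_objective(ratios, advantages, epsilon=0.2):
    """Mean clipped surrogate over a batch; PPO maximizes this quantity."""
    terms = [ppo_clipped_surrogate(r, a, epsilon) for r, a in zip(ratios, advantages)]
    return sum(terms) / len(terms)


batch_value = ppo_objective(ratios=[1.5, 0.5, 1.0], advantages=[1.0, -1.0, 2.0])
print(batch_value)
```

With a positive advantage the gain is capped once the ratio exceeds 1 + ε, and with a negative advantage the penalty is kept when the ratio drifts the wrong way, which is what keeps each policy update close to the data-collecting policy.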

Protocol 2: In Silico Validation of RL-Generated Hits

Objective: To computationally validate the multi-parameter profile of molecules generated by the trained RL agent.

Materials: Molecular docking suite (e.g., AutoDock Vina, Glide), off-target prediction web service (e.g., SwissTargetPrediction), retrosynthesis software.

Procedure:

- Potency Confirmation (Docking):

- Prepare the protein target structure: Remove water, add hydrogens, assign charges (using UCSF Chimera or Maestro).

- Prepare ligand structures: Generate 3D conformers for the top 50 RL-generated molecules (using RDKit's EmbedMolecule).

- Define a docking grid centered on the known active site.

- Run molecular docking for all ligands. Retain poses with docking scores ≤ -9.0 kcal/mol for further analysis.

- Selectivity Profiling:

- Submit the SMILES of the docked hits to the SwissTargetPrediction server.

- Analyze the top 15 predicted off-targets. Manually curate to identify targets within the same protein family (e.g., other kinases). A compound is considered selective if the primary target is the top prediction and predicted probabilities for closely related off-targets are < 30%.

- Synthesizability Assessment:

- Input the SMILES of each validated hit into a local AiZynthFinder installation configured with a relevant reagent database (e.g., Enamine Building Blocks).

- Set a threshold of ≥ 80% probability for each reaction step in the proposed route.

- A molecule is deemed readily synthesizable if a route with ≤ 5 linear steps and all step probabilities ≥ 80% is identified.
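
The three acceptance criteria in this protocol can be written as simple decision rules. The sketch below is illustrative only: the function names and the input data structures (a dictionary of predicted target probabilities, a list of per-step route probabilities) are assumptions and do not correspond to the actual output formats of SwissTargetPrediction or AiZynthFinder.

```python
def passes_docking(score_kcal_mol, cutoff=-9.0):
    """Retain poses with a docking score at or below the cutoff (more negative is better)."""
    return score_kcal_mol <= cutoff


def is_selective(predictions, primary, related_off_targets, max_prob=0.30):
    """predictions: dict mapping target name to predicted probability (0-1).

    Selective if the primary target is the top prediction and every closely
    related off-target stays below max_prob.
    """
    top_target = max(predictions, key=predictions.get)
    return top_target == primary and all(
        predictions.get(t, 0.0) < max_prob for t in related_off_targets
    )


def is_readily_synthesizable(step_probs, max_steps=5, min_prob=0.80):
    """Route accepted if it has 1 to max_steps linear steps, each with probability >= min_prob."""
    return 0 < len(step_probs) <= max_steps and min(step_probs) >= min_prob


# Hypothetical predictions for one compound.
preds = {"target_A": 0.85, "kinase_B": 0.20, "kinase_C": 0.25}
print(passes_docking(-9.4),
      is_selective(preds, "target_A", ["kinase_B", "kinase_C"]),
      is_readily_synthesizable([0.92, 0.85, 0.81]))
```

Keeping the thresholds as keyword arguments makes it straightforward to tighten or relax the criteria per project without touching the logic.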

Visualizations

Title: RL Multi-Objective Molecule Generation Workflow

Title: Multi-Objective Reward Function Structure

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for RL-Driven Molecule Discovery

| Item / Software | Provider / Example | Function in Protocol |

|---|---|---|

| Chemical Databases | ChEMBL, ZINC, Enamine REAL | Source of training data (bioactivity) and purchasable building blocks for synthesizability assessment. |

| Deep Learning Framework | PyTorch, TensorFlow | Backend for building and training the GNN and RL agent networks. |

| RL Library | OpenAI Gym, Stable-Baselines3 | Provides environment scaffolding and standard RL algorithm implementations (PPO, SAC). |

| Molecular Representation Kit | RDKit, DeepChem | Handles molecule manipulation, fingerprint generation, SAscore calculation, and 3D conformation. |

| Activity Prediction Model | ChemProp, Directed Message Passing NN | Pre-trained or fine-tunable models for predicting pIC50 and off-target activities from structure. |

| Docking Software | AutoDock Vina, Schrodinger Glide | Computational validation of predicted potency via binding pose and affinity estimation. |

| Retrosynthesis Tool | AiZynthFinder, ASKCOS | Plans synthetic routes for generated molecules to validate synthesizability. |

| Off-Target Prediction Service | SwissTargetPrediction, ChEMBL | Provides computational off-target profiling to assess selectivity. |

This application note examines INS018_055, a novel inhibitor for idiopathic pulmonary fibrosis (IPF) discovered by Insilico Medicine's AI platform, Pharma.AI. This case study is framed within the broader thesis that AI-driven small molecule discovery research represents a paradigm shift by integrating generative chemistry, target prediction, and translational medicine into a unified, accelerated workflow. The transition of INS018_055 from AI-generated hit to clinical Phase II trials validates key tenets of this thesis: the ability to rapidly identify novel chemistry against novel targets with a high probability of clinical translatability.

INS018_055 was generated using the following integrated AI modules:

- PandaOmics: Target identification and prioritization for IPF.

- Chemistry42: Generative chemistry for novel molecular structure design.

- inClinico: Clinical trial outcome prediction for de-risking.

Table 1: Key Quantitative Milestones for INS018_055

| Metric | Data | AI Platform Contribution |

|---|---|---|

| Target Identification to Lead Candidate | < 18 months | PandaOmics & Chemistry42 |

| Novel Target (Hypothesis) | TNIK (Traf2- and Nck-interacting kinase) | PandaOmics multi-omics analysis |

| Preclinical In-Vivo Efficacy (BLEO mouse) | ~50% reduction in lung fibrosis score | Validated AI-predicted target hypothesis |

| Phase I Safety (SAD/MAD) | Well-tolerated, no severe adverse events | inClinico prediction support |

| Clinical Trial Phase (as of 2024) | Phase II (NCT05938920 & NCT05946517) | - |

| Phase II Patient Enrollment | ~60 patients (each trial) | - |

| Key Preclinical Attributes | Anti-fibrotic, anti-inflammatory | Multi-mechanism predicted by AI |

Diagram Title: AI to Clinical Workflow for INS018_055

Detailed Experimental Protocols

Protocol 3.1: In-Vitro Kinase Inhibition Assay for TNIK

Purpose: To determine the half-maximal inhibitory concentration (IC50) of INS018_055 against recombinant TNIK kinase.

Procedure:

- Prepare reaction buffer: 20 mM HEPES (pH 7.5), 10 mM MgCl2, 1 mM EGTA, 0.01% Brij-35, 0.1 mg/mL BSA.

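- Serially dilute INS018_055 in DMSO (e.g., an 11-point, 3-fold series starting at 10 mM, which ends near 0.17 μM; reaching 0.1 nM from the same stock would need a 10-fold series or additional points).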

- In a 384-well plate, mix 5 μL of compound/DMSO, 10 μL of TNIK enzyme (final 1 nM), and 10 μL of ATP/substrate mix (final ATP at Km concentration, peptide substrate).

- Incubate at 25°C for 60 min. Stop reaction with 25 μL of detection reagent (e.g., ADP-Glo).

- Measure luminescence. Fit dose-response curve to calculate IC50.
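
The dilution and curve-fitting arithmetic in this protocol can be sketched as follows. This is an illustrative example under stated assumptions: the function names, the 10 μM top concentration, and the simulated signals are hypothetical, and the log-linear interpolation is a crude stand-in for the nonlinear four-parameter logistic regression normally used to fit dose-response data.

```python
import math


def dilution_series(top, fold, n_points):
    """Concentrations of an n-point serial dilution, highest first."""
    return [top / fold ** i for i in range(n_points)]


def final_concentration(stock, added_ul=5.0, total_ul=25.0):
    """In-well concentration after adding 5 uL of compound to a 25 uL reaction."""
    return stock * added_ul / total_ul


def four_pl(conc, bottom, top, ic50, hill):
    """Four-parameter logistic model: signal as a function of inhibitor concentration."""
    return bottom + (top - bottom) / (1.0 + (conc / ic50) ** hill)


def ic50_by_interpolation(concs, signals):
    """Concentration at the half-way signal, interpolated on a log10 scale."""
    pairs = sorted(zip(concs, signals))  # ascending concentration
    half = (max(signals) + min(signals)) / 2.0
    for (c_lo, s_lo), (c_hi, s_hi) in zip(pairs, pairs[1:]):
        if s_lo >= half >= s_hi and s_lo != s_hi:
            frac = (s_lo - half) / (s_lo - s_hi)
            log_c = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_c
    return None  # the curve never crosses the half-way signal


# Simulated 11-point, 3-fold series (uM) for a hypothetical compound with IC50 = 0.01 uM.
concs_um = dilution_series(top=10.0, fold=3.0, n_points=11)
signals = [four_pl(c, bottom=0.0, top=100.0, ic50=0.01, hill=1.0) for c in concs_um]
estimate = ic50_by_interpolation(concs_um, signals)
print(concs_um[-1], estimate)
```

An 11-point, 3-fold series spans a 3^10 (about 59,000-fold) range, so the top concentration should be chosen so that the expected IC50 falls near the middle of the series.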

Protocol 3.2: In-Vivo Efficacy in Bleomycin-Induced Mouse Model of Pulmonary Fibrosis

Purpose: To evaluate the anti-fibrotic effect of INS018_055.

Procedure:

- Induce fibrosis in C57BL/6 mice (n=8-10/group) via oropharyngeal instillation of bleomycin (1.5-2.0 U/kg).

- Commence treatment (e.g., oral gavage of 10 mg/kg INS018_055, BID) on day 7 post-bleomycin.

- Sacrifice mice on day 21. Perform bronchoalveolar lavage (BAL) for inflammatory cell count and cytokine analysis (e.g., TGF-β1, IL-6).

- Inflate and fix lungs with formalin. Section and stain with Hematoxylin & Eosin (H&E) and Masson's Trichrome.

- Score fibrosis blindly using the Ashcroft scale. Perform hydroxyproline assay on lung homogenate for total collagen quantification.

Diagram Title: Proposed Signaling Pathway for INS018_055

Protocol 3.3: Phase I Clinical Trial Design (Single/Multiple Ascending Dose - SAD/MAD)

Purpose: To assess safety, tolerability, and pharmacokinetics (PK) of INS018_055 in healthy volunteers.

Procedure:

- Cohort Design: Randomized, double-blind, placebo-controlled. 6-8 SAD cohorts (oral dosing from 1 mg to 100 mg). 4-5 MAD cohorts (dosing for 10-14 days).

- PK Sampling: Serial blood collection pre-dose and up to 72-96 hours post-dose. Analyze plasma concentration using validated LC-MS/MS method to determine Cmax, Tmax, AUC, t1/2.

- Safety Monitoring: Record all adverse events (AEs). Perform vital signs, ECG, clinical labs (hematology, chemistry, urinalysis) at baseline and regular intervals.
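
The PK parameters named in the sampling step (Cmax, Tmax, AUC, t1/2) are typically derived by non-compartmental analysis. The sketch below shows one simple way to compute them from a concentration-time profile; the function names and the data are hypothetical, the AUC uses the linear trapezoidal rule up to the last sample, and the half-life assumes the last three positive concentrations lie on the terminal log-linear phase.

```python
import math


def cmax_tmax(times, concs):
    """Peak concentration and the time at which it occurs."""
    i = max(range(len(concs)), key=concs.__getitem__)
    return concs[i], times[i]


def auc_trapezoid(times, concs):
    """AUC from the first to the last sample by the linear trapezoidal rule."""
    return sum((t2 - t1) * (c1 + c2) / 2.0
               for t1, t2, c1, c2 in zip(times, times[1:], concs, concs[1:]))


def terminal_half_life(times, concs, n_last=3):
    """t1/2 = ln(2) / lambda_z, with lambda_z from a least-squares line through
    ln(concentration) of the last n_last samples (which must all be > 0)."""
    t = times[-n_last:]
    y = [math.log(c) for c in concs[-n_last:]]
    t_mean, y_mean = sum(t) / n_last, sum(y) / n_last
    slope = (sum((ti - t_mean) * (yi - y_mean) for ti, yi in zip(t, y))
             / sum((ti - t_mean) ** 2 for ti in t))
    return math.log(2) / -slope


# Hypothetical single-dose profile: time in hours, plasma concentration in ng/mL.
times_h = [0, 1, 2, 4, 8, 24, 48, 72]
concs_ng_ml = [0.0, 50.0, 80.0, 70.0, 60.0, 40.0, 10.0, 2.5]
cmax, tmax = cmax_tmax(times_h, concs_ng_ml)
auc = auc_trapezoid(times_h, concs_ng_ml)
t_half = terminal_half_life(times_h, concs_ng_ml)
print(cmax, tmax, auc, t_half)
```

Validated PK software usually adds refinements such as log-linear trapezoids on the declining phase and extrapolation of AUC to infinity, which are omitted here for brevity.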

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Replicating Key Experiments

| Item / Reagent | Vendor Examples (Illustrative) | Function in INS018_055 Research Context |

|---|---|---|

| Recombinant Human TNIK Kinase | SignalChem, Thermo Fisher | Primary target for in-vitro biochemical inhibition assays. |

| ADP-Glo Kinase Assay Kit | Promega | Homogeneous, luminescent assay for measuring TNIK kinase activity and compound IC50. |

| Bleomycin Sulfate | Merck | Agent for inducing pulmonary fibrosis in murine in-vivo efficacy models. |

| Hydroxyproline Assay Kit | Sigma-Aldrich, Abcam | Colorimetric quantification of collagen content in lung tissue homogenates. |

| Anti-α-SMA Antibody | Abcam, Cell Signaling | Immunohistochemistry marker for identifying activated myofibroblasts in lung sections. |

| Human TGF-β1 ELISA Kit | R&D Systems, BioLegend | Quantification of a key pro-fibrotic cytokine in BAL fluid or cell culture supernatant. |

| LC-MS/MS System (e.g., Triple Quad) | Sciex, Waters, Agilent | Gold-standard for bioanalytical method development and PK analysis of INS018_055 in plasma. |